Prometheus: federation, service monitoring, nginx monitoring, and binary installation of Grafana

Federation configuration

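Prometheus is fast enough to support clusters of tens of thousands of machines, and its time-series storage is very efficient: each sample takes only about 3.5 bytes. With over a million time series, a 30-second scrape interval, and 60 days of retention, the data needs roughly 200+ GB of disk space.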
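As a rough cross-check of these figures, the capacity-planning formula in the Prometheus storage documentation is `needed_disk_space = retention_time_seconds * ingested_samples_per_second * bytes_per_sample`. The sketch below evaluates it with `awk`. The function name `estimate_tsdb_gb` is hypothetical, and the result is in decimal GB.

```shell
# estimate_tsdb_gb SERIES INTERVAL_SECONDS RETENTION_DAYS BYTES_PER_SAMPLE
# Prints the estimated on-disk size in decimal GB (1 GB = 10^9 bytes).
# Hypothetical helper for illustration; not part of Prometheus.
estimate_tsdb_gb() {
  awk -v series="$1" -v interval="$2" -v days="$3" -v bytes="$4" 'BEGIN {
    samples_per_second = series / interval
    retention_seconds  = days * 86400
    printf "%.1f\n", retention_seconds * samples_per_second * bytes / 1000000000
  }'
}
```

For example, `estimate_tsdb_gb 1000000 30 60 3.5` gives about 605 GB for exactly one million series, while `estimate_tsdb_gb 1000000 30 60 1.3` gives about 225 GB. The 200+ GB figure therefore matches the 1 to 2 bytes per sample that the Prometheus documentation quotes as the average for current versions.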

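@Local storage overview

By default, Prometheus stores scraped data in a local TSDB. The default path is `data/` relative to the working directory, here the Prometheus installation directory. Incoming samples are first written to the WAL (write-ahead log) and held in memory. After 2 hours the in-memory data is persisted to a new block, while newly scraped samples go into memory and are persisted to another new block 2 hours later, and so on.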

In-memory data -> data on disk

2 hours of in-memory data -> one block

@Block overview

Each block is a directory inside the data directory whose name starts with 01 (the name is a ULID).

    root@pro-server-182:/apps/prometheus/data# ls -l
    total 20
    drwxr-xr-x 3 root root 01FSXXTEPNT3FC2MJSNH605WNN   # block
    drwxr-xr-x 3 root root 01FSY4P5YJBY7E5B6RRQ71VV9C

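Blocks are compacted: historical blocks are merged and expired blocks are deleted, so the number of blocks drops over time. Three things happen during this process: compaction runs periodically, small blocks are merged into larger ones, and expired blocks are cleaned up.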

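chunks 001 # data directory; each segment file holds at most 512 MB, and larger data is split across several files

index # index file; records index information for the stored data, and several tables in this file are used to look up time series

meta.json # block metadata, including the number of samples and the time range the block covers

tombstones # deletion markers; records deletions so that the marked samples can be excluded when the block is queried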
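To see the time range each block covers, the `minTime` and `maxTime` fields of `meta.json` (Unix timestamps in milliseconds) can be read directly. This is a minimal sketch that assumes the data directory used above. `list_blocks` is a hypothetical helper, and the `sed` patterns assume each field name appears once per file.

```shell
# list_blocks [DATA_DIR]
# Prints one line per block: "<block-id> <minTime-ms> <maxTime-ms>".
# Hypothetical helper for illustration; not part of Prometheus.
list_blocks() {
  data_dir="${1:-/apps/prometheus/data}"
  for meta in "$data_dir"/01*/meta.json; do
    # Skip the unexpanded glob when no block directory exists yet.
    [ -f "$meta" ] || continue
    block="$(basename "$(dirname "$meta")")"
    min_ms="$(sed -n 's/.*"minTime"[^0-9]*\([0-9][0-9]*\).*/\1/p' "$meta" | head -n 1)"
    max_ms="$(sed -n 's/.*"maxTime"[^0-9]*\([0-9][0-9]*\).*/\1/p' "$meta" | head -n 1)"
    echo "$block $min_ms $max_ms"
  done
}
```

Running `list_blocks /apps/prometheus/data` on the server above would print one line for each directory whose name starts with 01.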

@Local storage configuration parameters

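--config.file=/apps/prometheus/prometheus.yml # path of the configuration file

--storage.tsdb.path="data" # directory where the data is stored

--web.listen-address="0.0.0.0:9090" # address and port to listen on

--storage.tsdb.retention.size=SIZE # maximum total size of stored blocks to keep; units B, KB, MB, GB, TB, PB, EB; disabled (0) by default

--query.timeout=2m # maximum time a query may run before it is aborted

--query.max-concurrency=20 # maximum number of queries executed concurrently

--web.read-timeout=5m # maximum time before a request read times out and idle connections are closed

--web.max-connections=512 # maximum number of simultaneous connections

--web.enable-lifecycle # enable shutdown and configuration reload through HTTP requests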
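With `--web.enable-lifecycle` set, a configuration change can be applied without a restart by sending an HTTP POST to `/-/reload`. The sketch below first validates the file with `promtool check config` (promtool ships in the Prometheus tarball) and only then triggers the reload. The function name, the default path and URL, and the assumption that `promtool` and `curl` are on `PATH` are illustrative.

```shell
# reload_prometheus [CONFIG_FILE] [BASE_URL]
# Hypothetical helper: validate the configuration, then ask Prometheus to reload it.
reload_prometheus() {
  config_file="${1:-/apps/prometheus/prometheus.yml}"
  base_url="${2:-http://127.0.0.1:9090}"
  # Refuse to reload if the configuration does not validate.
  promtool check config "$config_file" || return 1
  # -f makes curl exit non-zero on an HTTP error status.
  curl -fsS -X POST "$base_url/-/reload"
}
```

Validating first avoids asking the server to reload a file it will reject.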

Environment

pro-server-182 pro-liangbang-183 pro-liangbang-184

k8s 192.168.192.241-243

nginx 192.168.192.132

1. Federation node configuration

    root@pro-liangbang-183:/apps/prometheus# cat prometheus.yml
    global:
      scrape_interval: 5s      # Scrape targets every 5 seconds. Default is every 1 minute.
      evaluation_interval: 5s  # Evaluate rules every 5 seconds. The default is every 1 minute.
    scrape_configs:
      # - job_name: "prometheus-cadvisor"
      #   static_configs:
      #     - targets: ["192.168.192.243:8080","192.168.192.242:8080","192.168.192.241:8080"]
      - job_name: "node_exporter242"
        static_configs:
          - targets: ["192.168.192.242:39100"]
      - job_name: 'tomcat'
        file_sd_configs:
          - files:
              - /configs/tomcat.json
      - job_name: 'haproxy'
        static_configs:
          - targets: ['192.168.192.241:5674',"192.168.192.242:5674"]

    root@pro-liangbang-183:~# cat /configs/tomcat.json
    [
      {
        "targets": ["192.168.192.243:31080"]
      }
    ]

    root@pro-liangbang-184:/apps/prometheus# cat prometheus.yml
    global:
      scrape_interval: 5s      # Scrape targets every 5 seconds. Default is every 1 minute.
      evaluation_interval: 5s  # Evaluate rules every 5 seconds. The default is every 1 minute.
    scrape_configs:
      # - job_name: "prometheus-cadvisor"
      #   static_configs:
      #     - targets: ["192.168.192.243:8080","192.168.192.242:8080","192.168.192.241:8080"]
      - job_name: "node_exporter243"
        static_configs:
          - targets: ["192.168.192.243:39100"]

2. Prometheus server node configuration

    root@pro-server-182:/apps/prometheus# cat prometheus.yml
    global:
      scrape_interval: 5s      # Scrape targets every 5 seconds. Default is every 1 minute.
      evaluation_interval: 5s  # Evaluate rules every 5 seconds. The default is every 1 minute.
    alerting:
      alertmanagers:
        - static_configs:
            - targets:
                - 192.168.192.189:9093
    rule_files:
      - "/apps/prometheus/*.yaml"
    scrape_configs:
      # - job_name: "prometheus-cadvisor"
      #   static_configs:
      #     - targets: ["192.168.192.243:8080","192.168.192.242:8080","192.168.192.241:8080"]
      # - job_name: "node_exporter"
      #   static_configs:
      #     - targets: ["192.168.192.241:39100","192.168.192.242:39100","192.168.192.243:39100"]
      - job_name: 'prometheus-federate-192.183'
        scrape_interval: 5s          # scrape interval
        honor_labels: true           # keep the labels exposed by the federation node
        metrics_path: '/federate'    # federation endpoint on the federation node
        params:
          'match[]':
            - '{job="prometheus"}'
            - '{__name__=~"job:.*"}' # regex match: every series whose name starts with "job:"
            - '{job="tomcat"}'       # must match the job_name on the federation node
            - '{job="haproxy"}'
            - '{job="node_exporter242"}'
        static_configs:
          - targets:
              - '192.168.192.183:9090'
      - job_name: 'prometheus-federate-192.184'
        scrape_interval: 5s
        honor_labels: true
        metrics_path: '/federate'
        params:
          'match[]':
            - '{job="prometheus"}'
            - '{__name__=~"job:.*"}'
            - '{job="node_exporter243"}'
        static_configs:
          - targets:
              - '192.168.192.184:9090'

root@pro-server-182:/apps/prometheus# rm -rf data/ # deletes all locally stored metrics; then restart the service

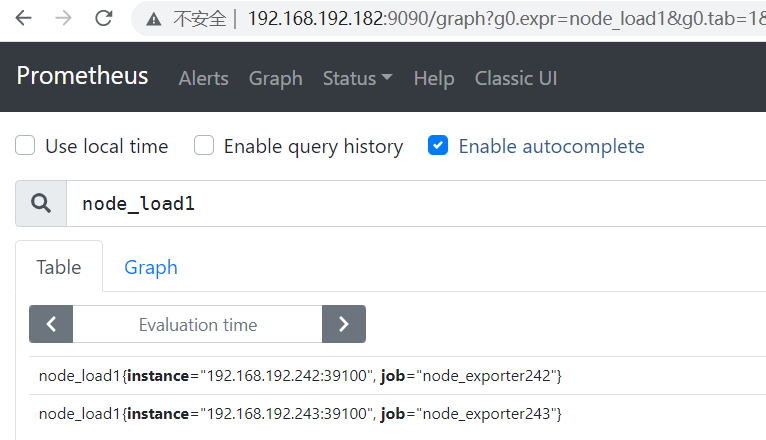

Verify the metric data

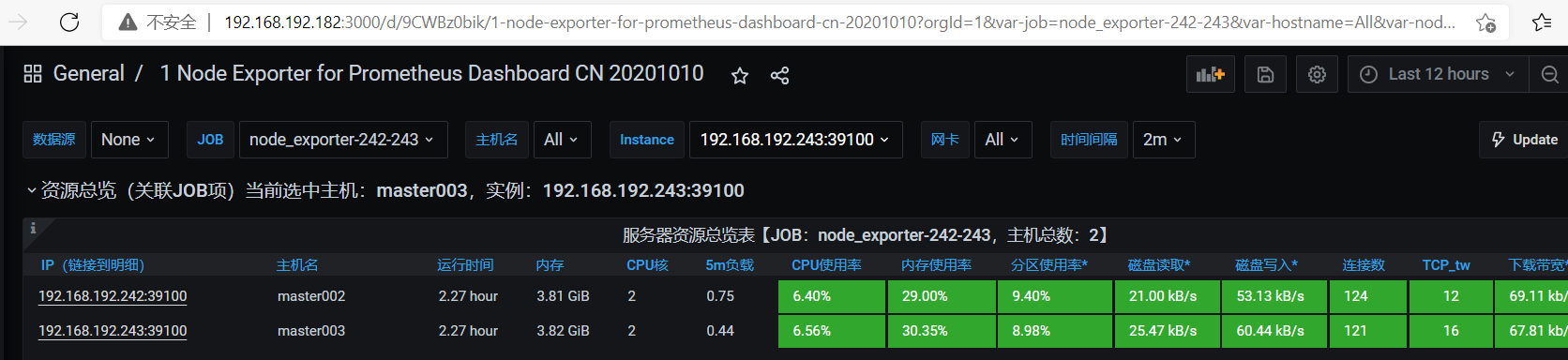
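The same check can be made from the command line by querying the `/federate` endpoint of a federation node directly, which shows exactly which series a `match[]` selector returns. This is a minimal sketch using `curl`. `federate_query` is a hypothetical helper, and the address and selector in the example come from the configuration above.

```shell
# federate_query TARGET SELECTOR
# Example: federate_query 192.168.192.183:9090 '{job="node_exporter242"}'
# Hypothetical helper for illustration.
federate_query() {
  target="$1"
  selector="$2"
  # -G sends the data as a URL query string; --data-urlencode escapes the
  # braces and quotes in the selector.
  curl -fsS -G --data-urlencode "match[]=$selector" "http://$target/federate"
}
```

An empty response usually means that the selector does not match any `job` label on that federation node.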

3. View in Grafana

Import dashboard template 8919 to view the node metrics

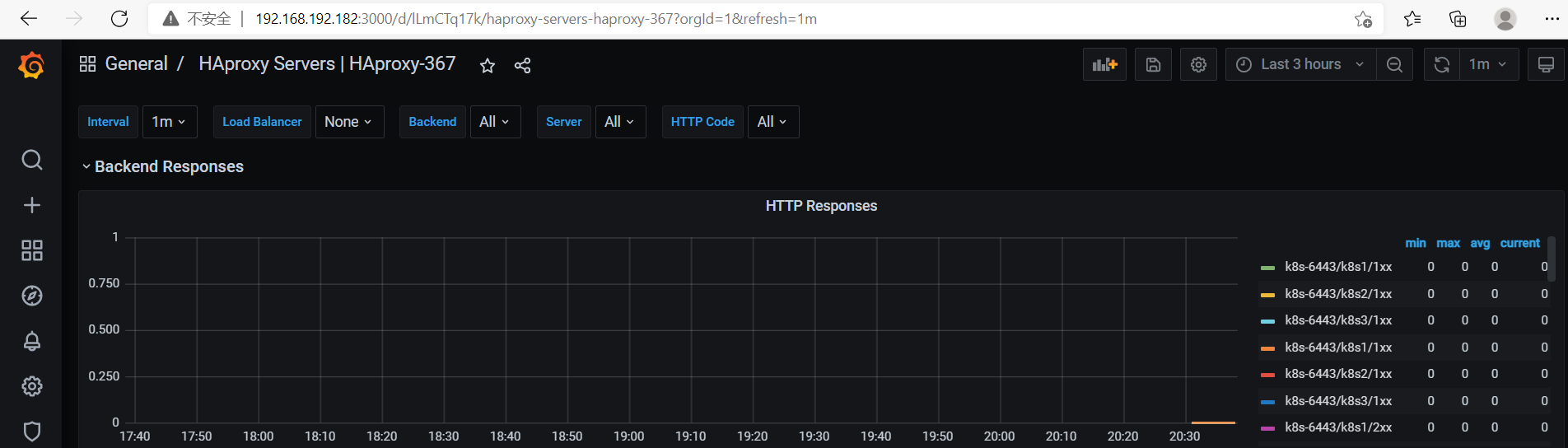

View HAProxy

Dashboard templates 367 and 2428

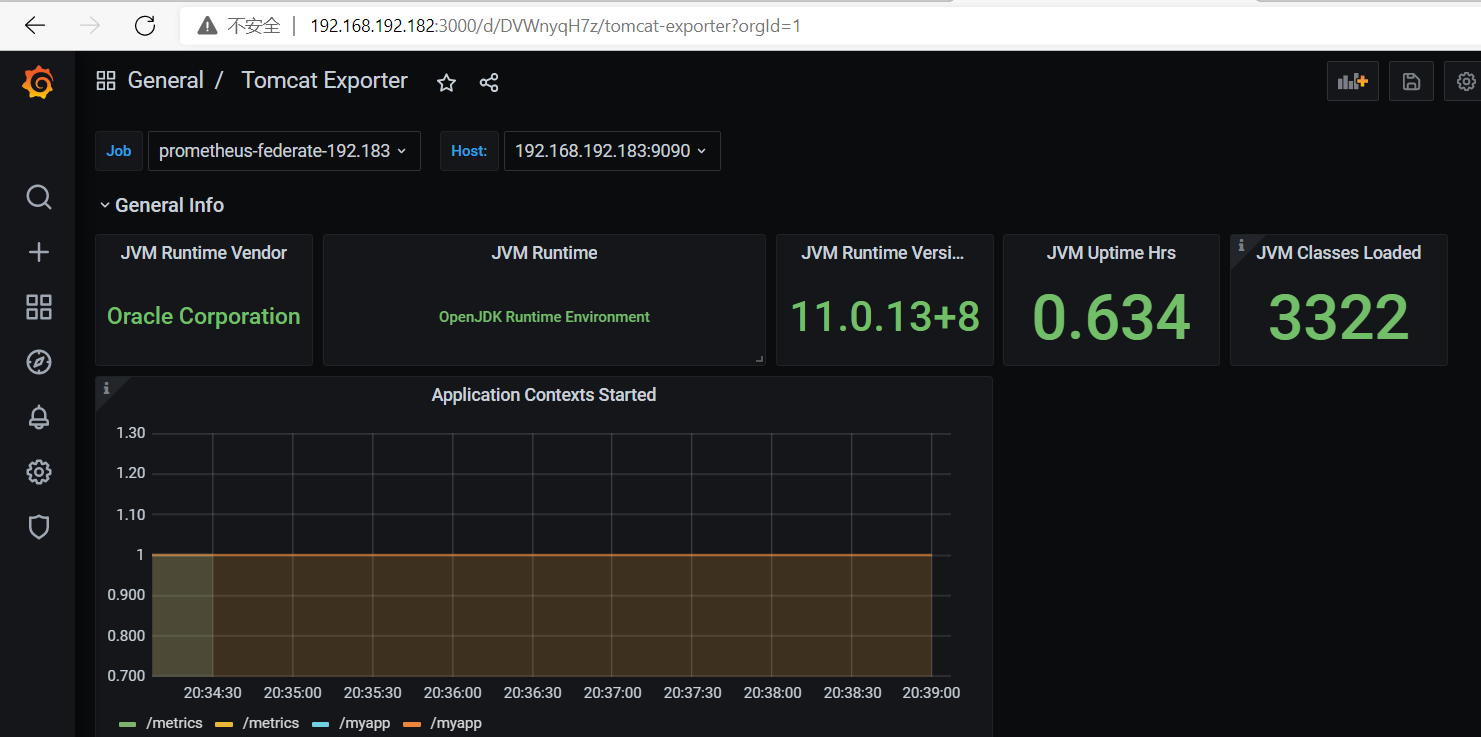

View Tomcat

Monitoring nginx

[root@localhost src]# pwd

/usr/local/src

[root@localhost src]# git clone https://github.com/vozlt/nginx-module-vts.git

yum install -y gcc gcc-c++ openssl-devel pcre-devel make zlib-devel wget psmisc

[root@localhost src]# wget http://nginx.org/download/nginx-1.20.2.tar.gz

[root@localhost src]# tar xvf nginx-1.20.2.tar.gz

[root@localhost to]# useradd nginx -M -s /sbin/nologin

[root@localhost src]# cd nginx-1.20.2 # the install prefix used below can be changed to /apps/nginx

[root@localhost nginx-1.20.2]# ./configure --user=nginx --group=nginx --prefix=/usr/local/nginx --add-module=/usr/local/src/nginx-module-vts/

make && make install

[root@localhost conf]# pwd

/usr/local/nginx/conf

[root@localhost conf]# vim nginx.conf

#gzip on;

vhost_traffic_status_zone; # add this line

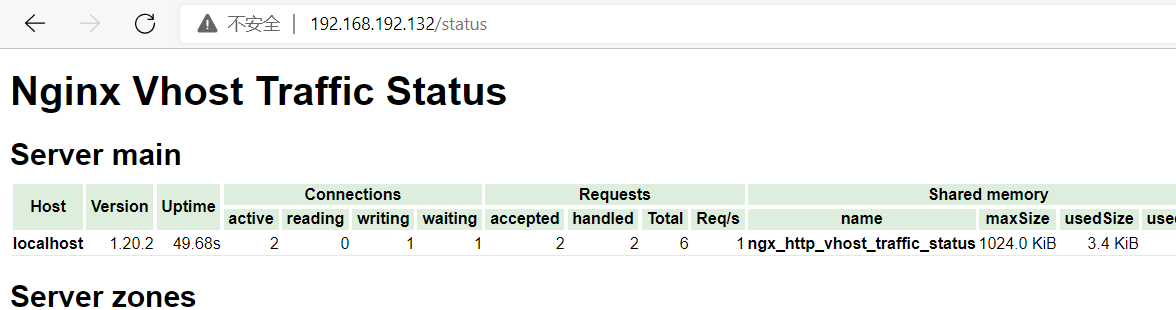

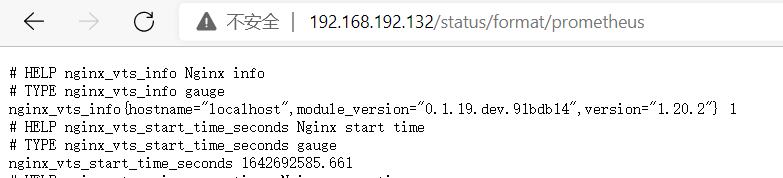

    location / {
        root   html;
        index  index.html index.htm;
    }
    # add the following block after it
    location /status {
        vhost_traffic_status_display;
        vhost_traffic_status_display_format html;
    }

[root@localhost sbin]# pwd

/usr/local/nginx/sbin

[root@localhost sbin]# ./nginx -t

nginx: the configuration file /usr/local/nginx/conf/nginx.conf syntax is ok

nginx: configuration file /usr/local/nginx/conf/nginx.conf test is successful

[root@localhost sbin]# ./nginx

    [root@localhost sbin]# ps -ef|grep nginx
    root      17218      1  0 23:29 ?        00:00:00 nginx: master process ./nginx
    nginx     17219  17218  0 23:29 ?        00:00:00 nginx: worker process
    root      17221   1437  0 23:29 pts/0    00:00:00 grep --color=auto nginx
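With nginx running, the VTS module also serves its counters as JSON under `/status/format/json`, which is the URL the exporter scrapes in the next step. The sketch below is a quick check that assumes nginx listens on port 80 on the local host. `vts_status` is a hypothetical helper.

```shell
# vts_status [BASE_URL]
# Hypothetical helper: fetch the VTS status document as JSON.
vts_status() {
  base_url="${1:-http://127.0.0.1}"
  # -f makes curl exit non-zero if the /status location is missing (HTTP 404).
  curl -fsS "$base_url/status/format/json"
}
```

A non-zero exit status here usually means that the `location /status` block was not added or that nginx has not picked up the new configuration.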

[root@localhost apps]# wget -c https://github.com/hnlq715/nginx-vts-exporter/releases/download/v0.10.3.1/nginx-vts-exporter-0.10.3.linux-amd64.tar.gz

[root@localhost apps]# tar xvf nginx-vts-exporter-0.10.3.linux-amd64.tar.gz

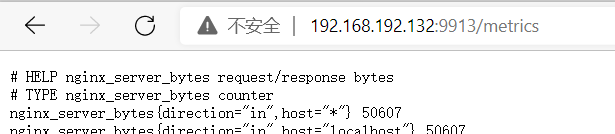

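# It is recommended to install the exporter on the same machine as nginx. If they run on different hosts, change scrape_uri to the address of the nginx host.
# nginx_vts_exporter listens on port 9913 by default and exposes its metrics at http://xxx:9913/metrics.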

[root@localhost nginx-vts-exporter-0.10.3.linux-amd64]# ./nginx-vts-exporter -nginx.scrape_timeout 10 -nginx.scrape_uri http://127.0.0.1/status/format/json

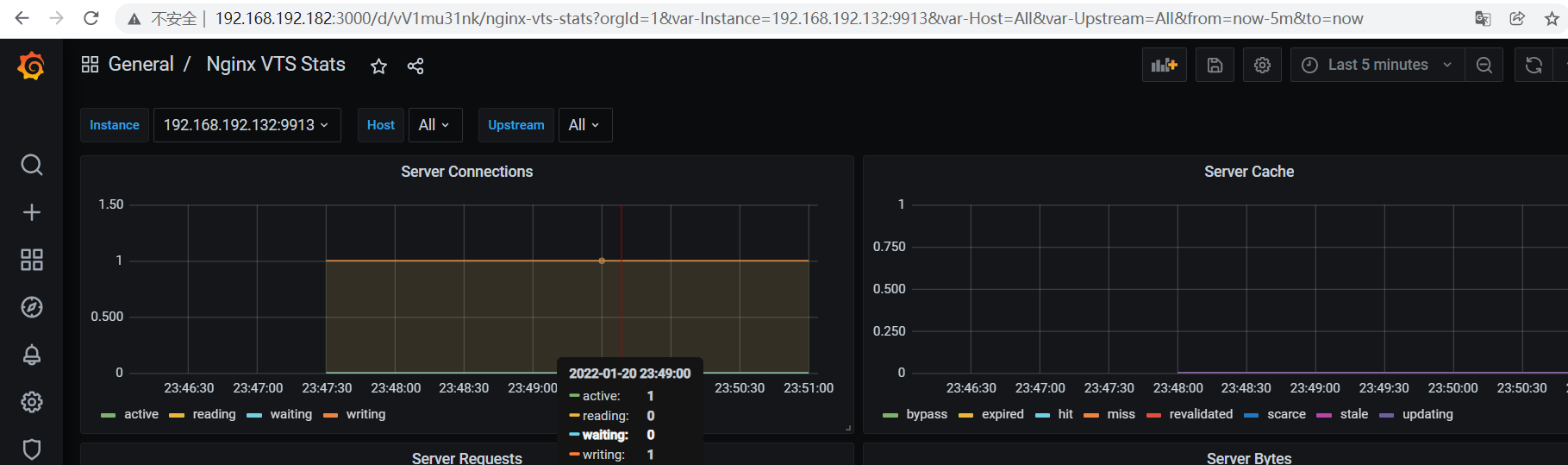
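Once the exporter is running, its `/metrics` endpoint can be sampled before Prometheus is pointed at it. The sketch below counts the lines that start with `nginx_`. It assumes that the exporter uses `nginx` as its metric name prefix and that it runs locally on the default port 9913. `nginx_metric_count` is a hypothetical helper.

```shell
# nginx_metric_count [METRICS_URL]
# Hypothetical helper: print how many exposed sample lines start with "nginx_".
nginx_metric_count() {
  metrics_url="${1:-http://127.0.0.1:9913/metrics}"
  # grep -c prints the number of matching lines; "|| true" keeps a count of
  # zero from being reported as a failure.
  curl -fsS "$metrics_url" | grep -c '^nginx_' || true
}
```

A count of 0 suggests that the exporter cannot reach the `scrape_uri` it was started with.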

    root@pro-liangbang-184:/apps/prometheus# cat prometheus.yml
    global:
      scrape_interval: 5s      # Scrape targets every 5 seconds. Default is every 1 minute.
      evaluation_interval: 5s  # Evaluate rules every 5 seconds. The default is every 1 minute.
    scrape_configs:
      # - job_name: "prometheus-cadvisor"
      #   static_configs:
      #     - targets: ["192.168.192.243:8080","192.168.192.242:8080","192.168.192.241:8080"]
      - job_name: "node_exporter243"
        static_configs:
          - targets: ["192.168.192.243:39100"]
      # - job_name: "nginx"
      #   static_configs:
      #     - targets: ["192.168.192.132"]
      - job_name: "nginx"
        static_configs:
          - targets: ["192.168.192.132:9913"]

On pro-server-182, add the nginx job to the match[] list of the federation job for 192.168.192.184:

      - job_name: 'prometheus-federate-192.184'
        scrape_interval: 5s
        honor_labels: true
        metrics_path: '/federate'
        params:
          'match[]':
            - '{job="prometheus"}'
            - '{__name__=~"job:.*"}'
            - '{job="node_exporter243"}'
            - '{job="nginx"}'
        static_configs:
          - targets:
              - '192.168.192.184:9090'

Dashboard template 2949

Binary installation of Grafana

root@pro-server-182:~# wget https://dl.grafana.com/oss/release/grafana-7.5.11.linux-amd64.tar.gz

root@pro-server-182:~# tar xvf grafana-7.5.11.linux-amd64.tar.gz -C /usr/local/

root@pro-server-182:/usr/local/grafana-7.5.11# ln -s /usr/local/grafana-7.5.11/ /usr/local/grafana

    useradd -s /sbin/nologin -M grafana
    mkdir -p /data/grafana
    mkdir /data/grafana/plugins
    mkdir /data/grafana/provisioning
    mkdir /data/grafana/data
    mkdir /data/grafana/log
    chown -R grafana:grafana /usr/local/grafana/
    chown -R grafana:grafana /data/grafana/

    vi /usr/local/grafana/conf/defaults.ini
    data = /data/grafana/data
    logs = /data/grafana/log
    plugins = /data/grafana/plugins
    provisioning = /data/grafana/provisioning

    vi /lib/systemd/system/grafana-server.service
    [Unit]
    Description=Grafana
    After=network.target

    [Service]
    User=grafana
    Group=grafana
    Type=notify
    ExecStart=/usr/local/grafana/bin/grafana-server -homepath /usr/local/grafana
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target

systemctl start grafana-server && systemctl enable grafana-server

Start the service, then open http://IP:3000 in a browser. The default port is 3000 and the default login is admin/admin.
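Besides opening the web UI, the service can be checked from the shell through Grafana's `/api/health` endpoint, which needs no login. This is a minimal sketch. `grafana_health` is a hypothetical helper, and the default URL assumes Grafana runs locally on port 3000.

```shell
# grafana_health [BASE_URL]
# Hypothetical helper: query Grafana's unauthenticated health endpoint.
grafana_health() {
  base_url="${1:-http://127.0.0.1:3000}"
  # -f makes curl exit non-zero when Grafana reports an HTTP error status.
  curl -fsS "$base_url/api/health"
}
```

A healthy instance answers with a small JSON document whose `database` field is `ok`.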