视觉VO(10-3-1)2D-3D 优化位姿和三维点- 重投影误差 原理篇

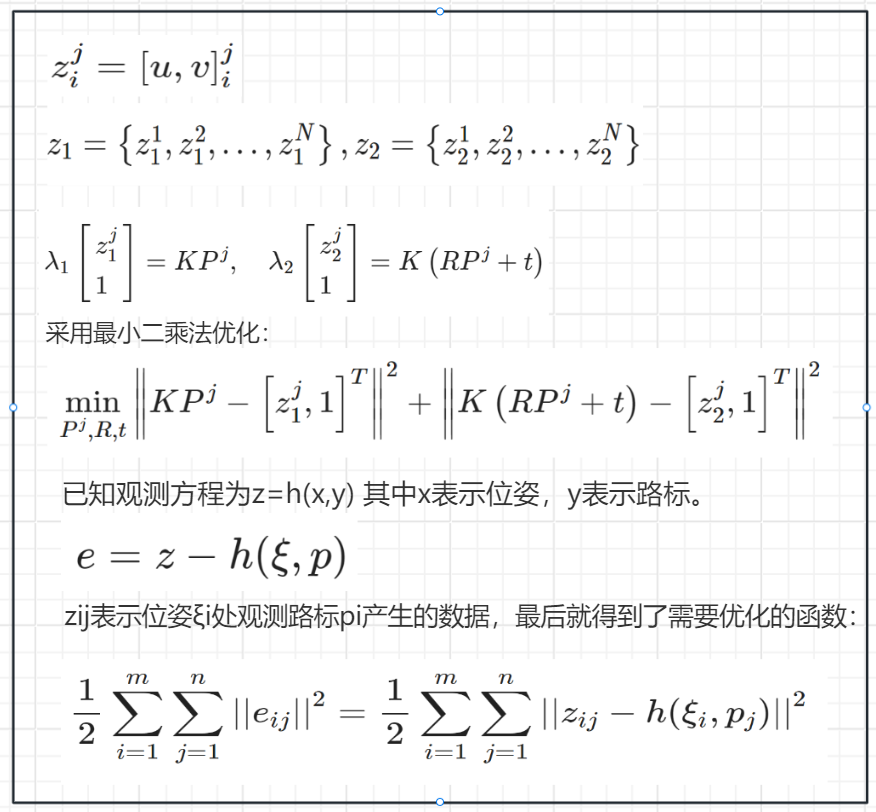

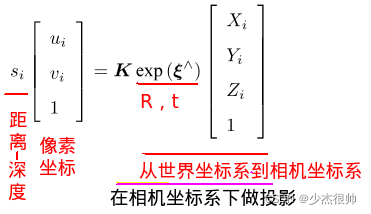

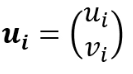

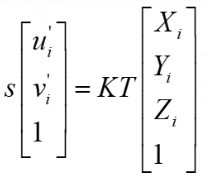

1 重投影方程

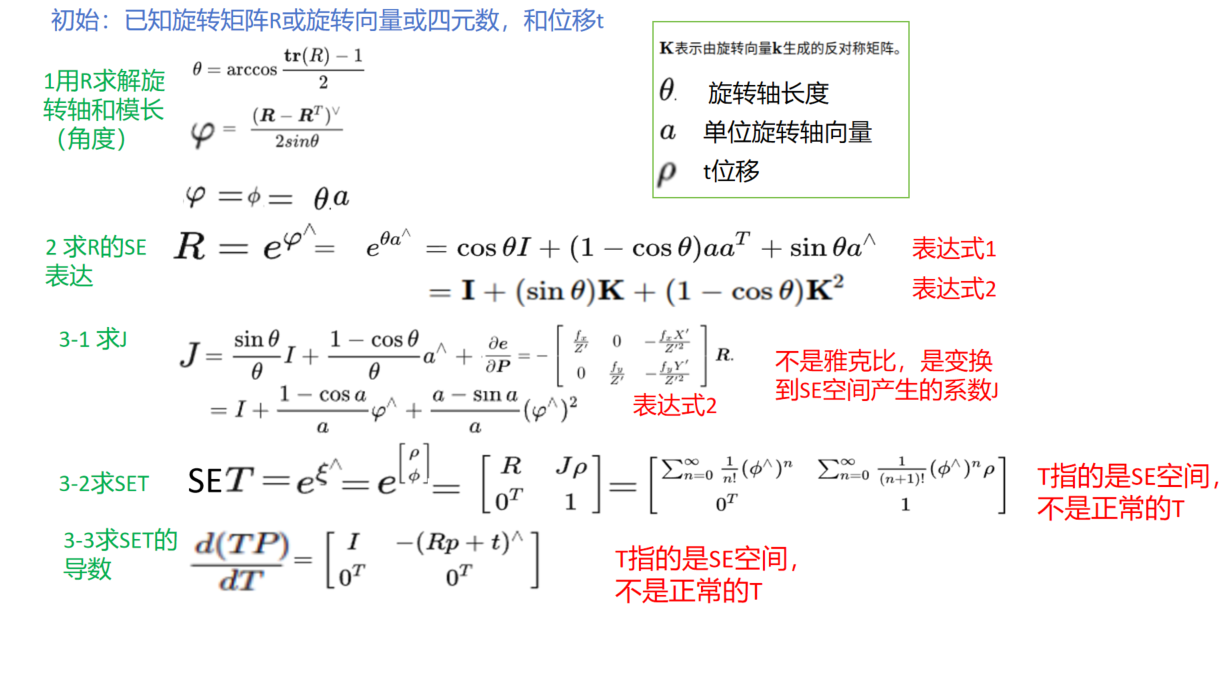

2 李代数表现形式 - 便于求导和减少约束条件

https://blog.csdn.net/weixin_49804978/article/details/121922128

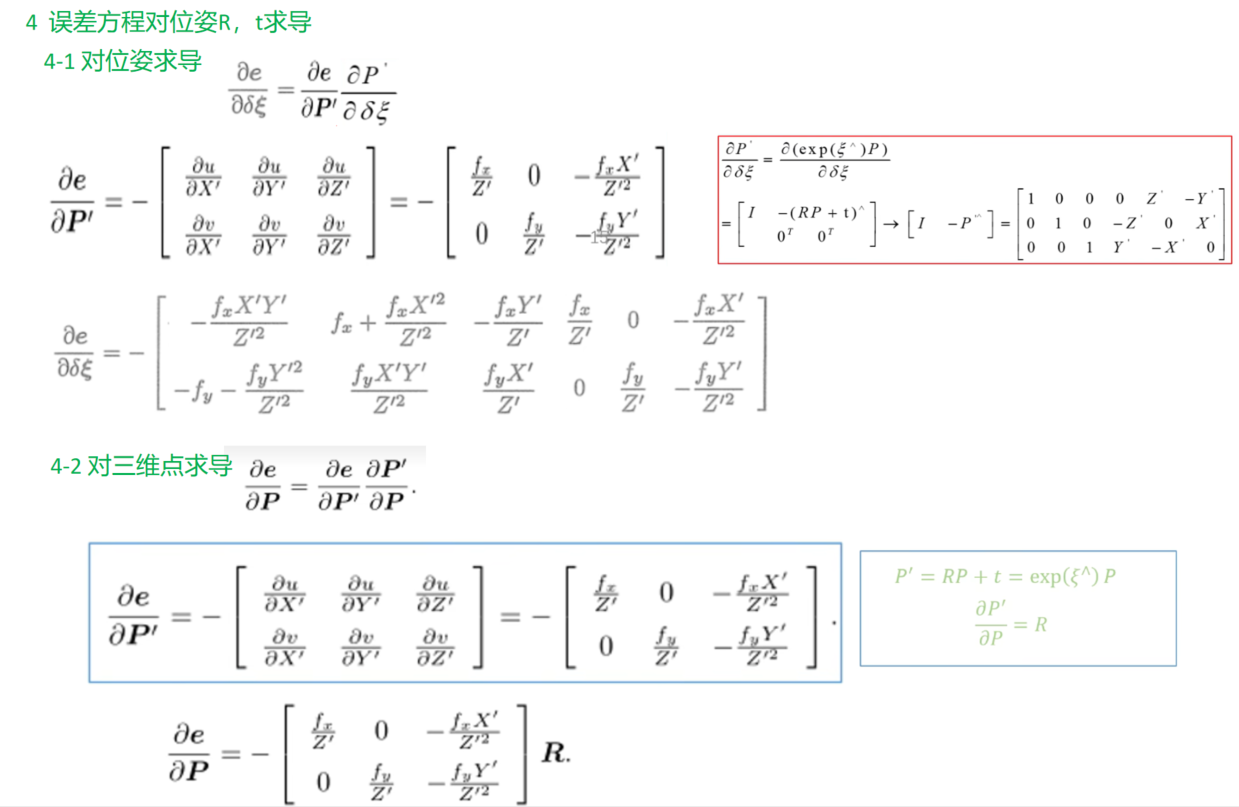

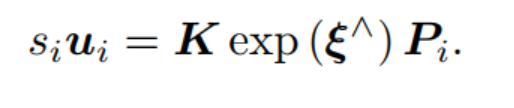

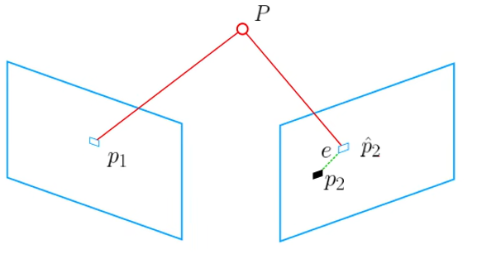

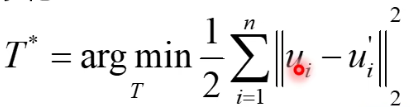

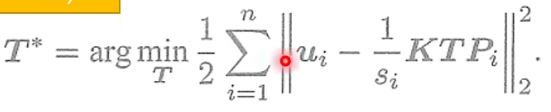

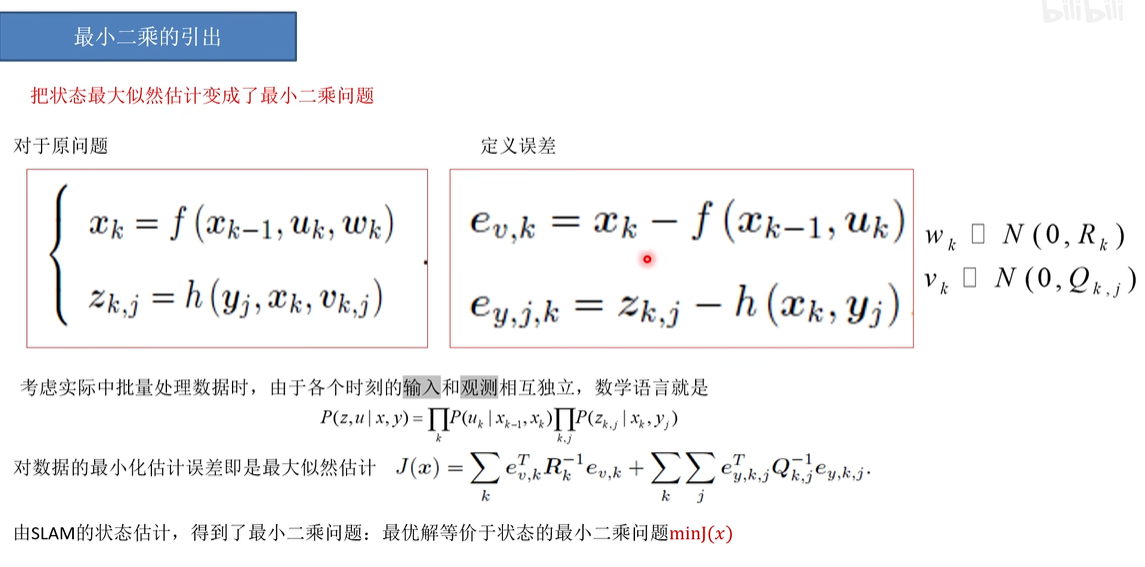

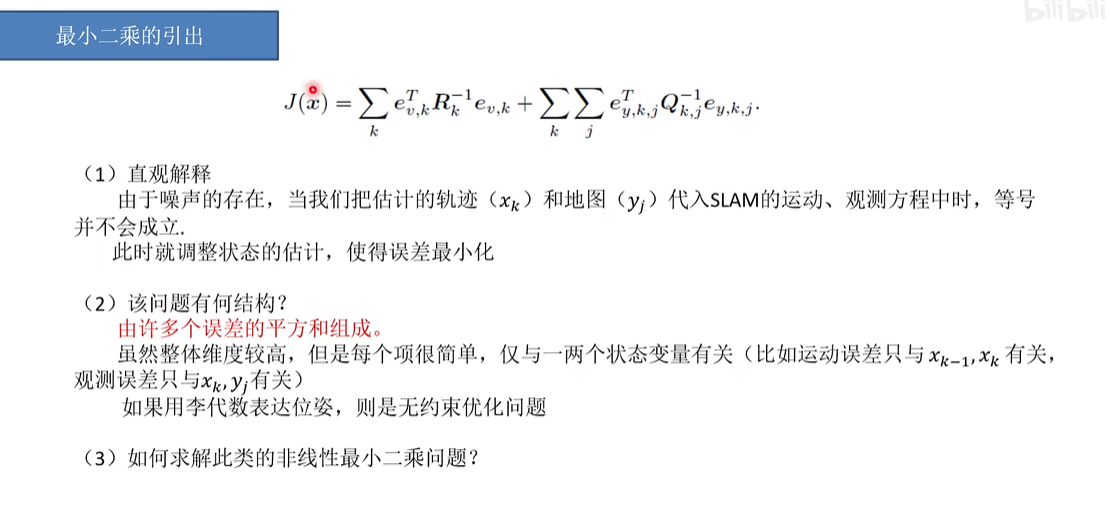

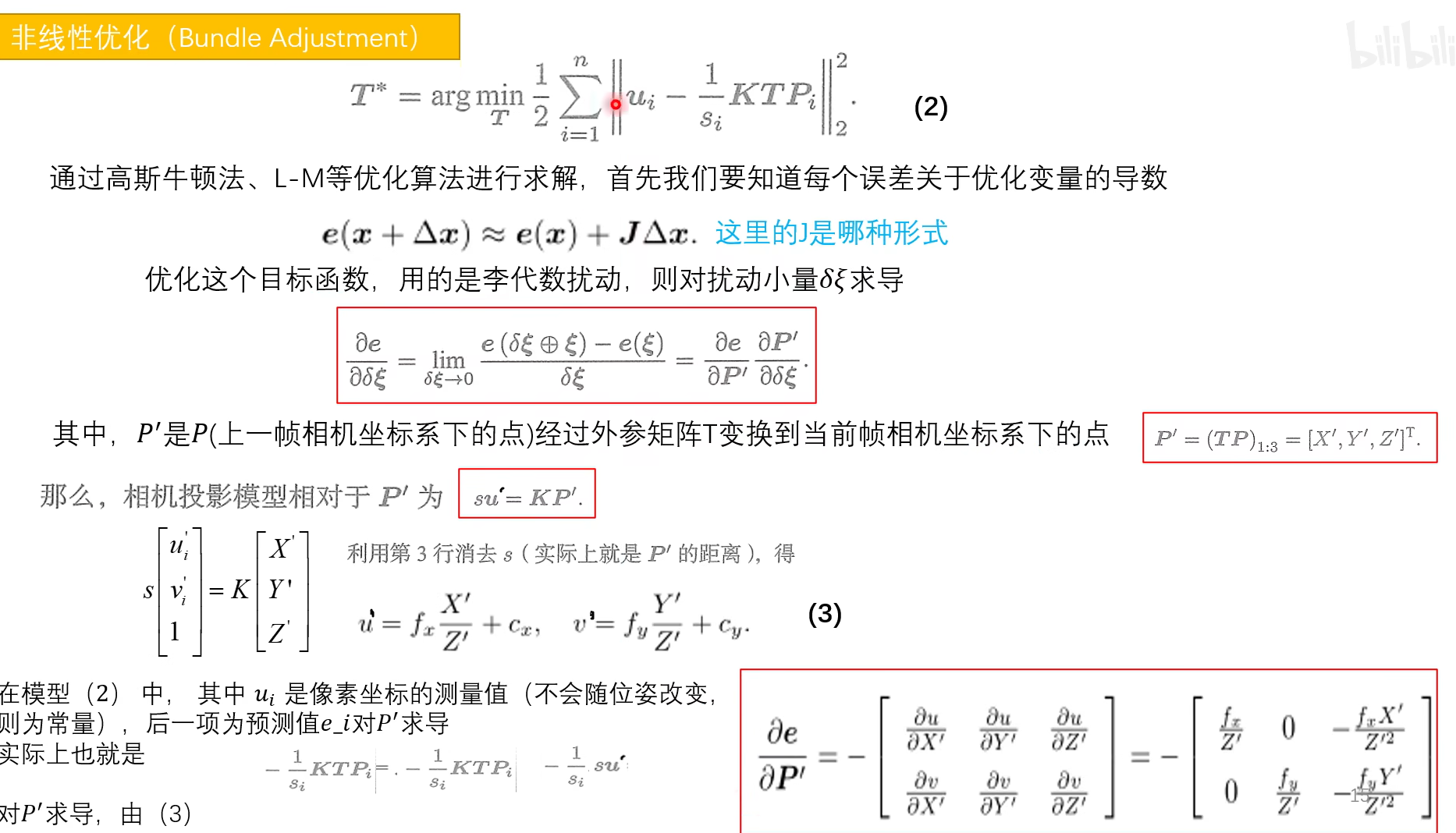

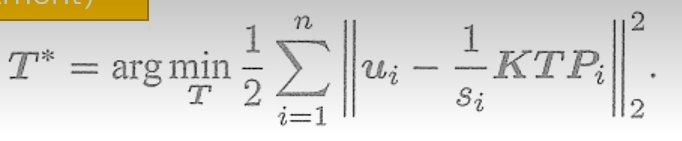

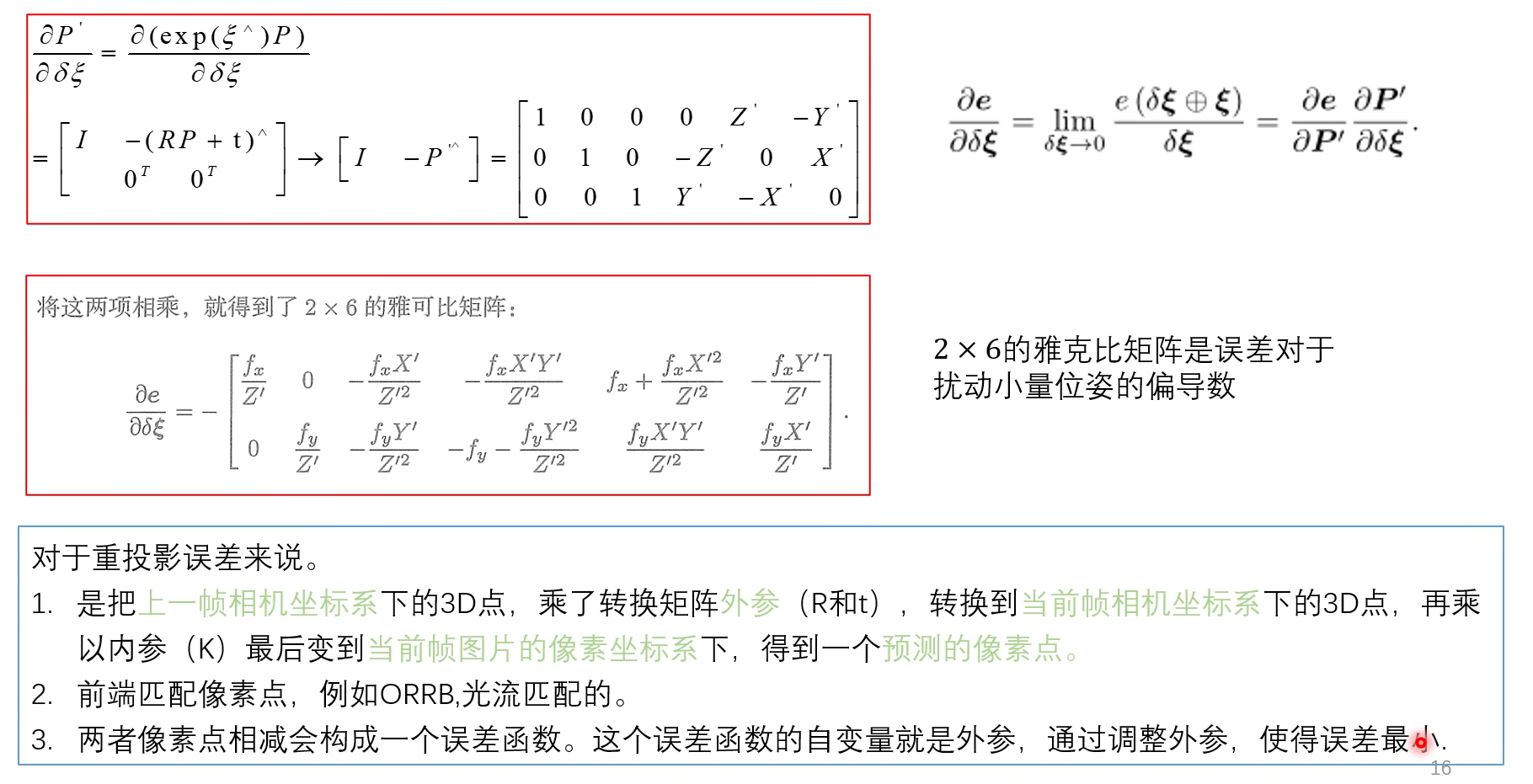

由于相机位姿未知以及观测点的噪声,该等式存在一个误差。我们将误差求和,构建最小二乘问题,然后寻找做好的相机位姿,使它最小化:

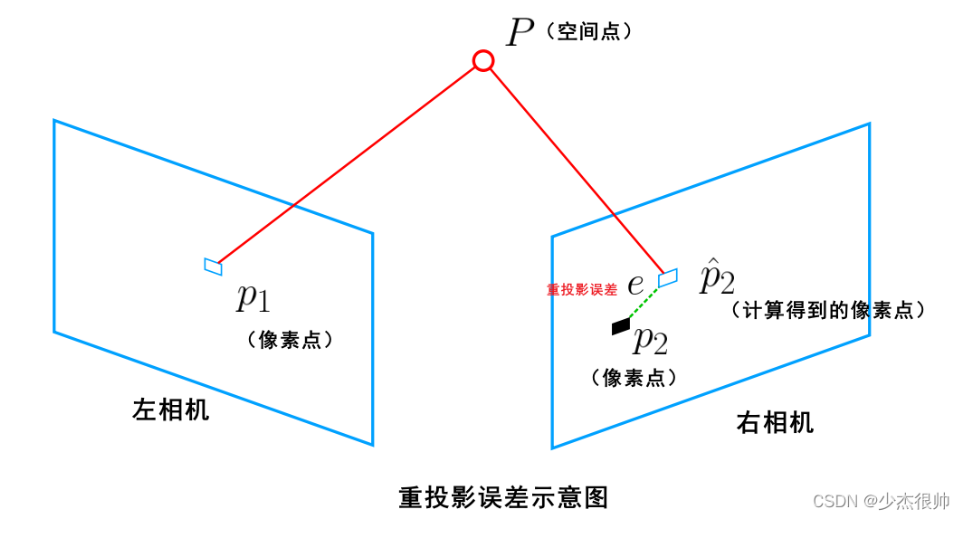

该问题的误差项,是将像素坐标(观测到的投影位置)与 3D 点按照当前估计的位姿进行投影得到的位置相比较得到的误差,所以称之为重投影误差。

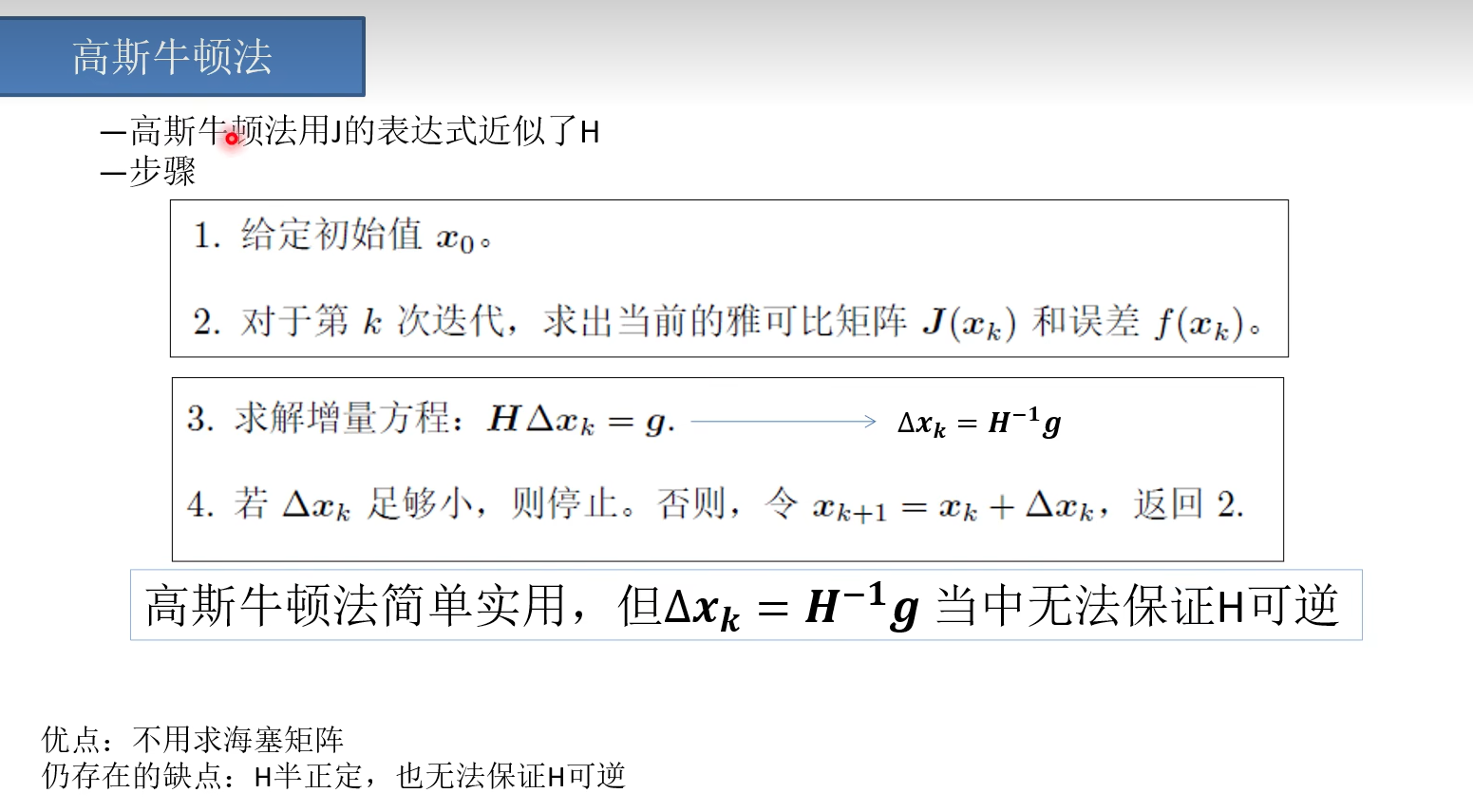

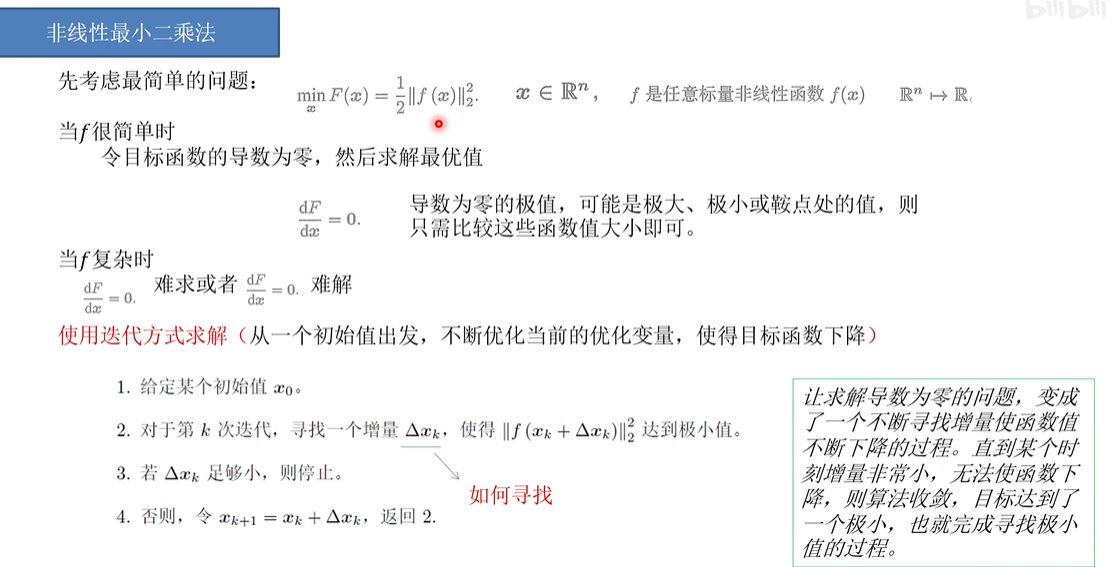

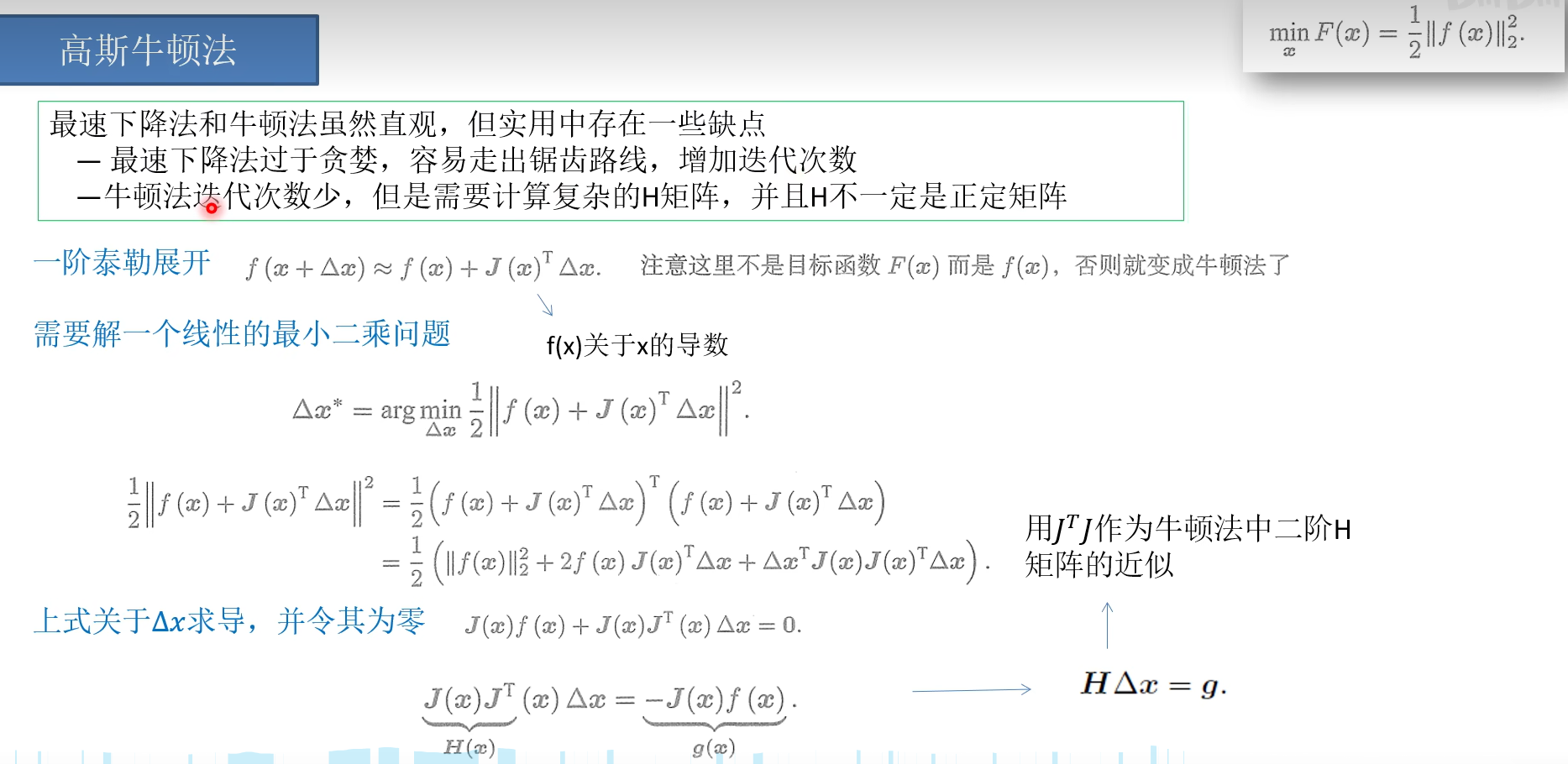

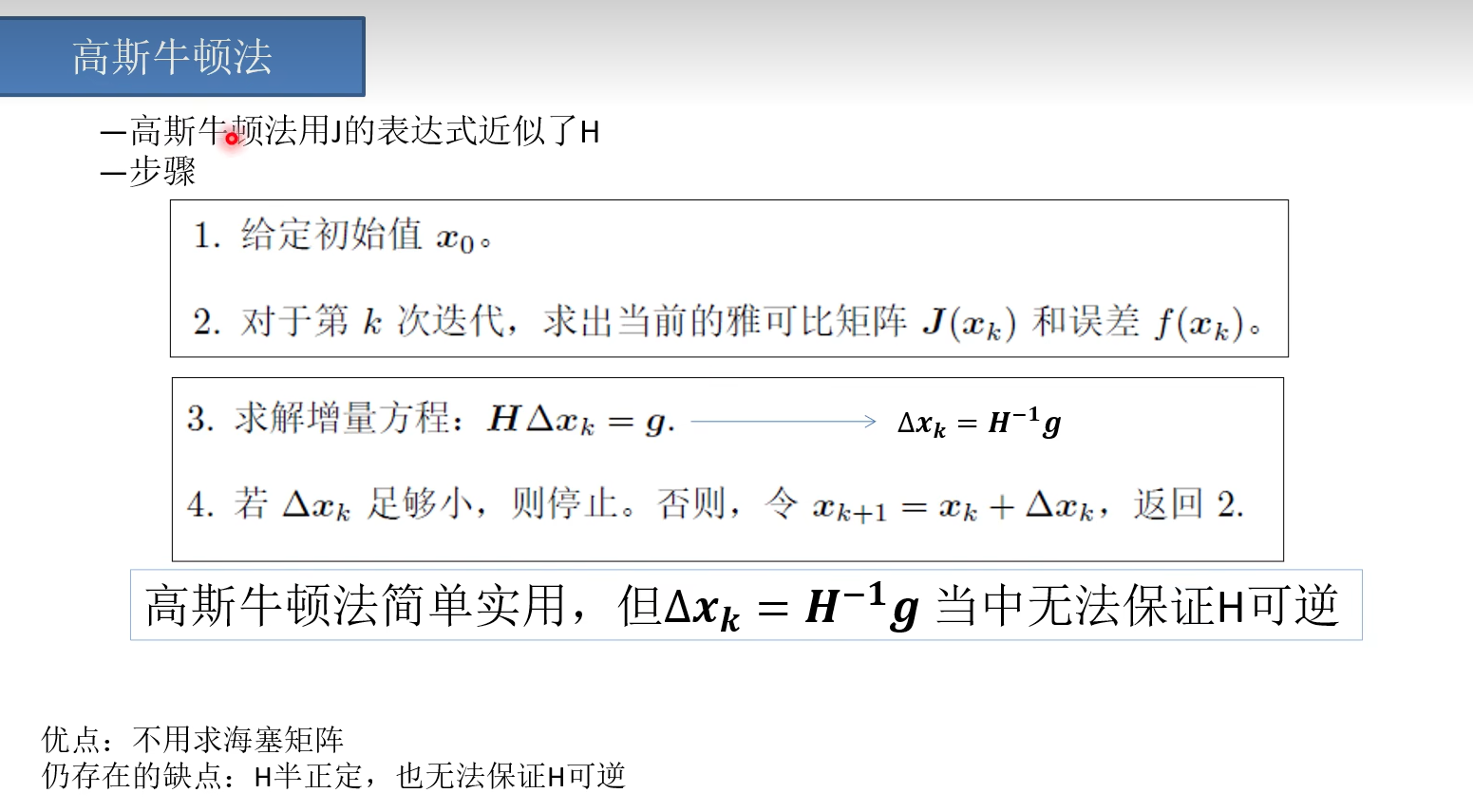

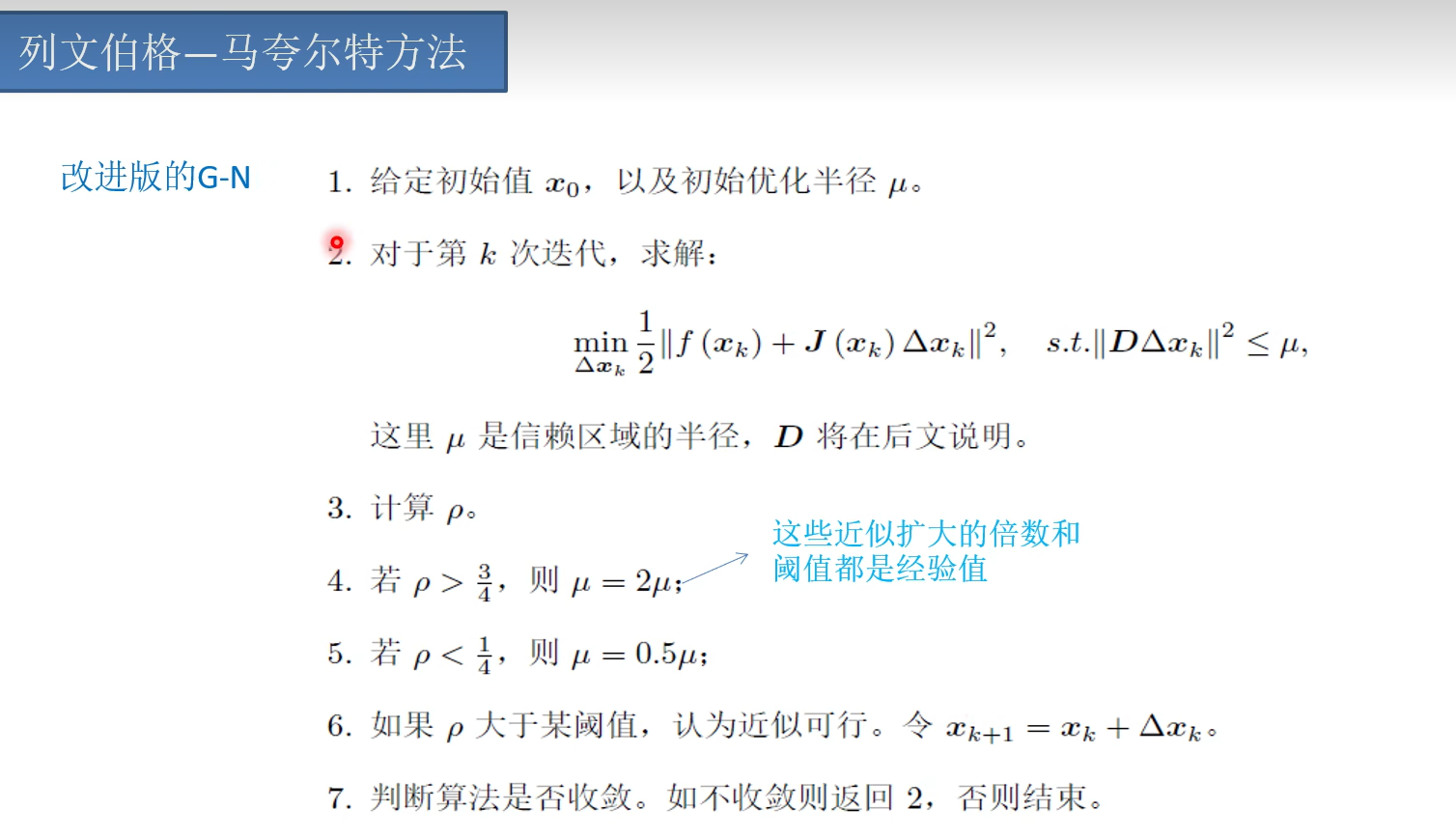

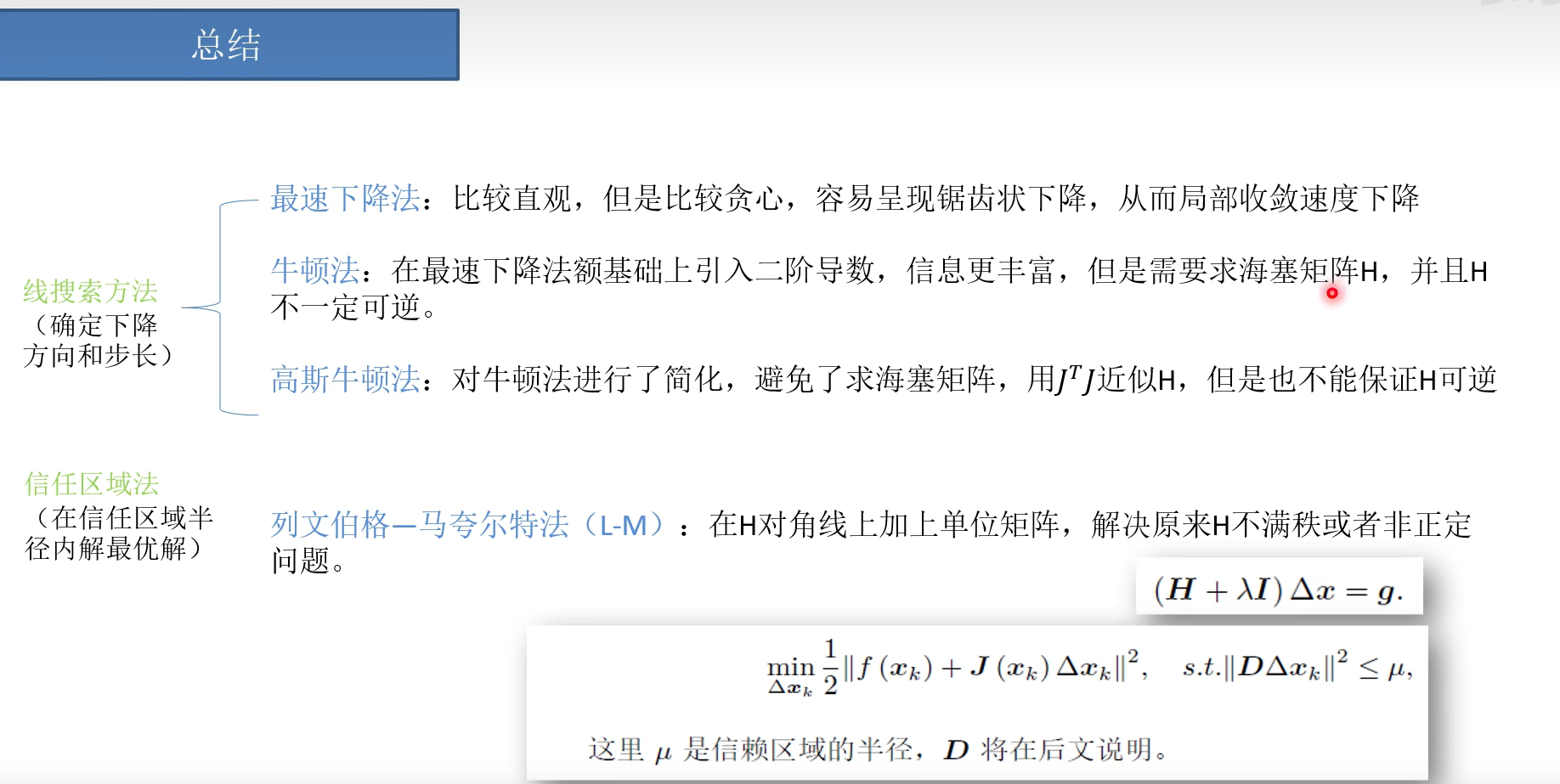

(用高斯牛顿法/列文伯格-马夸尔特方法求解)

3 误差方程求解

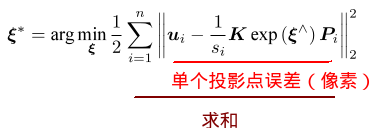

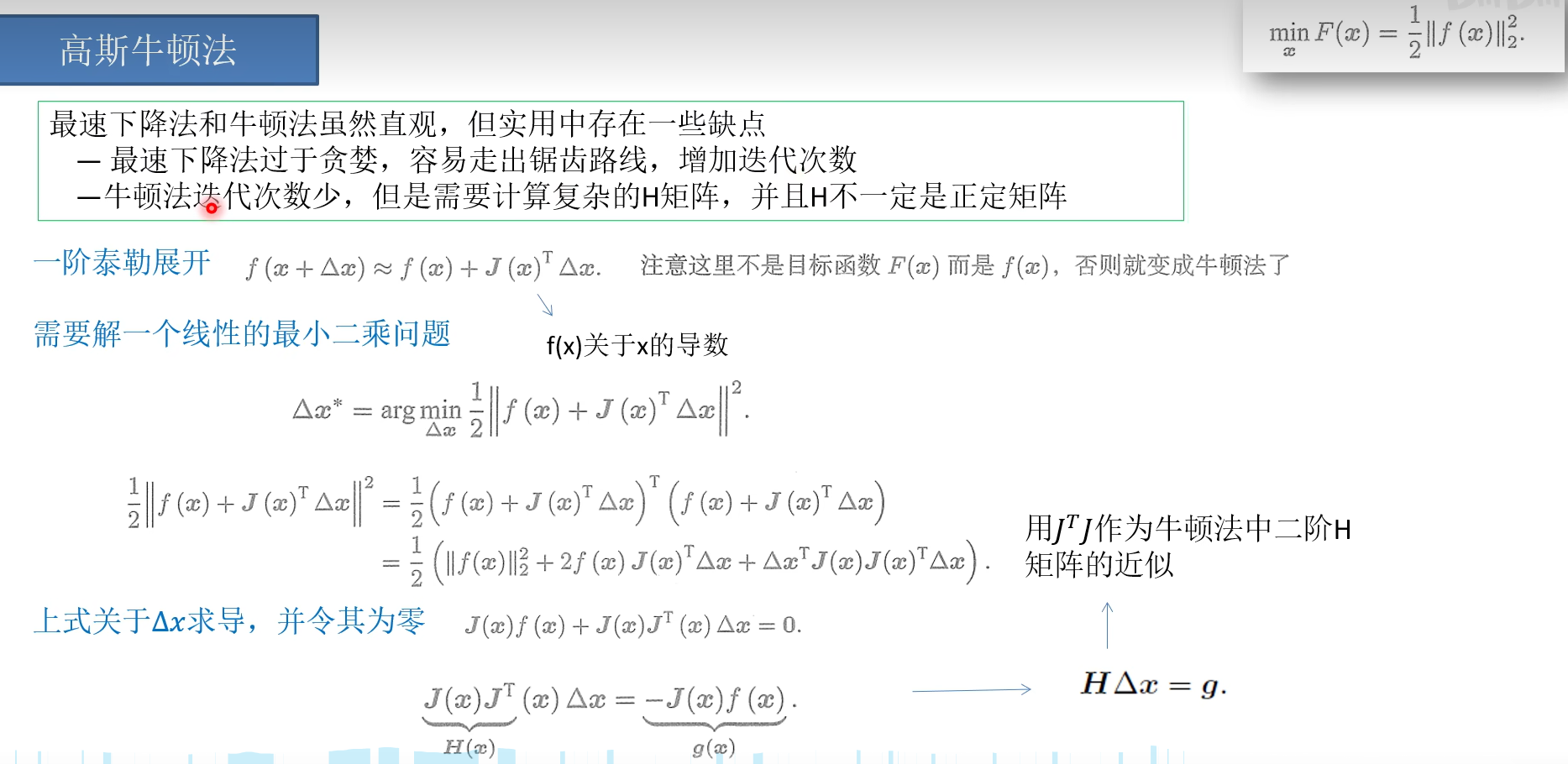

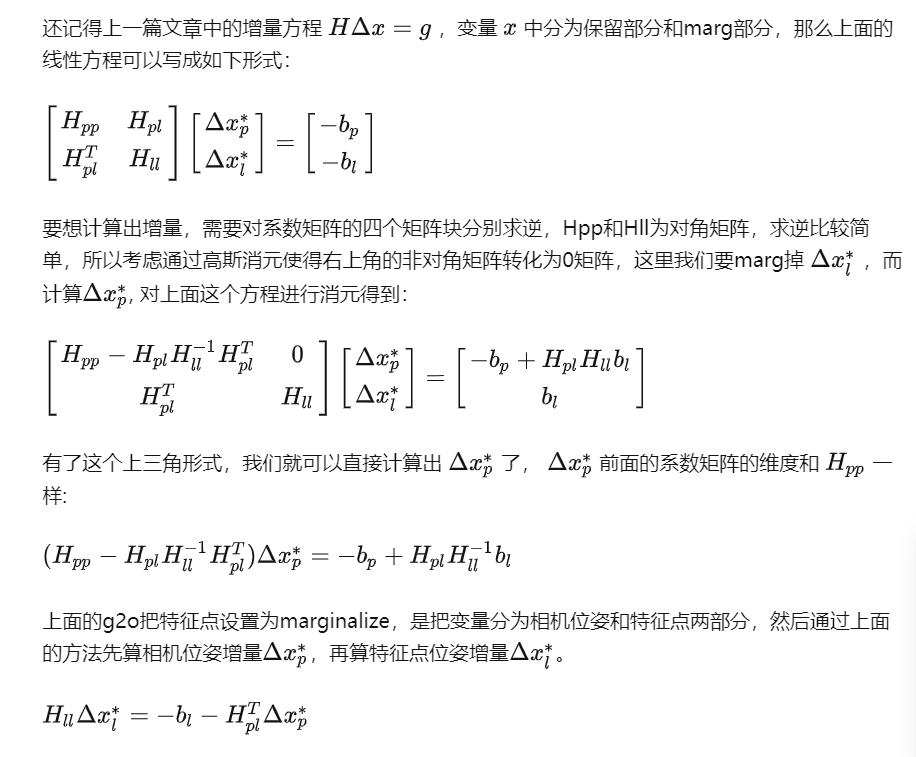

3-1 构造最小二乘解框架

高斯牛顿法举例

其中x 是位姿Rt 和 三维点xyz ,而非原来的 (u,v)观测值

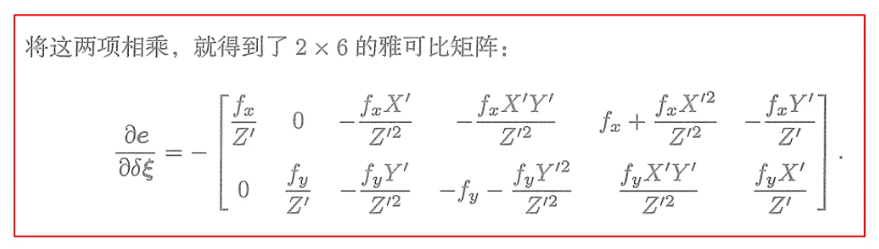

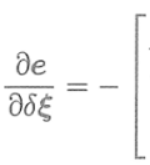

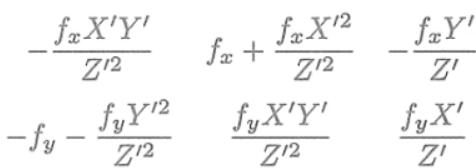

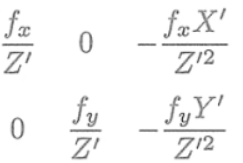

3-2 具体求解一阶雅可比方程

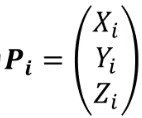

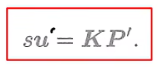

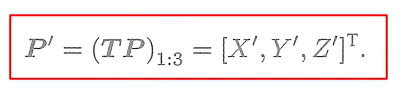

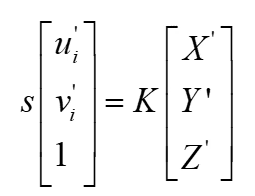

预测值

观测值

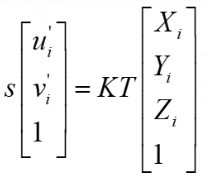

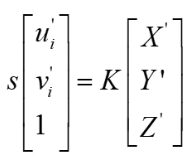

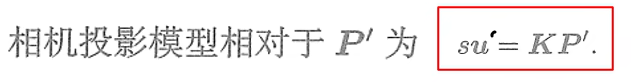

投影方程

三维点

最终

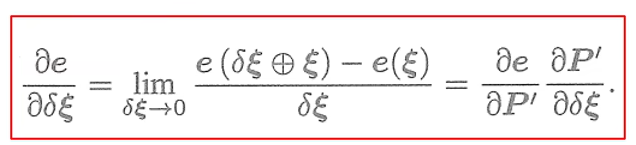

1(u,v)对dT 求导

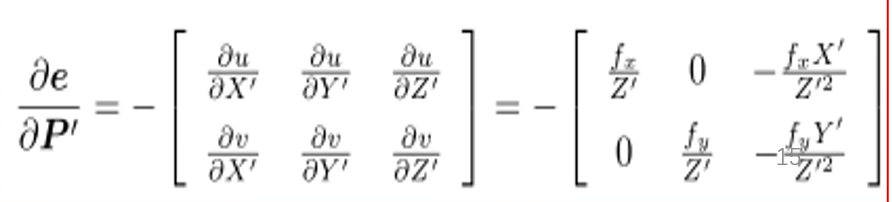

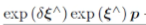

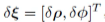

T被转化为扰动E 所以T分为两个部分

1 像素坐标(u,v)对 Rt求导

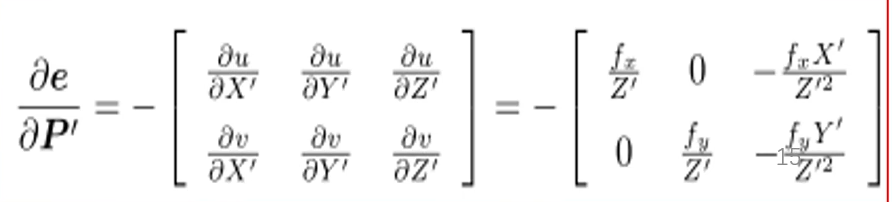

2 T对离地阿叔扰动dT求导

推导过程

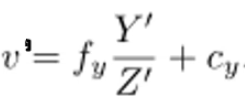

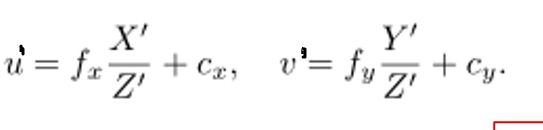

第一项求导

第二项求导

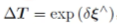

跟新流程

1*6维度 3维位姿 3为位置

1*6维度 3维位姿 3为位置

具体过程

1 Rt转化为dT

dT_1*6 = g2o::SE3Quat (R_3*3,t_1*3)

2初始值

_estimate = SE3Quat();

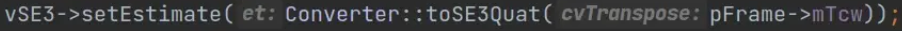

3设置值

pose->setEstimate (dT_1*6)

4每次跟新

1*6维度 3维位姿 3为位置

1*6维度 3维位姿 3为位置

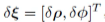

Eigen::Map<const Vector6> update(update_);

5转化

SE3Quat::exp(update)

6跟新

setEstimate(SE3Quat::exp(update)*estimate()); //更新方式

联合

实际代码 对应

位姿一阶导数J代码

_jacobianOplusXj(0, 0) = x * y / z_2 * cam->focal_length; _jacobianOplusXj(0, 1) = -(1 + (x * x / z_2)) * cam->focal_length; _jacobianOplusXj(0, 2) = y / z * cam->focal_length; _jacobianOplusXj(0, 3) = -1. / z * cam->focal_length; _jacobianOplusXj(0, 4) = 0; _jacobianOplusXj(0, 5) = x / z_2 * cam->focal_length; _jacobianOplusXj(1, 0) = (1 + y * y / z_2) * cam->focal_length; _jacobianOplusXj(1, 1) = -x * y / z_2 * cam->focal_length; _jacobianOplusXj(1, 2) = -x / z * cam->focal_length; _jacobianOplusXj(1, 3) = 0; _jacobianOplusXj(1, 4) = -1. / z * cam->focal_length; _jacobianOplusXj(1, 5) = y / z_2 * cam->focal_length;

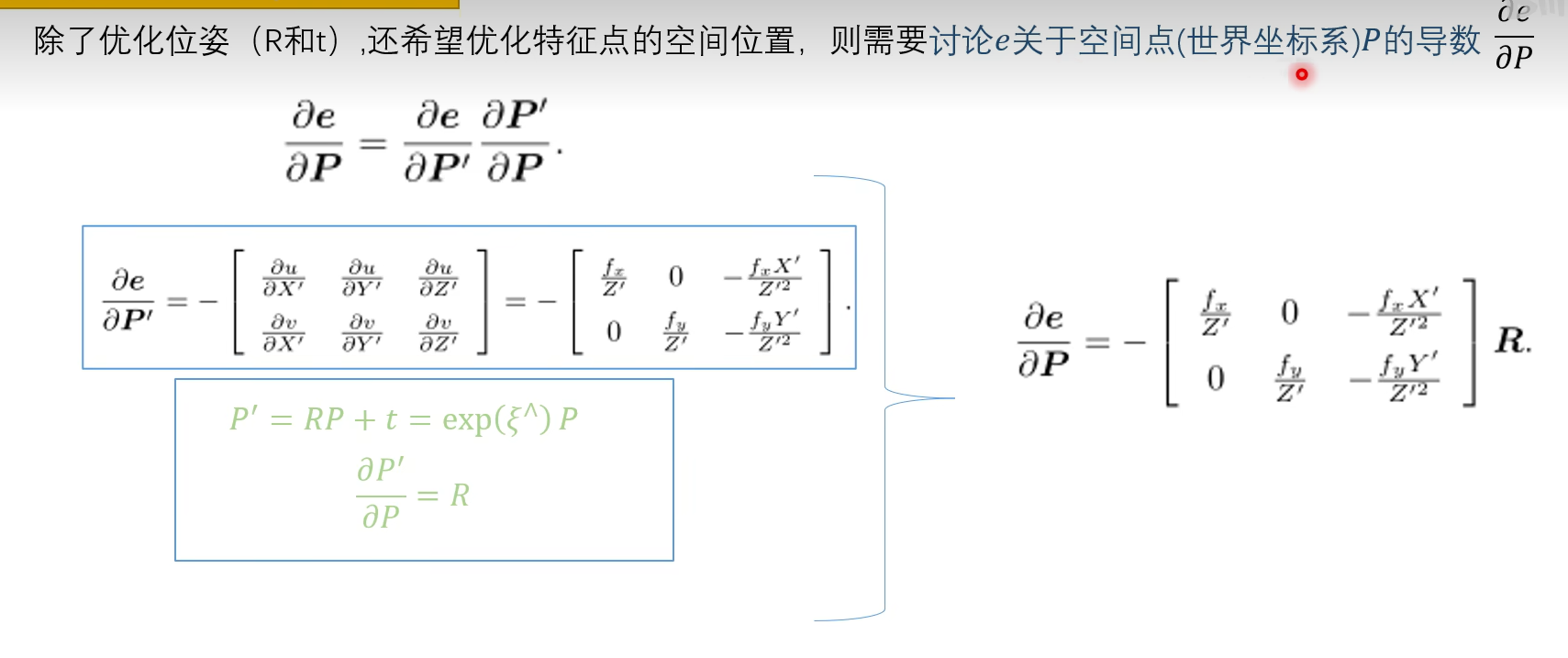

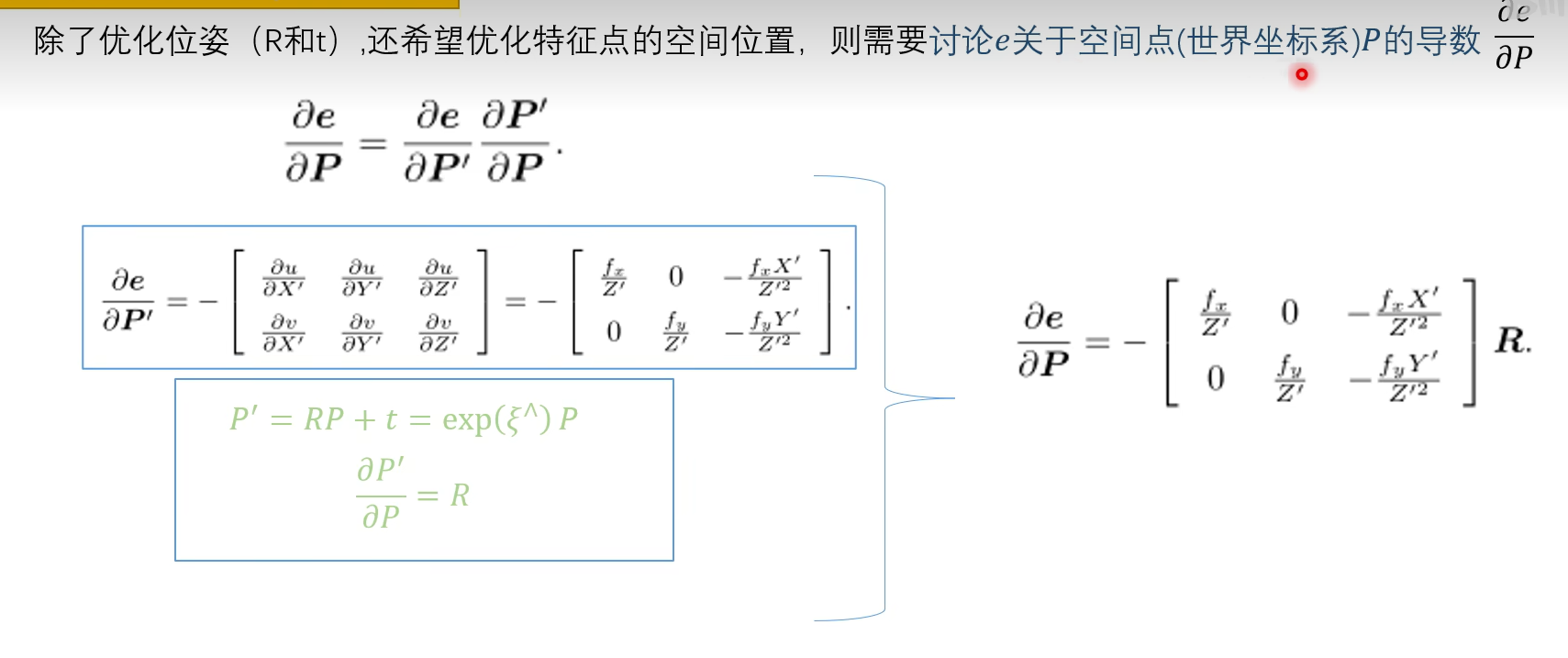

对空间点求导

三维点一阶导数J代码

Eigen::Matrix<double, 2, 3, Eigen::ColMajor> tmp; tmp(0, 0) = cam->focal_length; tmp(0, 1) = 0; tmp(0, 2) = -x / z * cam->focal_length; tmp(1, 0) = 0; tmp(1, 1) = cam->focal_length; tmp(1, 2) = -y / z * cam->focal_length; _jacobianOplusXi = -1. / z * tmp * T.rotation().toRotationMatrix();

位姿和三维点联合在一个边中代码

edge_project_xyz2uv.cpp

// g2o - General Graph Optimization

#include "edge_project_xyz2uv.h"

namespace g2o {

EdgeProjectXYZ2UV::EdgeProjectXYZ2UV()

: BaseBinaryEdge<2, Vector2, VertexPointXYZ, VertexSE3Expmap>() {

_cam = 0;

resizeParameters(1);

installParameter(_cam, 0);

}

bool EdgeProjectXYZ2UV::read(std::istream& is) {

readParamIds(is);

internal::readVector(is, _measurement);

return readInformationMatrix(is);

}

bool EdgeProjectXYZ2UV::write(std::ostream& os) const {

writeParamIds(os);

internal::writeVector(os, measurement());

return writeInformationMatrix(os);

}

void EdgeProjectXYZ2UV::computeError() {

const VertexSE3Expmap* v1 = static_cast<const VertexSE3Expmap*>(_vertices[1]);

const VertexPointXYZ* v2 = static_cast<const VertexPointXYZ*>(_vertices[0]);

const CameraParameters* cam =

static_cast<const CameraParameters*>(parameter(0));

_error = measurement() - cam->cam_map(v1->estimate().map(v2->estimate()));

}

void EdgeProjectXYZ2UV::linearizeOplus() {

VertexSE3Expmap* vj = static_cast<VertexSE3Expmap*>(_vertices[1]);

SE3Quat T(vj->estimate());

VertexPointXYZ* vi = static_cast<VertexPointXYZ*>(_vertices[0]);

Vector3 xyz = vi->estimate();

Vector3 xyz_trans = T.map(xyz);

double x = xyz_trans[0];

double y = xyz_trans[1];

double z = xyz_trans[2];

double z_2 = z * z;

const CameraParameters* cam =

static_cast<const CameraParameters*>(parameter(0));

Eigen::Matrix<double, 2, 3, Eigen::ColMajor> tmp;

tmp(0, 0) = cam->focal_length;

tmp(0, 1) = 0;

tmp(0, 2) = -x / z * cam->focal_length;

tmp(1, 0) = 0;

tmp(1, 1) = cam->focal_length;

tmp(1, 2) = -y / z * cam->focal_length;

_jacobianOplusXi = -1. / z * tmp * T.rotation().toRotationMatrix();

_jacobianOplusXj(0, 0) = x * y / z_2 * cam->focal_length;

_jacobianOplusXj(0, 1) = -(1 + (x * x / z_2)) * cam->focal_length;

_jacobianOplusXj(0, 2) = y / z * cam->focal_length;

_jacobianOplusXj(0, 3) = -1. / z * cam->focal_length;

_jacobianOplusXj(0, 4) = 0;

_jacobianOplusXj(0, 5) = x / z_2 * cam->focal_length;

_jacobianOplusXj(1, 0) = (1 + y * y / z_2) * cam->focal_length;

_jacobianOplusXj(1, 1) = -x * y / z_2 * cam->focal_length;

_jacobianOplusXj(1, 2) = -x / z * cam->focal_length;

_jacobianOplusXj(1, 3) = 0;

_jacobianOplusXj(1, 4) = -1. / z * cam->focal_length;

_jacobianOplusXj(1, 5) = y / z_2 * cam->focal_length;

}

} // namespace g2o

具体推导

https://www.bilibili.com/video/BV1ue4y1R7Ei/?spm_id_from=333.788&vd_source=f88ed35500cb30c7be9bbe418a5998ca

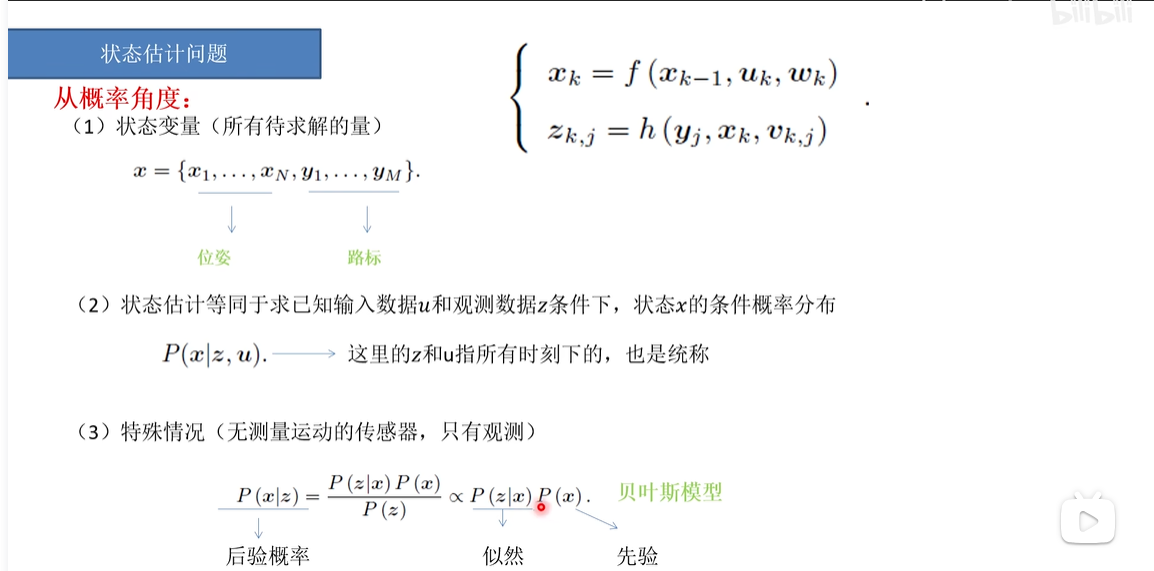

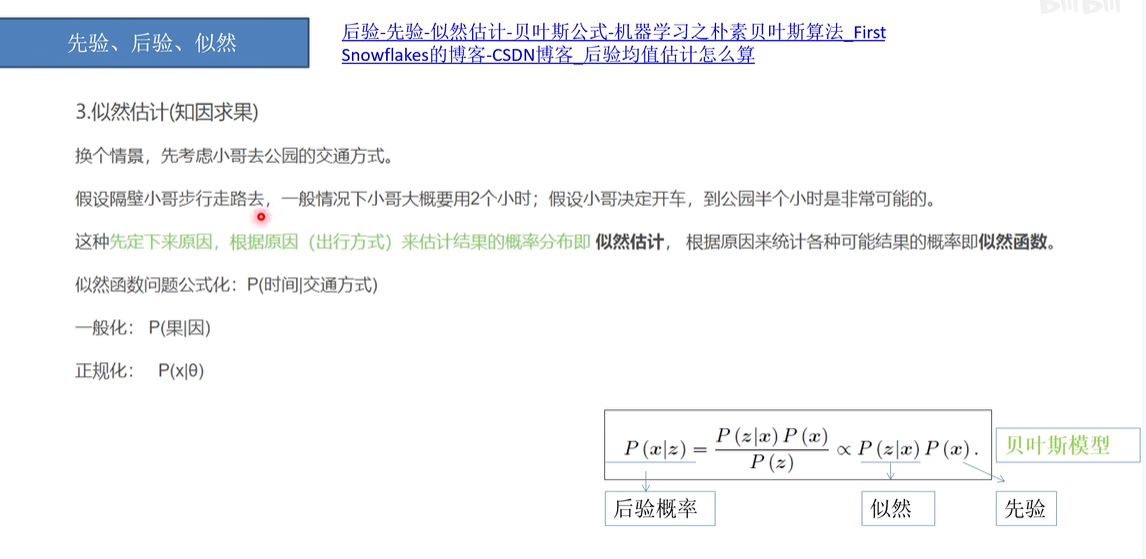

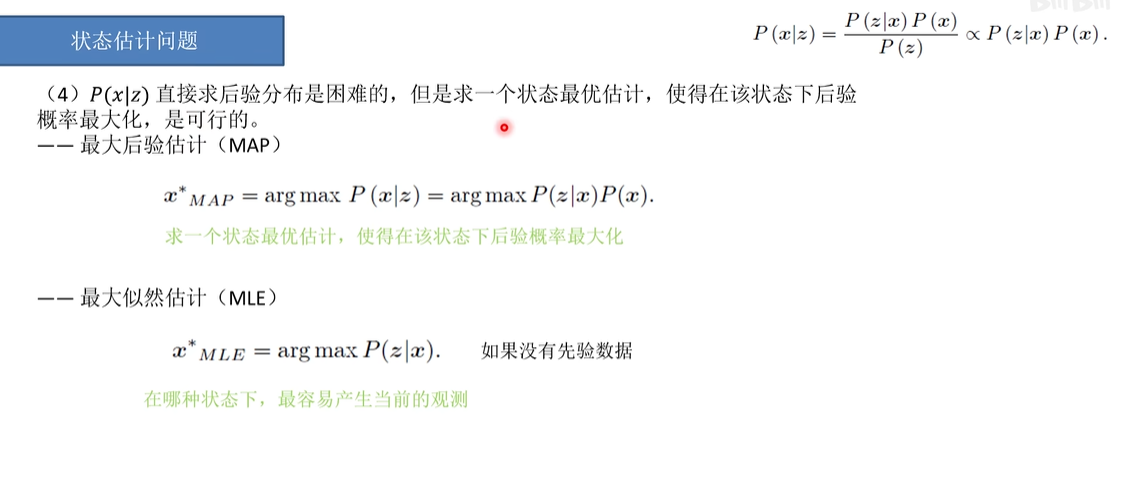

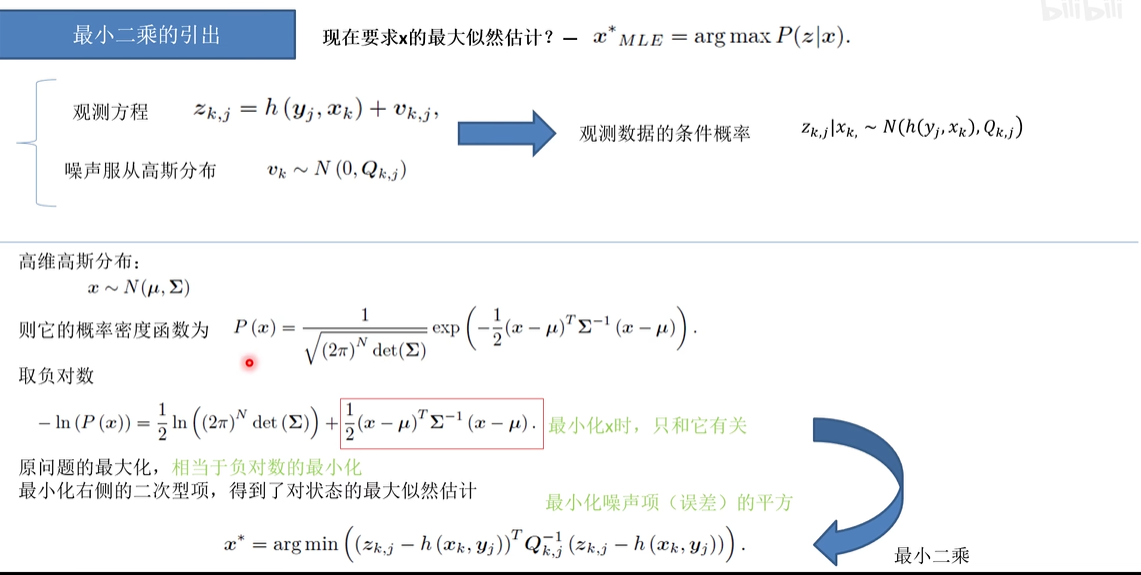

理论基础

理论引出最小二乘

知道观测结果,反推像素坐标和三维点坐标状态,反推的结果更新现有的结果,从而完成了优化。

重投影误差

https://i.cnblogs.com/posts/edit;postId=17832164

整理

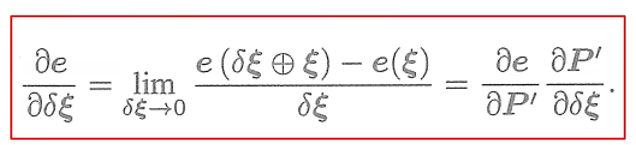

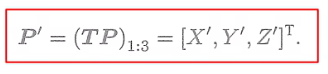

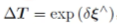

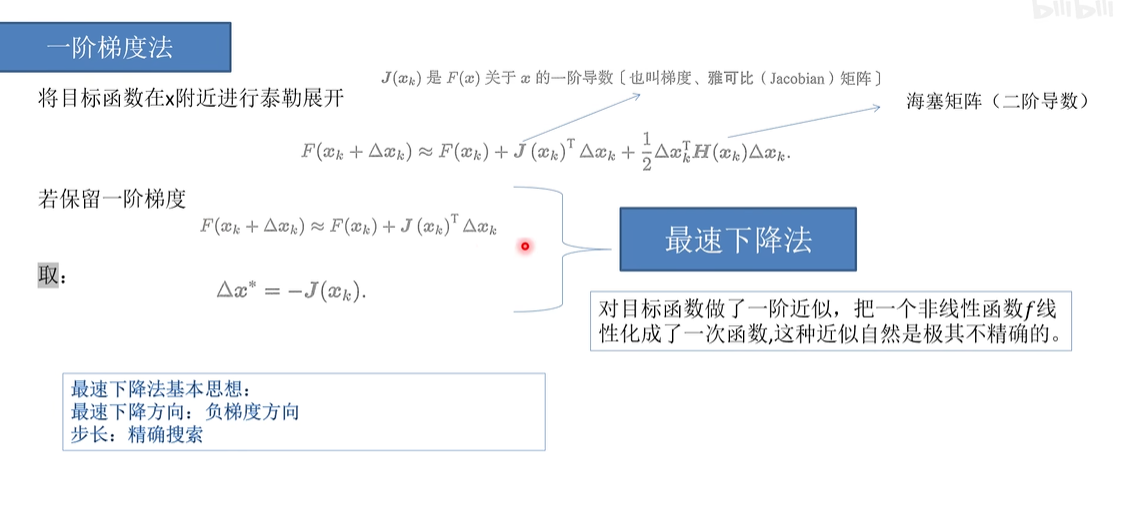

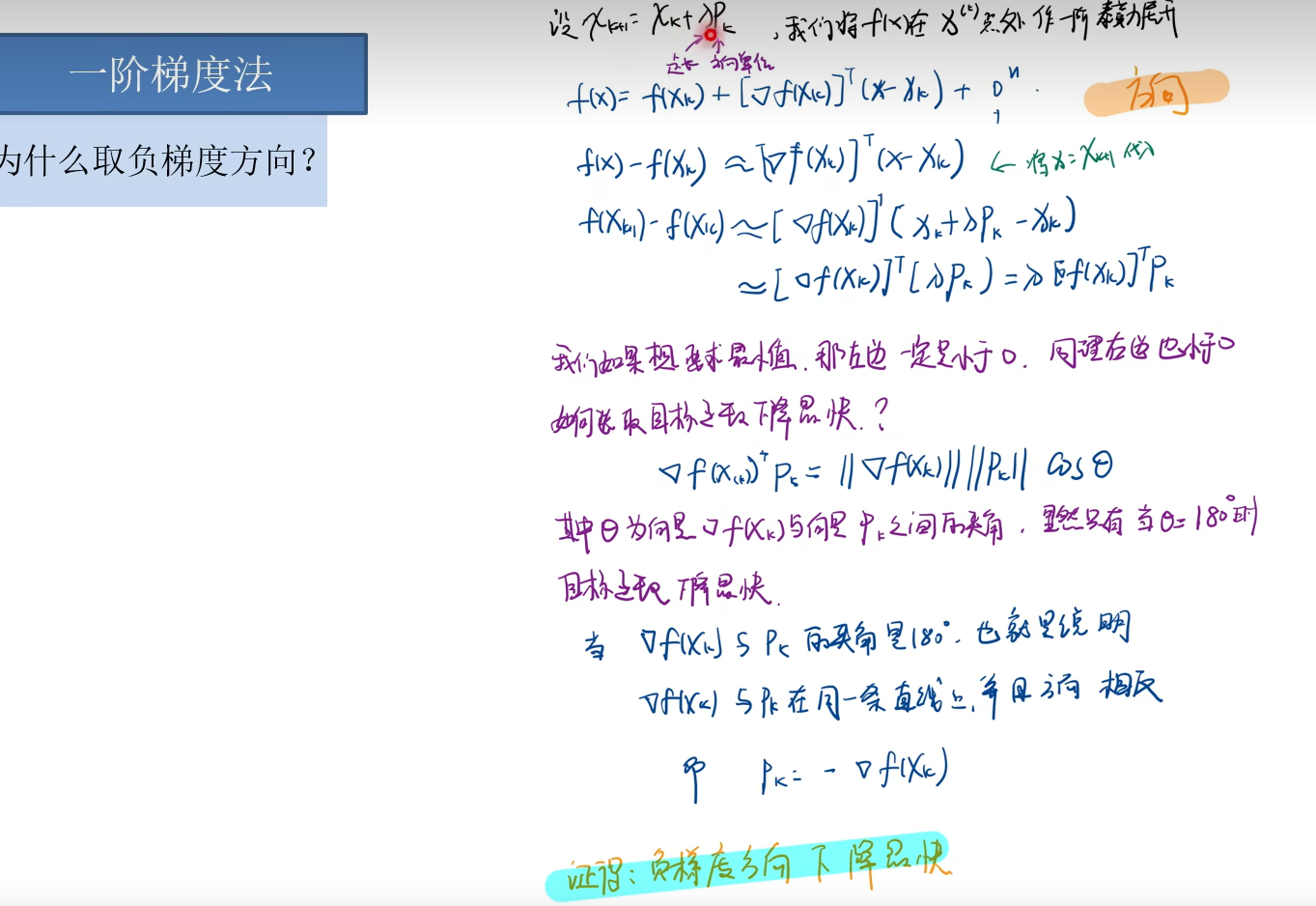

对当前点P'求导Rt-T-对应李代数E

误差是(u,v)对T求导,T对AT求导 的

误差E (u,v)对P(x,y,z)求导

P'关于Rt的位姿

P'对Rt求导 李代数

推导

自己的手工推导

https://www.cnblogs.com/gooutlook/p/16412222.html

B站PPT

https://www.bilibili.com/video/BV1LT411V7zv/?spm_id_from=333.788&vd_source=f88ed35500cb30c7be9bbe418a5998ca

浙公网安备 33010602011771号

浙公网安备 33010602011771号