今天下雪了,是个看《白色相簿2》的好日子。

昨天我们获取所有长评url,今天要解析这些url获取更多的信息随便,点开一个,我们需要的数据有标题,时间,内容。点赞数和评论先不弄了。

解析json的时候用的正则表达式,这次就用xpath吧。

代码:

from lxml import html

import requests

import csv

# 请求头 可自己查看自己的 来更改

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 '

'Safari/537.36 Core/1.70.3741.400 QQBrowser/10.5.3863.400',

'Referer': 'https://www.bilibili.com/bangumi/media/md3516/?spm_id_from=666.25.b_7265766965775f6d6f64756c65.1'

}

# csv文件的头

a = [

'article', 'avatar', 'uname', 'str_url', 'title', 'content'

]

lists = []

lists_w = []

etree = html.etree

with open('a.csv', 'r', encoding='utf-8') as fp:

reader = csv.reader(fp)

# 把第一行消掉

next(fp)

for x in reader:

lists.append(x)

x = 0

while x < len(lists):

print(x)

print(len(lists[x]))

resp = requests.get(lists[x][3])

html = etree.HTML(resp.text)

p = html.xpath("//div[@class='article-holder']//p/text()")

title = html.xpath("//h1[@class='title']/text()")

if len(p) != 0 and len(title) != 0:

list_w = [lists[x][0], lists[x][1], lists[x][2], lists[x][3], title[0], p[0]]

lists_w.append(list_w)

else:

pass

x = x + 1

print(lists_w)

with open('b.csv', 'w', encoding='utf-8', newline='') as fp:

writer = csv.writer(fp)

# 写入表头信息

writer.writerow(a)

writer.writerows(lists_w)

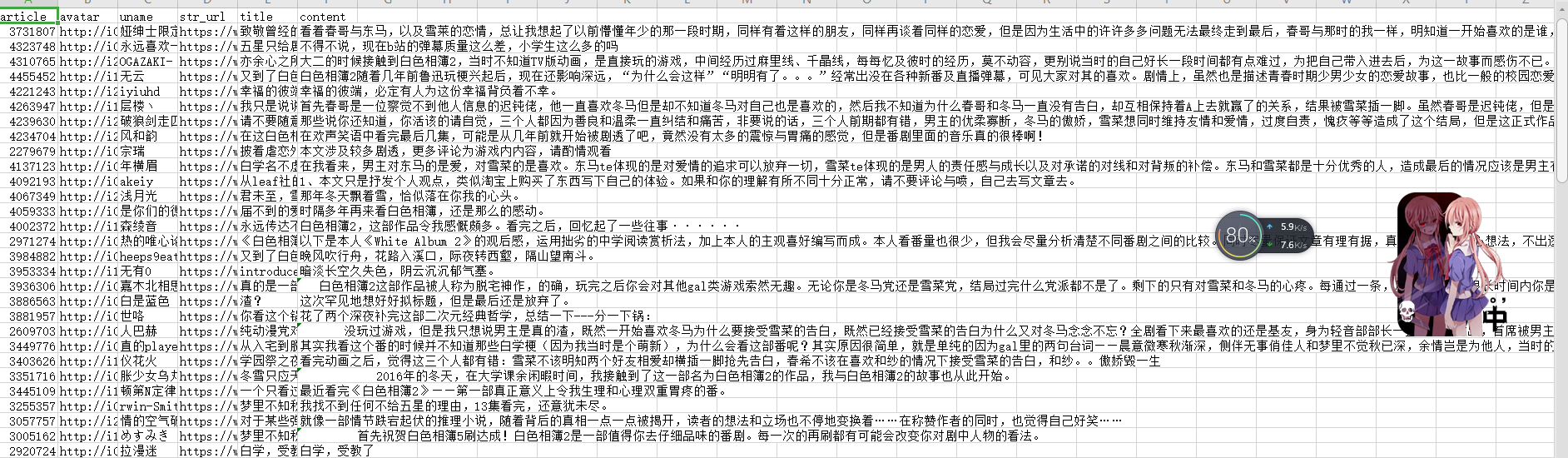

结果截图:

体会:遇到了几个问题,第一个是在谷歌的xpath helper软件里用xpth语言能够找到但是python就不行,后来我用python把整个网页下载下来,发现class属性是不一样的,然后就改了一下xpth语句就成功了,我们使用xpth语句就应对的是requests.get(url)返回的text,需要看text怎么写而不是看原网页的。第二个问题是我昨天爬的那个网页他今天没有了,因为数据就一百多条,我挨个看了一下那个长评的网页没有了,后来在代码加了限定就ok了。