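Web Scraping (10) - The Scrapy Framework (2) | Disguising the Crawler

The code in this note continues the example project from the previous article and extends our Tubatu (土巴兔) crawler project.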

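Disguising the crawler - writing a user agent middleware

Background: user agent

The user agent (UA) is a string sent in the `User-Agent` request header. It lets the server identify the client's operating system and version, CPU type, browser and version, rendering engine, browser language, browser plugins, and so on. Some websites inspect the UA to serve different pages to different operating systems and browsers, which can cause certain pages to display incorrectly in a particular browser. Spoofing the UA can get around this kind of detection.

Code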

middlewares.py

Write a custom middleware class that sets the `User-Agent` request header to a randomly chosen value.

    import random


    class my_useragent(object):
        def process_request(self, request, spider):
            # Sample User-Agent strings; collect your own from the web
            user_agent_list = [
                "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1",
                "Mozilla/5.0 (X11; CrOS i686 2268.111.0) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.57 Safari/536.11",
                "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1092.0 Safari/536.6",
                "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1090.0 Safari/536.6",
                "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/19.77.34.5 Safari/537.1",
                "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",
                "Mozilla/5.0 (Windows NT 6.0) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.36 Safari/536.5",
                "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
                "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
                "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_0) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
                "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
                "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
                "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
                "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
                "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
                "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.0 Safari/536.3",
                "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.24 (KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",
                "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/535.24 (KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",
            ]
            # Pick one at random
            agent = random.choice(user_agent_list)
            # Set it as the User-Agent header of the outgoing request
            request.headers['User-Agent'] = agent

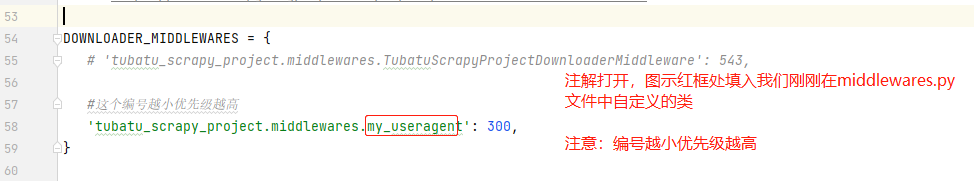

settings.py

Uncomment `DOWNLOADER_MIDDLEWARES` in the settings file, point it to the class we just wrote, and set its priority.

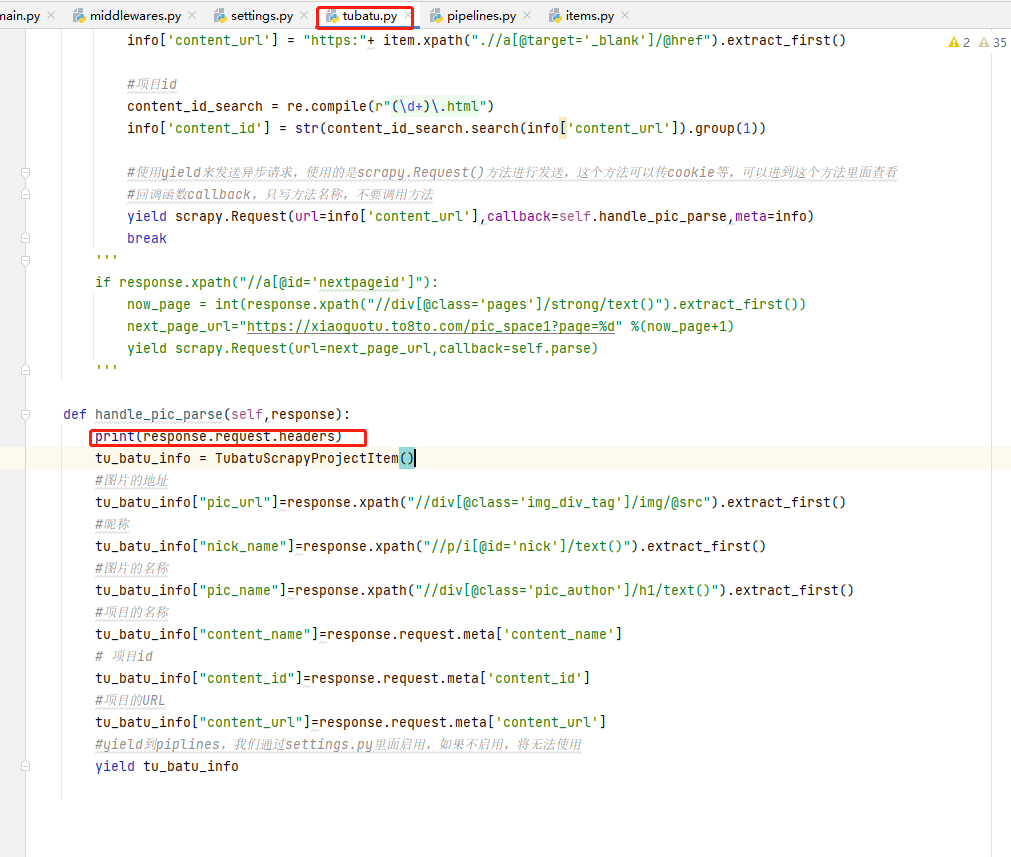

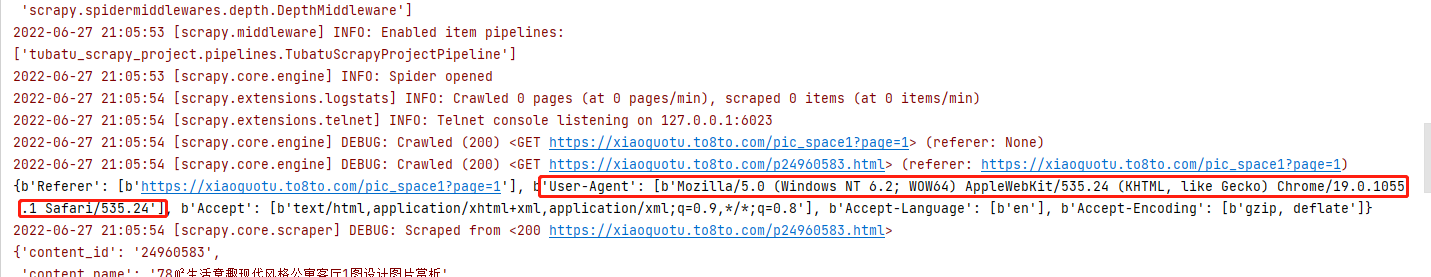
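For readers who cannot see the screenshots, the setting might look roughly like the sketch below. The package name `tubatu_project` and the priority `543` are assumptions made for illustration; use your own project's package name and whatever priority suits your setup.

```python
# settings.py (fragment)
# "tubatu_project" is a placeholder for the real project package name,
# and 543 is an assumed priority value.
DOWNLOADER_MIDDLEWARES = {
    "tubatu_project.middlewares.my_useragent": 543,
}
```

The key is the import path of the middleware class, and the value is its priority.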

Check

In the `handle_pic_parse` method of the spider file tubatu.py, print the request headers. The output shows that the user agent was replaced successfully.
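One way to do this check is sketched below. The helper name `sent_user_agent` is made up for illustration, and the stand-in response object exists only so the snippet runs without starting a crawl. It assumes the response exposes the originating request as `response.request`, as Scrapy responses do.

```python
from types import SimpleNamespace


def sent_user_agent(response):
    """Return the User-Agent of the request that produced `response`."""
    value = response.request.headers.get("User-Agent")
    # Scrapy stores header values as bytes; decode them for readable output.
    return value.decode("utf-8") if isinstance(value, bytes) else value


# Stand-in for a Scrapy response, so the helper can be tried without a crawl.
fake_response = SimpleNamespace(
    request=SimpleNamespace(headers={"User-Agent": b"Mozilla/5.0 (test)"})
)
print(sent_user_agent(fake_response))
```

Inside `handle_pic_parse(self, response)`, the same check would be `print(sent_user_agent(response))`. Running the spider a few times should show different values from the list.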

Disguising the crawler - writing an IP proxy middleware

Code

middlewares.py

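Write a custom proxy middleware class. It Base64-encodes the username and password (this is an encoding, not encryption) and passes the proxy credentials in a request header.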

    import base64


    class my_proxy(object):
        def process_request(self, request, spider):
            # Proxy host and port
            request.meta['proxy'] = "http://" + 'b5.t.16yun.cn:6460'
            # Credentials in the form username:password
            proxy_name_pass = '16GCAQYD:917750'.encode('utf-8')
            encode_pass_name = base64.b64encode(proxy_name_pass)
            # Put the proxy credentials into the request headers.
            # Note: there must be a space after "Basic"
            request.headers['Proxy-Authorization'] = 'Basic ' + encode_pass_name.decode()

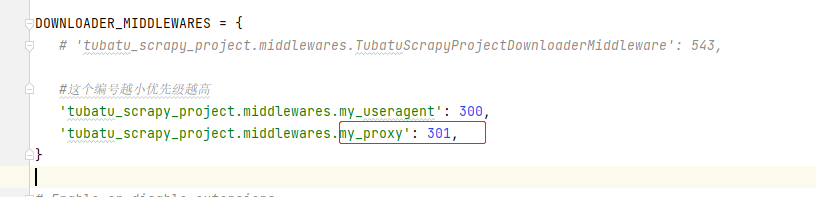
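To make the credentials step easier to follow, the sketch below builds the `Proxy-Authorization` value the same way as the class above. It also builds the `http://username:password@host:port` form that Scrapy's `HttpProxyMiddleware` documentation describes for the `proxy` meta key. The helper names, the host `proxy.example.com:8000`, and the credentials are placeholders invented for illustration, not part of the original project.

```python
import base64
from urllib.parse import quote


def basic_proxy_auth(username, password):
    """Build a Proxy-Authorization value: 'Basic ' + base64('username:password')."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8"))
    return "Basic " + token.decode("ascii")


def proxy_url_with_credentials(host_port, username, password):
    """Embed percent-encoded credentials directly in the proxy URL."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"http://{user}:{pwd}@{host_port}"


# Placeholder values, for illustration only.
print(basic_proxy_auth("user", "secret"))
print(proxy_url_with_credentials("proxy.example.com:8000", "user", "secret"))
```

Inside `process_request`, the second form would be assigned to `request.meta['proxy']`. Whether the header form or the URL form is the better fit may depend on the Scrapy version in use.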

settings.py

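Add the custom IP proxy class to `DOWNLOADER_MIDDLEWARES`. Give it a priority different from the user agent middleware's, so that the order in which the two run is explicit.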
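A minimal sketch of the resulting setting, again assuming a placeholder package name `tubatu_project` and illustrative priority values `543` and `544`:

```python
# settings.py (fragment)
# "tubatu_project" and both priority values are assumptions for illustration.
DOWNLOADER_MIDDLEWARES = {
    "tubatu_project.middlewares.my_useragent": 543,
    "tubatu_project.middlewares.my_proxy": 544,
}

# Scrapy calls process_request() in increasing priority order,
# so listing the entries sorted by value shows the order they run in.
for path, priority in sorted(DOWNLOADER_MIDDLEWARES.items(), key=lambda kv: kv[1]):
    print(priority, path)
```

With these values, `my_useragent` handles each request before `my_proxy` does.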
