spark sql访问hive表

1.将hive-site.xml拷贝到spark目录下conf文件夹

2.(非必需)将mysql的jar包引入到spark的classpath,方式有如下两种:

方式1:需要在$SPARK_HOME/conf/spark-env.sh中的SPARK_CLASSPATH添加jdbc驱动的jar包

export SPARK_CLASSPATH=$SPARK_CLASSPATH:/usr/local/hive-1.2.1/lib/mysql-connector-java-5.1.31-bin.jar

方式2:在spark-sql命令中指定driver-class-path,如

local模式

spark-sql --driver-class-path /usr/local/hive-1.2.1/lib/mysql-connector-java-5.1.31-bin.jar

集群模式:

spark-sql --master yarn --driver-class-path /usr/local/hive-1.2.1/lib/mysql-connector-java-5.1.31-bin.jar

开启HiverServer2

默认模式为yarn-client,HiverServer作为一个yarn的应用运行,如图:

停止thriftserver

sbin/stop-thriftserver.sh

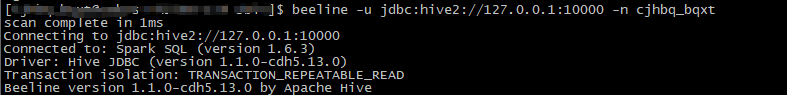

beeline客户端访问thrift server

通过-n指定sql以那个用户执行,下面两个ip是hadoop-master的内外网地址

beeline -u jdbc:hive2://10.9.2.100:10000 -n hive --hiveconf mapreduce.job.queuename=mxbs

beeline -u jdbc:hive2://122.23.368.32:10000 -n hive --hiveconf mapreduce.job.queuename=mxbs

java样例程序

import java.sql.*;

import java.util.HashSet;

import java.util.Set;

public class SimpleDemo1 {

private final static Set<String> HIVE_CONFIGS = initConfig();

/**

* hive conf设置

*

* @return

*/

private static Set<String> initConfig() {

Set<String> configs = new HashSet<String>();

configs.add("set mapreduce.job.queuename=queue1");

configs.add("set hive.cli.print.header=false");

return configs;

}

/**

* 临时去掉所有错误处理和资源关闭

* @param args

* @throws ClassNotFoundException

* @throws SQLException

*/

public static void main(String[] args) throws ClassNotFoundException, SQLException {

String jdbcdriver = "org.apache.hive.jdbc.HiveDriver";

String jdbcurl = "jdbc:hive2://127.0.0.1:10000";

String username = "user001";

String password = "";

Connection conn = null;

Statement statement = null;

Class.forName(jdbcdriver);

DriverManager.setLoginTimeout(20);

conn = DriverManager.getConnection(jdbcurl, username, password);

statement = conn.createStatement();

for (String config : HIVE_CONFIGS) {

statement.execute(config);

}

String sql = "select * from db1.test limit 10";

print(statement.executeQuery(sql));

}

}

maven引用:

<hadoop.version>2.6.0-cdh5.13.0</hadoop.version>

<hive-jdbc.version>1.1.0</hive-jdbc.version>

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-jdbc</artifactId>

<version>${hive-jdbc.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>${hadoop.version}</version>

</dependency>

浙公网安备 33010602011771号

浙公网安备 33010602011771号