Web Crawler Final Project

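1. Pick a topic or website that interests you. (No two students may choose the same one.)

2. Write a crawler in Python to scrape data on that topic from the web.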

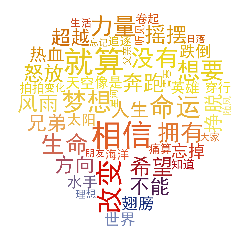

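3. Run a text analysis on the scraped data and generate a word cloud.

4. Explain and interpret the results of the text analysis.

5. Write a complete blog post describing the implementation, the problems you ran into and how you solved them, and the reasoning and conclusions of the data analysis.

6. Finally, submit all of the scraped data together with the source code for the crawler and the data analysis.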

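    #encoding=gbk

    # Read the entire lyrics file into a single string.
    # open() uses the platform default encoding here; pass encoding=... if the file differs.
    with open('./励志歌曲歌词.txt', 'r') as f:
        lyric = f.read()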

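The #encoding=gbk line is there because the script is saved in GBK and contains Chinese characters. Python 3 assumes source files are UTF-8 by default, so without the declaration the later steps fail with SyntaxError: Non-UTF-8 code starting with '\xc0'.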
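The mismatch behind that error can be reproduced without any source file. The sketch below is only an illustration: the helper decodes_as_utf8 is a hypothetical name introduced here, and the string is the lyrics file name used in this post. It encodes the text as GBK and then checks whether those bytes can be read as UTF-8, which is roughly what the interpreter attempts when no encoding is declared.

```python
def decodes_as_utf8(data: bytes) -> bool:
    """Return True if the bytes are valid UTF-8, False otherwise."""
    try:
        data.decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


# The lyrics file name from this post, used here only as sample Chinese text.
text = "励志歌曲歌词"

# Bytes as they would be stored in a file saved with the GBK encoding.
gbk_bytes = text.encode("gbk")

print(gbk_bytes)                               # raw GBK bytes
print(decodes_as_utf8(gbk_bytes))              # are the GBK bytes valid UTF-8?
print(gbk_bytes.decode("gbk") == text)         # decoding with the matching codec
print(decodes_as_utf8(text.encode("utf-8")))   # the same text saved as UTF-8
```

Saving the script as UTF-8 instead of GBK would be another way around the error, in which case the declaration is not needed.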

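Next, jieba segments the lyrics and extracts the most important words. Note that jieba.analyse.textrank ranks words by their TextRank weight rather than by raw frequency; the top 50 words and their weights are collected into a dictionary.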

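    import jieba.analyse

    # Extract the top 50 keywords together with their TextRank weights
    result = jieba.analyse.textrank(lyric, topK=50, withWeight=True)

    # Turn the list of (word, weight) pairs into a dict for the word cloud
    keywords = dict()
    for word, weight in result:
        keywords[word] = weight
    print(keywords)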
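To make the ranking step less of a black box, here is a simplified sketch of the idea behind TextRank: words that co-occur within a small window are linked in a graph, and a PageRank-style iteration spreads score along those links. This is not jieba's implementation. The function name, the defaults (window, damping, iterations) and the sample tokens are all assumptions chosen for illustration, and the input is assumed to be already segmented.

```python
from collections import defaultdict


def textrank(tokens, window=5, damping=0.85, iterations=10):
    """Score tokens with a simplified TextRank over a co-occurrence graph."""
    # Build an undirected weighted graph: each time two different tokens
    # appear within `window` positions of each other, their edge weight grows.
    graph = defaultdict(lambda: defaultdict(float))
    for i, word in enumerate(tokens):
        for other in tokens[i + 1:i + window]:
            if other != word:
                graph[word][other] += 1.0
                graph[other][word] += 1.0
    if not graph:
        return {}

    # Total edge weight per node, used to split a node's score among neighbors.
    out_sum = {word: sum(edges.values()) for word, edges in graph.items()}

    # Start from a uniform score and apply the PageRank-style update.
    scores = {word: 1.0 / len(graph) for word in graph}
    for _ in range(iterations):
        new_scores = {}
        for word, edges in graph.items():
            rank = sum(
                weight / out_sum[neighbor] * scores[neighbor]
                for neighbor, weight in edges.items()
            )
            new_scores[word] = (1 - damping) + damping * rank
        scores = new_scores
    return scores


# Hypothetical, already-segmented tokens standing in for real lyrics.
sample = ["dream", "heart", "dream", "road", "dream", "wind", "heart", "road"]
ranked = sorted(textrank(sample).items(), key=lambda item: item[1], reverse=True)
for word, score in ranked:
    print(word, round(score, 3))
```

A word that sits next to many different words ends up with a high score even if it is not the most frequent one, which is why the resulting weights can differ from a plain word count.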

    from PIL import Image
    import numpy as np
    import matplotlib.pyplot as plt
    from wordcloud import WordCloud, ImageColorGenerator

    # Load the image that serves as the mask and color source for the cloud
    image = Image.open('./tim.jpg')
    graph = np.array(image)

    # A font with Chinese glyphs is required, otherwise the words render as boxes
    wc = WordCloud(font_path='./fonts/simhei.ttf', background_color='White', max_words=50, mask=graph)
    wc.generate_from_frequencies(keywords)

    # Recolor the words with the colors of the mask image and display the result
    image_color = ImageColorGenerator(graph)
    plt.imshow(wc.recolor(color_func=image_color))
    plt.axis("off")
    plt.show()

Save the generated image:

    wc.to_file('dream.png')

Full code:

    #encoding=gbk
    import jieba.analyse
    from PIL import Image
    import numpy as np
    import matplotlib.pyplot as plt
    from wordcloud import WordCloud, ImageColorGenerator

    # Read the entire lyrics file into a single string
    with open('./励志歌曲歌词.txt', 'r') as f:
        lyric = f.read()

    # Extract the top 50 keywords together with their TextRank weights
    result = jieba.analyse.textrank(lyric, topK=50, withWeight=True)
    keywords = dict()
    for word, weight in result:
        keywords[word] = weight
    print(keywords)

    # Load the image that serves as the mask and color source for the cloud
    image = Image.open('./tim.jpg')
    graph = np.array(image)

    wc = WordCloud(font_path='./fonts/simhei.ttf', background_color='White', max_words=50, mask=graph)
    wc.generate_from_frequencies(keywords)

    # Recolor the words with the colors of the mask image and display the result
    image_color = ImageColorGenerator(graph)
    plt.imshow(wc.recolor(color_func=image_color))
    plt.axis("off")
    plt.show()

    # Save the generated image
    wc.to_file('dream.png')