09 Connecting Spark to a MySQL Database

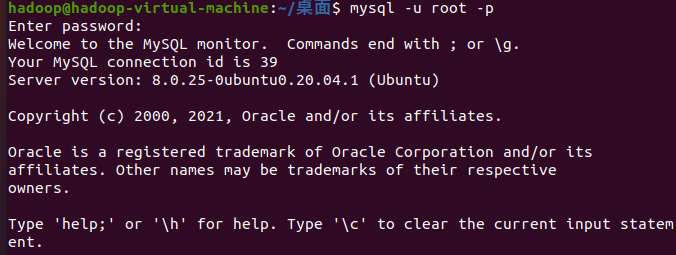

1. Install MySQL and start the service. Then check that it is running by logging in:

    mysql -u root -p
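A quick way to confirm from a script that the server is accepting connections is a plain TCP check. Below is a minimal sketch that assumes MySQL listens on its default port 3306. The helper name `is_port_open` is hypothetical. It only proves that something is listening on the port; it does not authenticate, which `mysql -u root -p` does.

```python
import socket

# Assumption: the server uses MySQL's default port (3306).
MYSQL_PORT = 3306


def is_port_open(host: str = "localhost", port: int = MYSQL_PORT,
                 timeout: float = 2.0) -> bool:
    """Hypothetical helper: True if a TCP connection to host:port succeeds."""
    try:
        # create_connection resolves the host and tries each address in turn.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts and unresolvable host names.
        return False


if __name__ == "__main__":
    state = "reachable" if is_port_open() else "not reachable"
    print(f"MySQL port {MYSQL_PORT} on localhost is {state}")
```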

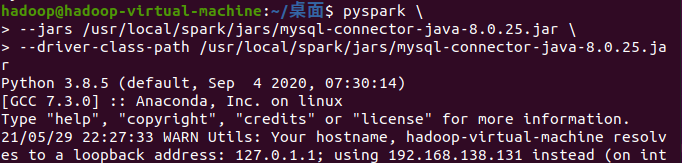

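2. Start pyspark with the MySQL JDBC driver (MySQL Connector/J) on its classpath. This assumes the Connector/J 8.0.25 jar has been placed in /usr/local/spark/jars/:

    pyspark \
      --jars /usr/local/spark/jars/mysql-connector-java-8.0.25.jar \
      --driver-class-path /usr/local/spark/jars/mysql-connector-java-8.0.25.jar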
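If pyspark is launched from a script, a missing jar only surfaces later as a "driver class not found" error. The sketch below checks for the jar up front and builds the same argument list as the command in step 2. The helper `pyspark_command` is hypothetical, and the jar path is the one this tutorial uses.

```python
import os
import shlex
from typing import List

# Path used in this tutorial; change it to wherever Connector/J was saved.
JAR = "/usr/local/spark/jars/mysql-connector-java-8.0.25.jar"


def pyspark_command(jar_path: str, check_exists: bool = True) -> List[str]:
    """Hypothetical helper: build the pyspark launch command from step 2.

    --jars adds the jar to the driver and executor classpaths;
    --driver-class-path adds it as an extra entry on the driver's class path.
    """
    if check_exists and not os.path.isfile(jar_path):
        raise FileNotFoundError(f"MySQL connector jar not found: {jar_path}")
    return ["pyspark", "--jars", jar_path, "--driver-class-path", jar_path]


if __name__ == "__main__":
    # Print a shell-ready version of the command without requiring the jar.
    print(shlex.join(pyspark_command(JAR, check_exists=False)))
```

The list form can be passed directly to `subprocess.run` without shell quoting. `shlex.join` (Python 3.8+) is only used to print a copy-pasteable command line.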

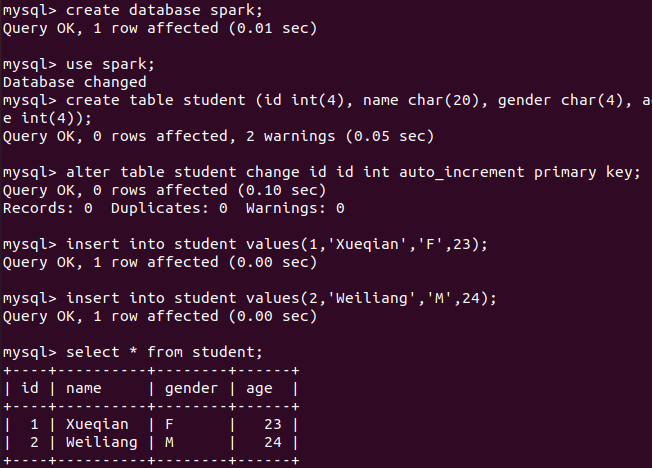

3. In the MySQL shell, create a database named spark containing a table named student, insert two rows, and check them:

    mysql> create database spark;
    mysql> use spark;
    mysql> create table student (id int(4), name char(20), gender char(4), age int(4));
    mysql> alter table student change id id int auto_increment primary key;
    mysql> insert into student values(1,'Xueqian','F',23);
    mysql> insert into student values(2,'Weiliang','M',24);
    mysql> select * from student;

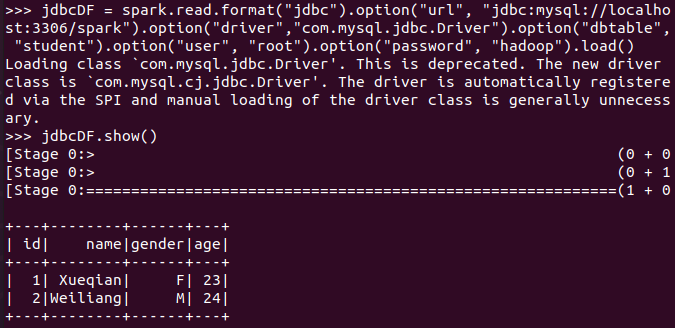

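4. Read the data in MySQL from Spark. Connector/J 8.x registers its driver as `com.mysql.cj.jdbc.Driver`. The old name `com.mysql.jdbc.Driver` still loads, but it prints a deprecation warning. `root`/`hadoop` are this tutorial's MySQL credentials; substitute your own.

    >>> jdbcDF = spark.read.format("jdbc") \
    ...     .option("url", "jdbc:mysql://localhost:3306/spark") \
    ...     .option("driver", "com.mysql.cj.jdbc.Driver") \
    ...     .option("dbtable", "student") \
    ...     .option("user", "root") \
    ...     .option("password", "hadoop") \
    ...     .load()
    >>> jdbcDF.show()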
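The same connection settings are needed again when writing in step 5. It can help to collect them in one place. Below is a minimal sketch using a hypothetical helper, `mysql_jdbc_options`, whose defaults mirror this tutorial's localhost/3306/spark setup.

```python
from typing import Dict


def mysql_jdbc_options(table: str, user: str, password: str,
                       host: str = "localhost", port: int = 3306,
                       database: str = "spark") -> Dict[str, str]:
    """Hypothetical helper: the Spark JDBC reader options used in step 4."""
    return {
        "url": f"jdbc:mysql://{host}:{port}/{database}",
        # Connector/J 8.x class name (the 5.x name com.mysql.jdbc.Driver is deprecated).
        "driver": "com.mysql.cj.jdbc.Driver",
        "dbtable": table,
        "user": user,
        "password": password,
    }
```

Inside pyspark the dict can be unpacked into `DataFrameReader.options`, for example `spark.read.format("jdbc").options(**mysql_jdbc_options("student", "root", "hadoop")).load()`. Spark also accepts a parenthesized subquery with an alias as `dbtable`, such as `"(select name, age from student) as t"`, to read only part of a table.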

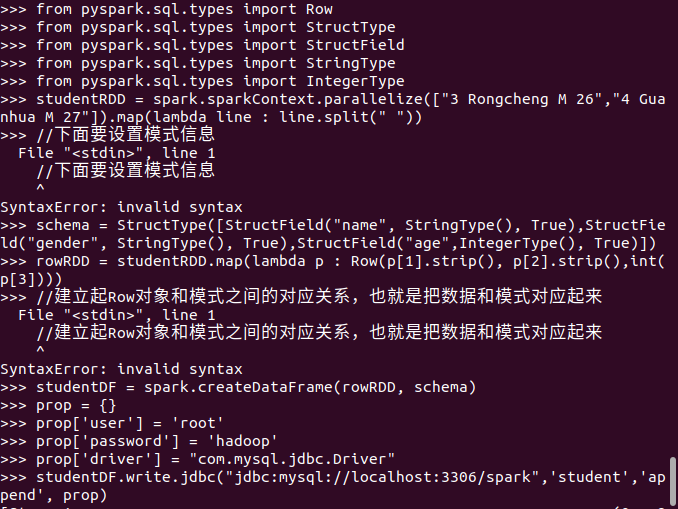

5. Write data from Spark into MySQL. Build a DataFrame of two new students and append it to the student table. The `append` mode adds rows instead of replacing the table.

    >>> from pyspark.sql import Row
    >>> from pyspark.sql.types import StructType, StructField, StringType, IntegerType
    >>> # Each record is an "id name gender age" string
    >>> studentRDD = spark.sparkContext.parallelize(["3 Rongcheng M 26", "4 Guanhua M 27"]).map(lambda line: line.split(" "))
    >>> # The schema has no id column: MySQL fills id via AUTO_INCREMENT
    >>> schema = StructType([StructField("name", StringType(), True), StructField("gender", StringType(), True), StructField("age", IntegerType(), True)])
    >>> rowRDD = studentRDD.map(lambda p: Row(p[1].strip(), p[2].strip(), int(p[3])))
    >>> studentDF = spark.createDataFrame(rowRDD, schema)
    >>> prop = {'user': 'root', 'password': 'hadoop', 'driver': 'com.mysql.cj.jdbc.Driver'}
    >>> studentDF.write.jdbc("jdbc:mysql://localhost:3306/spark", 'student', mode='append', properties=prop)
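The per-line parsing inside the `map` calls is plain Python. Pulling it into a function lets it be tested without a cluster. Below is a minimal sketch of that logic; the name `parse_student` is hypothetical. Like the REPL code, it discards the leading id so that MySQL's AUTO_INCREMENT assigns it.

```python
from typing import Tuple


def parse_student(line: str) -> Tuple[str, str, int]:
    """Hypothetical helper: turn "id name gender age" into (name, gender, age)."""
    fields = line.split()  # split on any run of whitespace, so no strip() needed
    if len(fields) != 4:
        raise ValueError(f"expected 4 fields, got {len(fields)}: {line!r}")
    _id, name, gender, age = fields  # id is dropped on purpose
    return name, gender, int(age)
```

In pyspark it could replace the two lambdas, e.g. `spark.createDataFrame(spark.sparkContext.parallelize(lines).map(parse_student), schema)`, where `lines` is the list of input strings. Using `split()` without an argument tolerates repeated spaces, which `split(" ")` would turn into empty fields.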

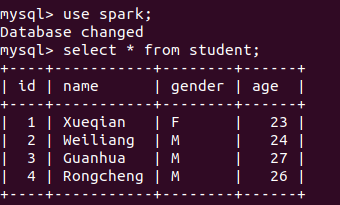

Back in the MySQL shell, check that the table now holds four rows:

    mysql> use spark;
    mysql> select * from student;
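The appended rows get id values even though the DataFrame had no id column. Spark's JDBC writer issues parameterized INSERT statements that name only the DataFrame's columns, so MySQL fills the AUTO_INCREMENT column itself. The sketch below builds a statement of roughly that shape. It is an illustration under that assumption, not Spark's actual code, and the exact whitespace Spark emits may differ. The helper `insert_statement` is hypothetical.

```python
from typing import Sequence


def insert_statement(table: str, columns: Sequence[str]) -> str:
    """Hypothetical helper: a parameterized INSERT naming only `columns`."""
    # Backticks quote MySQL identifiers; '?' is the JDBC parameter placeholder.
    cols = ", ".join(f"`{c}`" for c in columns)
    marks = ", ".join("?" for _ in columns)
    return f"INSERT INTO {table} ({cols}) VALUES ({marks})"


if __name__ == "__main__":
    print(insert_statement("student", ["name", "gender", "age"]))
```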