12. Naive Bayes: Spam Classification

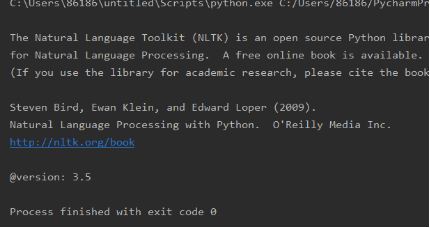

Installing and using the nltk library

import nltk

print(nltk.__doc__)

2.1 Tokenization with nltk

nltk.sent_tokenize(text)  # split the text into sentences

nltk.word_tokenize(sent)  # split a sentence into word tokens

2.2 punkt and stop words

from nltk.corpus import stopwords

stops=stopwords.words('english')

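* If you are prompted to download punkt:

nltk.download('punkt')

Or download punkt.zip manually:

https://pan.baidu.com/s/1OwLB0O8fBWkdLx8VJ-9uNQ password: mema

Copy it into the directory where the download failed, C:\Users\Administrator\AppData\Roaming\nltk_data\tokenizers, and unzip it there.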
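The stop-word list from this section is typically used as a filter over the tokens. Below is a minimal sketch of that step. The helper name `remove_stopwords` and the tiny demo stop list are made up for illustration; in practice you would pass `stopwords.words('english')` instead. The sketch lowercases each token before the lookup, on the assumption that the stop list itself is lowercase, and converts the list to a set so that each lookup does not scan the whole list.

```python
def remove_stopwords(tokens, stops):
    """Return the tokens that are not stop words, comparing case-insensitively."""
    stop_set = set(stops)  # set membership avoids scanning a list on every lookup
    return [tok for tok in tokens if tok.lower() not in stop_set]


# Hypothetical mini stop list for illustration only; in practice pass
# stopwords.words('english') from nltk.corpus.
demo_stops = ["the", "is", "a", "to"]
demo_tokens = ["The", "prize", "is", "a", "free", "ticket", "to", "London"]

filtered = remove_stopwords(demo_tokens, demo_stops)
print(filtered)
```

Without the `lower()` call, a capitalized token such as "The" would not match the lowercase entry "the" and would stay in the output.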

2.3 Part-of-speech tagging with NLTK

nltk.pos_tag(tokens)

2.4 Lemmatisation (reducing words to their base form)

from nltk.stem import WordNetLemmatizer

lemmatizer = WordNetLemmatizer()

lemmatizer.lemmatize('leaves')  # pos defaults to noun

lemmatizer.lemmatize('best',pos='a')

lemmatizer.lemmatize('made',pos='v')

In general, tokenize first, then tag parts of speech, and finally lemmatize each token according to its tag.
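The link between tagging and lemmatizing is a mapping from the Penn Treebank tags that `nltk.pos_tag` returns to the one-letter part-of-speech codes that `lemmatize` accepts. Here is a table-driven sketch of that mapping. The names `TREEBANK_PREFIX_TO_WORDNET` and `to_wordnet_pos` are hypothetical, and the tagged pairs are hand-written stand-ins for what a tagger might return, so the block runs without any nltk data.

```python
# WordNet POS letters; these are the values of wordnet.ADJ, wordnet.VERB,
# wordnet.NOUN and wordnet.ADV in nltk.
TREEBANK_PREFIX_TO_WORDNET = {"J": "a", "V": "v", "N": "n", "R": "r"}


def to_wordnet_pos(treebank_tag, default=None):
    """Map a Treebank tag such as 'VBD' to a WordNet POS letter such as 'v'."""
    return TREEBANK_PREFIX_TO_WORDNET.get(treebank_tag[:1], default)


# Hand-written (token, tag) pairs, assumed to resemble nltk.pos_tag output.
tagged = [("leaves", "NNS"), ("made", "VBN"), ("best", "JJS"),
          ("quickly", "RB"), ("the", "DT")]

for token, tag in tagged:
    print(token, tag, to_wordnet_pos(tag))
```

Tags outside the four groups, such as "DT", map to `None` by default, so the caller can decide whether to drop the token or pass `default="n"` and lemmatize it as a noun.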

2.5 Writing the preprocessing function

def preprocessing(text):

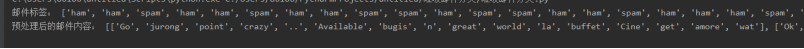

sms_data.append(preprocessing(line[1]))  # preprocess each message

import csv

import nltk
# preprocessing
from nltk import WordNetLemmatizer
from nltk.corpus import stopwords


# Map a Penn Treebank POS tag to the matching WordNet POS constant
def get_wordnet_pos(treebank_tag):
    if treebank_tag.startswith('J'):  # adjective
        return nltk.corpus.wordnet.ADJ
    elif treebank_tag.startswith('V'):  # verb
        return nltk.corpus.wordnet.VERB
    elif treebank_tag.startswith('N'):  # noun
        return nltk.corpus.wordnet.NOUN
    elif treebank_tag.startswith('R'):  # adverb
        return nltk.corpus.wordnet.ADV
    else:
        return None


def preprocessing(text):
    tokens = [word for sent in nltk.sent_tokenize(text) for word in nltk.word_tokenize(sent)]  # tokenize
    stops = stopwords.words("english")  # stop words
    tokens = [token for token in tokens if token not in stops]  # drop stop words
    lemmatizer = WordNetLemmatizer()
    tag = nltk.pos_tag(tokens)  # POS tagging
    newtokens = []
    for i, token in enumerate(tokens):
        if token:
            pos = get_wordnet_pos(tag[i][1])
            if pos:  # tokens whose tag has no WordNet equivalent are skipped
                word = lemmatizer.lemmatize(token, pos)
                newtokens.append(word)
    return newtokens


file_path = r'C:\Users\86186\Desktop\大三下\机器学习\SMSSpamCollection'
sms = open(file_path, 'r', encoding='utf-8')
sms_data = []
sms_label = []
csv_reader = csv.reader(sms, delimiter='\t')
for line in csv_reader:
    sms_label.append(line[0])
    sms_data.append(preprocessing(line[1]))  # preprocess each message
sms.close()
print("Message labels:", sms_label)
print("Preprocessed message contents:", sms_data)
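One detail of the file-reading loop is worth a closer look. With the default quoting of `csv.reader`, a message that begins with a double quote may be treated as a quoted field and can absorb the lines that follow it. The sketch below shows one way to guard against that with `quoting=csv.QUOTE_NONE`. The helper name `read_labeled_messages` and the three sample lines are made up for illustration; only the "label, tab, message" layout is taken from the code above.

```python
import csv
import io

# Made-up sample lines in the "label<TAB>message" layout used above.
sample = (
    'ham\tSee you at 8 tonight\n'
    'spam\t"FREE entry, reply WIN to claim your prize\n'
    'ham\tRunning late, start without me\n'
)


def read_labeled_messages(handle):
    """Read tab-separated (label, message) rows from an open text handle."""
    labels, messages = [], []
    # QUOTE_NONE makes the reader treat double quotes as ordinary characters.
    reader = csv.reader(handle, delimiter='\t', quoting=csv.QUOTE_NONE)
    for row in reader:
        if len(row) != 2:  # skip blank or malformed lines
            continue
        label, text = row
        labels.append(label)
        messages.append(text)
    return labels, messages


labels, messages = read_labeled_messages(io.StringIO(sample))
print(labels)
print(messages)
```

For the real file, the same helper could be called inside `with open(file_path, encoding='utf-8', newline='') as sms:`, which also closes the file if an exception interrupts the loop.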

The output is as follows:
