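Practical Assignment 4

(1) Load balancing Tomcat with Nginx using Docker Compose

A reverse proxy is a proxy server that accepts connection requests from the Internet, forwards them to servers on the internal network, and returns the servers' responses to the requesting clients. To the outside world, the proxy itself appears to be the server.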

default.conf:

upstream tomcats {
    server tomcat1:8080;  # port
    server tomcat2:8080;
    server tomcat3:8080;
}

server {
    listen 2526;
    server_name localhost;

    location / {
        proxy_pass http://tomcats;  # requests are distributed round robin across the tomcats upstream
    }
}
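As a rough illustration of what round-robin distribution means for the `tomcats` upstream above, the following Python sketch cycles through the three backends in order. It only models the selection order and makes no claim about how Nginx implements it; the function name `round_robin` is made up for this example.

```python
from itertools import cycle, islice

# Backend addresses taken from the upstream block above.
BACKENDS = ["tomcat1:8080", "tomcat2:8080", "tomcat3:8080"]


def round_robin(backends, n_requests):
    """Return the backend chosen for each of n_requests, cycling in order."""
    return list(islice(cycle(backends), n_requests))


if __name__ == "__main__":
    for i, backend in enumerate(round_robin(BACKENDS, 6), start=1):
        print(f"request {i} -> {backend}")
```

With equal weights every backend receives the same share of requests, which is what the test script further down should show.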

docker-compose.yml:

version: "3"
services:
  nginx:
    image: nginx
    container_name: mymynginx
    ports:
      - "80:2526"
    volumes:
      - ./default.conf:/etc/nginx/conf.d/default.conf  # mount the configuration file
    depends_on:
      - tomcat1
      - tomcat2
      - tomcat3
  tomcat1:
    image: tomcat
    container_name: tomcat1
    volumes:
      - ./tomcat1:/usr/local/tomcat/webapps/ROOT
  tomcat2:
    image: tomcat
    container_name: tomcat2
    volumes:
      - ./tomcat2:/usr/local/tomcat/webapps/ROOT
  tomcat3:
    image: tomcat
    container_name: tomcat3
    volumes:
      - ./tomcat3:/usr/local/tomcat/webapps/ROOT

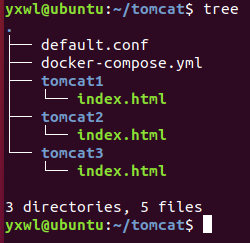

View the directory tree:

Run the docker-compose file and list the containers:

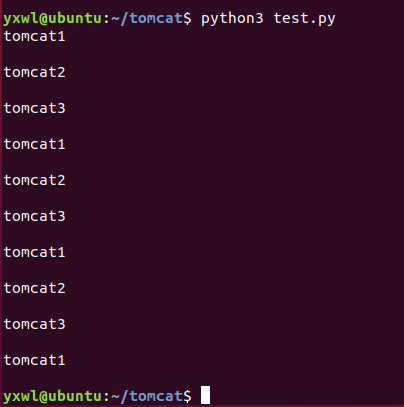

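- Load-balancing strategy 1: round robin:

import requests

url = 'http://localhost'

# Send 10 requests; with round robin the responses should cycle through the three Tomcat pages
for i in range(10):
    response = requests.get(url)
    print(response.text)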

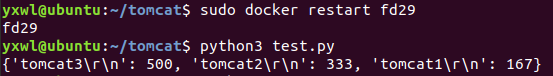

- Load-balancing strategy 2: weighted:

upstream tomcats {
    server tomcat1:8080 weight=1;
    server tomcat2:8080 weight=2;
    server tomcat3:8080 weight=3;
}

server {
    listen 2526;
    server_name localhost;

    location / {
        proxy_pass http://tomcats;  # requests are distributed across tomcats in proportion to the weights
    }
}
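To make the effect of `weight=1/2/3` concrete, here is a small Python sketch of smooth weighted round robin, the scheme Nginx's weighted balancing is commonly described as using. Treat that attribution, and the function name `smooth_weighted_round_robin`, as assumptions of this example rather than a statement about Nginx internals.

```python
from collections import Counter

# Weights taken from the upstream block above.
WEIGHTS = {"tomcat1": 1, "tomcat2": 2, "tomcat3": 3}


def smooth_weighted_round_robin(weights, n_requests):
    """Pick one backend per request using smooth weighted round robin."""
    current = {name: 0 for name in weights}
    total = sum(weights.values())
    picks = []
    for _ in range(n_requests):
        # Every backend gains its own weight on each round.
        for name, weight in weights.items():
            current[name] += weight
        # The backend with the highest running score serves the request
        # and is then pushed back by the total weight.
        chosen = max(current, key=current.get)
        current[chosen] -= total
        picks.append(chosen)
    return picks


if __name__ == "__main__":
    picks = smooth_weighted_round_robin(WEIGHTS, 600)
    print(Counter(picks))
```

Over a multiple of six requests the counts come out in an exact 1:2:3 ratio; the real measurement against the containers should be close to that ratio as well.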

Write the Python code:

import requests

url = 'http://localhost'
count = {}

# Send 1000 requests and tally how many times each response body is returned
for i in range(1000):
    response = requests.get(url)
    if response.text in count:
        count[response.text] += 1
    else:
        count[response.text] = 1

print(count)

(2) Deploying a Java web runtime environment with Docker Compose

docker-compose.yml:

version: "3"   # Compose file format version
services:      # service definitions
  tomcat00:    # Tomcat service
    image: tomcat              # image
    hostname: hostname         # hostname of the container
    container_name: tomcat00   # container name
    ports:                     # port mapping
      - "5050:8080"
    volumes:                   # volumes
      - "./webapps:/usr/local/tomcat/webapps"
      - ./wait-for-it.sh:/wait-for-it.sh
    networks:                  # attach to the network with a static IP
      webnet:
        ipv4_address: 15.22.0.15
  tomcat01:    # Tomcat service
    image: tomcat              # image
    hostname: hostname         # hostname of the container
    container_name: tomcat01   # container name
    ports:                     # port mapping
      - "5055:8080"
    volumes:                   # volumes
      - "./webapps:/usr/local/tomcat/webapps"
      - ./wait-for-it.sh:/wait-for-it.sh
    networks:                  # attach to the network with a static IP
      webnet:
        ipv4_address: 15.22.0.16
  mymysql:     # MySQL service
    build: .   # build the MySQL image from the Dockerfile in this directory
    image: mymysql:test
    container_name: mymysql
    ports:
      - "3309:3306"
      # If the host-side port (the first number) is left at 3306, stop the MySQL
      # service on the Linux host first:
      #   service mysql stop
      # Otherwise change 3306 to a different host port.
    command: [
      '--character-set-server=utf8mb4',
      '--collation-server=utf8mb4_unicode_ci'
      ]
    environment:
      MYSQL_ROOT_PASSWORD: "123456"
    networks:
      webnet:
        ipv4_address: 15.22.0.6
  nginx:
    image: nginx
    container_name: "nginx-tomcat"
    ports:
      - "8080:8080"
    volumes:
      - ./default.conf:/etc/nginx/conf.d/default.conf   # mount the configuration file
    tty: true
    stdin_open: true
    depends_on:
      - tomcat00
      - tomcat01
    networks:
      webnet:
        ipv4_address: 15.22.0.7
networks:      # network definitions
  webnet:
    driver: bridge   # bridge mode
    ipam:
      config:
        - subnet: 15.22.0.0/24   # subnet
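Because every service above is pinned to a static address, each `ipv4_address` has to fall inside the `webnet` subnet and must not be reused. The following Python sketch checks this with the standard `ipaddress` module; the helper name `check_static_ips` is made up for this example, and the values are copied by hand from the compose file rather than parsed from it.

```python
import ipaddress

# Values copied from the docker-compose.yml above.
SUBNET = "15.22.0.0/24"
STATIC_IPS = {
    "tomcat00": "15.22.0.15",
    "tomcat01": "15.22.0.16",
    "mymysql": "15.22.0.6",
    "nginx": "15.22.0.7",
}


def check_static_ips(subnet, static_ips):
    """Return a list of problems: addresses outside the subnet or used twice."""
    network = ipaddress.ip_network(subnet)
    problems = []
    seen = {}
    for service, ip in static_ips.items():
        address = ipaddress.ip_address(ip)
        if address not in network:
            problems.append(f"{service}: {ip} is outside {subnet}")
        if address in seen:
            problems.append(f"{service}: {ip} is already used by {seen[address]}")
        seen[address] = service
    return problems


if __name__ == "__main__":
    problems = check_static_ips(SUBNET, STATIC_IPS)
    print(problems or "all static addresses fit the subnet")
```

An empty list means the four addresses are consistent with the subnet definition.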

docker-entrypoint.sh:

#!/bin/bash
# The here-document (<< EOF ... EOF) is required; it feeds the SQL statement to the mysql client
mysql -uroot -p123456 << EOF
source /usr/local/grogshop.sql;
EOF
Dockerfile:

# Dockerfile that builds the MySQL image
FROM registry.saas.hand-china.com/tools/mysql:5.7.17

# Working location for MySQL
ENV WORK_PATH /usr/local/

# Directory whose scripts the container runs automatically
ENV AUTO_RUN_DIR /docker-entrypoint-initdb.d

# Copy grogshop.sql to /usr/local
COPY grogshop.sql /usr/local/

# Put the shell script in /docker-entrypoint-initdb.d/ so that the container executes it automatically
COPY docker-entrypoint.sh $AUTO_RUN_DIR/

# Make the script executable
RUN chmod a+x $AUTO_RUN_DIR/docker-entrypoint.sh

# Command to run when the container starts (left commented out)
#CMD ["sh", "/docker-entrypoint-initdb.d/import.sh"]

default.conf:

upstream tomcat123 {
    server tomcat00:8080;
    server tomcat01:8080;
}

server {
    listen 8080;
    server_name localhost;

    location / {
        proxy_pass http://tomcat123;
    }
}

Go to the project's directory and change the IP address used for the database connection:

cd /home/compose/webapps/ssmgrogshop_war/WEB-INF/classes

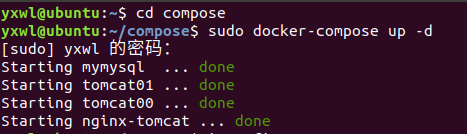

Start the containers:

docker-compose up -d

Open a browser and visit localhost:8080/ssmgrogshop_war
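The compose file mounts wait-for-it.sh into both Tomcat containers, but this write-up does not show how it is invoked. The idea behind such a script, blocking until a TCP port accepts connections, can be sketched in Python as follows. The function name `wait_for_port` and its default timings are assumptions of this example, not part of wait-for-it.sh.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Return True once host:port accepts a TCP connection, False on timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Connection refused or name not resolvable yet: retry shortly.
            time.sleep(interval)
    return False


if __name__ == "__main__":
    # "mymysql" and 3306 are the service name and container port from the
    # compose file; the name only resolves from inside the webnet network.
    ok = wait_for_port("mymysql", 3306, timeout=2.0)
    print("MySQL reachable" if ok else "MySQL not reachable yet")
```

A check like this is useful because `depends_on` only orders container start-up; it does not wait for the database inside the container to be ready.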

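(3) Building a big-data cluster environment with Docker

1. Pull the ubuntu image

Pull the image, create a directory under the home directory for transferring files into the Ubuntu system inside Docker, then create and run the container:

docker pull ubuntu
cd ~
mkdir build
sudo docker run -it -v /home/zhou/build:/root/build --name ubuntu ubuntu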

2. Initializing the Ubuntu container

(1) Inside the container, switch the apt sources first; the Aliyun mirror is used here. The entries below are for bionic (Ubuntu 18.04), so they assume the container runs that release.

cat<<EOF>/etc/apt/sources.list
deb http://mirrors.aliyun.com/ubuntu/ bionic main restricted universe multiverse
deb-src http://mirrors.aliyun.com/ubuntu/ bionic main restricted universe multiverse
deb http://mirrors.aliyun.com/ubuntu/ bionic-security main restricted universe multiverse
deb-src http://mirrors.aliyun.com/ubuntu/ bionic-security main restricted universe multiverse
deb http://mirrors.aliyun.com/ubuntu/ bionic-updates main restricted universe multiverse
deb-src http://mirrors.aliyun.com/ubuntu/ bionic-updates main restricted universe multiverse
deb http://mirrors.aliyun.com/ubuntu/ bionic-backports main restricted universe multiverse
deb-src http://mirrors.aliyun.com/ubuntu/ bionic-backports main restricted universe multiverse
deb http://mirrors.aliyun.com/ubuntu/ bionic-proposed main restricted universe multiverse
deb-src http://mirrors.aliyun.com/ubuntu/ bionic-proposed main restricted universe multiverse
EOF

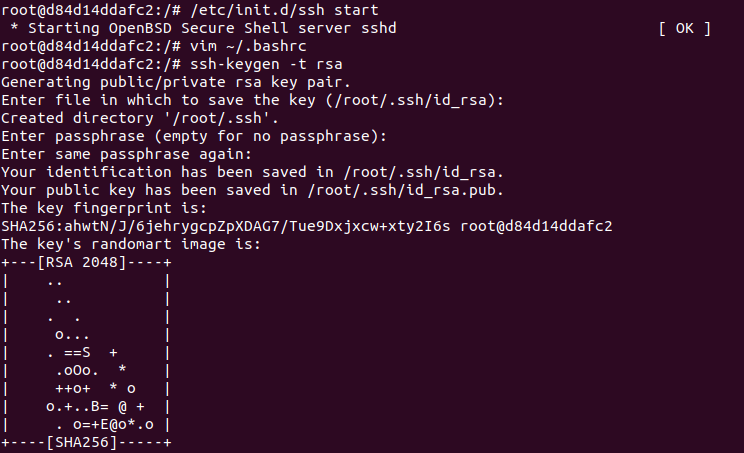

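(2) Initializing the Ubuntu environment

apt-get update
# Install vim
apt-get install vim
# Install sshd; a distributed Hadoop setup uses ssh to connect to the slaves
apt-get install ssh
# Run the init script to start the sshd server
/etc/init.d/ssh start

vim ~/.bashrc
# Append the following line to the end of that file so that sshd starts automatically every time you enter the Ubuntu system
/etc/init.d/ssh start

Configure ssh:

ssh-keygen -t rsa # just keep pressing Enter
cd ~/.ssh
cat id_rsa.pub >> authorized_keys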

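(3) Installing the JDK in the container

① Install the JDK; JDK 8 is used here

apt-get install openjdk-8-jdk
vim ~/.bashrc # append the following two lines to the end of the file to set the Java environment variables:
export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64/
export PATH=$PATH:$JAVA_HOME/bin
source ~/.bashrc # apply the changes to .bashrc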

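② Create an image from the container with docker commit

# Open another terminal
sudo docker commit <container-id> ubuntu-jdk8 # save the container as an image; the name indicates Ubuntu with JDK 8
# Start a container from the saved ubuntu-jdk8 image
sudo docker run -it -v /home/zhou/build:/root/build --name ubuntu-jdk8 ubuntu-jdk8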

(4) Installing Hadoop

cd /root/build
# Put the Hadoop tarball into the local build folder first; version 3.1.3 from the big-data lab is used here
tar -zxvf hadoop-3.1.3.tar.gz -C /usr/local
cd /usr/local/hadoop-3.1.3
./bin/hadoop version # verify the installation

(5) Configuring the Hadoop cluster

hadoop-env.sh

cd /usr/local/hadoop-3.1.3/etc/hadoop # go to the directory that holds the configuration files
vim hadoop-env.sh
export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64/ # add this line anywhere in the file

① core-site.xml

vim core-site.xml

<configuration>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/usr/local/hadoop-3.1.3/tmp</value>
        <description>A base for other temporary directories.</description>
    </property>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://master:9000</value>
    </property>
</configuration>

② hdfs-site.xml

vim hdfs-site.xml

<configuration>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
    <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/usr/local/hadoop-3.1.3/tmp/dfs/name</value>
    </property>
    <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/usr/local/hadoop-3.1.3/tmp/dfs/data</value>
    </property>
</configuration>

③ mapred-site.xml

vim mapred-site.xml

<configuration>
    <property>
        <!-- Run MapReduce programs on YARN -->
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
    </property>
    <property>
        <!-- JobHistory server address, host:port -->
        <name>mapreduce.jobhistory.address</name>
        <value>master:10020</value>
    </property>
    <property>
        <!-- JobHistory web UI address, host:port -->
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>master:19888</value>
    </property>
    <property>
        <!-- Classpath for MapReduce applications -->
        <name>mapreduce.application.classpath</name>
        <value>/usr/local/hadoop-3.1.3/share/hadoop/mapreduce/lib/*,/usr/local/hadoop-3.1.3/share/hadoop/mapreduce/*</value>
    </property>
</configuration>

④ yarn-site.xml

vim yarn-site.xml

<configuration>
    <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>master</value>
    </property>
    <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
    <property>
        <name>yarn.nodemanager.vmem-pmem-ratio</name>
        <value>2.5</value>
    </property>
</configuration>
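All four site files share the same `<configuration>` / `<property>` / `<name>` / `<value>` layout, so they can also be generated or read back programmatically. The Python sketch below does this with the standard library; the helper names `build_site_xml` and `read_site_xml` are made up for this example, and `ET.indent` assumes Python 3.9 or later.

```python
import xml.etree.ElementTree as ET


def build_site_xml(properties):
    """Build a Hadoop-style <configuration> document from a dict."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    ET.indent(root, space="    ")  # pretty-print; requires Python 3.9+
    return ET.tostring(root, encoding="unicode")


def read_site_xml(xml_text):
    """Parse a <configuration> document back into a name -> value dict."""
    root = ET.fromstring(xml_text)
    return {p.findtext("name"): p.findtext("value") for p in root.iter("property")}


if __name__ == "__main__":
    # The yarn-site.xml settings used in this write-up.
    yarn_site = {
        "yarn.resourcemanager.hostname": "master",
        "yarn.nodemanager.aux-services": "mapreduce_shuffle",
        "yarn.nodemanager.vmem-pmem-ratio": "2.5",
    }
    print(build_site_xml(yarn_site))
```

Reading a file back with `read_site_xml` is a quick way to spot a misspelled property name before starting the cluster.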

⑤ Modify the scripts

Add the following parameters to start-dfs.sh and stop-dfs.sh:

cd /usr/local/hadoop-3.1.3/sbin
HDFS_DATANODE_USER=root
HADOOP_SECURE_DN_USER=hdfs
HDFS_NAMENODE_USER=root
HDFS_SECONDARYNAMENODE_USER=root

Add the following parameters to start-yarn.sh and stop-yarn.sh:

YARN_RESOURCEMANAGER_USER=root
HADOOP_SECURE_DN_USER=yarn
YARN_NODEMANAGER_USER=root

(6) Running the Hadoop cluster

Save the image:

sudo docker commit <container-id> ubuntu/hadoopinstalled

Open three terminals and start one container from the ubuntu/hadoopinstalled image in each; they act as master, slave01, and slave02 of the Hadoop cluster:

# First terminal
sudo docker run -it -h master --name master ubuntu/hadoopinstalled
# Second terminal
sudo docker run -it -h slave01 --name slave01 ubuntu/hadoopinstalled
# Third terminal
sudo docker run -it -h slave02 --name slave02 ubuntu/hadoopinstalled

In each of the three terminals, open /etc/hosts and edit it to the following form, using each container's own IP address:

cat /etc/hosts
172.17.0.3 master
172.17.0.4 slave01
172.17.0.5 slave02

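(7) Testing ssh

On the master node, test ssh by connecting to the slave nodes:

ssh slave01
ssh slave02
exit # leave the ssh session

Edit the workers file on master, replacing localhost with the following:

vim /usr/local/hadoop-3.1.3/etc/hadoop/workers
slave01
slave02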

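(8) Testing the Hadoop cluster

① Run the following in the master terminal

cd /usr/local/hadoop-3.1.3
bin/hdfs namenode -format # the NameNode must be formatted before Hadoop is started for the first time
sbin/start-all.sh # start all services

② Run jps in each of the three terminals to check the processes, as shown in the figure

③ Create the HDFS directories

bin/hdfs dfs -mkdir /user
bin/hdfs dfs -mkdir /user/root # note that the input directory below is created under /user/root
bin/hdfs dfs -mkdir input

In the master terminal, create a test file with vim and upload it to the input directory; pay attention to the path of the test file:

bin/hdfs dfs -put ~/test.txt input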

(9) Running the example bundled with Hadoop

① Prepare the input files for the example

bin/hdfs dfs -mkdir -p /user/hadoop/input
bin/hdfs dfs -put ./etc/hadoop/*.xml /user/hadoop/input
bin/hdfs dfs -ls /user/hadoop/input # nine files should be listed

② Run the example and print the result

# The job runs slowly, so be prepared to wait
bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar grep /user/hadoop/input output 'dfs[a-z.]+'
bin/hdfs dfs -cat output/* # print the result
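To see what the `grep` example is expected to produce without waiting for the cluster, the same computation can be approximated locally in Python: find every substring that matches the regular expression, count the occurrences, and list the most frequent first. The function name `grep_count` and the sample lines are made up for this sketch, and ordering ties alphabetically is a choice of this example, not something the Hadoop job guarantees.

```python
import re
from collections import Counter


def grep_count(texts, pattern):
    """Count every substring matching pattern, most frequent first."""
    regex = re.compile(pattern)
    counts = Counter()
    for text in texts:
        counts.update(match.group(0) for match in regex.finditer(text))
    # Sort by descending count; ties are ordered alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # A few sample lines in the style of the XML configuration files.
    sample = [
        "<name>dfs.replication</name>",
        "<name>dfs.namenode.name.dir</name>",
        "<value>file:/usr/local/hadoop-3.1.3/tmp/dfs/name</value>",
        "dfs.replication",
    ]
    for match, count in grep_count(sample, r"dfs[a-z.]+"):
        print(count, match)
```

Note that `dfs/name` in the path does not match, because the character after `dfs` must be a lowercase letter or a dot.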

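Problems encountered

1️⃣ Error when running the docker-compose file

I have forgotten what caused the error, and I did not take a screenshot ( ′◔ ‸◔`)

2️⃣ Error when running test.py

Fixed by running python3 test.py instead of ./test.py

3️⃣ My computer was connected through a phone hotspot that was being throttled, so downloads were very slow and exhausting

That damned campus SIM card

I did not track the exact time spent, but judging roughly from the Chrome history it took more than ten hours, and that was with an expert's blog post as a reference; some of the time was wasted on downloading and installing.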