A simple setup for syncing data from Dockerized MongoDB 4.0 to Elasticsearch 7.3.2 (MongoDB without authentication)

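Most of the material I could find online on syncing MongoDB to Elasticsearch uses mongo-connector; the rest uses river or logstash. All of these are fairly dated and do not support recent ES versions. The only tool I found that does is monstache. For the version compatibility table, see the official site: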

https://rwynn.github.io/monstache-site/start/

For setting up ES 7.3.2, see my previous post, which covers the ELK 7.3.2 setup in detail. This post uses the ES instance built there.

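First set up MongoDB; I use version 4.0. Replica set mode has to be enabled (monstache reads changes from the oplog, which only exists on replica set members), so edit the config file /etc/mongod.conf.orig: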

    storage:
      dbPath: /data/db
      journal:
        enabled: true
    #  engine:
    #  mmapv1:
    #  wiredTiger:
    # where to write logging data.
    systemLog:
      destination: file
      logAppend: true
      path: /var/log/mongodb/mongod.log
    # network interfaces
    net:
      port: 27017
      # allow remote access
      bindIp: 0.0.0.0
    # how the process runs
    processManagement:
      timeZoneInfo: /usr/share/zoneinfo
    #security:
    #operationProfiling:
    # replica set configuration
    replication:
      replSetName: es
      oplogSizeMB: 10240

At this point the docker-compose file that starts mongo is:

    version: '3.7'
    services:
      mongo:
        image: mongo:4.0
        restart: always
        container_name: mongodb
        environment:
          TZ: Asia/Shanghai
        volumes:
          - /data/javaProject/mongo-data:/data/db
          - /data/javaProject/mongo-config/mongod.conf.orig:/etc/mongod.conf.orig
        command: --config /etc/mongod.conf.orig
        ports:
          - 12000:27017
      mongo-express:
        image: mongo-express
        container_name: mongo-express
        restart: always
        depends_on:
          - mongo
        ports:
          - 8081:8081
        environment:
          TZ: Asia/Shanghai

Start the containers now. The log of the mongo container shows:

No logs available

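This is normal: the config above sends mongod's log to /var/log/mongodb/mongod.log rather than to stdout. Enter the mongo container with docker exec, run mongo to open the client, and execute: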

    > show dbs
    2020-09-25T15:32:36.098+0800 E QUERY [js] Error: listDatabases failed:{
        "operationTime" : Timestamp(0, 0),
        "ok" : 0,
        "errmsg" : "not master and slaveOk=false",
        "code" : 13435,
        "codeName" : "NotMasterNoSlaveOk",
        "$clusterTime" : {
            "clusterTime" : Timestamp(0, 0),
            "signature" : {
                "hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
                "keyId" : NumberLong(0)
            }
        }
    } :
    _getErrorWithCode@src/mongo/shell/utils.js:25:13
    Mongo.prototype.getDBs@src/mongo/shell/mongo.js:139:1
    shellHelper.show@src/mongo/shell/utils.js:882:13
    shellHelper@src/mongo/shell/utils.js:766:15
    @(shellhelp2):1:1

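According to what I found, this error appears because the replica set has not been initialized yet. The initialization command is: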

rs.initiate({_id:”es”,members:[{_id:0,host:’127.0.0.1:27017’}]})

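Here es must match the replSetName value in the config file. Type the command by hand: if the copied text contains typographic (curly) quotes, as it does above, the shell rejects it with a syntax error: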

    > use admin
    switched to db admin
    > rs.initiate({_id:”es”,members:[{_id:0,host:’127.0.0.1:27017’}]})
    2020-09-25T15:50:35.176+0800 E QUERY [js] SyntaxError: illegal character @(shell):1:17
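Column 17 in the SyntaxError above is the first curly quote. As an alternative to retyping, the following is a minimal sketch (run with Node.js) that rewrites typographic quotes into plain ASCII quotes before pasting. The function name `normalizeQuotes` is made up for this illustration and is not part of MongoDB.

```javascript
// Replace typographic quotes with plain ASCII quotes so the mongo shell can parse the text.
function normalizeQuotes(text) {
  return text
    .replace(/[\u201C\u201D]/g, '"')   // curly double quotes -> "
    .replace(/[\u2018\u2019]/g, "'");  // curly single quotes -> '
}

// The command as it arrives when copied from a web page (curly quotes written as escapes).
var pasted = "rs.initiate({_id:\u201Des\u201D,members:[{_id:0,host:\u2019127.0.0.1:27017\u2019}]})";
var fixed = normalizeQuotes(pasted);

// Prints the command with plain ASCII quotes, ready to paste into the mongo shell.
console.log(fixed);
```

Text that already uses ASCII quotes passes through unchanged, so running it on a correct command is harmless.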

Typed by hand, it works:

    > rs.initiate({_id:"es",members:[{_id:0,host:'127.0.0.1:27017'}]})
    {
        "ok" : 1,
        "operationTime" : Timestamp(1601020319, 1),
        "$clusterTime" : {
            "clusterTime" : Timestamp(1601020319, 1),
            "signature" : {
                "hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
                "keyId" : NumberLong(0)
            }
        }
    }

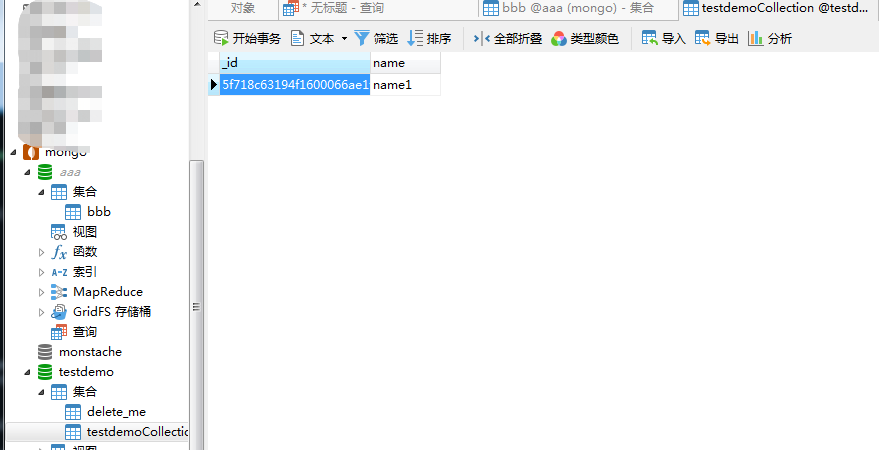
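After rs.initiate returns, it can take a moment before the node reports itself as primary. The sketch below polls for that state inside the legacy mongo shell, where `db`, `sleep`, and `printjson` are built in. The helper `waitForPrimary`, the 30-second timeout, and the 500 ms interval are assumptions chosen for illustration.

```javascript
// Poll until the node reports itself as primary, or give up after timeoutMs.
// isMasterFn returns a document shaped like db.isMaster(); sleepFn blocks for the given ms.
function waitForPrimary(isMasterFn, sleepFn, timeoutMs, intervalMs) {
  var waited = 0;
  while (waited <= timeoutMs) {
    var res = isMasterFn();
    if (res && res.ismaster === true) {
      return { primary: true, waitedMs: waited, setName: res.setName };
    }
    sleepFn(intervalMs);
    waited += intervalMs;
  }
  return { primary: false, waitedMs: waited };
}

// Only runs inside the mongo shell, where `db` and `sleep` exist.
if (typeof db !== "undefined" && typeof sleep === "function") {
  printjson(waitForPrimary(function () { return db.isMaster(); }, sleep, 30000, 500));
}
```

The check and the sleep are passed in as functions so the loop can also be exercised outside the shell with stand-ins.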

Now create a database, a collection, and a document, then look for the data in the mongo-express web UI:

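In the oplog.rs collection of the built-in local database you can find the document just added, which means the write was recorded in the replica set's oplog.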
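The same check can be done from the mongo shell instead of mongo-express. This is a rough sketch: the helper `recentOplogEntries` is invented for illustration, and the namespace `testdemo.mycoll` is a placeholder for whatever database and collection you created.

```javascript
// Return the most recent oplog entries for one namespace ("db.collection"), newest first.
function recentOplogEntries(conn, namespace, limit) {
  return conn.getSiblingDB("local")
    .getCollection("oplog.rs")
    .find({ ns: namespace })
    .sort({ $natural: -1 })
    .limit(limit)
    .toArray();
}

// Only runs inside the mongo shell, where `db` exists.
if (typeof db !== "undefined") {
  // "testdemo.mycoll" is a placeholder namespace; replace it with your own.
  recentOplogEntries(db, "testdemo.mycoll", 5).forEach(function (entry) {
    // op is "i" for insert, "u" for update, "d" for delete; o holds the document or change.
    printjson({ ts: entry.ts, op: entry.op, ns: entry.ns, o: entry.o });
  });
}
```

An entry with op "i" for your namespace corresponds to the insert you just made.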

After pulling the monstache image, mount the config file monstache.config.toml into the container. Its contents:

    # connection settings
    # connect to MongoDB using the following URL
    mongo-url = "mongodb://192.168.10.20:12000"
    # connect to the Elasticsearch REST API at the following node URLs
    elasticsearch-urls = ["http://192.168.10.20:9200"]

    # frequently required settings
    # if you need to seed an index from a collection and not just listen and sync changes events
    # you can copy entire collections or views from MongoDB to Elasticsearch
    direct-read-namespaces = ["testdemo.*"]
    # if you want to use MongoDB change streams instead of legacy oplog tailing use change-stream-namespaces
    # change streams require at least MongoDB API 3.6+
    # if you have MongoDB 4+ you can listen for changes to an entire database or entire deployment
    # in this case you usually don't need regexes in your config to filter collections unless you target the deployment.
    # to listen to an entire db use only the database name. For a deployment use an empty string.
    #change-stream-namespaces = [""]

    # additional settings
    # if you don't want to listen for changes to all collections in MongoDB but only a few
    # e.g. only listen for inserts, updates, deletes, and drops from mydb.mycollection
    # this setting does not initiate a copy, it is only a filter on the change event listener
    #namespace-regex = ''
    # compress requests to Elasticsearch
    #gzip = true
    # generate indexing statistics
    #stats = true
    # index statistics into Elasticsearch
    #index-stats = true
    # use the following PEM file for connections to MongoDB
    #mongo-pem-file = ""
    # disable PEM validation
    #mongo-validate-pem-file = true
    # use the following user name for Elasticsearch basic auth
    elasticsearch-user = "elastic"
    # use the following password for Elasticsearch basic auth
    elasticsearch-password = "pwd"
    # use 4 go routines concurrently pushing documents to Elasticsearch
    elasticsearch-max-conns = 4
    # use the following PEM file to connections to Elasticsearch
    #elasticsearch-pem-file = ""
    # validate connections to Elasticsearch
    #elastic-validate-pem-file = false
    # propagate dropped collections in MongoDB as index deletes in Elasticsearch
    dropped-collections = true
    # propagate dropped databases in MongoDB as index deletes in Elasticsearch
    dropped-databases = true
    # do not start processing at the beginning of the MongoDB oplog
    # if you set the replay to true you may see version conflict messages
    # in the log if you had synced previously. This just means that you are replaying old docs which are already
    # in Elasticsearch with a newer version. Elasticsearch is preventing the old docs from overwriting new ones.
    #replay = false
    # resume processing from a timestamp saved in a previous run
    resume = true
    # do not validate that progress timestamps have been saved
    #resume-write-unsafe = false
    # override the name under which resume state is saved
    #resume-name = "default"
    # use a custom resume strategy (tokens) instead of the default strategy (timestamps)
    # tokens work with MongoDB API 3.6+ while timestamps work only with MongoDB API 4.0+
    resume-strategy = 0
    # exclude documents whose namespace matches the following pattern
    #namespace-exclude-regex = '^mydb\.ignorecollection$'
    # turn on indexing of GridFS file content
    #index-files = true
    # turn on search result highlighting of GridFS content
    #file-highlighting = true
    # index GridFS files inserted into the following collections
    #file-namespaces = ["users.fs.files"]
    # print detailed information including request traces
    verbose = true
    # enable clustering mode
    cluster-name = 'docker-cluster'
    # do not exit after full-sync, rather continue tailing the oplog
    #exit-after-direct-reads = false

For the settings in monstache.config.toml, consult the official site or the Alibaba Cloud example, which explains each key of the config file in detail: https://help.aliyun.com/knowledge_detail/171650.html

The complete docker-compose.yml is now:

    version: '3.7'
    services:
      mongo:
        image: mongo:4.0
        restart: always
        container_name: mongodb
        environment:
          TZ: Asia/Shanghai
        volumes:
          - /data/javaProject/mongo-data:/data/db
          - /data/javaProject/mongo-config/mongod.conf.orig:/etc/mongod.conf.orig
        command: --config /etc/mongod.conf.orig
        ports:
          - 12000:27017
      mongo-express:
        image: mongo-express
        container_name: mongo-express
        restart: always
        depends_on:
          - mongo
        ports:
          - 8081:8081
        environment:
          TZ: Asia/Shanghai
      monstache:
        image: rwynn/monstache:rel6
        restart: always
        container_name: monstache
        volumes:
          - /data/javaProject/monstache-conf/monstache.config.toml:/app/monstache.config.toml
        command: -f /app/monstache.config.toml

Build and start it. The monstache log reports that it cannot connect:

current topology: { Type: ReplicaSetNoPrimary,

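What I found said that the replica set must also be initialized after it is enabled, but I had already done that. So I removed the mongo container, rebuilt everything, and once the containers were up, entered the mongo container and initialized the replica set again. The monstache startup log then showed a successful connection.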

Initialization reference: https://blog.csdn.net/jack_brandy/article/details/88887795

After this rebuild, the monstache log shows one failed attempt followed by a successful connection:

    ERROR 2020/09/28 03:44:32 Unable to connect to MongoDB using URL mongodb://192.168.10.20:12000: server selection error: server selection timeout, current topology: { Type: Unknown, Servers: [{ Addr: 192.168.10.20:12000, Type: RSGhost, State: Connected, Average RTT: 631124 }, ] }
    INFO 2020/09/28 03:44:47 Started monstache version 6.7.0
    INFO 2020/09/28 03:44:47 Go version go1.14
    INFO 2020/09/28 03:44:47 MongoDB go driver v1.3.5
    INFO 2020/09/28 03:44:47 Elasticsearch go driver 7.0.18
    INFO 2020/09/28 03:44:47 Successfully connected to MongoDB version 4.0.20
    INFO 2020/09/28 03:44:47 Successfully connected to Elasticsearch version 7.3.2
    INFO 2020/09/28 03:44:47 Sending systemd READY=1
    WARN 2020/09/28 03:44:47 Systemd notification not supported (i.e. NOTIFY_SOCKET is unset)
    INFO 2020/09/28 03:44:47 Joined cluster docker-cluster
    INFO 2020/09/28 03:44:47 Starting work for cluster docker-cluster
    INFO 2020/09/28 03:44:47 Watching changes on the deployment
    INFO 2020/09/28 03:44:47 Listening for events
    INFO 2020/09/28 03:44:47 Resuming from timestamp {T:1601264687 I:5}
    INFO 2020/09/28 03:44:47 Direct reads completed

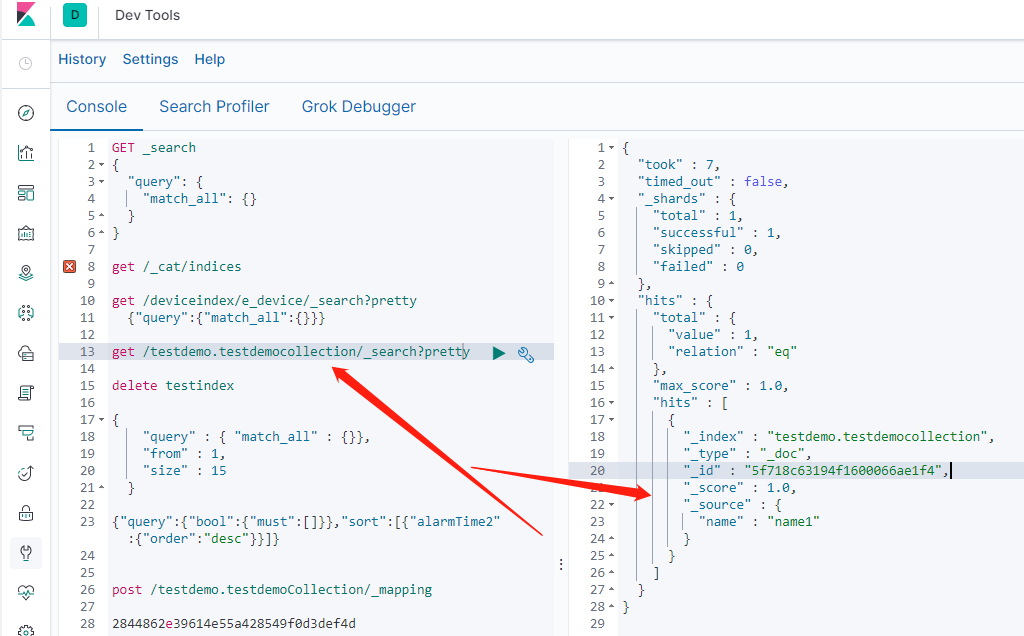

Now add a database, a collection, and a document in MongoDB. The new data shows up in Kibana, so the sync works.
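If you prefer to check without Kibana, Elasticsearch can be queried directly. The sketch below (Node.js 18 or later, for the global `fetch`) rests on two assumptions: that monstache writes to an index named after the lowercase `db.collection` namespace, which I believe is its default when no index mapping is configured, and that the collection is called `mycoll`, which is a placeholder. The helper names `buildSearchRequest` and `runCheck` are invented for illustration; the URL and credentials are the ones from the config file above.

```javascript
// Build the URL and headers for a small _search request.
// Assumption: the target index is the lowercase "db.collection" namespace.
function buildSearchRequest(baseUrl, dbName, collName, user, password) {
  var index = (dbName + "." + collName).toLowerCase();
  var token = Buffer.from(user + ":" + password).toString("base64");
  return {
    url: baseUrl.replace(/\/+$/, "") + "/" + encodeURIComponent(index) + "/_search?size=5",
    headers: { Authorization: "Basic " + token }
  };
}

// Not called automatically; needs network access to Elasticsearch.
// "mycoll" is a placeholder collection name.
async function runCheck() {
  var req = buildSearchRequest("http://192.168.10.20:9200", "testdemo", "mycoll", "elastic", "pwd");
  var res = await fetch(req.url, { headers: req.headers });
  var body = await res.json();
  // Logs the HTTP status and the hit count object reported by Elasticsearch.
  console.log(res.status, JSON.stringify(body.hits && body.hits.total));
}
```

Call `runCheck()` after inserting a document; a 404 response would suggest the index name assumption does not hold for your setup.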

