Hadoop Fully Distributed Mode

- Change the hostname

[root@localhost:/soft/hadoop2.7/etc/hadoop]nano /etc/hostname

[root@localhost:/soft/hadoop2.7/etc/hadoop]

[root@localhost:/soft/hadoop2.7/etc/hadoop]hostname

s130

- Edit the hostname-to-IP mappings

[root@localhost:/soft/hadoop2.7/etc/hadoop]nano /etc/hosts

[root@localhost:/soft/hadoop2.7/etc/hadoop]cat /etc/hosts

127.0.0.1 localhost

192.168.109.130 s130

192.168.109.131 s131

192.168.109.132 s132

192.168.109.133 s133
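The same mappings have to end up on every node, and repeating the edit by hand can leave duplicate lines. Here is a minimal sketch of an idempotent way to add them, assuming the four entries shown above. The function name `add_host_entry` is hypothetical, and the demo writes to a scratch file rather than to `/etc/hosts`:

```shell
#!/bin/sh
# Hypothetical helper: append "IP hostname" to a hosts file
# unless that hostname is already mapped in it.
add_host_entry() {
  ahe_file="$1"; ahe_ip="$2"; ahe_name="$3"
  if ! grep -qE "[[:space:]]${ahe_name}([[:space:]]|\$)" "$ahe_file"; then
    printf '%s %s\n' "$ahe_ip" "$ahe_name" >> "$ahe_file"
  fi
}

# Demo on a scratch file; switch to /etc/hosts only after checking the result.
scratch_hosts="$(mktemp)"
echo "127.0.0.1 localhost" > "$scratch_hosts"
for n in 130 131 132 133; do
  add_host_entry "$scratch_hosts" "192.168.109.$n" "s$n"
done
cat "$scratch_hosts"
```

Running the loop a second time leaves the file unchanged, so the helper is safe to re-run on a machine that was cloned with the entries already in place.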

- Verify the change

[root@localhost:/soft/hadoop2.7/etc/hadoop]ping s130

PING s130 (192.168.109.130) 56(84) bytes of data.

64 bytes from s130 (192.168.109.130): icmp_seq=1 ttl=64 time=0.013 ms

64 bytes from s130 (192.168.109.130): icmp_seq=2 ttl=64 time=0.036 ms

64 bytes from s130 (192.168.109.130): icmp_seq=3 ttl=64 time=0.020 ms

^C

--- s130 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 1999ms

rtt min/avg/max/mdev = 0.013/0.023/0.036/0.009 ms

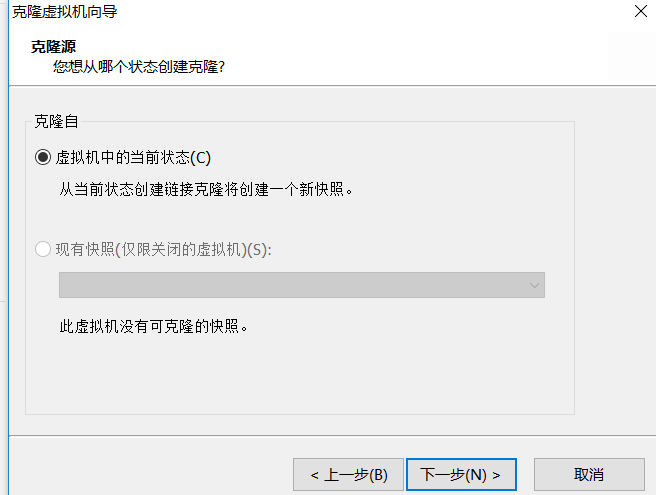

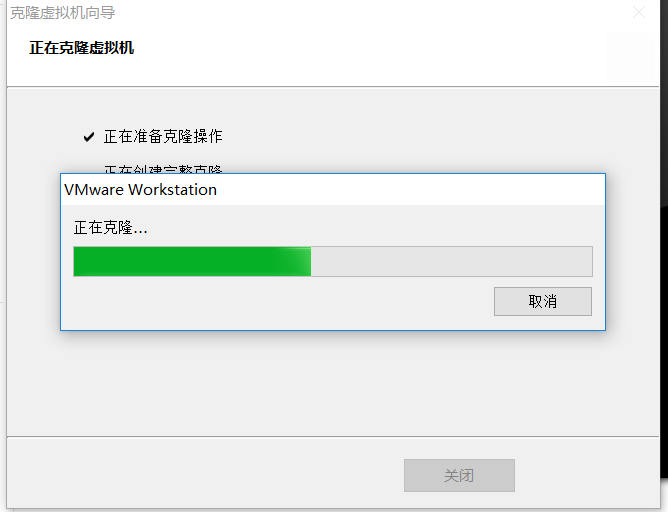

- Power off the virtual machine, then clone it

- Right-click the virtual machine, then choose Manage > Clone

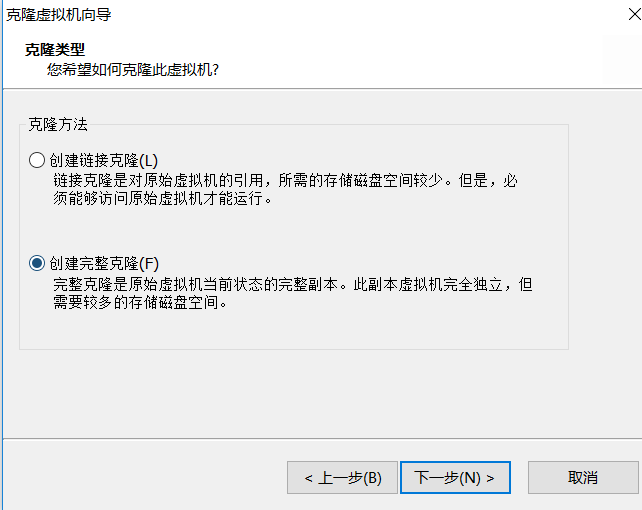

- Step 2: create a full clone

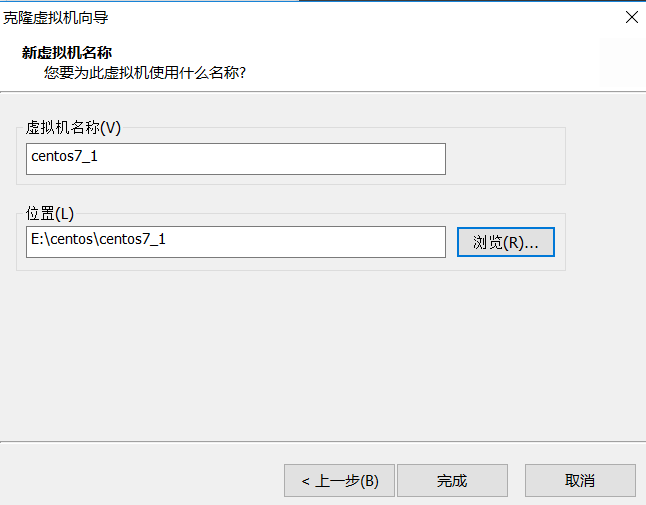

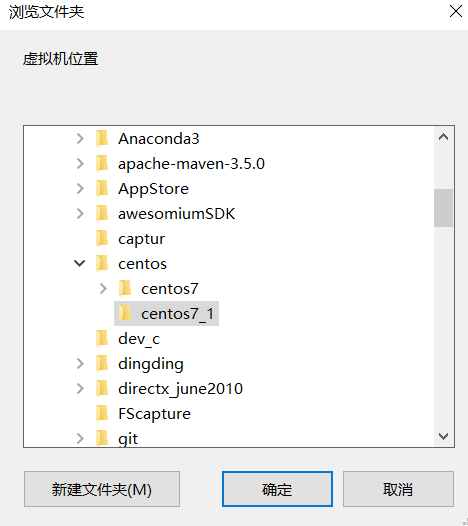

- Step 3: rename the cloned virtual machine. The clone is stored in the same directory as the original virtual machine

- Step 4: start cloning

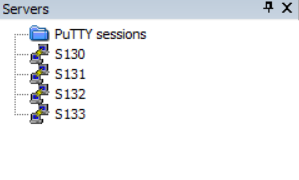

Clone three virtual machines this way, and change the IP address of each one accordingly.

- Change the hostname and IP address of each cloned machine

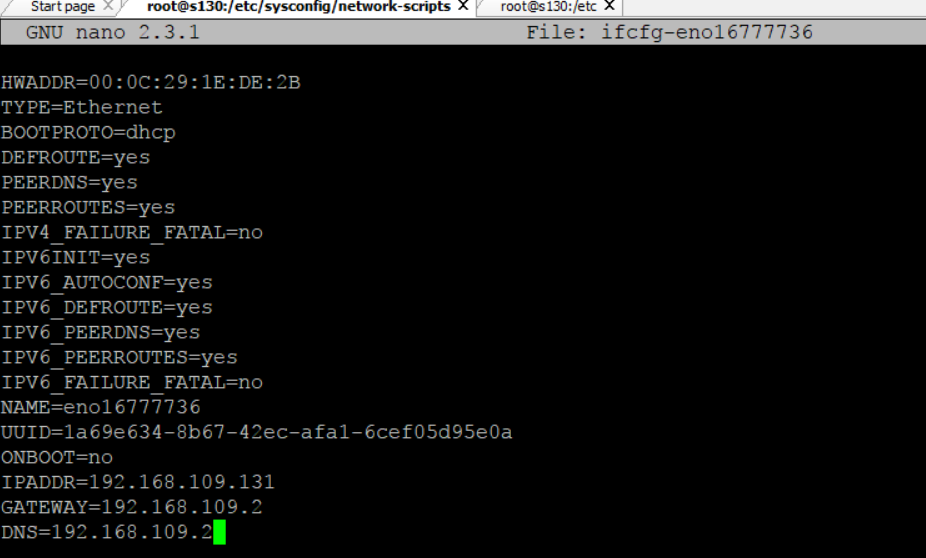

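Start the s131 virtual machine, go to the network configuration directory, and add the IP address, gateway, and DNS to the network interface configuration.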
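For reference, here is a sketch of what the interface file for s131 might contain. It assumes a CentOS 7 style `network-scripts` layout, where the file would be `/etc/sysconfig/network-scripts/ifcfg-eno16777736`. The interface name, IP address, and netmask come from the `ifconfig` output in this post. The `GATEWAY` and `DNS1` values are assumptions and must be replaced with the values of your own virtual network:

```shell
# Sketch of ifcfg-eno16777736 for s131 (file location is an assumption, see above).
TYPE=Ethernet
BOOTPROTO=static
NAME=eno16777736
DEVICE=eno16777736
ONBOOT=yes
IPADDR=192.168.109.131
NETMASK=255.255.255.0
# ASSUMPTION: gateway of the virtual network, not given in this post.
GATEWAY=192.168.109.2
# ASSUMPTION: DNS server, not given in this post.
DNS1=192.168.109.2
```

On s132 and s133 only `IPADDR` should need to change.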

- Change the hostname

[root@s130:/usr/bin]nano /etc/hostname

- Restart the network service

[root@s130:/usr/bin]service network restart

- Check that the change took effect

[root@s130:/usr/bin]ping s131

PING s131 (192.168.109.131) 56(84) bytes of data.

64 bytes from s131 (192.168.109.131): icmp_seq=1 ttl=64 time=0.014 ms

64 bytes from s131 (192.168.109.131): icmp_seq=2 ttl=64 time=0.060 ms

^C

[root@s130:/usr/bin]ifconfig

eno16777736: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.109.131 netmask 255.255.255.0 broadcast 192.168.109.255

inet6 fe80::20c:29ff:fe9d:bddf prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:9d:bd:df txqueuelen 1000 (Ethernet)

RX packets 1340 bytes 125703 (122.7 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 906 bytes 232938 (227.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

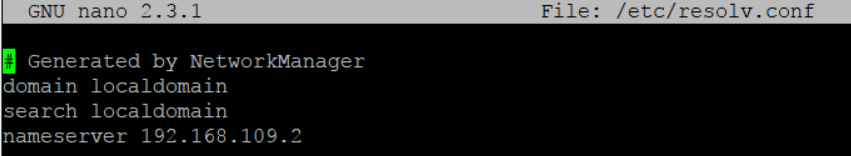

- Configure DNS resolution in resolv.conf

- Check that s130 and s131 can reach each other

[root@s130:/usr/bin]ping s130

PING s130 (192.168.109.130) 56(84) bytes of data.

64 bytes from s130 (192.168.109.130): icmp_seq=1 ttl=64 time=0.223 ms

64 bytes from s130 (192.168.109.130): icmp_seq=2 ttl=64 time=0.141 ms

64 bytes from s130 (192.168.109.130): icmp_seq=3 ttl=64 time=0.459 ms

^C

- Prepare the key pair for the fully distributed hosts

- Delete the contents of .ssh on every host

[root@s131:/root/.ssh]ssh s132 rm -rf /root/.ssh/*

[root@s131:/root/.ssh]ssh s133 rm -rf /root/.ssh/*

[root@s131:/root/.ssh]ssh s132

[root@s132:/root]cd .ssh

[root@s132:/root/.ssh]ls

[root@s130:/root]cd .ssh

[root@s130:/root/.ssh]ls

known_hosts

[root@s130:/root/.ssh]rm -rf *

[root@s130:/root/.ssh]ls

[root@s130:/root/.ssh]

- Use s130 as the master and generate a key pair on it

[root@s130:/root/.ssh]ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

Generating public/private rsa key pair.

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

db:ac:a6:63:71:eb:7c:ba:00:a1:71:61:7c:71:2c:f2 root@s130

The key's randomart image is:

+--[ RSA 2048]----+

| .o .o. |

| .o.o.. |

| . o+ . |

| + .E |

| . . S |

| .. .+ |

| .o..o |

| ooo.. |

| ..=*+ |

+-----------------+

[root@s130:/root/.ssh]ls

id_rsa id_rsa.pub

- Copy the master's public key to hosts s131-s133 with scp

[root@s130:/root/.ssh]scp id_rsa.pub root@s130:/root/.ssh/authorized_keys

[root@s130:/root/.ssh]scp id_rsa.pub root@s131:/root/.ssh/authorized_keys

root@s131's password:

id_rsa.pub 100% 391 0.4KB/s 00:00

[root@s130:/root/.ssh]scp id_rsa.pub root@s132:/root/.ssh/authorized_keys

The authenticity of host 's132 (192.168.109.132)' can't be established.

ECDSA key fingerprint is a7:5b:2c:55:73:e9:9a:2e:8d:48:a5:8b:98:dd:f8:05.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 's132,192.168.109.132' (ECDSA) to the list of known hosts.

root@s132's password:

id_rsa.pub 100% 391 0.4KB/s 00:00

[root@s130:/root/.ssh]scp id_rsa.pub root@s133:/root/.ssh/authorized_keys

The authenticity of host 's133 (192.168.109.133)' can't be established.

ECDSA key fingerprint is a7:5b:2c:55:73:e9:9a:2e:8d:48:a5:8b:98:dd:f8:05.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 's133,192.168.109.133' (ECDSA) to the list of known hosts.

root@s133's password:

id_rsa.pub 100% 391 0.4KB/s 00:00

[root@s130:/root/.ssh]
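Copying `id_rsa.pub` straight over `authorized_keys` replaces any keys that were already there. That is fine here because `.ssh` was just emptied, but on a machine with existing keys you would want to append instead. Below is a sketch of that idea, meant to run on the target host. The name `install_pubkey` is hypothetical, the key in the demo is a placeholder, and OpenSSH's `ssh-copy-id` does a similar job over the network:

```shell
#!/bin/sh
# Hypothetical helper: add a public key to authorized_keys only if it is
# not already present, and tighten the permissions sshd conventionally expects.
install_pubkey() {
  ip_key_file="$1"; ip_ssh_dir="$2"
  mkdir -p "$ip_ssh_dir" && chmod 700 "$ip_ssh_dir"
  touch "$ip_ssh_dir/authorized_keys"
  chmod 600 "$ip_ssh_dir/authorized_keys"
  if ! grep -qxF -- "$(cat "$ip_key_file")" "$ip_ssh_dir/authorized_keys"; then
    cat "$ip_key_file" >> "$ip_ssh_dir/authorized_keys"
  fi
}

# Demo in a scratch directory with a placeholder key (not a real key).
scratch_home="$(mktemp -d)"
echo "ssh-rsa AAAA-placeholder-key root@s130" > "$scratch_home/id_rsa.pub"
install_pubkey "$scratch_home/id_rsa.pub" "$scratch_home/.ssh"
cat "$scratch_home/.ssh/authorized_keys"
```

Calling the helper twice with the same key leaves a single line in `authorized_keys`.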

- Test passwordless login

[root@s130:/root/.ssh]ssh s131

Last login: Fri Dec 22 01:38:12 2017 from s133

[root@s131:/root]exit

logout

Connection to s131 closed.

[root@s130:/root/.ssh]ssh s132

Last login: Fri Dec 22 01:35:47 2017 from s131

[root@s132:/root]exit

logout

Connection to s132 closed.

[root@s130:/root/.ssh]ssh s133

Last login: Fri Dec 22 01:36:01 2017 from s132

[root@s133:/root]exit

logout

Connection to s133 closed.

[root@s130:/root/.ssh]

- The test succeeds. From the master host s130 you can now run commands on hosts s131-s133

[root@s130:/root/.ssh]ssh s132 ls -al /root/.ssh

total 12

drwx------. 2 root root 46 Dec 22 01:55 .

dr-xr-x---. 8 root root 4096 Dec 21 09:14 ..

-rw-r--r--. 1 root root 391 Dec 22 01:55 authorized_keys

-rw-r--r--. 1 root root 182 Dec 22 01:35 known_hosts

[root@s130:/root/.ssh]ssh s131 hostname

s131

[root@s130:/root/.ssh]ssh s133 ps -Af
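Since the same command often has to run on all three workers, a small loop saves typing. This is a sketch only: `run_on_workers` and the `DRY_RUN` switch are hypothetical names, and the host list is the one used in this post. It defaults to printing the commands so it can be tried without touching any machine. `BatchMode=yes` makes `ssh` fail instead of prompting if passwordless login is not working:

```shell
#!/bin/sh
# Hosts from this post; adjust to your own cluster.
WORKERS="s131 s132 s133"
# DRY_RUN=1 (default) only prints the commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"

run_on_workers() {
  for row_host in $WORKERS; do
    if [ "$DRY_RUN" = "1" ]; then
      echo "ssh $row_host $*"
    else
      ssh -o BatchMode=yes "$row_host" "$@"
    fi
  done
}

run_on_workers hostname
```

With `DRY_RUN=0`, `run_on_workers hostname` should print `s131`, `s132`, and `s133` if the key distribution above worked.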

- Set up the fully distributed configuration

Go into the full configuration directory.

[root@s130:/soft/hadoop2.7/etc]ll

total 12

drwxr-xr-x. 2 hadoop hadoop 4096 Dec 21 04:28 full

lrwxrwxrwx. 1 hadoop hadoop 6 Dec 21 04:30 hadoop -> pseudo

drwxr-xr-x. 2 hadoop hadoop 4096 Dec 21 04:10 local

drwxr-xr-x. 2 hadoop hadoop 4096 Dec 21 04:28 pseudo

[root@s130:/soft/hadoop2.7/etc]cd full

[root@s130:/soft/hadoop2.7/etc/full]l

Configure core-site.xml

<property>

<name>fs.defaultFS</name>

<value>hdfs://s130/</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/soft/hadoop2.7/tmp</value>

</property>
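The two properties above are shown without the enclosing `<configuration>` element that a Hadoop site file needs. As a sketch, the complete file could be generated like this. `write_site_xml` is a hypothetical helper, and the demo writes into a scratch directory rather than into `/soft/hadoop2.7/etc/full`:

```shell
#!/bin/sh
# Hypothetical helper: write a Hadoop site file from name/value pairs.
# Usage: write_site_xml OUTPUT_FILE name1 value1 [name2 value2 ...]
write_site_xml() {
  wsx_out="$1"; shift
  {
    echo '<?xml version="1.0"?>'
    echo '<configuration>'
    while [ "$#" -ge 2 ]; do
      printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
      shift 2
    done
    echo '</configuration>'
  } > "$wsx_out"
}

# Demo: the core-site.xml values used in this post, written to a scratch directory.
conf_dir="$(mktemp -d)"
write_site_xml "$conf_dir/core-site.xml" \
  fs.defaultFS hdfs://s130/ \
  hadoop.tmp.dir /soft/hadoop2.7/tmp
cat "$conf_dir/core-site.xml"
```

The same helper would work for the other site files, since they share the same property layout.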

Set the JAVA_HOME environment variable in hadoop-env.sh

export JAVA_HOME=/soft/jdk1.8

[root@s130:/soft/hadoop2.7/etc/hadoop]scp hadoop-env.sh root@s131:/soft/hadoop2.7/etc/full

hadoop-env.sh 100% 4224 4.1KB/s 00:00

[root@s130:/soft/hadoop2.7/etc/hadoop]scp hadoop-env.sh root@s132:/soft/hadoop2.7/etc/full

hadoop-env.sh 100% 4224 4.1KB/s 00:00

[root@s130:/soft/hadoop2.7/etc/hadoop]scp hadoop-env.sh root@s133:/soft/hadoop2.7/etc/full

hadoop-env.sh

Configure hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

</configuration>

Configure yarn-site.xml

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s130</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

Configure the slaves file

[root@s130:/soft/hadoop2.7/etc/hadoop]nano slaves

[root@s130:/soft/hadoop2.7/etc/hadoop]cat slaves

s131

s132

s133

[root@s130:/soft/hadoop2.7/etc/hadoop]scp slaves root@s131:/soft/hadoop2.7/etc/full

slaves 100% 15 0.0KB/s 00:00

[root@s130:/soft/hadoop2.7/etc/hadoop]scp slaves root@s132:/soft/hadoop2.7/etc/full

slaves 100% 15 0.0KB/s 00:00

[root@s130:/soft/hadoop2.7/etc/hadoop]scp slaves root@s133:/soft/hadoop2.7/etc/full

slaves 100% 15 0.0KB/s 00:00

[root@s130:/soft/hadoop2.7/etc/hadoop]
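With `dfs.replication` set to 3 and exactly three hosts in `slaves`, every block needs a copy on every worker. If the worker list ever shrinks, HDFS can no longer reach the requested replication. A quick sanity check can be sketched as follows. `count_workers` and `replication_ok` are hypothetical names, and the demo uses a scratch copy of the `slaves` file:

```shell
#!/bin/sh
# Count the non-blank lines in a slaves file.
count_workers() {
  grep -c '[^[:space:]]' "$1"
}

# Succeed only if there are at least as many workers as replicas requested.
replication_ok() {
  ro_slaves="$1"; ro_replication="$2"
  [ "$(count_workers "$ro_slaves")" -ge "$ro_replication" ]
}

# Demo with the three workers from this post.
scratch_slaves="$(mktemp)"
printf 's131\ns132\ns133\n' > "$scratch_slaves"
if replication_ok "$scratch_slaves" 3; then
  echo "replication 3 fits $(count_workers "$scratch_slaves") workers"
fi
```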

- After the changes, recursively copy the whole full directory to the same location on hosts s131-s133

[root@s130:/soft/hadoop2.7/etc]scp -r full root@s131:/soft/hadoop2.7/etc/

[root@s130:/soft/hadoop2.7/etc]scp -r full root@s132:/soft/hadoop2.7/etc/

[root@s130:/soft/hadoop2.7/etc]scp -r full root@s133:/soft/hadoop2.7/etc/

- Delete the previous symbolic link and create a new hadoop link that points to the full configuration

[root@s130:/soft/hadoop2.7/etc]rm hadoop

[root@s130:/soft/hadoop2.7/etc]ln -s full hadoop

[root@s130:/soft/hadoop2.7/etc]ll

total 12

drwxr-xr-x. 2 hadoop hadoop 4096 Dec 22 02:21 full

lrwxrwxrwx. 1 root root 4 Dec 22 02:21 hadoop -> full

drwxr-xr-x. 2 hadoop hadoop 4096 Dec 21 04:10 local

drwxr-xr-x. 2 hadoop hadoop 4096 Dec 21 04:28 pseudo
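The `rm` plus `ln -s` pair can be folded into one step with `ln -sfn`, which replaces an existing link in place. A sketch, assuming the `local`, `pseudo`, and `full` directories sit side by side as in the listing above. `switch_mode` is a hypothetical name, and the demo runs in a scratch directory:

```shell
#!/bin/sh
# Hypothetical helper: point ETC_DIR/hadoop at one of the configuration directories.
# Assumes "hadoop" is a symbolic link (or absent), never a real directory.
switch_mode() {
  sm_dir="$1"; sm_mode="$2"
  if [ ! -d "$sm_dir/$sm_mode" ]; then
    echo "no such configuration directory: $sm_mode" >&2
    return 1
  fi
  # -n keeps ln from descending into the directory the old link points to.
  ln -sfn "$sm_mode" "$sm_dir/hadoop"
}

# Demo in a scratch directory that mimics /soft/hadoop2.7/etc.
etc_dir="$(mktemp -d)"
mkdir "$etc_dir/local" "$etc_dir/pseudo" "$etc_dir/full"
ln -s pseudo "$etc_dir/hadoop"
switch_mode "$etc_dir" full
readlink "$etc_dir/hadoop"
```

Using a relative target (`full` rather than the absolute path) keeps the link valid if the whole Hadoop directory is later moved or copied.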

- Update the symbolic link on hosts s131-s133

[root@s130:/soft/hadoop2.7/etc]ssh s131 rm /soft/hadoop2.7/etc/hadoop

[root@s130:/soft/hadoop2.7/etc]ssh s132 rm /soft/hadoop2.7/etc/hadoop

[root@s130:/soft/hadoop2.7/etc]ssh s133 rm /soft/hadoop2.7/etc/hadoop

[root@s130:/soft/hadoop2.7/etc]ssh s131 ln -s /soft/hadoop2.7/etc/full /soft/hadoop2.7/etc/hadoop

[root@s130:/soft/hadoop2.7/etc]ssh s132 ln -s /soft/hadoop2.7/etc/full /soft/hadoop2.7/etc/hadoop

[root@s130:/soft/hadoop2.7/etc]ssh s133 ln -s /soft/hadoop2.7/etc/full /soft/hadoop2.7/etc/hadoop

- Delete the temporary directory files

I had already changed Hadoop's temporary directory earlier, so I skip the deletion here.

- Delete the Hadoop runtime logs

[root@s130:/soft/hadoop2.7/logs]rm -rf *

[root@s130:/soft/hadoop2.7/logs]ls

[root@s130:/soft/hadoop2.7/logs]ssh s131 rm -rf /soft/hadoop2.7/logs

[root@s130:/soft/hadoop2.7/logs]ssh s132 rm -rf /soft/hadoop2.7/logs

[root@s130:/soft/hadoop2.7/logs]ssh s133 rm -rf /soft/hadoop2.7/logs

- Format the NameNode

[root@s130:/soft/hadoop2.7/etc/hadoop]hadoop namenode -format

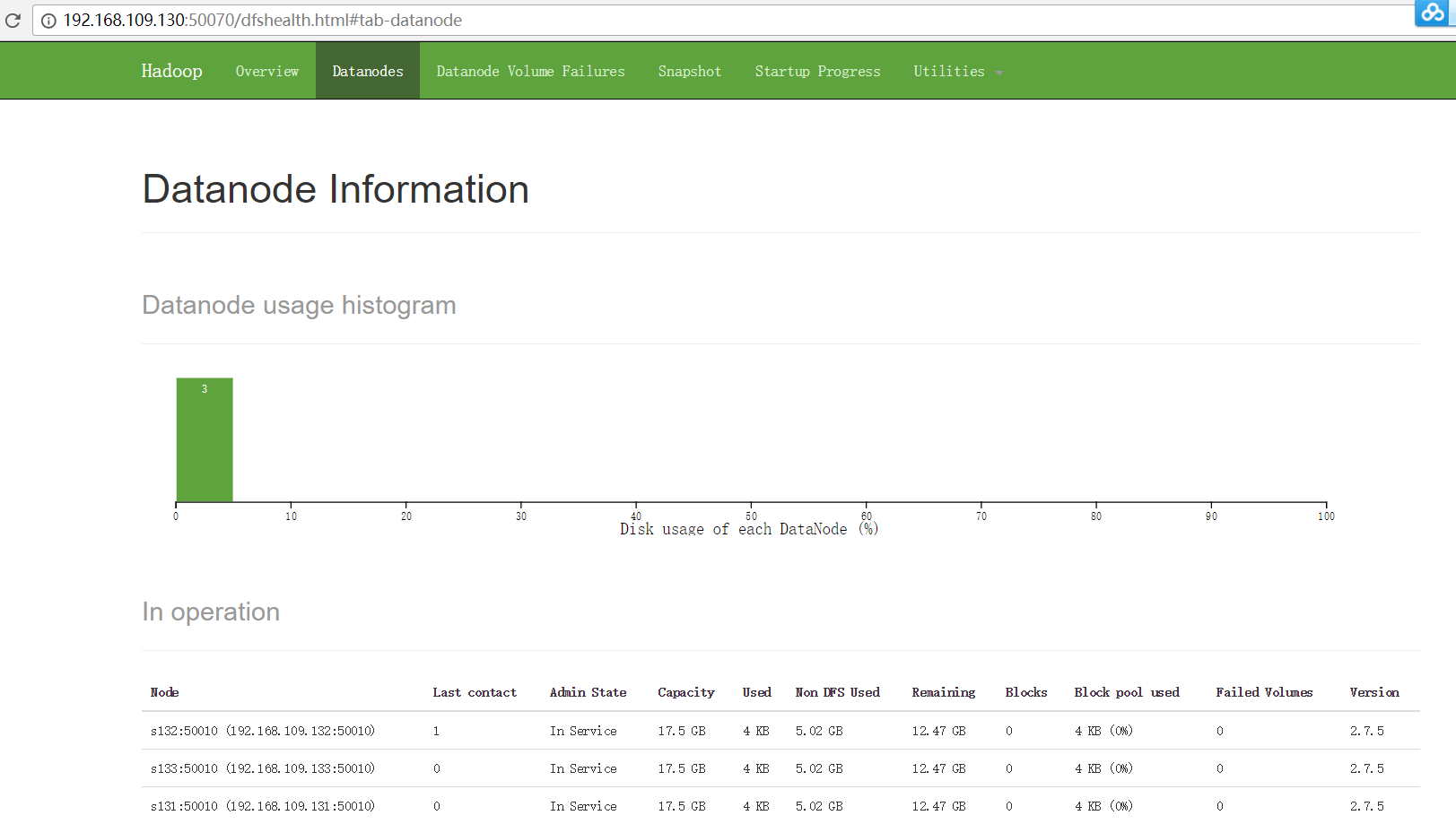

- Start Hadoop

[root@s130:/soft/hadoop2.7/etc/hadoop]jps

3841 SecondaryNameNode

4273 Jps

4012 ResourceManager

3599 NameNode

[root@s131:/root]jps

3723 NodeManager

4139 Jps

3565 DataNode

[root@s131:/root]ssh s132

The authenticity of host 's132 (192.168.109.132)' can't be established.

ECDSA key fingerprint is a7:5b:2c:55:73:e9:9a:2e:8d:48:a5:8b:98:dd:f8:05.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 's132,192.168.109.132' (ECDSA) to the list of known hosts.

root@s132's password:

Last login: Fri Dec 22 03:38:14 2017 from 192.168.109.1

[root@s132:/root]jps

3444 DataNode

3609 NodeManager

3945 Jps

[root@s132:/root]ssh s133

root@s133's password:

Last login: Fri Dec 22 03:38:12 2017 from 192.168.109.1

[root@s133:/root]jps

3621 NodeManager

3463 DataNode

3979 Jps
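Going by the output above, the master runs NameNode, SecondaryNameNode, and ResourceManager, while each worker runs DataNode and NodeManager. Reading `jps` output by eye on four machines gets tedious, so here is a sketch of a check that reports which expected daemons are absent. `missing_daemons` is a hypothetical name, and the sample text is the s130 output from this post:

```shell
#!/bin/sh
# Hypothetical helper: print each expected daemon name that does not
# appear as the second column of the given jps output.
# Usage: missing_daemons "JPS_OUTPUT" Daemon1 [Daemon2 ...]
missing_daemons() {
  md_output="$1"; shift
  for md_name in "$@"; do
    printf '%s\n' "$md_output" |
      awk -v d="$md_name" '$2 == d { found = 1 } END { exit !found }' ||
      printf '%s\n' "$md_name"
  done
}

# Sample: the jps output captured on s130 in this post.
master_jps="3841 SecondaryNameNode
4273 Jps
4012 ResourceManager
3599 NameNode"

# Prints nothing, because all three master daemons are present.
missing_daemons "$master_jps" NameNode SecondaryNameNode ResourceManager
```

On a live node the first argument would be `"$(jps)"`. The exact match on the second column matters, because a substring search for `NameNode` would also match `SecondaryNameNode`.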

Follow my WeChat official account: 小秋的博客

CSDN blog: https://blog.csdn.net/xiaoqiu_cr

GitHub: https://github.com/crr121

Email: rongchen633@gmail.com

If you have any questions, feel free to leave me a message.
