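Reproducing HRNet in PyTorch

Network Overview

Position-sensitive tasks such as semantic segmentation and keypoint detection need a high-resolution output; for keypoint detection this is a high-resolution heatmap. The usual way to obtain it is to lower the resolution first and then raise it again, as in U-Net, SegNet, DeconvNet, and Hourglass. What these networks have in common is that they connect the different resolutions in series.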

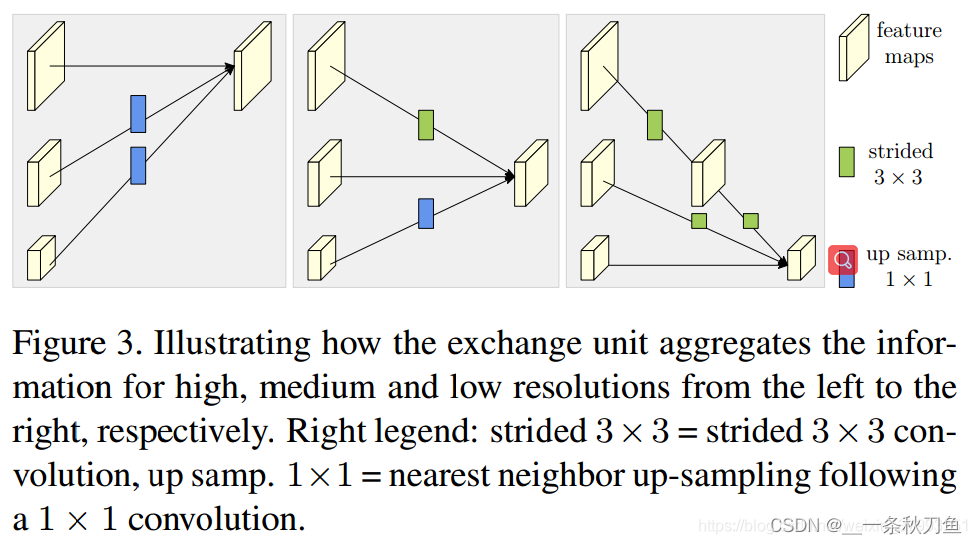

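Unlike the networks above, HRNet keeps the different resolutions in parallel and adds exchanges between them.

Rules for the parallel branches and their exchanges

- Branches at the same resolution are copied directly

- Upsampling uses bilinear upsampling plus a 1x1 convolution to match the channel count

- Downsampling uses strided 3x3 convolutions (no pooling)

- Feature maps are fused by element-wise addition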
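The rules above can be read as a small lookup table: for every pair of branches, which operation brings the source branch to the target branch's resolution before the sum. The sketch below is plain Python with no PyTorch dependency. The names `fuse_op` and `fusion_plan` are made up for illustration, and branch 0 is assumed to be the highest resolution, with each further branch at half the resolution of the previous one.

```python
from typing import List


def fuse_op(target: int, source: int) -> str:
    """Describe how branch `source` is brought to the resolution of branch `target`.

    Assumption: branch 0 has the highest resolution and each later branch halves it.
    """
    if source == target:
        return "identity"
    if source > target:
        # Lower resolution to higher: 1x1 conv to match channels, then bilinear upsampling.
        factor = 2 ** (source - target)
        return f"conv1x1 + bilinear upsample x{factor}"
    # Higher resolution to lower: one stride-2 3x3 conv per halving.
    steps = target - source
    return " -> ".join(["conv3x3 stride 2"] * steps)


def fusion_plan(num_branches: int) -> List[List[str]]:
    """plan[i][j] is the op applied to branch j before it is added into output i."""
    return [[fuse_op(i, j) for j in range(num_branches)]
            for i in range(num_branches)]


if __name__ == "__main__":
    for i, row in enumerate(fusion_plan(3)):
        print(f"output {i} = sum of {row}")
```

Each output branch is the element-wise sum of its row, so an n-branch module performs n*n conversions, n of which are the identity.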

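Choosing the final output

- General-purpose choice: use the highest-resolution feature map

- Semantic segmentation and facial landmark detection: upsample the feature maps of all resolutions to the same size, then concatenate them

- Object detection: build a feature pyramid on top of the concatenation

- Classification: fuse all four branches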
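For the concatenation option, it helps to work out the shapes by hand. The following is a minimal sketch using only the standard convolution output-size formula. It assumes a square 256x256 input, the four HRNet-32 branch widths (32, 64, 128, 256), and a stem of two 3x3 stride-2 convolutions with padding 1, which is what the code in this post uses. The helper names are hypothetical.

```python
from typing import List, Tuple

Shape = Tuple[int, int, int]  # (channels, height, width)


def conv_out(size: int, kernel: int = 3, stride: int = 2, padding: int = 1) -> int:
    """Output length of a convolution along one spatial axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def branch_shapes(input_size: int, channels: List[int]) -> List[Shape]:
    """Per-branch feature-map shapes for a square input."""
    # The stem applies two 3x3 stride-2 convs, so branch 0 runs at 1/4 resolution.
    size = conv_out(conv_out(input_size))
    shapes = []
    for c in channels:
        shapes.append((c, size, size))
        size = conv_out(size)  # each additional branch halves the resolution again
    return shapes


def concat_shape(shapes: List[Shape]) -> Shape:
    """Shape after upsampling every branch to branch 0's size and concatenating channels."""
    _, height, width = shapes[0]
    return (sum(c for c, _, _ in shapes), height, width)


shapes = branch_shapes(256, [32, 64, 128, 256])
print(shapes)                # [(32, 64, 64), (64, 32, 32), (128, 16, 16), (256, 8, 8)]
print(concat_shape(shapes))  # (480, 64, 64)
```

Under these assumptions the concatenated tensor has 480 channels at 64x64, which is the input that the final 1x1 convolutions in the code then reduce to 128 channels.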

Code

# ------------------------------------------------------------------------------
# Copyright (c) Microsoft
# Licensed under the MIT License.
# Written by RainbowSecret (yhyuan@pku.edu.cn)
# ------------------------------------------------------------------------------
import os

import numpy as np
import torch
import torch.nn as nn
import torch.nn.functional as F

# get_model_summary comes from a separate model_summary module that is not shown in this post.
from model_summary import get_model_summary

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

# You can modify the parameters here; HRNet-32 is used as the example.
# ReLU6 replaces ReLU throughout this implementation.
# --- HRNET_32 --- #
hrnet32 = {
    'STAGE1': {'NUM_MODULES': 1, 'NUM_BRANCHES': 1, 'NUM_BLOCKS': [4], 'NUM_CHANNELS': [64], 'BLOCK': 'BOTTLENECK', 'FUSE_METHOD': 'SUM'},
    'STAGE2': {'NUM_MODULES': 1, 'NUM_BRANCHES': 2, 'NUM_BLOCKS': [4, 4], 'NUM_CHANNELS': [32, 64], 'BLOCK': 'BASIC', 'FUSE_METHOD': 'SUM'},
    'STAGE3': {'NUM_MODULES': 4, 'NUM_BRANCHES': 3, 'NUM_BLOCKS': [4, 4, 4], 'NUM_CHANNELS': [32, 64, 128], 'BLOCK': 'BASIC', 'FUSE_METHOD': 'SUM'},
    'STAGE4': {'NUM_MODULES': 3, 'NUM_BRANCHES': 4, 'NUM_BLOCKS': [4, 4, 4, 4], 'NUM_CHANNELS': [32, 64, 128, 256], 'BLOCK': 'BASIC', 'FUSE_METHOD': 'SUM'},
}

def conv3x3(in_planes, out_planes, stride=1, groups=1, dilation=1):
    """3x3 convolution with padding"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=dilation, groups=groups, bias=False, dilation=dilation)

def conv1x1(in_planes, out_planes, stride=1):
    """1x1 convolution"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=1, stride=stride, bias=False)

# -- weight initialization -- #
def initialize_weights(*models):
    for model in models:
        for m in model.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight.data, nonlinearity='relu')
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1.)
                m.bias.data.fill_(1e-4)
            elif isinstance(m, nn.Linear):
                m.weight.data.normal_(0.0, 0.0001)
                m.bias.data.zero_()

class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None, groups=1,
                 base_width=64, dilation=1, norm_layer=None):
        super(BasicBlock, self).__init__()
        if norm_layer is None:
            norm_layer = nn.BatchNorm2d
        if groups != 1 or base_width != 64:
            raise ValueError('BasicBlock only supports groups=1 and base_width=64')
        if dilation > 1:
            raise NotImplementedError("Dilation > 1 not supported in BasicBlock")
        # Both self.conv1 and self.downsample layers downsample the input when stride != 1
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = norm_layer(planes, eps=1e-3)
        self.relu = nn.ReLU6(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = norm_layer(planes, eps=1e-3)
        self.downsample = downsample
        self.stride = stride
        initialize_weights(self)

    def forward(self, x):
        identity = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)

        return out

class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None, groups=1,
                 base_width=64, dilation=1, norm_layer=None):
        super(Bottleneck, self).__init__()
        if norm_layer is None:
            norm_layer = nn.BatchNorm2d
        width = int(planes * (base_width / 64.)) * groups
        # Both self.conv2 and self.downsample layers downsample the input when stride != 1
        self.conv1 = conv1x1(inplanes, width)
        self.bn1 = norm_layer(width, eps=1e-3)
        self.conv2 = conv3x3(width, width, stride, groups, dilation)
        self.bn2 = norm_layer(width, eps=1e-3)
        self.conv3 = conv1x1(width, planes * self.expansion)
        self.bn3 = norm_layer(planes * self.expansion, eps=1e-3)
        self.relu = nn.ReLU6(inplace=True)
        self.downsample = downsample
        self.stride = stride
        initialize_weights(self)

    def forward(self, x):
        identity = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)

        return out

class HighResolutionModule(nn.Module):

    def __init__(self, num_branches, blocks, num_blocks, num_inchannels,
                 num_channels, fuse_method, multi_scale_output=True, norm_layer=None):
        super(HighResolutionModule, self).__init__()
        self._check_branches(
            num_branches, blocks, num_blocks, num_inchannels, num_channels)

        if norm_layer is None:
            norm_layer = nn.BatchNorm2d
        self.norm_layer = norm_layer

        self.num_inchannels = num_inchannels
        self.fuse_method = fuse_method
        self.num_branches = num_branches

        self.multi_scale_output = multi_scale_output

        self.branches = self._make_branches(
            num_branches, blocks, num_blocks, num_channels)
        self.fuse_layers = self._make_fuse_layers()
        self.relu = nn.ReLU6(inplace=True)
        initialize_weights(self)

    def _check_branches(self, num_branches, blocks, num_blocks,
                        num_inchannels, num_channels):
        if num_branches != len(num_blocks):
            error_msg = 'NUM_BRANCHES({}) <> NUM_BLOCKS({})'.format(
                num_branches, len(num_blocks))
            raise ValueError(error_msg)

        if num_branches != len(num_channels):
            error_msg = 'NUM_BRANCHES({}) <> NUM_CHANNELS({})'.format(
                num_branches, len(num_channels))
            raise ValueError(error_msg)

        if num_branches != len(num_inchannels):
            error_msg = 'NUM_BRANCHES({}) <> NUM_INCHANNELS({})'.format(
                num_branches, len(num_inchannels))
            raise ValueError(error_msg)

    def _make_one_branch(self, branch_index, block, num_blocks, num_channels,
                         stride=1):
        downsample = None
        if stride != 1 or \
                self.num_inchannels[branch_index] != num_channels[branch_index] * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.num_inchannels[branch_index],
                          num_channels[branch_index] * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                self.norm_layer(num_channels[branch_index] * block.expansion),
            )

        layers = []
        layers.append(block(self.num_inchannels[branch_index],
                            num_channels[branch_index], stride, downsample, norm_layer=self.norm_layer))
        self.num_inchannels[branch_index] = \
            num_channels[branch_index] * block.expansion
        for i in range(1, num_blocks[branch_index]):
            layers.append(block(self.num_inchannels[branch_index],
                                num_channels[branch_index], norm_layer=self.norm_layer))

        return nn.Sequential(*layers)

    def _make_branches(self, num_branches, block, num_blocks, num_channels):
        branches = []

        for i in range(num_branches):
            branches.append(
                self._make_one_branch(i, block, num_blocks, num_channels))

        return nn.ModuleList(branches)

    def _make_fuse_layers(self):
        if self.num_branches == 1:
            return None

        num_branches = self.num_branches
        num_inchannels = self.num_inchannels
        fuse_layers = []
        for i in range(num_branches if self.multi_scale_output else 1):
            fuse_layer = []
            for j in range(num_branches):
                if j > i:
                    # Lower-resolution source: 1x1 conv to match channels
                    # (the bilinear upsampling itself happens in forward()).
                    fuse_layer.append(nn.Sequential(
                        nn.Conv2d(num_inchannels[j],
                                  num_inchannels[i],
                                  1,
                                  1,
                                  0,
                                  bias=False),
                        self.norm_layer(num_inchannels[i], eps=1e-3)))
                elif j == i:
                    # Same resolution: passed through unchanged.
                    fuse_layer.append(None)
                else:
                    # Higher-resolution source: one stride-2 3x3 conv per halving;
                    # only the last conv changes the channel count and has no ReLU6.
                    conv3x3s = []
                    for k in range(i-j):
                        if k == i - j - 1:
                            num_outchannels_conv3x3 = num_inchannels[i]
                            conv3x3s.append(nn.Sequential(
                                nn.Conv2d(num_inchannels[j],
                                          num_outchannels_conv3x3,
                                          3, 2, 1, bias=False),
                                self.norm_layer(num_outchannels_conv3x3, eps=1e-3)))
                        else:
                            num_outchannels_conv3x3 = num_inchannels[j]
                            conv3x3s.append(nn.Sequential(
                                nn.Conv2d(num_inchannels[j],
                                          num_outchannels_conv3x3,
                                          3, 2, 1, bias=False),
                                self.norm_layer(num_outchannels_conv3x3, eps=1e-3),
                                nn.ReLU6(inplace=True)))
                    fuse_layer.append(nn.Sequential(*conv3x3s))
            fuse_layers.append(nn.ModuleList(fuse_layer))

        return nn.ModuleList(fuse_layers)

    def get_num_inchannels(self):
        return self.num_inchannels

    def forward(self, x):
        if self.num_branches == 1:
            return [self.branches[0](x[0])]

        for i in range(self.num_branches):
            x[i] = self.branches[i](x[i])

        x_fuse = []
        for i in range(len(self.fuse_layers)):
            y = x[0] if i == 0 else self.fuse_layers[i][0](x[0])
            for j in range(1, self.num_branches):
                if i == j:
                    y = y + x[j]
                elif j > i:
                    width_output = x[i].shape[-1]
                    height_output = x[i].shape[-2]
                    y = y + F.interpolate(
                        self.fuse_layers[i][j](x[j]),
                        size=[height_output, width_output],
                        mode='bilinear',
                        align_corners=True
                    )
                else:
                    y = y + self.fuse_layers[i][j](x[j])
            x_fuse.append(self.relu(y))

        return x_fuse

blocks_dict = {
    'BASIC': BasicBlock,
    'BOTTLENECK': Bottleneck
}

class HighResolutionNet(nn.Module):

    def __init__(self,
                 original_figure_channel,
                 options,
                 cfg=None,
                 norm_layer=None):
        super(HighResolutionNet, self).__init__()

        if norm_layer is None:
            norm_layer = nn.BatchNorm2d
        if cfg is None:
            cfg = hrnet32
        self.norm_layer = norm_layer
        self.original_figure_channel = original_figure_channel

        # stem network
        self.conv1 = nn.Conv2d(self.original_figure_channel, 64, kernel_size=3, stride=2, padding=1,
                               bias=False)
        self.bn1 = self.norm_layer(64)
        self.conv2 = nn.Conv2d(64, 64, kernel_size=3, stride=2, padding=1,
                               bias=False)
        self.bn2 = self.norm_layer(64)
        self.relu = nn.ReLU6(inplace=True)

        # stage 1
        self.stage1_cfg = cfg['STAGE1']
        num_channels = self.stage1_cfg['NUM_CHANNELS'][0]
        block = blocks_dict[self.stage1_cfg['BLOCK']]
        num_blocks = self.stage1_cfg['NUM_BLOCKS'][0]
        self.layer1 = self._make_layer(block, 64, num_channels, num_blocks)
        stage1_out_channel = block.expansion*num_channels

        # stage 2
        self.stage2_cfg = cfg['STAGE2']
        num_channels = self.stage2_cfg['NUM_CHANNELS']
        block = blocks_dict[self.stage2_cfg['BLOCK']]
        num_channels = [
            num_channels[i] * block.expansion for i in range(len(num_channels))]
        self.transition1 = self._make_transition_layer(
            [stage1_out_channel], num_channels)
        self.stage2, pre_stage_channels = self._make_stage(
            self.stage2_cfg, num_channels)

        # stage 3
        self.stage3_cfg = cfg['STAGE3']
        num_channels = self.stage3_cfg['NUM_CHANNELS']
        block = blocks_dict[self.stage3_cfg['BLOCK']]
        num_channels = [
            num_channels[i] * block.expansion for i in range(len(num_channels))]
        self.transition2 = self._make_transition_layer(
            pre_stage_channels, num_channels)
        self.stage3, pre_stage_channels = self._make_stage(
            self.stage3_cfg, num_channels)

        # stage 4
        self.stage4_cfg = cfg['STAGE4']
        num_channels = self.stage4_cfg['NUM_CHANNELS']
        block = blocks_dict[self.stage4_cfg['BLOCK']]
        num_channels = [
            num_channels[i] * block.expansion for i in range(len(num_channels))]
        self.transition3 = self._make_transition_layer(
            pre_stage_channels, num_channels)
        self.stage4, pre_stage_channels = self._make_stage(
            self.stage4_cfg, num_channels, multi_scale_output=True)

        # np.int was removed in NumPy 1.24; the built-in int does the same job.
        last_inp_channels = int(np.sum(pre_stage_channels))

        self.last_layer = nn.Sequential(
            nn.Conv2d(
                in_channels=last_inp_channels,
                out_channels=last_inp_channels,
                kernel_size=1,
                stride=1,
                padding=0),
            self.norm_layer(last_inp_channels, eps=1e-3),
            nn.ReLU6(inplace=True),
            nn.Conv2d(
                in_channels=last_inp_channels,
                # --- Modified according to FeatureMap input --- #
                out_channels=128,
                kernel_size=1,
                stride=1,
                padding=0),
            # --- Batch norm added to fix NaN test loss --- #
            self.norm_layer(128, eps=1e-3)
        )

    def _make_transition_layer(
            self, num_channels_pre_layer, num_channels_cur_layer):
        num_branches_cur = len(num_channels_cur_layer)
        num_branches_pre = len(num_channels_pre_layer)

        transition_layers = []
        for i in range(num_branches_cur):
            if i < num_branches_pre:
                if num_channels_cur_layer[i] != num_channels_pre_layer[i]:
                    transition_layers.append(nn.Sequential(
                        nn.Conv2d(num_channels_pre_layer[i],
                                  num_channels_cur_layer[i],
                                  3,
                                  1,
                                  1,
                                  bias=False),
                        self.norm_layer(num_channels_cur_layer[i], eps=1e-3),
                        nn.ReLU6(inplace=True)))
                else:
                    transition_layers.append(None)
            else:
                conv3x3s = []
                for j in range(i+1-num_branches_pre):
                    inchannels = num_channels_pre_layer[-1]
                    outchannels = num_channels_cur_layer[i] \
                        if j == i-num_branches_pre else inchannels
                    conv3x3s.append(nn.Sequential(
                        nn.Conv2d(
                            inchannels, outchannels, 3, 2, 1, bias=False),
                        self.norm_layer(outchannels, eps=1e-3),
                        nn.ReLU6(inplace=True)))
                transition_layers.append(nn.Sequential(*conv3x3s))

        return nn.ModuleList(transition_layers)

    def _make_layer(self, block, inplanes, planes, blocks, stride=1):
        downsample = None
        if stride != 1 or inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                self.norm_layer(planes * block.expansion, eps=1e-3),
            )

        layers = []
        layers.append(block(inplanes, planes, stride, downsample, norm_layer=self.norm_layer))
        inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(inplanes, planes, norm_layer=self.norm_layer))

        return nn.Sequential(*layers)

    def _make_stage(self, layer_config, num_inchannels,
                    multi_scale_output=True):
        num_modules = layer_config['NUM_MODULES']
        num_branches = layer_config['NUM_BRANCHES']
        num_blocks = layer_config['NUM_BLOCKS']
        num_channels = layer_config['NUM_CHANNELS']
        block = blocks_dict[layer_config['BLOCK']]
        fuse_method = layer_config['FUSE_METHOD']

        modules = []
        for i in range(num_modules):
            # multi_scale_output only affects the last module of the stage
            if not multi_scale_output and i == num_modules - 1:
                reset_multi_scale_output = False
            else:
                reset_multi_scale_output = True
            modules.append(
                HighResolutionModule(num_branches,
                                     block,
                                     num_blocks,
                                     num_inchannels,
                                     num_channels,
                                     fuse_method,
                                     reset_multi_scale_output,
                                     norm_layer=self.norm_layer)
            )
            num_inchannels = modules[-1].get_num_inchannels()

        return nn.Sequential(*modules), num_inchannels

    def forward(self, x_):
        x = self.conv1(x_)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.conv2(x)
        x = self.bn2(x)
        x = self.relu(x)
        x = self.layer1(x)

        x_list = []
        for i in range(self.stage2_cfg['NUM_BRANCHES']):
            if self.transition1[i] is not None:
                x_list.append(self.transition1[i](x))
            else:
                x_list.append(x)
        y_list = self.stage2(x_list)

        x_list = []
        for i in range(self.stage3_cfg['NUM_BRANCHES']):
            if self.transition2[i] is not None:
                if i < self.stage2_cfg['NUM_BRANCHES']:
                    x_list.append(self.transition2[i](y_list[i]))
                else:
                    x_list.append(self.transition2[i](y_list[-1]))
            else:
                x_list.append(y_list[i])
        y_list = self.stage3(x_list)

        x_list = []
        for i in range(self.stage4_cfg['NUM_BRANCHES']):
            if self.transition3[i] is not None:
                if i < self.stage3_cfg['NUM_BRANCHES']:
                    x_list.append(self.transition3[i](y_list[i]))
                else:
                    x_list.append(self.transition3[i](y_list[-1]))
            else:
                x_list.append(y_list[i])
        x = self.stage4(x_list)

        # Upsampling
        #print(x[0].size(), x[1].size(), x[2].size(), x[3].size())
        # --- upsample the lower-resolution branches to the size of branch 0 (1/4 of the input) --- #
        x0_h, x0_w = x[0].size(2), x[0].size(3)
        # Alternative: upsample every branch to the original input size instead.
        #x0_h, x0_w = x_.size(2), x_.size(3)
        #x0 = F.interpolate(x[0], size=(x0_h, x0_w), mode='bilinear', align_corners=True)
        x1 = F.interpolate(x[1], size=(x0_h, x0_w), mode='bilinear', align_corners=True)
        x2 = F.interpolate(x[2], size=(x0_h, x0_w), mode='bilinear', align_corners=True)
        x3 = F.interpolate(x[3], size=(x0_h, x0_w), mode='bilinear', align_corners=True)

        # Concatenate all branches along the channel dimension
        x = torch.cat([x[0], x1, x2, x3], 1)
        x = self.last_layer(x)

        return x

if __name__ == '__main__':
    device = torch.device("cuda" if torch.cuda.is_available() else 'cpu')
    img = torch.randn(1, 27, 256, 256)
    img = img.to(device)
    model = HighResolutionNet(cfg=hrnet32, original_figure_channel=img.size()[1], options=None)
    model = model.to(device)
    details = get_model_summary(model, img)
    output = model(img)
    print(output.shape)
    print(details)

# OUTPUT:

torch.Size([1, 128, 64, 64])

Total Parameters: 29,613,024

----------------------------------------------------------------------------------------------------------------------------------

Total Multiply Adds (For Convolution and Linear Layers only): 10.923583984375 GFLOPs

----------------------------------------------------------------------------------------------------------------------------------

Number of Layers

Conv2d : 307 layers BatchNorm2d : 307 layers ReLU6 : 269 layers Bottleneck : 4 layers BasicBlock : 104 layers HighResolutionModule : 8 layers
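Some of the layer counts in this summary can be cross-checked directly against the config, without building the model. The sketch below copies only the relevant keys of `hrnet32` from the listing above. The function name `count_layers` is hypothetical. It assumes, as in the code, that stage 1 is built by `_make_layer` rather than from `HighResolutionModule` instances.

```python
# Relevant keys of the hrnet32 config (NUM_BRANCHES, NUM_CHANNELS and FUSE_METHOD omitted).
HRNET32 = {
    "STAGE1": {"NUM_MODULES": 1, "NUM_BLOCKS": [4], "BLOCK": "BOTTLENECK"},
    "STAGE2": {"NUM_MODULES": 1, "NUM_BLOCKS": [4, 4], "BLOCK": "BASIC"},
    "STAGE3": {"NUM_MODULES": 4, "NUM_BLOCKS": [4, 4, 4], "BLOCK": "BASIC"},
    "STAGE4": {"NUM_MODULES": 3, "NUM_BLOCKS": [4, 4, 4, 4], "BLOCK": "BASIC"},
}


def count_layers(cfg):
    """Count residual blocks and HighResolutionModule instances implied by a config."""
    counts = {"BOTTLENECK": 0, "BASIC": 0, "HighResolutionModule": 0}
    for name, stage in cfg.items():
        # Every module of a stage holds sum(NUM_BLOCKS) residual blocks across its branches.
        counts[stage["BLOCK"]] += stage["NUM_MODULES"] * sum(stage["NUM_BLOCKS"])
        # Stage 1 is a plain sequence of blocks, not a HighResolutionModule.
        if name != "STAGE1":
            counts["HighResolutionModule"] += stage["NUM_MODULES"]
    return counts


print(count_layers(HRNET32))
# {'BOTTLENECK': 4, 'BASIC': 104, 'HighResolutionModule': 8}
```

These three numbers agree with the Bottleneck, BasicBlock, and HighResolutionModule counts reported above.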
