Reproducing Xception in PyTorch

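Reference article: PyTorch Implementations of Classic Convolutional Architectures: Xception

Reference article: A Brief Overview of Convolutional Neural Network Architectures (Part 2): The Inception Family

GitHub project: Xception backbone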

Depthwise separable convolution

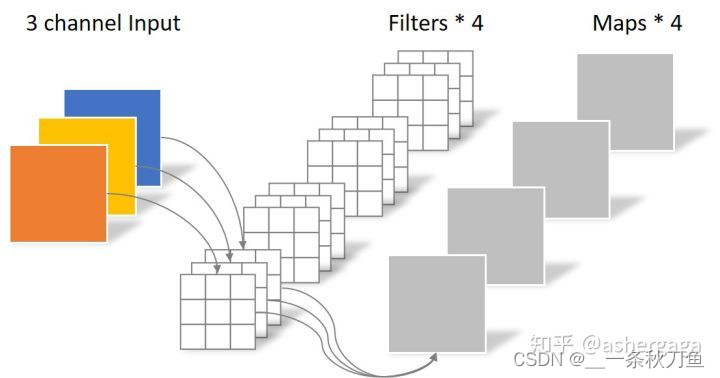

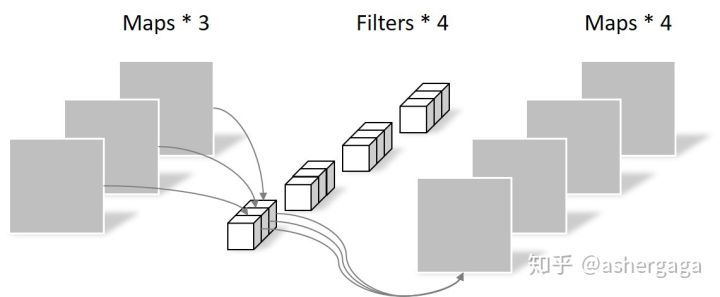

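Lightweight networks often use depthwise separable convolution, which combines a depthwise convolution with a pointwise convolution to extract feature maps.

In a depthwise convolution, each kernel handles exactly one channel, so the output has the same number of channels as the input. It cannot expand the feature map, and it makes no use of the information that different channels carry at the same spatial position. A pointwise convolution is therefore needed to combine the feature maps across channels.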

Depthwise convolution: $N = 3 \times 3 \times 3$

Pointwise convolution: $N = 1 \times 1 \times 3 \times 4$

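A regular convolution with the same shape (3 input channels, 4 output channels, $3 \times 3$ kernels) would need $3 \times 3 \times 3 \times 4 = 108$ parameters, whereas the depthwise separable version needs only $27 + 12 = 39$. Depthwise separable convolution therefore reduces the parameter count substantially.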
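The parameter counts above can be checked with a few lines of arithmetic. This is a minimal sketch that assumes bias-free convolutions, 3 input channels, 4 output channels, and a $3 \times 3$ kernel; the helper names are made up for illustration.

```python
def depthwise_params(in_channels, kernel_size):
    """One k x k kernel per input channel (bias omitted)."""
    return kernel_size * kernel_size * in_channels


def pointwise_params(in_channels, out_channels):
    """A 1 x 1 convolution that mixes channels (bias omitted)."""
    return in_channels * out_channels


def standard_conv_params(in_channels, out_channels, kernel_size):
    """A regular k x k convolution (bias omitted)."""
    return kernel_size * kernel_size * in_channels * out_channels


in_ch, out_ch, k = 3, 4, 3
dw = depthwise_params(in_ch, k)
pw = pointwise_params(in_ch, out_ch)
std = standard_conv_params(in_ch, out_ch, k)

print(dw, pw, dw + pw, std)  # 27 12 39 108
```

The gap widens as the channel counts grow, because the regular convolution scales with the product of kernel area, input channels, and output channels, while the separable version scales with their sum-like combination.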

    import torch.nn as nn

    class SeparableConv2d(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size=1, stride=1, padding=0, dilation=1, bias=False):
            super(SeparableConv2d, self).__init__()
            # Depthwise: groups=in_channels gives one kernel per input channel.
            self.conv1 = nn.Conv2d(in_channels, in_channels, kernel_size, stride, padding, dilation, groups=in_channels, bias=bias)
            # Pointwise: a 1x1 convolution that mixes information across channels.
            self.pointwise = nn.Conv2d(in_channels, out_channels, 1, 1, 0, 1, 1, bias=bias)

        def forward(self, x):
            x = self.conv1(x)
            x = self.pointwise(x)
            return x
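As a quick sanity check, the module can be run on a random tensor and compared against a regular `nn.Conv2d`. This is only a sketch: the input size (1 x 3 x 32 x 32) and the `count` helper are assumptions made for the example, and the class is repeated so the snippet runs on its own.

```python
import torch
import torch.nn as nn


class SeparableConv2d(nn.Module):
    def __init__(self, in_channels, out_channels, kernel_size=1, stride=1,
                 padding=0, dilation=1, bias=False):
        super().__init__()
        # Depthwise step: one kernel per input channel.
        self.conv1 = nn.Conv2d(in_channels, in_channels, kernel_size, stride,
                               padding, dilation, groups=in_channels, bias=bias)
        # Pointwise step: 1x1 convolution across channels.
        self.pointwise = nn.Conv2d(in_channels, out_channels, 1, 1, 0, 1, 1, bias=bias)

    def forward(self, x):
        return self.pointwise(self.conv1(x))


def count(module):
    """Total number of learnable parameters in a module."""
    return sum(p.numel() for p in module.parameters())


x = torch.randn(1, 3, 32, 32)  # arbitrary example input
sep = SeparableConv2d(3, 4, kernel_size=3, padding=1)
std = nn.Conv2d(3, 4, kernel_size=3, padding=1, bias=False)

out_shape = tuple(sep(x).shape)
sep_params = count(sep)
std_params = count(std)
print(out_shape, sep_params, std_params)  # (1, 4, 32, 32) 39 108
```

With `padding=1` the 3x3 depthwise step keeps the spatial size, so only the channel count changes from 3 to 4.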

The evolution of Inception

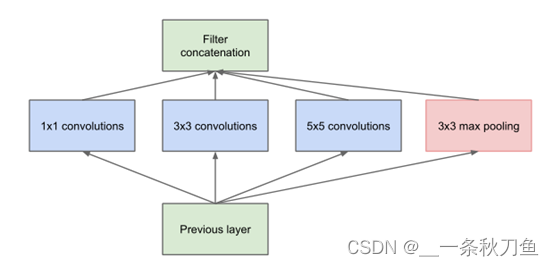

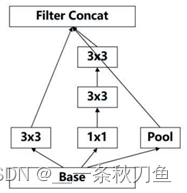

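GoogLeNet

Unlike VGG-style networks, which simply keep stacking layers, GoogLeNet addresses the excessive parameter count and overfitting that come with deeper networks by running convolution kernels of several sizes in parallel and merging their outputs. Compared with VGG, it makes the following improvements:

- By using kernels of different sizes, every layer can learn both sparse features ($3 \times 3$ and $5 \times 5$ kernels) and non-sparse features ($1 \times 1$ kernels).

- It widens the network, which makes it more adaptable to different scales.

- Concatenating the outputs of different blocks adds non-linearity.

The computational cost still grows quickly as the network gets deeper. Borrowing the idea from Network in Network, GoogLeNet therefore uses $1 \times 1$ kernels for dimensionality reduction, which cuts the number of parameters. This is Inception V1.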
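To make the effect of the $1 \times 1$ reduction concrete, the sketch below compares a direct $5 \times 5$ convolution with a $1 \times 1$ reduction followed by a $5 \times 5$ convolution. The channel sizes (192, 16, 32) are hypothetical values chosen for illustration, and biases are ignored.

```python
def conv_params(kernel_size, in_channels, out_channels):
    """Weight count of a k x k convolution, bias omitted."""
    return kernel_size * kernel_size * in_channels * out_channels


# Hypothetical channel sizes, chosen only for illustration.
in_ch, reduced_ch, out_ch = 192, 16, 32

# 5x5 convolution applied directly to the wide input.
direct = conv_params(5, in_ch, out_ch)

# 1x1 reduction first, then the 5x5 convolution on the narrow tensor.
bottleneck = conv_params(1, in_ch, reduced_ch) + conv_params(5, reduced_ch, out_ch)

print(direct)      # 153600
print(bottleneck)  # 15872
```

Under these assumptions the bottleneck version needs roughly a tenth of the weights, because the expensive $5 \times 5$ kernel only sees 16 channels instead of 192.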

Inception Network

Inception V1

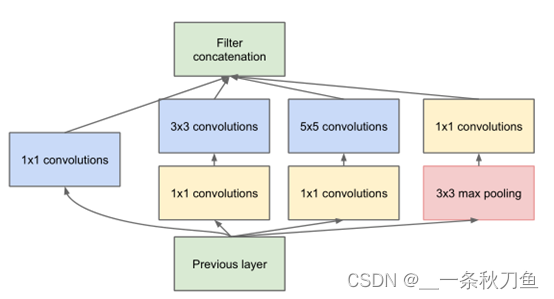

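Building on the Inception V1 structure, the improvements in Inception V2 and V3 follow these principles:

- The feature map size should decrease gradually from input to output.

- Adding non-linearity to a convolutional network lets it train faster.

- Reducing the dimensionality of the input before a large-kernel convolution does not hurt its representational power. In other words, spatial aggregation can be performed on a lower-dimensional embedding.

- The depth and width of the network should be balanced.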

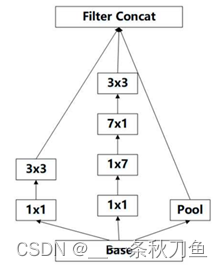

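Inception V2

Inception V2 introduces:

- Batch normalization (BN) layers.

- Two $3 \times 3$ kernels in place of each $5 \times 5$ kernel, which uses fewer parameters and indirectly makes the network deeper.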
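The trade-off in the second point can be checked numerically. This sketch assumes stride-1 convolutions with the same number of input and output channels (64 is an arbitrary choice) and ignores biases; the function names are illustrative.

```python
def conv_params(kernel_size, in_channels, out_channels):
    """Weight count of a k x k convolution, bias omitted."""
    return kernel_size * kernel_size * in_channels * out_channels


def stacked_receptive_field(kernel_sizes):
    """Receptive field of stride-1 convolutions applied one after another."""
    rf = 1
    for k in kernel_sizes:
        rf += k - 1
    return rf


channels = 64  # hypothetical channel count

one_5x5 = conv_params(5, channels, channels)
two_3x3 = 2 * conv_params(3, channels, channels)

print(one_5x5, two_3x3)                  # 102400 73728
print(stacked_receptive_field([5]))      # 5
print(stacked_receptive_field([3, 3]))   # 5
```

Both variants cover a $5 \times 5$ receptive field, but the stacked version uses 18/25 of the weights and applies one more non-linearity.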

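Because Inception V2 applies pooling first and the Inception module afterwards, it runs into a representational bottleneck. The feature map size should not shrink abruptly: when it drops sharply within a single layer, a large amount of information is lost, which makes the model harder to train.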

Inception V3

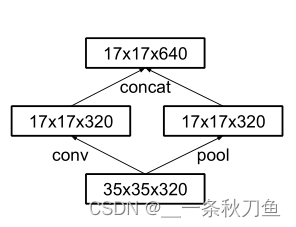

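Inception V3 reduces the grid size with a parallel structure: the input goes through a convolution branch and a pooling branch, and the two results are concatenated.

The grid size is reduced in two such steps: 35 → 17 and 17 → 8.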
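The 35 → 17 and 17 → 8 reductions follow from the usual output-size formula if both branches use a $3 \times 3$ window with stride 2 and no padding. That configuration is an assumption here, not something stated in the text above; the sketch only shows that the two branches then produce matching grids that can be concatenated along the channel axis.

```python
def out_size(size, kernel_size, stride, padding=0):
    """Spatial output size of a convolution or pooling layer (floor mode)."""
    return (size + 2 * padding - kernel_size) // stride + 1


# Assumed branch settings: 3x3 window, stride 2, no padding.
conv_35 = out_size(35, kernel_size=3, stride=2)
pool_35 = out_size(35, kernel_size=3, stride=2)
conv_17 = out_size(17, kernel_size=3, stride=2)
pool_17 = out_size(17, kernel_size=3, stride=2)

print(conv_35, pool_35)  # 17 17
print(conv_17, pool_17)  # 8 8
```

Because the convolution branch and the pooling branch end on the same grid size, their outputs can be concatenated without any resizing.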

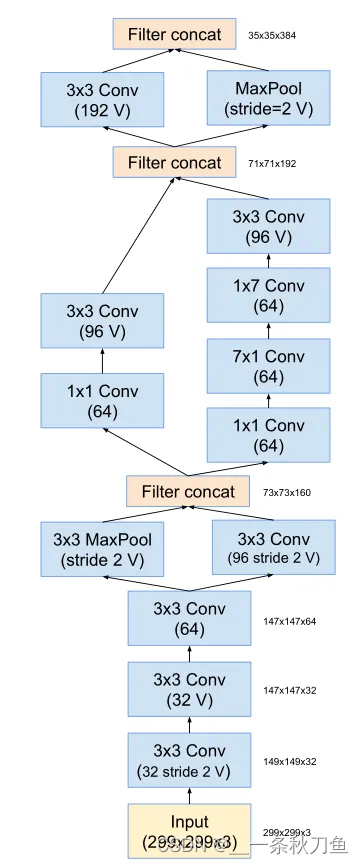

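Inception V4

Building on Inception V3, Inception V4 replaces the convolution and pooling operations in the first few layers with a stem module, which makes the network deeper.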

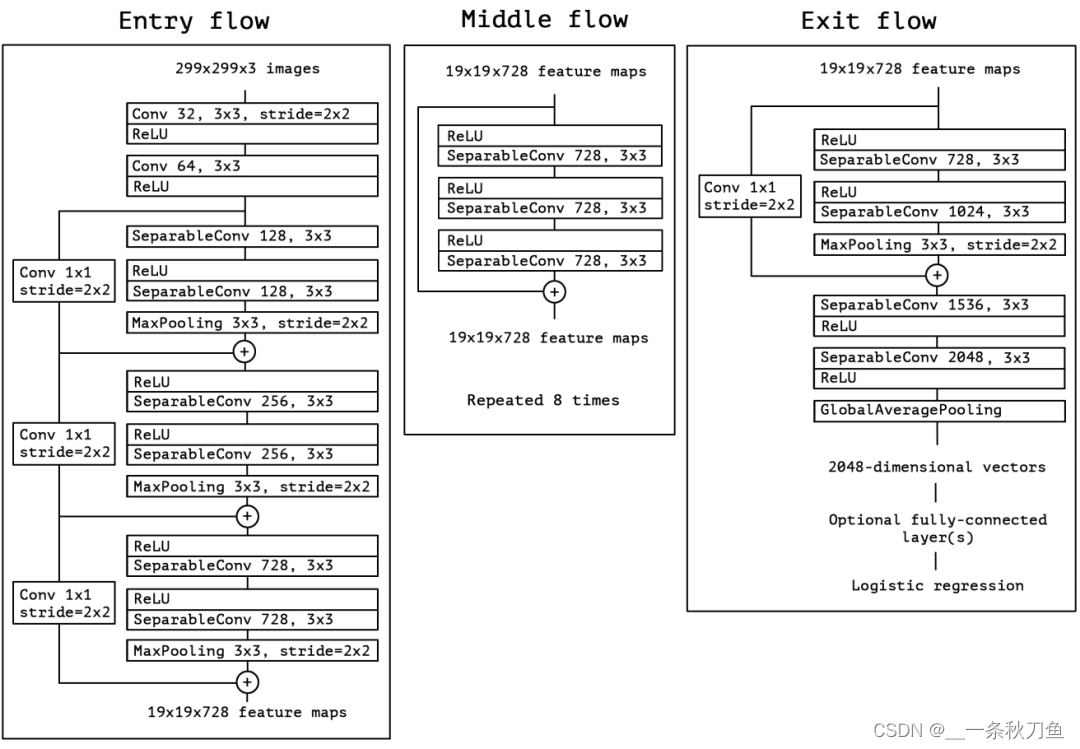

Xception

Xception replaces the Inception modules of the Inception architecture with depthwise separable convolutions.

The network also borrows the skip connection from ResNet, and every convolution is followed by BN.

    """
    Xception is adapted from https://github.com/Cadene/pretrained-models.pytorch/blob/master/pretrainedmodels/models/xception.py

    Ported to pytorch thanks to [tstandley](https://github.com/tstandley/Xception-PyTorch)

    @author: tstandley
    Adapted by cadene

    Creates an Xception Model as defined in:

    Francois Chollet
    Xception: Deep Learning with Depthwise Separable Convolutions
    https://arxiv.org/pdf/1610.02357.pdf

    These weights were ported from the Keras implementation. Achieves the following performance on the validation set:

    Loss:0.9173 Prec@1:78.892 Prec@5:94.292

    REMEMBER to set your image size to 3x299x299 for both test and validation

    normalize = transforms.Normalize(mean=[0.5, 0.5, 0.5],
                                      std=[0.5, 0.5, 0.5])

    The resize parameter of the validation transform should be 333, and make sure to center crop at 299x299
    """
    from __future__ import print_function, division, absolute_import
    import math
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.utils.model_zoo as model_zoo
    from torch.nn import init

    __all__ = ['xception']

    pretrained_settings = {
        'xception': {
            'imagenet': {
                'url': 'http://data.lip6.fr/cadene/pretrainedmodels/xception-43020ad28.pth',
                'input_space': 'RGB',
                'input_size': [3, 299, 299],
                'input_range': [0, 1],
                'mean': [0.5, 0.5, 0.5],
                'std': [0.5, 0.5, 0.5],
                'num_classes': 1000,
                'scale': 0.8975  # The resize parameter of the validation transform should be 333, and make sure to center crop at 299x299
            }
        }
    }

    class SeparableConv2d(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size=1, stride=1, padding=0, dilation=1, bias=False):
            super(SeparableConv2d, self).__init__()

            self.conv1 = nn.Conv2d(in_channels, in_channels, kernel_size, stride, padding, dilation, groups=in_channels, bias=bias)
            self.pointwise = nn.Conv2d(in_channels, out_channels, 1, 1, 0, 1, 1, bias=bias)

        def forward(self, x):
            x = self.conv1(x)
            x = self.pointwise(x)
            return x

    class Block(nn.Module):
        def __init__(self, in_filters, out_filters, reps, strides=1, start_with_relu=True, grow_first=True, dilation=1):
            super(Block, self).__init__()

            # skip connections: project with a 1x1 conv when the shape changes
            if out_filters != in_filters or strides != 1:
                self.skip = nn.Conv2d(in_filters, out_filters, 1, stride=strides, bias=False)
                self.skipbn = nn.BatchNorm2d(out_filters)
            else:
                self.skip = None

            rep = []

            filters = in_filters
            if grow_first:
                rep.append(nn.ReLU(inplace=True))
                rep.append(SeparableConv2d(in_filters, out_filters, 3, stride=1, padding=dilation, dilation=dilation, bias=False))
                rep.append(nn.BatchNorm2d(out_filters))
                filters = out_filters

            for i in range(reps - 1):
                rep.append(nn.ReLU(inplace=True))
                rep.append(SeparableConv2d(filters, filters, 3, stride=1, padding=dilation, dilation=dilation, bias=False))
                rep.append(nn.BatchNorm2d(filters))

            if not grow_first:
                rep.append(nn.ReLU(inplace=True))
                rep.append(SeparableConv2d(in_filters, out_filters, 3, stride=1, padding=dilation, dilation=dilation, bias=False))
                rep.append(nn.BatchNorm2d(out_filters))

            if not start_with_relu:
                rep = rep[1:]
            else:
                # the first ReLU must not be in-place, because the input is reused by the skip path
                rep[0] = nn.ReLU(inplace=False)

            # downsampling is done by max pooling at the end of the block
            if strides != 1:
                rep.append(nn.MaxPool2d(3, strides, 1))
            self.rep = nn.Sequential(*rep)

        def forward(self, inp):
            x = self.rep(inp)

            if self.skip is not None:
                skip = self.skip(inp)
                skip = self.skipbn(skip)
            else:  # middle flow
                skip = inp

            x += skip
            return x

    class Xception(nn.Module):
        """
        Xception optimized for the ImageNet dataset, as specified in
        https://arxiv.org/pdf/1610.02357.pdf
        """
        def __init__(self, num_classes=1000, replace_stride_with_dilation=None):
            """ Constructor
            Args:
                num_classes: number of classes
                replace_stride_with_dilation: None, or 4 booleans, one per
                    downsampling block (block1, block2, block3, block12)
            """
            super(Xception, self).__init__()
            self.num_classes = num_classes
            self.dilation = 1
            if replace_stride_with_dilation is None:
                # each element in the tuple indicates if we should replace
                # the 2x2 stride with a dilated convolution instead
                replace_stride_with_dilation = [False, False, False, False]
            if len(replace_stride_with_dilation) != 4:
                raise ValueError("replace_stride_with_dilation should be None "
                                 "or a 4-element tuple, got {}".format(replace_stride_with_dilation))

            self.conv1 = nn.Conv2d(3, 32, 3, 2, 0, bias=False)  # 1 / 2
            self.bn1 = nn.BatchNorm2d(32)
            self.relu1 = nn.ReLU(inplace=True)

            self.conv2 = nn.Conv2d(32, 64, 3, bias=False)
            self.bn2 = nn.BatchNorm2d(64)
            self.relu2 = nn.ReLU(inplace=True)
            # do relu here

            self.block1 = self._make_block(64, 128, 2, 2, start_with_relu=False, grow_first=True, dilate=replace_stride_with_dilation[0])  # 1 / 4
            self.block2 = self._make_block(128, 256, 2, 2, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[1])  # 1 / 8
            self.block3 = self._make_block(256, 728, 2, 2, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])  # 1 / 16

            self.block4 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])
            self.block5 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])
            self.block6 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])
            self.block7 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])

            self.block8 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])
            self.block9 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])
            self.block10 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])
            self.block11 = self._make_block(728, 728, 3, 1, start_with_relu=True, grow_first=True, dilate=replace_stride_with_dilation[2])

            self.block12 = self._make_block(728, 1024, 2, 2, start_with_relu=True, grow_first=False, dilate=replace_stride_with_dilation[3])  # 1 / 32

            # padding equals dilation so that the 3x3 convolution preserves the spatial size
            self.conv3 = SeparableConv2d(1024, 1536, 3, 1, self.dilation, dilation=self.dilation)
            self.bn3 = nn.BatchNorm2d(1536)
            self.relu3 = nn.ReLU(inplace=True)

            # do relu here
            self.conv4 = SeparableConv2d(1536, 2048, 3, 1, self.dilation, dilation=self.dilation)
            self.bn4 = nn.BatchNorm2d(2048)

            self.fc = nn.Linear(2048, num_classes)

            # #------- init weights --------
            # for m in self.modules():
            #     if isinstance(m, nn.Conv2d):
            #         n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
            #         m.weight.data.normal_(0, math.sqrt(2. / n))
            #     elif isinstance(m, nn.BatchNorm2d):
            #         m.weight.data.fill_(1)
            #         m.bias.data.zero_()
            # #-----------------------------

        def _make_block(self, in_filters, out_filters, reps, strides=1, start_with_relu=True, grow_first=True, dilate=False):
            # when dilate is set, trade the stride for a larger dilation rate
            if dilate:
                self.dilation *= strides
                strides = 1
            return Block(in_filters, out_filters, reps, strides, start_with_relu=start_with_relu, grow_first=grow_first, dilation=self.dilation)

        def features(self, input):
            x = self.conv1(input)
            x = self.bn1(x)
            x = self.relu1(x)

            x = self.conv2(x)
            x = self.bn2(x)
            x = self.relu2(x)

            x = self.block1(x)
            x = self.block2(x)
            x = self.block3(x)
            x = self.block4(x)
            x = self.block5(x)
            x = self.block6(x)
            x = self.block7(x)
            x = self.block8(x)
            x = self.block9(x)
            x = self.block10(x)
            x = self.block11(x)
            x = self.block12(x)

            x = self.conv3(x)
            x = self.bn3(x)
            x = self.relu3(x)

            x = self.conv4(x)
            x = self.bn4(x)
            return x

        def logits(self, features):
            x = nn.ReLU(inplace=True)(features)

            x = F.adaptive_avg_pool2d(x, (1, 1))
            x = x.view(x.size(0), -1)
            # last_linear is attached by the xception() factory, which renames self.fc
            x = self.last_linear(x)
            return x

        def forward(self, input):
            x = self.features(input)
            x = self.logits(x)
            return x

    def xception(num_classes=1000, pretrained='imagenet', replace_stride_with_dilation=None):
        model = Xception(num_classes=num_classes, replace_stride_with_dilation=replace_stride_with_dilation)
        if pretrained:
            settings = pretrained_settings['xception'][pretrained]
            assert num_classes == settings['num_classes'], \
                "num_classes should be {}, but is {}".format(settings['num_classes'], num_classes)

            model.load_state_dict(model_zoo.load_url(settings['url']))

        # TODO: ugly
        # rename fc to last_linear, the attribute that Xception.logits() uses
        model.last_linear = model.fc
        del model.fc
        return model
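To see what `replace_stride_with_dilation` does to the feature map, the spatial size can be traced by hand. The sketch below is plain arithmetic, not part of the model: `trace_xception` is a hypothetical helper that mirrors the stride and dilation bookkeeping of `_make_block`, and it assumes that every separable convolution preserves the spatial size (padding equal to dilation), so only `conv1`, `conv2`, and the max-pooling layers of block1, block2, block3, and block12 change it.

```python
def out_size(size, kernel_size, stride, padding=0):
    """Spatial output size of a convolution or pooling layer (floor mode)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def trace_xception(size, replace_stride_with_dilation=(False, False, False, False)):
    """Return (final feature map size, final dilation) for a square input."""
    size = out_size(size, 3, 2)  # conv1: 3x3, stride 2, no padding
    size = out_size(size, 3, 1)  # conv2: 3x3, stride 1, no padding
    dilation = 1
    # block1, block2, block3 and block12 each have stride 2
    for stride, dilate in zip([2, 2, 2, 2], replace_stride_with_dilation):
        if dilate:
            dilation *= stride  # keep the resolution, enlarge the dilation
        else:
            size = out_size(size, 3, stride, padding=1)  # MaxPool2d(3, stride, 1)
    return size, dilation


print(trace_xception(299))                              # (10, 1)
print(trace_xception(299, (False, False, False, True)))  # (19, 2)
print(trace_xception(299, (False, False, True, True)))   # (37, 4)
```

With the default flags a 299 x 299 input ends as a 10 x 10 map. Each `True` flag keeps the resolution of the corresponding stage and doubles the dilation instead, which is the usual way to adapt a classification backbone for dense prediction.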
