word2vec 实现 影评情感分析

目录

import所需库

# bs4 nltk gensim

import os

import re

import numpy as np

import pandas as pd

from bs4 import BeautifulSoup

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import confusion_matrix

from sklearn.linear_model import LogisticRegression

import nltk

from nltk.corpus import stopwords

# nltk.download()

# 测试 nltk tokenizers 部分的安装

import nltk

tokenizer = nltk.data.load('tokenizers/punkt/english.pickle')

用pandas读入训练数据

df = pd.read_csv('../data/labeledTrainData.tsv', sep='\t', escapechar='\\')

print('Number of reviews: {}'.format(len(df)))

df.head()

# sentiment 喜欢电影与否;review:对电影的评论

Number of reviews: 25000

| id | sentiment | review | |

|---|---|---|---|

| 0 | 5814_8 | 1 | With all this stuff going down at the moment w... |

| 1 | 2381_9 | 1 | "The Classic War of the Worlds" by Timothy Hin... |

| 2 | 7759_3 | 0 | The film starts with a manager (Nicholas Bell)... |

| 3 | 3630_4 | 0 | It must be assumed that those who praised this... |

| 4 | 9495_8 | 1 | Superbly trashy and wondrously unpretentious 8... |

对影评数据做预处理,大概有以下环节:

- 去掉html标签

- 移除标点

- 切分成词/token

- 去掉停用词

- 重组为新的句子

df['review'][1000]

"I watched this movie really late last night and usually if it's late then I'm pretty forgiving of movies. Although I tried, I just could not stand this movie at all, it kept getting worse and worse as the movie went on. Although I know it's suppose to be a comedy but I didn't find it very funny. It was also an especially unrealistic, and jaded portrayal of rural life. In case this is what any of you think country life is like, it's definitely not. I do have to agree that some of the guy cast members were cute, but the french guy was really fake. I do have to agree that it tried to have a good lesson in the story, but overall my recommendation is that no one over 8 watch it, it's just too annoying."

# 去掉HTML标签的数据

example = BeautifulSoup(df['review'][1000], 'html.parser').get_text()

example

"I watched this movie really late last night and usually if it's late then I'm pretty forgiving of movies. Although I tried, I just could not stand this movie at all, it kept getting worse and worse as the movie went on. Although I know it's suppose to be a comedy but I didn't find it very funny. It was also an especially unrealistic, and jaded portrayal of rural life. In case this is what any of you think country life is like, it's definitely not. I do have to agree that some of the guy cast members were cute, but the french guy was really fake. I do have to agree that it tried to have a good lesson in the story, but overall my recommendation is that no one over 8 watch it, it's just too annoying."

# 去掉标点符号

example_letters = re.sub(r'[^a-zA-Z]', ' ', example)

example_letters

'I watched this movie really late last night and usually if it s late then I m pretty forgiving of movies Although I tried I just could not stand this movie at all it kept getting worse and worse as the movie went on Although I know it s suppose to be a comedy but I didn t find it very funny It was also an especially unrealistic and jaded portrayal of rural life In case this is what any of you think country life is like it s definitely not I do have to agree that some of the guy cast members were cute but the french guy was really fake I do have to agree that it tried to have a good lesson in the story but overall my recommendation is that no one over watch it it s just too annoying '

words = example_letters.lower().split()

words

['i',

'watched',

'this',

'movie',

'really',

'late',

'last',

'night',

'and',

'usually',

'if',

'it',

's',

'late',

'then',

'i',

'm',

'pretty',

'forgiving',

'of',

'movies',

'although',

'i',

'tried',

'i',

'just',

'could',

'not',

'stand',

'this',

'movie',

'at',

'all',

'it',

'kept',

'getting',

'worse',

'and',

'worse',

'as',

'the',

'movie',

'went',

'on',

'although',

'i',

'know',

'it',

's',

'suppose',

'to',

'be',

'a',

'comedy',

'but',

'i',

'didn',

't',

'find',

'it',

'very',

'funny',

'it',

'was',

'also',

'an',

'especially',

'unrealistic',

'and',

'jaded',

'portrayal',

'of',

'rural',

'life',

'in',

'case',

'this',

'is',

'what',

'any',

'of',

'you',

'think',

'country',

'life',

'is',

'like',

'it',

's',

'definitely',

'not',

'i',

'do',

'have',

'to',

'agree',

'that',

'some',

'of',

'the',

'guy',

'cast',

'members',

'were',

'cute',

'but',

'the',

'french',

'guy',

'was',

'really',

'fake',

'i',

'do',

'have',

'to',

'agree',

'that',

'it',

'tried',

'to',

'have',

'a',

'good',

'lesson',

'in',

'the',

'story',

'but',

'overall',

'my',

'recommendation',

'is',

'that',

'no',

'one',

'over',

'watch',

'it',

'it',

's',

'just',

'too',

'annoying']

#下载停用词和其他语料会用到

#nltk.download()

#去停用词

stopwords = {}.fromkeys([ line.rstrip() for line in open('../stopwords.txt')])

words_nostop = [w for w in words if w not in stopwords]

words_nostop

['watched',

'movie',

'late',

'night',

'late',

'pretty',

'forgiving',

'movies',

'stand',

'movie',

'worse',

'worse',

'movie',

'suppose',

'comedy',

'didn',

'funny',

'unrealistic',

'jaded',

'portrayal',

'rural',

'life',

'country',

'life',

'agree',

'guy',

'cast',

'cute',

'french',

'guy',

'fake',

'agree',

'lesson',

'story',

'recommendation',

'watch',

'annoying']

eng_stopwords = set(stopwords)

def clean_text(text):

text = BeautifulSoup(text, 'html.parser').get_text()

text = re.sub(r'[^a-zA-Z]', ' ', text)

words = text.lower().split()

words = [w for w in words if w not in eng_stopwords]

return ' '.join(words)

df['review'][1000]

"I watched this movie really late last night and usually if it's late then I'm pretty forgiving of movies. Although I tried, I just could not stand this movie at all, it kept getting worse and worse as the movie went on. Although I know it's suppose to be a comedy but I didn't find it very funny. It was also an especially unrealistic, and jaded portrayal of rural life. In case this is what any of you think country life is like, it's definitely not. I do have to agree that some of the guy cast members were cute, but the french guy was really fake. I do have to agree that it tried to have a good lesson in the story, but overall my recommendation is that no one over 8 watch it, it's just too annoying."

clean_text(df['review'][1000])

'watched movie late night late pretty forgiving movies stand movie worse worse movie suppose comedy didn funny unrealistic jaded portrayal rural life country life agree guy cast cute french guy fake agree lesson story recommendation watch annoying'

清洗数据添加到dataframe里

df['clean_review'] = df.review.apply(clean_text) # clean_review 清洗后的数据

df.head()

| id | sentiment | review | clean_review | |

|---|---|---|---|---|

| 0 | 5814_8 | 1 | With all this stuff going down at the moment w... | stuff moment mj ve started listening music wat... |

| 1 | 2381_9 | 1 | "The Classic War of the Worlds" by Timothy Hin... | classic war worlds timothy hines entertaining ... |

| 2 | 7759_3 | 0 | The film starts with a manager (Nicholas Bell)... | film starts manager nicholas bell investors ro... |

| 3 | 3630_4 | 0 | It must be assumed that those who praised this... | assumed praised film filmed opera didn read do... |

| 4 | 9495_8 | 1 | Superbly trashy and wondrously unpretentious 8... | superbly trashy wondrously unpretentious explo... |

抽取bag of words特征(用sklearn的CountVectorizer)

vectorizer = CountVectorizer(max_features = 5000) # 基于词频排序,选取前 5k 个词,建立 5k 维度的向量。

train_data_features = vectorizer.fit_transform(df.clean_review).toarray() # 将文本数据转换为 词袋模型的特征数据

train_data_features.shape

(25000, 5000)

from sklearn.cross_validation import train_test_split

X_train, X_test, y_train, y_test = train_test_split(train_data_features,df.sentiment,test_size = 0.2, random_state = 0)

C:\Anaconda3\lib\site-packages\sklearn\cross_validation.py:44: DeprecationWarning: This module was deprecated in version 0.18 in favor of the model_selection module into which all the refactored classes and functions are moved. Also note that the interface of the new CV iterators are different from that of this module. This module will be removed in 0.20.

"This module will be removed in 0.20.", DeprecationWarning)

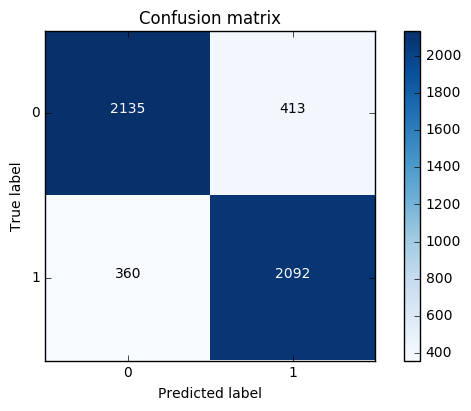

混淆矩阵

可当做模板来使用

import matplotlib.pyplot as plt

import itertools

def plot_confusion_matrix(cm, classes,

title='Confusion matrix',

cmap=plt.cm.Blues):

"""

This function prints and plots the confusion matrix.

"""

plt.imshow(cm, interpolation='nearest', cmap=cmap)

plt.title(title)

plt.colorbar()

tick_marks = np.arange(len(classes))

plt.xticks(tick_marks, classes, rotation=0)

plt.yticks(tick_marks, classes)

thresh = cm.max() / 2.

for i, j in itertools.product(range(cm.shape[0]), range(cm.shape[1])):

plt.text(j, i, cm[i, j],

horizontalalignment="center",

color="white" if cm[i, j] > thresh else "black")

plt.tight_layout()

plt.ylabel('True label')

plt.xlabel('Predicted label')

训练分类器

# 使用逻辑回归来做这个基本分类任务。先不用 word2vec。

LR_model = LogisticRegression()

LR_model = LR_model.fit(X_train, y_train)

y_pred = LR_model.predict(X_test)

cnf_matrix = confusion_matrix(y_test,y_pred)

# 召回率

print("Recall metric in the testing dataset: ", cnf_matrix[1,1]/(cnf_matrix[1,0] + cnf_matrix[1,1]))

# 精度

print("accuracy metric in the testing dataset: ", (cnf_matrix[1,1] + cnf_matrix[0,0])/(cnf_matrix[0,0] + cnf_matrix[1,1]+cnf_matrix[1,0]+cnf_matrix[0,1]))

# Plot non-normalized confusion matrix

class_names = [0,1]

plt.figure()

plot_confusion_matrix(cnf_matrix, classes=class_names, title='Confusion matrix')

plt.show()

Recall metric in the testing dataset: 0.853181076672

accuracy metric in the testing dataset: 0.8454

使用 word2vec

df = pd.read_csv('../data/unlabeledTrainData.tsv', sep='\t', escapechar='\\')

print('Number of reviews: {}'.format(len(df)))

df.head()

Number of reviews: 50000

| id | review | |

|---|---|---|

| 0 | 9999_0 | Watching Time Chasers, it obvious that it was ... |

| 1 | 45057_0 | I saw this film about 20 years ago and remembe... |

| 2 | 15561_0 | Minor Spoilers<br /><br />In New York, Joan Ba... |

| 3 | 7161_0 | I went to see this film with a great deal of e... |

| 4 | 43971_0 | Yes, I agree with everyone on this site this m... |

df['clean_review'] = df.review.apply(clean_text)

df.head()

| id | review | clean_review | |

|---|---|---|---|

| 0 | 9999_0 | Watching Time Chasers, it obvious that it was ... | watching time chasers obvious bunch friends si... |

| 1 | 45057_0 | I saw this film about 20 years ago and remembe... | film ago remember nasty based true incident br... |

| 2 | 15561_0 | Minor Spoilers<br /><br />In New York, Joan Ba... | minor spoilersin york joan barnard elvire audr... |

| 3 | 7161_0 | I went to see this film with a great deal of e... | film deal excitement school director friend mi... |

| 4 | 43971_0 | Yes, I agree with everyone on this site this m... | agree site movie bad call movie insult movies ... |

review_part = df['clean_review']

review_part.shape

(50000,)

import warnings

warnings.filterwarnings("ignore")

tokenizer = nltk.data.load('tokenizers/punkt/english.pickle') # 加载英文分词器;分词,因为 word2vec 是基于词建模。

def split_sentences(review):

raw_sentences = tokenizer.tokenize(review.strip())

sentences = [clean_text(s) for s in raw_sentences if s]

return sentences

sentences = sum(review_part.apply(split_sentences), [])

print('{} reviews -> {} sentences'.format(len(review_part), len(sentences)))

50000 reviews -> 50000 sentences

sentences[0]

'watching time chasers obvious bunch friends sitting day film school hey pool money bad movie bad movie dull story bad script lame acting poor cinematography bottom barrel stock music corners cut prevented film release life'

sentences_list = []

for line in sentences:

sentences_list.append(nltk.word_tokenize(line))

-

sentences:可以是一个list。

-

sg: 用于设置训练算法,默认为0,对应CBOW算法;sg=1则采用skip-gram算法。

-

size:是指特征向量的维度,默认为100。大的size需要更多的训练数据,但是效果会更好. 推荐值为几十到几百。 # 300 以下比较好。

-

window:表示当前词与预测词在一个句子中的最大距离是多少;上下文的滑动窗口。

-

alpha: 是学习速率。

-

seed:用于随机数发生器。与初始化词向量有关。

-

min_count: 可以对字典做截断. 词频少于min_count次数的单词会被丢弃掉, 默认值为5。

-

max_vocab_size: 设置词向量构建期间的RAM限制。如果所有独立单词个数超过这个,则就消除掉其中最不频繁的一个。

每一千万个单词需要大约1GB的RAM。设置成None则没有限制。(很少有一千万个词,设置成 None 就可以了) -

workers:控制训练的并行数。

-

hs:如果为1则会采用hierarchica·softmax技巧(哈弗曼树 或 负采样)。如果设置为0(defau·t),则negative sampling会被使用。

-

negative:如果>0,则会采用 negative sampling,用于设置多少个noise words。

-

iter:迭代次数,默认为5。

# 设定词向量训练的参数

num_features = 300 # Word vector dimensionality 特征维度

min_word_count = 40 # Minimum word count 词频

num_workers = 4 # Number of threads to run in parallel 并行数

context = 10 # Context window size

model_name = '{}features_{}minwords_{}context.model'.format(num_features, min_word_count, context)

使用 gensim 建模

from gensim.models.word2vec import Word2Vec

model = Word2Vec(sentences_list, workers=num_workers, \

size=num_features, min_count = min_word_count, \

window = context)

# If you don't plan to train the model any further, calling

# init_sims will make the model much more memory-efficient.

model.init_sims(replace=True) # 官网推荐的建模方式

# It can be helpful to create a meaningful model name and

# save the model for later use. You can load it later using Word2Vec.load()

model.save(os.path.join('..', 'models', model_name)) # 存储模型

print(model.doesnt_match(['man','woman','child','kitchen'])) # 计算相似度,返回最不相关的词

#print(model.doesnt_match('france england germany berlin'.split())

kitchen

model.most_similar("boy")

[('girl', 0.7018299698829651),

('astro', 0.6647905707359314),

('teenage', 0.6317306160926819),

('frat', 0.60948246717453),

('dad', 0.6011481285095215),

('yr', 0.6010577082633972),

('teenager', 0.5974895358085632),

('brat', 0.5941195487976074),

('joshua', 0.5832049250602722),

('father', 0.5825375914573669)]

model.most_similar("bad")

[('worse', 0.7071679830551147),

('horrible', 0.7065873742103577),

('terrible', 0.6872220635414124),

('sucks', 0.6666240692138672),

('crappy', 0.6634873747825623),

('lousy', 0.6494461297988892),

('horrendous', 0.6371070742607117),

('atrocious', 0.62550288438797),

('suck', 0.6224384307861328),

('awful', 0.619296669960022)]

df = pd.read_csv('../data/labeledTrainData.tsv', sep='\t', escapechar='\\')

df.head()

| id | sentiment | review | |

|---|---|---|---|

| 0 | 5814_8 | 1 | With all this stuff going down at the moment w... |

| 1 | 2381_9 | 1 | "The Classic War of the Worlds" by Timothy Hin... |

| 2 | 7759_3 | 0 | The film starts with a manager (Nicholas Bell)... |

| 3 | 3630_4 | 0 | It must be assumed that those who praised this... |

| 4 | 9495_8 | 1 | Superbly trashy and wondrously unpretentious 8... |

from nltk.corpus import stopwords

eng_stopwords = set(stopwords.words('english'))

def clean_text(text, remove_stopwords=False):

text = BeautifulSoup(text, 'html.parser').get_text()

text = re.sub(r'[^a-zA-Z]', ' ', text)

words = text.lower().split()

if remove_stopwords:

words = [w for w in words if w not in eng_stopwords]

return words

def to_review_vector(review):

global word_vec

review = clean_text(review, remove_stopwords=True)

#print (review)

#words = nltk.word_tokenize(review)

word_vec = np.zeros((1,300))

for word in review:

#word_vec = np.zeros((1,300))

if word in model:

word_vec += np.array([model[word]])

#print (word_vec.mean(axis = 0))

return pd.Series(word_vec.mean(axis = 0))

train_data_features = df.review.apply(to_review_vector)

train_data_features.head()

| 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | ... | 290 | 291 | 292 | 293 | 294 | 295 | 296 | 297 | 298 | 299 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | -0.696664 | 0.903903 | -0.625330 | -1.004056 | 0.304315 | 0.757687 | -0.585106 | 1.063758 | 0.361671 | -1.063279 | ... | -2.586864 | 0.098197 | 1.739277 | -0.427558 | 1.084251 | -2.500275 | 1.844900 | 0.923524 | -0.471286 | -0.478899 |

| 1 | 0.888799 | -0.449773 | 1.340381 | -3.644667 | 2.221354 | -2.437322 | -1.399687 | 0.539550 | 2.563507 | 0.984283 | ... | 0.932557 | 0.607203 | -0.594103 | -0.159929 | -1.501902 | -1.217742 | 0.115345 | 1.562480 | -0.023147 | -0.065639 |

| 2 | 0.589862 | 4.321714 | -0.652215 | 5.326607 | -8.739010 | 0.005590 | 1.371678 | -0.868081 | -1.485593 | -2.200574 | ... | -1.301250 | 0.632217 | 2.265594 | -2.135824 | 2.925084 | -0.933688 | -0.872354 | -0.567039 | -3.797528 | -2.027258 |

| 3 | -1.029406 | -0.387385 | 0.504282 | -1.223156 | -0.733892 | 0.389869 | -1.111555 | -0.703193 | 3.405883 | 0.458893 | ... | -2.293309 | 0.615899 | 0.109569 | 0.809128 | 0.798448 | -1.626398 | 0.340430 | 1.064533 | -0.892466 | -0.318342 |

| 4 | -2.343473 | 2.814057 | -2.822986 | 1.471130 | -4.252637 | 0.117415 | 3.309642 | 0.895924 | -2.021818 | -0.558035 | ... | -2.075477 | 0.950246 | 4.536721 | -1.728554 | 2.433016 | -1.895700 | -0.214796 | 0.251841 | -3.280589 | -3.242330 |

5 rows × 300 columns

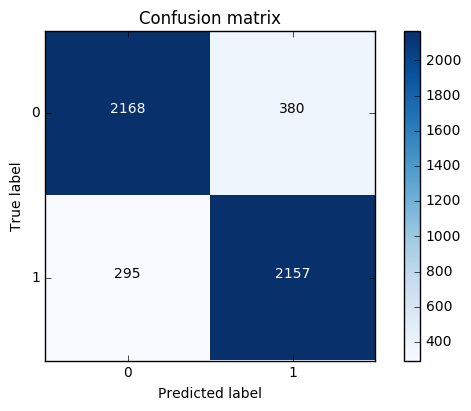

from sklearn.cross_validation import train_test_split

X_train, X_test, y_train, y_test = train_test_split(train_data_features,df.sentiment,test_size = 0.2, random_state = 0)

LR_model = LogisticRegression()

LR_model = LR_model.fit(X_train, y_train)

y_pred = LR_model.predict(X_test)

cnf_matrix = confusion_matrix(y_test,y_pred)

print("Recall metric in the testing dataset: ", cnf_matrix[1,1]/(cnf_matrix[1,0]+cnf_matrix[1,1]))

print("accuracy metric in the testing dataset: ", (cnf_matrix[1,1]+cnf_matrix[0,0])/(cnf_matrix[0,0]+cnf_matrix[1,1]+cnf_matrix[1,0]+cnf_matrix[0,1]))

# Plot non-normalized confusion matrix

class_names = [0,1]

plt.figure()

plot_confusion_matrix(cnf_matrix

, classes=class_names

, title='Confusion matrix')

plt.show()

Recall metric in the testing dataset: 0.87969004894

accuracy metric in the testing dataset: 0.865

浙公网安备 33010602011771号

浙公网安备 33010602011771号