k8s Volumes (Part 5)

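Introduction

A volume is a shared directory in a Pod that can be accessed by multiple containers. The Kubernetes volume concept is similar to Docker's volume in purpose and use, but the two are not equivalent. First, a Kubernetes volume is defined on the Pod and is then mounted by the containers in that Pod at specific paths. Second, a Kubernetes volume has the same lifecycle as the Pod but is independent of container lifecycles, so the data in the volume is not lost when a container terminates or restarts. Finally, Kubernetes supports many volume types, including advanced distributed file systems such as GlusterFS and Ceph.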

emptyDir

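An emptyDir volume is created when the Pod is assigned to a node. As the name suggests, its initial content is empty, and there is no need to specify a directory on the host because Kubernetes allocates one automatically. When the Pod is removed from the node, the data in the emptyDir is deleted permanently.

Typical uses of emptyDir:

- Scratch space, for example a temporary directory that an application needs at runtime and that does not have to be kept

- A temporary directory for saving intermediate checkpoints of a long-running task

- A directory through which one container obtains data from another container (a directory shared by multiple containers)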
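As a minimal sketch of the scratch-space and checkpoint cases, the Pod below writes a file into an emptyDir volume. The Pod name, container name, volume name, and the `/work` path are all hypothetical, and the `sizeLimit` field is optional; treat this as an illustration under those assumptions rather than a recommended layout.

```yaml
# Hypothetical example: every name and path here is illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: scratch-demo
spec:
  containers:
  - name: worker
    image: busybox
    # Write a checkpoint file into the scratch directory, then idle.
    command: ["/bin/sh", "-c", "date > /work/checkpoint && sleep 3600"]
    volumeMounts:
    - name: work
      mountPath: /work
  volumes:
  - name: work
    emptyDir:
      sizeLimit: 1Gi   # optional cap on how much data the volume may hold
```

The checkpoint file survives a restart of the container but is removed together with the Pod.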

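Using emptyDir is straightforward. In most cases we declare a volume on the Pod, then reference that volume in the containers and mount it at a directory inside each one. For example, we define two containers in one Pod, one running nginx and one running busybox, and define a shared volume on the Pod. Both containers should be able to see its contents.

Note that the volume name referenced in each container's volumeMounts must match the name defined under volumes (share in this example).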

    [root@master ~]# cat test.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: serivce-mynginx
      namespace: default
    spec:
      type: NodePort
      selector:
        app: mynginx
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 30080
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: deploy
      namespace: default
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: mynginx
      template:
        metadata:
          labels:
            app: mynginx
        spec:
          containers:
          - name: mynginx
            image: lizhaoqwe/nginx:v1
            volumeMounts:
            - mountPath: /usr/share/nginx/html/
              name: share
            ports:
            - name: nginx
              containerPort: 80
          - name: busybox
            image: busybox
            command:
            - "/bin/sh"
            - "-c"
            - "sleep 4444"
            volumeMounts:
            - mountPath: /data/
              name: share
          volumes:
          - name: share
            emptyDir: {}

Create the Pod

    [root@master ~]# kubectl create -f test.yaml

View the Pod

    [root@master ~]# kubectl get pods
    NAME                      READY   STATUS    RESTARTS   AGE
    deploy-5cd657dd46-sx287   2/2     Running   0          2m1s

View the service

    [root@master ~]# kubectl get svc
    NAME              TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
    kubernetes        ClusterIP   10.96.0.1      <none>        443/TCP        6d10h
    serivce-mynginx   NodePort    10.99.110.43   <none>        80:30080/TCP   2m27s

We enter the busybox container and create an index.html

    [root@master ~]# kubectl exec -it deploy-5cd657dd46-sx287 -c busybox -- /bin/sh

Inside the container:

    /data # cd /data
    /data # echo "fengzi" > index.html

Open a browser to verify

Then check in the nginx container (named mynginx in the manifest) whether the index.html file is there

    [root@master ~]# kubectl exec -it deploy-5cd657dd46-sx287 -c mynginx -- /bin/sh

Inside the container:

    # cd /usr/share/nginx/html
    # ls -ltr
    total 4
    -rw-r--r-- 1 root root 7 Sep 9 17:06 index.html

OK, this shows that the file we wrote in busybox is visible to nginx!

hostPath

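hostPath mounts a file or directory from the host into the Pod. It is typically used in the following cases:

- When log files generated by a containerized application must be kept permanently, they can be stored on the host's high-speed file system

- When a containerized application needs access to the internals of the Docker engine on the host, hostPath can be set to the host's /var/lib/docker directory so that the application inside the container can access Docker's file system directly

Keep the following in mind when using this type of volume:

- Pods with identical configuration on different nodes may see different results when accessing directories and files on the volume, because the directories and files on each host can differ

- If resource quotas are used, Kubernetes cannot account for the resources that hostPath consumes on the host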
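One way to avoid the first caveat is to pin the Pod to a single node so that it always sees the same host directory. The sketch below assumes a node whose hostname label is `node1` and a host directory `/mydata`; the Pod and volume names are hypothetical. It illustrates the idea and is not the only way to handle node-local data.

```yaml
# Hypothetical example: Pod name, volume name and node name are assumptions.
apiVersion: v1
kind: Pod
metadata:
  name: hostpath-pinned
spec:
  nodeSelector:
    kubernetes.io/hostname: node1   # schedule only onto the node with this hostname label
  containers:
  - name: web
    image: nginx
    volumeMounts:
    - name: data
      mountPath: /usr/share/nginx/html
  volumes:
  - name: data
    hostPath:
      path: /mydata
      type: DirectoryOrCreate
```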

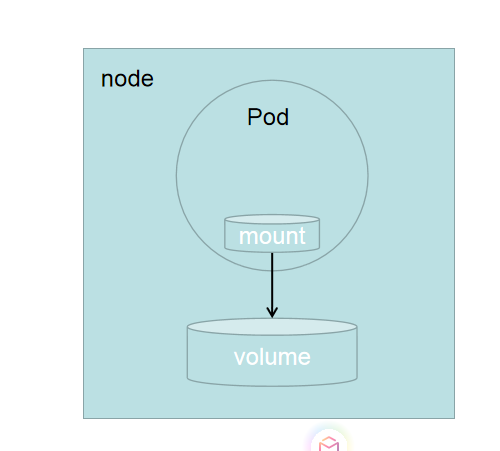

Architecture of a hostPath volume

Now let's define a hostPath volume and see how it behaves:

    [root@master ~]# cat test.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-deploy
      namespace: default
    spec:
      selector:
        app: mynginx
      type: NodePort
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 31111
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mydeploy
      namespace: default
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: mynginx
      template:
        metadata:
          name: web
          labels:
            app: mynginx
        spec:
          containers:
          - name: mycontainer
            image: lizhaoqwe/nginx:v1
            volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: persistent-storage
            ports:
            - containerPort: 80
          volumes:
          - name: persistent-storage
            hostPath:
              type: DirectoryOrCreate
              path: /mydata

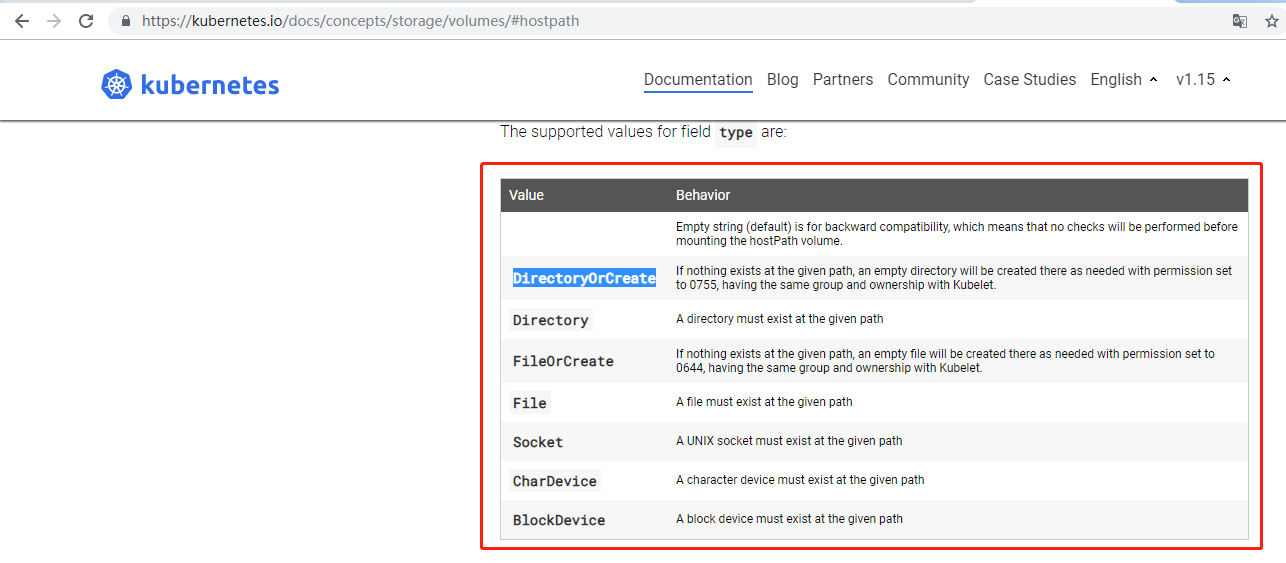

Pay attention to the type field under hostPath. Its help text is as follows

    [root@master data]# kubectl explain deploy.spec.template.spec.volumes.hostPath.type
    KIND:     Deployment
    VERSION:  extensions/v1beta1
    FIELD:    type <string>
    DESCRIPTION:
         Type for HostPath Volume Defaults to "" More info:
         https://kubernetes.io/docs/concepts/storage/volumes#hostpath

The help text does not say much, but it points to a reference page, so we open that page

The page lists the values that type accepts. They are not explained one by one here; in this example we use DirectoryOrCreate
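For orientation, the fragment below sketches how different type values might be used side by side. It is a fragment of a Pod spec, not a complete manifest; the volume names and the `/var/run/docker.sock` path are assumptions chosen for illustration.

```yaml
# Fragment of a Pod spec (spec.volumes); names and paths are illustrative.
volumes:
- name: data-dir
  hostPath:
    path: /mydata
    type: DirectoryOrCreate   # create an empty directory if nothing exists at the path
- name: docker-sock
  hostPath:
    path: /var/run/docker.sock
    type: Socket              # a UNIX socket must already exist at the path
# Other accepted values: "" (the default, no check is performed),
# Directory, FileOrCreate, File, CharDevice, BlockDevice
```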

Apply the yaml file

    [root@master ~]# kubectl create -f test.yaml
    service/nginx-deploy created
    deployment.apps/mydeploy created

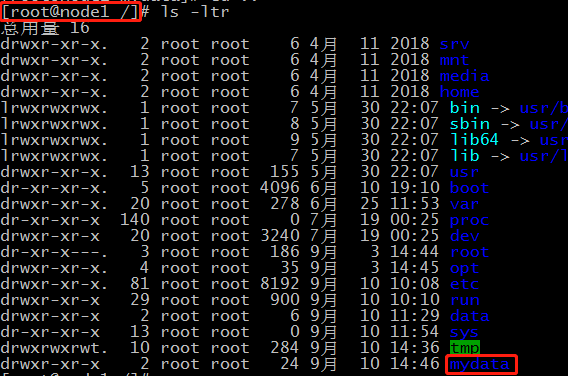

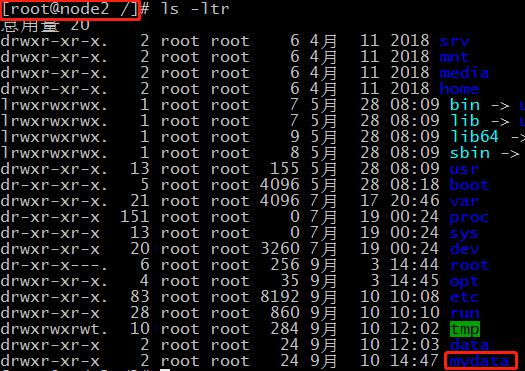

Then we can check on both nodes whether the /mydata directory exists

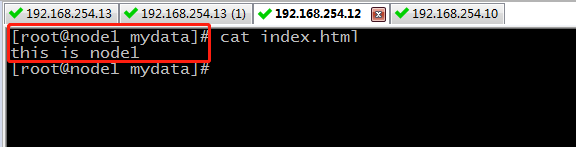

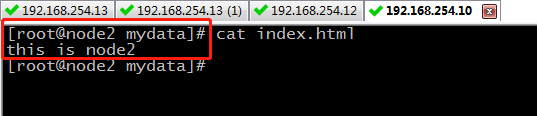

The mydata directory has been created on both nodes. Next we write some content into it

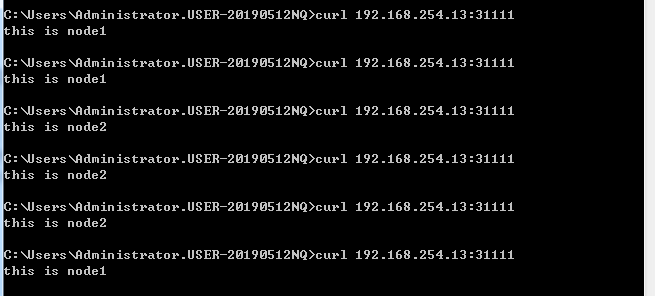

Content has been written on both nodes, so now we can verify

Access works, and requests are load balanced across the two nodes

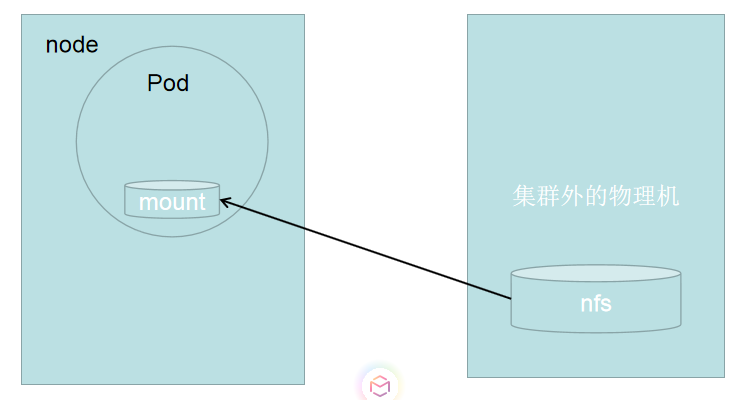

NFS

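NFS is probably familiar to most readers, so NFS itself is not explained here; this section only shows how to mount an NFS file system in a k8s cluster

Architecture diagram of an NFS-backed volume

Start another virtual machine outside the cluster and install the nfs-utils package on it

Note: the nfs-utils package must also be installed on every node in the cluster, otherwise the mount will fail!

    [root@master mnt]# yum install nfs-utils
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.cn99.com
     * extras: mirrors.cn99.com
     * updates: mirrors.cn99.com
    Package 1:nfs-utils-1.3.0-0.61.el7.x86_64 already installed and latest version
    Nothing to do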

Edit /etc/exports and add the following line

    [root@localhost share]# vim /etc/exports
    /share 192.168.254.0/24(insecure,rw,no_root_squash)

Restart the nfs service

    [root@localhost share]# service nfs restart
    Redirecting to /bin/systemctl restart nfs.service

Create an index.html file in the /share directory and write some content into it

    [root@localhost share]# echo "nfs server" > /share/index.html

On the master node of the kubernetes cluster, create a yaml file with the following content

    [root@master ~]# cat test.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-deploy
      namespace: default
    spec:
      selector:
        app: mynginx
      type: NodePort
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 31111
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mydeploy
      namespace: default
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: mynginx
      template:
        metadata:
          name: web
          labels:
            app: mynginx
        spec:
          containers:
          - name: mycontainer
            image: lizhaoqwe/nginx:v1
            volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: nfs
            ports:
            - containerPort: 80
          volumes:
          - name: nfs
            nfs:
              server: 192.168.254.11   # NFS server address
              path: /share             # directory exported by the NFS server

Apply the yaml file

    [root@master ~]# kubectl create -f test.yaml
    service/nginx-deploy created
    deployment.apps/mydeploy created

Verify

OK, it works!

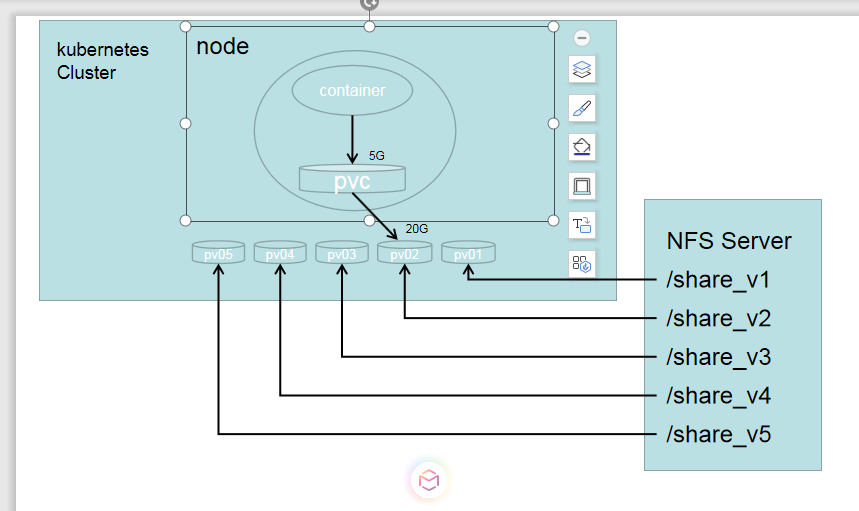

pvc

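The volumes above are defined on the Pod and are part of its compute resources. In practice, network storage is an entity that exists relatively independently of compute resources. With virtual machines, for example, we usually define a network storage pool first, then carve out a disk from it and attach it to the VM. Persistent Volume (pv) and the associated Persistent Volume Claim (pvc) play a similar role

A pv can be understood as a piece of storage that corresponds to some network storage in the kubernetes cluster. It is similar to a Volume, with the following differences:

- A pv is typically backed by network storage; it does not belong to any Node but can be accessed from every Node

- A pv is not defined on a Pod; it is defined independently of Pods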

On the NFS server, create the directories to be exported

    [root@localhost /]# mkdir /share_v{1,2,3,4,5}

Then add the export entries for these directories and restart the nfs service

    [root@localhost ~]# cat /etc/exports
    /share_v1 192.168.254.0/24(insecure,rw,no_root_squash)
    /share_v2 192.168.254.0/24(insecure,rw,no_root_squash)
    /share_v3 192.168.254.0/24(insecure,rw,no_root_squash)
    /share_v4 192.168.254.0/24(insecure,rw,no_root_squash)
    /share_v5 192.168.254.0/24(insecure,rw,no_root_squash)

    [root@localhost ~]# service nfs restart

On the master node of the kubernetes cluster, create the pvs. Here I create 5 pvs, one for each of the 5 directories exported by the NFS server

    [root@master ~]# cat createpv.yaml
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv01
    spec:
      nfs:                       # storage type
        path: /share_v1          # directory on the NFS server to mount
        server: 192.168.254.11   # NFS server address; a hostname also works if it can be resolved
      # Access modes:
      #   ReadWriteMany: read-write, can be mounted by multiple Nodes
      #   ReadWriteOnce: read-write, can be mounted by a single Node only
      #   ReadOnlyMany:  read-only, can be mounted by multiple Nodes
      accessModes:
      - ReadWriteMany
      - ReadWriteOnce
      capacity:                  # storage capacity
        storage: 10Gi            # this pv provides 10Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv02
    spec:
      nfs:
        path: /share_v2
        server: 192.168.254.11
      accessModes:
      - ReadWriteMany
      capacity:
        storage: 20Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv03
    spec:
      nfs:
        path: /share_v3
        server: 192.168.254.11
      accessModes:
      - ReadWriteMany
      - ReadWriteOnce
      capacity:
        storage: 30Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv04
    spec:
      nfs:
        path: /share_v4
        server: 192.168.254.11
      accessModes:
      - ReadWriteMany
      - ReadWriteOnce
      capacity:
        storage: 40Gi
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv05
    spec:
      nfs:
        path: /share_v5
        server: 192.168.254.11
      accessModes:
      - ReadWriteMany
      - ReadWriteOnce
      capacity:
        storage: 50Gi

Apply the yaml file

    [root@master ~]# kubectl create -f createpv.yaml
    persistentvolume/pv01 created
    persistentvolume/pv02 created
    persistentvolume/pv03 created
    persistentvolume/pv04 created
    persistentvolume/pv05 created

View the pvs

    [root@master ~]# kubectl get pv
    NAME   CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
    pv01   10Gi       RWO,RWX        Retain           Available                                   5m10s
    pv02   20Gi       RWX            Retain           Available                                   5m10s
    pv03   30Gi       RWO,RWX        Retain           Available                                   5m9s
    pv04   40Gi       RWO,RWX        Retain           Available                                   5m9s
    pv05   50Gi       RWO,RWX        Retain           Available                                   5m9s

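What the columns mean:

ACCESS MODES:

- RWO: ReadWriteOnce

- RWX: ReadWriteMany

- ROX: ReadOnlyMany

RECLAIM POLICY:

- Retain: keep the pv released by the pvc together with the data on it; the pv will not be bound by another pvc

- Recycle: keep the pv but wipe its data

- Delete: delete the pv released by the pvc along with the backing storage volume

STATUS:

- Available: idle, not yet bound

- Bound: already bound to a pvc

- Released: the corresponding pvc has been deleted, but the resource has not yet been reclaimed by the cluster

- Failed: automatic reclamation of the pv failed

CLAIM:

The pvc that the pv is bound to, in the format NAMESPACE/PVC_NAME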
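The reclaim policy shown in the RECLAIM POLICY column can also be set explicitly in the manifest through the `persistentVolumeReclaimPolicy` field. The sketch below is a hypothetical pv: the name `pv-retain-demo` and the export path `/share_demo` are assumptions, while the server address is the one used in this article.

```yaml
# Hypothetical example: pv name and export path are illustrative.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-retain-demo
spec:
  capacity:
    storage: 10Gi
  accessModes:
  - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain   # keep the pv and its data after the pvc is deleted
  nfs:
    server: 192.168.254.11
    path: /share_demo
```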

With the pvs in place, we can create the pvc (note that the field name is accessModes, plural)

    [root@master ~]# cat test.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-deploy
      namespace: default
    spec:
      selector:
        app: mynginx
      type: NodePort
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 31111
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mydeploy
      namespace: default
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: mynginx
      template:
        metadata:
          name: web
          labels:
            app: mynginx
        spec:
          containers:
          - name: mycontainer
            image: nginx
            volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: html
            ports:
            - containerPort: 80
          volumes:
          - name: html
            persistentVolumeClaim:
              claimName: mypvc
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: mypvc
      namespace: default
    spec:
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 5Gi

Apply the yaml file

    [root@master ~]# kubectl create -f test.yaml
    service/nginx-deploy created
    deployment.apps/mydeploy created
    persistentvolumeclaim/mypvc created

Check the pvs again; pv02 now shows as bound to the pvc

    [root@master ~]# kubectl get pv
    NAME   CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM           STORAGECLASS   REASON   AGE
    pv01   10Gi       RWO,RWX        Retain           Available                                           22m
    pv02   20Gi       RWX            Retain           Bound       default/mypvc                           22m
    pv03   30Gi       RWO,RWX        Retain           Available                                           22m
    pv04   40Gi       RWO,RWX        Retain           Available                                           22m
    pv05   50Gi       RWO,RWX        Retain           Available                                           22m

View the pvc

    [root@master ~]# kubectl get pvc
    NAME    STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    mypvc   Bound    pv02     20Gi       RWX                           113s
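The binding above was chosen by the cluster. If a claim should bind to one specific pv instead, the `volumeName` field can name it. The sketch below is an assumption-laden illustration: the claim name `mypvc-pinned` is hypothetical, and `pv01` is simply one of the pvs created earlier.

```yaml
# Hypothetical example: the claim name is illustrative.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mypvc-pinned
  namespace: default
spec:
  volumeName: pv01         # request this particular pv instead of letting the cluster choose
  storageClassName: ""     # match pvs that have no storage class, as in this article
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 5Gi
```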

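Verify

On the NFS server, go to the directory that backs the bound pv (pv02 maps to /share_v2) and run the following command

    [root@localhost share_v2]# echo 'test pvc' > index.html

Then open a browser

OK, it works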

configMap

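A best practice in application deployment is to separate the configuration an application needs from the program itself. This makes the application easier to reuse, and different configurations allow more flexible behavior. Once an application is packaged as a container image, configuration can be injected when the container is created, either through environment variables or through mounted files. In a large container cluster, however, configuring many containers differently becomes very complex. Starting with Kubernetes 1.2, a unified mechanism for application configuration management is provided: ConfigMap

Typical ways a container consumes a ConfigMap:

- As environment variables inside the container

- As arguments to the container's start command (the values must first be set as environment variables)

- Mounted as files or directories inside the container in the form of a volume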

Injecting variables from a configMap into a pod

For example, we use a configmap to create two variables, nginx_port=80 and nginx_server=192.168.254.13

    [root@master ~]# kubectl create configmap nginx-var --from-literal=nginx_port=80 --from-literal=nginx_server=192.168.254.13
    configmap/nginx-var created

View the configmap

    [root@master ~]# kubectl get cm
    NAME        DATA   AGE
    nginx-var   2      5s
    [root@master ~]# kubectl describe cm nginx-var
    Name:         nginx-var
    Namespace:    default
    Labels:       <none>
    Annotations:  <none>
    Data
    ====
    nginx_port:
    ----
    80
    nginx_server:
    ----
    192.168.254.13
    Events:  <none>
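The same configmap could also be written declaratively and applied with kubectl. The manifest below is a sketch of what that might look like for the two keys created above; it is not taken from the original setup. ConfigMap values must be strings, which is why the port is quoted.

```yaml
# Sketch of a declarative equivalent of the kubectl create configmap command.
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-var
  namespace: default
data:
  nginx_port: "80"                # quoted so that YAML treats it as a string
  nginx_server: 192.168.254.13
```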

Then we create the pod and inject these 2 variables into its environment

    [root@master ~]# cat test2.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: service-nginx
      namespace: default
    spec:
      type: NodePort
      selector:
        app: nginx
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 30080
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mydeploy
      namespace: default
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          name: web
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            ports:
            - name: nginx
              containerPort: 80
            volumeMounts:
            - name: html
              mountPath: /usr/share/nginx/html/
            env:
            - name: TEST_PORT
              valueFrom:
                configMapKeyRef:
                  name: nginx-var
                  key: nginx_port
            - name: TEST_HOST
              valueFrom:
                configMapKeyRef:
                  name: nginx-var
                  key: nginx_server
          volumes:
          - name: html
            emptyDir: {}

Apply the file

    [root@master ~]# kubectl create -f test2.yaml
    service/service-nginx created

View the pods

    [root@master ~]# kubectl get pods
    NAME                       READY   STATUS    RESTARTS   AGE
    mydeploy-d975ff774-fzv7g   1/1     Running   0          19s
    mydeploy-d975ff774-nmmqt   1/1     Running   0          19s

Enter the container and look at the environment variables

    [root@master ~]# kubectl exec -it mydeploy-d975ff774-fzv7g -- /bin/sh
    # printenv
    SERVICE_NGINX_PORT_80_TCP_PORT=80
    KUBERNETES_PORT=tcp://10.96.0.1:443
    SERVICE_NGINX_PORT_80_TCP_PROTO=tcp
    KUBERNETES_SERVICE_PORT=443
    HOSTNAME=mydeploy-d975ff774-fzv7g
    SERVICE_NGINX_SERVICE_PORT_NGINX=80
    HOME=/root
    PKG_RELEASE=1~buster
    SERVICE_NGINX_PORT_80_TCP=tcp://10.99.184.186:80
    TEST_HOST=192.168.254.13
    TEST_PORT=80
    TERM=xterm
    KUBERNETES_PORT_443_TCP_ADDR=10.96.0.1
    NGINX_VERSION=1.17.3
    PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
    KUBERNETES_PORT_443_TCP_PORT=443
    NJS_VERSION=0.3.5
    KUBERNETES_PORT_443_TCP_PROTO=tcp
    SERVICE_NGINX_SERVICE_HOST=10.99.184.186
    SERVICE_NGINX_PORT=tcp://10.99.184.186:80
    SERVICE_NGINX_SERVICE_PORT=80
    KUBERNETES_SERVICE_PORT_HTTPS=443
    KUBERNETES_PORT_443_TCP=tcp://10.96.0.1:443
    KUBERNETES_SERVICE_HOST=10.96.0.1
    PWD=/
    SERVICE_NGINX_PORT_80_TCP_ADDR=10.99.184.186

The values from the configMap (TEST_HOST and TEST_PORT) have been injected into the pod's container as environment variables

Note that with this environment-variable injection, changing the configMap after the pod has started has no effect on the pod. When the configMap is mounted as a volume, the files inside the pod are updated after a short delay. Keep this difference in mind
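When every key of a configMap should become an environment variable, listing each one with configMapKeyRef gets repetitive. A possible alternative is `envFrom`, sketched below with a hypothetical Pod name. With this form the variable names are the configMap keys themselves (nginx_port and nginx_server here), and the same caveat applies: the values are read once when the container starts.

```yaml
# Hypothetical example: the Pod name is illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: envfrom-demo
  namespace: default
spec:
  containers:
  - name: nginx
    image: nginx
    envFrom:
    - configMapRef:
        name: nginx-var   # import every key of this configMap as an environment variable
```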

Mounting a configMap into a pod as a volume

As mentioned above, a configMap can be injected into a pod as environment variables, but the pod does not pick up later changes to those variables. To keep the configuration inside the pod in sync with the configMap, mount it as a volume

    [root@master ~]# cat test2.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: service-nginx
      namespace: default
    spec:
      type: NodePort
      selector:
        app: nginx
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 30080
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mydeploy
      namespace: default
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          name: web
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            ports:
            - name: nginx
              containerPort: 80
            volumeMounts:
            - name: html-config
              mountPath: /nginx/vars/
              readOnly: true
          volumes:
          - name: html-config
            configMap:
              name: nginx-var

Apply the yaml file

    [root@master ~]# kubectl create -f test2.yaml
    service/service-nginx created
    deployment.apps/mydeploy created

View the pods

    [root@master ~]# kubectl get pods
    NAME                        READY   STATUS    RESTARTS   AGE
    mydeploy-6f6b6c8d9d-pfzjs   1/1     Running   0          90s
    mydeploy-6f6b6c8d9d-r9rz4   1/1     Running   0          90s

Enter the container

    [root@master ~]# kubectl exec -it mydeploy-6f6b6c8d9d-pfzjs -- /bin/bash

Inside the container, look at the files that correspond to the configMap

    root@mydeploy-6f6b6c8d9d-pfzjs:/# cd /nginx/vars
    root@mydeploy-6f6b6c8d9d-pfzjs:/nginx/vars# ls
    nginx_port  nginx_server
    root@mydeploy-6f6b6c8d9d-pfzjs:/nginx/vars# cat nginx_port
    80
    root@mydeploy-6f6b6c8d9d-pfzjs:/nginx/vars#

Modify the configMap and change the port from 80 to 8080

    [root@master ~]# kubectl edit cm nginx-var
    # Please edit the object below. Lines beginning with a '#' will be ignored,
    # and an empty file will abort the edit. If an error occurs while saving this file will be
    # reopened with the relevant failures.
    #
    apiVersion: v1
    data:
      nginx_port: "8080"
      nginx_server: 192.168.254.13
    kind: ConfigMap
    metadata:
      creationTimestamp: "2019-09-13T14:22:20Z"
      name: nginx-var
      namespace: default
      resourceVersion: "248779"
      selfLink: /api/v1/namespaces/default/configmaps/nginx-var
      uid: dfce8730-f028-4c57-b497-89b8f1854630

After saving, wait a moment and check the value in the file; it has been updated to 8080

    root@mydeploy-6f6b6c8d9d-pfzjs:/nginx/vars# cat nginx_port
    8080
    root@mydeploy-6f6b6c8d9d-pfzjs:/nginx/vars#
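By default every key of the configMap becomes a file named after the key. If only some keys are wanted, or a different file name is preferred, the `items` list can select them. The fragment below is a sketch of a Pod spec's volumes section; the file name `port.txt` is a hypothetical choice.

```yaml
# Fragment of a Pod spec (spec.volumes); the target file name is illustrative.
volumes:
- name: html-config
  configMap:
    name: nginx-var
    items:
    - key: nginx_port    # only this key is projected into the volume
      path: port.txt     # it appears as <mountPath>/port.txt
```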

Injecting a configuration file into a pod through a configMap

We use an nginx configuration file as the example: write the nginx configuration file on the host, create a configmap from it, and then inject it into the container through the configmap

Create the nginx configuration file

    [root@master ~]# vim www.conf
    server {
        server_name 192.168.254.13;
        listen 80;
        root /data/web/html/;
    }

Create the configMap

    [root@master ~]# kubectl create configmap nginx-config --from-file=/root/www.conf
    configmap/nginx-config created

View the configMaps

    [root@master ~]# kubectl get cm
    NAME           DATA   AGE
    nginx-config   1      3m3s
    nginx-var      2      63m

Create the pod and mount the configMap as a volume

    [root@master ~]# cat test2.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: service-nginx
      namespace: default
    spec:
      type: NodePort
      selector:
        app: nginx
      ports:
      - name: nginx
        port: 80
        targetPort: 80
        nodePort: 30080
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mydeploy
      namespace: default
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          name: web
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            ports:
            - name: nginx
              containerPort: 80
            volumeMounts:
            - name: html-config
              mountPath: /etc/nginx/conf.d/
              readOnly: true
          volumes:
          - name: html-config
            configMap:
              name: nginx-config

Start the container so that it loads the configuration from the configMap at startup

    [root@master ~]# kubectl create -f test2.yaml
    service/service-nginx created
    deployment.apps/mydeploy created

View the container

    [root@master ~]# kubectl get pods -o wide
    NAME                       READY   STATUS    RESTARTS   AGE   IP            NODE    NOMINATED NODE   READINESS GATES
    mydeploy-fd46f76d6-jkq52   1/1     Running   0          22s   10.244.1.46   node1   <none>           <none>

Access the web page in the container: port 80 works, port 8888 is unreachable

    [root@master ~]# curl 10.244.1.46
    this is test web
    [root@master ~]# curl 10.244.1.46:8888
    curl: (7) Failed connect to 10.244.1.46:8888; Connection refused

Next we modify the configMap and change port 80 to 8888

    [root@master ~]# kubectl edit cm nginx-config
    # Please edit the object below. Lines beginning with a '#' will be ignored,
    # and an empty file will abort the edit. If an error occurs while saving this file will be
    # reopened with the relevant failures.
    #
    apiVersion: v1
    data:
      www.conf: |
        server {
            server_name 192.168.254.13;
            listen 8888;
            root /data/web/html/;
        }
    kind: ConfigMap
    metadata:
      creationTimestamp: "2019-09-13T15:22:22Z"
      name: nginx-config
      namespace: default
      resourceVersion: "252615"
      selfLink: /api/v1/namespaces/default/configmaps/nginx-config
      uid: f1881f87-5a91-4b8e-ab39-11a2f45733c2

Enter the container and check the configuration file; it has been updated

    root@mydeploy-fd46f76d6-jkq52:/usr/bin# cat /etc/nginx/conf.d/www.conf
    server {
        server_name 192.168.254.13;
        listen 8888;
        root /data/web/html/;
    }

Test access again: port 8888 still fails. The configuration file has changed, but the nginx process has not reloaded it. We reload it manually here; later this step could be automated with a script

    [root@master ~]# curl 10.244.1.46
    this is test web
    [root@master ~]# curl 10.244.1.46:8888
    curl: (7) Failed connect to 10.244.1.46:8888; Connection refused

Reload the configuration manually inside the container

    root@mydeploy-fd46f76d6-jkq52:/usr/bin# nginx -s reload
    2019/09/13 16:04:12 [notice] 34#34: signal process started

Test access once more: port 80 is no longer reachable, while port 8888, which we just configured, now works

    [root@master ~]# curl 10.244.1.46
    curl: (7) Failed connect to 10.244.1.46:80; Connection refused
    [root@master ~]# curl 10.244.1.46:8888
    this is test web

secret

A secret works much like a configmap, but its main purpose is to hold sensitive data such as passwords and keys. Putting such data in a secret object is safer than putting it directly in a pod or an image, and it is also easier to distribute

    [root@master k8s-prom]# kubectl create secret generic mysql-root-password --from-literal=password=abc123
    secret/mysql-root-password created
    [root@master k8s-prom]# kubectl get secret
    NAME                  TYPE                                  DATA   AGE
    default-token-b7c2k   kubernetes.io/service-account-token   3      22h
    mysql-root-password   Opaque                                1      4s

Describe the secret. The value is not displayed, only its size. Note that the stored value is only base64-encoded, not encrypted, so the password can still be recovered

    [root@master k8s-prom]# kubectl describe secret mysql-root-password
    Name:         mysql-root-password
    Namespace:    default
    Labels:       <none>
    Annotations:  <none>
    Type:  Opaque
    Data
    ====
    password:  6 bytes

View it in yaml format

    [root@master k8s-prom]# kubectl get secret mysql-root-password -o yaml
    apiVersion: v1
    data:
      password: YWJjMTIz
    kind: Secret
    metadata:
      creationTimestamp: "2020-03-14T15:14:07Z"
      name: mysql-root-password
      namespace: default
      resourceVersion: "63698"
      selfLink: /api/v1/namespaces/default/secrets/mysql-root-password
      uid: 25b5f01f-47e8-4c14-9b46-ce6dc2154a31
    type: Opaque

We can decode it with base64

    [root@master k8s-prom]# echo YWJjMTIz | base64 -d
    abc123
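For reference, a secret like this one could also be declared in a manifest instead of being created with kubectl create secret. The sketch below reuses the name and the base64 value shown in the output above; it illustrates the declarative form and is not part of the original setup.

```yaml
# Sketch of a declarative equivalent of the kubectl create secret command.
apiVersion: v1
kind: Secret
metadata:
  name: mysql-root-password
  namespace: default
type: Opaque
data:
  password: YWJjMTIz   # base64 encoding of abc123, as in the kubectl output
```

Because the value is only encoded, a manifest like this should be handled as carefully as the plaintext password.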

To load the secret into a pod, it can be passed in through an environment variable

    [root@master k8s-prom]# vim pod.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: mypod
      namespace: default
    spec:
      containers:
      - name: mycontainer1
        image: liwang7314/myapp:v1
        imagePullPolicy: IfNotPresent
        env:
        - name: MYSQL-ROOT-PASSWORD
          valueFrom:
            secretKeyRef:
              name: mysql-root-password
              key: password

    [root@master k8s-prom]# kubectl create -f pod.yaml
    pod/mypod created
    [root@master k8s-prom]# kubectl get pods
    NAME                     READY   STATUS              RESTARTS   AGE
    myapp-54fbc848b4-7lf9m   1/1     Running             0          45m
    mypod                    0/1     ContainerCreating   0          4s

Connect to the pod and look at the environment variables; here the password appears in plaintext

    [root@master k8s-prom]# kubectl exec -it mypod -- /bin/sh
    / #
    / # printenv
    KUBERNETES_SERVICE_PORT=443
    KUBERNETES_PORT=tcp://10.96.0.1:443
    MYAPP_SVC_PORT_80_TCP_ADDR=10.107.71.174
    MYAPP_SVC_PORT_80_TCP_PORT=80
    HOSTNAME=mypod
    SHLVL=1
    MYAPP_SVC_PORT_80_TCP_PROTO=tcp
    HOME=/root
    MYAPP_SERVICE_HOST=10.96.7.12
    MYAPP_SVC_PORT_80_TCP=tcp://10.107.71.174:80
    MYAPP_PORT=tcp://10.96.7.12:80
    MYAPP_SERVICE_PORT=80
    TERM=xterm
    NGINX_VERSION=1.12.2
    KUBERNETES_PORT_443_TCP_ADDR=10.96.0.1
    MYAPP_PORT_80_TCP_ADDR=10.96.7.12
    PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
    KUBERNETES_PORT_443_TCP_PORT=443
    KUBERNETES_PORT_443_TCP_PROTO=tcp
    MYAPP_PORT_80_TCP_PORT=80
    MYAPP_PORT_80_TCP_PROTO=tcp
    MYAPP_SVC_SERVICE_HOST=10.107.71.174
    MYSQL-ROOT-PASSWORD=abc123
    KUBERNETES_SERVICE_PORT_HTTPS=443
    KUBERNETES_PORT_443_TCP=tcp://10.96.0.1:443
    PWD=/
    KUBERNETES_SERVICE_HOST=10.96.0.1
    MYAPP_PORT_80_TCP=tcp://10.96.7.12:80
    MYAPP_SVC_SERVICE_PORT=80
    MYAPP_SVC_PORT=tcp://10.107.71.174:80
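Like a configMap, a secret can also be mounted as a volume, in which case each key becomes a file. The sketch below is a hypothetical variant of the pod above: the Pod name, the volume name, and the `/etc/mysql-secret` mount path are assumptions.

```yaml
# Hypothetical example: Pod name, volume name and mount path are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: mypod-secret-volume
  namespace: default
spec:
  containers:
  - name: mycontainer1
    image: liwang7314/myapp:v1
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: mysql-secret
      mountPath: /etc/mysql-secret
      readOnly: true
  volumes:
  - name: mysql-secret
    secret:
      secretName: mysql-root-password   # the key "password" becomes the file /etc/mysql-secret/password
```

The file holds the decoded value, so it is as sensitive as the environment variable shown above.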
