Ceph Cluster Failure Recovery

Cluster layout

master1 172.16.230.21

master2 172.16.230.22

master3 172.16.230.23

node1 172.16.230.26

node2 172.16.230.27

node3 172.16.230.28

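I. Simulating a monitor outage

2. Test removing a monitor node by shutting down master3

With master3 down, the recovery procedure is: remove the master3 entries from the configuration file, sync ceph.conf to the other nodes, and then remove master3 from the cluster with a command.

3. Edit ceph.conf and remove the master3 monitor entries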

[root@master1 cluster-ceph]# cd /opt/cluster-ceph/

Remove master3 from mon_initial_members and 172.16.230.23 from mon_host. After the edit, ceph.conf reads:

[global]

fsid = 574c4cb4-50f8-4d80-a61e-25eadd0c567d

mon_initial_members = master1, master2

mon_host = 172.16.230.21,172.16.230.22

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

public_network = 172.16.230.0/24

osd_pool_default_size = 2

mon_pg_warn_max_per_osd = 1000

osd pool default pg num = 256

osd pool default pgp num = 256

mon clock drift allowed = 2

mon clock drift warn backoff = 30
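The manual edit to mon_initial_members and mon_host could also be scripted. The following is a minimal sketch, not part of the original procedure: the function name `drop_mon` and the output file name are hypothetical, and it assumes both settings sit on a single line as comma-separated lists, as in the file shown here. It prints the edited file to stdout and leaves the original untouched, so the result can be reviewed before it replaces ceph.conf.

```shell
# drop_mon CONF NAME IP
# Print CONF with monitor NAME removed from mon_initial_members and IP
# removed from mon_host. Every other line is passed through unchanged.
# (Hypothetical helper; assumes single-line, comma-separated values.)
drop_mon() {
    awk -v name="$2" -v ip="$3" '
        /^[ \t]*mon[_ ](initial[_ ]members|host)[ \t]*=/ {
            key = $0; sub(/[ \t]*=.*/, "", key)     # text left of "="
            val = $0; sub(/^[^=]*=[ \t]*/, "", val) # text right of "="
            sub(/[ \t]+$/, "", val)                 # trim trailing blanks
            n = split(val, items, /[ \t]*,[ \t]*/)
            out = ""
            for (i = 1; i <= n; i++) {
                if (items[i] == name || items[i] == ip) continue
                out = (out == "" ? items[i] : out ", " items[i])
            }
            print key " = " out
            next
        }
        { print }
    ' "$1"
}

# Example (run from /opt/cluster-ceph, then review before replacing the file):
#   drop_mon ceph.conf master3 172.16.230.23 > ceph.conf.new
#   diff ceph.conf ceph.conf.new
```

Writing to a separate file rather than editing in place keeps the original available if the result is not what was expected.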

4. Sync ceph.conf to the other nodes

ceph-deploy --overwrite-conf admin master1 master2 node1 node2 node3

5. Remove the node with the remove command

[root@master1 cluster-ceph]# ceph mon remove master3

removing mon.master3 at 172.16.230.23:6789/0, there will be 2 monitors

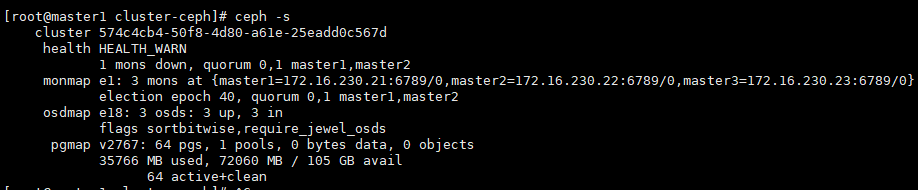

6. Check the Ceph cluster status
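The original does not show the command used for this check. One plausible way, sketched below, is to run `ceph -s` for the overall health and then confirm from `ceph mon dump` that master3 is gone from the monitor map. The helper name `mon_in_map` is hypothetical, and the pattern assumes each monitor line in the dump ends with `mon.<name>`.

```shell
# mon_in_map NAME
# Read `ceph mon dump` output on stdin and succeed if monitor NAME is listed.
# (Hypothetical helper; assumes monitor lines look like
#  "0: 172.16.230.21:6789/0 mon.master1".)
mon_in_map() {
    grep -Eq "^[0-9]+: .* mon\.$1\$"
}

# Example, run on a node that holds the admin keyring:
#   ceph -s
#   if ceph mon dump 2>/dev/null | mon_in_map master3; then
#       echo "master3 is still in the monmap"
#   else
#       echo "master3 has been removed from the monmap"
#   fi
```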

II. Adding master3 back to the Ceph cluster as a monitor (ceph-deploy)

[root@master1 cluster-ceph]# cd /opt/cluster-ceph/

[root@master1 cluster-ceph]# ceph-deploy mon create master3

Sync ceph.conf to every node in the cluster

ceph-deploy --overwrite-conf admin master1 master2 node1 node2 node3

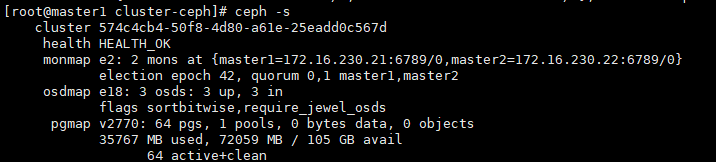

Check the cluster status
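A newly created monitor can take a short while to join the quorum, so a single status check may run too early. The sketch below polls `ceph quorum_status` until the monitor appears in the `quorum_names` field of its JSON output. The function names, the retry count, and the 2-second interval are assumptions for illustration. The grep-based JSON matching is a shortcut that is adequate for simple monitor names like the ones here, and a real JSON parser would be more robust.

```shell
# in_quorum NAME
# Read `ceph quorum_status` JSON on stdin and succeed if NAME is listed
# in the "quorum_names" array. (Hypothetical helper.)
in_quorum() {
    tr -d ' \n\t' | grep -o '"quorum_names":\[[^]]*]' | grep -q "\"$1\""
}

# wait_for_quorum NAME [ATTEMPTS]
# Poll every 2 seconds until NAME is in quorum or ATTEMPTS (default 30)
# checks have failed. Returns 0 on success, 1 on timeout.
wait_for_quorum() {
    name=$1
    tries=${2:-30}
    while [ "$tries" -gt 0 ]; do
        if ceph quorum_status 2>/dev/null | in_quorum "$name"; then
            return 0
        fi
        tries=$((tries - 1))
        sleep 2
    done
    return 1
}

# Example:
#   wait_for_quorum master3 && ceph -s
```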

Reference: https://www.bookstack.cn/read/ceph-handbook/Operation-add_rm_mon.md
