Installing Kubernetes 1.20 from Binaries (Part 2)

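Base environment planning

Lab environment:

Operating system: CentOS 7.6

Virtual machine configuration:

Resources: 4 GiB RAM / 4 vCPUs / 100 GB disk

Network: NAT

Enable virtualization for the virtual machines.

Cluster environment:

Version: Kubernetes 1.20

Pod CIDR: 10.0.0.0/16

Service CIDR: 10.255.0.0/16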

| Cluster role | IP | Hostname | Installed components |
|---|---|---|---|
| Master1 | 192.168.7.10 | k8s-master1 | apiserver, controller-manager, scheduler, etcd, docker |
| Master2 | 192.168.7.11 | k8s-master2 | apiserver, controller-manager, scheduler, etcd, docker |
| Master3 | 192.168.7.12 | k8s-master3 | apiserver, controller-manager, scheduler, etcd, docker |
| Node1 | 192.168.7.13 | k8s-node1 | kubelet, kube-proxy, docker, calico, coredns |
| Node2 | 192.168.7.16 | k8s-node2 | kubelet, kube-proxy, docker, calico, coredns |
| VIP | 192.168.7.50 | | Virtual IP (VIP) of the control-plane nodes |

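Differences between kubeadm and binary installation

Which scenarios each approach suits

kubeadm is the official open-source tool for quickly bootstrapping a Kubernetes cluster, and it is currently a convenient and recommended option. The two commands kubeadm init and kubeadm join are enough to create a cluster. When a cluster is initialized with kubeadm, the control-plane components run as Pods and therefore recover from failures automatically.

Because kubeadm automates the deployment, much like a script that installs the cluster for us, it simplifies the process but hides many details, so you gain little insight into the individual modules. If you do not understand the K8S architecture and components well, problems are harder to troubleshoot.

kubeadm suits scenarios where K8S clusters are deployed frequently or where a high degree of automation is required.

Binary installation: download the binary packages of each component from the official site and install them by hand. The manual process also gives you a more complete understanding of Kubernetes.

Both kubeadm and binary installation are suitable for production and run stably there. Which one to choose depends on the requirements of the actual project.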

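1. Installing the K8S cluster environment

1.1 Initialize the environment (all nodes)

1.1.1 Configure the hostname

Set the hostname on every host.

On 192.168.7.10, run the following (on the other hosts, use the matching hostname from the table above):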

hostnamectl set-hostname k8s-master1 && bash

1.1.2 Configure the hosts file

Edit /etc/hosts on every machine and add the following lines:

[root@k8s-master1 ~]# vim /etc/hosts

#k8s

192.168.7.10 k8s-master1 master1

192.168.7.11 k8s-master2 master2

192.168.7.12 k8s-master3 master3

192.168.7.13 k8s-node1 node1

192.168.7.16 k8s-node2 node2
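The host entries can also be appended by a small script, so that re-running it on a machine does not create duplicate lines. This is only a minimal sketch: the `HOSTS_FILE` variable and the `add_host` helper are illustrative names that do not appear in the original steps, the IPs and hostnames are taken from the planning table, and the script writes to a temporary file unless `HOSTS_FILE` is set to /etc/hosts.

```shell
#!/bin/sh
# Sketch: append cluster host entries idempotently.
# HOSTS_FILE is an assumed variable; it defaults to a temp file so the
# script is safe to try. Set HOSTS_FILE=/etc/hosts to apply it for real.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host() {
    # $1 = IP, $2 = hostname, $3 = short alias
    # Skip the entry when the hostname is already present as a whole word.
    if ! grep -qw -- "$2" "$HOSTS_FILE"; then
        printf '%s %s %s\n' "$1" "$2" "$3" >> "$HOSTS_FILE"
    fi
}

add_host 192.168.7.10 k8s-master1 master1
add_host 192.168.7.11 k8s-master2 master2
add_host 192.168.7.12 k8s-master3 master3
add_host 192.168.7.13 k8s-node1 node1
add_host 192.168.7.16 k8s-node2 node2

cat "$HOSTS_FILE"
```

Checking for the hostname before appending is what makes the script safe to run more than once on the same machine.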

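1.1.3 Configure passwordless SSH between hosts

Generate an SSH key pair:

[root@k8s-master1 ~]# ssh-keygen -t rsa

Press Enter at every prompt and leave the passphrase empty.

Install the local SSH public key into the matching account on each remote host (repeat the command for every remaining host):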

[root@k8s-master1 ~]# ssh-copy-id -i .ssh/id_rsa.pub k8s-master1

[root@k8s-master1 ~]# ssh-copy-id -i .ssh/id_rsa.pub k8s-node1

1.1.4 Disable the firewalld firewall

[root@k8s-master1 ~]# systemctl stop firewalld ; systemctl disable firewalld

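1.1.5 Disable SELinux

[root@k8s-master1 ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

# The change to the SELinux config file only takes effect permanently after a reboot; once the machine has rebooted, getenforce should report Disabled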

[root@k8s-master1 ~]# getenforce

Disabled

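1.1.6 Disable the swap partition

[root@k8s-master1 ~]# swapoff -a

[root@k8s-node1 ~]# swapoff -a

To disable swap permanently, comment out the swap mount by adding a # at the start of the swap line in /etc/fstab.

# If the swap entry is identified by a UUID, comment out that line in the same way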

[root@k8s-master1 ~]# vim /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0
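Instead of editing the file by hand, the swap entry can be commented out with sed. The sketch below is an assumption about how one might automate the step, not part of the original procedure: the `FSTAB` variable is an illustrative name, and by default the script works on a temporary sample file rather than on /etc/fstab.

```shell
#!/bin/sh
# Sketch: comment out every active swap entry in an fstab-style file.
# FSTAB is an assumed variable; set FSTAB=/etc/fstab to apply it for real.
FSTAB="${FSTAB:-$(mktemp)}"

# Demo mode: fill the file with sample content when it is empty.
if [ ! -s "$FSTAB" ]; then
cat > "$FSTAB" <<'EOF'
/dev/mapper/centos-root / xfs defaults 0 0
UUID=1234-abcd /boot xfs defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
EOF
fi

# Prefix uncommented lines that contain a whitespace-delimited "swap"
# field with '#'. A backup copy is kept next to the file as <file>.bak.
sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$FSTAB"

cat "$FSTAB"
```

Lines that are already commented are left alone, so the command can be repeated without stacking extra # characters.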

1.1.7 Adjust kernel parameters

[root@k8s-master1 ~]# modprobe br_netfilter

[root@k8s-master1 ~]# echo "modprobe br_netfilter" >> /etc/profile

[root@k8s-master1~]# cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

[root@k8s-master1 ~]# sysctl -p /etc/sysctl.d/k8s.conf

1.1.8 Configure the Aliyun repo sources

Install the rz/sz, scp and yum-utils tools:

[root@k8s-master1 ~]# yum install lrzsz openssh-clients yum-utils -y

Back up the base repo files:

[root@k8s-master1 ~]# mkdir -p /etc/yum.repos.d/repo.bak && cd /etc/yum.repos.d/ && mv *.repo /etc/yum.repos.d/repo.bak/

# Download the Aliyun base repo file

# Configure the Aliyun mirror of the Docker repo

[root@k8s-master1 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Configure the EPEL repo

Upload epel.repo to the /etc/yum.repos.d directory on k8s-master1, then copy it to the k8s-node1 node over SSH.

[root@k8s-master1 ~]# scp /etc/yum.repos.d/epel.repo k8s-node1:/etc/yum.repos.d/

1.1.9 Configure time synchronization

[root@k8s-master1 ~]# yum install ntpdate -y

[root@k8s-master1 ~]# ntpdate cn.pool.ntp.org

# Schedule the time synchronization as a cron job (once per hour, on the hour)

[root@k8s-master1 ~]# crontab -e

0 */1 * * * /usr/sbin/ntpdate cn.pool.ntp.org

# Restart the crond service

[root@k8s-master1 ~]# service crond restart

[root@k8s-node1 ~]# yum install ntpdate -y

[root@k8s-node1 ~]# ntpdate cn.pool.ntp.org

# Schedule the time synchronization as a cron job (once per hour, on the hour)

[root@k8s-node1 ~]# crontab -e

0 */1 * * * /usr/sbin/ntpdate cn.pool.ntp.org

# Restart the crond service

[root@k8s-node1 ~]# service crond restart

1.1.10 Install iptables

If you are not comfortable with firewalld, you can install iptables instead:

[root@k8s-master1 ~]# yum install iptables-services -y

Stop and disable the iptables service:

[root@k8s-master1 ~]# service iptables stop && systemctl disable iptables

Flush the firewall rules:

[root@k8s-master1 ~]# iptables -F

1.1.11 Enable IPVS

Without IPVS, kube-proxy forwards packets with iptables, which is less efficient, so enabling IPVS is recommended.

Upload ipvs.modules to the /etc/sysconfig/modules/ directory on k8s-master1:

[root@k8s-master1 ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

ip_vs_ftp 13079 0

nf_nat 26583 1 ip_vs_ftp

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0

[root@k8s-master1 ~]# scp /etc/sysconfig/modules/ipvs.modules k8s-node1:/etc/sysconfig/modules/

[root@k8s-node1 ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

ip_vs_ftp 13079 0

nf_nat 26583 1 ip_vs_ftp

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0
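The content of ipvs.modules is not shown in this article. A file of this kind typically loops over the IPVS kernel modules and loads each one that exists for the running kernel. The sketch below writes such a hypothetical file to a temporary path and only checks its syntax; the module list and the `MODULES_FILE` variable are assumptions, not the author's actual file.

```shell
#!/bin/sh
# Sketch: generate a hypothetical ipvs.modules script and syntax-check it.
# MODULES_FILE is an assumed variable; the real target would be
# /etc/sysconfig/modules/ipvs.modules.
MODULES_FILE="${MODULES_FILE:-$(mktemp)}"

cat > "$MODULES_FILE" <<'EOF'
#!/bin/bash
# Assumed module list; adjust it to the modules your kernel provides.
ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"
for kernel_module in ${ipvs_modules}; do
    # modinfo succeeds only when the module exists for the running kernel
    if /sbin/modinfo -F filename "${kernel_module}" > /dev/null 2>&1; then
        /sbin/modprobe "${kernel_module}"
    fi
done
EOF

chmod 755 "$MODULES_FILE"

# Parse the generated script without executing it.
sh -n "$MODULES_FILE" && echo "syntax ok: $MODULES_FILE"
```

Probing with modinfo before calling modprobe keeps the script from failing on kernels that lack one of the listed modules.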

1.1.12 Install the base packages

[root@k8s-master1 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2 wget net-tools nfs-utils lrzsz gcc gcc-c++ make cmake libxml2-devel openssl-devel curl curl-devel unzip sudo ntp libaio-devel vim ncurses-devel autoconf automake zlib-devel python-devel epel-release openssh-server socat ipvsadm conntrack ntpdate

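2. Install the Docker environment

2.1 Install docker-ce

Note: with a binary installation, the control-plane (master) nodes do not need Docker, because no Pods are scheduled onto them.

With a kubeadm installation, both control-plane and worker nodes need Docker, because kubeadm runs the control-plane components as Pods, and any Pod needs a container engine to start its containers.

Here Docker is still installed on all nodes.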

[root@k8s-master1~]# yum install docker-ce docker-ce-cli containerd.io -y

[root@k8s-master1~]# systemctl start docker && systemctl enable docker.service

2.2 Configure Docker registry mirrors

[root@k8s-master1~]# tee /etc/docker/daemon.json << 'EOF'

{

"registry-mirrors":["https://rsbud4vc.mirror.aliyuncs.com","https://registry.docker-cn.com","https://docker.mirrors.ustc.edu.cn","https://dockerhub.azk8s.cn","http://hub-mirror.c.163.com","http://qtid6917.mirror.aliyuncs.com", "https://rncxm540.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

[root@k8s-master1~]# systemctl daemon-reload && systemctl restart docker && systemctl status docker

Active: active (running) since Sun 2021-09-19 19:34:09 CST; 9ms ago

# Set Docker's cgroup driver to systemd (the default is cgroupfs). The kubelet must be configured with the same cgroup driver as Docker.

3. Build the etcd cluster

3.1 Configure the etcd working directories

Create the directories for the configuration file and the certificates:

[root@k8s-master1 ~]# mkdir /etc/etcd && mkdir -p /etc/etcd/ssl

3.2 Install the cfssl certificate tools

[root@k8s-master1 ~]# mkdir /root/k8s-hxu

# Upload cfssl-certinfo_linux-amd64, cfssljson_linux-amd64 and cfssl_linux-amd64 to this directory

[root@k8s-master1 k8s-hxu]# ls

cfssl-certinfo_linux-amd64 cfssljson_linux-amd64 cfssl_linux-amd64

# Make the files executable

[root@k8s-master1 k8s-hxu]# chmod +x *

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

3.3 Configure the CA certificate

# Create the CA certificate signing request file

[root@k8s-master1 ]# cd /root/k8s-hxu/

[root@k8s-master1 k8s-hxu]# vim ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "k8s",

"OU": "system"

}

],

"ca": {

"expiry": "8760h"

}

}

# Create the CA signing configuration file

[root@k8s-master1 k8s-hxu]# vim ca-config.json

{

"signing": {

"default": {

"expiry": "8760h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "8760h"

}

}

}

}

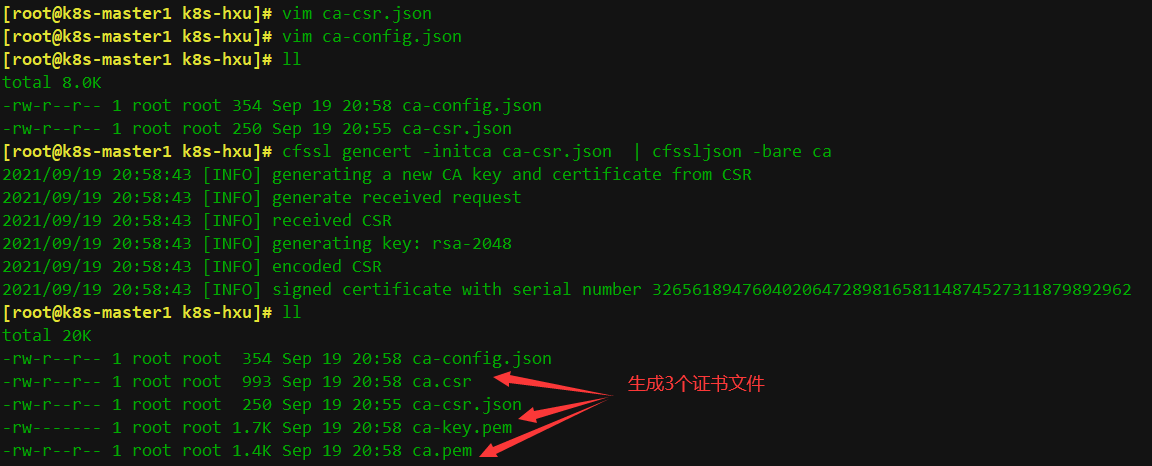

[root@k8s-master1 k8s-hxu]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

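Note: CN (Common Name) is the field kube-apiserver extracts from a certificate as the user name of the request; browsers use this field to verify whether a website is legitimate. O (Organization) is the field kube-apiserver extracts as the group the requesting user belongs to.

The CA certificate files have now been generated. Next, generate the certificate for etcd.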

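3.4 Generate the etcd certificate

Create the etcd certificate signing request and replace the IPs in hosts with those of your own master nodes. 192.168.7.10, 192.168.7.11 and 192.168.7.12 are the IPs the etcd members use for cluster-internal communication (you can reserve a few extra addresses for later expansion), and 192.168.7.50 is the VIP. JSON does not allow comments, so keep such notes out of the file itself.

[root@k8s-master1 k8s-hxu]# vim etcd-csr.json

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.7.10",

"192.168.7.11",

"192.168.7.12",

"192.168.7.50"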

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "k8s",

"OU": "system"

}]

}

[root@k8s-master1 k8s-hxu]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

# This produces three etcd files: the CSR, the private key and the certificate

[root@k8s-master1 k8s-hxu]# ll

-rw-r--r-- 1 root root 1.1K Sep 19 21:17 etcd.csr

-rw------- 1 root root 1.7K Sep 19 21:17 etcd-key.pem

-rw-r--r-- 1 root root 1.4K Sep 19 21:17 etcd.pem

3.5 Deploy the etcd cluster

Upload etcd-v3.4.13-linux-amd64.tar.gz to the /root/k8s-hxu directory on the k8s-master1 node

[root@k8s-master1 k8s-hxu]# tar -xf etcd-v3.4.13-linux-amd64.tar.gz

[root@k8s-master1 k8s-hxu]# cp -p etcd-v3.4.13-linux-amd64/etcd* /usr/local/bin/

Copy the two etcd binaries to the /usr/local/bin/ directory on master2 and master3

[root@k8s-master1 etcd-v3.4.13-linux-amd64]# scp -p /usr/local/bin/etcd* master2:/usr/local/bin/

[root@k8s-master1 etcd-v3.4.13-linux-amd64]# scp -p /usr/local/bin/etcd* master3:/usr/local/bin/

# Create the configuration file

[root@k8s-master1 k8s-hxu]# cd /root/k8s-hxu/

[root@k8s-master1 k8s-hxu]# vim etcd.conf

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.7.10:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.7.10:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.7.10:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.7.10:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.7.10:2380,etcd2=https://192.168.7.11:2380,etcd3=https://192.168.7.12:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

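# Notes:

ETCD_NAME: member name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of all cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining the cluster; new means a new cluster, existing means joining an existing cluster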
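Because ETCD_NAME and the listen and advertise URLs must differ on every member, each master needs its own copy of etcd.conf. The following sketch renders one file per member from the values used in this article; the `OUT_DIR` variable, the `render_etcd_conf` helper and the `etcd.conf.<name>` output names are illustrative assumptions.

```shell
#!/bin/sh
# Sketch: render one etcd.conf per cluster member.
# OUT_DIR is an assumed variable; it defaults to a temp directory.
OUT_DIR="${OUT_DIR:-$(mktemp -d)}"
CLUSTER="etcd1=https://192.168.7.10:2380,etcd2=https://192.168.7.11:2380,etcd3=https://192.168.7.12:2380"

render_etcd_conf() {
    # $1 = member name, $2 = member IP
    cat > "$OUT_DIR/etcd.conf.$1" <<EOF
#[Member]
ETCD_NAME="$1"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$2:2380"
ETCD_LISTEN_CLIENT_URLS="https://$2:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$2:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$2:2379"
ETCD_INITIAL_CLUSTER="$CLUSTER"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

render_etcd_conf etcd1 192.168.7.10
render_etcd_conf etcd2 192.168.7.11
render_etcd_conf etcd3 192.168.7.12

ls "$OUT_DIR"
```

Each rendered file would then be copied to /etc/etcd/etcd.conf on the matching master. Only the member name and IP change between files; the ETCD_INITIAL_CLUSTER list stays identical on all three.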

# Create the service unit file

[root@k8s-master1 k8s-hxu]# vim etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

# Copy the etcd certificates to the directory referenced in the unit file above

[root@k8s-master1 k8s-hxu]# ll /etc/etcd/ssl/

total 0

[root@k8s-master1 k8s-hxu]# cp ca*.pem /etc/etcd/ssl/

[root@k8s-master1 k8s-hxu]# cp etcd*.pem /etc/etcd/ssl/

[root@k8s-master1 k8s-hxu]# ll /etc/etcd/ssl/

-rw------- 1 root root 1.7K Sep 19 21:39 ca-key.pem

-rw-r--r-- 1 root root 1.4K Sep 19 21:39 ca.pem

-rw------- 1 root root 1.7K Sep 19 21:39 etcd-key.pem

-rw-r--r-- 1 root root 1.4K Sep 19 21:39 etcd.pem

[root@k8s-master1 k8s-hxu]# cp etcd.conf /etc/etcd/

[root@k8s-master1 k8s-hxu]# ll /etc/etcd/

-rw-r--r-- 1 root root 519 Sep 19 21:40 etcd.conf

drwxr-xr-x 2 root root 74 Sep 19 21:39 ssl

[root@k8s-master1 k8s-hxu]# cp etcd.service /usr/lib/systemd/system/

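# Sync the files to master2 and master3. Afterwards, edit /etc/etcd/etcd.conf on each of those nodes so that ETCD_NAME and the IPs in the listen and advertise URLs match that node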

for i in k8s-master2 k8s-master3;do rsync -vaz etcd.conf $i:/etc/etcd/;done

for i in k8s-master2 k8s-master3;do rsync -vaz etcd*.pem ca*.pem $i:/etc/etcd/ssl/;done

for i in k8s-master2 k8s-master3;do rsync -vaz etcd.service $i:/usr/lib/systemd/system/;done

[root@k8s-master1 k8s-hxu]# mkdir -p /var/lib/etcd/default.etcd

Start the etcd cluster (create /var/lib/etcd/default.etcd and run the same command on all three masters)

[root@k8s-master1 k8s-hxu]# systemctl daemon-reload && systemctl enable etcd.service && systemctl start etcd.service && systemctl status etcd

Active: active (running) since Sun 2021-09-19 21:53:59 CST; 5ms ago

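# Note: when etcd on k8s-master1 is started first, the start command stays blocked in the starting state. Start etcd on k8s-master2 next; the etcd service on k8s-master1 then comes up normally.

# Check the etcd cluster

[root@k8s-master1 k8s-hxu]# export ETCDCTL_API=3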

[root@k8s-master1 k8s-hxu]# /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://192.168.7.10:2379,https://192.168.7.11:2379,https://192.168.7.12:2379 endpoint health

+---------------------------+--------+------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+---------------------------+--------+------------+-------+

| https://192.168.7.12:2379 | true | 7.823002ms | |

| https://192.168.7.10:2379 | true | 8.056305ms | |

| https://192.168.7.11:2379 | true | 20.08422ms | |

+---------------------------+--------+------------+-------+

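4. Install the Kubernetes components

The binary packages are linked from the following GitHub page:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/

4.1 Download the installation package

Upload kubernetes-server-linux-amd64.tar.gz to /root/k8s-hxu on k8s-master1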

[root@k8s-master1 k8s-hxu]# tar zxvf kubernetes-server-linux-amd64.tar.gz

[root@k8s-master1 k8s-hxu]# cd kubernetes/server/bin/

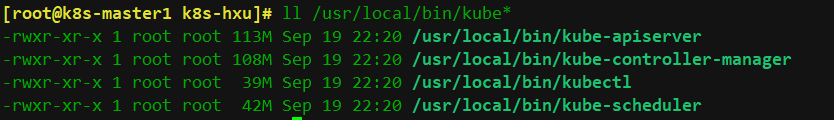

[root@k8s-master1 bin]# cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/

[root@k8s-master1 bin]# rsync -vaz kube-apiserver kube-controller-manager kube-scheduler kubectl k8s-master2:/usr/local/bin/

[root@k8s-master1 bin]# rsync -vaz kube-apiserver kube-controller-manager kube-scheduler kubectl k8s-master3:/usr/local/bin/

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-node1:/usr/local/bin/

[root@k8s-master1 bin]# cd /root/k8s-hxu/

[root@k8s-master1 k8s-hxu]# mkdir -p /etc/kubernetes/ssl && mkdir /var/log/kubernetes

The extracted binaries are the ones listed in the screenshot above.

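4.2 Deploy the api-server component

Enable the TLS Bootstrapping mechanism

Once TLS authentication is enabled on the master apiserver, the kubelet on every node must use a valid certificate signed by the CA that the apiserver uses in order to communicate with it. With many nodes, issuing these client certificates takes a lot of work and also makes scaling the cluster more complex.

To simplify the process, Kubernetes introduced TLS bootstrapping to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet's certificate is signed dynamically by the cluster.

Bootstrap programs exist in many systems, for example the Linux bootstrap. A bootstrap is generally preconfigured and loaded at power-on or system start, and can be used to set up a specific environment. The Kubernetes kubelet can likewise load such a configuration file at start-up; the file looks similar to this: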

apiVersion: v1

clusters: null

contexts:

- context:

    cluster: kubernetes

    user: kubelet-bootstrap

  name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kubelet-bootstrap

  user: {}

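#TLS bootstrapping: the bootstrap process in detail

- The role of TLS

TLS encrypts the communication and prevents eavesdropping by a man in the middle. In addition, if the certificate is not trusted, no connection to the apiserver can be established at all, let alone any permission to request specific content from it.

- The role of RBAC

Once TLS has solved the communication problem, permissions are handled by RBAC (other authorization models such as ABAC can also be used). RBAC defines which APIs a user or user group (subject) may request. In combination with TLS, the apiserver reads the CN field of the client certificate as the user name and the O field as the user group.

This shows two things. First, to communicate with the apiserver you must use a certificate signed by the apiserver's CA; only then is a trust relationship formed and a TLS connection established. Second, the CN and O fields of the certificate supply the user and user group that RBAC needs.

#kubelet first-start flow

TLS bootstrapping lets the kubelet request a certificate from the apiserver and then use it to connect to the apiserver. So how does the kubelet connect on its first start, when it has no certificate yet?

The apiserver configuration points to a token.csv file that contains a preset user. That user's token, together with the CA certificate of the apiserver, is written into the bootstrap.kubeconfig file used by the kubelet. On the first request, the kubelet uses the CA certificate in bootstrap.kubeconfig to establish trusted TLS communication with the apiserver, and uses the user token in bootstrap.kubeconfig to declare its RBAC identity to the apiserver.

On first start the kubelet may report a 401 error saying it has no permission to access the apiserver. By default the kubelet declares its identity through the preset user token in bootstrap.kubeconfig and then creates a CSR request, but unless we act, that user has no permissions at all, not even to create CSR requests. A ClusterRoleBinding is therefore needed to bind the preset user kubelet-bootstrap to the built-in ClusterRole system:node-bootstrapper so that it can submit CSR requests. This is demonstrated later when the kubelet is installed.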

# Create the token.csv file

[root@k8s-master1 k8s-hxu]# pwd

/root/k8s-hxu

[root@k8s-master1 k8s-hxu]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

This creates a file with the following content

# Format: token,user name,UID,user group

[root@k8s-master1 k8s-hxu]# cat token.csv

0758e774e990b936c217d2f6f8f676d4,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
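Before handing the file to the apiserver, it can be worth checking that the generated line really has the expected shape. The sketch below repeats the token generation from above and validates the result with grep; the `TOKEN_FILE` variable is an illustrative name, and the script writes to a temporary file by default.

```shell
#!/bin/sh
# Sketch: generate a bootstrap token line and validate its format.
# TOKEN_FILE is an assumed variable; it defaults to a temp file.
TOKEN_FILE="${TOKEN_FILE:-$(mktemp)}"

# 16 random bytes rendered as 32 hex characters, as in the step above.
token=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')
printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token" > "$TOKEN_FILE"

# Expected layout: 32 hex characters, user name, UID, quoted group.
if grep -Eq '^[0-9a-f]{32},kubelet-bootstrap,10001,"system:kubelet-bootstrap"$' "$TOKEN_FILE"; then
    echo "token.csv format looks valid"
else
    echo "unexpected token.csv format" >&2
fi
```

The check only looks at the layout of the line. Whether the apiserver accepts the token still depends on the same file being referenced by --token-auth-file.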

# Create the CSR file; replace the IPs with those of your own machines

[root@k8s-master1 k8s-hxu]# vim kube-apiserver-csr.json

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.7.10",

"192.168.7.11",

"192.168.7.12",

"192.168.7.13",

"192.168.7.50",

"10.255.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "k8s",

"OU": "system"

}

]

}

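# Note: if the hosts field is not empty, it must list the IPs or domain names authorized to use this certificate. Because this certificate is used by the Kubernetes master cluster, include the IPs of all master nodes as well as the first IP of the Service network (normally the first IP of the range given to kube-apiserver with service-cluster-ip-range, for example 10.255.0.1).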
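The first IP of the Service range can be derived from the CIDR with plain shell arithmetic. This is a minimal sketch that assumes the address part of the CIDR is already the network address (as in 10.255.0.0/16); the `first_service_ip` function name is illustrative.

```shell
#!/bin/sh
# Sketch: compute the first usable IP of a Service CIDR.
first_service_ip() {
    # $1 = CIDR whose address part is the network address, e.g. 10.255.0.0/16
    net=${1%/*}
    # Split the dotted address into the four positional parameters.
    old_ifs=$IFS; IFS=.
    set -- $net
    IFS=$old_ifs
    # Pack the octets into one integer and add 1 to get the first host address.
    n=$(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 + 1 ))
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

first_service_ip 10.255.0.0/16   # prints 10.255.0.1
```

The printed address is the one that belongs in the hosts list of the apiserver certificate, since the kubernetes Service is assigned that ClusterIP.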

# Generate the certificate and the token file

[root@k8s-master1 k8s-hxu]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

[root@k8s-master1 k8s-hxu]# ll -t

-rw-r--r-- 1 root root 1.3K Sep 20 11:09 kube-apiserver.csr

-rw------- 1 root root 1.7K Sep 20 11:09 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1.6K Sep 20 11:09 kube-apiserver.pem

[root@k8s-master1 k8s-hxu]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

# Create the api-server configuration file; replace the IPs with your own

[root@k8s-master1 k8s-hxu]# vim kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=192.168.7.10 \

--secure-port=6443 \

--advertise-address=192.168.7.10 \

--insecure-port=0 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.255.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://192.168.7.10:2379,https://192.168.7.11:2379,https://192.168.7.12:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=4"

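# Notes:

--logtostderr: log to standard error instead of files (set to false here so that logs are written to --log-dir)

--v: log verbosity level

--log-dir: log directory

--etcd-servers: addresses of the etcd cluster

--bind-address: listen address

--secure-port: HTTPS secure port

--advertise-address: address advertised to the other members of the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and Node authorization

--enable-bootstrap-token-auth: enable bootstrap token authentication, used by the TLS bootstrap mechanism

--token-auth-file: static token file holding the bootstrap token

--service-node-port-range: port range allocated to NodePort Services

--kubelet-client-xxx: client certificate the apiserver uses to access the kubelet

--tls-xxx-file: HTTPS certificate of the apiserver

--etcd-xxxfile: certificates for connecting to the etcd cluster

--audit-log-xxx: audit log settings

# Create the service unit file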

[root@k8s-master1 k8s-hxu]# vim kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

[root@k8s-master1 k8s-hxu]# cp ca*.pem /etc/kubernetes/ssl/

[root@k8s-master1 k8s-hxu]# ll /etc/kubernetes/ssl/

-rw------- 1 root root 1.7K Sep 20 11:22 ca-key.pem

-rw-r--r-- 1 root root 1.4K Sep 20 11:22 ca.pem

[root@k8s-master1 k8s-hxu]# cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@k8s-master1 k8s-hxu]# ll /etc/kubernetes/ssl/

-rw------- 1 root root 1.7K Sep 20 11:22 ca-key.pem

-rw-r--r-- 1 root root 1.4K Sep 20 11:22 ca.pem

-rw------- 1 root root 1.7K Sep 20 11:22 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1.6K Sep 20 11:22 kube-apiserver.pem

[root@k8s-master1 k8s-hxu]# cp token.csv /etc/kubernetes/

[root@k8s-master1 k8s-hxu]# cp kube-apiserver.conf /etc/kubernetes/

[root@k8s-master1 k8s-hxu]# cp kube-apiserver.service /usr/lib/systemd/system/

# Copy the generated files to the corresponding directories on the other two master (control-plane) nodes; do not leave any out

rsync -vaz token.csv k8s-master2:/etc/kubernetes/

rsync -vaz token.csv k8s-master3:/etc/kubernetes/

rsync -vaz kube-apiserver*.pem k8s-master2:/etc/kubernetes/ssl/

rsync -vaz kube-apiserver*.pem k8s-master3:/etc/kubernetes/ssl/

rsync -vaz ca*.pem k8s-master2:/etc/kubernetes/ssl/

rsync -vaz ca*.pem k8s-master3:/etc/kubernetes/ssl/

rsync -vaz kube-apiserver.conf k8s-master2:/etc/kubernetes/

rsync -vaz kube-apiserver.conf k8s-master3:/etc/kubernetes/

rsync -vaz kube-apiserver.service k8s-master2:/usr/lib/systemd/system/

rsync -vaz kube-apiserver.service k8s-master3:/usr/lib/systemd/system/

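# Note: on k8s-master2 and k8s-master3, change the IP addresses in kube-apiserver.conf to the node's own IP. Only the following two flags need to change (shown here for k8s-master3)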

--bind-address=192.168.7.12

--advertise-address=192.168.7.12
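The two node-specific flags can be rewritten with sed instead of by hand. The sketch below works on a temporary sample file and uses `CONF` and `NODE_IP` as illustrative variable names; on a real master, `CONF` would point at /etc/kubernetes/kube-apiserver.conf and `NODE_IP` at that node's own address.

```shell
#!/bin/sh
# Sketch: set --bind-address and --advertise-address to the node's own IP.
# CONF and NODE_IP are assumed variables with demo defaults.
CONF="${CONF:-$(mktemp)}"
NODE_IP="${NODE_IP:-192.168.7.11}"

# Demo mode: create a small sample when the file is empty.
if [ ! -s "$CONF" ]; then
    printf '%s\n' \
        '--bind-address=192.168.7.10 \' \
        '--secure-port=6443 \' \
        '--advertise-address=192.168.7.10 \' > "$CONF"
fi

# Replace only the IP that follows the two address flags; keep a .bak copy.
sed -i.bak -E "s/(--(bind|advertise)-address=)[0-9.]+/\1${NODE_IP}/" "$CONF"

grep -E -- '-address=' "$CONF"
```

All other flags, including --etcd-servers, are left untouched because the pattern matches only the two address flags.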

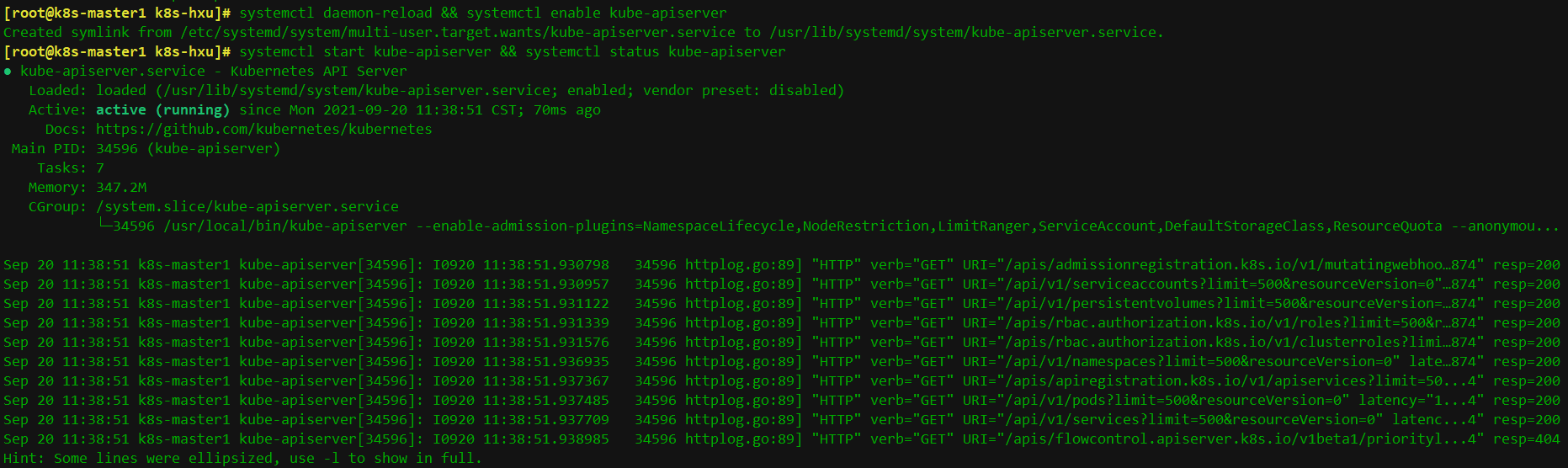

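Reload systemd and start the service (on every master)

# systemctl daemon-reload && systemctl enable kube-apiserver && systemctl start kube-apiserver && systemctl status kube-apiserver

Check the status; an Active state of running means the apiserver started correctly.

Send a request with curl: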

[root@k8s-master1 k8s-hxu]# curl --insecure https://192.168.7.10:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {

},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

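The 401 above is the expected response at this point, because the request is not authenticated.

4.3 Deploy the kubectl component

kubectl is the client tool used to operate on K8S resources: create, delete, update, query and so on.

To know which cluster to connect to, kubectl needs a configuration file such as /etc/kubernetes/admin.conf, and it accesses K8S resources according to that file. /etc/kubernetes/admin.conf records the cluster to access and the certificates to use.

You can set the KUBECONFIG environment variable:

[root@k8s-master1 ~]# export KUBECONFIG=/etc/kubernetes/admin.conf

kubectl then loads the file named by KUBECONFIG to decide which cluster's resources to manage.

Alternatively, use the following method, which is what kubeadm prints after initializing a cluster:

[root@k8s-master1 ~]# cp /etc/kubernetes/admin.conf /root/.kube/config

kubectl then loads /root/.kube/config when it runs.

If KUBECONFIG is set, kubectl uses it first; if the variable is not set, kubectl falls back to /root/.kube/config to decide which cluster to manage.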
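The lookup order described above can be mirrored in a few lines of shell, which is handy when debugging why kubectl talks to an unexpected cluster. This is a simplified sketch: `resolve_kubeconfig` is a hypothetical helper, and it ignores the --kubeconfig command-line flag, which takes precedence over both sources when given.

```shell
#!/bin/sh
# Sketch: print the kubeconfig path kubectl would load when no
# --kubeconfig flag is passed.
resolve_kubeconfig() {
    if [ -n "${KUBECONFIG:-}" ]; then
        # The environment variable wins when it is set and non-empty.
        printf '%s\n' "$KUBECONFIG"
    else
        # Otherwise fall back to the per-user default location.
        printf '%s\n' "$HOME/.kube/config"
    fi
}

resolve_kubeconfig
```

For root, the fallback path printed by the helper is /root/.kube/config, the same file the cp command above creates.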

Create the CSR file

[root@k8s-master1 k8s-hxu]# vim admin-csr.json

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "system:masters",

"OU": "system"

}

]

}

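# Note: kube-apiserver later uses RBAC to authorize requests from clients (such as kubelet, kube-proxy and Pods). kube-apiserver predefines some bindings used by RBAC; for example, the ClusterRoleBinding cluster-admin binds the group system:masters to the ClusterRole cluster-admin, which grants permission to call every kube-apiserver API. O sets the group of this certificate to system:masters. When kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the certificate's group is the pre-authorized system:masters, it is granted access to all APIs. This admin certificate is later used to generate the administrator's kubeconfig file. RBAC is the generally recommended way to control roles and permissions in Kubernetes, and Kubernetes treats the certificate's CN field as the User and the O field as the Group. "O": "system:masters" must be exactly system:masters; otherwise the later kubectl create clusterrolebinding command fails.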

[root@k8s-master1 k8s-hxu]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

2021/09/20 14:06:01 [INFO] generate received request

2021/09/20 14:06:01 [INFO] received CSR

2021/09/20 14:06:01 [INFO] generating key: rsa-2048

2021/09/20 14:06:02 [INFO] encoded CSR

2021/09/20 14:06:02 [INFO] signed certificate with serial number 345050993241817661147076199353241438925566273976

2021/09/20 14:06:02 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master1 k8s-hxu]# ll -t

total 320M

-rw-r--r-- 1 root root 1001 Sep 20 14:06 admin.csr

-rw------- 1 root root 1.7K Sep 20 14:06 admin-key.pem

-rw-r--r-- 1 root root 1.4K Sep 20 14:06 admin.pem

[root@k8s-master1 k8s-hxu]# cp admin*.pem /etc/kubernetes/ssl/

#创建kubeconfig配置文件,比较重要

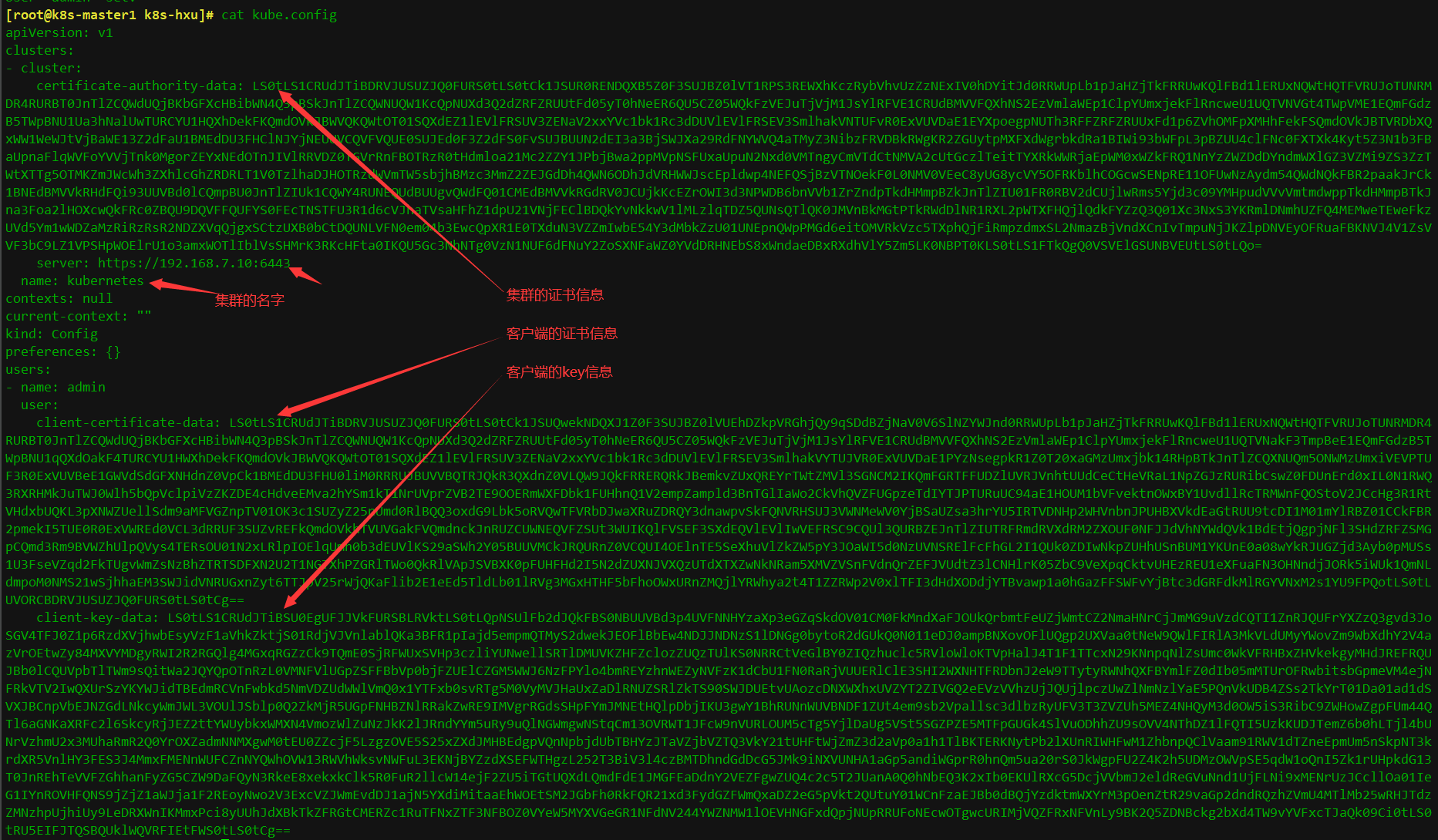

kubeconfig 为 kubectl 的配置文件,包含访问 apiserver 的所有信息,如 apiserver 地址、CA 证书和自身使用的证书(这里如果报错找不到kubeconfig路径,请手动复制到相应路径下,没有则忽略)

1.设置集群参数

[root@k8s-master1 k8s-hxu]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.7.10:6443 --kubeconfig=kube.config

2. Set the client credentials

[root@k8s-master1 k8s-hxu]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

3. Set the context parameters

In the screenshot above the contexts field is null. A context links a user to a cluster (here, the admin user to the kubernetes cluster). Once the context exists, setting the current context (the current-context: "" field) tells kubectl which user and cluster pair to use.

[root@k8s-master1 k8s-hxu]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

Context "kubernetes" created.

[root@k8s-master1 k8s-hxu]# cat kube.config

After this step the contexts field in the file is populated:

............

contexts:

- context:

    cluster: kubernetes

    user: admin

  name: kubernetes

4. Set the default context; after this the admin user can access the kubernetes cluster

[root@k8s-master1 k8s-hxu]# kubectl config use-context kubernetes --kubeconfig=kube.config

Switched to context "kubernetes".

[root@k8s-master1 k8s-hxu]# cat kube.config

...

contexts:

- context:

    cluster: kubernetes

    user: admin

  name: kubernetes

current-context: kubernetes
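The same four kubectl config steps are repeated for every component in this article, so they can be wrapped in one helper. This is a sketch rather than part of the original procedure: `build_kubeconfig`, `KUBECTL` and `APISERVER` are illustrative names, and the helper uses the user name as the context name, which matches the controller-manager and scheduler sections but not the admin kubeconfig above (its context is named kubernetes).

```shell
#!/bin/sh
# Sketch: wrap the four "kubectl config" steps in one helper.
# KUBECTL is an assumed override hook; it defaults to the real kubectl.
KUBECTL="${KUBECTL:-kubectl}"
APISERVER="${APISERVER:-https://192.168.7.10:6443}"

build_kubeconfig() {
    # $1 = user (also used as context name), $2 = client certificate,
    # $3 = client key, $4 = output kubeconfig file
    "$KUBECTL" config set-cluster kubernetes --certificate-authority=ca.pem \
        --embed-certs=true --server="$APISERVER" --kubeconfig="$4" &&
    "$KUBECTL" config set-credentials "$1" --client-certificate="$2" \
        --client-key="$3" --embed-certs=true --kubeconfig="$4" &&
    "$KUBECTL" config set-context "$1" --cluster=kubernetes --user="$1" \
        --kubeconfig="$4" &&
    "$KUBECTL" config use-context "$1" --kubeconfig="$4"
}

# Example, run from the directory that holds ca.pem and the client certificate:
# build_kubeconfig system:kube-scheduler kube-scheduler.pem kube-scheduler-key.pem kube-scheduler.kubeconfig
```

Chaining the steps with && stops at the first failure, so a missing certificate file does not leave a half-written kubeconfig unnoticed.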

kubectl must load kube.config when it runs. To make that happen, do the following:

[root@k8s-master1 k8s-hxu]# mkdir ~/.kube -p

# If you run kubectl without copying the config file, it reports the following error

[root@k8s-master1 k8s-hxu]# kubectl get node

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Run it again after copying the file:

[root@k8s-master1 k8s-hxu]# cp kube.config ~/.kube/config

[root@k8s-master1 k8s-hxu]# kubectl get node

No resources found

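The admin user can now query resources. Next, grant the apiserver's certificate user the permissions it needs on the kubelet API.

5. Authorize the kubernetes certificate to access the kubelet API

[root@k8s-master1 k8s-hxu]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

Notes:

# A clusterrolebinding is not restricted to a namespace; it applies to every namespace

# The clusterrolebinding is named kube-apiserver:kubelet-apis

# It binds the user kubernetes (the CN of the kube-apiserver certificate) to system:kubelet-api-admin, a built-in clusterrole

6. Copy the configuration file to master2 and master3 as well; create the .kube directory there first, then copy

rsync -vaz /root/.kube/config k8s-master2:/root/.kube/

rsync -vaz /root/.kube/config k8s-master3:/root/.kube/

The cluster resources can now be viewed and managed from the other two masters too.

# Check the status of the cluster components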

[root@k8s-master1 k8s-hxu]# kubectl cluster-info

Kubernetes control plane is running at https://192.168.7.10:6443

[root@k8s-master1 k8s-hxu]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused

etcd-0 Healthy {"health":"true"}

[root@k8s-master1 k8s-hxu]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.255.0.1 <none> 443/TCP

Configure kubectl subcommand completion

See the official documentation:

https://v1-20.docs.kubernetes.io/zh/docs/tasks/tools/install-kubectl-linux/

[root@k8s-master1 k8s-hxu]# yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

[root@k8s-master1 k8s-hxu]# kubectl completion bash > ~/.kube/completion.bash.inc

source '/root/.kube/completion.bash.inc'

source $HOME/.bash_profile

Source the completion script from ~/.bashrc:

[root@k8s-master1 k8s-hxu]# echo 'source <(kubectl completion bash)' >>~/.bashrc

Add the completion script to the /etc/bash_completion.d directory:

[root@k8s-master1 k8s-hxu]# kubectl completion bash >/etc/bash_completion.d/kubectl

If you use an alias for kubectl, you can extend shell completion to work with that alias:

[root@k8s-master1 k8s-hxu]# echo 'alias k=kubectl' >>~/.bashrc

[root@k8s-master1 k8s-hxu]# echo 'complete -F __start_kubectl k' >>~/.bashrc

4.4 Deploy the kube-controller-manager component

Create the CSR file

[root@k8s-master1 k8s-hxu]# vim kube-controller-manager-csr.json

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.7.10",

"192.168.7.11",

"192.168.7.12",

"192.168.7.50"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

Note: the hosts list contains the IPs of all kube-controller-manager nodes. CN is system:kube-controller-manager and O is system:kube-controller-manager; the built-in ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.

# Generate the certificate

[root@k8s-master1 k8s-hxu]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

Create the kubeconfig for kube-controller-manager

1. Set the cluster parameters

[root@k8s-master1 k8s-hxu]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.7.10:6443 --kubeconfig=kube-controller-manager.kubeconfig

Cluster "kubernetes" set.

2. Set the client credentials

[root@k8s-master1 k8s-hxu]# kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

User "system:kube-controller-manager" set.

3. Set the context parameters

[root@k8s-master1 k8s-hxu]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Context "system:kube-controller-manager" created.

[root@k8s-master1 k8s-hxu]# cat kube-controller-manager.kubeconfig

contexts:

- context:

    cluster: kubernetes

    user: system:kube-controller-manager

  name: system:kube-controller-manager

4. Set the current context

[root@k8s-master1 k8s-hxu]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Switched to context "system:kube-controller-manager".

[root@k8s-master1 k8s-hxu]# cat kube-controller-manager.kubeconfig

....

current-context: system:kube-controller-manager

....

# Create the configuration file kube-controller-manager.conf

[root@k8s-master1 k8s-hxu]# vim kube-controller-manager.conf

KUBE_CONTROLLER_MANAGER_OPTS="--port=0 \

--secure-port=10252 \

--bind-address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=10.255.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--allocate-node-cidrs=true \

--cluster-cidr=10.0.0.0/16 \

--experimental-cluster-signing-duration=8760h \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-use-rest-clients=true \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

Create the service unit file

[root@k8s-master1 k8s-hxu]# vim kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Start the service

# First copy the certificate files to the target directories, then sync them to the master2 and master3 nodes

[root@k8s-master1 k8s-hxu]# cp kube-controller-manager*.pem /etc/kubernetes/ssl/

cp kube-controller-manager.kubeconfig /etc/kubernetes/

cp kube-controller-manager.conf /etc/kubernetes/

cp kube-controller-manager.service /usr/lib/systemd/system/

# Copy the files to the master2 and master3 nodes

rsync -vaz kube-controller-manager*.pem k8s-master2:/etc/kubernetes/ssl/

rsync -vaz kube-controller-manager*.pem k8s-master3:/etc/kubernetes/ssl/

rsync -vaz kube-controller-manager.kubeconfig kube-controller-manager.conf k8s-master2:/etc/kubernetes/

rsync -vaz kube-controller-manager.kubeconfig kube-controller-manager.conf k8s-master3:/etc/kubernetes/

rsync -vaz kube-controller-manager.service k8s-master2:/usr/lib/systemd/system/

rsync -vaz kube-controller-manager.service k8s-master3:/usr/lib/systemd/system/
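The six rsync commands above follow one pattern per target host, so they could be folded into a loop. This is only a sketch: `DRY_RUN`, `run`, and `sync_component` are names invented here, and with the default `DRY_RUN=1` the commands are printed rather than executed.

```bash
#!/usr/bin/env bash
# DRY_RUN=1 (default) prints each command; DRY_RUN=0 executes it.
DRY_RUN=${DRY_RUN:-1}
MASTERS=(k8s-master2 k8s-master3)

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# sync_component NAME: copy NAME*.pem, NAME.kubeconfig, NAME.conf and
# NAME.service to the same target directories used in the commands above.
sync_component() {
  local name=$1 host
  for host in "${MASTERS[@]}"; do
    run rsync -vaz "$name"*.pem "$host:/etc/kubernetes/ssl/"
    run rsync -vaz "$name.kubeconfig" "$name.conf" "$host:/etc/kubernetes/"
    run rsync -vaz "$name.service" "$host:/usr/lib/systemd/system/"
  done
}

sync_component kube-controller-manager
```

The same helper would apply to kube-scheduler, since its files use the same naming scheme.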

# Start

[root@k8s-master1 k8s-hxu]# systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl start kube-controller-manager

systemctl status kube-controller-manager

Active: active (running) since Mon 2021-09-20 15:33:19 CST; 35ms ago

4.5 Deploy the kube-scheduler component

Create the CSR

[root@k8s-master1 k8s-hxu]# vim kube-scheduler-csr.json

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.7.10"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

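Note: the hosts list should contain the IPs of all kube-scheduler nodes. The example above lists only 127.0.0.1 and 192.168.7.10; if kube-scheduler also runs on master2 and master3, add 192.168.7.11 and 192.168.7.12. CN is system:kube-scheduler and O is system:kube-scheduler. The built-in ClusterRoleBinding system:kube-scheduler, which is bound to the user system:kube-scheduler (the certificate CN), grants kube-scheduler the permissions it needs to work.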
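As a sketch of the hosts rule in the note, the CSR file could be generated from a list of master IPs instead of being edited by hand. `render_scheduler_csr` is a hypothetical helper written for this illustration; the field values are the ones from the CSR shown earlier.

```bash
#!/usr/bin/env bash
# Print a kube-scheduler CSR whose hosts list holds 127.0.0.1 plus every IP
# passed as an argument.
render_scheduler_csr() {
  local ip hosts='    "127.0.0.1"'
  for ip in "$@"; do
    hosts+=$',\n    "'"$ip"'"'
  done
  cat <<EOF
{
  "CN": "system:kube-scheduler",
  "hosts": [
$hosts
  ],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "bj",
      "O": "system:kube-scheduler",
      "OU": "system"
    }
  ]
}
EOF
}

# The three master IPs from the environment plan.
render_scheduler_csr 192.168.7.10 192.168.7.11 192.168.7.12
```

Redirecting the output to kube-scheduler-csr.json would give a file that cfssl can consume in the next step.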

Generate the certificate

[root@k8s-master1 k8s-hxu]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

Create the kubeconfig for kube-scheduler

1. Set the cluster parameters

[root@k8s-master1 k8s-hxu]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.7.10:6443 --kubeconfig=kube-scheduler.kubeconfig

Cluster "kubernetes" set.

2. Set the client authentication parameters

[root@k8s-master1 k8s-hxu]# kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

User "system:kube-scheduler" set.

3. Set the context parameters

[root@k8s-master1 k8s-hxu]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Context "system:kube-scheduler" created.

4. Set the default context

[root@k8s-master1 k8s-hxu]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Switched to context "system:kube-scheduler".
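The four steps above are the same for every component that authenticates with a client certificate, so they could be wrapped in one function. This is a hedged sketch: `make_kubeconfig` and the `KUBECTL` variable are names invented here, and by default the commands are only printed because `KUBECTL` expands to `echo kubectl`.

```bash
#!/usr/bin/env bash
# KUBECTL defaults to "echo kubectl" so the sketch only prints the commands;
# set KUBECTL=kubectl to actually run them.
KUBECTL=${KUBECTL:-echo kubectl}

# make_kubeconfig USER CERT_PREFIX OUT_FILE [SERVER]
# USER is used both as the credential name and as the context name.
make_kubeconfig() {
  local user=$1 cert=$2 out=$3 server=${4:-https://192.168.7.10:6443}
  $KUBECTL config set-cluster kubernetes --certificate-authority=ca.pem \
    --embed-certs=true --server="$server" --kubeconfig="$out"
  $KUBECTL config set-credentials "$user" --client-certificate="$cert.pem" \
    --client-key="$cert-key.pem" --embed-certs=true --kubeconfig="$out"
  $KUBECTL config set-context "$user" --cluster=kubernetes --user="$user" \
    --kubeconfig="$out"
  $KUBECTL config use-context "$user" --kubeconfig="$out"
}

make_kubeconfig system:kube-scheduler kube-scheduler kube-scheduler.kubeconfig
```

Running it from the directory that holds ca.pem and the component certificates, with `KUBECTL=kubectl`, should produce the same kubeconfig as the manual steps.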

# Create the configuration file kube-scheduler.conf

[root@k8s-master1 k8s-hxu]# vim kube-scheduler.conf

KUBE_SCHEDULER_OPTS="--address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \

--leader-elect=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

# Create the systemd unit file

[root@k8s-master1 k8s-hxu]# vim kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Start the service

[root@k8s-master1 k8s-hxu]# cp kube-scheduler*.pem /etc/kubernetes/ssl/

cp kube-scheduler.kubeconfig /etc/kubernetes/

cp kube-scheduler.conf /etc/kubernetes/

cp kube-scheduler.service /usr/lib/systemd/system/

# Sync the files to the other master nodes

rsync -vaz kube-scheduler*.pem k8s-master2:/etc/kubernetes/ssl/

rsync -vaz kube-scheduler*.pem k8s-master3:/etc/kubernetes/ssl/

rsync -vaz kube-scheduler.kubeconfig kube-scheduler.conf k8s-master2:/etc/kubernetes/

rsync -vaz kube-scheduler.kubeconfig kube-scheduler.conf k8s-master3:/etc/kubernetes/

rsync -vaz kube-scheduler.service k8s-master2:/usr/lib/systemd/system/

rsync -vaz kube-scheduler.service k8s-master3:/usr/lib/systemd/system/

[root@k8s-master1 k8s-hxu]# systemctl daemon-reload && systemctl enable kube-scheduler && systemctl start kube-scheduler && systemctl status kube-scheduler

● kube-scheduler.service - Kubernetes Scheduler

Active: active (running) since Wed

[root@k8s-master1 k8s-hxu]# netstat -ntlp|grep kube-schedule

tcp 0 0 127.0.0.1:10251 0.0.0.0:* LISTEN 35139/kube-schedule

tcp6 0 0 :::10259 :::* LISTEN 35139/kube-schedule

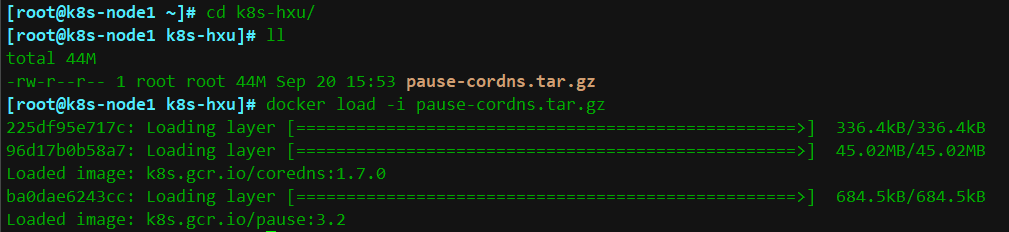

First import the offline image archive for CoreDNS

Upload pause-cordns.tar.gz to the k8s-node1 node and load it manually

[root@k8s-node1 ~]# docker load -i pause-cordns.tar.gz

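4.6 Deploy the kubelet component

kubelet: the kubelet on each node periodically calls the API Server's REST interface to report its own status, and the API Server writes that node status into etcd. The kubelet also watches Pod information through the API Server and manages the Pods on its node accordingly, creating, deleting, and updating them. In this setup the control-plane nodes do not run workload Pods, so kubelet is not installed on them. To keep certificate and kubeconfig generation in one place, the steps below are still performed on master1, and the resulting files are then synced to node1.

The following steps are performed on k8s-master1

Create kubelet-bootstrap.kubeconfig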

[root@k8s-master1 k8s-hxu]# cd /root/k8s-hxu/

[root@k8s-master1 k8s-hxu]# BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

[root@k8s-master1 k8s-hxu]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.7.10:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 k8s-hxu]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 k8s-hxu]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 k8s-hxu]# kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 k8s-hxu]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap
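The awk call at the start of this step takes the first comma-separated field of token.csv as the bootstrap token. The sketch below reproduces that on a throwaway file. The token value is made up, and the `token,user,uid,groups` layout is an assumption about how /etc/kubernetes/token.csv was created earlier.

```bash
#!/usr/bin/env bash
# Hypothetical token.csv with the usual "token,user,uid,groups" layout.
tmp=$(mktemp)
echo '0123456789abcdef0123456789abcdef,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' > "$tmp"

# Same awk call as in the step above, pointed at the sample file.
BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' "$tmp")

# An empty token would produce a kubeconfig that cannot authenticate,
# so it is worth checking before running set-credentials.
if [ -z "$BOOTSTRAP_TOKEN" ]; then
  echo "token is empty: check the token file" >&2
else
  echo "token: $BOOTSTRAP_TOKEN"
fi
rm -f "$tmp"
```

If the file held more than one line, the awk call would return one token per line, so a single-line file is assumed here.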

# Create the configuration file kubelet.json

"cgroupDriver": "systemd" must match the cgroup driver used by docker.

Replace address with the IP address of your own k8s-node1.

[root@k8s-master1 k8s-hxu]# vim kubelet.json

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "192.168.7.13",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"featureGates": {

"RotateKubeletClientCertificate": true,

"RotateKubeletServerCertificate": true

},

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.255.0.2"]

}

Add the systemd unit file

[root@k8s-master1 k8s-hxu]# vim kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \

--cert-dir=/etc/kubernetes/ssl \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--config=/etc/kubernetes/kubelet.json \

--network-plugin=cni \

--pod-infra-container-image=k8s.gcr.io/pause:3.2 \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

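# Notes:

--hostname-override: the node's display name, which must be unique in the cluster (not set in the unit file above, so the node registers under its hostname)

--network-plugin: enables CNI

--kubeconfig: a path that does not exist yet; the file is generated automatically and later used to connect to the apiserver

--bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

--config: the configuration parameter file

--cert-dir: the directory where the kubelet certificates are generated

--pod-infra-container-image: the image of the container that manages the Pod network (the pause container)

# Note: change address in kubelet.json to each node's own IP, then start the service on each worker node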

[root@k8s-node1 ~]# mkdir /etc/kubernetes/ssl -p

[root@k8s-master1 k8s-hxu]# scp kubelet-bootstrap.kubeconfig kubelet.json k8s-node1:/etc/kubernetes/

scp ca.pem k8s-node1:/etc/kubernetes/ssl/

scp kubelet.service k8s-node1:/usr/lib/systemd/system/
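Because the address field in kubelet.json has to differ on every worker node, one way to avoid hand-editing is to rewrite that field with sed before copying the file. This is a sketch under assumptions: `set_kubelet_address` is a hypothetical helper, and a tiny stand-in file replaces the real kubelet.json so the example runs anywhere.

```bash
#!/usr/bin/env bash
# set_kubelet_address TEMPLATE NODE_IP: print TEMPLATE with the value of the
# "address" key replaced by NODE_IP. All other lines pass through unchanged.
set_kubelet_address() {
  sed -E 's/("address":[[:space:]]*")[^"]*"/\1'"$2"'"/' "$1"
}

# Tiny stand-in for kubelet.json.
tmpl=$(mktemp)
printf '%s\n' '{' '"address": "192.168.7.13",' '"port": 10250' '}' > "$tmpl"

# 192.168.7.16 is k8s-node2 in the environment plan.
set_kubelet_address "$tmpl" 192.168.7.16
rm -f "$tmpl"
```

The output could be redirected to a per-node file and copied to that node as /etc/kubernetes/kubelet.json.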

# Start the kubelet service

[root@k8s-node1 ~]# mkdir /var/lib/kubelet && mkdir /var/log/kubernetes

[root@k8s-node1 ~]# systemctl daemon-reload && systemctl enable kubelet && systemctl start kubelet && systemctl status kubelet

Active: active (running) since

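After confirming that the kubelet service started successfully, go to k8s-master1 and approve the bootstrap request.

Run the following command to see that the worker node has sent a CSR:

[root@k8s-master1 k8s-hxu]# kubectl get csr

The output should list one kubelet request with the condition Pending.

Approve the client's request on the server side: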

[root@k8s-master1 k8s-hxu]# kubectl certificate approve node-csr-0etHx5MGVH6iIP1zR67lnbUSVpMsWmZ7cLuAspuumAU

[root@k8s-master1 k8s-hxu]# kubectl get csr

[root@k8s-master1 k8s-hxu]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-node1 NotReady <none> 40s v1.20.7

# Note: the STATUS is NotReady because the network plugin has not been installed yet
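When several worker nodes join at once, copying each CSR name by hand becomes tedious. The sketch below filters the names of Pending requests out of `kubectl get csr` style text. `pending_csrs` is a hypothetical helper, and the sample rows are illustrative rather than real cluster output.

```bash
#!/usr/bin/env bash
# Read `kubectl get csr` output on stdin and print the names whose last
# column (CONDITION) is exactly "Pending". The header row is skipped.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Sample text standing in for real output; names and ages are made up.
sample='NAME           AGE   SIGNERNAME                                    REQUESTOR           CONDITION
node-csr-aaa   10s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending
node-csr-bbb   5m    kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued'

printf '%s\n' "$sample" | pending_csrs

# On a real cluster this could feed the approve command, for example:
#   kubectl get csr | pending_csrs | xargs kubectl certificate approve
```

Approving requests in bulk skips the manual review of who is asking, so this is better suited to a lab environment like this one.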

The generated files can now be seen in the /etc/kubernetes/ directory on node1

[root@k8s-node1 k8s-hxu]# cd /etc/kubernetes/

[root@k8s-node1 kubernetes]# ll

-rw------- 1 root root 2.1K Sep 20 16:30 kubelet-bootstrap.kubeconfig

-rw-r--r-- 1 root root 801 Sep 20 16:30 kubelet.json

-rw------- 1 root root 2.3K Sep 20 16:42 kubelet.kubeconfig

drwxr-xr-x 2 root root 138 Sep 20 16:42 ssl

[root@k8s-node1 kubernetes]# ll ssl/

-rw-r--r-- 1 root root 1.4K Sep 20 16:30 ca.pem

-rw------- 1 root root 1.2K Sep 20 16:42 kubelet-client-2021-09-20-16-42-19.pem

lrwxrwxrwx 1 root root 58 Sep 20 16:42 kubelet-client-current.pem -> /etc/kubernetes/ssl/kubelet-client-2021-09-20-16-42-19.pem

-rw-r--r-- 1 root root 2.3K Sep 20 16:32 kubelet.crt

-rw------- 1 root root 1.7K Sep 20 16:32 kubelet.key

4.7 Deploy the kube-proxy component

Create the CSR

[root@k8s-master1 k8s-hxu]# vim kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "bj",

"O": "k8s",

"OU": "system"

}

]

}

Generate the certificate

[root@k8s-master1 k8s-hxu]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# Create the kubeconfig file

[root@k8s-master1 k8s-hxu]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.7.10:6443 --kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 k8s-hxu]# kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

User "kube-proxy" set.

[root@k8s-master1 k8s-hxu]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

Context "default" created.

[root@k8s-master1 k8s-hxu]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Switched to context "default".

Create the kube-proxy configuration file

[root@k8s-master1 k8s-hxu]# vim kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 192.168.7.13 # IP of node1

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 10.0.0.0/16 # Pod CIDR; must match --cluster-cidr of kube-controller-manager, not the host network

healthzBindAddress: 192.168.7.13:10256 # IP of node1

kind: KubeProxyConfiguration

metricsBindAddress: 192.168.7.13:10249 # IP of node1

mode: "ipvs"
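Three fields in kube-proxy.yaml carry the node's own IP, so each worker needs its own copy. A minimal sketch of generating it from a heredoc follows; `render_kube_proxy_yaml` is a hypothetical helper. kube-proxy documents clusterCIDR as the CIDR range of Pods, so the value is passed in explicitly, and the call uses 10.0.0.0/16, the `--cluster-cidr` value given to kube-controller-manager in this guide.

```bash
#!/usr/bin/env bash
# render_kube_proxy_yaml NODE_IP POD_CIDR
# Prints a KubeProxyConfiguration with the node-specific fields filled in.
render_kube_proxy_yaml() {
  local ip=$1 cidr=$2
  cat <<EOF
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
bindAddress: $ip
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: $cidr
healthzBindAddress: $ip:10256
metricsBindAddress: $ip:10249
mode: "ipvs"
EOF
}

# 192.168.7.16 is k8s-node2 in the environment plan.
render_kube_proxy_yaml 192.168.7.16 10.0.0.0/16
```

Note that `kubeconfig` is nested under `clientConnection`, so it must stay indented in the generated file.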

Create the systemd unit file

[root@k8s-master1 k8s-hxu]# vim kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

[root@k8s-master1 k8s-hxu]# scp kube-proxy.kubeconfig kube-proxy.yaml k8s-node1:/etc/kubernetes/

[root@k8s-master1 k8s-hxu]# scp kube-proxy.service k8s-node1:/usr/lib/systemd/system/

# Start the service

[root@k8s-node1 ~]# mkdir -p /var/lib/kube-proxy

# systemctl daemon-reload

# systemctl enable kube-proxy

# systemctl restart kube-proxy

# systemctl status kube-proxy

Active: active (running) since Mon 2021-09-20 17:05:41 CST; 49ms ago

4.8 Deploy the calico component

Load the offline image archives

# Upload cni.tar.gz and node.tar.gz to the k8s-node1 node and load them manually

[root@k8s-node1 ~]# docker load -i cni.tar.gz

[root@k8s-node1 ~]# docker load -i node.tar.gz

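Create the YAML file on master1. In calico.yaml, edit the following entry (around lines 166-167 of the file):

- name: IP_AUTODETECTION_METHOD

  value: "can-reach=192.168.7.13" # the IP of any worker node in the cluster will do

Apply the manifest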

[root@k8s-master1 k8s-hxu]# kubectl apply -f calico.yaml

[root@k8s-master1 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

calico-node-xk7n4 1/1 Running 0 13s

The node status has now changed from NotReady to Ready

[root@k8s-master1 k8s-hxu]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready <none> 32m v1.20.7

4.9 Deploy the CoreDNS component

The pause-cordns.tar.gz package was already uploaded earlier, so here we only need to create the YAML file and apply it

[root@k8s-master1 ~]# kubectl apply -f coredns.yaml

[root@k8s-master1 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

calico-node-xk7n4 1/1 Running 0 6m6s

coredns-7bf4bd64bd-dt8dq 1/1 Running 0 51s

[root@k8s-master1 ~]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.255.0.2 <none> 53/UDP,53/TCP,9153/TCP 12m

4. Check the cluster status

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready <none> 38m v1.20.7

5. Test the k8s cluster by deploying a Tomcat service

# Upload tomcat.tar.gz and busybox-1-28.tar.gz to k8s-node1 and load them manually

[root@k8s-node1 ~]# docker load -i tomcat.tar.gz

[root@k8s-node1 ~]# docker load -i busybox-1-28.tar.gz

[root@k8s-master1 ~]# kubectl apply -f tomcat.yaml

[root@k8s-master1 ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

demo-pod 2/2 Running 0 11m

[root@k8s-master1 ~]# kubectl apply -f tomcat-service.yaml

[root@k8s-master1 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.255.0.1 <none> 443/TCP 158m

tomcat NodePort 10.255.227.179 <none> 8080:30080/TCP 19m

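Open the k8s-node1 node's IP on port 30080 in a browser (192.168.7.13:30080 in this environment) to reach the Tomcat service

6. Verify that CoreDNS works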

[root@k8s-master1 ~]# kubectl run busybox --image busybox:1.28 --restart=Never --rm -it busybox -- sh

/ # ping www.baidu.com

PING www.baidu.com (39.156.66.18): 56 data bytes

64 bytes from 39.156.66.18: seq=0 ttl=127 time=39.3 ms

# The output above shows that the Pod can reach the external network

/ # nslookup kubernetes.default.svc.cluster.local

Server: 10.255.0.2

Address: 10.255.0.2:53

Name: kubernetes.default.svc.cluster.local

Address: 10.255.0.1

/ # nslookup tomcat.default.svc.cluster.local

Server: 10.255.0.2

Address 1: 10.255.0.2 kube-dns.kube-system.svc.cluster.local

Name: tomcat.default.svc.cluster.local

Address 1: 10.255.227.179 tomcat.default.svc.cluster.local

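# Note:

Use busybox 1.28 specifically rather than the latest image. With the latest image, nslookup fails to resolve the DNS name and IP and reports the following error: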

/ # nslookup kubernetes.default.svc.cluster.local

Server: 10.255.0.2

Address: 10.255.0.2:53

*** Can't find kubernetes.default.svc.cluster.local: No answer

*** Can't find kubernetes.default.svc.cluster.local: No answer

10.255.0.2 is the ClusterIP of CoreDNS, which shows that CoreDNS is configured correctly.

Names of in-cluster Services are resolved through CoreDNS.
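The names queried with nslookup above follow the pattern `<service>.<namespace>.svc.<cluster domain>`. As a small illustration, the helper below builds such names. `svc_fqdn` is a made-up function, and `cluster.local` is the clusterDomain configured in kubelet.json.

```bash
#!/usr/bin/env bash
# svc_fqdn SERVICE [NAMESPACE] [CLUSTER_DOMAIN]
# Namespace defaults to "default", cluster domain to "cluster.local".
svc_fqdn() {
  local svc=$1 ns=${2:-default} domain=${3:-cluster.local}
  echo "$svc.$ns.svc.$domain"
}

svc_fqdn kubernetes            # kubernetes.default.svc.cluster.local
svc_fqdn tomcat default        # tomcat.default.svc.cluster.local
svc_fqdn kube-dns kube-system  # kube-dns.kube-system.svc.cluster.local
```

Inside a Pod, the resulting name can be passed straight to nslookup, as in the session above.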
