HBase Client JAVA API

旧 的 HBase 接口逻辑与传统 JDBC 方式很不相同,新的接口与传统 JDBC 的逻辑更加相像,具有更加清晰的 Connection 管理方式。

同时,在旧的接口中,客户端何时将 Put 写到服务端也需要设置,一个 Put 马上写到服务端,还是攒到一批写到服务端,新用户往往对此不太清楚。

在新的接口中,引入了 BufferedMutator,可以提供更加高效清晰的写操作。

HBase 0.98 与 HBase 1.0 接口名称对比

举一个例子,旧的 API 写入操作的代码:

新的 API 写入操作的代码:

可以看到,在操作前,首先建立连接,然后拿到一个对应表的句柄,之后再进行一系列操作。以上两个是同步写操作。

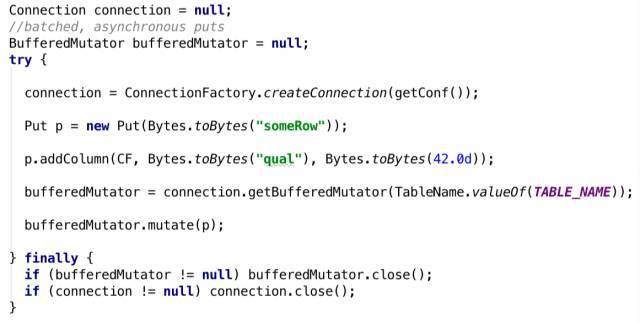

下面看一下批量异步写入接口:

org.apache.hadoop.hbase.client.BufferedMutator主要用来对HBase的单个表进行操作。它和Put类的作用差不多,但是主要用来实现批量的异步写操作。

BufferedMutator替换了HTable的setAutoFlush(false)的作用。

可以从Connection的实例中获取BufferedMutator的实例。在使用完成后需要调用close()方法关闭连接。对BufferedMutator进行配置需要通过BufferedMutatorParams完成。

MapReduce Job的是BufferedMutator使用的典型场景。MapReduce作业需要批量写入,但是无法找到恰当的点执行flush。

BufferedMutator接收MapReduce作业发送来的Put数据后,会根据某些因素(比如接收的Put数据的总量)启发式地执行Batch Put操作,且会异步的提交Batch Put请求,这样MapReduce作业的执行也不会被打断。

BufferedMutator也可以用在一些特殊的情况上。MapReduce作业的每个线程将会拥有一个独立的BufferedMutator对象。

一个独立的BufferedMutator也可以用在大容量的在线系统上来执行批量Put操作,但是这时需要注意一些极端情况比如JVM异常或机器故障,此时有可能造成数据丢失。

官方源码路径:/hbase-2.0.4/hbase-examples/src/main/java/org/apache/hadoop/hbase/client/example/BufferedMutatorExample.java

/** * * Licensed to the Apache Software Foundation (ASF) under one * or more contributor license agreements. See the NOTICE file * distributed with this work for additional information * regarding copyright ownership. The ASF licenses this file * to you under the Apache License, Version 2.0 (the * "License"); you may not use this file except in compliance * with the License. You may obtain a copy of the License at * * http://www.apache.org/licenses/LICENSE-2.0 * * Unless required by applicable law or agreed to in writing, software * distributed under the License is distributed on an "AS IS" BASIS, * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. * See the License for the specific language governing permissions and * limitations under the License. */ package org.apache.hadoop.hbase.client.example; import java.io.IOException; import java.util.ArrayList; import java.util.List; import java.util.concurrent.Callable; import java.util.concurrent.ExecutionException; import java.util.concurrent.ExecutorService; import java.util.concurrent.Executors; import java.util.concurrent.Future; import java.util.concurrent.TimeUnit; import java.util.concurrent.TimeoutException; import org.apache.hadoop.conf.Configured; import org.apache.hadoop.hbase.TableName; import org.apache.hadoop.hbase.client.BufferedMutator; import org.apache.hadoop.hbase.client.BufferedMutatorParams; import org.apache.hadoop.hbase.client.Connection; import org.apache.hadoop.hbase.client.ConnectionFactory; import org.apache.hadoop.hbase.client.Put; import org.apache.hadoop.hbase.client.RetriesExhaustedWithDetailsException; import org.apache.hadoop.hbase.util.Bytes; import org.apache.hadoop.util.Tool; import org.apache.hadoop.util.ToolRunner; import org.apache.yetus.audience.InterfaceAudience; import org.slf4j.Logger; import org.slf4j.LoggerFactory; /** * An example of using the {@link BufferedMutator} interface. */ @InterfaceAudience.Private public class BufferedMutatorExample extends Configured implements Tool { private static final Logger LOG = LoggerFactory.getLogger(BufferedMutatorExample.class); private static final int POOL_SIZE = 10; private static final int TASK_COUNT = 100; private static final TableName TABLE = TableName.valueOf("foo"); private static final byte[] FAMILY = Bytes.toBytes("f"); @Override public int run(String[] args) throws InterruptedException, ExecutionException, TimeoutException { /** a callback invoked when an asynchronous write fails. */ final BufferedMutator.ExceptionListener listener = new BufferedMutator.ExceptionListener() { @Override public void onException(RetriesExhaustedWithDetailsException e, BufferedMutator mutator) { for (int i = 0; i < e.getNumExceptions(); i++) { LOG.info("Failed to sent put " + e.getRow(i) + "."); } } }; BufferedMutatorParams params = new BufferedMutatorParams(TABLE) .listener(listener); // // step 1: create a single Connection and a BufferedMutator, shared by all worker threads. // try (final Connection conn = ConnectionFactory.createConnection(getConf()); final BufferedMutator mutator = conn.getBufferedMutator(params)) { /** worker pool that operates on BufferedTable instances */ final ExecutorService workerPool = Executors.newFixedThreadPool(POOL_SIZE); List<Future<Void>> futures = new ArrayList<>(TASK_COUNT); for (int i = 0; i < TASK_COUNT; i++) { futures.add(workerPool.submit(new Callable<Void>() { @Override public Void call() throws Exception { // // step 2: each worker sends edits to the shared BufferedMutator instance. They all use // the same backing buffer, call-back "listener", and RPC executor pool. // Put p = new Put(Bytes.toBytes("someRow")); p.addColumn(FAMILY, Bytes.toBytes("someQualifier"), Bytes.toBytes("some value")); mutator.mutate(p); // do work... maybe you want to call mutator.flush() after many edits to ensure any of // this worker's edits are sent before exiting the Callable return null; } })); } // // step 3: clean up the worker pool, shut down. // for (Future<Void> f : futures) { f.get(5, TimeUnit.MINUTES); } workerPool.shutdown(); } catch (IOException e) { // exception while creating/destroying Connection or BufferedMutator LOG.info("exception while creating/destroying Connection or BufferedMutator", e); } // BufferedMutator.close() ensures all work is flushed. Could be the custom listener is // invoked from here. return 0; } public static void main(String[] args) throws Exception { ToolRunner.run(new BufferedMutatorExample(), args); } }

浙公网安备 33010602011771号

浙公网安备 33010602011771号