TensorFlow: logistic regression

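    # Written for TensorFlow 1.x: tf.placeholder, tf.Session and the
    # tutorials.mnist helper are not available at the top level in TensorFlow 2.x.
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    # Load MNIST into tmp/data (downloaded if missing), with one-hot labels
    mnist = input_data.read_data_sets("tmp/data", one_hot=True)

    learning_rate = 0.01
    training_epochs = 25
    batch_size = 100
    display_step = 1

    # Placeholders x and y hold the inputs. Each 28x28 image is flattened into a
    # 784-long vector, so x is a 2-D float tensor of shape [None, 784]; None means
    # the batch size can be any value. y holds the one-hot labels for the 10 digits.
    x = tf.placeholder(tf.float32, [None, 784])
    y = tf.placeholder(tf.float32, [None, 10])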
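To make the `[None, 784]` and `[None, 10]` shapes concrete, here is a small pure-Python sketch, assuming each MNIST image is 28x28 and is flattened row by row. `flatten` and `one_hot` are hypothetical helpers written for illustration, not part of `input_data`. One image becomes one 784-long row of `x`, a batch is simply a list of such rows (the `None` dimension), and each label becomes a 10-long one-hot row of `y`.

```python
# Hypothetical helpers (not part of the input_data API) showing the layout of x and y.


def flatten(image):
    """Flatten a 2-D image (a list of rows) into one row-major list of pixels."""
    return [pixel for row in image for pixel in row]


def one_hot(label, num_classes=10):
    """Encode an integer class label as a one-hot list of floats."""
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec


image = [[0.0] * 28 for _ in range(28)]  # a blank 28x28 image
image[0][1] = 1.0                         # light up the pixel at row 0, column 1
x_row = flatten(image)
batch = [x_row, x_row, x_row]             # a batch of 3 rows: shape [3, 784]

print(len(batch), len(x_row), x_row.index(1.0))  # 3 784 1
print(one_hot(3))  # [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
```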

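    # Variables: the model's weights and bias, initialized to zeros
    w = tf.Variable(tf.zeros([784, 10]))
    b = tf.Variable(tf.zeros([10]))

    # Apply softmax to x*w + b to turn the 10 scores into class probabilities
    pred = tf.nn.softmax(tf.matmul(x, w) + b)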
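To show what `pred` computes, here is a pure-Python sketch of softmax(x·w + b) for a single example. `softmax` and `predict` are illustrative helpers, not TensorFlow functions. Subtracting the maximum score before exponentiating is a standard stability trick that does not change the result. With all-zero weights and bias, as produced by `tf.zeros`, every one of the 10 classes starts with probability 0.1.

```python
import math


def softmax(scores):
    """Softmax with the max subtracted first, which avoids overflow in exp()."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(x_row, w, b):
    """Compute softmax(x*w + b) for one example.

    x_row: list of n_in floats; w: n_in x n_out nested list; b: list of n_out floats.
    """
    n_in, n_out = len(x_row), len(b)
    scores = [sum(x_row[i] * w[i][j] for i in range(n_in)) + b[j] for j in range(n_out)]
    return softmax(scores)


# Zero-initialized w and b (as with tf.zeros) give every digit probability 1/10.
w0 = [[0.0] * 10 for _ in range(784)]
b0 = [0.0] * 10
uniform = predict([0.5] * 784, w0, b0)

# A toy 2-input, 3-class model: the scores are [1, 2, 0], so class 1 is most likely.
probs = predict([1.0, 2.0], [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]], [0.0, 0.0, 0.0])
print(probs.index(max(probs)))  # 1
```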

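    # Cross-entropy loss: reduce_sum adds -y*log(pred) over the 10 classes of each
    # example (axis 1), and reduce_mean averages those sums over the batch
    cost = tf.reduce_mean(-tf.reduce_sum(y * tf.log(pred), axis=1))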
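The cost line can be mirrored in plain Python as follows, assuming one-hot labels. `cross_entropy` is a hypothetical helper. The inner sum corresponds to `reduce_sum` over the class axis, and the outer average corresponds to `reduce_mean` over the batch.

```python
import math


def cross_entropy(labels, probs):
    """Mean over the batch of -sum_j y_j * log(p_j).

    labels and probs are lists of rows, one row per example and one column per class.
    The inner sum plays the role of reduce_sum(..., axis=1) and the outer average the
    role of reduce_mean. Terms with y_j == 0 contribute nothing, so they are skipped,
    which also avoids evaluating log(0).
    """
    per_example = [
        -sum(y * math.log(p) for y, p in zip(y_row, p_row) if y > 0)
        for y_row, p_row in zip(labels, probs)
    ]
    return sum(per_example) / len(per_example)


labels = [[0.0, 1.0, 0.0], [1.0, 0.0, 0.0]]
probs = [[0.2, 0.5, 0.3], [0.25, 0.25, 0.5]]
loss = cross_entropy(labels, probs)  # (-log 0.5 - log 0.25) / 2 = 1.5 * log 2
```

Only the probability assigned to the true class matters for each example. This is why pushing that probability toward 1 is what drives the loss down.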

    # minimize() adds ops to the graph that compute the gradients of cost
    # and apply the gradient-descent updates to w and b
    optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)
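`minimize()` derives the gradients automatically. For this particular model, the gradients have a simple closed form. With softmax followed by mean cross-entropy, the gradient of the loss with respect to each score is (p_j - y_j)/N. The sketch below applies that formula by hand in pure Python on a made-up two-example problem. All function names are hypothetical. The sketch only illustrates one plain gradient-descent update and does not show how TensorFlow implements it internally.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def forward(x_row, w, b):
    """softmax(x*w + b) for one example; w is n_in x n_out."""
    return softmax([sum(x_row[i] * w[i][j] for i in range(len(w))) + b[j]
                    for j in range(len(b))])


def mean_cross_entropy(xs, ys, w, b):
    total = 0.0
    for x_row, y_row in zip(xs, ys):
        p = forward(x_row, w, b)
        total -= sum(y * math.log(pj) for y, pj in zip(y_row, p) if y > 0)
    return total / len(xs)


def gradient_descent_step(xs, ys, w, b, learning_rate):
    """One update w -= lr * dL/dw, b -= lr * dL/db for the mean cross-entropy L.

    For softmax + cross-entropy, dL/dscore_j = (p_j - y_j) / N for each example, so
    dL/dw[i][j] sums x_i * (p_j - y_j) / N and dL/db[j] sums (p_j - y_j) / N.
    All gradients are computed before any parameter changes; w and b are updated in place.
    """
    n = len(xs)
    grad_w = [[0.0] * len(b) for _ in range(len(w))]
    grad_b = [0.0] * len(b)
    for x_row, y_row in zip(xs, ys):
        p = forward(x_row, w, b)
        for j in range(len(b)):
            delta = (p[j] - y_row[j]) / n
            grad_b[j] += delta
            for i in range(len(w)):
                grad_w[i][j] += x_row[i] * delta
    for i in range(len(w)):
        for j in range(len(b)):
            w[i][j] -= learning_rate * grad_w[i][j]
    for j in range(len(b)):
        b[j] -= learning_rate * grad_b[j]


# Toy problem: 2 inputs, 2 classes, starting from zeros like tf.zeros.
xs = [[1.0, 0.0], [0.0, 1.0]]
ys = [[1.0, 0.0], [0.0, 1.0]]
w = [[0.0, 0.0], [0.0, 0.0]]
b = [0.0, 0.0]
before = mean_cross_entropy(xs, ys, w, b)  # log 2 at the uniform starting point
for _ in range(100):
    gradient_descent_step(xs, ys, w, b, learning_rate=0.5)
after = mean_cross_entropy(xs, ys, w, b)
```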

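    # global_variables_initializer() replaces the deprecated initialize_all_variables()
    init = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init)

        for epoch in range(training_epochs):
            avg_cost = 0.0
            total_batch = int(mnist.train.num_examples / batch_size)
            for i in range(total_batch):
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)
                # Run one gradient-descent step and fetch this batch's cost
                _, c = sess.run([optimizer, cost],
                                feed_dict={x: batch_xs, y: batch_ys})
                avg_cost += c / total_batch
            if (epoch + 1) % display_step == 0:
                print("Epoch:", epoch + 1, "cost =", avg_cost)

        # A prediction is correct when the most probable class matches the label
        prediction = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
        accuracy = tf.reduce_mean(tf.cast(prediction, tf.float32))
        # eval() needs a default session, so it must stay inside the with block
        print("Accuracy:", accuracy.eval(
            feed_dict={x: mnist.test.images, y: mnist.test.labels}))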
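The accuracy computation compares two indices for each example: the index of the largest predicted probability and the index of the 1 in the one-hot label. It then averages the matches. Here is a pure-Python sketch with made-up probabilities (`argmax` and `accuracy` are illustrative helpers):

```python
def argmax(row):
    """Index of the largest entry (the first one if there is a tie)."""
    return max(range(len(row)), key=lambda j: row[j])


def accuracy(probs, labels):
    """Fraction of examples whose predicted class matches the one-hot label."""
    correct = [argmax(p) == argmax(y) for p, y in zip(probs, labels)]
    return sum(correct) / len(correct)


probs = [[0.1, 0.7, 0.2], [0.6, 0.3, 0.1], [0.2, 0.2, 0.6]]
labels = [[0, 1, 0], [0, 1, 0], [0, 0, 1]]
print(accuracy(probs, labels))  # 2 of 3 correct
```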

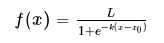

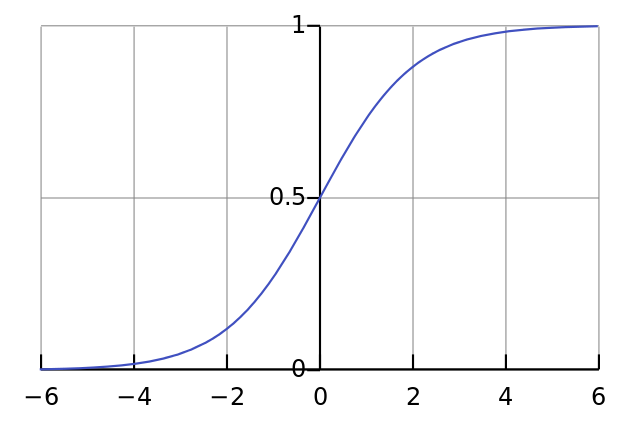

Logistic function:

Used for binary (two-class) classification problems.

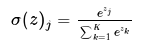
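The logistic (sigmoid) function σ(z) = 1 / (1 + e^(-z)) maps any real score into (0, 1). In binary classification, σ(w·x + b) can therefore be read as the probability of the positive class. Below is a minimal pure-Python sketch, assuming a 0.5 decision threshold. The helper names and the weights in the example are made up.

```python
import math


def sigmoid(z):
    """Logistic function 1 / (1 + e^(-z)), arranged so exp() never overflows."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_binary(x_row, w, b, threshold=0.5):
    """Binary logistic regression: P(y = 1 | x) = sigmoid(w . x + b)."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x_row)) + b)
    return p, int(p >= threshold)


p, label = predict_binary([1.0, 2.0], w=[0.5, -1.0], b=0.0)  # z = -1.5, so label 0
```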

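Softmax function:

Squashes a K-dimensional vector of real scores into another K-dimensional vector whose entries lie in (0, 1) and sum to 1. This makes it suitable for multi-class classification. The logistic function is the special case of softmax with K = 2.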
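The claim that logistic is the two-class special case of softmax can be checked directly from the definitions, writing σ for the logistic function:

$$
\mathrm{softmax}(\mathbf{z})_j = \frac{e^{z_j}}{\sum_{k=1}^{K} e^{z_k}}, \qquad j = 1, \dots, K
$$

For K = 2, divide the numerator and denominator by e^{z_1}:

$$
\mathrm{softmax}(\mathbf{z})_1 = \frac{e^{z_1}}{e^{z_1} + e^{z_2}} = \frac{1}{1 + e^{-(z_1 - z_2)}} = \sigma(z_1 - z_2)
$$

A two-class softmax model therefore depends only on the score difference z_1 - z_2. A single-output logistic model computes exactly this quantity.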