A pitfall when running word count with Hadoop on a Mac


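Word count captures the classic MapReduce idea and is the hello world of distributed computing. I was, however, lucky enough to run into a Mac-specific problem, Mkdirs failed to create, so I am writing it down here.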

1. Code

- WCMapper.java

package wordcount;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.util.StringUtils;

import java.io.IOException;

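/**

* Of the four type parameters, the first two are the mapper's input types (the last two are its output types):

* KEYIN is the type of the input key, VALUEIN is the type of the input value.

* Map and reduce both take their input and produce their output as key-value pairs.

* By default, in the input the framework passes to our mapper,

* the key is the starting byte offset of the current line in the input file, and the value is the content of that line.

*

* Long -> LongWritable, which implements Hadoop's own serialization interface; it is more compact and cheaper to transfer

* String -> Text

*/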

public class WCMapper extends Mapper<LongWritable, Text, Text, LongWritable>{

// The MapReduce framework calls this method once for each line of input

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// The business logic goes in this method; the key-value pair to process has already been passed in

// Convert the content of this line to a String

String line = value.toString();

// Split the line into words

String[] words = StringUtils.split(line, ' ');

// Emit the results through the context

for (String word: words){

context.write(new Text(word), new LongWritable(1));

}

}

}

- WCReducer.java

package wordcount;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WCReducer extends Reducer<Text, LongWritable, Text, LongWritable>{

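// After the map phase finishes, the framework buffers all the key-value pairs,

// groups them by key, and then passes in one group at a time as <key, values{}>,

// calling reduce once per group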

@Override

protected void reduce(Text key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException {

long count = 0;

// Iterate over values and sum them up

for (LongWritable value: values){

count += value.get();

}

// Emit the count for this word

context.write(key, new LongWritable(count));

}

}
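To make the map, group, reduce flow described in the comments above concrete, here is a minimal plain-Java sketch that imitates it in memory. It does not use any Hadoop classes; the class name `WordCountSketch` and its helper methods are made up for illustration, and skipping empty tokens is a choice of this sketch rather than something the mapper above does.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory imitation of map -> group -> reduce; no Hadoop involved.
final class WordCountSketch {

    // "map" step: called once per line, emits one (word, 1) pair per token.
    static List<Map.Entry<String, Long>> map(String line) {
        List<Map.Entry<String, Long>> pairs = new ArrayList<>();
        for (String word : line.split(" ")) {
            if (!word.isEmpty()) { // sketch-only choice: ignore empty tokens
                pairs.add(new AbstractMap.SimpleEntry<>(word, 1L));
            }
        }
        return pairs;
    }

    // "group" step: collect all values emitted for the same key, sorted by key.
    static Map<String, List<Long>> group(List<Map.Entry<String, Long>> pairs) {
        Map<String, List<Long>> grouped = new TreeMap<>();
        for (Map.Entry<String, Long> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // "reduce" step: called once per key, sums that key's values.
    static long reduce(String key, Iterable<Long> values) {
        long count = 0;
        for (long value : values) {
            count += value;
        }
        return count;
    }

    // Runs the three steps over all lines and returns word -> count.
    static Map<String, Long> run(List<String> lines) {
        List<Map.Entry<String, Long>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Long> counts = new TreeMap<>();
        for (Map.Entry<String, List<Long>> group : group(pairs).entrySet()) {
            counts.put(group.getKey(), reduce(group.getKey(), group.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // prints {hadoop=1, hello=2, world=1}
        System.out.println(run(Arrays.asList("hello world", "hello hadoop")));
    }
}
```

In the real job the framework does the grouping across machines; the sketch only shows why the reducer sees each word once together with all of its 1s.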

- WCRunner.java (entry point)

package wordcount;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

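/**

* Describes one specific job:

* for example, which class the job uses for its map logic and which for its reduce logic,

* the path where the job's input data lives,

* and the path where the job's output should be written

*/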

public class WCRunner {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

// Set the jar that the whole job needs

// WCRunner is used to locate that jar, which also contains WCMapper and WCReducer

job.setJarByClass(WCRunner.class);

// The mapper and reducer classes this job uses

job.setMapperClass(WCMapper.class);

job.setReducerClass(WCReducer.class);

// Output key-value types of the reducer

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(LongWritable.class);

// Output key-value types of the mapper

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(LongWritable.class);

// Where the input data is stored

FileInputFormat.setInputPaths(job,new Path("/wc/input/"));

// Where the output of the job is written

FileOutputFormat.setOutputPath(job, new Path("/wc/output/"));

// Submit the job, wait for it to finish, and exit with a non-zero status if it failed

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

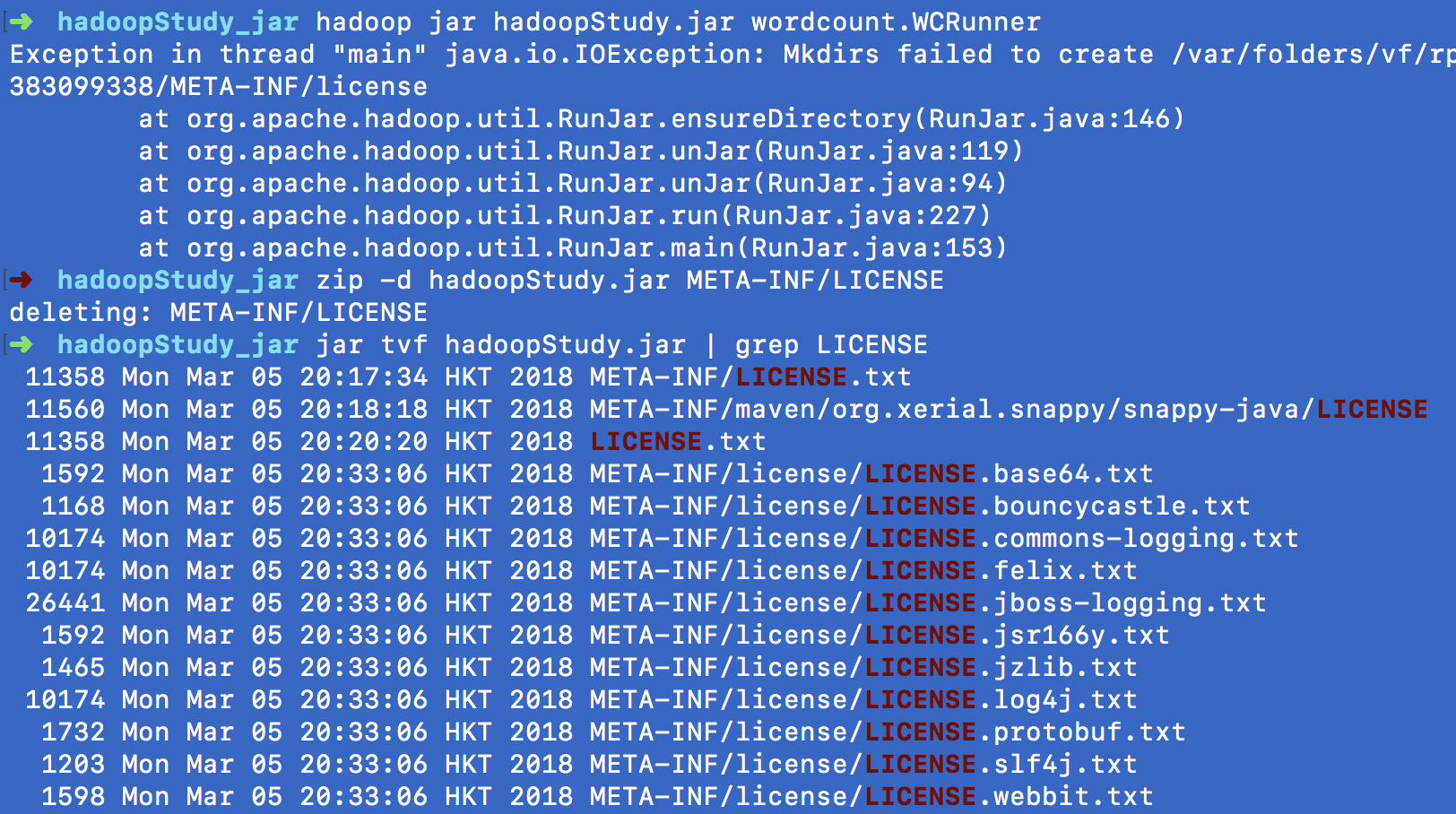

2. Reproducing the problem

After writing the code I packaged it as a jar (I did this directly through the IDEA GUI) and then submitted it to Hadoop to run:

hadoop jar hadoopStudy.jar wordcount.WCRunner

Instead of producing results as the official site and many other tutorials describe, it failed with an error:

Exception in thread "main" java.io.IOException: Mkdirs failed to create /var/folders/vf/rplr8k812fj018q5lxcb5k940000gn/T/hadoop-unjar1598612687383099338/META-INF/license

at org.apache.hadoop.util.RunJar.ensureDirectory(RunJar.java:146)

at org.apache.hadoop.util.RunJar.unJar(RunJar.java:119)

at org.apache.hadoop.util.RunJar.unJar(RunJar.java:94)

at org.apache.hadoop.util.RunJar.run(RunJar.java:227)

at org.apache.hadoop.util.RunJar.main(RunJar.java:153)

After struggling with it for quite a while, I found that the cause is Mac-specific and found the explanation on Stack Overflow:

The issue is that a /tmp/hadoop-xxx/xxx/LICENSE file and a

/tmp/hadoop-xxx/xxx/license directory are being created on a

case-insensitive file system when unjarring the mahout jobs.
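To check whether a jar is affected before running it, the entry names can be compared while ignoring case. The sketch below is one way to do that with the JDK's `java.util.zip` classes; the class name `JarCaseCollisions` and its methods are hypothetical, and it assumes the clash is between a file and a directory (or two entries) whose paths differ only in case, as in the quoted explanation.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.HashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;
import java.util.zip.ZipEntry;
import java.util.zip.ZipFile;

// Hypothetical helper: reports paths in a jar that differ only by letter case.
final class JarCaseCollisions {

    // Checks every entry and every parent directory it implies.
    static List<String> findCollisions(List<String> entryNames) {
        Map<String, String> firstSpelling = new HashMap<>();
        Set<String> collisions = new LinkedHashSet<>();
        for (String name : entryNames) {
            // Directory entries end with "/"; drop it so files and directories compare equal.
            String path = name.endsWith("/") ? name.substring(0, name.length() - 1) : name;
            for (int end = path.indexOf('/'); ; end = path.indexOf('/', end + 1)) {
                String prefix = end < 0 ? path : path.substring(0, end);
                String previous = firstSpelling.putIfAbsent(prefix.toLowerCase(Locale.ROOT), prefix);
                if (previous != null && !previous.equals(prefix)) {
                    collisions.add(previous + " <-> " + prefix);
                }
                if (end < 0) {
                    break; // the full path has been checked
                }
            }
        }
        return new ArrayList<>(collisions);
    }

    // Reads all entry names from a jar (a jar is a zip file).
    static List<String> entryNames(String jarPath) throws IOException {
        List<String> names = new ArrayList<>();
        try (ZipFile zip = new ZipFile(jarPath)) {
            Enumeration<? extends ZipEntry> entries = zip.entries();
            while (entries.hasMoreElements()) {
                names.add(entries.nextElement().getName());
            }
        }
        return names;
    }

    public static void main(String[] args) throws IOException {
        // Usage (assumed file name): java JarCaseCollisions.java hadoopStudy.jar
        String jar = args.length > 0 ? args[0] : "hadoopStudy.jar";
        for (String collision : findCollisions(entryNames(jar))) {
            System.out.println(collision);
        }
    }
}
```

Any pair it prints is a candidate for the Mkdirs failure on a case-insensitive file system, since both names would map to the same path when the jar is unpacked.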

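Delete META-INF/LICENSE from the original jar so the two names no longer clash, and the problem is solved. The first command removes the entry in place; the second lists any entries in the jar that still contain LICENSE: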

zip -d hadoopStudy.jar META-INF/LICENSE

jar tvf hadoopStudy.jar | grep LICENSE
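If the `zip` command is not available, the same deletion can be sketched in Java with the JDK's built-in zip file system. This is only an illustration: the class name `JarEntryRemover` is made up, and the default jar name is simply the one used in this post.

```java
import java.io.IOException;
import java.nio.file.FileSystem;
import java.nio.file.FileSystems;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper: removes a single entry from a jar in place.
final class JarEntryRemover {

    // Returns true if the entry existed and was deleted, false if it was not there.
    static boolean removeEntry(Path jar, String entryName) throws IOException {
        // The cast selects the (Path, ClassLoader) overload, which newer JDKs
        // would otherwise consider ambiguous for a bare null.
        try (FileSystem zipFs = FileSystems.newFileSystem(jar, (ClassLoader) null)) {
            return Files.deleteIfExists(zipFs.getPath(entryName));
        } // closing the file system writes the change back to the jar
    }

    public static void main(String[] args) throws IOException {
        Path jar = Paths.get(args.length > 0 ? args[0] : "hadoopStudy.jar");
        System.out.println(removeEntry(jar, "META-INF/LICENSE") ? "removed" : "not found");
    }
}
```

Entry names are matched case-sensitively inside the jar, so only the LICENSE file is removed and a lower-case license directory, if present, is left alone.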

Then run the new jar on Hadoop again:

hadoop jar hadoopStudy.jar wordcount.WCRunner

bingo!

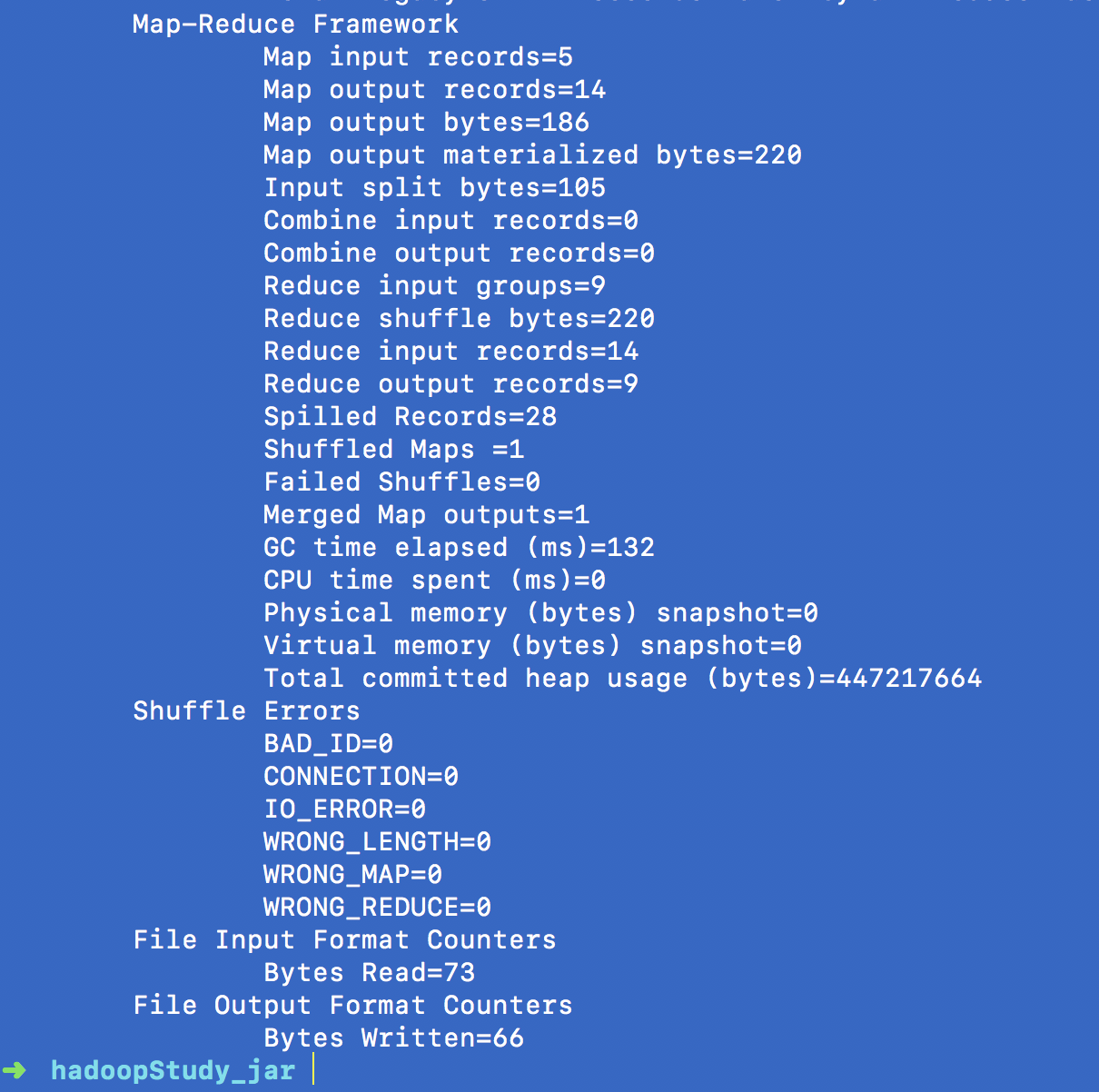

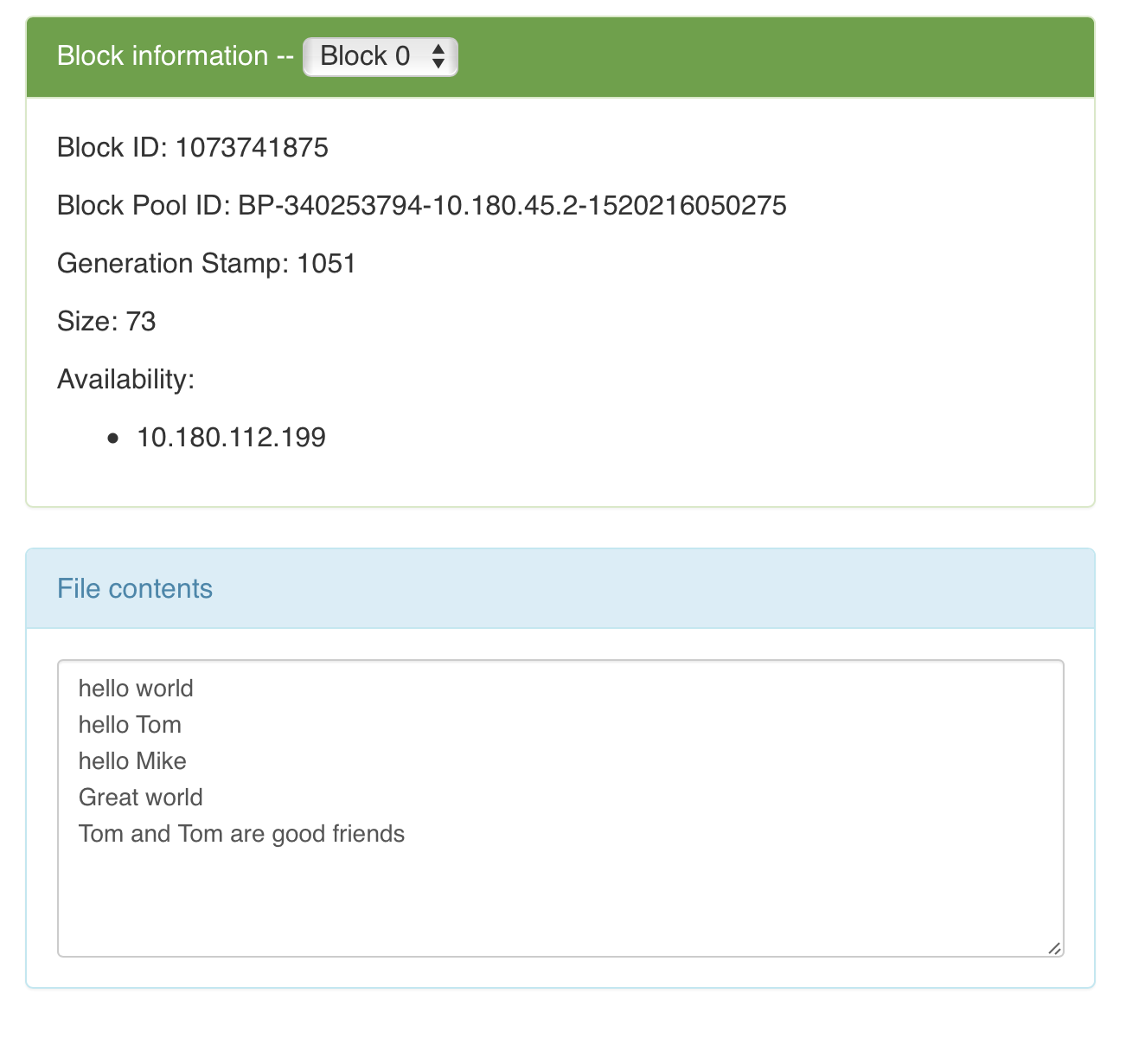

3. Results

While I was at it, I checked the results in the browser.

- Input file

/wc/input/input.txt

- Output file

/wc/output/part-r-00000

The results are clearly correct. I will not be so quick to say that Mac is the best anymore...
