Flannel IPIP Mode

1. Environment

| Host | IP |
|---|---|
| ubuntu | 172.16.94.141 |

| Software | Version |
|---|---|
| docker | 26.1.4 |
| helm | v3.15.0-rc.2 |
| kind | 0.18.0 |
| clab | 0.54.2 |
| kubernetes | 1.23.4 |
| ubuntu os | Ubuntu 20.04.6 LTS |
| kernel | 5.11.5 (see the kernel upgrade document) |

2. Install the services

kind configuration

$ cat install.sh
#!/bin/bash
date
set -v

# 1. Prepare a cluster without a CNI
cat <<EOF | kind create cluster --name=flannel-ipip --image=kindest/node:v1.23.4 --config=-
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
networking:
  # kind installs its own default CNI (kindnet); disable it so that we can install flannel ourselves
  disableDefaultCNI: true
  # Pod subnet used by the nodes
  podSubnet: "10.244.0.0/16"
nodes:
  - role: control-plane
  - role: worker
  - role: worker
containerdConfigPatches:
- |-
  [plugins."io.containerd.grpc.v1.cri".registry.mirrors."harbor.evescn.com"]
    endpoint = ["https://harbor.evescn.com"]
EOF

# 2. Install the CNI
kubectl apply -f ./flannel.yaml

# 3. Install necessary tools
# cd /opt/
# curl -o calicoctl -O -L "https://gh.api.99988866.xyz/https://github.com/containernetworking/plugins/releases/download/v0.9.0/cni-plugins-linux-amd64-v0.9.0.tgz"
# tar -zxvf cni-plugins-linux-amd64-v0.9.0.tgz

# Copy the bridge plugin and ping into every kind node, then install debugging tools
for i in $(docker ps -a --format "table {{.Names}}" | grep flannel)
do
    echo $i
    docker cp /opt/bridge $i:/opt/cni/bin/
    docker cp /usr/bin/ping $i:/usr/bin/ping
    docker exec -it $i bash -c "sed -i -e 's/jp.archive.ubuntu.com\|archive.ubuntu.com\|security.ubuntu.com/old-releases.ubuntu.com/g' /etc/apt/sources.list"
    docker exec -it $i bash -c "apt-get -y update >/dev/null && apt-get -y install net-tools tcpdump lrzsz bridge-utils >/dev/null 2>&1"
done

flannel.yaml configuration file

# flannel.yaml
---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "ipip"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: harbor.dayuan1997.com/devops/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: harbor.dayuan1997.com/devops/rancher/mirrored-flannelcni-flannel:v0.19.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: harbor.dayuan1997.com/devops/rancher/mirrored-flannelcni-flannel:v0.19.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
        - name: tun
          mountPath: /dev/net/tun
      volumes:
      - name: tun
        hostPath:
          path: /dev/net/tun
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

flannel.yaml parameters

- Backend.Type: specifies the mode flannel works in.
  - ipip: flannel works in IPIP mode.
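To double-check which backend a cluster is actually configured with, the `net-conf.json` payload can be pulled from the ConfigMap and its `Backend.Type` read out. The sketch below only parses JSON text from stdin; `backend_type` is a hypothetical helper name, and the sample input is copied from the ConfigMap above.

```shell
#!/bin/bash
# Hypothetical helper: print the value of "Type" from a flannel net-conf.json on stdin.
# This is a plain text match, not a real JSON parser, so it assumes a single "Type" key.
backend_type() {
  sed -n 's/.*"Type"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Sample input copied from the kube-flannel-cfg ConfigMap in this article.
backend_type <<'EOF'
{
  "Network": "10.244.0.0/16",
  "Backend": {
    "Type": "ipip"
  }
}
EOF
```

Against a live cluster, the same function could be fed from `kubectl -n kube-system get configmap kube-flannel-cfg -o jsonpath='{.data.net-conf\.json}'`, assuming the ConfigMap name and namespace used in this article.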

- Install the k8s cluster and the flannel service

# ./install.sh

Creating cluster "flannel-ipip" ...

✓ Ensuring node image (kindest/node:v1.23.4) 🖼

✓ Preparing nodes 📦 📦 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing StorageClass 💾

✓ Joining worker nodes 🚜

Set kubectl context to "kind-flannel-ipip"

You can now use your cluster with:

kubectl cluster-info --context kind-flannel-ipip

Have a nice day! 👋

- Check the installed services

root@kind:~# kubectl get pods -A -w

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-64897985d-m2fln 1/1 Running 0 3m45s

kube-system coredns-64897985d-wcxz8 1/1 Running 0 3m45s

kube-system etcd-flannel-ipip-control-plane 1/1 Running 0 4m

kube-system kube-apiserver-flannel-ipip-control-plane 1/1 Running 0 4m

kube-system kube-controller-manager-flannel-ipip-control-plane 1/1 Running 0 4m4s

kube-system kube-flannel-ds-845gs 1/1 Running 0 3m26s

kube-system kube-flannel-ds-gm7n2 1/1 Running 0 3m26s

kube-system kube-flannel-ds-k5lkj 1/1 Running 0 3m26s

kube-system kube-proxy-4hngw 1/1 Running 0 3m26s

kube-system kube-proxy-v8zmv 1/1 Running 0 3m26s

kube-system kube-proxy-znb29 1/1 Running 0 3m46s

kube-system kube-scheduler-flannel-ipip-control-plane 1/1 Running 0 4m1s

local-path-storage local-path-provisioner-5ddd94ff66-x8jjt 1/1 Running 0 3m45s

Deploy pods in the k8s cluster to test the network

root@kind:~# cat cni.yaml
apiVersion: apps/v1
kind: DaemonSet
#kind: Deployment
metadata:
  labels:
    app: cni
  name: cni
spec:
  #replicas: 1
  selector:
    matchLabels:
      app: cni
  template:
    metadata:
      labels:
        app: cni
    spec:
      containers:
      - image: harbor.dayuan1997.com/devops/nettool:0.9
        name: nettoolbox
        securityContext:
          privileged: true
---
apiVersion: v1
kind: Service
metadata:
  name: serversvc
spec:
  type: NodePort
  selector:
    app: cni
  ports:
  - name: cni
    port: 80
    targetPort: 80
    nodePort: 32000

root@kind:~# kubectl apply -f cni.yaml

daemonset.apps/cni created

service/serversvc created

root@kind:~# kubectl run net --image=harbor.dayuan1997.com/devops/nettool:0.9

pod/net created

- Check the deployed services

root@kind:~# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

cni-f58n6 1/1 Running 0 19s 10.244.1.2 flannel-ipip-worker2 <none> <none>

cni-p548p 1/1 Running 0 19s 10.244.2.2 flannel-ipip-worker <none> <none>

net 1/1 Running 0 14s 10.244.1.3 flannel-ipip-worker2 <none> <none>

root@kind:~# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 4m46s

serversvc NodePort 10.96.82.171 <none> 80:32000/TCP 30s

3. Test the network

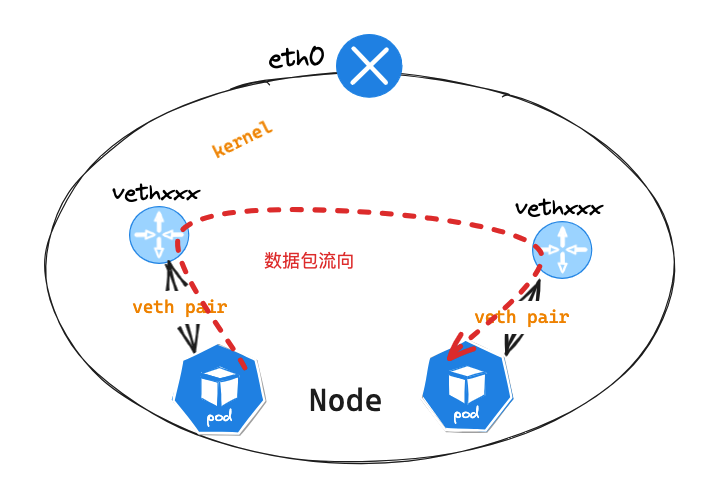

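Pod-to-pod communication on the same node

See the "Flannel UDP mode" document: same-node communication follows the same packet forwarding path there.

Pods on the same node communicate over the layer-2 network, with the cni0 bridge acting as a layer-2 switch.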

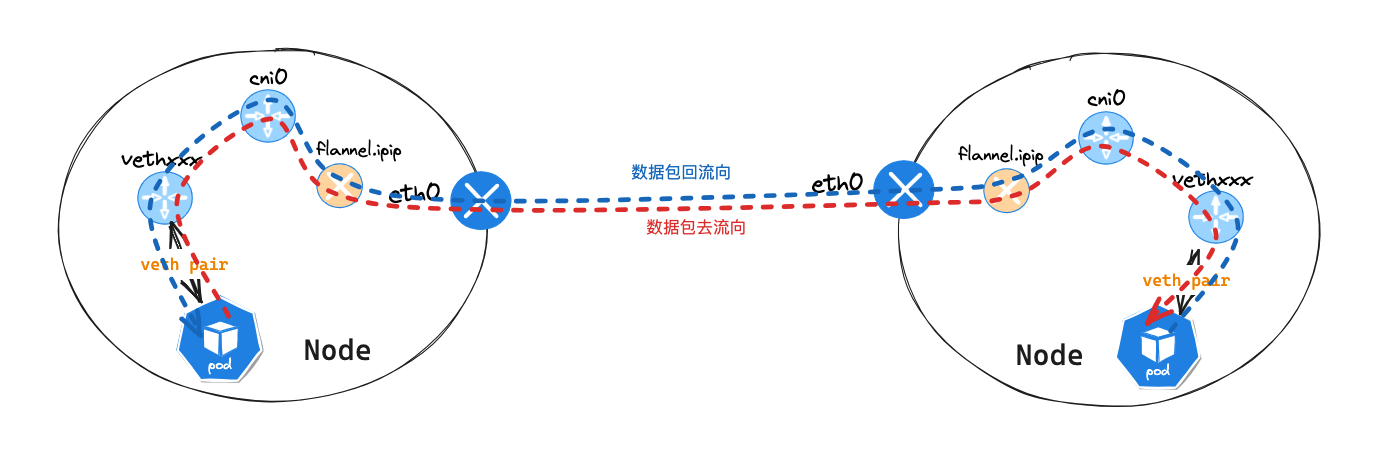

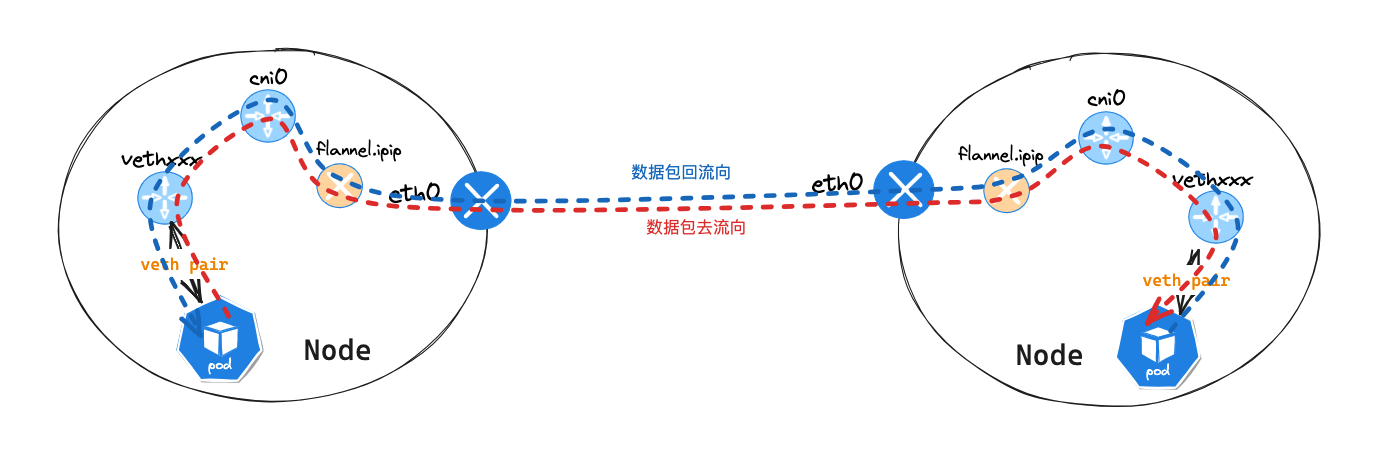

Pod-to-pod communication across nodes

Pod information

## IP information

root@kind:~# kubectl exec -it net -- ip a l

4: eth0@if6: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue state UP group default

link/ether 3a:b2:db:1a:fc:d7 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.244.1.3/24 brd 10.244.1.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::38b2:dbff:fe1a:fcd7/64 scope link

valid_lft forever preferred_lft forever

## Route information

root@kind:~# kubectl exec -it net -- ip r s

default via 10.244.1.1 dev eth0

10.244.0.0/16 via 10.244.1.1 dev eth0

10.244.1.0/24 dev eth0 proto kernel scope link src 10.244.1.3

Information for the node hosting the pod

root@kind:~# docker exec -it flannel-ipip-worker2 bash

## IP information

root@flannel-ipip-worker2:/# ip a l

2: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

3: flannel.ipip@NONE: <NOARP,UP,LOWER_UP> mtu 1480 qdisc noqueue state UNKNOWN group default

link/ipip 172.18.0.3 brd 0.0.0.0

inet 10.244.1.0/32 scope global flannel.ipip

valid_lft forever preferred_lft forever

inet6 fe80::5efe:ac12:3/64 scope link

valid_lft forever preferred_lft forever

4: cni0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue state UP group default qlen 1000

link/ether 42:94:41:c6:b2:23 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.1/24 brd 10.244.1.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::4094:41ff:fec6:b223/64 scope link

valid_lft forever preferred_lft forever

5: vethff7a3f2c@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP group default

link/ether ee:0b:49:ab:46:91 brd ff:ff:ff:ff:ff:ff link-netns cni-e18d1e60-364c-9ff3-9e8f-0367e78fd563

inet6 fe80::ec0b:49ff:feab:4691/64 scope link

valid_lft forever preferred_lft forever

6: veth753e0660@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP group default

link/ether 8a:69:94:d2:56:6b brd ff:ff:ff:ff:ff:ff link-netns cni-81b6e21f-62ae-df9b-7fe7-501253072833

inet6 fe80::8869:94ff:fed2:566b/64 scope link

valid_lft forever preferred_lft forever

17: eth0@if18: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:12:00:03 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.18.0.3/16 brd 172.18.255.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fc00:f853:ccd:e793::3/64 scope global nodad

valid_lft forever preferred_lft forever

inet6 fe80::42:acff:fe12:3/64 scope link

valid_lft forever preferred_lft forever

## Route information

root@flannel-ipip-worker2:/# ip r s

default via 172.18.0.1 dev eth0

10.244.0.0/24 via 172.18.0.4 dev flannel.ipip onlink

10.244.1.0/24 dev cni0 proto kernel scope link src 10.244.1.1

10.244.2.0/24 via 172.18.0.2 dev flannel.ipip onlink

172.18.0.0/16 dev eth0 proto kernel scope link src 172.18.0.3

Ping test from the net pod to the cni-p548p pod

root@kind:~# kubectl exec -it net -- ping 10.244.2.2 -c 1

PING 10.244.2.2 (10.244.2.2): 56 data bytes

64 bytes from 10.244.2.2: seq=0 ttl=62 time=2.661 ms

--- 10.244.2.2 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 2.661/2.661/2.661 ms

Capture on the pod's eth0 interface

net~$ tcpdump -pne -i eth0

06:45:42.959427 3a:b2:db:1a:fc:d7 > 42:94:41:c6:b2:23, ethertype IPv4 (0x0800), length 98: 10.244.1.3 > 10.244.2.2: ICMP echo request, id 64, seq 0, length 64

06:45:42.959519 42:94:41:c6:b2:23 > 3a:b2:db:1a:fc:d7, ethertype IPv4 (0x0800), length 98: 10.244.2.2 > 10.244.1.3: ICMP echo reply, id 64, seq 0, length 64

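The source MAC address 3a:b2:db:1a:fc:d7 belongs to the pod's eth0 interface. The destination MAC address 42:94:41:c6:b2:23 belongs to the cni0 interface on the node hosting the net pod. cni0 holds the pod network's gateway address, 10.244.1.1, so the pod follows its route table and sends the packet to the gateway.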

net~$ arp -n

Address HWtype HWaddress Flags Mask Iface

10.244.1.1 ether 42:94:41:c6:b2:23 C eth0

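The veth pair indices identify the host-side peer: the pod's interface is eth0@if6, and host interface index 6 is veth753e0660@if4, so veth753e0660 is the peer of the pod's eth0.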
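The index lookup can be scripted rather than read off by eye. The sketch below takes `ip -o link` style lines on stdin and prints the name of the interface with a given index; `iface_by_index` is a hypothetical helper name, and the sample lines are trimmed from the node output shown earlier.

```shell
#!/bin/bash
# Hypothetical helper: given an interface index, print the matching interface name
# from one-line-per-interface `ip -o link` output on stdin (the "@ifN" suffix is stripped).
iface_by_index() {
  awk -v idx="$1" -F': ' '$1 == idx { sub(/@.*/, "", $2); print $2 }'
}

# The pod shows eth0@if6, so look up host interface index 6.
iface_by_index 6 <<'EOF'
5: vethff7a3f2c@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP group default
6: veth753e0660@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1480 qdisc noqueue master cni0 state UP group default
EOF
```

On the live node, the input would come from something like `docker exec flannel-ipip-worker2 ip -o link | iface_by_index 6`.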

Capture on the veth753e0660 interface of node flannel-ipip-worker2

root@flannel-ipip-worker2:/# tcpdump -pne -i veth753e0660

06:45:42.959427 3a:b2:db:1a:fc:d7 > 42:94:41:c6:b2:23, ethertype IPv4 (0x0800), length 98: 10.244.1.3 > 10.244.2.2: ICMP echo request, id 64, seq 0, length 64

06:45:42.959519 42:94:41:c6:b2:23 > 3a:b2:db:1a:fc:d7, ethertype IPv4 (0x0800), length 98: 10.244.2.2 > 10.244.1.3: ICMP echo reply, id 64, seq 0, length 64

Because the two interfaces form a veth pair, the capture is identical.

Capture on the cni0 interface of node flannel-ipip-worker2

root@flannel-ipip-worker2:/# tcpdump -pne -i cni0

06:45:42.959427 3a:b2:db:1a:fc:d7 > 42:94:41:c6:b2:23, ethertype IPv4 (0x0800), length 98: 10.244.1.3 > 10.244.2.2: ICMP echo request, id 64, seq 0, length 64

06:45:42.959519 42:94:41:c6:b2:23 > 3a:b2:db:1a:fc:d7, ethertype IPv4 (0x0800), length 98: 10.244.2.2 > 10.244.1.3: ICMP echo reply, id 64, seq 0, length 64

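The source MAC address 3a:b2:db:1a:fc:d7 belongs to the eth0 interface of the net pod, and the destination MAC address 42:94:41:c6:b2:23 belongs to the cni0 interface.

The route table of host flannel-ipip-worker2 shows that the packet is forwarded by the route 10.244.2.0/24 via 172.18.0.2 dev flannel.ipip onlink.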
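The route selection described here is a longest-prefix match over the node's route table. The following pure-bash sketch reproduces that selection on the routes printed earlier; `ip_to_int`, `in_cidr` and `lookup_route` are hypothetical helper names, and the code is only an illustration of the matching rule, not how the kernel implements it.

```shell
#!/bin/bash
# Convert a dotted IPv4 address to an integer (assumes no leading zeros in octets).
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if address $1 falls inside CIDR $2 (for example 10.244.2.0/24).
in_cidr() {
  local ip=$1 net=${2%/*} len=${2#*/}
  local mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Read `ip route` style lines on stdin and print the most specific route for $1.
lookup_route() {
  local dst=$1 best="" best_len=-1 prefix rest len
  while read -r prefix rest; do
    [ "$prefix" = "default" ] && prefix="0.0.0.0/0"
    len=${prefix#*/}
    if in_cidr "$dst" "$prefix" && (( len > best_len )); then
      best_len=$len
      best="$prefix $rest"
    fi
  done
  echo "$best"
}

# Route table copied from flannel-ipip-worker2 above.
lookup_route 10.244.2.2 <<'EOF'
default via 172.18.0.1 dev eth0
10.244.0.0/24 via 172.18.0.4 dev flannel.ipip onlink
10.244.1.0/24 dev cni0 proto kernel scope link src 10.244.1.1
10.244.2.0/24 via 172.18.0.2 dev flannel.ipip onlink
172.18.0.0/16 dev eth0 proto kernel scope link src 172.18.0.3
EOF
```

On the node itself, `ip route get 10.244.2.2` answers the same question directly.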

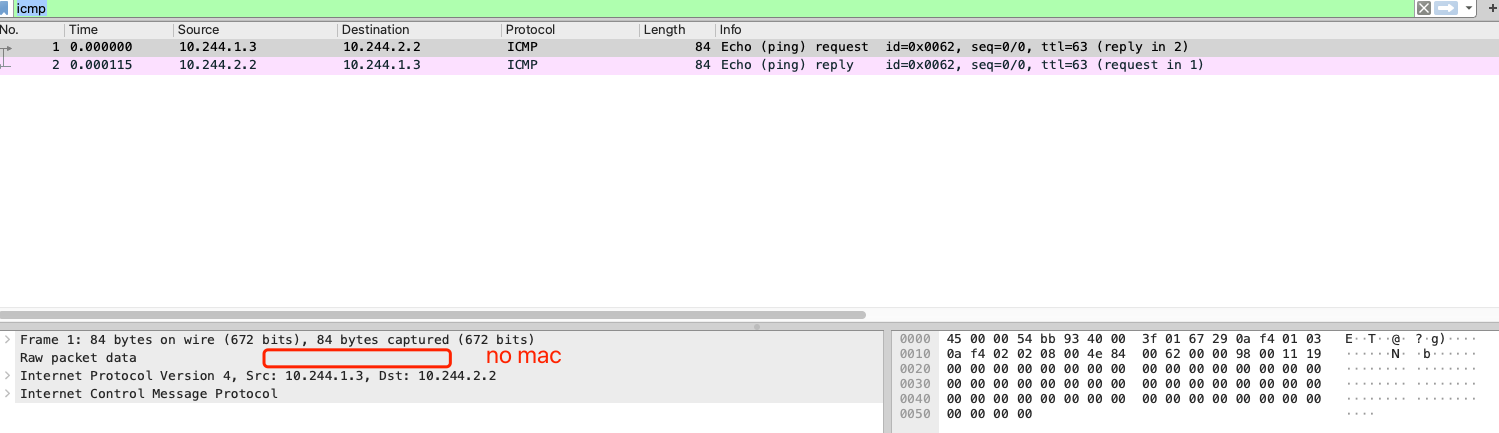

Capture on the flannel.ipip interface of node flannel-ipip-worker2

root@flannel-ipip-worker2:/# tcpdump -pne -i flannel.ipip icmp

listening on flannel.ipip, link-type RAW (Raw IP), snapshot length 262144 bytes

06:48:37.658204 ip: 10.244.1.3 > 10.244.2.2: ICMP echo request, id 91, seq 0, length 64

06:48:37.658260 ip: 10.244.2.2 > 10.244.1.3: ICMP echo reply, id 91, seq 0, length 64

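The ICMP packets captured here carry no MAC information, only source and destination IP addresses. This is characteristic of IPIP: an IPIP tunnel works by encapsulating the source host's IP packet inside a new IP packet.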
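The extra IP header also explains the interface MTUs seen earlier: the pod's eth0, cni0 and flannel.ipip report mtu 1480, while the node's eth0 reports 1500. The difference matches one additional IPv4 header without options (20 bytes). A minimal sketch of that arithmetic, with `tunnel_mtu` as a hypothetical helper name:

```shell
#!/bin/bash
# Hypothetical helper: usable MTU inside a tunnel = underlay MTU - encapsulation overhead.
tunnel_mtu() {
  local underlay_mtu=$1 overhead=$2
  echo $(( underlay_mtu - overhead ))
}

# IPIP adds one outer IPv4 header; 20 bytes assumes no IP options.
tunnel_mtu 1500 20
```

This prints 1480, the value shown on flannel.ipip in the node output.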

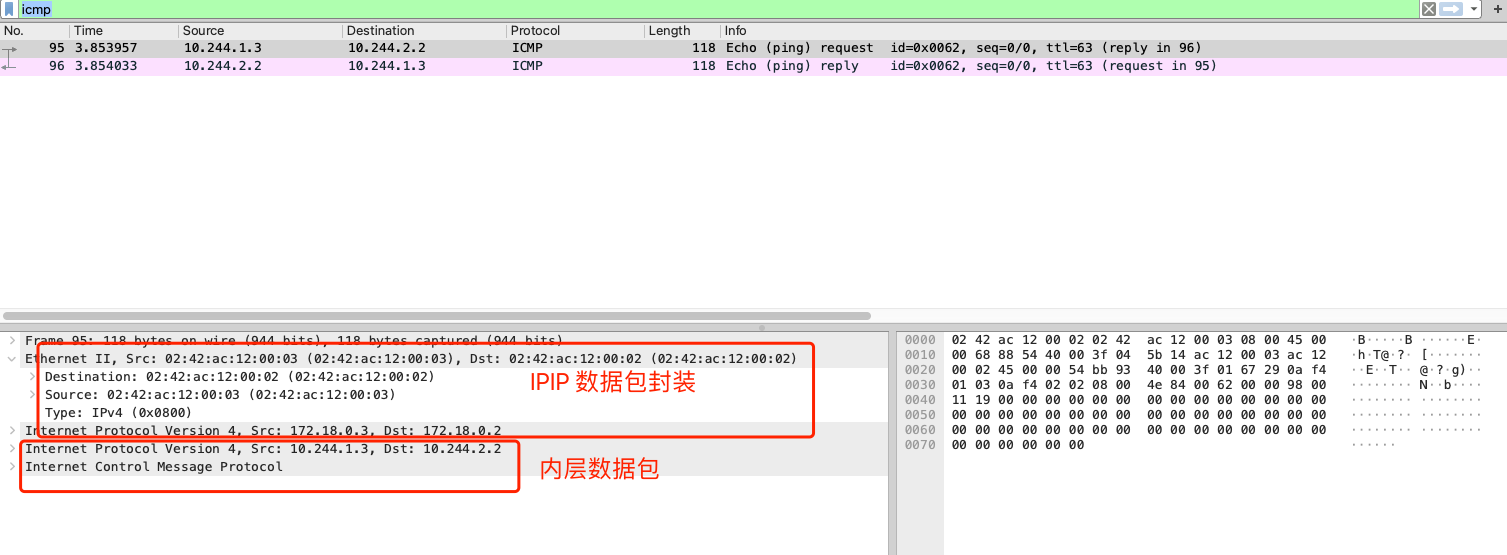

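Capture on the eth0 interface of node flannel-ipip-worker2

- In the captured ICMP request, the outer Ethernet header has source MAC 02:42:ac:12:00:03, the eth0 MAC of flannel-ipip-worker2, and destination MAC 02:42:ac:12:00:02, the eth0 MAC of flannel-ipip-worker, the host of the peer pod.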
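The two MAC addresses line up with the node IPs in a convenient way: the last four bytes of 02:42:ac:12:00:03 read as 172.18.0.3 in hex. This follows how Docker commonly assigns bridge-network MACs from the container IP; treat it as an assumption about this environment, not a guarantee for every runtime or version. A small sketch for decoding such a MAC, with `mac_to_ipv4` as a hypothetical helper name:

```shell
#!/bin/bash
# Hypothetical helper: interpret the last four bytes of a MAC address as an IPv4 address.
# Only meaningful when the runtime derived the MAC from the IP (an assumption here).
mac_to_ipv4() {
  local IFS=:
  set -- $1
  echo "$((16#$3)).$((16#$4)).$((16#$5)).$((16#$6))"
}

mac_to_ipv4 02:42:ac:12:00:03   # source MAC seen on eth0
mac_to_ipv4 02:42:ac:12:00:02   # destination MAC seen on eth0
```

The decoded addresses match the outer tunnel endpoints in the route 10.244.2.0/24 via 172.18.0.2 and the local address 172.18.0.3 on flannel.ipip.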

IPIP interface details on node flannel-ipip-worker2

root@flannel-ipip-worker2:/# ip -d link show

3: flannel.ipip@NONE: <NOARP,UP,LOWER_UP> mtu 1480 qdisc noqueue state UNKNOWN mode DEFAULT group default

link/ipip 172.18.0.3 brd 0.0.0.0 promiscuity 0 minmtu 0 maxmtu 0

ipip any remote any local 172.18.0.3 ttl inherit nopmtudisc addrgenmode eui64 numtxqueues 1 numrxqueues 1 gso_max_size 65536 gso_max_segs 65535

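Packet flow

- The packet leaves the pod and, according to the pod's route table, is sent to the gateway 10.244.1.1. Route: 10.244.0.0/16 via 10.244.1.1 dev eth0
- It crosses the veth pair (veth753e0660) to host flannel-ipip-worker2 and is handed to cni0 (10.244.1.1).
- flannel-ipip-worker2 consults its own route table and, because the destination is 10.244.2.2, sends the packet to the flannel.ipip interface. Route: 10.244.2.0/24 via 172.18.0.2 dev flannel.ipip onlink
- flannel.ipip is an IPIP interface, so it re-encapsulates the packet.
- Once encapsulated, the packet goes out through eth0 to the peer host flannel-ipip-worker.
- The peer host flannel-ipip-worker receives the packet, recognizes it as an IPIP packet, strips the outer IP header, and extracts the inner IP packet.
- The inner packet is handed to the flannel.ipip interface and processed as if it were an ordinary IP packet.
- After decapsulation, the inner packet's destination is 10.244.2.2, so per the local route table it is sent to cni0. Route: 10.244.2.0/24 dev cni0 proto kernel scope link src 10.244.2.1
- Using the MAC table of the cni0 bridge (brctl showmacs cni0), the packet is finally delivered to the cni-p548p pod.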

Service communication

See the "Flannel UDP mode" document: Service communication follows the same packet forwarding path there.
