Cilium Dual Stack Feature (Repost)

I. Environment

| Host | IP |
|---|---|
| ubuntu | 10.0.0.234 |

| Software | Version |
|---|---|
| docker | 26.1.4 |
| helm | v3.15.0-rc.2 |
| kind | 0.18.0 |
| kubernetes | 1.23.4 |
| Ubuntu OS | Ubuntu 22.04.6 LTS |
| kernel | 5.15.0-106 |

II. IPv6/IPv4 Dual Stack

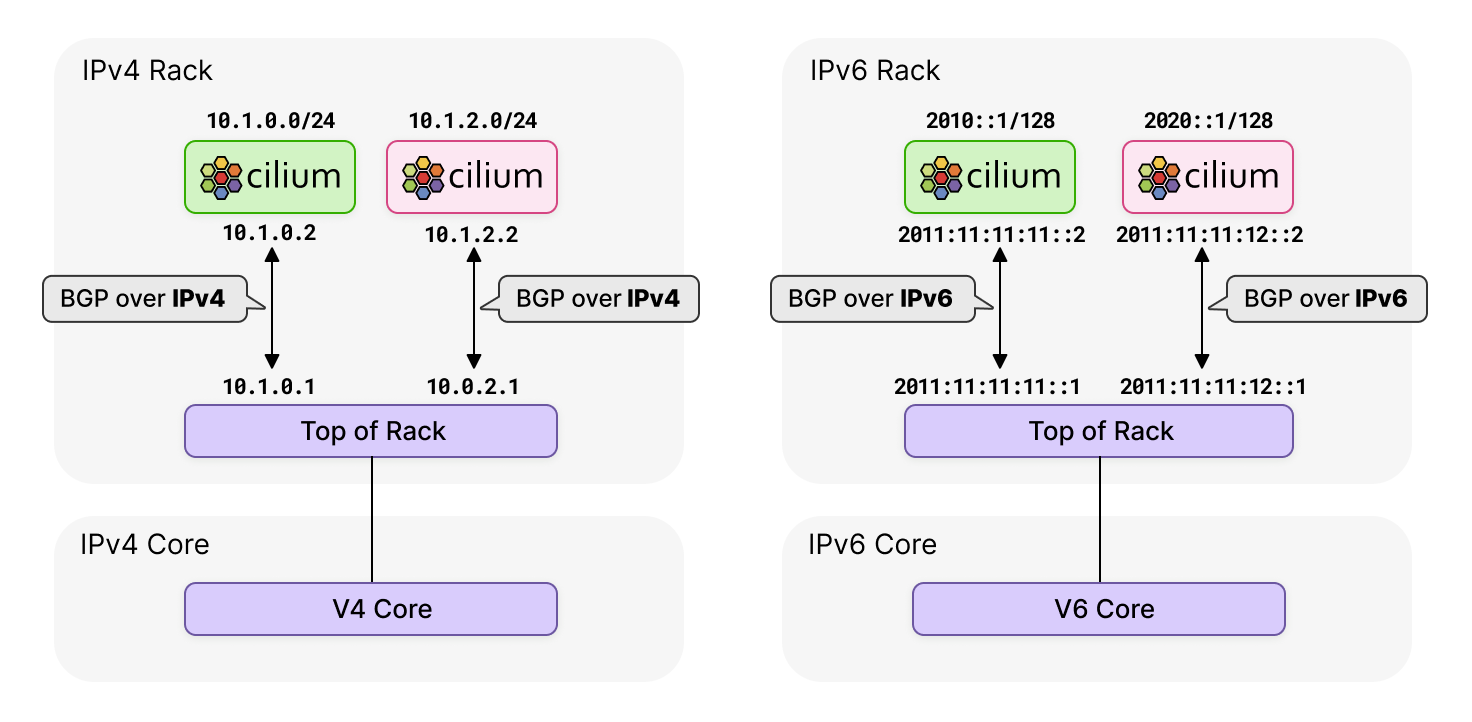

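IPv4 and IPv6 can coexist (dual stack) in two ways:

- One network interface carries two IP addresses, one IPv4 and one IPv6. This is the standard implementation.
- Two network interfaces with the same function, one serving IPv4 and the other serving IPv6. A load-balancing mechanism sends each request to the interface that matches its address family.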

Kubernetes already supports IPv4/IPv6 dual stack: the feature went beta and was enabled by default in 1.21, and became stable in 1.23.

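The Cilium CNI implements dual stack as well, but only at the in-cluster level. Traffic that enters or leaves the cluster needs platform-level support.

Cilium's dual-stack IPv4/IPv6 networking assigns both an IPv4 and an IPv6 address to every Pod, so workloads can communicate with modern IPv6 systems as well as legacy IPv4 applications and services. For organizations moving from IPv4 to IPv6, Cilium's NAT46/64 support offers a gradual transition path, and its BGP IPv6 support lets users advertise their IPv6 Pod CIDRs. Together these features provide scalability and prepare organizations for a future in which IPv6 is the norm rather than the exception.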

III. Setting Up a Cilium Dual Stack Environment

kind configuration file

root@kind:~# cat install.sh
#!/bin/bash
date
set -v

# 1. Prepare a cluster without a CNI
cat <<EOF | kind create cluster --name=cilium-dual-stack --image=kindest/node:v1.23.4 --config=-
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
networking:
  # kind installs its own default CNI (kindnet); disable it so that Cilium can be installed instead
  disableDefaultCNI: true
  # Enable dual stack
  ipFamily: dual
  apiServerAddress: 127.0.0.1
nodes:
- role: control-plane
- role: worker
- role: worker
containerdConfigPatches:
- |-
  [plugins."io.containerd.grpc.v1.cri".registry.mirrors."harbor.evescn.com"]
    endpoint = ["https://harbor.evescn.com"]
EOF

# 2. Remove taints (optional) and record the control-plane node IP
controller_node_ip=$(kubectl get node -o wide --no-headers | grep -E "control-plane|bpf1" | awk -F " " '{print $6}')
# kubectl taint nodes $(kubectl get nodes -o name | grep control-plane) node-role.kubernetes.io/master:NoSchedule-
kubectl get nodes -o wide

# 3. Install the CNI
helm repo add cilium https://helm.cilium.io > /dev/null 2>&1
helm repo update > /dev/null 2>&1

# Every option line except the last must end with a backslash,
# otherwise the remaining --set flags are run as a separate command.
helm install cilium cilium/cilium \
  --set k8sServiceHost=$controller_node_ip \
  --set k8sServicePort=6443 \
  --version 1.13.0-rc5 \
  --namespace kube-system \
  --set debug.enabled=true \
  --set debug.verbose=datapath \
  --set monitorAggregation=none \
  --set ipam.mode=kubernetes \
  --set cluster.name=cilium-dual-stack \
  --set kubeProxyReplacement=disabled \
  --set tunnel=vxlan \
  --set ipv6.enabled=true

# 4. Install the necessary tools on every kind node container
for i in $(docker ps -a --format "table {{.Names}}" | grep cilium)
do
  echo $i
  docker cp /usr/bin/ping $i:/usr/bin/ping
  docker exec -it $i bash -c "sed -i -e 's/jp.archive.ubuntu.com\|archive.ubuntu.com\|security.ubuntu.com/old-releases.ubuntu.com/g' /etc/apt/sources.list"
  docker exec -it $i bash -c "apt-get -y update >/dev/null && apt-get -y install net-tools tcpdump lrzsz bridge-utils >/dev/null 2>&1"
done

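Explanation of the --set parameters

- --set kubeProxyReplacement=disabled
  - Meaning: disables the kube-proxy replacement.
  - Purpose: Cilium does not take over from kube-proxy; Service load balancing is still handled by kube-proxy.
- --set tunnel=vxlan
  - Meaning: use tunnel mode.
  - Purpose: enables VXLAN tunnel mode, a network virtualization technique that encapsulates Layer 2 frames inside Layer 3 (UDP) packets to provide network isolation and extension.
- --set ipv6.enabled=true
  - Meaning: enables IPv6 support.
  - Purpose: once enabled, interfaces are also configured with IPv6 addresses.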
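Once the chart is installed, the rendered settings can be read back from the agent's ConfigMap to confirm that the flags took effect. The following is a minimal sketch, not part of the original post: it assumes the Cilium 1.13 ConfigMap name `cilium-config` and the key names `enable-ipv6` and `tunnel`, and the helper names `cilium_cfg` and `check_dual_stack_cfg` are made up for illustration.

```shell
#!/bin/sh
# Read one key from the cilium-config ConfigMap.
# Assumption: key names as rendered by the Cilium 1.13 chart.
cilium_cfg() {
    kubectl -n kube-system get configmap cilium-config -o "jsonpath={.data.$1}"
}

# Succeed only if IPv6 is enabled and the tunnel protocol is VXLAN.
check_dual_stack_cfg() {
    v6=$(cilium_cfg enable-ipv6)
    tun=$(cilium_cfg tunnel)
    if [ "$v6" = "true" ] && [ "$tun" = "vxlan" ]; then
        echo "ok: ipv6 enabled, tunnel=$tun"
    else
        echo "unexpected: enable-ipv6=$v6 tunnel=$tun"
        return 1
    fi
}
```

Calling `check_dual_stack_cfg` after `helm install` gives a quick pass/fail signal before any Pods are deployed.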

- Install the Kubernetes cluster and Cilium

root@kind:~# ./install.sh

Creating cluster "cilium-dual-stack" ...

✓ Ensuring node image (kindest/node:v1.23.4) 🖼

✓ Preparing nodes 📦 📦 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing StorageClass 💾

✓ Joining worker nodes 🚜

Set kubectl context to "kind-cilium-dual-stack"

You can now use your cluster with:

kubectl cluster-info --context kind-cilium-dual-stack

Have a question, bug, or feature request? Let us know! https://kind.sigs.k8s.io/#community 🙂

Cilium status information

root@kind:~# kubectl -n kube-system exec -it ds/cilium -- cilium status

KVStore: Ok Disabled

Kubernetes: Ok 1.23 (v1.23.4) [linux/amd64]

Kubernetes APIs: ["cilium/v2::CiliumClusterwideNetworkPolicy", "cilium/v2::CiliumEndpoint", "cilium/v2::CiliumNetworkPolicy", "cilium/v2::CiliumNode", "core/v1::Namespace", "core/v1::Node", "core/v1::Pods", "core/v1::Service", "discovery/v1::EndpointSlice", "networking.k8s.io/v1::NetworkPolicy"]

KubeProxyReplacement: Disabled

Host firewall: Disabled

CNI Chaining: none

CNI Config file: CNI configuration file management disabled

Cilium: Ok 1.13.0-rc5 (v1.13.0-rc5-dc22a46f)

NodeMonitor: Listening for events on 8 CPUs with 64x4096 of shared memory

Cilium health daemon: Ok

# IPAM now holds an IPv6 address range in addition to the IPv4 range

IPAM: IPv4: 6/254 allocated from 10.244.1.0/24, IPv6: 6/18446744073709551614 allocated from fd00:10:244:1::/64

IPv6 BIG TCP: Disabled

BandwidthManager: Disabled

Host Routing: Legacy

Masquerading: IPTables [IPv4: Enabled, IPv6: Enabled]

Controller Status: 34/34 healthy

Proxy Status: OK, ip 10.244.1.154, 0 redirects active on ports 10000-20000

Global Identity Range: min 256, max 65535

Hubble: Ok Current/Max Flows: 4095/4095 (100.00%), Flows/s: 11.23 Metrics: Disabled

Encryption: Disabled

Cluster health: 3/3 reachable (2024-07-12T07:00:21Z)

Deploy test Pods in the cluster to test the network

# cat cni.yaml
apiVersion: apps/v1
kind: DaemonSet
#kind: Deployment
metadata:
  labels:
    app: cni
  name: cni
spec:
  #replicas: 1
  selector:
    matchLabels:
      app: cni
  template:
    metadata:
      labels:
        app: cni
    spec:
      containers:
      - image: harbor.dayuan1997.com/devops/nettool:0.9
        name: nettoolbox
        securityContext:
          privileged: true
---
apiVersion: v1
kind: Service
metadata:
  name: cni
spec:
  ipFamilyPolicy: PreferDualStack
  ipFamilies:
  - IPv6
  - IPv4
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
    nodePort: 30000
  type: NodePort
  selector:
    app: cni

root@kind:~# kubectl apply -f cni.yaml

daemonset.apps/cni created

service/serversvc created

- Check the deployed workloads

root@kind:~# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

cni-7t9p4 1/1 Running 0 2m35s 10.244.1.233 cilium-dual-stack-worker <none> <none>

cni-8qgcs 1/1 Running 0 2m35s 10.244.2.245 cilium-dual-stack-worker2 <none> <none>

Verify that IPv4/IPv6 dual stack is enabled

- Check the Pod information

root@kind:~# kubectl exec -it cni-7t9p4 -- bash

cni-7t9p4~$ ip a l

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

18: eth0@if19: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 4e:5b:57:45:63:3b brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.244.1.233/32 scope global eth0

valid_lft forever preferred_lft forever

inet6 fd00:10:244:1::4749/128 scope global nodad

valid_lft forever preferred_lft forever

inet6 fe80::4c5b:57ff:fe45:633b/64 scope link

valid_lft forever preferred_lft forever

The eth0 interface carries both the IPv4 address 10.244.1.233 and the IPv6 address fd00:10:244:1::4749, so dual stack is enabled for the Pod.
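The same check can be done from outside the Pod, because Kubernetes records every assigned address in `status.podIPs`. A small sketch under that assumption; the helper names `ip_family` and `pod_ips` are illustrative and do not come from the original post.

```shell
#!/bin/sh
# Classify an address literal: IPv6 literals contain ':', IPv4 literals contain '.'.
ip_family() {
    case $1 in
        *:*) echo IPv6 ;;
        *.*) echo IPv4 ;;
        *)   echo unknown ;;
    esac
}

# Print each address in status.podIPs of a Pod, prefixed with its family.
pod_ips() {
    for ip in $(kubectl get pod "$1" -o 'jsonpath={.status.podIPs[*].ip}'); do
        echo "$(ip_family "$ip") $ip"
    done
}
```

For example, `pod_ips cni-7t9p4` should print one line per address family when dual stack is working.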

- Check the Service information

root@KinD:~# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

cni NodePort fd00:10:96::f38d <none> 80:30000/TCP 6m44s

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 58m

serversvc NodePort 10.96.58.99 <none> 80:32000/TCP 53m

root@KinD:~# kubectl get svc cni -o yaml

apiVersion: v1

kind: Service

metadata:

annotations:

......

creationTimestamp: "2024-07-12T07:08:05Z"

name: cni

namespace: default

resourceVersion: "8306"

uid: 47a50c0e-d595-48f1-8574-59328412be13

spec:

clusterIP: fd00:10:96::f38d

clusterIPs:

- fd00:10:96::f38d

- 10.96.86.244

externalTrafficPolicy: Cluster

internalTrafficPolicy: Cluster

ipFamilies:

- IPv6

- IPv4

ipFamilyPolicy: PreferDualStack

ports:

- nodePort: 30000

port: 80

protocol: TCP

targetPort: 80

selector:

app: cni

sessionAffinity: None

type: NodePort

status:

loadBalancer: {}

The Service has both an IPv4 ClusterIP, 10.96.86.244, and an IPv6 ClusterIP, fd00:10:96::f38d, so dual stack is enabled for the Service.

Verify DNS resolution of the Service's IPv4 and IPv6 addresses
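With `ipFamilyPolicy: PreferDualStack`, a Service quietly falls back to a single family on a cluster that is not dual stack, so it is worth checking `spec.ipFamilies` explicitly. A minimal sketch; the function name `svc_is_dual_stack` is hypothetical.

```shell
#!/bin/sh
# Return 0 only if the Service lists both IPv4 and IPv6 in spec.ipFamilies.
svc_is_dual_stack() {
    families=$(kubectl get svc "$1" -o 'jsonpath={.spec.ipFamilies[*]}')
    case " $families " in
        *" IPv4 "*) ;;
        *) return 1 ;;
    esac
    case " $families " in
        *" IPv6 "*) ;;
        *) return 1 ;;
    esac
}
```

Usage: `svc_is_dual_stack cni && echo "cni is dual stack"`.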

root@KinD:~# kubectl exec -it cni-7t9p4 -- bash

cni-7t9p4~$ nslookup -type=A cni

Server: 10.96.0.10

Address: 10.96.0.10#53

Name: cni.default.svc.cluster.local

Address: 10.96.86.244

cni-7t9p4~$ nslookup -type=AAAA cni

Server: 10.96.0.10

Address: 10.96.0.10#53

Name: cni.default.svc.cluster.local

Address: fd00:10:96::f38d

-type=A resolves the IPv4 address of the cni Service. The result, 10.96.86.244, matches the Service spec.

-type=AAAA resolves the IPv6 address of the cni Service. The result, fd00:10:96::f38d, matches the Service spec.

Analysis of Cilium Dual Stack mode

- IP and routing information inside the Pod (IPv4)

root@KinD:~# kubectl exec -it cni-7t9p4 -- ip a l

18: eth0@if19: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 4e:5b:57:45:63:3b brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.244.1.233/32 scope global eth0

valid_lft forever preferred_lft forever

inet6 fd00:10:244:1::4749/128 scope global nodad

valid_lft forever preferred_lft forever

inet6 fe80::4c5b:57ff:fe45:633b/64 scope link

valid_lft forever preferred_lft forever

root@kind:~# kubectl exec -it cni-7t9p4 -- ip r s

default via 10.244.1.154 dev eth0 mtu 1450

10.244.1.154 dev eth0 scope link

Traffic leaving the Pod goes out through eth0. The next hop, 10.244.1.154, is the cilium_host interface on the node.

root@KinD:~# kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

cni-7t9p4 1/1 Running 0 29m 10.244.1.233 cilium-dual-stack-worker <none> <none>

cni-8qgcs 1/1 Running 0 29m 10.244.2.245 cilium-dual-stack-worker2 <none> <none>

root@KinD:~# docker exec -it cilium-dual-stack-worker ip a l

3: cilium_host@cilium_net: <BROADCAST,MULTICAST,NOARP,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 32:ed:4d:67:b2:e1 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.154/32 scope link cilium_host

valid_lft forever preferred_lft forever

inet6 fc00:f853:ccd:e793::4/128 scope global

valid_lft forever preferred_lft forever

inet6 fe80::30ed:4dff:fe67:b2e1/64 scope link

valid_lft forever preferred_lft forever

# veth peer of eth0 in Pod cni-7t9p4

19: lxc237a31216c0f@if18: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether a2:79:14:47:80:b3 brd ff:ff:ff:ff:ff:ff link-netns cni-7635120a-f51b-728d-4cbd-91d3c61c0093

inet6 fe80::a079:14ff:fe47:80b3/64 scope link

valid_lft forever preferred_lft forever

Routing rules inside the Pod (IPv6)

root@KinD:~# kubectl exec -it cni-7t9p4 -- ip -6 r s

fd00:10:244:1::22f1 dev eth0 metric 1024 pref medium

fd00:10:244:1::4749 dev eth0 proto kernel metric 256 pref medium

fe80::/64 dev eth0 proto kernel metric 256 pref medium

default via fd00:10:244:1::22f1 dev eth0 metric 1024 mtu 1450 pref medium

root@KinD:~# kubectl exec -it cni-7t9p4 -- ip -6 n s

fd00:10:244:1::22f1 dev eth0 lladdr a2:79:14:47:80:b3 router STALE

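- The next hop is fd00:10:244:1::22f1. `ip -6 n s` shows that this address maps to the MAC address a2:79:14:47:80:b3, which belongs to interface 19: lxc237a31216c0f@if18 on node cilium-dual-stack-worker. That interface, however, does not carry the IPv6 address fd00:10:244:1::22f1.
- This is similar to Calico's 169.254.1.1: the address does not have to exist on any interface. When a packet is sent toward it, it is enough that some hook intercepts the neighbor lookup and answers with the MAC address of the corresponding interface.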
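The comparison described above (the MAC the Pod learned for its next hop versus the MAC of the host-side lxc interface) can be scripted. This is a sketch only: the two parsers read `ip` output on stdin, their names `neigh_mac` and `link_mac` are illustrative, and reaching the kind node with `docker exec` is an assumption based on kind nodes being Docker containers.

```shell
#!/bin/sh
# Print the lladdr recorded for one neighbor; expects `ip -6 neigh show` output on stdin.
neigh_mac() {
    awk -v target="$1" '$1 == target {
        for (i = 2; i < NF; i++) if ($i == "lladdr") print $(i + 1)
    }'
}

# Print the MAC of an interface; expects `ip link show <dev>` output on stdin.
link_mac() {
    awk '$1 == "link/ether" { print $2 }'
}

# Example wiring (requires the cluster from this post):
#   pod_side=$(kubectl exec cni-7t9p4 -- ip -6 neigh show | neigh_mac fd00:10:244:1::22f1)
#   node_side=$(docker exec cilium-dual-stack-worker ip link show lxc237a31216c0f | link_mac)
#   [ "$pod_side" = "$node_side" ] && echo "next hop is answered by the lxc interface"
```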

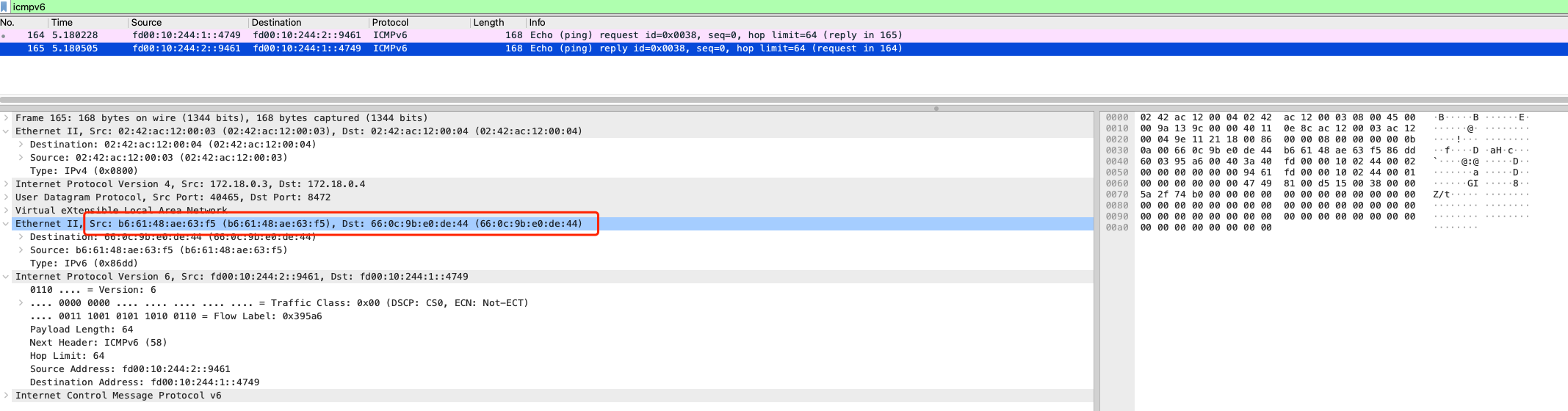

Ping test over IPv6

cni-7t9p4~$ ip a l

18: eth0@if19: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 4e:5b:57:45:63:3b brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.244.1.233/32 scope global eth0

valid_lft forever preferred_lft forever

inet6 fd00:10:244:1::4749/128 scope global nodad

valid_lft forever preferred_lft forever

inet6 fe80::4c5b:57ff:fe45:633b/64 scope link

valid_lft forever preferred_lft forever

cni-8qgcs~$ ip a l

11: eth0@if12: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether b6:61:48:ae:63:f5 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.244.2.245/32 scope global eth0

valid_lft forever preferred_lft forever

inet6 fd00:10:244:2::9461/128 scope global nodad

valid_lft forever preferred_lft forever

inet6 fe80::b461:48ff:feae:63f5/64 scope link

valid_lft forever preferred_lft forever

- cni-7t9p4 IPv6 address: fd00:10:244:1::4749
- cni-8qgcs IPv6 address: fd00:10:244:2::9461

root@KinD:~# kubectl exec -it cni-7t9p4 -- ping6 -c 1 fd00:10:244:2::9461

PING fd00:10:244:2::9461 (fd00:10:244:2::9461): 56 data bytes

64 bytes from fd00:10:244:2::9461: seq=0 ttl=63 time=0.838 ms

--- fd00:10:244:2::9461 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.838/0.838/0.838 ms

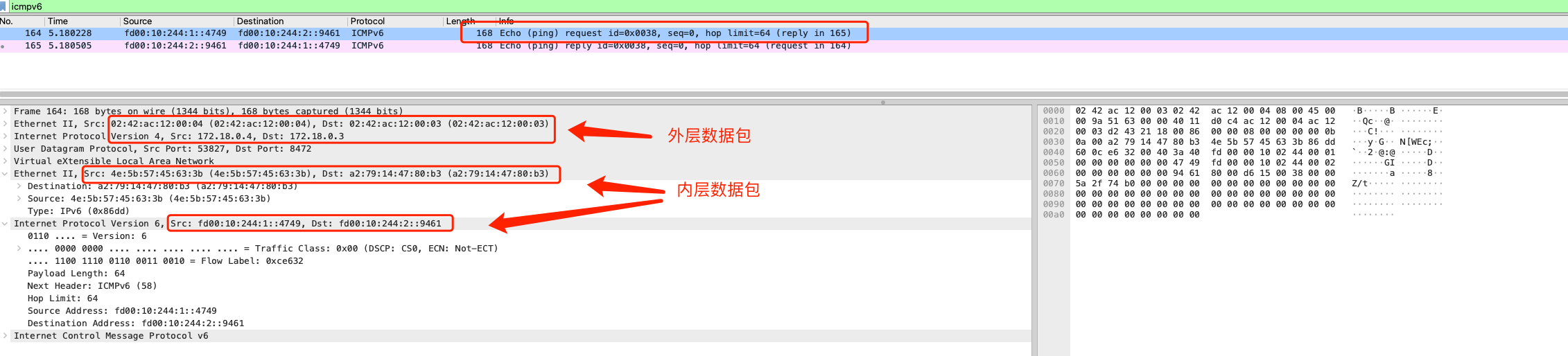

Packet capture on eth0 of Pod cni-7t9p4

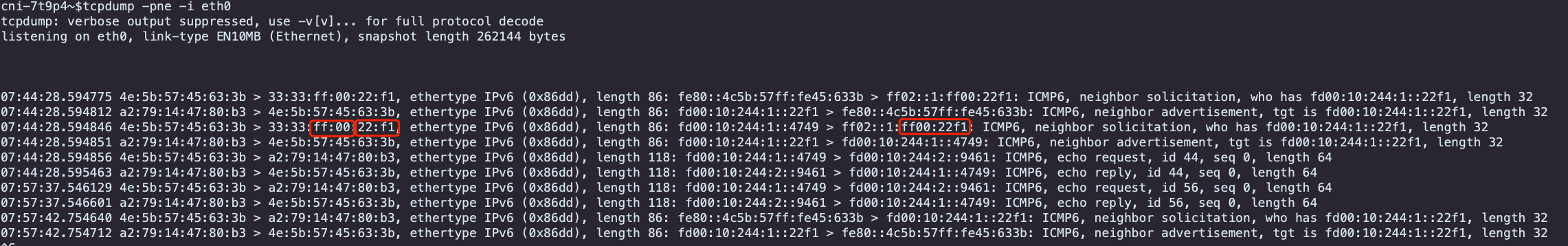

cni-7t9p4~$ tcpdump -pne -i eth0

tcpdump: verbose output suppressed, use -v[v]... for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), snapshot length 262144 bytes

07:44:28.594775 4e:5b:57:45:63:3b > 33:33:ff:00:22:f1, ethertype IPv6 (0x86dd), length 86: fe80::4c5b:57ff:fe45:633b > ff02::1:ff00:22f1: ICMP6, neighbor solicitation, who has fd00:10:244:1::22f1, length 32

07:44:28.594812 a2:79:14:47:80:b3 > 4e:5b:57:45:63:3b, ethertype IPv6 (0x86dd), length 86: fd00:10:244:1::22f1 > fe80::4c5b:57ff:fe45:633b: ICMP6, neighbor advertisement, tgt is fd00:10:244:1::22f1, length 32

07:44:28.594846 4e:5b:57:45:63:3b > 33:33:ff:00:22:f1, ethertype IPv6 (0x86dd), length 86: fd00:10:244:1::4749 > ff02::1:ff00:22f1: ICMP6, neighbor solicitation, who has fd00:10:244:1::22f1, length 32

07:44:28.594851 a2:79:14:47:80:b3 > 4e:5b:57:45:63:3b, ethertype IPv6 (0x86dd), length 86: fd00:10:244:1::22f1 > fd00:10:244:1::4749: ICMP6, neighbor advertisement, tgt is fd00:10:244:1::22f1, length 32

07:44:28.594856 4e:5b:57:45:63:3b > a2:79:14:47:80:b3, ethertype IPv6 (0x86dd), length 118: fd00:10:244:1::4749 > fd00:10:244:2::9461: ICMP6, echo request, id 44, seq 0, length 64

07:44:28.595463 a2:79:14:47:80:b3 > 4e:5b:57:45:63:3b, ethertype IPv6 (0x86dd), length 118: fd00:10:244:2::9461 > fd00:10:244:1::4749: ICMP6, echo reply, id 44, seq 0, length 64

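- Source MAC 4e:5b:57:45:63:3b is the MAC address of eth0 in cni-7t9p4. Destination MAC a2:79:14:47:80:b3 is the MAC address of the lxc interface paired with that eth0.
- Source IPv6 fd00:10:244:1::4749 is the address of eth0 in cni-7t9p4. Destination IPv6 fd00:10:244:2::9461 is the address of the target Pod.
- So even though the next hop listed in the Pod's IPv6 routing table cannot be found on any interface, the packet can still be framed correctly.
- This is similar to Calico's 169.254.1.1: the address does not have to exist, as long as some hook intercepts the lookup and replies with the MAC address of the corresponding interface. Once srcMAC, srcIP, dstMAC, and dstIP are all known, the packet is complete and can be sent.
- In short, the procedure is: take the IPv6 next hop inside the container, capture packets to find the MAC address that answers for it, then trace where that MAC address comes from.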
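The first frames in the capture go to 33:33:ff:00:22:f1 and ff02::1:ff00:22f1. These are the solicited-node multicast addresses derived from the low 24 bits of the target fd00:10:244:1::22f1. The sketch below reproduces that derivation in plain shell. It is illustrative only: the function name is made up, and it assumes the address is written in colon-hex form with at most one `::`.

```shell
#!/bin/sh
# Derive the solicited-node multicast IPv6 address and its Ethernet
# multicast MAC from the low 24 bits of a unicast IPv6 address.
solicited_node() {
    addr=$1
    last=${addr##*:}          # final hextet, e.g. 22f1
    rest=${addr%:*}           # everything before the final ':'
    case $rest in
        *:) prev=0 ;;         # address ends in '::xxxx', so the previous hextet is zero
        *)  prev=${rest##*:} ;;
    esac
    [ -n "$last" ] || last=0
    [ -n "$prev" ] || prev=0
    lo=$((0x$last))           # low 16 bits
    hi=$((0x$prev & 0xff))    # next 8 bits
    printf 'ff02::1:ff%02x:%x 33:33:ff:%02x:%02x:%02x\n' \
        "$hi" "$lo" "$hi" "$((lo >> 8))" "$((lo & 0xff))"
}

solicited_node fd00:10:244:1::22f1
# prints: ff02::1:ff00:22f1 33:33:ff:00:22:f1
```

Both values match the destination fields of the neighbor solicitation in the capture.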

Packet capture on eth0 of the node hosting Pod cni-7t9p4

root@cilium-dual-stack-worker:/# tcpdump -pne -i eth0 -w /tmp/1.cap

root@cilium-dual-stack-worker:/# sz /tmp/1.cap

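In the request packet, the inner source MAC 4e:5b:57:45:63:3b is the MAC address of eth0 in cni-7t9p4, and the inner destination MAC a2:79:14:47:80:b3 is the MAC address of the lxc interface paired with it. The inner source IPv6 fd00:10:244:1::4749 is the address of eth0 in cni-7t9p4, and the inner destination IPv6 fd00:10:244:2::9461 is the address of the target Pod. These values are identical to what was captured on the Pod's eth0. The outer headers carry the node addresses, and the packet is forwarded with VXLAN encapsulation.

In the reply packet, the inner source and destination MAC addresses differ from those in the request. They are presumably the MAC addresses of the target Pod's eth0 and of its corresponding veth peer.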
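To keep the node-side capture small, tcpdump can be limited to the VXLAN traffic between the two nodes. A sketch, assuming the tunnel uses UDP port 8472 (the Linux VXLAN default, which Cilium also uses unless configured otherwise). The helper name `vxlan_filter` and the `<node-a-ip>` and `<node-b-ip>` placeholders are illustrative; the node addresses are not given in this post.

```shell
#!/bin/sh
# Build a tcpdump filter for VXLAN traffic between two node addresses.
# $1, $2: node IPs; $3: optional UDP port (assumed default 8472).
vxlan_filter() {
    port=${3:-8472}
    echo "udp port $port and host $1 and host $2"
}

# Example (run on the node; substitute real node IPs):
#   tcpdump -pne -i eth0 -w /tmp/1.cap "$(vxlan_filter <node-a-ip> <node-b-ip>)"
```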

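- Advantages of IPv6
  - With IPv4, address resolution is broadcast, so every host has to process the request before it can tell that the packet is not meant for it. With IPv6, neighbor solicitations go to a solicited-node multicast address (33:33:ff:00:22:f1 in the capture above), so a host can discard packets that are not addressed to it at Layer 2.
  - It provides far more IP addresses, at the cost of added complexity.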
