Getting Started with Open vSwitch on OpenShift

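I recently attended an OpenShift training course, and the product team's explanations refreshed some of my understanding of OpenShift. Today I'll start with my weakest area, networking.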

Open vSwitch (OVS) is the core component of OpenShift SDN. Log in to the cluster and list all the pods on one of the nodes:

    [root@master ~]# oc adm manage-node node1.example.com --list-pods
    Listing matched pods on node: node1.example.com
    NAMESPACE              NAME                                          READY   STATUS    RESTARTS   AGE
    default                docker-registry-1-trrdq                       1/1     Running   3          16d
    default                router-1-6zjmb                                1/1     Running   3          16d
    openshift-monitoring   alertmanager-main-0                           3/3     Running   11         16d
    openshift-monitoring   alertmanager-main-1                           3/3     Running   10         6d
    openshift-monitoring   alertmanager-main-2                           3/3     Running   11         6d
    openshift-monitoring   cluster-monitoring-operator-df6d9f48d-8lzcw   1/1     Running   4          16d
    openshift-monitoring   grafana-76cc4f64c-m25c4                       2/2     Running   8          16d
    openshift-monitoring   kube-state-metrics-8db94b768-tgfgq            3/3     Running   11         16d
    openshift-monitoring   node-exporter-mjclp                           2/2     Running   86         164d
    openshift-monitoring   prometheus-k8s-0                              4/4     Running   15         6d
    openshift-monitoring   prometheus-k8s-1                              4/4     Running   14         6d
    openshift-monitoring   prometheus-operator-959fc8dfd-ppc78           1/1     Running   9          16d
    openshift-node         sync-7mpsc                                    1/1     Running   47         164d
    openshift-sdn          ovs-rzc7j                                     1/1     Running   42         164d
    openshift-sdn          sdn-77s8t                                     1/1     Running   60         164d
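The rest of this post is about the sync, ovs, and sdn pods, so it can help to narrow the listing down to them. The following is a minimal sketch using awk; `sdn_pods` is a hypothetical helper name, and it assumes the column layout shown above (namespace in column 1, pod name in column 2). The sample input is copied from the listing so the sketch runs without a cluster.

```shell
# sdn_pods: read `oc adm manage-node <node> --list-pods` output on stdin and
# print "namespace/pod" for pods in the openshift-sdn and openshift-node namespaces.
# Assumption: column 1 is NAMESPACE and column 2 is NAME, as in the listing above.
sdn_pods() {
  awk '$1 == "openshift-sdn" || $1 == "openshift-node" { print $1 "/" $2 }'
}

# Sample rows taken from the listing above.
sample_pods='Listing matched pods on node: node1.example.com
NAMESPACE NAME READY STATUS RESTARTS AGE
default router-1-6zjmb 1/1 Running 3 16d
openshift-node sync-7mpsc 1/1 Running 47 164d
openshift-sdn ovs-rzc7j 1/1 Running 42 164d
openshift-sdn sdn-77s8t 1/1 Running 60 164d'

printf '%s\n' "$sample_pods" | sdn_pods
```

Against a live cluster the same filter would be used as `oc adm manage-node node1.example.com --list-pods | sdn_pods`.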

Sync Pod

The sync pod is created by the sync DaemonSet. Its main job is to monitor /etc/sysconfig/atomic-openshift-node for changes, in particular the BOOTSTRAP_CONFIG_NAME setting.

BOOTSTRAP_CONFIG_NAME is set by openshift-ansible during installation and names a ConfigMap that corresponds to a node configuration group. By default OpenShift provides the following node configuration groups:

- node-config-master
- node-config-infra
- node-config-compute
- node-config-all-in-one
- node-config-master-infra

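The sync pod converts the ConfigMap data into kubelet configuration and generates /etc/origin/node/node-config.yaml for the node. If the configuration in that file changes, the kubelet is restarted.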
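To check which configuration group a node is using, you can read BOOTSTRAP_CONFIG_NAME straight from the sysconfig file. This is a minimal sketch, assuming the file holds a plain `KEY=value` line; `bootstrap_config_name` is a hypothetical helper, and the demo file contents (including the `OPTIONS` line) are made up for illustration rather than copied from a real node.

```shell
# bootstrap_config_name FILE: print the value of BOOTSTRAP_CONFIG_NAME from a
# sysconfig-style file. Assumption: a plain KEY=value line, optionally quoted.
bootstrap_config_name() {
  sed -n 's/^BOOTSTRAP_CONFIG_NAME=//p' "$1" | tr -d "\"'" | tail -n 1
}

# Demo on a temporary file instead of the real /etc/sysconfig/atomic-openshift-node.
demo_file="$(mktemp)"
printf '%s\n' 'OPTIONS=--loglevel=2' 'BOOTSTRAP_CONFIG_NAME=node-config-compute' > "$demo_file"

bootstrap_config_name "$demo_file"   # prints node-config-compute
```

On a node you would call `bootstrap_config_name /etc/sysconfig/atomic-openshift-node`. The ConfigMap with that name can then be inspected with `oc get configmap`; which namespace it lives in (I believe openshift-node) is worth confirming on your own cluster.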

0. Introduction to OVS

OVS Pod

The ovs pod uses Open vSwitch to provide the container network for OpenShift. It is a containerized deployment of Open vSwitch, and its core components are described below.

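- ovs-vswitchd

The ovs-vswitchd daemon is the core of OVS. Together with the datapath kernel module, it implements OVS's flow-based switching. It talks to an upstream OpenFlow controller using the OpenFlow protocol, to ovsdb-server using the OVSDB protocol, and to the datapath kernel module over netlink. On startup, ovs-vswitchd reads its configuration from ovsdb-server and then configures the datapaths in the kernel and all OVS switches. When the configuration in ovsdb changes (for example, through the ovs-vsctl tool), ovs-vswitchd automatically updates its own configuration to stay in sync with the database.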

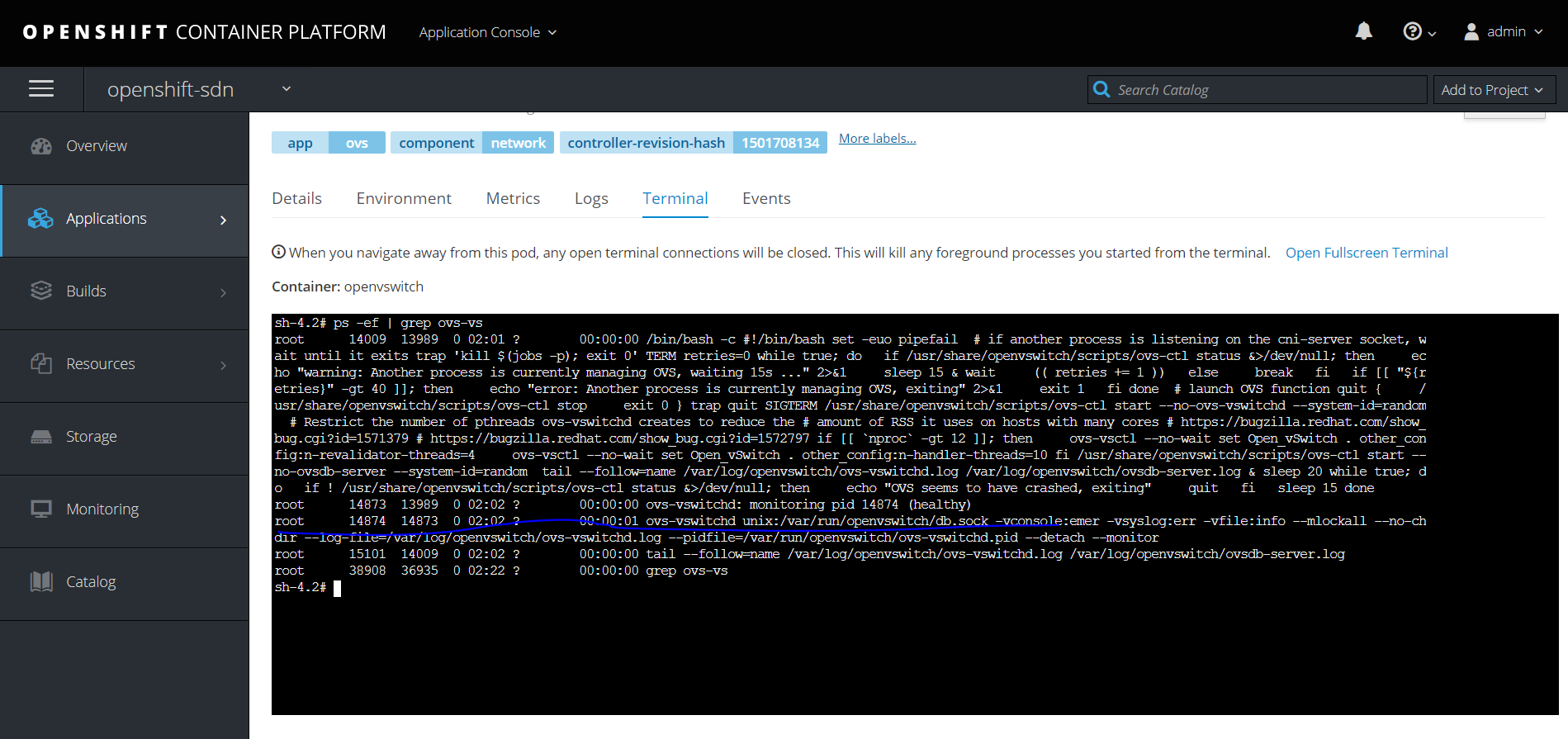

Inside the ovs pod you can see the core process running:

root 14874 14873 0 02:02 ? 00:00:01 ovs-vswitchd unix:/var/run/openvswitch/db.sock -vconsole:emer -vsyslog:err -vfile:info --mlockall --no-chdir --log-file=/var/log/openvswitch/ovs-vswitchd.log --pidfile=/var/run/openvswitch/ovs-vswitchd.pid --detach --monitor

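- ovsdb-server

ovsdb-server is OVS's lightweight database service. It stores the configuration for the whole of OVS, including interfaces, switching content, VLANs, and so on. The main OVS process, ovs-vswitchd, works from the configuration held in this database. The details of the ovsdb-server process are shown below: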

    sh-4.2# ps -ef | grep ovsdb-server
    root 14009 13989 0 02:01 ? 00:00:00 /bin/bash -c #!/bin/bash
    set -euo pipefail
    # if another process is listening on the cni-server socket, wait until it exits
    trap 'kill $(jobs -p); exit 0' TERM
    retries=0
    while true; do
      if /usr/share/openvswitch/scripts/ovs-ctl status &>/dev/null; then
        echo "warning: Another process is currently managing OVS, waiting 15s ..." 2>&1
        sleep 15 & wait
        (( retries += 1 ))
      else
        break
      fi
      if [[ "${retries}" -gt 40 ]]; then
        echo "error: Another process is currently managing OVS, exiting" 2>&1
        exit 1
      fi
    done
    # launch OVS
    function quit {
      /usr/share/openvswitch/scripts/ovs-ctl stop
      exit 0
    }
    trap quit SIGTERM
    /usr/share/openvswitch/scripts/ovs-ctl start --no-ovs-vswitchd --system-id=random
    # Restrict the number of pthreads ovs-vswitchd creates to reduce the
    # amount of RSS it uses on hosts with many cores
    # https://bugzilla.redhat.com/show_bug.cgi?id=1571379
    # https://bugzilla.redhat.com/show_bug.cgi?id=1572797
    if [[ `nproc` -gt 12 ]]; then
      ovs-vsctl --no-wait set Open_vSwitch . other_config:n-revalidator-threads=4
      ovs-vsctl --no-wait set Open_vSwitch . other_config:n-handler-threads=10
    fi
    /usr/share/openvswitch/scripts/ovs-ctl start --no-ovsdb-server --system-id=random
    tail --follow=name /var/log/openvswitch/ovs-vswitchd.log /var/log/openvswitch/ovsdb-server.log &
    sleep 20
    while true; do
      if ! /usr/share/openvswitch/scripts/ovs-ctl status &>/dev/null; then
        echo "OVS seems to have crashed, exiting"
        quit
      fi
      sleep 15
    done
    root 14382 13989 0 02:02 ? 00:00:00 ovsdb-server: monitoring pid 14383 (healthy)
    root 14383 14382 0 02:02 ? 00:00:00 ovsdb-server /etc/openvswitch/conf.db -vconsole:emer -vsyslog:err -vfile:info --remote=punix:/var/run/openvswitch/db.sock --private-key=db:Open_vSwitch,SSL,private_key --certificate=db:Open_vSwitch,SSL,certificate --bootstrap-ca-cert=db:Open_vSwitch,SSL,ca_cert --no-chdir --log-file=/var/log/openvswitch/ovsdb-server.log --pidfile=/var/run/openvswitch/ovsdb-server.pid --detach --monitor
    root 15101 14009 0 02:02 ? 00:00:00 tail --follow=name /var/log/openvswitch/ovs-vswitchd.log /var/log/openvswitch/ovsdb-server.log
    root 44875 36935 0 02:27 ? 00:00:00 grep ovsdb-server

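- OpenFlow

OpenFlow is an open protocol for managing switch flow tables. Its place in OVS can be seen in the architecture diagram above: it is the protocol spoken between the controller and ovs-vswitchd. Note that OpenFlow is a complete, standalone flow-table protocol that does not depend on OVS; OVS simply supports it. With that support, an OpenFlow controller can manage the flow tables in OVS. OpenFlow is not limited to virtual switches either, as some hardware switches support it too.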

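- Controller

Controller refers to an OpenFlow controller. It can connect over the OpenFlow protocol to any switch that supports OpenFlow, such as OVS, and it steers traffic by pushing flow rules down to the switch. Besides configuring OVS flows through an OpenFlow controller, you can also use the ovs-ofctl command that ships with OVS. It connects to OVS over OpenFlow to configure flows, and it can also monitor the running state of OVS dynamically.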

- Kernel Datapath

The datapath is a Linux kernel module that performs the actual packet switching.

1. Network Architecture

The diagram below gives a fairly intuitive picture.

Let's look at a real environment first:

    [root@node1 ~]# ip addr show
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: enp0s3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000
        link/ether 08:00:27:dc:99:1a brd ff:ff:ff:ff:ff:ff
        inet 192.168.56.104/24 brd 192.168.56.255 scope global noprefixroute enp0s3
           valid_lft forever preferred_lft forever
        inet6 fe80::a00:27ff:fedc:991a/64 scope link tentative dadfailed
           valid_lft forever preferred_lft forever
    3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default
        link/ether 02:42:1e:32:6a:69 brd ff:ff:ff:ff:ff:ff
        inet 172.17.0.1/16 scope global docker0
           valid_lft forever preferred_lft forever
    8: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000
        link/ether 42:ea:54:f3:41:32 brd ff:ff:ff:ff:ff:ff
    9: br0: <BROADCAST,MULTICAST> mtu 1450 qdisc noop state DOWN group default qlen 1000
        link/ether 8e:be:50:76:b7:45 brd ff:ff:ff:ff:ff:ff
    10: vxlan_sys_4789: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 65000 qdisc noqueue master ovs-system state UNKNOWN group default qlen 1000
        link/ether 56:e0:7e:45:e9:5b brd ff:ff:ff:ff:ff:ff
        inet6 fe80::54e0:7eff:fe45:e95b/64 scope link
           valid_lft forever preferred_lft forever
    11: tun0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default qlen 1000
        link/ether 1a:57:ef:7d:84:2a brd ff:ff:ff:ff:ff:ff
        inet 10.130.0.1/23 brd 10.130.1.255 scope global tun0
           valid_lft forever preferred_lft forever
        inet6 fe80::1857:efff:fe7d:842a/64 scope link
           valid_lft forever preferred_lft forever
    12: veth4da99f3a@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 4e:7c:96:3b:db:3c brd ff:ff:ff:ff:ff:ff link-netnsid 0
        inet6 fe80::4c7c:96ff:fe3b:db3c/64 scope link
           valid_lft forever preferred_lft forever
    13: veth4596fc96@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 2a:83:b7:1b:e0:81 brd ff:ff:ff:ff:ff:ff link-netnsid 1
        inet6 fe80::2883:b7ff:fe1b:e081/64 scope link
           valid_lft forever preferred_lft forever
    14: veth04caa6b2@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 3a:5b:9a:62:9c:f4 brd ff:ff:ff:ff:ff:ff link-netnsid 2
        inet6 fe80::385b:9aff:fe62:9cf4/64 scope link
           valid_lft forever preferred_lft forever
    15: veth14f14b18@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether ca:d2:96:48:84:be brd ff:ff:ff:ff:ff:ff link-netnsid 3
        inet6 fe80::c8d2:96ff:fe48:84be/64 scope link
           valid_lft forever preferred_lft forever
    16: veth31713a78@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether ce:c3:8f:3e:b9:41 brd ff:ff:ff:ff:ff:ff link-netnsid 4
        inet6 fe80::ccc3:8fff:fe3e:b941/64 scope link
           valid_lft forever preferred_lft forever
    17: veth9ff2f96a@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 16:f8:11:d2:28:7a brd ff:ff:ff:ff:ff:ff link-netnsid 5
        inet6 fe80::14f8:11ff:fed2:287a/64 scope link
           valid_lft forever preferred_lft forever
    18: veth86a4a302@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 56:75:ab:7a:17:28 brd ff:ff:ff:ff:ff:ff link-netnsid 6
        inet6 fe80::5475:abff:fe7a:1728/64 scope link
           valid_lft forever preferred_lft forever
    19: vethb4141622@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 46:d1:df:02:5c:d3 brd ff:ff:ff:ff:ff:ff link-netnsid 7
        inet6 fe80::44d1:dfff:fe02:5cd3/64 scope link
           valid_lft forever preferred_lft forever
    20: vethae772509@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether c6:12:af:03:f0:b7 brd ff:ff:ff:ff:ff:ff link-netnsid 8
        inet6 fe80::c412:afff:fe03:f0b7/64 scope link
           valid_lft forever preferred_lft forever
    25: veth4fbfae38@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master ovs-system state UP group default
        link/ether 82:f3:e8:87:9b:e9 brd ff:ff:ff:ff:ff:ff link-netnsid 9
        inet6 fe80::80f3:e8ff:fe87:9be9/64 scope link
           valid_lft forever preferred_lft forever

That is a lot of interfaces. On a node, OpenShift SDN typically creates the following types of interface:

- br0: the OVS bridge device that containers are attached to. OpenShift SDN also configures a set of non-subnet-specific flow rules on this bridge.
- tun0: the gateway for external network access. It is an OVS internal port (port 2 on br0) that is assigned the cluster subnet gateway address. OpenShift SDN configures netfilter and routing rules to enable access from the cluster subnet to the external network via NAT.
- vxlan_sys_4789: the device used to reach pods on other nodes. It is the OVS VXLAN device (port 1 on br0), which provides access to containers on remote nodes, and it is referred to as vxlan0 in the OVS rules.
- vethX (in the main netns): the virtual NIC associated with a pod. It is the Linux virtual ethernet peer of eth0 in the Docker netns, and it is attached to the OVS bridge on one of the other ports.

Read alongside the diagram above, this description becomes much clearer.
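The interface types listed above can be turned into a small lookup for labelling what you see on a node. This is only a sketch: `iface_role` is a hypothetical helper, and the role labels are my own shorthand, not names used by OpenShift.

```shell
# iface_role NAME: map an interface name to the role described in the list above.
# The role labels are illustrative shorthand only.
iface_role() {
  case "$1" in
    br0)         echo "ovs-bridge" ;;
    tun0)        echo "cluster-gateway" ;;
    vxlan_sys_*) echo "vxlan-tunnel" ;;
    veth*)       echo "pod-veth" ;;
    *)           echo "other" ;;
  esac
}

# Interface names taken from the `ip addr show` output earlier in this post.
for name in lo enp0s3 docker0 br0 vxlan_sys_4789 tun0 veth4da99f3a@if3; do
  printf '%-18s %s\n' "$name" "$(iface_role "$name")"
done
```

The `veth*` pattern also matches names carrying the `@if3` suffix that `ip` prints, so the loop could be fed from `ip -o link show` on a real node.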

The brctl command cannot see the OVS bridge; it shows only docker0:

    [root@node1 ~]# brctl show
    bridge name     bridge id               STP enabled     interfaces
    docker0         8000.02421e326a69       no

To see the br0 bridge and the information attached to it, you need the openvswitch tools installed.

2. Installing Open vSwitch

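Based on https://access.redhat.com/solutions/3710321

Since version 3.10, Open vSwitch runs in a pod (the ovs pod in the openshift-sdn namespace listed earlier) rather than as a host service, so it is no longer preinstalled on the nodes. If you need it on the host, follow the steps below. Alternatively, you can run the same commands directly inside the ovs pod.

    # subscription-manager register
    # subscription-manager list --available
    # subscription-manager attach --pool=<pool_id>
    # subscription-manager repos --enable=rhel-7-server-extras-rpms
    # subscription-manager repos --enable=rhel-7-server-optional-rpms
    # yum install -y openvswitch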

List the bridges:

[root@node1 network-scripts]# ovs-vsctl list-br

br0

List the ports on the bridge:

    [root@node1 network-scripts]# ovs-ofctl -O OpenFlow13 dump-ports-desc br0
    OFPST_PORT_DESC reply (OF1.3) (xid=0x2):
     1(vxlan0): addr:a2:01:b9:7d:a6:c3
         config:     0
         state:      LIVE
         speed: 0 Mbps now, 0 Mbps max
     2(tun0): addr:de:48:d6:e7:a9:91
         config:     0
         state:      LIVE
         speed: 0 Mbps now, 0 Mbps max
     3(veth04fb5821): addr:66:20:89:fa:e0:9f
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     4(vethbdebc4f7): addr:d6:f8:92:06:3e:da
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     5(veth9fd3926f): addr:5e:60:13:c8:30:9e
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     6(veth3b466fa9): addr:ee:3f:1b:cb:cf:9b
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     7(veth866c42b5): addr:be:7e:e6:d2:2f:f1
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     8(veth2446bc66): addr:96:5b:fe:87:10:30
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     9(veth1afcb012): addr:36:3b:de:9d:82:8b
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     10(vethd31bb8c7): addr:06:8f:41:50:ba:72
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     11(vethe02a7907): addr:36:c4:af:c3:c8:26
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     12(veth06b13117): addr:0a:53:73:5e:21:d7
         config:     0
         state:      LIVE
         current:    10GB-FD COPPER
         speed: 10000 Mbps now, 0 Mbps max
     LOCAL(br0): addr:b2:a3:b4:47:9d:4e
         config:     PORT_DOWN
         state:      LINK_DOWN
         speed: 0 Mbps now, 0 Mbps max
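Flow rules refer to ports by number (for example `in_port=1`), so a number-to-name map makes them easier to read. The sketch below pulls that map out of `dump-ports-desc` output with sed. `ofport_names` is a hypothetical helper, and it assumes each port header has the form `N(name): addr:...` at the start of a line, as ovs-ofctl prints it.

```shell
# ofport_names: read `ovs-ofctl dump-ports-desc` output on stdin and print
# "<port> <name>" for each port header line such as " 2(tun0): addr:de:48:...".
# Assumption: one port header per line in the "N(name): addr:..." form.
ofport_names() {
  sed -n 's/^ *\([0-9A-Z]\{1,\}\)(\([^)]*\)): addr:.*/\1 \2/p'
}

# A few lines taken from the port listing for br0.
sample_ports='OFPST_PORT_DESC reply (OF1.3) (xid=0x2):
 1(vxlan0): addr:a2:01:b9:7d:a6:c3
     config:     0
 2(tun0): addr:de:48:d6:e7:a9:91
 3(veth04fb5821): addr:66:20:89:fa:e0:9f
 LOCAL(br0): addr:b2:a3:b4:47:9d:4e'

printf '%s\n' "$sample_ports" | ofport_names
```

With OVS tools available this would be used as `ovs-ofctl -O OpenFlow13 dump-ports-desc br0 | ofport_names`; port 1 maps to vxlan0 and port 2 to tun0, matching the interface descriptions earlier in this post.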

Show the full configuration:

    [root@node1 ~]# ovs-vsctl show
    c2bad35f-9494-4055-aa1c-b255d0ee7e60
        Bridge "br0"
            fail_mode: secure
            Port "tun0"
                Interface "tun0"
                    type: internal
            Port "vethfff599a6"
                Interface "vethfff599a6"
            Port "br0"
                Interface "br0"
                    type: internal
            Port "veth38efc56f"
                Interface "veth38efc56f"
            Port "veth80b6c074"
                Interface "veth80b6c074"
            Port "veth4cdb026d"
                Interface "veth4cdb026d"
            Port "veth42f411d0"
                Interface "veth42f411d0"
            Port "vxlan0"
                Interface "vxlan0"
                    type: vxlan
                    options: {dst_port="4789", key=flow, remote_ip=flow}
            Port "veth5bf0c012"
                Interface "veth5bf0c012"
            Port "vethb80cca24"
                Interface "vethb80cca24"
            Port "veth2335064f"
                Interface "veth2335064f"
            Port "vethf857e799"
                Interface "vethf857e799"
            Port "veth325c8496"
                Interface "veth325c8496"
        ovs_version: "2.9.0"

View the flow table:

    [root@node1 network-scripts]# ovs-ofctl -O OpenFlow13 dump-flows br0
    OFPST_FLOW reply (OF1.3) (xid=0x2):
     cookie=0x0, duration=788.192s, table=0, n_packets=0, n_bytes=0, priority=250,ip,in_port=2,nw_dst=224.0.0.0/4 actions=drop
     cookie=0x0, duration=788.192s, table=0, n_packets=0, n_bytes=0, priority=200,arp,in_port=1,arp_spa=10.128.0.0/14,arp_tpa=10.130.0.0/23 actions=move:NXM_NX_TUN_ID[0..31]->NXM_NX_REG0[],goto_table:10
     cookie=0x0, duration=788.192s, table=0, n_packets=0, n_bytes=0, priority=200,ip,in_port=1,nw_src=10.128.0.0/14 actions=move:NXM_NX_TUN_ID[0..31]->NXM_NX_REG0[],goto_table:10
     cookie=0x0, duration=788.192s, table=0, n_packets=0, n_bytes=0, priority=200,ip,in_port=1,nw_dst=10.128.0.0/14 actions=move:NXM_NX_TUN_ID[0..31]->NXM_NX_REG0[],goto_table:10
     cookie=0x0, duration=788.192s, table=0, n_packets=98, n_bytes=4116, priority=200,arp,in_port=2,arp_spa=10.130.0.1,arp_tpa=10.128.0.0/14 actions=goto_table:30
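Most flows in a dump have `n_packets=0`, so when diagnosing it is often quicker to look only at the rules that have actually matched traffic. The following is a rough sketch with awk; `active_flows` is a hypothetical helper, and it assumes the usual ovs-ofctl layout in which the match and the actions are the last two whitespace-separated fields.

```shell
# active_flows: read `ovs-ofctl dump-flows` output on stdin and print
# "table=<n> n_packets=<n> <match> <actions>" for flows with n_packets > 0.
# Assumption: match and actions are the last two whitespace-separated fields.
active_flows() {
  awk '
    {
      table = ""; packets = ""
      for (i = 1; i <= NF; i++) {
        if ($i ~ /^table=/)     { table = $i;   gsub(/[^0-9]/, "", table) }
        if ($i ~ /^n_packets=/) { packets = $i; gsub(/[^0-9]/, "", packets) }
      }
      if (packets + 0 > 0) print "table=" table, "n_packets=" packets, $(NF - 1), $NF
    }'
}

# Two flows copied from the dump of br0: one idle, one that has matched packets.
sample_flows='OFPST_FLOW reply (OF1.3) (xid=0x2):
 cookie=0x0, duration=788.192s, table=0, n_packets=0, n_bytes=0, priority=250,ip,in_port=2,nw_dst=224.0.0.0/4 actions=drop
 cookie=0x0, duration=788.192s, table=0, n_packets=98, n_bytes=4116, priority=200,arp,in_port=2,arp_spa=10.130.0.1,arp_tpa=10.128.0.0/14 actions=goto_table:30'

printf '%s\n' "$sample_flows" | active_flows
```

In the sample only the ARP rule on `in_port=2` (tun0) is printed, since it is the only one with a non-zero packet count. On a node the filter would be fed from `ovs-ofctl -O OpenFlow13 dump-flows br0`.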

That's a start, and I'll leave it here for now. For more on network diagnostics, see

https://docs.openshift.com/container-platform/3.11/admin_guide/sdn_troubleshooting.html

For background material on Open vSwitch, see

https://opengers.github.io/openstack/openstack-base-use-openvswitch/
