人工智能作业

BP神经网络

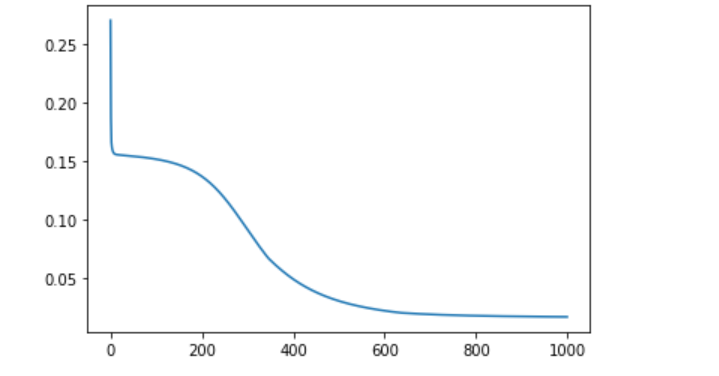

import pandas as pd import numpy as np import matplotlib.pyplot as plt def sigmoid(x): # 定义网络激活函数 return 1/(1+np.exp(-x)) data_tr = pd.read_csv('D:\\人工智能\\data.txt') # 训练集样本 data_te = pd.read_csv('D:\\人工智能\\ceshi.txt') # 测试集样本 n = len(data_tr) yita = 0.85 # 自己设置学习速率 out_in = np.array([0.0, 0, 0, 0, -1]) # 输出层的输入,即隐层的输出 w_mid = np.zeros([3,4]) # 隐层神经元的权值&阈值 w_out = np.zeros([5]) # 输出层神经元的权值&阈值 delta_w_out = np.zeros([5]) # 输出层权值&阈值的修正量 delta_w_mid = np.zeros([3,4]) # 中间层权值&阈值的修正量 Err = [] ''' 模型训练 ''' for j in range(1000): error = [] for it in range(n): net_in = np.array([data_tr.iloc[it, 0], data_tr.iloc[it, 1], -1]) # 网络输入 real = data_tr.iloc[it, 2] for i in range(4): out_in[i] = sigmoid(sum(net_in * w_mid[:, i])) # 从输入到隐层的传输过程 res = sigmoid(sum(out_in * w_out)) # 模型预测值 error.append(abs(real-res))#误差 print(it, '个样本的模型输出:', res, 'real:', real) delta_w_out = yita*res*(1-res)*(real-res)*out_in # 输出层权值的修正量 delta_w_out[4] = -yita*res*(1-res)*(real-res) # 输出层阈值的修正量 w_out = w_out + delta_w_out # 更新,加上修正量 for i in range(4): delta_w_mid[:, i] = yita*out_in[i]*(1-out_in[i])*w_out[i]*res*(1-res)*(real-res)*net_in # 中间层神经元的权值修正量 delta_w_mid[2, i] = -yita*out_in[i]*(1-out_in[i])*w_out[i]*res*(1-res)*(real-res) # 中间层神经元的阈值修正量,第2行是阈值 w_mid = w_mid + delta_w_mid # 更新,加上修正量 Err.append(np.mean(error)) print(w_mid,w_out) plt.plot(Err)#训练集上每一轮的平均误差 plt.show() plt.close() ''' 将测试集样本放入训练好的网络中去 ''' error_te = [] for it in range(len(data_te)): net_in = np.array([data_te.iloc[it, 0], data_te.iloc[it, 1], -1]) # 网络输入 real = data_te.iloc[it, 2] for i in range(4): out_in[i] = sigmoid(sum(net_in * w_mid[:, i])) # 从输入到隐层的传输过程 res = sigmoid(sum(out_in * w_out)) # 模型预测值 error_te.append(abs(real-res)) plt.plot(error_te)#测试集上每一轮的误差 plt.show() np.mean(error_te)

手工搭建神经网络

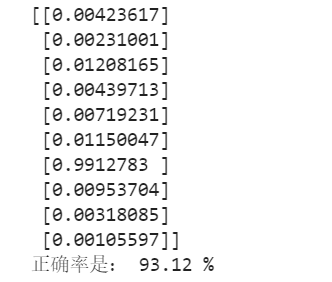

import numpy as np import scipy.special import pylab import matplotlib.pyplot as plt #%% class NeuralNetwork(): # 初始化神经网络 def __init__(self, inputnodes, hiddennodes, outputnodes, learningrate): # 设置输入层节点,隐藏层节点和输出层节点的数量和学习率 self.inodes = inputnodes self.hnodes = hiddennodes self.onodes = outputnodes self.lr = learningrate #设置神经网络中的学习率 # 使用正态分布,进行权重矩阵的初始化 self.wih = np.random.normal(0.0, pow(self.hnodes, -0.5), (self.hnodes, self.inodes)) #(mu,sigma,矩阵) self.who = np.random.normal(0.0, pow(self.onodes, -0.5), (self.onodes, self.hnodes)) self.activation_function = lambda x: scipy.special.expit(x) #激活函数设为Sigmod()函数 pass # 定义训练神经网络 print("************Train start******************") def train(self,input_list,target_list): # 将输入、输出列表转换为二维数组 inputs = np.array(input_list, ndmin=2).T #T:转置 targets = np.array(target_list,ndmin= 2).T hidden_inputs = np.dot(self.wih, inputs) #计算到隐藏层的信号,dot()返回的是两个数组的点积 hidden_outputs = self.activation_function(hidden_inputs) #计算隐藏层输出的信号 final_inputs = np.dot(self.who, hidden_outputs) #计算到输出层的信号 final_outputs = self.activation_function(final_inputs) output_errors = targets - final_outputs #计算输出值与标签值的差值 #print("*****************************") #print("output_errors:",output_errors) hidden_errors = np.dot(self.who.T,output_errors) #隐藏层和输出层权重更新 self.who += self.lr * np.dot((output_errors*final_outputs*(1.0-final_outputs)), np.transpose(hidden_outputs))#transpose()转置 #输入层和隐藏层权重更新 self.wih += self.lr * np.dot((hidden_errors*hidden_outputs*(1.0-hidden_outputs)), np.transpose(inputs))#转置 pass #查询神经网络 def query(self, input_list): # 转换输入列表到二维数 inputs = np.array(input_list, ndmin=2).T #计算到隐藏层的信号 hidden_inputs = np.dot(self.wih, inputs) #计算隐藏层输出的信号 hidden_outputs = self.activation_function(hidden_inputs) #计算到输出层的信号 final_inputs = np.dot(self.who, hidden_outputs) final_outputs = self.activation_function(final_inputs) return final_outputs #%% input_nodes = 784 #输入层神经元个数 hidden_nodes = 100 #隐藏层神经元个数 output_nodes = 10 #输出层神经元个数 learning_rate = 0.4 #学习率为0.4 # 创建神经网络 n = NeuralNetwork(input_nodes, hidden_nodes, output_nodes, learning_rate) #%% #读取训练数据集 转化为列表 training_data_file = open(r'D:\人工智能\mnist_train.csv') training_data_list = training_data_file.readlines() #方法用于读取所有行,并返回列表 #print("training_data_list:",training_data_list) training_data_file.close() #%% #训练次数 i = 2 for e in range(i): #训练神经网络 for record in training_data_list: all_values = record.split(',') #根据逗号,将文本数据进行拆分 #将文本字符串转化为实数,并创建这些数字的数组。 inputs = (np.asfarray(all_values[1:])/255.0 * 0.99) + 0.01 #创建用零填充的数组,数组的长度为output_nodes,加0.01解决了0输入造成的问题 targets = np.zeros(output_nodes) + 0.01 #10个元素都为0.01的数组 #使用目标标签,将正确元素设置为0.99 targets[int(all_values[0])] = 0.99#all_values[0]=='8' n.train(inputs,targets) pass pass #%% test_data_file = open(r'D:\人工智能\mnist_test.csv') test_data_list = test_data_file.readlines() test_data_file.close() all_values = test_data_list[2].split(',') #第3条数据,首元素为1 # print(all_values) # print(len(all_values)) # print(all_values[0]) #输出目标值 #%% score = [] print("***************Test start!**********************") for record in test_data_list: #用逗号分割将数据进行拆分 all_values = record.split(',') #正确的答案是第一个值 correct_values = int(all_values[0]) # print(correct_values,"是正确的期望值") #做输入 inputs = (np.asfarray(all_values[1:])/255.0 * 0.99) + 0.01 #测试网络 作输入 outputs= n.query(inputs)#10行一列的矩阵 #找出输出的最大值的索引 label = np.argmax(outputs) # print(label,"是网络的输出值\n") #如果期望值和网络的输出值正确 则往score 数组里面加1 否则添加0 if(label == correct_values): score.append(1) else: score.append(0) pass pass print(outputs) #%% # print(score) score_array = np.asfarray(score) #%% print("正确率是:",(score_array.sum()/score_array.size)*100,'%')

bp网络

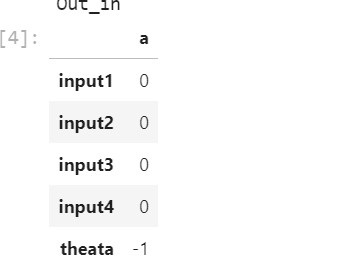

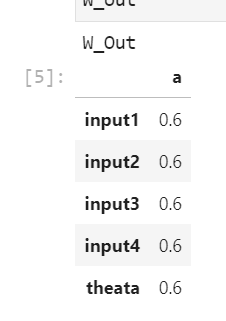

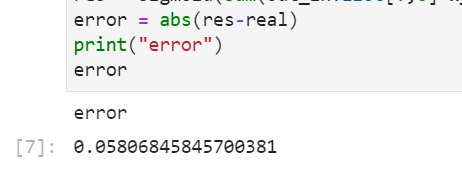

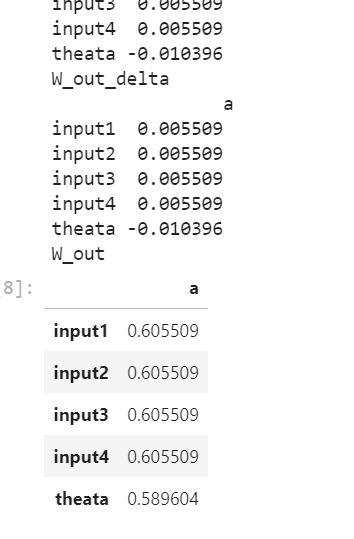

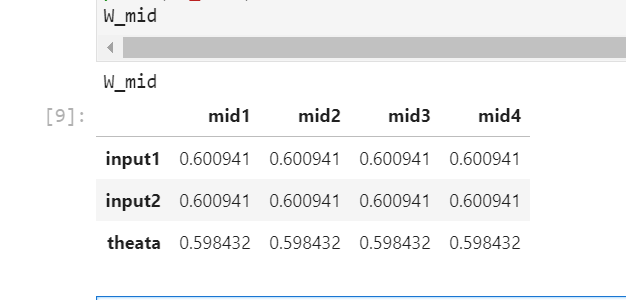

def sigmoid(x): #映射函数 return 1/(1+math.exp(-x)) #%% import math import numpy as np import pandas as pd from pandas import DataFrame #%% Net_in = DataFrame(0.6,index=['input1','input2','theata'],columns=['a']) Out_in = DataFrame(0,index=['input1','input2','input3','input4','theata'],columns=['a']) Net_in.iloc[2,0] = -1 Out_in.iloc[4,0] = -1 real=Net_in.iloc[0,0]**2+Net_in.iloc[1,0]**2 print("Out_in") Out_in W_mid=DataFrame(0.6,index=['input1','input2','theata'],columns=['mid1','mid2','mid3','mid4']) W_out=DataFrame(0.6,index=['input1','input2','input3','input4','theata'],columns=['a']) W_mid_delta=DataFrame(0,index=['input1','input2','theata'],columns=['mid1','mid2','mid3','mid4']) W_out_delta=DataFrame(0,index=['input1','input2','input3','input4','theata'],columns=['a']) W_mid print("W_Out") W_out for i in range(0,4): Out_in.iloc[i,0] = sigmoid(sum(W_mid.iloc[:,i]*Net_in.iloc[:,0])) #输出层的输出/网络输出 res = sigmoid(sum(Out_in.iloc[:,0]*W_out.iloc[:,0])) error = abs(res-real) print("error") error yita=0.8 #输出层权值变化量 W_out_delta.iloc[:,0] = yita*res*(1-res)*(real-res)*Out_in.iloc[:,0] print("W_out_delta",'\n',W_out_delta) W_out_delta.iloc[4,0] = -(yita*res*(1-res)*(real-res))#更新输出层阈值theata print("W_out_delta",'\n',W_out_delta) W_out = W_out + W_out_delta #输出层权值更新 print("W_out") W_out for i in range(0,4): W_mid_delta.iloc[:,i] = yita*Out_in.iloc[i,0]*(1-Out_in.iloc[i,0])*W_out.iloc[i,0]*res*(1-res)*(real-res)*Net_in.iloc[:,0] W_mid_delta.iloc[2,i] = -(yita*Out_in.iloc[i,0]*(1-Out_in.iloc[i,0])*W_out.iloc[i,0]*res*(1-res)*(real-res))#更新隐含层阈值theat W_mid = W_mid + W_mid_delta #中间层权值更新 print("W_mid") W_mid

浙公网安备 33010602011771号

浙公网安备 33010602011771号