RDD之四:Value型Transformation算子

处理数据类型为Value型的Transformation算子可以根据RDD变换算子的输入分区与输出分区关系分为以下几种类型:

1)输入分区与输出分区一对一型

2)输入分区与输出分区多对一型

3)输入分区与输出分区多对多型

4)输出分区为输入分区子集型

5)还有一种特殊的输入与输出分区一对一的算子类型:Cache型。 Cache算子对RDD分区进行缓存

输入分区与输出分区一对一型

(1)map

1.map(func):数据集中的每个元素经过用户自定义的函数转换形成一个新的RDD,新的RDD叫MappedRDD.

package com.sf.transform.base; import org.apache.spark.SparkContext import org.apache.spark.SparkConf object Map { def main(args: Array[String]) { val conf = new SparkConf().setMaster("local").setAppName("map") val sc = new SparkContext(conf) val rdd = sc.parallelize(1 to 10) //创建RDD val map = rdd.map(_*2) //对RDD中的每个元素都乘于2 map.foreach(x => print(x+" ")) sc.stop() } }

2 4 6 8 10 12 14 16 18 20

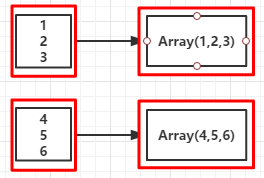

(2)flatMap

与map类似,但每个元素输入项都可以被映射到0个或多个的输出项,最终将结果”扁平化“后输出。

package com.sf.transform.base import org.apache.spark.SparkContext import org.apache.spark.SparkConf object FlatMap { def main(args: Array[String]) { val conf = new SparkConf().setMaster("local").setAppName("map") val sc = new SparkContext(conf) val rdd = sc.parallelize(1 to 5) val fm = rdd.map(x => (1 to x)).collect() fm.foreach(x => print(x + " ")) sc.stop() } }

1 1 2 1 2 3 1 2 3 4 1 2 3 4 5

Range(1) Range(1, 2) Range(1, 2, 3) Range(1, 2, 3, 4) Range(1, 2, 3, 4, 5)

(RDD依赖图)

(3)mapPartitions

mapPartitions函数获取到每个分区的迭代器,在函数中通过这个分区整体的迭代器对整个分区的元素进行操作。 内部实现是生成MapPartitionsRDD。

类似与map,map作用于每个分区的每个元素,但mapPartitions作用于每个分区中

package com.sf.transform.base import org.apache.spark.SparkContext import org.apache.spark.SparkConf object MapPartitions { //定义函数 def partitionsFun( /*index : Int,*/ iter: Iterator[(String, String)]): Iterator[String] = { var woman = List[String]() while (iter.hasNext) { val next = iter.next() next match { case (_, "female") => woman = /*"["+index+"]"+*/ next._1 :: woman case _ => } } return woman.iterator } def main(args: Array[String]) { val conf = new SparkConf().setMaster("local").setAppName("mappartitions") val sc = new SparkContext(conf) val list = List(("kpop", "female"), ("zorro", "male"), ("mobin", "male"), ("lucy", "female")) val rdd = sc.parallelize(list, 2) //第二个参数是分区数 val mp = rdd.mapPartitions(partitionsFun) /*val mp = rdd.mapPartitionsWithIndex(partitionsFun)*/ mp.collect.foreach(x => (println(x + " "))) //将分区中的元素转换成Array再输出 } }

kpop lucy

val mp = rdd.mapPartitions(x => x.filter(_._2 == "female")).map(x => x._1)

4.mapPartitionsWithIndex(func):与mapPartitions类似,不同的是函数多了个分区索引的参数

package com.sf.transform.base

import org.apache.spark.SparkContext

import org.apache.spark.SparkConf

object MapPartitions {

//定义函数

def partitionsFun( iter: Iterator[(String, String)]): Iterator[String] = {

var woman = List[String]()

while (iter.hasNext) {

val next = iter.next()

next match {

case (_, "female") => woman = next._1 :: woman

case _ =>

}

}

return woman.iterator

}

def partitionsFun( index : Int, iter: Iterator[(String, String)]): Iterator[String] = {

var woman = List[String]()

while (iter.hasNext) {

val next = iter.next()

next match {

case (_, "female") => woman = "["+index+"]"+ next._1 :: woman

case _ =>

}

}

return woman.iterator

}

def main(args: Array[String]) {

val conf = new SparkConf().setMaster("local").setAppName("mappartitions")

val sc = new SparkContext(conf)

val list = List(("kpop", "female"), ("zorro", "male"), ("mobin", "male"), ("lucy", "female"))

val rdd = sc.parallelize(list, 2) //第二个参数是分区数

val mp = rdd.mapPartitions(partitionsFun)

println()

mp.collect.foreach(x => (print(x + " "))) //将分区中的元素转换成Array再输出

println()

val mp2 = rdd.mapPartitionsWithIndex(partitionsFun)

mp2.collect.foreach(x => (print(x + " "))) //将分区中的元素转换成Array再输出

}

}

输出:(带了分区索引)

[Stage 0:> (0 + 0) / 2]

kpop lucy

[0]kpop [1]lucy

(4)glom

glom函数将每个分区形成一个数组,内部实现是返回的RDD[Array[T]]。

图中,方框代表一个分区。 该图表示含有V1、 V2、 V3的分区通过函数glom形成一个数组Array[(V1),(V2),(V3)]。

源码:

- /**

- * Return an RDD created by coalescing all elements within each partition into an array.

- */

- def glom(): RDD[Array[T]] = {

- new MapPartitionsRDD[Array[T], T](this, (context, pid, iter) => Iterator(iter.toArray))

- }

输入分区与输出分区多对一型

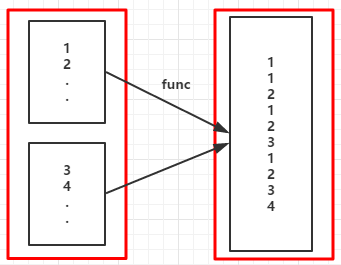

(1)union

使用union函数时需要保证两个RDD元素的数据类型相同,返回的RDD数据类型和被合并的RDD元素数据类型相同,并不进行去重操作,保存所有元素。如果想去重,可以使用distinct()。++符号相当于uion函数操作。

图中,左侧的大方框代表两个RDD,大方框内的小方框代表RDD的分区。右侧大方框代表合并后的RDD,大方框内的小方框代表分区。含有V1,V2…U4的RDD和含有V1,V8…U8的RDD合并所有元素形成一个RDD。V1、V1、V2、V8形成一个分区,其他元素同理进行合并。

源码:

- /**

- * Return the union of this RDD and another one. Any identical elements will appear multiple

- * times (use `.distinct()` to eliminate them).

- */

- def union(other: RDD[T]): RDD[T] = {

- if (partitioner.isDefined && other.partitioner == partitioner) {

- new PartitionerAwareUnionRDD(sc, Array(this, other))

- } else {

- new UnionRDD(sc, Array(this, other))

- }

- }

(2)certesian

对两个RDD内的所有元素进行笛卡尔积操作。操作后,内部实现返回CartesianRDD。

左侧的大方框代表两个RDD,大方框内的小方框代表RDD的分区。右侧大方框代表合并后的RDD,大方框内的小方框代表分区。

大方框代表RDD,大方框中的小方框代表RDD分区。 例如,V1和另一个RDD中的W1、 W2、 Q5进行笛卡尔积运算形成(V1,W1)、(V1,W2)、(V1,Q5)。

源码:

- /**

- * Return the Cartesian product of this RDD and another one, that is, the RDD of all pairs of

- * elements (a, b) where a is in `this` and b is in `other`.

- */

- def cartesian[U: ClassTag](other: RDD[U]): RDD[(T, U)] = new CartesianRDD(sc, this, other)

输入分区与输出分区多对多型

groupBy

将元素通过函数生成相应的Key,数据就转化为Key-Value格式,之后将Key相同的元素分为一组。

val cleanF = sc.clean(f)中sc.clean函数将用户函数预处理; this.map(t => (cleanF(t), t)).groupByKey(p)对数据map进行函数操作,再对groupByKey进行分组操作。其中,p中确定了分区个数和分区函数,也就决定了并行化的程度。

图中,方框代表一个RDD分区,相同key的元素合并到一个组。 例如,V1,V2合并为一个Key-Value对,其中key为“ V” ,Value为“ V1,V2” ,形成V,Seq(V1,V2)。

源码:

- /**

- * Return an RDD of grouped items. Each group consists of a key and a sequence of elements

- * mapping to that key. The ordering of elements within each group is not guaranteed, and

- * may even differ each time the resulting RDD is evaluated.

- *

- * Note: This operation may be very expensive. If you are grouping in order to perform an

- * aggregation (such as a sum or average) over each key, using [[PairRDDFunctions.aggregateByKey]]

- * or [[PairRDDFunctions.reduceByKey]] will provide much better performance.

- */

- def groupBy[K](f: T => K)(implicit kt: ClassTag[K]): RDD[(K, Iterable[T])] =

- groupBy[K](f, defaultPartitioner(this))

- /**

- * Return an RDD of grouped elements. Each group consists of a key and a sequence of elements

- * mapping to that key. The ordering of elements within each group is not guaranteed, and

- * may even differ each time the resulting RDD is evaluated.

- *

- * Note: This operation may be very expensive. If you are grouping in order to perform an

- * aggregation (such as a sum or average) over each key, using [[PairRDDFunctions.aggregateByKey]]

- * or [[PairRDDFunctions.reduceByKey]] will provide much better performance.

- */

- def groupBy[K](f: T => K, numPartitions: Int)(implicit kt: ClassTag[K]): RDD[(K, Iterable[T])] =

- groupBy(f, new HashPartitioner(numPartitions))

- /**

- * Return an RDD of grouped items. Each group consists of a key and a sequence of elements

- * mapping to that key. The ordering of elements within each group is not guaranteed, and

- * may even differ each time the resulting RDD is evaluated.

- *

- * Note: This operation may be very expensive. If you are grouping in order to perform an

- * aggregation (such as a sum or average) over each key, using [[PairRDDFunctions.aggregateByKey]]

- * or [[PairRDDFunctions.reduceByKey]] will provide much better performance.

- */

- def groupBy[K](f: T => K, p: Partitioner)(implicit kt: ClassTag[K], ord: Ordering[K] = null)

- : RDD[(K, Iterable[T])] = {

- val cleanF = sc.clean(f)

- this.map(t => (cleanF(t), t)).groupByKey(p)

- }

输出分区为输入分区子集型

(1)filter

filter的功能是对元素进行过滤,对每个元素应用f函数,返回值为true的元素在RDD中保留,返回为false的将过滤掉。 内部实现相当于生成FilteredRDD(this,sc.clean(f))。

图中,每个方框代表一个RDD分区。 T可以是任意的类型。通过用户自定义的过滤函数f,对每个数据项进行操作,将满足条件,返回结果为true的数据项保留。 例如,过滤掉V2、 V3保留了V1,将区分命名为V1’。

源码:

- /**

- * Return a new RDD containing only the elements that satisfy a predicate.

- */

- def filter(f: T => Boolean): RDD[T] = {

- val cleanF = sc.clean(f)

- new MapPartitionsRDD[T, T](

- this,

- (context, pid, iter) => iter.filter(cleanF),

- preservesPartitioning = true)

- }

(2)distinct

distinct将RDD中的元素进行去重操作。

图中,每个方框代表一个分区,通过distinct函数,将数据去重。 例如,重复数据V1、 V1去重后只保留一份V1。

源码:

- /**

- * Return a new RDD containing the distinct elements in this RDD.

- */

- def distinct(numPartitions: Int)(implicit ord: Ordering[T] = null): RDD[T] =

- map(x => (x, null)).reduceByKey((x, y) => x, numPartitions).map(_._1)

- /**

- * Return a new RDD containing the distinct elements in this RDD.

- */

- def distinct(): RDD[T] = distinct(partitions.size)

(3)subtract

subtract相当于进行集合的差操作,RDD 1去除RDD 1和RDD 2交集中的所有元素。

图中,左侧的大方框代表两个RDD,大方框内的小方框代表RDD的分区。右侧大方框代表合并后的RDD,大方框内的小方框代表分区。V1在两个RDD中均有,根据差集运算规则,新RDD不保留,V2在第一个RDD有,第二个RDD没有,则在新RDD元素中包含V2。

源码:

- /**

- * Return an RDD with the elements from `this` that are not in `other`.

- *

- * Uses `this` partitioner/partition size, because even if `other` is huge, the resulting

- * RDD will be <= us.

- */

- def subtract(other: RDD[T]): RDD[T] =

- subtract(other, partitioner.getOrElse(new HashPartitioner(partitions.size)))

- /**

- * Return an RDD with the elements from `this` that are not in `other`.

- */

- def subtract(other: RDD[T], numPartitions: Int): RDD[T] =

- subtract(other, new HashPartitioner(numPartitions))

- /**

- * Return an RDD with the elements from `this` that are not in `other`.

- */

- def subtract(other: RDD[T], p: Partitioner)(implicit ord: Ordering[T] = null): RDD[T] = {

- if (partitioner == Some(p)) {

- // Our partitioner knows how to handle T (which, since we have a partitioner, is

- // really (K, V)) so make a new Partitioner that will de-tuple our fake tuples

- val p2 = new Partitioner() {

- override def numPartitions = p.numPartitions

- override def getPartition(k: Any) = p.getPartition(k.asInstanceOf[(Any, _)]._1)

- }

- // Unfortunately, since we're making a new p2, we'll get ShuffleDependencies

- // anyway, and when calling .keys, will not have a partitioner set, even though

- // the SubtractedRDD will, thanks to p2's de-tupled partitioning, already be

- // partitioned by the right/real keys (e.g. p).

- this.map(x => (x, null)).subtractByKey(other.map((_, null)), p2).keys

- } else {

- this.map(x => (x, null)).subtractByKey(other.map((_, null)), p).keys

- }

- }

(4)sample

sample将RDD这个集合内的元素进行采样,获取所有元素的子集。用户可以设定是否有放回的抽样、百分比、随机种子,进而决定采样方式。

参数说明:

withReplacement=true, 表示有放回的抽样;

withReplacement=false, 表示无放回的抽样。

每个方框是一个RDD分区。通过sample函数,采样50%的数据。V1、V2、U1、U2、U3、U4采样出数据V1和U1、U2,形成新的RDD。

源码:

- /**

- * Return a sampled subset of this RDD.

- */

- def sample(withReplacement: Boolean,

- fraction: Double,

- seed: Long = Utils.random.nextLong): RDD[T] = {

- require(fraction >= 0.0, "Negative fraction value: " + fraction)

- if (withReplacement) {

- new PartitionwiseSampledRDD[T, T](this, new PoissonSampler[T](fraction), true, seed)

- } else {

- new PartitionwiseSampledRDD[T, T](this, new BernoulliSampler[T](fraction), true, seed)

- }

- }

(5)takeSample

takeSample()函数和上面的sample函数是一个原理,但是不使用相对比例采样,而是按设定的采样个数进行采样,同时返回结果不再是RDD,而是相当于对采样后的数据进行collect(),返回结果的集合为单机的数组。

图中,左侧的方框代表分布式的各个节点上的分区,右侧方框代表单机上返回的结果数组。通过takeSample对数据采样,设置为采样一份数据,返回结果为V1。

源码:

- /**

- * Return a fixed-size sampled subset of this RDD in an array

- *

- * @param withReplacement whether sampling is done with replacement

- * @param num size of the returned sample

- * @param seed seed for the random number generator

- * @return sample of specified size in an array

- */

- def takeSample(withReplacement: Boolean,

- num: Int,

- seed: Long = Utils.random.nextLong): Array[T] = {

- val numStDev = 10.0

- if (num < 0) {

- throw new IllegalArgumentException("Negative number of elements requested")

- } else if (num == 0) {

- return new Array[T](0)

- }

- val initialCount = this.count()

- if (initialCount == 0) {

- return new Array[T](0)

- }

- val maxSampleSize = Int.MaxValue - (numStDev * math.sqrt(Int.MaxValue)).toInt

- if (num > maxSampleSize) {

- throw new IllegalArgumentException("Cannot support a sample size > Int.MaxValue - " +

- s"$numStDev * math.sqrt(Int.MaxValue)")

- }

- val rand = new Random(seed)

- if (!withReplacement && num >= initialCount) {

- return Utils.randomizeInPlace(this.collect(), rand)

- }

- val fraction = SamplingUtils.computeFractionForSampleSize(num, initialCount,

- withReplacement)

- var samples = this.sample(withReplacement, fraction, rand.nextInt()).collect()

- // If the first sample didn't turn out large enough, keep trying to take samples;

- // this shouldn't happen often because we use a big multiplier for the initial size

- var numIters = 0

- while (samples.length < num) {

- logWarning(s"Needed to re-sample due to insufficient sample size. Repeat #$numIters")

- samples = this.sample(withReplacement, fraction, rand.nextInt()).collect()

- numIters += 1

- }

- Utils.randomizeInPlace(samples, rand).take(num)

- }

Cache型

(1)cache

cache将RDD元素从磁盘缓存到内存,相当于persist(MEMORY_ONLY)函数的功能。

图中,每个方框代表一个RDD分区,左侧相当于数据分区都存储在磁盘,通过cache算子将数据缓存在内存。

源码:

- /** Persist this RDD with the default storage level (`MEMORY_ONLY`). */

- def cache(): this.type = persist()

(2)persist

persist函数对RDD进行缓存操作。数据缓存在哪里由StorageLevel枚举类型确定。

有几种类型的组合,DISK代表磁盘,MEMORY代表内存,SER代表数据是否进行序列化存储。StorageLevel是枚举类型,代表存储模式,如,MEMORY_AND_DISK_SER代表数据可以存储在内存和磁盘,并且以序列化的方式存储。 其他同理。

图中,方框代表RDD分区。 disk代表存储在磁盘,mem代表存储在内存。 数据最初全部存储在磁盘,通过persist(MEMORY_AND_DISK)将数据缓存到内存,但是有的分区无法容纳在内存,例如:图3-18中将含有V1,V2,V3的RDD存储到磁盘,将含有U1,U2的RDD仍旧存储在内存。

源码:

- /**

- * Set this RDD's storage level to persist its values across operations after the first time

- * it is computed. This can only be used to assign a new storage level if the RDD does not

- * have a storage level set yet..

- */

- def persist(newLevel: StorageLevel): this.type = {

- // TODO: Handle changes of StorageLevel

- if (storageLevel != StorageLevel.NONE && newLevel != storageLevel) {

- throw new UnsupportedOperationException(

- "Cannot change storage level of an RDD after it was already assigned a level")

- }

- sc.persistRDD(this)

- // Register the RDD with the ContextCleaner for automatic GC-based cleanup

- sc.cleaner.foreach(_.registerRDDForCleanup(this))

- storageLevel = newLevel

- this

- }

- /** Persist this RDD with the default storage level (`MEMORY_ONLY`). */

- def persist(): this.type = persist(StorageLevel.MEMORY_ONLY)