Building a MongoDB Sharded Cluster with Replica Sets

I. Concepts in brief

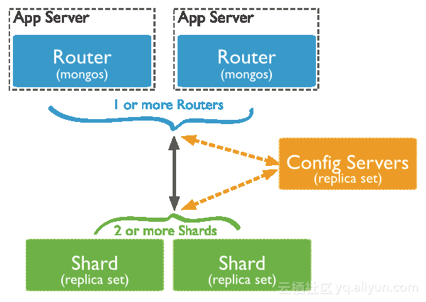

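1. A MongoDB sharded cluster has three components: mongos, config servers, and shards.

2. mongos: the routing service and the entry point to the sharded cluster. It stores no data itself.

(1) It handles client connections;

(2) It distributes the cluster's data across the shards by routing each operation to the shard that owns the data.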

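3. Config server: stores the metadata for every database in the cluster (routing and shard configuration). mongos does not persist the shard list or the routing table; it only caches them in memory, while the config servers hold the authoritative copy. mongos loads this configuration from the config servers on first start and on every restart. When the metadata later changes, each mongos refreshes its cache so that it keeps routing accurately. A production deployment normally runs several config servers to protect the sharding metadata they store against loss.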

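4. Sharding is the process of splitting a database and spreading it across multiple machines. With the data distributed this way, the cluster can store more data and handle a heavier load without any single powerful server. The basic idea is to cut a collection into small chunks and spread the chunks over several shards, so that each shard holds only part of the total data; a balancer then keeps the shards even by migrating chunks between them.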

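(1) A shard can be a single instance or a replica set.

(2) The arbiter in a replica set stores no data; it only votes in elections, so that a secondary can be elected primary when the primary goes down.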
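To make the arbiter's role concrete: a replica set can elect a primary only while a majority of its voting members is reachable. The following is a minimal sketch in plain JavaScript (runnable under Node.js); the function names are illustrative and are not MongoDB APIs.

```javascript
// Votes needed to elect a primary: a strict majority of the voting members.
function majority(votingMembers) {
  return Math.floor(votingMembers / 2) + 1;
}

// How many voting members may be down while a primary can still be elected.
function faultTolerance(votingMembers) {
  return votingMembers - majority(votingMembers);
}

// Two data-bearing nodes alone tolerate no failure; adding an arbiter as a
// third voter lets the set survive the loss of one member.
for (const n of [2, 3]) {
  console.log(`${n} voters: majority=${majority(n)}, tolerated failures=${faultTolerance(n)}`);
}
```

This is why each shard in this deployment pairs two data nodes with one arbiter: the third vote makes failover possible without a third copy of the data.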

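II. Deployment environment for the sharded cluster with replica sets

1. Server information (size the actual servers according to your own requirements)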

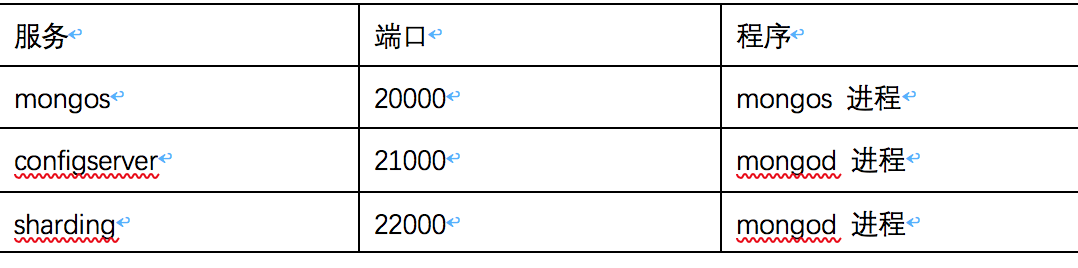

2. Service ports: mongos 20000, config server 21000, shard mongod 22000

3. Software versions

(1) OS: CentOS 7.4

(2) MongoDB: Percona Server for MongoDB 3.4

(3) supervisord: 3.3.4

III. Cluster deployment

1. Install MongoDB

(1) Download the package

    # Run on all servers
    mkdir -p /opt/upload/mongo_packge
    cd /opt/upload/mongo_packge
    wget 'https://www.percona.com/downloads/percona-server-mongodb-3.4/percona-server-mongodb-3.4.14-2.12/binary/redhat/7/x86_64/percona-server-mongodb-3.4.14-2.12-r28ff075-el7-x86_64-bundle.tar'

(2) Unpack the bundle and install MongoDB

    # Run on all nodes
    mkdir -p rpm
    tar -xf percona-server-mongodb-3.4.14-2.12-r28ff075-el7-x86_64-bundle.tar -C rpm/
    cd rpm
    yum -y install ./*.rpm

2. Deploy the config servers

(1) Create the configserver directories

    # Run on mongos-01, mongos-02, mongos-03
    mkdir -p /opt/configserver/configserver_conf
    mkdir -p /opt/configserver/configserver_data
    mkdir -p /opt/configserver/configserver_key
    mkdir -p /opt/configserver/configserver_log

(2) Create the configuration file

    # Run on mongos-01, mongos-02, mongos-03
    cat <<EOF > /opt/configserver/configserver_conf/configserver.conf
    dbpath=/opt/configserver/configserver_data
    directoryperdb=true
    logpath=/opt/configserver/configserver_log/config.log
    bind_ip = 0.0.0.0
    port=21000
    maxConns=10000
    replSet=configs
    configsvr=true
    logappend=true
    fork=true
    httpinterface=true
    #auth=true
    #keyFile=/opt/configserver/configserver_key/mongo_keyfile
    EOF
    chown -R mongod:mongod /opt/configserver

(3) Start the config servers

    # Run on mongos-01, mongos-02, mongos-03
    /usr/bin/mongod -f /opt/configserver/configserver_conf/configserver.conf

(4) Create the configserver replica set

    # Run on any one of mongos-01, mongos-02, mongos-03
    mongo --port 21000
    // define the replica set members
    config = {_id : "configs", members : [{_id : 0, host : "172.18.6.87:21000" },{_id : 1, host : "172.18.6.86:21000" },{_id : 2, host : "172.18.6.85:21000" }]}
    // initialize the replica set
    rs.initiate(config)
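The configuration document passed to rs.initiate() follows a fixed shape, so it can be generated from a host list instead of typed by hand. Below is a minimal sketch in plain JavaScript (runnable under Node.js). The helper name buildReplSetConfig and its arbiterHosts parameter are assumptions made for illustration; only the _id, members, host, and arbiterOnly fields come from the commands in this guide.

```javascript
// Build a replica set configuration document from a list of "host:port" strings.
// arbiterHosts (optional) lists the members that should be vote-only arbiters.
function buildReplSetConfig(name, hosts, arbiterHosts = []) {
  if (new Set(hosts).size !== hosts.length) {
    throw new Error("duplicate host in member list");
  }
  const members = hosts.map((host, index) => {
    const member = { _id: index, host: host };
    if (arbiterHosts.includes(host)) member.arbiterOnly = true;
    return member;
  });
  return { _id: name, members: members };
}

const config = buildReplSetConfig("configs", [
  "172.18.6.87:21000",
  "172.18.6.86:21000",
  "172.18.6.85:21000",
]);
console.log(JSON.stringify(config, null, 2));
// In the mongo shell, the resulting document is what rs.initiate(config) expects.
```

Generating the document this way keeps the member _id values sequential and catches a host listed twice before the replica set is initialized.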

3. Deploy the sharding01 shard replica set (this deployment uses two replica sets, each serving as one shard)

(1) Create the program directories

    # Run on shardding01_arbitration-01, shardding01_mongodb-01, shardding01_mongodb-02
    mkdir -p /opt/mongodb/mongodb_data
    mkdir -p /opt/mongodb/mongodb_keyfile
    mkdir -p /opt/mongodb/mongodb_log
    chown -R mongod:mongod /opt/mongodb

(2) Edit the configuration file (on the shardding01_arbitration-01 node, set cacheSizeGB to 2)

    # Run on shardding01_arbitration-01, shardding01_mongodb-01, shardding01_mongodb-02
    cat <<EOF >/etc/mongod.conf
    # mongod.conf, Percona Server for MongoDB
    # for documentation of all options, see:
    #   http://docs.mongo.org/manual/reference/configuration-options/

    # Where and how to store data.
    storage:
      dbPath: /opt/mongodb/mongodb_data
      directoryPerDB: true
      journal:
        enabled: true
    #  engine: mmapv1
    #  engine: rocksdb
      engine: wiredTiger
    #  engine: inMemory

    # Storage engine various options
    #  More info for mmapv1: https://docs.mongo.com/v3.4/reference/configuration-options/#storage-mmapv1-options
    #  mmapv1:
    #    preallocDataFiles: true
    #    nsSize: 16
    #    quota:
    #      enforced: false
    #      maxFilesPerDB: 8
    #    smallFiles: false

    #  More info for wiredTiger: https://docs.mongo.com/v3.4/reference/configuration-options/#storage-wiredtiger-options
      wiredTiger:
        engineConfig:
          cacheSizeGB: 20
    #      checkpointSizeMB: 1000
    #      statisticsLogDelaySecs: 0
    #      journalCompressor: snappy
    #      directoryForIndexes: false
    #    collectionConfig:
    #      blockCompressor: snappy
    #    indexConfig:
    #      prefixCompression: true

    #  More info for rocksdb: https://github.com/mongo-partners/mongo-rocks/wiki#configuration
    #  rocksdb:
    #    cacheSizeGB: 1
    #    compression: snappy
    #    maxWriteMBPerSec: 1024
    #    crashSafeCounters: false
    #    counters: true
    #    singleDeleteIndex: false

    #  More info for inMemory: https://www.percona.com/doc/percona-server-for-mongo/3.4/inmemory.html#configuring-percona-memory-engine
    #  inMemory:
    #    engineConfig:
    #      inMemorySizeGB: 1
    #      statisticsLogDelaySecs: 0

    # Two options below can be used for wiredTiger and inMemory storage engines
    #setParameter:
    #    wiredTigerConcurrentReadTransactions: 128
    #    wiredTigerConcurrentWriteTransactions: 128

    # where to write logging data.
    systemLog:
      destination: file
      logAppend: true
      path: /opt/mongodb/mongodb_log/mongod.log

    processManagement:
      fork: true
      pidFilePath: /opt/mongodb/mongodb_log/mongod.pid

    # network interfaces
    net:
      port: 22000
      bindIp: 0.0.0.0
      maxIncomingConnections: 100000
      wireObjectCheck: true
      http:
        JSONPEnabled: false
        RESTInterfaceEnabled: false

    #security:
    #  authorization: enabled
    #  keyFile: /opt/mongodb/mongodb_keyfile/mongo_keyfile

    #operationProfiling:

    replication:
      replSetName: "sharding01"

    sharding:
      clusterRole: shardsvr
      archiveMovedChunks: false

    ## Enterprise-Only Options:

    #auditLog:

    #snmp:
    EOF

(3) Edit the systemd unit file that manages mongod

    # Run on shardding01_arbitration-01, shardding01_mongodb-01, shardding01_mongodb-02
    sed -i 's#64000#100000#g' /usr/lib/systemd/system/mongod.service    # raise the open-file limit
    sed -i 's#/var/run/mongod.pid#/opt/mongodb/mongodb_log/mongod.pid#g' /usr/lib/systemd/system/mongod.service    # change the pid file location
    systemctl daemon-reload

(4) Start mongod

    # Run on shardding01_arbitration-01, shardding01_mongodb-01, shardding01_mongodb-02
    systemctl restart mongod
    systemctl enable mongod

(5) Configure the replica set

    mongo --port 22000
    // define the replica set members; arbiterOnly:true marks the arbiter
    config = {_id : "sharding01", members : [{_id : 0, host : "172.18.6.89:22000" },{_id : 1, host : "172.18.6.88:22000" },{_id : 2, host : "172.18.6.92:22000", arbiterOnly : true}]}
    // initialize the replica set
    rs.initiate(config)

(6) Check the replica set status

    sharding01:SECONDARY> rs.status()
    {
        "set" : "sharding01",
        "date" : ISODate("2018-11-02T16:05:37.648Z"),
        "myState" : 2,
        "term" : NumberLong(17),
        "syncingTo" : "172.18.6.89:22000",
        "heartbeatIntervalMillis" : NumberLong(2000),
        "optimes" : {
            "lastCommittedOpTime" : {
                "ts" : Timestamp(1541174730, 1127),
                "t" : NumberLong(17)
            },
            "appliedOpTime" : {
                "ts" : Timestamp(1541174730, 1127),
                "t" : NumberLong(17)
            },
            "durableOpTime" : {
                "ts" : Timestamp(1541174730, 1127),
                "t" : NumberLong(17)
            }
        },
        "members" : [
            {
                "_id" : 0,
                "name" : "172.18.6.89:22000",
                "health" : 1,
                "state" : 1,
                "stateStr" : "PRIMARY",
                "uptime" : 27141,
                "optime" : {
                    "ts" : Timestamp(1541174730, 1127),
                    "t" : NumberLong(17)
                },
                "optimeDurable" : {
                    "ts" : Timestamp(1541174730, 1127),
                    "t" : NumberLong(17)
                },
                "optimeDate" : ISODate("2018-11-02T16:05:30Z"),
                "optimeDurableDate" : ISODate("2018-11-02T16:05:30Z"),
                "lastHeartbeat" : ISODate("2018-11-02T16:05:35.909Z"),
                "lastHeartbeatRecv" : ISODate("2018-11-02T16:05:37.428Z"),
                "pingMs" : NumberLong(0),
                "electionTime" : Timestamp(1541147605, 1),
                "electionDate" : ISODate("2018-11-02T08:33:25Z"),
                "configVersion" : 1
            },
            {
                "_id" : 1,
                "name" : "172.18.6.88:22000",
                "health" : 1,
                "state" : 2,
                "stateStr" : "SECONDARY",
                "uptime" : 27142,
                "optime" : {
                    "ts" : Timestamp(1541174730, 1127),
                    "t" : NumberLong(17)
                },
                "optimeDate" : ISODate("2018-11-02T16:05:30Z"),
                "syncingTo" : "172.18.6.89:22000",
                "configVersion" : 1,
                "self" : true
            },
            {
                "_id" : 2,
                "name" : "172.18.6.92:22000",
                "health" : 1,
                "state" : 7,
                "stateStr" : "ARBITER",
                "uptime" : 27141,
                "lastHeartbeat" : ISODate("2018-11-02T16:05:35.909Z"),
                "lastHeartbeatRecv" : ISODate("2018-11-02T16:05:33.315Z"),
                "pingMs" : NumberLong(0),
                "configVersion" : 1
            }
        ],
        "ok" : 1
    }
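The status output can also be checked programmatically rather than read by eye. The sketch below, in plain JavaScript (runnable under Node.js), summarizes a document shaped like the rs.status() result above. The function name checkReplSetStatus and the shape of its return value are assumptions for illustration; it reads only the name, health, and stateStr fields that appear in the real output.

```javascript
// Summarize a document shaped like the rs.status() output shown above.
function checkReplSetStatus(status) {
  const byState = {};
  const unhealthy = [];
  for (const m of status.members) {
    (byState[m.stateStr] = byState[m.stateStr] || []).push(m.name);
    if (m.health !== 1) unhealthy.push(m.name);
  }
  const primaries = byState.PRIMARY || [];
  return {
    primary: primaries.length === 1 ? primaries[0] : null,
    secondaries: byState.SECONDARY || [],
    arbiters: byState.ARBITER || [],
    unhealthy: unhealthy,
    // Healthy here means: exactly one primary and every member reporting health 1.
    ok: primaries.length === 1 && unhealthy.length === 0,
  };
}

// Trimmed copy of the output above (only the fields the check reads).
const status = {
  set: "sharding01",
  members: [
    { name: "172.18.6.89:22000", health: 1, stateStr: "PRIMARY" },
    { name: "172.18.6.88:22000", health: 1, stateStr: "SECONDARY" },
    { name: "172.18.6.92:22000", health: 1, stateStr: "ARBITER" },
  ],
};
console.log(checkReplSetStatus(status));
```

The same function can be pasted into a mongo shell session and called as checkReplSetStatus(rs.status()), assuming the shell's JavaScript engine accepts the syntax used here.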

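4. Deploy the sharding02 shard replica set (this deployment uses two replica sets, each serving as one shard)

Follow the same steps as for sharding01, with replSetName set to "sharding02" and the members 172.18.6.91:22000, 172.18.6.90:22000, and 172.18.6.93:22000 (arbiter).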

5. Configure mongos

(1) Create the program directories

    # Run on mongos-01, mongos-02, mongos-03
    mkdir -p /opt/mongos/mongos_conf
    mkdir -p /opt/mongos/mongos_data
    mkdir -p /opt/mongos/mongos_key
    mkdir -p /opt/mongos/mongos_log

(2) Create the configuration file

    # Run on mongos-01, mongos-02, mongos-03
    cat <<EOF > /opt/mongos/mongos_conf/mongos.conf
    logpath=/opt/mongos/mongos_log/mongos.log
    logappend=true
    bind_ip = 0.0.0.0
    port=20000
    # address of the config server replica set
    configdb=configs/172.18.6.87:21000,172.18.6.86:21000,172.18.6.85:21000
    fork=true
    #keyFile=/opt/mongos/mongos_key/mongo_keyfile
    EOF

(3) Start mongos

    # Run on mongos-01, mongos-02, mongos-03
    /usr/bin/mongos -f /opt/mongos/mongos_conf/mongos.conf

(4) Add the shards

    # Run on any one of mongos-01, mongos-02, mongos-03
    mongo --port 20000
    use admin
    // add shard 1
    db.runCommand( { addshard : "sharding01/172.18.6.89:22000,172.18.6.88:22000,172.18.6.92:22000"});
    // add shard 2
    db.runCommand( { addshard : "sharding02/172.18.6.91:22000,172.18.6.90:22000,172.18.6.93:22000"});

(5) Check the cluster status

    mongos> db.runCommand( { listshards : 1 } )
    {
        "shards" : [
            {
                "_id" : "sharding01",
                "host" : "sharding01/172.18.6.88:22000,172.18.6.89:22000",
                "state" : 1
            },
            {
                "_id" : "sharding02",
                "host" : "sharding02/172.18.6.90:22000,172.18.6.91:22000",
                "state" : 1
            }
        ],
        "ok" : 1
    }
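The addshard argument used above has the form replicaSetName/host1,host2,.... As a small illustration in plain JavaScript (runnable under Node.js), the sketch below assembles that command document from a name and a host list. The helper addShardCommand and the shards object are hypothetical names introduced here; the hosts are the ones used in this deployment.

```javascript
// Build the { addshard: "<replSetName>/<host1>,<host2>,..." } command document.
function addShardCommand(replSetName, hosts) {
  if (!replSetName || hosts.length === 0) {
    throw new Error("a replica set name and at least one host are required");
  }
  return { addshard: `${replSetName}/${hosts.join(",")}` };
}

const shards = {
  sharding01: ["172.18.6.89:22000", "172.18.6.88:22000", "172.18.6.92:22000"],
  sharding02: ["172.18.6.91:22000", "172.18.6.90:22000", "172.18.6.93:22000"],
};

for (const [name, hosts] of Object.entries(shards)) {
  // In the mongo shell (connected to mongos, admin database) each document
  // would be passed to db.runCommand().
  console.log(JSON.stringify(addShardCommand(name, hosts)));
}
```

Note that the listshards output above shows only the two data-bearing members of each shard; the arbiters at 172.18.6.92 and 172.18.6.93 do not appear in the host string.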

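6. Configure the shard key (hashed sharding is used here to suit the business scenario)

MongoDB requires sharding to be enabled on each database that needs it, and a shard key to be set on each collection to be sharded.

(1) Enable sharding for the database and shard the collection

    # Run on any mongos node
    mongo --port 20000
    use admin
    // enable sharding for the database (dingkai is the database name)
    db.runCommand( { enablesharding : "dingkai" });
    // switch to the dingkai database
    use dingkai
    // create a hashed index on the shard key field; dingkaitable is the collection to shard, dingkaifields is the shard key field
    db.dingkaitable.createIndex({dingkaifields: "hashed"});
    // the shard key must be created from the admin database
    use admin
    // create the shard key ("hashed" selects hashed sharding; for range sharding replace "hashed" with 1)
    db.runCommand({shardcollection : "dingkai.dingkaitable", key : {dingkaifields: "hashed"} })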
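The difference between hashed and range sharding is easiest to see with monotonically increasing keys. The sketch below, in plain JavaScript (runnable under Node.js), places 1000 consecutive ids on two shards under each scheme. It is a toy model under stated assumptions: fnv1a is a stand-in hash chosen for brevity and is not the hash MongoDB uses, the modulo placement simplifies how hash ranges map to chunks, and all function names are hypothetical.

```javascript
// FNV-1a 32-bit hash: a stand-in for illustration only, NOT the hash MongoDB uses.
function fnv1a(str) {
  let h = 0x811c9dc5;
  for (let i = 0; i < str.length; i++) {
    h ^= str.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h;
}

// Hashed placement: hash the shard key value, then map the hash to a shard.
function hashedShard(key, shardCount) {
  return fnv1a(String(key)) % shardCount;
}

// Range placement: boundaries[i] is the exclusive upper bound of shard i;
// the last shard takes every key at or above the final boundary.
function rangeShard(key, boundaries) {
  const i = boundaries.findIndex((upper) => key < upper);
  return i === -1 ? boundaries.length : i;
}

// Count how many keys land on each shard under a given placement function.
function distribution(keys, place, shardCount) {
  const counts = new Array(shardCount).fill(0);
  for (const k of keys) counts[place(k)]++;
  return counts;
}

// Monotonically increasing ids, all above the existing range boundary of 1000.
const keys = Array.from({ length: 1000 }, (_, i) => 1000 + i);
console.log("range :", distribution(keys, (k) => rangeShard(k, [1000]), 2));
console.log("hashed:", distribution(keys, (k) => hashedShard(k, 2), 2));
```

With range placement every new key falls past the last boundary and lands on the same shard until the balancer moves chunks, while hashing spreads the same keys across both shards. The trade-off is that range queries on a hashed key have to be sent to every shard.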

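IV. Enabling authentication

1. Create accounts in each replica set (it is best to start with only a root-role user; other users can be created later through the root account)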

(1) configserver replica set

    # Run on the primary
    mongo --port 21000
    use admin
    // root account (superuser)
    db.createUser({user:"root", pwd:"Dingkai.123", roles:[{role: "root", db:"admin" }]})
    // administrator account
    db.createUser({user:"admin", pwd:"Dingkai.123", roles:[{role: "userAdminAnyDatabase", db:"admin" }]})
    // cluster administration account
    db.createUser({user:"clusteradmin", pwd:"Dingkai.123", roles:[{role: "clusterAdmin", db:"admin" }]})

(2) Primary of each sharding replica set (these accounts are mainly for logging in to a shard's replica set directly; accounts for business databases only need to be created through mongos)

    mongo --port 22000
    use admin
    // root account (superuser)
    db.createUser({user:"root", pwd:"Dingkai.123", roles:[{role: "root", db:"admin" }]})
    // administrator account
    db.createUser({user:"admin", pwd:"Dingkai.123", roles:[{role: "userAdminAnyDatabase", db:"admin" }]})
    // cluster administration account
    db.createUser({user:"clusteradmin", pwd:"Dingkai.123", roles:[{role: "clusterAdmin", db:"admin" }]})

2. Create the cluster keyfile

    openssl rand -base64 64 > mongo_keyfile

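3. Distribute the keyfile to the location specified in each node's configuration file

(1) configserver nodes (mongos-01, mongos-02, mongos-03); the configuration file specifies:

    keyFile=/opt/configserver/configserver_key/mongo_keyfile

(2) Sharding replica set nodes; the configuration file specifies:

    keyFile: /opt/mongodb/mongodb_keyfile/mongo_keyfile

(3) mongos nodes (mongos-01, mongos-02, mongos-03); the configuration file specifies:

    keyFile=/opt/mongos/mongos_key/mongo_keyfile

(4) After distribution, set the permissions of mongo_keyfile to 600 and its owner and group to mongod (run in the directory that holds the keyfile on each node)

    chmod 600 mongo_keyfile
    chown mongod:mongod mongo_keyfile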
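A keyfile with the wrong permissions or content is a common reason for mongod refusing to start after authentication is enabled, so it can be worth checking each copy. The sketch below uses Node.js instead of the openssl and chmod commands above, purely so that the checks can be expressed as code. It assumes the usual keyfile rules: 6 to 1024 base64 characters, and no group or other permissions on UNIX. The function names writeKeyfile and checkKeyfile are hypothetical, the demo writes to a temporary directory rather than the real paths, and setting the owner to mongod is not covered.

```javascript
const crypto = require("crypto");
const fs = require("fs");
const os = require("os");
const path = require("path");

// Write a base64 keyfile readable by its owner only (mode 600).
function writeKeyfile(file, randomBytes = 64) {
  const content = crypto.randomBytes(randomBytes).toString("base64") + "\n";
  fs.writeFileSync(file, content, { mode: 0o600 });
  fs.chmodSync(file, 0o600); // enforce 600 even if the file already existed
  return file;
}

// Return a list of problems; an empty list means the keyfile looks usable.
function checkKeyfile(file) {
  const problems = [];
  const mode = fs.statSync(file).mode & 0o777;
  if ((mode & 0o077) !== 0) {
    problems.push(`permissions ${mode.toString(8)} are open to group or others`);
  }
  const key = fs.readFileSync(file, "utf8").replace(/\s+/g, "");
  if (!/^[A-Za-z0-9+/=]+$/.test(key)) problems.push("non-base64 characters");
  if (key.length < 6 || key.length > 1024) problems.push("length outside 6-1024");
  return problems;
}

// Demo in a temporary directory (not the real /opt/... paths).
const demoDir = fs.mkdtempSync(path.join(os.tmpdir(), "keyfile-"));
const demoFile = writeKeyfile(path.join(demoDir, "mongo_keyfile"));
console.log(checkKeyfile(demoFile));
```

Every node must hold an identical copy of the keyfile, so in practice it is generated once and copied, and only the check would run on each node.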

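4. Update the authentication settings in each node's configuration file

(1) Sharding nodes (shardding01_arbitration-01, shardding01_mongodb-01, shardding01_mongodb-02, shardding02_arbitration-01, shardding02_mongodb-01, shardding02_mongodb-02)

Uncomment the following in the configuration file:

    security:
      authorization: enabled
      keyFile: /opt/mongodb/mongodb_keyfile/mongo_keyfile

(2) configserver and mongos nodes (mongos-01, mongos-02, mongos-03)

Uncomment auth=true and keyFile=/opt/configserver/configserver_key/mongo_keyfile in configserver.conf, and uncomment the following in mongos.conf:

    keyFile=/opt/mongos/mongos_key/mongo_keyfile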

5. Restart all nodes

(1) Restart every node of the sharding replica sets

(2) Restart every configserver node

(3) Restart every mongos node

6. Verification

(1) Without authenticating, the command that shows the cluster status is rejected

    mongo --port 20000
    use admin
    db.runCommand( { listshards : 1 } )
    {
        "ok" : 0,
        "errmsg" : "not authorized on admin to execute command { listshards: 1.0 }",
        "code" : 13,
        "codeName" : "Unauthorized"
    }

(2) Authenticate with the cluster administration account

    mongos> use admin
    switched to db admin
    mongos> db.auth("clusteradmin","Dingkai.123")
    1
    mongos> db.runCommand( { listshards : 1 } )
    {
        "shards" : [
            {
                "_id" : "sharding01",
                "host" : "sharding01/172.18.6.88:22000,172.18.6.89:22000",
                "state" : 1
            },
            {
                "_id" : "sharding02",
                "host" : "sharding02/172.18.6.90:22000,172.18.6.91:22000",
                "state" : 1
            }
        ],
        "ok" : 1
    }

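V. Common commands

(1) Cluster management

    db.runCommand( { listshards : 1 } )    // list the shards in the cluster
    db.printShardingStatus()    // print status information for the whole sharded system
    db.<collection>.stats()    // show storage statistics for a collection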

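(2) User management

read: allows the user to read the specified database

readWrite: allows the user to read and write the specified database

dbAdmin: allows the user to run administrative functions on the specified database, such as creating and dropping indexes, viewing statistics, or accessing system.profile

userAdmin: allows the user to write to the system.users collection, and to create, delete, and manage users in the specified database

clusterAdmin: available only in the admin database; grants administrative privileges over all sharding and replica set functions

readAnyDatabase: available only in the admin database; grants read access to all databases

readWriteAnyDatabase: available only in the admin database; grants read and write access to all databases

userAdminAnyDatabase: available only in the admin database; grants the userAdmin privileges on all databases

dbAdminAnyDatabase: available only in the admin database; grants the dbAdmin privileges on all databases

root: available only in the admin database; the superuser account with all privileges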

###### Create a user ######

    db.createUser({user:"XXX",pwd:"XXX",roles:[{role:"readWrite", db:"myTest"}]})
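Because several of the roles listed above exist only in the admin database, a small guard can catch a mismatched role and database before the command is sent. The sketch below is plain JavaScript (runnable under Node.js). The helper buildCreateUser, the ADMIN_ONLY_ROLES set, and the user name appuser are hypothetical, and the role list simply mirrors the descriptions above.

```javascript
// Roles that the list above describes as available only in the admin database.
const ADMIN_ONLY_ROLES = new Set([
  "clusterAdmin",
  "readAnyDatabase",
  "readWriteAnyDatabase",
  "userAdminAnyDatabase",
  "dbAdminAnyDatabase",
  "root",
]);

// Build the document for db.createUser(), rejecting admin-only roles
// that are assigned on any other database.
function buildCreateUser(user, pwd, roles) {
  for (const { role, db } of roles) {
    if (ADMIN_ONLY_ROLES.has(role) && db !== "admin") {
      throw new Error(`role ${role} is only available in the admin database, got ${db}`);
    }
  }
  return { user: user, pwd: pwd, roles: roles };
}

// A readWrite user on a business database passes the check.
console.log(JSON.stringify(buildCreateUser("appuser", "XXX", [{ role: "readWrite", db: "myTest" }])));

// An admin-only role on another database is rejected.
try {
  buildCreateUser("appuser", "XXX", [{ role: "clusterAdmin", db: "myTest" }]);
} catch (err) {
  console.log(err.message);
}
```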

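###### View users ######

(1) List all users

    use admin
    db.system.users.find()

(2) List the users of the current database

    use <database>
    show users

###### Delete a user ######

(1) Delete a user from the current database

    use <database>
    db.dropUser('<username>')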
