Deploying a Kafka cluster on K8S without backend storage (messages and message queues) - 20210207

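Kafka overview:

Kafka is a distributed message queue based on the publish/subscribe model. It is used mainly for real-time big-data processing.

Message queue overview: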

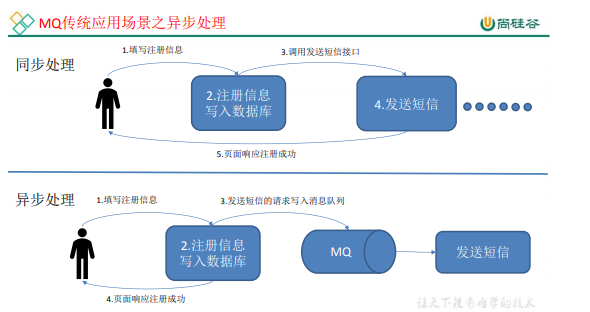

Typical use cases of a traditional message queue (image from the 尚硅谷 Kafka course materials on Bilibili)

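Benefits of using a message queue:

1. Decoupling: the processing on either side can be extended or modified independently, as long as both sides honor the same interface contract.

2. Recoverability: the failure of one component does not take down the whole system. Because a message queue reduces coupling between processes, even if a process that handles messages crashes, the messages already in the queue can still be processed once the system recovers.

3. Buffering: it helps control and optimize the speed at which data flows through the system, absorbing the mismatch between how fast messages are produced and how fast they are consumed.

4. Flexibility and peak handling: when traffic surges, the application still has to keep working, but such bursts are rare. Keeping resources on standby just to handle these occasional peaks would be a huge waste. A message queue lets key components absorb sudden pressure instead of collapsing under a burst of overloading requests.

5. Asynchronous communication: often the user neither wants nor needs to process a message right away. A message queue provides an asynchronous mechanism: you put a message into the queue without processing it immediately, enqueue as many messages as you like, and process them later when needed.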
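The buffering and asynchronous-communication points above can be illustrated without Kafka at all. The following is a minimal sketch that uses Python's in-process `queue.Queue` as a stand-in for a message queue: the producer enqueues all messages and returns at once, while a slower consumer thread drains the backlog later. The function names and the 1 ms processing delay are illustrative assumptions, not part of Kafka.

```python
import queue
import threading
import time

SENTINEL = None  # marks the end of the stream in this sketch


def producer(q: queue.Queue, count: int) -> None:
    """Enqueue `count` messages without waiting for them to be processed."""
    for i in range(count):
        q.put(f"msg-{i}")
    q.put(SENTINEL)


def consumer(q: queue.Queue, received: list) -> None:
    """Take messages off the queue one by one, more slowly than they arrive."""
    while True:
        item = q.get()
        if item is SENTINEL:
            break
        time.sleep(0.001)  # simulate slow processing on the consumer side
        received.append(item)


if __name__ == "__main__":
    q: queue.Queue = queue.Queue()
    received: list = []
    worker = threading.Thread(target=consumer, args=(q, received))
    worker.start()
    producer(q, 100)  # returns while most messages are still buffered
    print("producer finished, messages still queued:", q.qsize())
    worker.join()
    print("consumed", len(received), "messages")
```

The queue absorbs the speed difference: the producer is never blocked by the slow consumer, and no message is lost or reordered.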

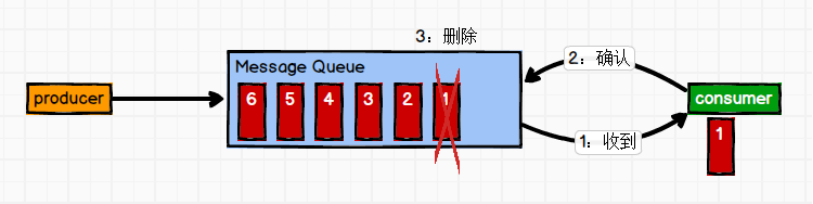

消息队列的两种模式:

1. 点对点模式(一对一,消费者主动拉取数据,消息收到后消息清除)

消息生产者生产消息发送到Queue(队列)中,然后消息消费者从Queue中取出并且消费信息。消息被消费以后,Queue中不再存储,所以消息消费者不可能消费到已经被消费的消息。Queue支持存在多个消费者,但是对一个消息而言,只会有一个消费者可以消费。

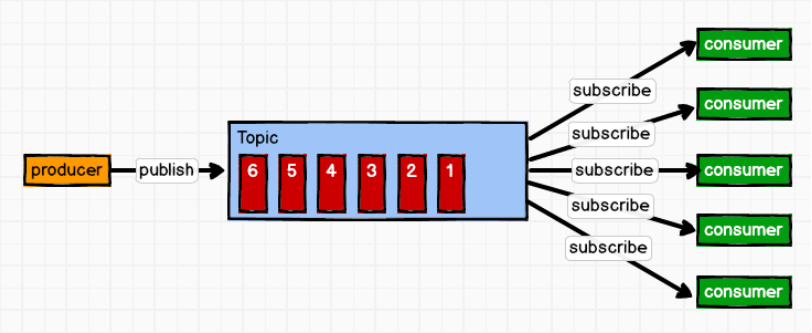

2. 发布/订阅模式(一对多,消费者消费数据之后不会清除消息)

消息生产者(发布)将消息发布到topic(主题)中,同时有多个消息消费者(订阅)消费该消息。和点对点方式不同,发布到topic的消息会被所有订阅者消费。
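To make the difference between the two modes concrete, here is a minimal in-memory sketch (not Kafka's implementation). The hypothetical `PointToPointQueue` deletes a message as soon as one consumer takes it, while the hypothetical `Topic` keeps every message in an append-only log and tracks a separate read position per subscriber, which is roughly how Kafka lets several consumer groups read the same data.

```python
from collections import deque


class PointToPointQueue:
    """One-to-one: each message is removed when a consumer takes it."""

    def __init__(self) -> None:
        self._messages: deque = deque()

    def send(self, msg: str) -> None:
        self._messages.append(msg)

    def receive(self):
        # The message is deleted on read; None means the queue is empty.
        return self._messages.popleft() if self._messages else None


class Topic:
    """One-to-many: messages stay in the log; each subscriber keeps its own offset."""

    def __init__(self) -> None:
        self._log: list = []
        self._offsets: dict = {}

    def publish(self, msg: str) -> None:
        self._log.append(msg)

    def subscribe(self, name: str) -> None:
        self._offsets.setdefault(name, 0)  # new subscribers start at the beginning

    def poll(self, name: str) -> list:
        start = self._offsets[name]
        self._offsets[name] = len(self._log)  # advance only this subscriber's offset
        return self._log[start:]


if __name__ == "__main__":
    q = PointToPointQueue()
    for m in ("a", "b", "c"):
        q.send(m)
    # Two consumers share the queue: every message goes to exactly one of them.
    c1 = [q.receive(), q.receive()]
    c2 = [q.receive(), q.receive()]
    print(c1, c2)  # ['a', 'b'] ['c', None]

    t = Topic()
    t.subscribe("s1")
    t.subscribe("s2")
    for m in ("a", "b", "c"):
        t.publish(m)
    print(t.poll("s1"), t.poll("s2"))  # ['a', 'b', 'c'] ['a', 'b', 'c']
```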

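Kafka basic architecture

1. Producer: the message producer, i.e. the client that sends messages to a Kafka broker.

2. Consumer: the message consumer, i.e. the client that fetches messages from a Kafka broker.

3. Consumer group: a group made up of multiple consumers. Each consumer in the group handles data from different partitions; a partition can be consumed by only one consumer within a group, and consumer groups do not affect each other. Every consumer belongs to some consumer group, so a consumer group is logically a single subscriber.

4. Broker: one Kafka server is one broker. A cluster consists of multiple brokers, and one broker can host multiple topics.

5. Topic: can be thought of as a queue or a subject; both producers and consumers work against a topic.

6. Partition: for scalability, a very large topic can be spread across multiple brokers (Kafka servers). A topic can be split into multiple partitions, and each partition is an ordered queue.

7. Replica: to ensure that a node's partition data is not lost when that node fails, and that Kafka keeps working, Kafka provides a replication mechanism: each partition of a topic has several replicas, one leader and several followers.

8. Leader: the "primary" among a partition's replicas. Producers send data to the leader, and consumers consume data from the leader.

9. Follower: the "secondary" among a partition's replicas. It continuously replicates data from the leader to stay in sync. When the leader fails, one of the followers becomes the new leader.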
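The partition and consumer-group rules in items 3 and 6 can be sketched in a few lines. This is a simplified model, not Kafka's code: `choose_partition` hashes the message key with `zlib.crc32` as a stand-in (Kafka's default partitioner uses murmur2 for keyed records), and `assign_partitions` spreads partitions round-robin across the consumers of one group, whereas Kafka ships several pluggable assignors (range, round-robin, sticky and others). Both function names are hypothetical.

```python
import zlib
from collections import defaultdict


def choose_partition(key: str, num_partitions: int) -> int:
    # Stand-in hash for illustration only; Kafka itself uses murmur2.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_partitions(partitions: list, consumers: list) -> dict:
    """Round-robin style assignment inside ONE consumer group:
    every partition goes to exactly one consumer of that group."""
    assignment = {c: [] for c in consumers}
    for i, p in enumerate(sorted(partitions)):
        assignment[consumers[i % len(consumers)]].append(p)
    return assignment


if __name__ == "__main__":
    num_partitions = 3
    log = defaultdict(list)  # partition -> ordered list of (key, value) records
    for key, value in [("user-1", "login"), ("user-2", "login"), ("user-1", "logout")]:
        log[choose_partition(key, num_partitions)].append((key, value))
    # Records with the same key land in the same partition, in send order.
    p = choose_partition("user-1", num_partitions)
    print([v for k, v in log[p] if k == "user-1"])  # ['login', 'logout']

    # Two groups read the same topic, each with its own independent assignment.
    print(assign_partitions([0, 1, 2], ["g1-c1", "g1-c2"]))  # {'g1-c1': [0, 2], 'g1-c2': [1]}
    print(assign_partitions([0, 1, 2], ["g2-c1"]))           # {'g2-c1': [0, 1, 2]}
```

If a group has more consumers than the topic has partitions, the extra consumers receive no partitions and sit idle.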

Deploying a Kafka cluster in K8S:

Cluster plan:

master: 192.168.11.113

node1: 192.168.11.106

node2: 192.168.11.116

All of the following steps are run on master:

1. Create a namespace for Kafka.

[root@master ~]# kubectl create namespace kafka

Check the namespace that was created:

```
[root@master ~]# kubectl get namespace kafka
NAME    STATUS   AGE
kafka   Active   23h
```

2. Install the ZooKeeper cluster; Kafka relies on ZooKeeper for service registration and discovery.

2.1 Create the YAML file for the ZooKeeper Services

[root@master ~]# cd yaml/kafka/

[root@master kafka]# vim zookeeper-svc.yaml

```yaml
apiVersion: v1
kind: Service
metadata:
  name: zoo1
  labels:
    app: zookeeper-1
spec:
  ports:
    - name: client
      port: 2181
      protocol: TCP
    - name: follower
      port: 2888
      protocol: TCP
    - name: leader
      port: 3888
      protocol: TCP
  selector:
    app: zookeeper-1
---
apiVersion: v1
kind: Service
metadata:
  name: zoo2
  labels:
    app: zookeeper-2
spec:
  ports:
    - name: client
      port: 2181
      protocol: TCP
    - name: follower
      port: 2888
      protocol: TCP
    - name: leader
      port: 3888
      protocol: TCP
  selector:
    app: zookeeper-2
---
apiVersion: v1
kind: Service
metadata:
  name: zoo3
  labels:
    app: zookeeper-3
spec:
  ports:
    - name: client
      port: 2181
      protocol: TCP
    - name: follower
      port: 2888
      protocol: TCP
    - name: leader
      port: 3888
      protocol: TCP
  selector:
    app: zookeeper-3
```
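The three Services in zookeeper-svc.yaml differ only in their index (name `zooN`, label and selector `zookeeper-N`). As an illustration, a hypothetical Python helper can build the same objects as dicts and print them as JSON, which `kubectl apply -f` also accepts. The function name is an assumption; the YAML file above remains what this tutorial actually applies.

```python
import json


def zookeeper_service(index: int) -> dict:
    """Build the Service for ZooKeeper node `index`, mirroring zookeeper-svc.yaml."""
    app = f"zookeeper-{index}"
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": f"zoo{index}", "labels": {"app": app}},
        "spec": {
            "ports": [
                {"name": "client", "port": 2181, "protocol": "TCP"},
                {"name": "follower", "port": 2888, "protocol": "TCP"},
                {"name": "leader", "port": 3888, "protocol": "TCP"},
            ],
            "selector": {"app": app},
        },
    }


if __name__ == "__main__":
    for i in (1, 2, 3):
        # Each object could be saved to a file and applied with `kubectl apply -n kafka -f <file>`.
        print(json.dumps(zookeeper_service(i), indent=2))
```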

Create the ZooKeeper Services with kubectl (the manifests do not set a namespace, so pass `-n kafka`):

[root@master kafka]# kubectl apply -f zookeeper-svc.yaml -n kafka

Check the ZooKeeper Services:

```
[root@master kafka]# kubectl get svc -n kafka
NAME   TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                      AGE
zoo1   ClusterIP   10.1.125.242   <none>        2181/TCP,2888/TCP,3888/TCP   21h
zoo2   ClusterIP   10.1.211.184   <none>        2181/TCP,2888/TCP,3888/TCP   21h
zoo3   ClusterIP   10.1.85.246    <none>        2181/TCP,2888/TCP,3888/TCP   21h
```

2.2 Create zookeeper-deployment.yaml, the YAML file for the ZooKeeper Deployments

[root@master kafka]# vim zookeeper-deployment.yaml

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: zookeeper-deployment-1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: zookeeper-1
      name: zookeeper-1
  template:
    metadata:
      labels:
        app: zookeeper-1
        name: zookeeper-1
    spec:
      containers:
        - name: zoo1
          image: zookeeper
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 2181
          env:
            - name: ZOO_MY_ID
              value: "1"
            - name: ZOO_SERVERS
              value: "server.1=0.0.0.0:2888:3888;2181 server.2=zoo2:2888:3888;2181 server.3=zoo3:2888:3888;2181"
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: zookeeper-deployment-2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: zookeeper-2
      name: zookeeper-2
  template:
    metadata:
      labels:
        app: zookeeper-2
        name: zookeeper-2
    spec:
      containers:
        - name: zoo2
          image: zookeeper
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 2181
          env:
            - name: ZOO_MY_ID
              value: "2"
            - name: ZOO_SERVERS
              value: "server.1=zoo1:2888:3888;2181 server.2=0.0.0.0:2888:3888;2181 server.3=zoo3:2888:3888;2181"
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: zookeeper-deployment-3
spec:
  replicas: 1
  selector:
    matchLabels:
      app: zookeeper-3
      name: zookeeper-3
  template:
    metadata:
      labels:
        app: zookeeper-3
        name: zookeeper-3
    spec:
      containers:
        - name: zoo3
          image: zookeeper
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 2181
          env:
            - name: ZOO_MY_ID
              value: "3"
            - name: ZOO_SERVERS
              value: "server.1=zoo1:2888:3888;2181 server.2=zoo2:2888:3888;2181 server.3=0.0.0.0:2888:3888;2181"
```
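Each Deployment's ZOO_SERVERS lists all three ensemble members as `server.<id>=<host>:2888:3888;2181`: 2888 is the port followers use to connect to the leader, 3888 is used for leader election, and the `;2181` suffix (ZooKeeper 3.5+ syntax) sets the client port. A node refers to its peers by their Service names but to itself as 0.0.0.0, presumably so that it binds its quorum ports on all interfaces instead of on its own Service ClusterIP, which is not an address inside the pod. The hypothetical helper below reproduces the three strings used above.

```python
def zoo_servers(my_id: int, count: int = 3) -> str:
    """Build ZOO_SERVERS for node `my_id`: peers are addressed by their
    Service names (zoo1, zoo2, ...), while the node itself uses 0.0.0.0."""
    entries = []
    for i in range(1, count + 1):
        host = "0.0.0.0" if i == my_id else f"zoo{i}"
        # server.<id>=<host>:<quorum port>:<election port>;<client port>
        entries.append(f"server.{i}={host}:2888:3888;2181")
    return " ".join(entries)


if __name__ == "__main__":
    for my_id in (1, 2, 3):
        print(f"ZOO_MY_ID={my_id}", f'ZOO_SERVERS="{zoo_servers(my_id)}"')
```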

Create the ZooKeeper Deployments:

[root@master kafka]# kubectl apply -f zookeeper-deployment.yaml -n kafka

Check the status of the ZooKeeper pods:

```
[root@master kafka]# kubectl get pod -n kafka
NAME                                      READY   STATUS    RESTARTS   AGE
zookeeper-deployment-1-66cb7dcb-j4zcr     1/1     Running   1          20h
zookeeper-deployment-2-56fc4644c7-q6nx4   1/1     Running   1          20h
zookeeper-deployment-3-7c7789cf98-9m5kn   1/1     Running   1          20h
```

3. Deploy Kafka

3.1 Create the YAML file for the Kafka Services

[root@master kafka]# vim kafka-svc.yaml

```yaml
apiVersion: v1
kind: Service
metadata:
  name: kafka-service-1
  labels:
    app: kafka-service-1
spec:
  type: NodePort
  ports:
    - port: 9092
      name: kafka-service-1
      targetPort: 9092
      nodePort: 30901
      protocol: TCP
  selector:
    app: kafka-service-1
---
apiVersion: v1
kind: Service
metadata:
  name: kafka-service-2
  labels:
    app: kafka-service-2
spec:
  type: NodePort
  ports:
    - port: 9092
      name: kafka-service-2
      targetPort: 9092
      nodePort: 30902
      protocol: TCP
  selector:
    app: kafka-service-2
---
apiVersion: v1
kind: Service
metadata:
  name: kafka-service-3
  labels:
    app: kafka-service-3
spec:
  type: NodePort
  ports:
    - port: 9092
      name: kafka-service-3
      targetPort: 9092
      nodePort: 30903
      protocol: TCP
  selector:
    app: kafka-service-3
```
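Each Kafka Service above pins a fixed nodePort. NodePorts must fall inside the cluster's NodePort range (30000-32767 unless the API server's `--service-node-port-range` was changed) and are allocated cluster-wide, so two Services cannot share one. A small hypothetical checker like the one below can catch both mistakes before `kubectl apply` rejects the manifest.

```python
NODE_PORT_MIN, NODE_PORT_MAX = 30000, 32767  # Kubernetes default NodePort range


def check_node_ports(node_ports: dict) -> list:
    """Return human-readable problems for a {service name: nodePort} mapping."""
    problems = []
    owner = {}  # nodePort -> first Service that claimed it
    for name, port in node_ports.items():
        if not NODE_PORT_MIN <= port <= NODE_PORT_MAX:
            problems.append(f"{name}: nodePort {port} is outside {NODE_PORT_MIN}-{NODE_PORT_MAX}")
        if port in owner:
            problems.append(f"{name}: nodePort {port} is already used by {owner[port]}")
        else:
            owner[port] = name
    return problems


if __name__ == "__main__":
    kafka_ports = {"kafka-service-1": 30901, "kafka-service-2": 30902, "kafka-service-3": 30903}
    print(check_node_ports(kafka_ports))  # []
```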

Create the Kafka Services with kubectl:

[root@master kafka]# kubectl apply -f kafka-svc.yaml -n kafka

Check the Services:

```
[root@master kafka]# kubectl get svc -n kafka
NAME              TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                      AGE
kafka-service-1   NodePort    10.1.246.238   <none>        9092:30901/TCP               24h
kafka-service-2   NodePort    10.1.214.60    <none>        9092:30902/TCP               24h
kafka-service-3   NodePort    10.1.252.232   <none>        9092:30903/TCP               24h
zoo1              ClusterIP   10.1.125.242   <none>        2181/TCP,2888/TCP,3888/TCP   24h
zoo2              ClusterIP   10.1.211.184   <none>        2181/TCP,2888/TCP,3888/TCP   24h
zoo3              ClusterIP   10.1.85.246    <none>        2181/TCP,2888/TCP,3888/TCP   24h
```

3.2 Create the kafka-deployment.yaml file

[root@master kafka]# vim kafka-deployment.yaml

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kafka-deployment-1
spec:
  replicas: 1
  selector:
    matchLabels:
      name: kafka-service-1
  template:
    metadata:
      labels:
        name: kafka-service-1
        app: kafka-service-1
    spec:
      containers:
        - name: kafka-1
          image: wurstmeister/kafka
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 9092
          env:
            - name: KAFKA_ADVERTISED_PORT
              value: "9092"
            - name: KAFKA_ADVERTISED_HOST_NAME
              value: 10.1.246.238
            - name: KAFKA_ZOOKEEPER_CONNECT
              value: zoo1:2181,zoo2:2181,zoo3:2181
            - name: KAFKA_BROKER_ID
              value: "1"
            - name: KAFKA_CREATE_TOPICS
              value: mytopic:2:1
            - name: KAFKA_ADVERTISED_LISTENERS
              value: PLAINTEXT://192.168.11.113:30901
            - name: KAFKA_LISTENERS
              value: PLAINTEXT://0.0.0.0:9092
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kafka-deployment-2
spec:
  replicas: 1
  selector:
    matchLabels:
      name: kafka-service-2
  template:
    metadata:
      labels:
        name: kafka-service-2
        app: kafka-service-2
    spec:
      containers:
        - name: kafka-2
          image: wurstmeister/kafka
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 9092
          env:
            - name: KAFKA_ADVERTISED_PORT
              value: "9092"
            - name: KAFKA_ADVERTISED_HOST_NAME
              value: 10.1.214.60
            - name: KAFKA_ZOOKEEPER_CONNECT
              value: zoo1:2181,zoo2:2181,zoo3:2181
            - name: KAFKA_BROKER_ID
              value: "2"
            - name: KAFKA_ADVERTISED_LISTENERS
              value: PLAINTEXT://192.168.11.113:30902
            - name: KAFKA_LISTENERS
              value: PLAINTEXT://0.0.0.0:9092
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kafka-deployment-3
spec:
  replicas: 1
  selector:
    matchLabels:
      name: kafka-service-3
  template:
    metadata:
      labels:
        name: kafka-service-3
        app: kafka-service-3
    spec:
      containers:
        - name: kafka-3
          image: wurstmeister/kafka
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 9092
          env:
            - name: KAFKA_ADVERTISED_PORT
              value: "9092"
            - name: KAFKA_ADVERTISED_HOST_NAME
              value: 10.1.252.232
            - name: KAFKA_ZOOKEEPER_CONNECT
              value: zoo1:2181,zoo2:2181,zoo3:2181
            - name: KAFKA_BROKER_ID
              value: "3"
            - name: KAFKA_ADVERTISED_LISTENERS
              value: PLAINTEXT://192.168.11.113:30903
            - name: KAFKA_LISTENERS
              value: PLAINTEXT://0.0.0.0:9092
```
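Only kafka-deployment-1 sets `KAFKA_CREATE_TOPICS=mytopic:2:1`. In the wurstmeister/kafka image this is, to my understanding, a comma-separated list of `name:partitions:replicas` entries with an optional fourth cleanup-policy field, so mytopic gets 2 partitions and a replication factor of 1. The parser below is a hypothetical sketch of that format, not the image's own start script; it also flags any replication factor larger than the number of brokers (3 here), since Kafka cannot place more replicas of a partition than there are brokers.

```python
from typing import List, NamedTuple, Optional


class TopicSpec(NamedTuple):
    name: str
    partitions: int
    replicas: int
    cleanup_policy: Optional[str] = None


def parse_create_topics(value: str) -> List[TopicSpec]:
    """Parse comma-separated 'name:partitions:replicas[:cleanup.policy]' entries."""
    specs = []
    for entry in value.split(","):
        fields = entry.strip().split(":")
        if len(fields) not in (3, 4):
            raise ValueError(f"expected name:partitions:replicas[:policy], got {entry!r}")
        policy = fields[3] if len(fields) == 4 else None
        specs.append(TopicSpec(fields[0], int(fields[1]), int(fields[2]), policy))
    return specs


def too_many_replicas(specs: List[TopicSpec], broker_count: int) -> List[str]:
    """Names of topics whose replication factor exceeds the number of brokers."""
    return [s.name for s in specs if s.replicas > broker_count]


if __name__ == "__main__":
    specs = parse_create_topics("mytopic:2:1")
    print(specs)  # [TopicSpec(name='mytopic', partitions=2, replicas=1, cleanup_policy=None)]
    print(too_many_replicas(specs, broker_count=3))  # []
```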

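Notes:

1. KAFKA_ADVERTISED_HOST_NAME is the ClusterIP of the corresponding Kafka Service, which you can look up with `kubectl get svc -n kafka`, for example (on Kafka 2.x this deprecated setting is only used when advertised listeners are not configured, so with KAFKA_ADVERTISED_LISTENERS set it has no effect):

```
[root@master kafka]# kubectl get svc -n kafka
NAME              TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)          AGE
kafka-service-1   NodePort   10.1.246.238   <none>        9092:30901/TCP   24h
kafka-service-2   NodePort   10.1.214.60    <none>        9092:30902/TCP   24h
kafka-service-3   NodePort   10.1.252.232   <none>        9092:30903/TCP   24h
```

2. KAFKA_ADVERTISED_LISTENERS is the address each broker advertises to clients: use the IP of master, node1 or node2 plus the port K8S exposes for that broker (a NodePort in the 30000-32767 range).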

```yaml
metadata:
  name: kafka-deployment-1
………………
            - name: KAFKA_ADVERTISED_LISTENERS
              value: PLAINTEXT://192.168.11.113:30901
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kafka-deployment-2
………………
            - name: KAFKA_ADVERTISED_LISTENERS
              value: PLAINTEXT://192.168.11.113:30902
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kafka-deployment-3
………………
            - name: KAFKA_ADVERTISED_LISTENERS
              value: PLAINTEXT://192.168.11.113:30903
```
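The two notes above boil down to reading the ClusterIP and NodePort of each `kafka-service-N`. The sketch below parses the plain-text table printed by `kubectl get svc -n kafka` (the sample output shown earlier) and derives both env values, assuming master's IP 192.168.11.113 as the advertised host. Parsing the human-readable table is fragile; in a real script, `kubectl get svc -n kafka -o json` is the safer input. The function names are hypothetical.

```python
SAMPLE = """\
NAME              TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                      AGE
kafka-service-1   NodePort    10.1.246.238   <none>        9092:30901/TCP               24h
kafka-service-2   NodePort    10.1.214.60    <none>        9092:30902/TCP               24h
kafka-service-3   NodePort    10.1.252.232   <none>        9092:30903/TCP               24h
zoo1              ClusterIP   10.1.125.242   <none>        2181/TCP,2888/TCP,3888/TCP   24h
"""


def parse_svc_table(output: str) -> dict:
    """Map each Service name to (cluster_ip, node_port); node_port is None when
    the first port has no `port:nodePort` mapping (e.g. ClusterIP Services)."""
    services = {}
    rows = [line.split() for line in output.splitlines() if line.strip()]
    for cols in rows[1:]:  # rows[0] is the header
        name, cluster_ip, ports = cols[0], cols[2], cols[4]
        first_port = ports.split(",")[0].split("/")[0]  # "9092:30901/TCP" -> "9092:30901"
        node_port = int(first_port.split(":")[1]) if ":" in first_port else None
        services[name] = (cluster_ip, node_port)
    return services


def kafka_env(services: dict, node_ip: str) -> dict:
    """Derive the per-broker env values described in notes 1 and 2."""
    env = {}
    for name, (cluster_ip, node_port) in sorted(services.items()):
        if name.startswith("kafka-service-") and node_port is not None:
            env[name] = {
                "KAFKA_ADVERTISED_HOST_NAME": cluster_ip,
                "KAFKA_ADVERTISED_LISTENERS": f"PLAINTEXT://{node_ip}:{node_port}",
            }
    return env


if __name__ == "__main__":
    for svc, values in kafka_env(parse_svc_table(SAMPLE), "192.168.11.113").items():
        print(svc, values)
```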

Create the Kafka Deployments with kubectl:

[root@master kafka]# kubectl apply -f kafka-deployment.yaml -n kafka

Check the Kafka status:

```
[root@master kafka]# kubectl get pod -n kafka
NAME                                      READY   STATUS    RESTARTS   AGE
kafka-deployment-1-6d8d6c9bd4-vj9tf       1/1     Running   1          25h
kafka-deployment-2-6c94d5cf5b-cghvc       1/1     Running   1          25h
kafka-deployment-3-566cf5994b-mz4mh       1/1     Running   1          25h
zookeeper-deployment-1-66cb7dcb-j4zcr     1/1     Running   1          25h
zookeeper-deployment-2-56fc4644c7-q6nx4   1/1     Running   1          25h
zookeeper-deployment-3-7c7789cf98-9m5kn   1/1     Running   1          25h
```

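Testing from the command line

You can exec into any of the pods and operate Kafka from the command line.

Here we exec into kafka-deployment-1-6d8d6c9bd4-vj9tf to act as the message producer:

```
[root@master ~]# kubectl exec -it kafka-deployment-1-6d8d6c9bd4-vj9tf -n kafka -- /bin/bash
bash-4.4#
```

Then exec into kafka-deployment-3-566cf5994b-mz4mh to act as the message consumer:

```
[root@master kafka]# kubectl exec -it kafka-deployment-3-566cf5994b-mz4mh -n kafka -- /bin/bash
bash-4.4#
```

Inside each container, change to Kafka's scripts directory (the same for the producer and the consumer):

bash-4.4# cd /opt/kafka/bin/

In the producer container, start a console producer for the test topic, ready to send messages. The topic does not need to exist beforehand: Kafka creates it on first use, because automatic topic creation is enabled by default.

Command format:

kafka-console-producer.sh --broker-list <kafka-svc1-clusterIP>:9092,<kafka-svc2-clusterIP>:9092,<kafka-svc3-clusterIP>:9092 --topic test

My VMs are short on resources, so I pass only one address here; listing several addresses works too.

```
bash-4.4# kafka-console-producer.sh --broker-list 10.1.246.238:9092 --topic test
>
```

Open a terminal in the consumer container and start a console consumer to receive messages:

kafka-console-consumer.sh --bootstrap-server <any-kafka-svc-clusterIP>:9092 --topic test --from-beginning

Again, I connect to a single address:

bash-4.4# kafka-console-consumer.sh --bootstrap-server 10.1.252.232:9092 --topic test --from-beginning

Then type the messages you want to send in the producer container:

```
bash-4.4# kafka-console-producer.sh --broker-list 10.1.246.238:9092 --topic test
>野猪套天下第一
>test
>!@#$
>123
>
```

Once they are sent, the producer's messages appear in the consumer container. If the consumer receives what you type into the producer, the cluster is working.

```
bash-4.4# kafka-console-consumer.sh --bootstrap-server 10.1.252.232:9092 --topic test --from-beginning
野猪套天下第一
test
!@#$
123
```

Finally, you can also list all topics as a test (the `--zookeeper` option works on Kafka versions before 3.0; Kafka 3.0 removed it from kafka-topics.sh in favor of `--bootstrap-server`):

kafka-topics.sh --list --zookeeper <zookeeper-service-clusterIP>:2181

```
bash-4.4# kafka-topics.sh --list --zookeeper zoo1:2181
__consumer_offsets
mytopic
test    # the test topic we created
```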
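In that listing, mytopic comes from KAFKA_CREATE_TOPICS, test was auto-created by the console producer, and `__consumer_offsets` is Kafka's internal topic for storing consumer group offsets. For scripted smoke tests, a tiny hypothetical helper could separate internal topics (by convention prefixed with a double underscore) from user topics and report which expected topics are missing. It takes the listing as text rather than calling Kafka.

```python
def split_topics(listing: str) -> tuple:
    """Split `kafka-topics.sh --list` output into (user topics, internal topics).

    By convention, Kafka's internal topics start with a double underscore."""
    names = [line.strip() for line in listing.splitlines() if line.strip()]
    internal = [n for n in names if n.startswith("__")]
    user = [n for n in names if not n.startswith("__")]
    return user, internal


def missing_topics(user_topics: list, expected: set) -> set:
    """Return the expected topics that did not show up in the listing."""
    return set(expected) - set(user_topics)


if __name__ == "__main__":
    listing = "__consumer_offsets\nmytopic\ntest\n"  # the listing shown above
    user, internal = split_topics(listing)
    print(user, internal)  # ['mytopic', 'test'] ['__consumer_offsets']
    print(missing_topics(user, {"mytopic", "test"}))  # set()
```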

Common commands and directories:

```
# Exec into the container
kubectl exec -it kafka-deployment-1-xxxxxxxxxxx -n kafka -- /bin/bash
cd /opt/kafka

# List topics
bin/kafka-topics.sh --list --zookeeper <any-zookeeper-svc-clusterIP>:2181

# Create a topic manually
bin/kafka-topics.sh --create --zookeeper <zookeeper-svc1-clusterIP>:2181,<zookeeper-svc2-clusterIP>:2181,<zookeeper-svc3-clusterIP>:2181 --topic test --partitions 3 --replication-factor 1

# Write (press Ctrl+D to stop writing)
bin/kafka-console-producer.sh --broker-list <kafka-svc1-clusterIP>:9092,<kafka-svc2-clusterIP>:9092,<kafka-svc3-clusterIP>:9092 --topic test

# Read (press Ctrl+C to stop reading)
bin/kafka-console-consumer.sh --bootstrap-server <any-kafka-svc-clusterIP>:9092 --topic test --from-beginning
```

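Note: to use Kafka from inside the cluster, you can use the ClusterIP or the Service's DNS name within K8S, plus the corresponding port.

To use Kafka from outside the cluster, use the IP address of any host in the cluster plus the exposed NodePort (in the 30000-32767 range).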
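The two access paths above can be written down as bootstrap-server strings. This is a sketch under stated assumptions: the cluster DNS domain is the default `cluster.local` (from the same namespace the short name `kafka-service-1` also resolves), and the outside path uses master's IP with the NodePorts 30901-30903 from kafka-svc.yaml. Keep in mind that the bootstrap address is only used to fetch cluster metadata; afterwards clients connect to each broker's advertised listener (PLAINTEXT://192.168.11.113:3090x in this setup), so in-cluster clients must be able to reach that address too. The constant and function names are hypothetical.

```python
NAMESPACE = "kafka"
CLUSTER_DOMAIN = "cluster.local"  # assumption: the cluster's default DNS domain
NODE_IP = "192.168.11.113"        # master's IP from the cluster plan; any node works
BROKERS = (1, 2, 3)


def bootstrap_servers(inside_cluster: bool, node_ip: str = NODE_IP) -> str:
    """Build a bootstrap server list for a client inside or outside the cluster."""
    if inside_cluster:
        # Service DNS name + Service port (ClusterIP:9092 would work as well)
        return ",".join(
            f"kafka-service-{i}.{NAMESPACE}.svc.{CLUSTER_DOMAIN}:9092" for i in BROKERS
        )
    # Host IP + the NodePort mapped to each broker in kafka-svc.yaml (30901-30903)
    return ",".join(f"{node_ip}:{30900 + i}" for i in BROKERS)


if __name__ == "__main__":
    print("inside :", bootstrap_servers(inside_cluster=True))
    print("outside:", bootstrap_servers(inside_cluster=False))
```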

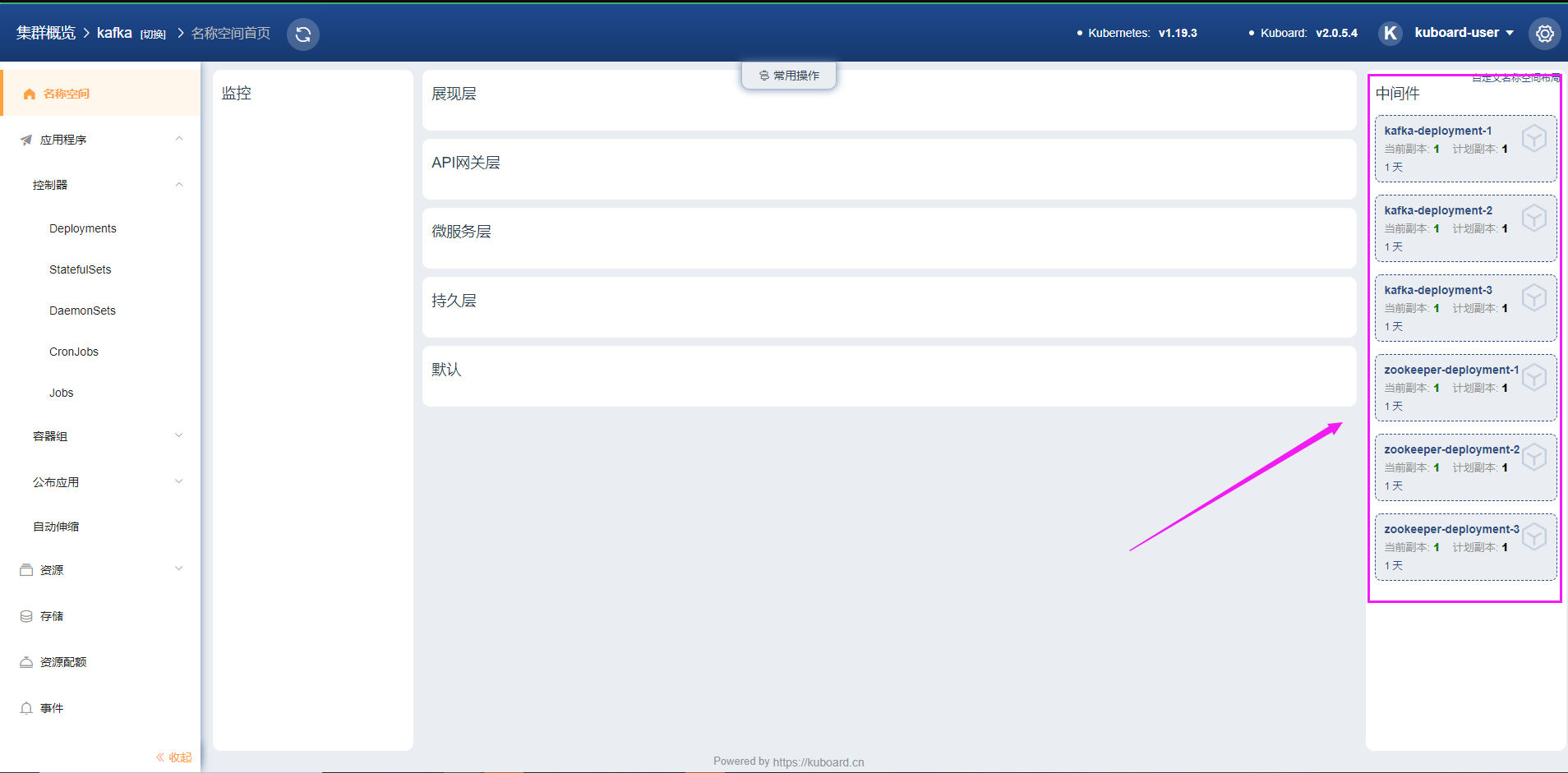

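You can also use Kuboard alongside the cluster to conveniently visualize it.

# This completes the deployment of a Kafka cluster without storage in the K8S cluster; no data is persisted.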