Deploying Kubernetes v1.23.16 with kubeadm

I. Update the package sources

1. Add the Docker and Kubernetes repositories

(I use the Aliyun mirror here: https://developer.aliyun.com/mirror/)

docker-ce

# Step 1: install the required system tools

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: add the repository

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: point any download.docker.com URLs in the repo file at the Aliyun mirror

sudo sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

# Step 4: refresh the yum cache (Docker CE itself is installed later)

sudo yum makecache fast

Kubernetes repository

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Note: the upstream site does not offer an official sync method, so the GPG check on the repository index may fail. If it does, install with yum install -y --nogpgcheck kubelet kubeadm kubectl

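2. Configure a Docker registry mirror (NetEase)

#### /etc/docker may not exist before Docker is installed, so create it first

mkdir -p /etc/docker

cat >/etc/docker/daemon.json << EOF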

{

"registry-mirrors": ["http://hub-mirror.c.163.com"]

}

EOF

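II. Prepare the environment

1. Upgrade the kernel (if needed)

Reference: https://www.cnblogs.com/deny/p/16373537.html

2. Configure hostnames and /etc/hosts

Run the following on the three nodes (adjust the hostnames and IPs to your environment).

#### Set the hostname on each of the three machines (run only the matching line on each)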

hostnamectl set-hostname k8s-master

hostnamectl set-hostname k8s-node1

hostnamectl set-hostname k8s-node2

#### Run on all three machines

cat >> /etc/hosts << EOF

192.168.245.141 k8s-master

192.168.245.142 k8s-node1

192.168.245.143 k8s-node2

EOF
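Running the `cat >> /etc/hosts` step twice appends the same entries twice. The following is a minimal sketch of an idempotent alternative; the helper name `add_host_entry` and the `HOSTS_FILE` variable are illustrative assumptions, not part of the original procedure.

```shell
#!/usr/bin/env bash
# Sketch: append a hosts entry only when the hostname is not already listed.
# add_host_entry and HOSTS_FILE are hypothetical names used for illustration.
HOSTS_FILE="${HOSTS_FILE:-/etc/hosts}"

add_host_entry() {
  local ip="$1" name="$2" file="${3:-$HOSTS_FILE}"
  # Skip when a non-comment line already contains this hostname as a whole word.
  if grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage (as root), with the addresses used in this post:
# add_host_entry 192.168.245.141 k8s-master
# add_host_entry 192.168.245.142 k8s-node1
# add_host_entry 192.168.245.143 k8s-node2
```

The check only looks at the hostname, so an existing entry with a different IP is left untouched rather than overwritten.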

3. Disable the firewall

systemctl stop firewalld && systemctl disable firewalld

4. Disable SELinux

setenforce 0 #### temporary, until the next reboot

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config #### permanent

reboot

5. Disable swap

swapoff -a #### temporary, until the next reboot

To disable swap permanently, comment out the swap entries in /etc/fstab:

sed -i 's/.*swap.*/#&/' /etc/fstab
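The `sed` command above comments out every line that mentions swap, so it is worth confirming the result before rebooting. A small sketch, assuming a helper named `active_swap_entries` (a hypothetical name) that lists any swap entries still active in an fstab-style file:

```shell
#!/usr/bin/env bash
# Sketch: list fstab lines that would still enable swap at boot.
# active_swap_entries is a hypothetical helper name.
active_swap_entries() {
  local file="${1:-/etc/fstab}"
  # Keep lines that are not comments and whose filesystem type (3rd field) is swap.
  awk '$1 !~ /^#/ && $3 == "swap"' "$file"
}

# Usage:
# active_swap_entries      # prints nothing once swap is disabled permanently
# cat /proc/swaps          # shows only the header line once swap is off at runtime
```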

6. Tune kernel parameters

cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.ipv4.ip_nonlocal_bind = 1

EOF

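#### sysctl -p without a file only reads /etc/sysctl.conf, and the net.bridge.* keys exist only once br_netfilter is loaded

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf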

echo "1" >/proc/sys/net/ipv4/ip_forward
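To confirm that the kernel parameters were actually applied, the values can be read back from /proc/sys. This is a sketch under the assumption that each key should equal 1, as in the k8s.conf file above; `sysctl_path` and `check_sysctl` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Sketch: read sysctl values back from /proc/sys to verify they took effect.
# sysctl_path and check_sysctl are hypothetical helper names.

# sysctl_path KEY: convert a dotted sysctl key to its /proc/sys path.
sysctl_path() {
  printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# check_sysctl KEY EXPECTED: succeed only if the running kernel reports EXPECTED.
check_sysctl() {
  local path
  path="$(sysctl_path "$1")"
  [ -r "$path" ] && [ "$(cat "$path")" = "$2" ]
}

# Usage:
# for key in net.bridge.bridge-nf-call-ip6tables net.bridge.bridge-nf-call-iptables \
#            net.ipv4.ip_forward net.ipv4.ip_nonlocal_bind; do
#   check_sysctl "$key" 1 || echo "not applied: $key"
# done
```

If the net.bridge.* keys are reported as not applied, the br_netfilter module is probably not loaded yet.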

7. Install the IPVS modules

yum install ipset ipvsadm -y

modprobe br_netfilter

#### Kernel 3.10 (use either this block or the kernel 5.4 block, not both) ####

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

#### Kernel 5.4 ####

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

################## On newer CentOS kernels, nf_conntrack_ipv4 has been replaced by nf_conntrack

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack
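The two ipvs.modules variants differ only in the conntrack module name, so the choice can be derived from the kernel release instead of being made by hand. The sketch below assumes the module was merged into nf_conntrack in Linux 4.19, which holds for mainline kernels; vendor kernels with backports may behave differently. `conntrack_module` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: pick the conntrack module name from the kernel release.
# Assumption: nf_conntrack_ipv4 was merged into nf_conntrack in Linux 4.19.
# conntrack_module is a hypothetical helper name.
conntrack_module() {
  local release="${1:-$(uname -r)}" major minor
  major="${release%%.*}"            # text before the first dot
  minor="${release#*.}"             # text after the first dot
  minor="${minor%%[!0-9]*}"         # keep only the leading digits
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

# Usage (as root):
# modprobe -- "$(conntrack_module)"
```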

8. Configure time synchronization

III. Install Docker and Kubernetes

Install docker-ce on all nodes

yum install -y docker-ce

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://4bsnyw1n.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-opts": {
    "max-size": "300m",
    "max-file": "3"
  }
}
EOF

systemctl enable docker && systemctl restart docker

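Note: Docker's cgroup driver must be changed, otherwise an error is reported.

With kubeadm (v1.22 and later) the kubelet cgroup driver defaults to systemd, while Docker's default cgroup driver is cgroupfs. The two must match: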

https://kubernetes.io/zh-cn/docs/tasks/administer-cluster/kubeadm/configure-cgroup-driver/
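After restarting Docker, the active cgroup driver can be read back to confirm that the daemon.json change took effect. A minimal sketch, assuming systemd is the driver the kubelet uses; `docker_cgroup_driver` and `drivers_match` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Sketch: compare Docker's cgroup driver with the one the kubelet is expected to use.
# docker_cgroup_driver and drivers_match are hypothetical helper names.

# docker_cgroup_driver: print the cgroup driver reported by the Docker daemon.
docker_cgroup_driver() {
  docker info --format '{{.CgroupDriver}}'
}

# drivers_match DOCKER_DRIVER [KUBELET_DRIVER]: succeed when both names are equal.
# KUBELET_DRIVER defaults to systemd (an assumption that matches this guide).
drivers_match() {
  [ "$1" = "${2:-systemd}" ]
}

# Usage on a node where Docker is running:
# drivers_match "$(docker_cgroup_driver)" || echo "cgroup driver mismatch"
```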

Operations on the master node

1. Install the packages

yum list --showduplicates kubeadm --disableexcludes=kubernetes

yum install -y kubeadm-1.23.16-0.x86_64 kubelet-1.23.16-0.x86_64 kubectl-1.23.16-0.x86_64

2. Pull the images

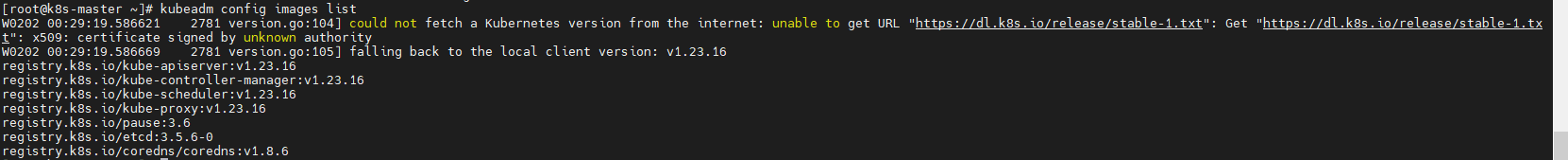

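Run kubeadm config images list first to see which images are required. The images are hosted on registries outside China, so pull them from a domestic mirror and then retag them.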

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.16

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.16

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.16

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.16

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.6-0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.16 k8s.gcr.io/kube-apiserver:v1.23.16

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.16 k8s.gcr.io/kube-controller-manager:v1.23.16

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.16 k8s.gcr.io/kube-scheduler:v1.23.16

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.16 k8s.gcr.io/kube-proxy:v1.23.16

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6 k8s.gcr.io/pause:3.6

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.6-0 k8s.gcr.io/etcd:3.5.6-0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6 k8s.gcr.io/coredns/coredns:v1.8.6

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.23.16

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.23.16

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.23.16

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.16

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.6-0

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

Note: if you have a private registry, you can push the images to it.

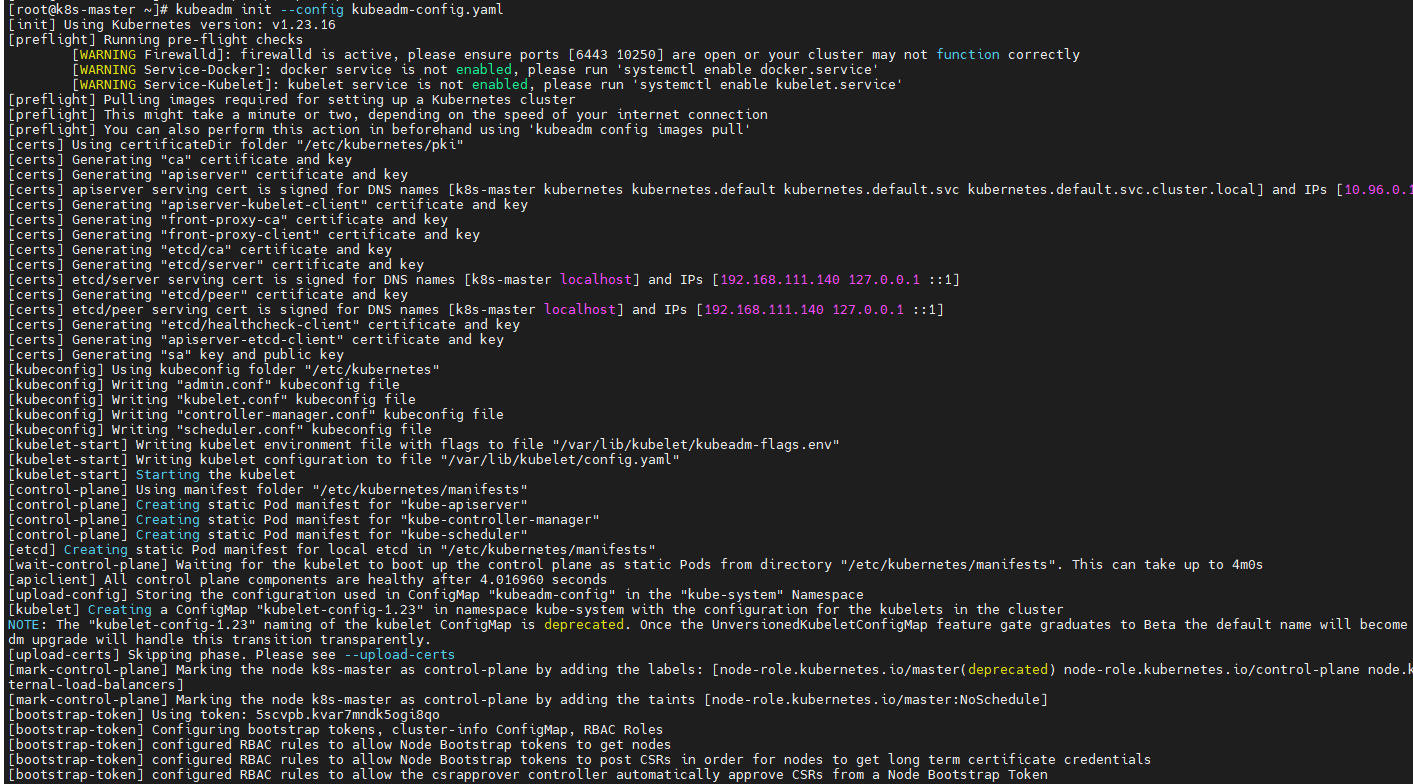
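The pull, tag, and rmi commands above repeat the same pattern for every image, so they can be written as a loop. The following is a sketch rather than the author's script; `target_name`, `mirror_images`, and the `DRY_RUN` switch are hypothetical names, while the image list is the one used in this post.

```shell
#!/usr/bin/env bash
# Sketch: pull each image from the mirror, retag it to the name kubeadm expects,
# then remove the mirror tag. Helper names and DRY_RUN are illustrative assumptions.
MIRROR="registry.cn-hangzhou.aliyuncs.com/google_containers"
IMAGES="kube-apiserver:v1.23.16 kube-controller-manager:v1.23.16 kube-scheduler:v1.23.16 kube-proxy:v1.23.16 pause:3.6 etcd:3.5.6-0 coredns:v1.8.6"

# target_name IMAGE: map a mirror image to its k8s.gcr.io name.
# CoreDNS sits under an extra coredns/ path segment, as in the tag commands above.
target_name() {
  case "$1" in
    coredns:*) echo "k8s.gcr.io/coredns/$1" ;;
    *)         echo "k8s.gcr.io/$1" ;;
  esac
}

# mirror_images: process every image; with DRY_RUN=1 the commands are only printed.
mirror_images() {
  local run="" image
  [ "${DRY_RUN:-0}" = "1" ] && run="echo"
  for image in $IMAGES; do
    $run docker pull "$MIRROR/$image" &&
    $run docker tag "$MIRROR/$image" "$(target_name "$image")" &&
    $run docker rmi "$MIRROR/$image" || return 1
  done
}

# Usage:
# DRY_RUN=1 mirror_images   # preview the commands
# mirror_images             # run them
```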

3. Initialize the cluster

Create the cluster initialization config file (kubeadm-config.yaml):

---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.23.16
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs

Initialize the cluster:

kubeadm init --config=kubeadm-config.yaml

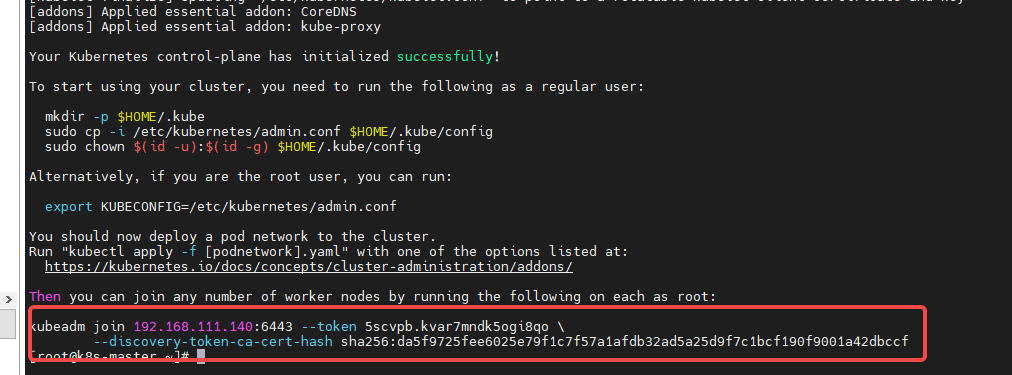

Note the token in the kubeadm join command printed here; the nodes need it to join the cluster.
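Bootstrap tokens expire after 24 hours by default, so a token noted during kubeadm init may no longer work when a node is added later. A minimal sketch for producing a fresh join command on the master; `new_join_command` and `is_bootstrap_token` are hypothetical helper names, while `kubeadm token create --print-join-command` is the actual kubeadm subcommand.

```shell
#!/usr/bin/env bash
# Sketch: regenerate a join command and sanity-check a token's format.
# new_join_command and is_bootstrap_token are hypothetical helper names.

# new_join_command: print a fresh "kubeadm join ..." line (run on the master as root).
new_join_command() {
  kubeadm token create --print-join-command
}

# is_bootstrap_token TOKEN: check the "6 characters, dot, 16 characters" format
# (lowercase letters and digits) that bootstrap tokens use.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Usage on the master:
# kubeadm token list     # show existing tokens and their expiry
# new_join_command       # print a join command with a new token
```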

To make kubectl work for a non-root user, run the following commands, which are also part of the kubeadm init output:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

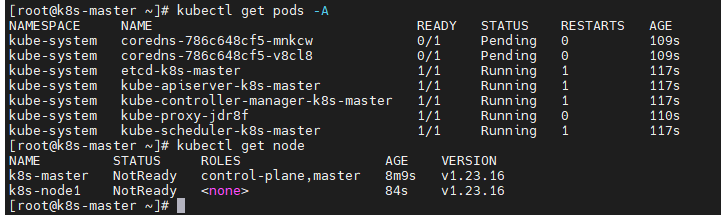

Check the pod status: kubectl get pods -A

The node has registered (see kubectl get nodes), but its status is NotReady because no network plugin is installed yet. Next we install flannel:

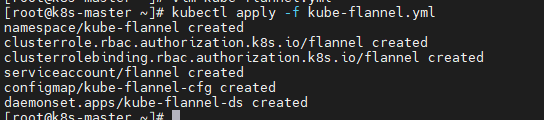

4. Install the network plugin

kubectl apply -f https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.1.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.20.2 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.20.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.20.2 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.20.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

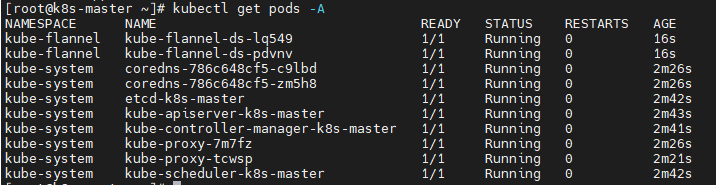

Check the pods and nodes again at this point.

Operations on the worker nodes

1. Install the packages

yum install -y docker-ce

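#### Pin the same version as on the master; an unpinned install pulls the latest packages from the repository

yum install -y kubelet-1.23.16-0.x86_64 kubeadm-1.23.16-0.x86_64 kubectl-1.23.16-0.x86_64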

2. Pull the images

Worker nodes only need the following images, plus the flannel network images.

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.16

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.16 k8s.gcr.io/kube-proxy:v1.23.16

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6 k8s.gcr.io/pause:3.6

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6 k8s.gcr.io/coredns/coredns:v1.8.6

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.23.16

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

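3. Join the cluster with the token shown when the master was initialized (use the kubeadm join command printed by your own kubeadm init; the address, token, and hash below are examples)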

kubeadm join 192.168.111.140:6443 --token 3beqmv.wue19gsnbf3conjn --discovery-token-ca-cert-hash sha256:63caffa37641eaf47a5b738e28d70daf6fcfc61a57c9291bd02597c6febcd755

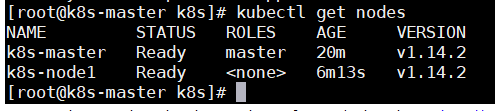

The newly added node is now visible on the master.

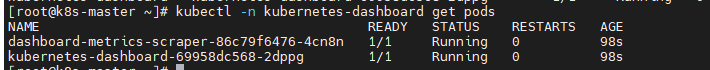
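A newly joined node usually stays NotReady for a short while until the flannel pod on it is running. The sketch below filters the output of `kubectl get nodes --no-headers` for nodes that are not yet Ready; `not_ready_nodes` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: list nodes whose STATUS column is not exactly "Ready".
# not_ready_nodes is a hypothetical helper; it reads
# `kubectl get nodes --no-headers` output on stdin.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage on the master:
# kubectl get nodes --no-headers | not_ready_nodes
#
# To block until every node is Ready (gives up after 5 minutes):
# kubectl wait --for=condition=Ready nodes --all --timeout=300s
```

A node that is cordoned shows "Ready,SchedulingDisabled" and is therefore also listed by this filter.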

IV. Deploy the Dashboard

Run the following on the master node:

1. Apply the Dashboard manifest

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque
---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
    # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
    # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.7.0
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.8
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

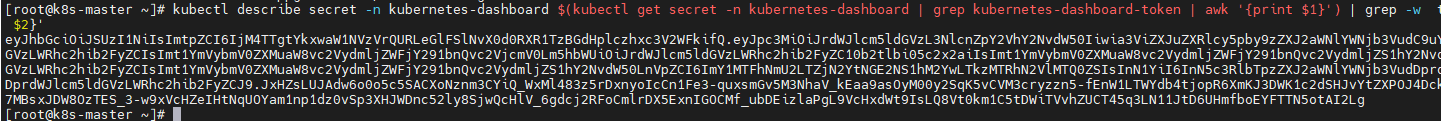

2. Get the authentication token for the Dashboard

kubectl describe secret -n kubernetes-dashboard $(kubectl get secret -n kubernetes-dashboard | grep kubernetes-dashboard-token | awk '{print $1}') | grep -w token | awk 'NR==3 {print $2}'

3. Log in to the Dashboard with the token printed above.

Alternatively, create a kubeconfig file and log in with it:

[root@k8s-master ~]# TOKEN=$(kubectl describe secret -n kubernetes-dashboard $(kubectl get secret -n kubernetes-dashboard | grep kubernetes-dashboard-token | awk '{print $1}') | grep -w token | awk 'NR==3 {print $2}')

[root@k8s-master ~]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.crt --embed-certs=true --server=10.2.57.17:6443 --kubeconfig=product.kubeconfig

Cluster "kubernetes" set.

[root@k8s-master ~]# kubectl config set-credentials dashboard_user --token=${TOKEN} --kubeconfig=product.kubeconfig

User "dashboard_user" set.

[root@k8s-master ~]# kubectl config set-context default --cluster=kubernetes --user=dashboard_user --kubeconfig=product.kubeconfig

Context "default" created.

[root@k8s-master ~]# kubectl config use-context default --kubeconfig=product.kubeconfig

Switched to context "default".

Cleaning up Kubernetes

Reset the Kubernetes services, reset the network, and delete the network configuration. Interface names can differ per host (with flannel's default VXLAN settings the device is named flannel.1), so check ip link before running the interface commands.

kubeadm reset

systemctl stop kubelet

systemctl stop docker

rm -rf /var/lib/cni/

rm -rf /var/lib/kubelet/*

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

ipvsadm -C

rm -rf /etc/cni/

rm -rf /etc/kubernetes/

rm -rf $HOME/.kube

ifconfig cni0 down

ifconfig flannel.4096 down

ifconfig docker0 down

ifconfig kube-ipvs0 down

ip link delete cni0

ip link delete flannel.4096

ip link delete kube-ipvs0

systemctl start docker
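The interface cleanup commands fail with an error when an interface does not exist, for example on a node that never ran kube-proxy in IPVS mode. A small sketch that skips missing interfaces; `iface_exists` and `remove_ifaces` are hypothetical helper names, and the interface list in the usage line is the one from this post.

```shell
#!/usr/bin/env bash
# Sketch: bring down and delete only the interfaces that are actually present.
# iface_exists and remove_ifaces are hypothetical helper names.

# iface_exists NAME: succeed if the kernel currently exposes this interface.
iface_exists() {
  [ -e "/sys/class/net/$1" ]
}

# remove_ifaces NAME...: for each existing interface, set it down and delete it.
remove_ifaces() {
  local iface
  for iface in "$@"; do
    if iface_exists "$iface"; then
      ip link set "$iface" down
      ip link delete "$iface"
    fi
  done
}

# Usage (as root, after kubeadm reset):
# remove_ifaces cni0 flannel.4096 kube-ipvs0
```

docker0 is left out of the usage line on purpose, since the original steps only bring it down and Docker is started again afterwards.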

Zhejiang Public Network Security Record No. 33010602011771
