Python35爬虫爬取百度歼击机词条

1. spider_main

# coding:utf8

from Spider_Test import url_manager, html_downloader, html_parser,html_outputer

class SpiderMain(object):

def __init__(self):

self.urls = url_manager.UrlManager()

self.downloader = html_downloader.HtmlDownloader()

self.parser = html_parser.HtmlParser()

self.outputer = html_outputer.HtmlOutPuter()

def craw(self, root_url):

count = 1

self.urls.add_new_url(root_url)

while self.urls.has_new_url:

try:

new_url = self.urls.get_new_url()

print("craw %d:%s" % (count,new_url))

html_cont = self.downloader.download(new_url)

new_urls, new_data = self.parser.parse(new_url, html_cont)

self.urls.add_new_urls(new_urls)

self.outputer.collect_data(new_data)

if count == 7:

break

count = count + 1

except:

print("craw fail")

self.outputer.output_html()

if __name__ == "__main__":

root_url = "http://baike.baidu.com/view/114149.htm"

obj_spider = SpiderMain()

obj_spider.craw(root_url)

2. url_manager

# coding:utf8

class UrlManager(object):

def __init__(self):

self.new_urls = set()

self.old_urls = set()

def add_new_url(self, url):

if url is None:

return

if url not in self.new_urls and url not in self.old_urls:

self.new_urls.add(url) # this place use the method

def add_new_urls(self, urls):

if urls is None or len(urls) == 0:

return

for url in urls:

self.add_new_url(url) # this place use the method

def has_new_url(self):

return len(self.new_urls)!= 0

def get_new_url(self):

new_url = self.new_urls.pop()

self.old_urls.add(new_url)

return new_url

3. html_downloader

# coding:utf8

from urllib import request

class HtmlDownloader(object):

def download(self, url):

if url is None:

return None

# Python3.5 different from Python2.7

response = request.urlopen(url)

if response.getcode() != 200:

return None

return response.read().decode('utf-8','ignore')

4. html_parser

# coding:utf8

import re

import urllib

from bs4 import BeautifulSoup

class HtmlParser(object):

def _get_new_urls(self, page_url, soup):

new_urls = set()

links = soup.find_all("a", href=re.compile(r"/view/\d+\.htm"))

for link in links:

new_url = link['href']

# different from Python2.7

new_full_url = urllib.parse.urljoin(page_url, new_url)

new_urls.add(new_full_url)

return new_urls

def _get_new_data(self, page_url, soup):

res_data = {}

res_data['url'] = page_url

# <dl class="lemmaWgt-lemmaTitle lemmaWgt-lemmaTitle-">

title_node = soup.find('dl',class_="lemmaWgt-lemmaTitle lemmaWgt-lemmaTitle-").find("h1")

res_data['title'] = title_node.get_text() # the key should be right or it would raise an error

summary_node = soup.find('div', class_ = "lemma-summary")

res_data['summary'] = summary_node.get_text()

return res_data

def parse(self, page_url, html_cont):

if page_url is None or html_cont is None:

return

soup = BeautifulSoup(html_cont, "html.parser", from_encoding="utf-8")

new_urls = self._get_new_urls(page_url, soup)

new_data = self._get_new_data(page_url, soup)

return new_urls, new_data

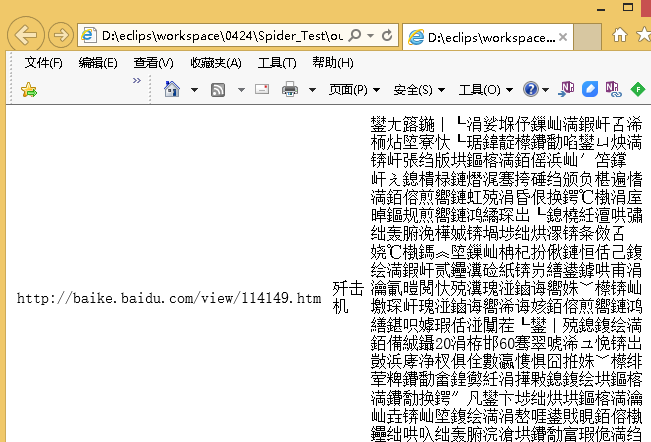

5. html_outputer

# coding:utf8

class HtmlOutPuter(object):

def __init__(self):

self.datas = []

def collect_data(self, data):

if data is None:

return

self.datas.append(data) # array use append method

def output_html(self):

fout = open('output.html','w')

fout.write("<html>")

fout.write("<body>")

fout.write("<table>")

for data in self.datas:

fout.write("<tr>")

fout.write("<td>%s</td>" % data["url"])

fout.write("<td>%s</td>" % data["title"]) # .encode('utf-8')不能编码成utf-8

# fout.write("<td>%s</td>" % data["summary"].encode('utf-8').decode('gbk','ignore'))

fout.write("<td>")

fout.write(data["summary"].encode('utf-8').decode('gbk','ignore')) # this place can't display some characters

fout.write("</td>")

fout.write("</tr>")

fout.write("</table>")

fout.write("</body>")

fout.write("</html")

fout.close()

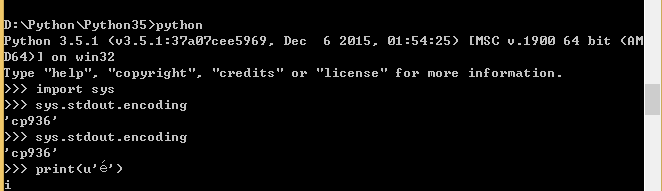

最终获取的html,有些字符不能显示,查资料,说是用命令cmd /K chcp 65001 但是用控制台查询codepage编码依然是,改动不了,说是windows 控制台的问题,先这样。