Services in Kubernetes (K8s)

The Service concept

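A Kubernetes Service defines an abstraction: a logical grouping of Pods together with a policy for accessing them, often referred to as a microservice. The set of Pods that a Service can reach is usually determined by a label selector.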

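A Service provides load balancing, but with one limitation in practice: it only offers Layer 4 load balancing and has no Layer 7 capabilities. Sometimes we need richer matching rules to forward requests (for example, by host name or URL path), and Layer 4 load balancing cannot do that.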

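Service types

Kubernetes has the following four Service types:

- ClusterIP: the default type. It automatically allocates a virtual IP that is reachable only from inside the cluster.
- NodePort: builds on ClusterIP by binding a port for the Service on every node, so the Service can be reached at `<NodeIP>:<NodePort>`.
- LoadBalancer: builds on NodePort by using the cloud provider to create an external load balancer that forwards requests to `<NodeIP>:<NodePort>`.
- ExternalName: brings a service that lives outside the cluster into the cluster so that it can be used directly from inside. No proxy of any kind is created (the cluster DNS answers with a CNAME record). This requires kube-dns from Kubernetes 1.7 or later.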
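To make the ExternalName type more concrete, here is a minimal sketch of such a Service. The Service name `my-database` and the host `db.example.com` are placeholders chosen for illustration and do not come from the cluster used in this post.

```yaml
# Hypothetical example: "my-database" and "db.example.com" are placeholder names.
apiVersion: v1
kind: Service
metadata:
  name: my-database
  namespace: default
spec:
  type: ExternalName
  # In-cluster lookups of my-database.default.svc.cluster.local
  # are answered with a CNAME record pointing at this external host name.
  externalName: db.example.com
```

Because the mapping happens purely in DNS, no cluster IP is allocated and kube-proxy is not involved.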

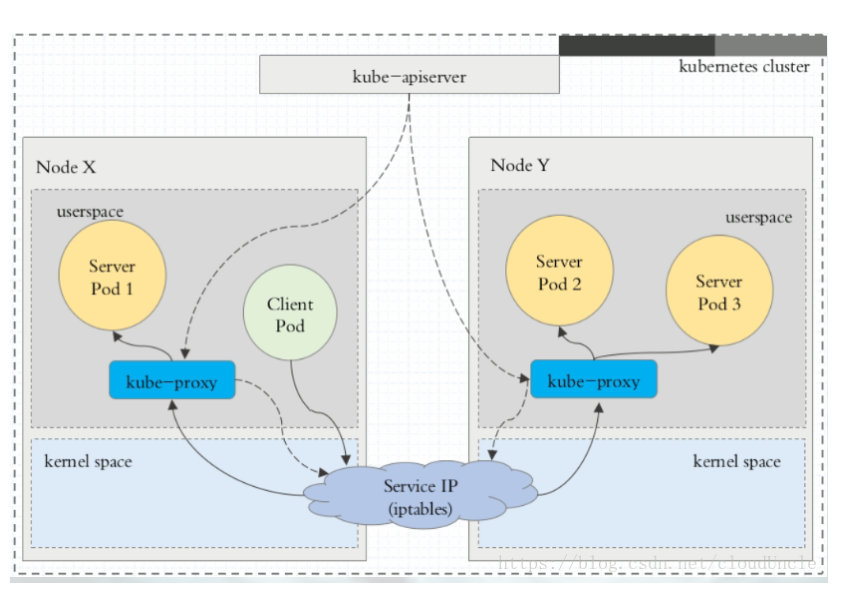

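VIP and Service proxies

In a Kubernetes cluster, every Node runs a kube-proxy process. kube-proxy is responsible for implementing a form of VIP (virtual IP) for Services of any type other than ExternalName. In Kubernetes v1.0, the proxy ran purely in userspace. Kubernetes v1.1 added the iptables proxy, but it was not the default mode. Since Kubernetes v1.2, the iptables proxy has been the default. Kubernetes v1.8.0-beta.0 added the ipvs proxy.

The ipvs proxy became generally available in Kubernetes v1.11. Note that upstream kube-proxy still defaults to iptables on Linux, so ipvs has to be enabled explicitly, as was done on the cluster used in this post.

In Kubernetes v1.0, a Service is a "Layer 4" (TCP/UDP over IP) construct. Kubernetes v1.1 added the Ingress API (beta) to represent "Layer 7" (HTTP) services.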
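As a rough illustration of what the "Layer 7" Ingress API adds on top of a Service, the sketch below routes HTTP requests by host name and path to a Service named `myapp` (the Service created later in this post). The Ingress name and the host `myapp.example.com` are assumptions for illustration. The manifest uses the `networking.k8s.io/v1` API, which is only available on Kubernetes 1.19 and later; older clusters would need the beta API instead, and an Ingress controller must be installed for the rules to take effect.

```yaml
# Hypothetical Ingress: the name and host below are placeholders.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: myapp-ingress
  namespace: default
spec:
  rules:
  - host: myapp.example.com        # match on the HTTP Host header
    http:
      paths:
      - path: /                    # match on the URL path prefix
        pathType: Prefix
        backend:
          service:
            name: myapp            # forward to the Service named myapp
            port:
              number: 80
```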

Proxy mode categories

Ⅰ. userspace proxy mode

Ⅱ. iptables proxy mode

Ⅲ. ipvs proxy mode

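In this mode, kube-proxy watches Kubernetes Service and Endpoints objects, calls the netlink interface to create the corresponding ipvs rules, and periodically synchronizes those rules with the Service and Endpoints objects so that the ipvs state matches the desired state. When a Service is accessed, traffic is redirected to one of the backend Pods.

Like iptables, ipvs is based on netfilter hook functions, but it uses hash tables as its underlying data structure and works in kernel space. This means ipvs can redirect traffic faster and performs better when synchronizing proxy rules. ipvs also offers more load-balancing algorithms, for example:

- rr: round-robin
- lc: least connection
- dh: destination hashing
- sh: source hashing
- sed: shortest expected delay
- nq: never queue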
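For reference, the proxy mode and the ipvs scheduler are selected through the kube-proxy configuration. The fragment below is a minimal sketch of that configuration. How it reaches kube-proxy depends on the installer (on kubeadm-based clusters it usually lives in the `kube-proxy` ConfigMap in `kube-system`), and the nodes need the ipvs kernel modules loaded, so treat this as an assumption about your setup rather than a recipe.

```yaml
# Minimal sketch of a kube-proxy configuration that enables ipvs mode.
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: "ipvs"          # use the ipvs proxy instead of the iptables default
ipvs:
  scheduler: "rr"     # one of the algorithms listed above, here round-robin
```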

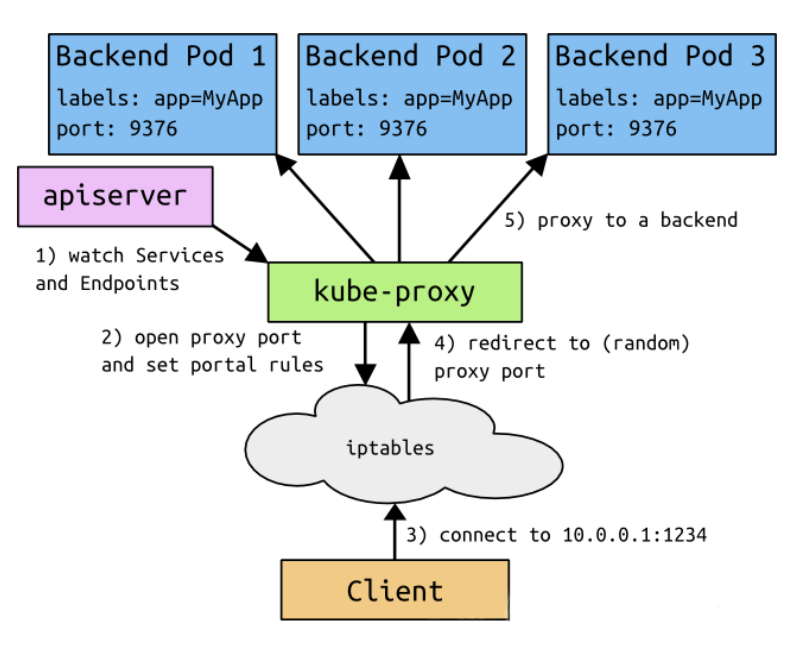

ClusterIP

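ClusterIP mainly relies on iptables on each node to forward traffic destined for the cluster IP and port to kube-proxy. kube-proxy then applies its own internal load balancing, looks up the addresses and ports of the Pods behind the Service, and forwards the data to the chosen Pod's address and port. (This describes the userspace-style data path; in iptables and ipvs modes the kernel forwards packets directly according to the rules that kube-proxy programs.)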

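The following components work together to implement what the diagram shows:

- apiserver: the user sends a request to create a Service to the apiserver with kubectl; the apiserver receives the request and stores the data in etcd.
- kube-proxy: every Kubernetes node runs a process called kube-proxy, which watches for changes to Services and Pods and writes those changes into the local iptables rules.
- iptables: uses NAT and related techniques to direct traffic for the virtual IP to the endpoints.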

    [root@k8s-master mnt]# kubectl get svc
    NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
    kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   3d23h
    [root@k8s-master mnt]# ipvsadm -L
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  10.96.0.1:https rr
      -> 192.168.180.130:sun-sr-https Masq    1      3          0
    TCP  10.96.0.10:domain rr
      -> 10.244.0.6:domain            Masq    1      0          0
      -> 10.244.0.7:domain            Masq    1      0          0
    TCP  10.96.0.10:9153 rr
      -> 10.244.0.6:9153              Masq    1      0          0
      -> 10.244.0.7:9153              Masq    1      0          0
    UDP  10.96.0.10:domain rr
      -> 10.244.0.6:domain            Masq    1      0          0
      -> 10.244.0.7:domain            Masq    1      0          0
    [root@k8s-master mnt]# ipvsadm -Ln
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  10.96.0.1:443 rr
      -> 192.168.180.130:6443         Masq    1      3          0
    TCP  10.96.0.10:53 rr
      -> 10.244.0.6:53                Masq    1      0          0
      -> 10.244.0.7:53                Masq    1      0          0
    TCP  10.96.0.10:9153 rr
      -> 10.244.0.6:9153              Masq    1      0          0
      -> 10.244.0.7:9153              Masq    1      0          0
    UDP  10.96.0.10:53 rr
      -> 10.244.0.6:53                Masq    1      0          0
      -> 10.244.0.7:53                Masq    1      0          0

As the output shows, requests to 10.96.0.1:443 are forwarded to port 6443 on the local host (192.168.180.130).

YAML files

    [root@k8s-master mnt]# cat svc-deployment.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: myapp-deploy
      namespace: default
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: myapp
          release: stabel
      template:
        metadata:
          labels:
            app: myapp
            release: stabel
            env: test
        spec:
          containers:
          - name: myapp
            image: wangyanglinux/myapp:v2
            imagePullPolicy: IfNotPresent
            ports:
            - name: http
              containerPort: 80
    [root@k8s-master mnt]# cat myapp-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: myapp
      namespace: default
    spec:
      type: ClusterIP
      selector:
        app: myapp
        release: stabel
      ports:
      - name: http
        port: 80
        targetPort: 80

Test

    [root@k8s-master mnt]# vim svc-deployment.yaml
    [root@k8s-master mnt]# kubectl apply -f svc-deployment.yaml
    deployment.apps/myapp-deploy created
    [root@k8s-master mnt]# kubectl get pod
    NAME                            READY   STATUS              RESTARTS   AGE
    myapp-deploy-55c8657767-5jzt4   1/1     Running             0          5s
    myapp-deploy-55c8657767-6tkc4   0/1     ContainerCreating   0          5s
    myapp-deploy-55c8657767-hw96w   0/1     ContainerCreating   0          5s
    [root@k8s-master mnt]# kubectl get pod
    NAME                            READY   STATUS    RESTARTS   AGE
    myapp-deploy-55c8657767-5jzt4   1/1     Running   0          12s
    myapp-deploy-55c8657767-6tkc4   1/1     Running   0          12s
    myapp-deploy-55c8657767-hw96w   1/1     Running   0          12s
    [root@k8s-master mnt]# kubectl get pod
    NAME                            READY   STATUS    RESTARTS   AGE
    myapp-deploy-55c8657767-5jzt4   1/1     Running   0          13s
    myapp-deploy-55c8657767-6tkc4   1/1     Running   0          13s
    myapp-deploy-55c8657767-hw96w   1/1     Running   0          13s
    [root@k8s-master mnt]# kubectl get pod -o wide
    NAME                            READY   STATUS    RESTARTS   AGE   IP            NODE         NOMINATED NODE   READINESS GATES
    myapp-deploy-55c8657767-5jzt4   1/1     Running   0          17s   10.244.1.26   k8s-node02   <none>           <none>
    myapp-deploy-55c8657767-6tkc4   1/1     Running   0          17s   10.244.2.29   k8s-node01   <none>           <none>
    myapp-deploy-55c8657767-hw96w   1/1     Running   0          17s   10.244.2.30   k8s-node01   <none>           <none>
    [root@k8s-master mnt]# curl 10.244.2.30
    Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
    [root@k8s-master mnt]# vim myapp-service.yaml
    [root@k8s-master mnt]# kubectl create -f myapp-service.yaml
    service/myapp created
    [root@k8s-master mnt]# kubectl get svc
    NAME         TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
    kubernetes   ClusterIP   10.96.0.1        <none>        443/TCP   3d23h
    myapp        ClusterIP   10.111.227.210   <none>        80/TCP    5s
    [root@k8s-master mnt]# ipvsadm -Ln
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  10.96.0.1:443 rr
      -> 192.168.180.130:6443         Masq    1      3          0
    TCP  10.96.0.10:53 rr
      -> 10.244.0.6:53                Masq    1      0          0
      -> 10.244.0.7:53                Masq    1      0          0
    TCP  10.96.0.10:9153 rr
      -> 10.244.0.6:9153              Masq    1      0          0
      -> 10.244.0.7:9153              Masq    1      0          0
    TCP  10.111.227.210:80 rr
      -> 10.244.1.26:80               Masq    1      0          0
      -> 10.244.2.29:80               Masq    1      0          0
      -> 10.244.2.30:80               Masq    1      0          0
    UDP  10.96.0.10:53 rr
      -> 10.244.0.6:53                Masq    1      0          0
      -> 10.244.0.7:53                Masq    1      0          0
    [root@k8s-master mnt]# curl 10.111.227.210
    Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
    [root@k8s-master mnt]# curl 10.111.227.210
    Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
    [root@k8s-master mnt]# curl 10.111.227.210
    Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-hw96w
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-6tkc4
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-5jzt4
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-hw96w
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-6tkc4
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-5jzt4
    [root@k8s-master mnt]# curl 10.111.227.210/hostname.html
    myapp-deploy-55c8657767-hw96w

Headless Service

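Sometimes you do not need or want load balancing and a single Service IP. In that case, you can create a Headless Service by setting the cluster IP (spec.clusterIP) to "None". Such a Service is not allocated a cluster IP, kube-proxy does not handle it, and the platform performs no load balancing or routing for it.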

    [root@k8s-master mnt]# cat svc-headless.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: myapp-headless
      namespace: default
    spec:
      selector:
        app: myapp
      clusterIP: "None"
      ports:
      - port: 80
        targetPort: 80

    [root@k8s-master mnt]# vim svc-headless.yaml
    [root@k8s-master mnt]# kubectl create -f svc-headless.yaml
    service/myapp-headless created
    [root@k8s-master mnt]# kubectl get svc
    NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
    kubernetes       ClusterIP   10.96.0.1        <none>        443/TCP   3d23h
    myapp            ClusterIP   10.111.227.210   <none>        80/TCP    8m
    myapp-headless   ClusterIP   None             <none>        80/TCP    7s
    [root@k8s-master mnt]# kubectl create -f svc-headless.yaml
    Error from server (AlreadyExists): error when creating "svc-headless.yaml": services "myapp-headless" already exists
    [root@k8s-master mnt]# kubectl get pod -n kube-system
    NAME                                 READY   STATUS    RESTARTS   AGE
    coredns-58cc8c89f4-9gn5g             1/1     Running   2          3d23h
    coredns-58cc8c89f4-xxzx7             1/1     Running   2          3d23h
    etcd-k8s-master                      1/1     Running   3          3d23h
    kube-apiserver-k8s-master            1/1     Running   3          3d23h
    kube-controller-manager-k8s-master   1/1     Running   6          3d23h
    kube-flannel-ds-amd64-4bc88          1/1     Running   3          3d23h
    kube-flannel-ds-amd64-lzwd6          1/1     Running   4          3d23h
    kube-flannel-ds-amd64-vw4vn          1/1     Running   5          3d23h
    kube-proxy-bs8sd                     1/1     Running   3          3d23h
    kube-proxy-nfvtt                     1/1     Running   2          3d23h
    kube-proxy-rn98b                     1/1     Running   3          3d23h
    kube-scheduler-k8s-master            1/1     Running   5          3d23h
    [root@k8s-master mnt]# dig
    ;; Warning: Message parser reports malformed message packet.
    ; <<>> DiG 9.11.4-P2-RedHat-9.11.4-9.P2.el7 <<>>
    ;; global options: +cmd
    ;; Got answer:
    ;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 326
    ;; flags: qr rd ra; QUERY: 1, ANSWER: 13, AUTHORITY: 0, ADDITIONAL: 27
    ;; WARNING: Message has 8 extra bytes at end
    ;; QUESTION SECTION:
    ;.                      IN  NS
    ;; ANSWER SECTION:
    .                    5  IN  NS    h.root-servers.net.
    .                    5  IN  NS    e.root-servers.net.
    .                    5  IN  NS    d.root-servers.net.
    .                    5  IN  NS    m.root-servers.net.
    .                    5  IN  NS    k.root-servers.net.
    .                    5  IN  NS    g.root-servers.net.
    .                    5  IN  NS    l.root-servers.net.
    .                    5  IN  NS    c.root-servers.net.
    .                    5  IN  NS    j.root-servers.net.
    .                    5  IN  NS    i.root-servers.net.
    .                    5  IN  NS    f.root-servers.net.
    .                    5  IN  NS    b.root-servers.net.
    .                    5  IN  NS    a.root-servers.net.
    ;; ADDITIONAL SECTION:
    a.root-servers.net.  5  IN  A     198.41.0.4
    b.root-servers.net.  5  IN  A     199.9.14.201
    c.root-servers.net.  5  IN  A     192.33.4.12
    d.root-servers.net.  5  IN  A     199.7.91.13
    e.root-servers.net.  5  IN  A     192.203.230.10
    f.root-servers.net.  5  IN  A     192.5.5.241
    g.root-servers.net.  5  IN  A     192.112.36.4
    h.root-servers.net.  5  IN  A     198.97.190.53
    i.root-servers.net.  5  IN  A     192.36.148.17
    j.root-servers.net.  5  IN  A     192.58.128.30
    k.root-servers.net.  5  IN  A     193.0.14.129
    l.root-servers.net.  5  IN  A     199.7.83.42
    m.root-servers.net.  5  IN  A     202.12.27.33
    a.root-servers.net.  5  IN  AAAA  2001:503:ba3e::2:30
    b.root-servers.net.  5  IN  AAAA  2001:500:200::b
    ;; Query time: 6 msec
    ;; SERVER: 192.168.180.2#53(192.168.180.2)
    ;; WHEN: 一 12月 23 22:16:55 CST 2019
    ;; MSG SIZE rcvd: 512
    [root@k8s-master mnt]# kubectl get pod -n kube-system -o wide
    NAME                                 READY   STATUS    RESTARTS   AGE     IP                NODE         NOMINATED NODE   READINESS GATES
    coredns-58cc8c89f4-9gn5g             1/1     Running   2          3d23h   10.244.0.7        k8s-master   <none>           <none>
    coredns-58cc8c89f4-xxzx7             1/1     Running   2          3d23h   10.244.0.6        k8s-master   <none>           <none>
    etcd-k8s-master                      1/1     Running   3          3d23h   192.168.180.130   k8s-master   <none>           <none>
    kube-apiserver-k8s-master            1/1     Running   3          3d23h   192.168.180.130   k8s-master   <none>           <none>
    kube-controller-manager-k8s-master   1/1     Running   6          3d23h   192.168.180.130   k8s-master   <none>           <none>
    kube-flannel-ds-amd64-4bc88          1/1     Running   3          3d23h   192.168.180.136   k8s-node02   <none>           <none>
    kube-flannel-ds-amd64-lzwd6          1/1     Running   4          3d23h   192.168.180.130   k8s-master   <none>           <none>
    kube-flannel-ds-amd64-vw4vn          1/1     Running   5          3d23h   192.168.180.135   k8s-node01   <none>           <none>
    kube-proxy-bs8sd                     1/1     Running   3          3d23h   192.168.180.135   k8s-node01   <none>           <none>
    kube-proxy-nfvtt                     1/1     Running   2          3d23h   192.168.180.136   k8s-node02   <none>           <none>
    kube-proxy-rn98b                     1/1     Running   3          3d23h   192.168.180.130   k8s-master   <none>           <none>
    kube-scheduler-k8s-master            1/1     Running   5          3d23h   192.168.180.130   k8s-master   <none>           <none>
    [root@k8s-master mnt]# dig -t A myapp-headless.default.svc.cluster.local. @10.244.0.7
    ; <<>> DiG 9.11.4-P2-RedHat-9.11.4-9.P2.el7 <<>> -t A myapp-headless.default.svc.cluster.local. @10.244.0.7
    ;; global options: +cmd
    ;; Got answer:
    ;; WARNING: .local is reserved for Multicast DNS
    ;; You are currently testing what happens when an mDNS query is leaked to DNS
    ;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 44455
    ;; flags: qr aa rd; QUERY: 1, ANSWER: 3, AUTHORITY: 0, ADDITIONAL: 1
    ;; WARNING: recursion requested but not available
    ;; OPT PSEUDOSECTION:
    ; EDNS: version: 0, flags:; udp: 4096
    ;; QUESTION SECTION:
    ;myapp-headless.default.svc.cluster.local. IN A
    ;; ANSWER SECTION:
    myapp-headless.default.svc.cluster.local. 30 IN A 10.244.2.29
    myapp-headless.default.svc.cluster.local. 30 IN A 10.244.1.26
    myapp-headless.default.svc.cluster.local. 30 IN A 10.244.2.30
    ;; Query time: 199 msec
    ;; SERVER: 10.244.0.7#53(10.244.0.7)
    ;; WHEN: 一 12月 23 22:18:21 CST 2019
    ;; MSG SIZE rcvd: 237
    [root@k8s-master mnt]# kubectl get pod -o wide
    NAME                            READY   STATUS    RESTARTS   AGE   IP            NODE         NOMINATED NODE   READINESS GATES
    myapp-deploy-55c8657767-5jzt4   1/1     Running   0          16m   10.244.1.26   k8s-node02   <none>           <none>
    myapp-deploy-55c8657767-6tkc4   1/1     Running   0          16m   10.244.2.29   k8s-node01   <none>           <none>
    myapp-deploy-55c8657767-hw96w   1/1     Running   0          16m   10.244.2.30   k8s-node01   <none>           <none>

The three A records returned for myapp-headless are exactly the IPs of the three myapp Pods: the headless Service name resolves straight to the Pods, with no cluster IP in between.
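The lookup above was sent from the master node directly to a CoreDNS Pod IP. The same check can also be run from inside the cluster, where the cluster DNS is used automatically. The Pod below is only a sketch: the name `dns-check` and the `busybox:1.28` image are assumptions, not part of the original setup.

```yaml
# Hypothetical one-shot Pod that resolves the headless Service from inside the cluster.
apiVersion: v1
kind: Pod
metadata:
  name: dns-check
  namespace: default
spec:
  restartPolicy: Never            # run the lookup once and stop
  containers:
  - name: dns-check
    image: busybox:1.28
    # Resolve the headless Service through the Pod's configured cluster DNS.
    command: ["nslookup", "myapp-headless.default.svc.cluster.local"]
```

After the Pod completes, `kubectl logs dns-check` should show the lookup result, which can be compared with the Pod IPs listed by `kubectl get pod -o wide`.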

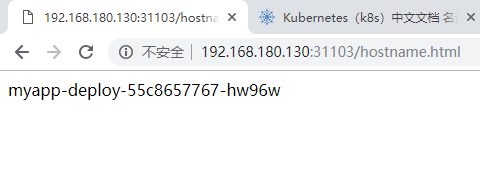

NodePort

NodePort works by opening a port on each node: traffic sent to that port is directed to kube-proxy, which then forwards it on to the corresponding Pod.

    [root@k8s-master mnt]# cat NodePort.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: myapp
      namespace: default
    spec:
      type: NodePort
      selector:
        app: myapp
        release: stabel
      ports:
      - name: http
        port: 80
        targetPort: 80

Test:

    [root@k8s-master mnt]# vim NodePort.yaml
    [root@k8s-master mnt]# kubectl create -f NodePort.yaml
    Error from server (AlreadyExists): error when creating "NodePort.yaml": services "myapp" already exists
    [root@k8s-master mnt]# kubectl apply -f NodePort.yaml
    Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply
    service/myapp configured
    [root@k8s-master mnt]# kubectl get svc
    NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
    kubernetes       ClusterIP   10.96.0.1        <none>        443/TCP        4d
    myapp            NodePort    10.111.227.210   <none>        80:31103/TCP   14m
    myapp-headless   ClusterIP   None             <none>        80/TCP         6m26s
    [root@k8s-master mnt]# netstat -antp |grep 31103
    tcp6       0      0 :::31103                :::*                    LISTEN      3974/kube-proxy
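In the run above the node port (31103) was picked automatically, because NodePort.yaml does not set one. If a predictable port is preferred, it can be pinned with the `nodePort` field. The variant below is a sketch: the value 30080 is an arbitrary choice and must fall within the cluster's node port range (30000-32767 by default) and not already be in use.

```yaml
# Variant of NodePort.yaml with an explicitly chosen node port (30080 is an example value).
apiVersion: v1
kind: Service
metadata:
  name: myapp
  namespace: default
spec:
  type: NodePort
  selector:
    app: myapp
    release: stabel
  ports:
  - name: http
    port: 80          # port on the cluster IP
    targetPort: 80    # container port on the Pods
    nodePort: 30080   # port opened on every node
```

With this in place the application would be reachable at `<NodeIP>:30080` on any node.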
