Kubernetes Autoscalers: HPA, VPA, and CA


1. Kubernetes Autoscalers

- HPA: Horizontal Pod Autoscaler

- VPA: Vertical Pod Autoscaler

- CA: Cluster Autoscaler

1.1 Kubernetes Horizontal Pod Autoscaling (HPA)

Official HPA documentation: https://kubernetes.io/zh/docs/tasks/run-application/horizontal-pod-autoscale/

1.1.1 HPA Overview

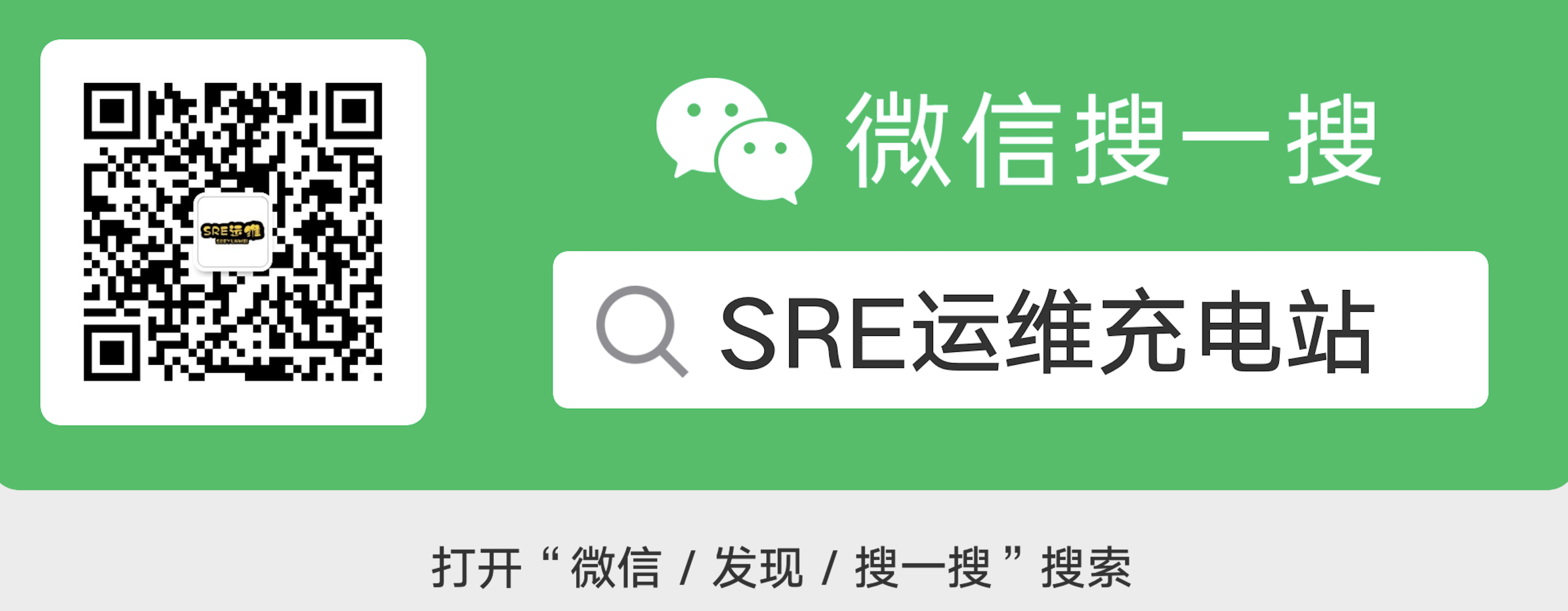

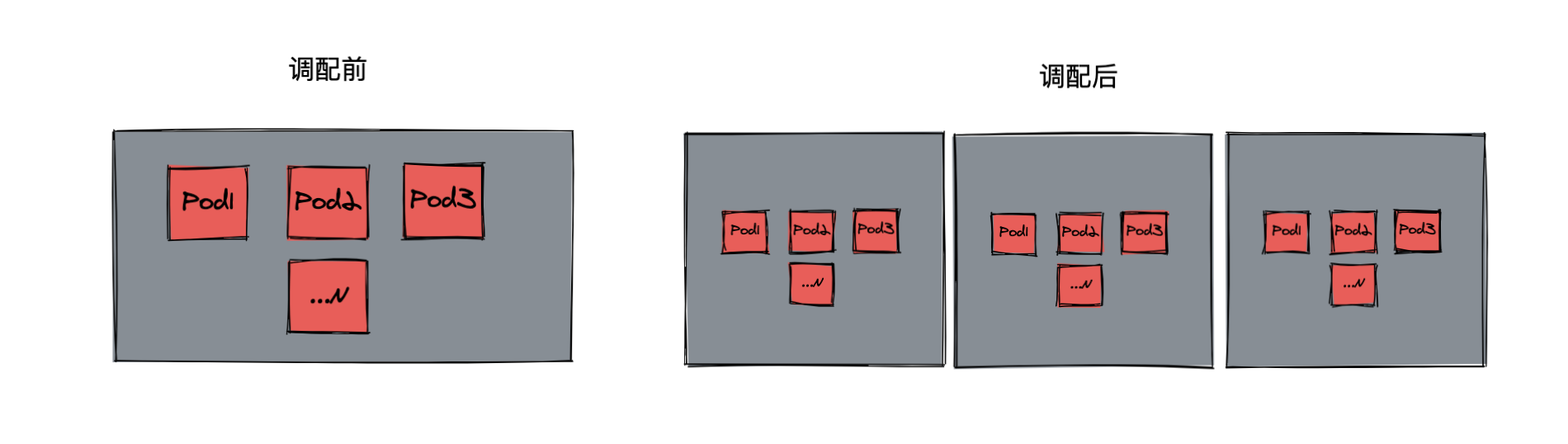

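- HPA (Horizontal Pod Autoscaler) automatically scales the number of Pods in a ReplicationController, Deployment, or ReplicaSet based on CPU utilization. It can also scale on custom metrics provided by other applications. Pod autoscaling does not apply to objects that cannot be scaled, such as DaemonSets.

- Horizontal Pod autoscaling is implemented as a Kubernetes API resource plus a controller, and the resource determines the controller's behavior. The controller periodically adjusts the replica count of the ReplicationController or Deployment so that the Pods' average CPU utilization matches the user-defined target.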

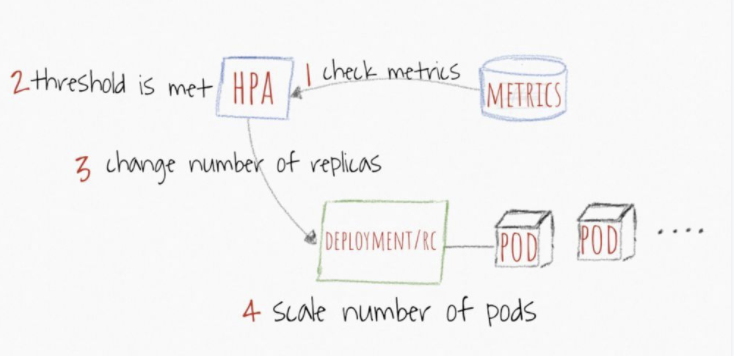

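- HPA periodically checks metrics such as CPU and memory and automatically adjusts the number of replicas in a Deployment, for example when traffic changes.

- In production, four kinds of metrics are widely used:

- 1. Resource metrics: CPU and memory utilization

- 2. Pod metrics: for example, network utilization and traffic

- 3. Object metrics: metrics of a specific object such as an Ingress, for example scaling containers by requests per second

- 4. Custom metrics: custom monitoring, for example defining a service response time and scaling out automatically once it crosses a threshold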
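The following rough sketch shows how the first three kinds are expressed in an HPA. It is a `spec.metrics` fragment for the `autoscaling/v2` API, modeled on the example in the official HPA walkthrough. The metric names `packets-per-second` and `requests-per-second` and the Ingress `main-route` are placeholders. Pods and Object metrics only work if a custom metrics adapter (for example Prometheus Adapter) serves the `custom.metrics.k8s.io` API in your cluster.

```yaml
# Fragment of HorizontalPodAutoscaler.spec (apiVersion: autoscaling/v2)
metrics:
# Resource metric: average CPU utilization across the target Pods
- type: Resource
  resource:
    name: cpu
    target:
      type: Utilization
      averageUtilization: 50
# Pods metric: averaged over all target Pods (placeholder metric name)
- type: Pods
  pods:
    metric:
      name: packets-per-second
    target:
      type: AverageValue
      averageValue: 1k
# Object metric: a single value describing another object (placeholder Ingress)
- type: Object
  object:
    metric:
      name: requests-per-second
    describedObject:
      apiVersion: networking.k8s.io/v1
      kind: Ingress
      name: main-route
    target:
      type: Value
      value: 10k
```

When several metrics are listed, the HPA computes a replica count for each one and uses the largest.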

1.1.2、HPA示例

- 1. First, deploy nginx with 2 replicas and a CPU request of 200m. To simplify testing, expose it through a NodePort Service in the namespace hpa.

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nginx
  namespace: hpa
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - image: nginx
        name: nginx
        resources:
          requests:
            cpu: 200m
            memory: 100Mi
---
apiVersion: v1
kind: Service
metadata:
  name: nginx
  namespace: hpa
spec:
  type: NodePort
  ports:
  - port: 80
    targetPort: 80
  selector:
    app: nginx

- 2. Check the deployment

# kubectl get po -n hpa

NAME READY STATUS RESTARTS AGE

nginx-5c87768612-48b4v 1/1 Running 0 8m38s

nginx-5c87768612-kfpkq 1/1 Running 0 8m38s

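- 3. Create the HPA

- Here we create an HPA that controls the Deployment from the previous step and keeps the Pod replica count between 1 and 10.

- The HPA adds or removes Pod replicas (through the Deployment) to keep the average CPU utilization of all Pods at about 50%. Utilization is measured against the CPU request, so with a 200m request this means roughly 100m of CPU per Pod.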

apiVersion: autoscaling/v2beta2
kind: HorizontalPodAutoscaler
metadata:
  name: nginx
  namespace: hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx
  minReplicas: 1
  maxReplicas: 10
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 50
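On newer clusters this manifest needs a different apiVersion. `autoscaling/v2` became stable in Kubernetes 1.23, and `autoscaling/v2beta2` was removed in 1.26. The sketch below is the same HPA written against `autoscaling/v2`, with the optional `behavior` field spelled out. The values are meant to mirror the documented defaults, so it should behave like the manifest above; tune them for faster or slower scaling.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: nginx
  namespace: hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx
  minReplicas: 1
  maxReplicas: 10
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 50
  behavior:
    scaleDown:
      # Use the highest recommendation from the last 5 minutes before shrinking
      stabilizationWindowSeconds: 300
      policies:
      - type: Percent
        value: 100
        periodSeconds: 15
    scaleUp:
      stabilizationWindowSeconds: 0
      # Per 15s, allow doubling or +4 Pods, whichever is larger (selectPolicy: Max)
      policies:
      - type: Percent
        value: 100
        periodSeconds: 15
      - type: Pods
        value: 4
        periodSeconds: 15
      selectPolicy: Max
```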

- 4. Check the result

# kubectl get hpa -n hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

nginx Deployment/nginx 0%/50% 1 10 2 50s

- 5. Run a load test and watch how the Pod count and the HPA change

# Run the load test

# ab -c 1000 -n 100000000 http://127.0.0.1:30792/

This is ApacheBench, Version 2.3 <$Revision: 1843412 $>

Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/

Licensed to The Apache Software Foundation, http://www.apache.org/

Benchmarking 127.0.0.1 (be patient)

# Watch the changes

# kubectl get hpa -n hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

nginx Deployment/nginx 303%/50% 1 10 7 12m

# kubectl get po -n hpa

NAME READY STATUS RESTARTS AGE

pod/nginx-5c87768612-6b4sl 1/1 Running 0 85s

pod/nginx-5c87768612-99mjb 1/1 Running 0 69s

pod/nginx-5c87768612-cls7r 1/1 Running 0 85s

pod/nginx-5c87768612-hhdr7 1/1 Running 0 69s

pod/nginx-5c87768612-jj744 1/1 Running 0 85s

pod/nginx-5c87768612-kfpkq 1/1 Running 0 27m

pod/nginx-5c87768612-xb94x 1/1 Running 0 69s
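If `ab` is not available on a node, the load can also be generated inside the cluster. The sketch below is a Pod version of the busybox loop from the official HPA walkthrough. The Pod name `load-generator` is arbitrary, and `http://nginx` relies on cluster DNS resolving the `nginx` Service in the same `hpa` namespace.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: load-generator
  namespace: hpa
spec:
  restartPolicy: Never
  containers:
  - name: load-generator
    image: busybox:1.28
    # Request the nginx Service in a tight loop until the Pod is deleted
    command: ["/bin/sh", "-c", "while sleep 0.01; do wget -q -O- http://nginx; done"]
```

Stop the load with `kubectl delete pod load-generator -n hpa`.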

- 6. TARGETS reached 303%, far above the 50% target, so the HPA scaled out and the Pod count grew to 7. Now wait for the load test to finish:

# kubectl get hpa -n hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

nginx Deployment/nginx 20%/50% 1 10 7 16m

--- N minutes later ---

# kubectl get hpa -n hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

nginx Deployment/nginx 0%/50% 1 10 7 18m

--- another N minutes later ---

# kubectl get po -n hpa

NAME READY STATUS RESTARTS AGE

nginx-5c87768612-jj744 1/1 Running 0 11m

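- 7. Summary of the HPA example

- CPU utilization has dropped to 0, so the HPA automatically scales the replicas down to 1.

- Why 1, and not the replicas: 2 set in the Deployment?

- Because the HPA was created with the range minReplicas: 1 to maxReplicas: 10. Once the HPA manages the Deployment, it sets the replica count itself, so scaling down goes as low as minReplicas, which is 1 here.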
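The following worked example shows where these numbers come from. It applies the replica formula from the official HPA documentation to the readings in this example. This is a simplified view. The real controller also skips scaling when the ratio is within a default 10% tolerance of 1.0, treats Pods that are not ready or lack metrics specially, and clamps the result to [minReplicas, maxReplicas].

```latex
\begin{aligned}
\text{desiredReplicas} &= \left\lceil \text{currentReplicas} \times \frac{\text{currentMetricValue}}{\text{desiredMetricValue}} \right\rceil \\[4pt]
\text{7 replicas at } 20\%:\quad \left\lceil 7 \times \tfrac{20}{50} \right\rceil &= \lceil 2.8 \rceil = 3 \\[4pt]
\text{7 replicas at } 0\%:\quad \left\lceil 7 \times \tfrac{0}{50} \right\rceil &= 0 \;\rightarrow\; \max(0,\ \text{minReplicas}) = 1
\end{aligned}
```

The 20% reading already justifies about 3 replicas, yet the output still showed 7. This matches the default 5-minute scale-down stabilization window (kube-controller-manager flag `--horizontal-pod-autoscaler-downscale-stabilization`). During that window the HPA keeps the highest recommendation it has seen.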

1.2 Kubernetes Vertical Pod Autoscaling (VPA)

VPA project: https://github.com/kubernetes/autoscaler/tree/master/vertical-pod-autoscaler

1.2.1 VPA Overview

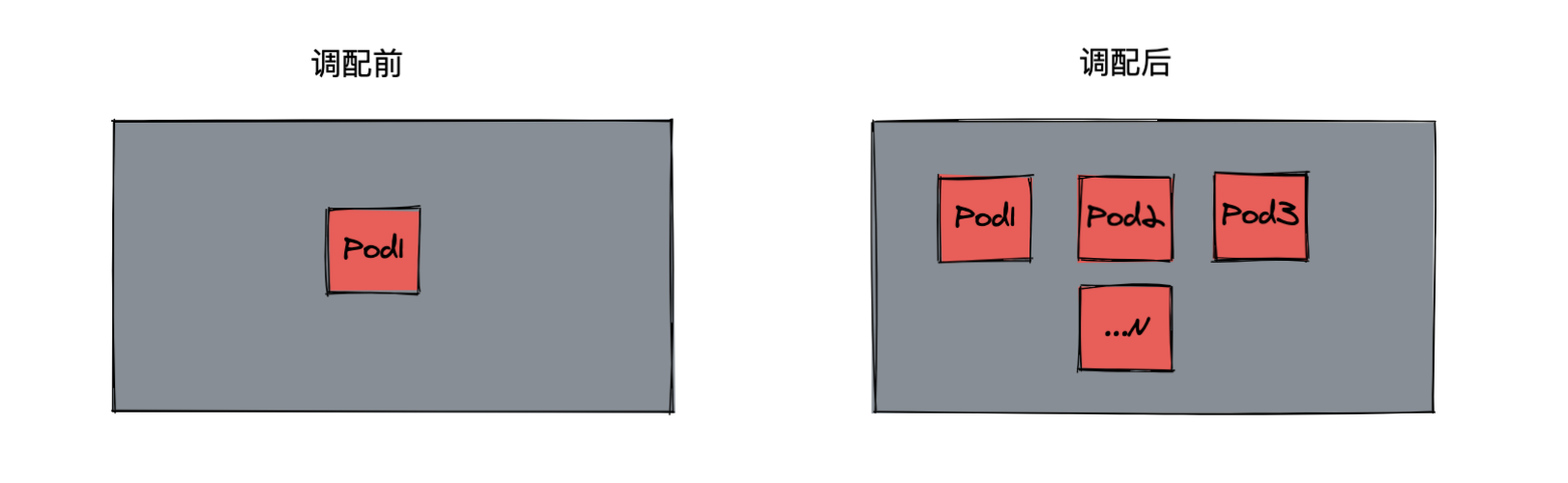

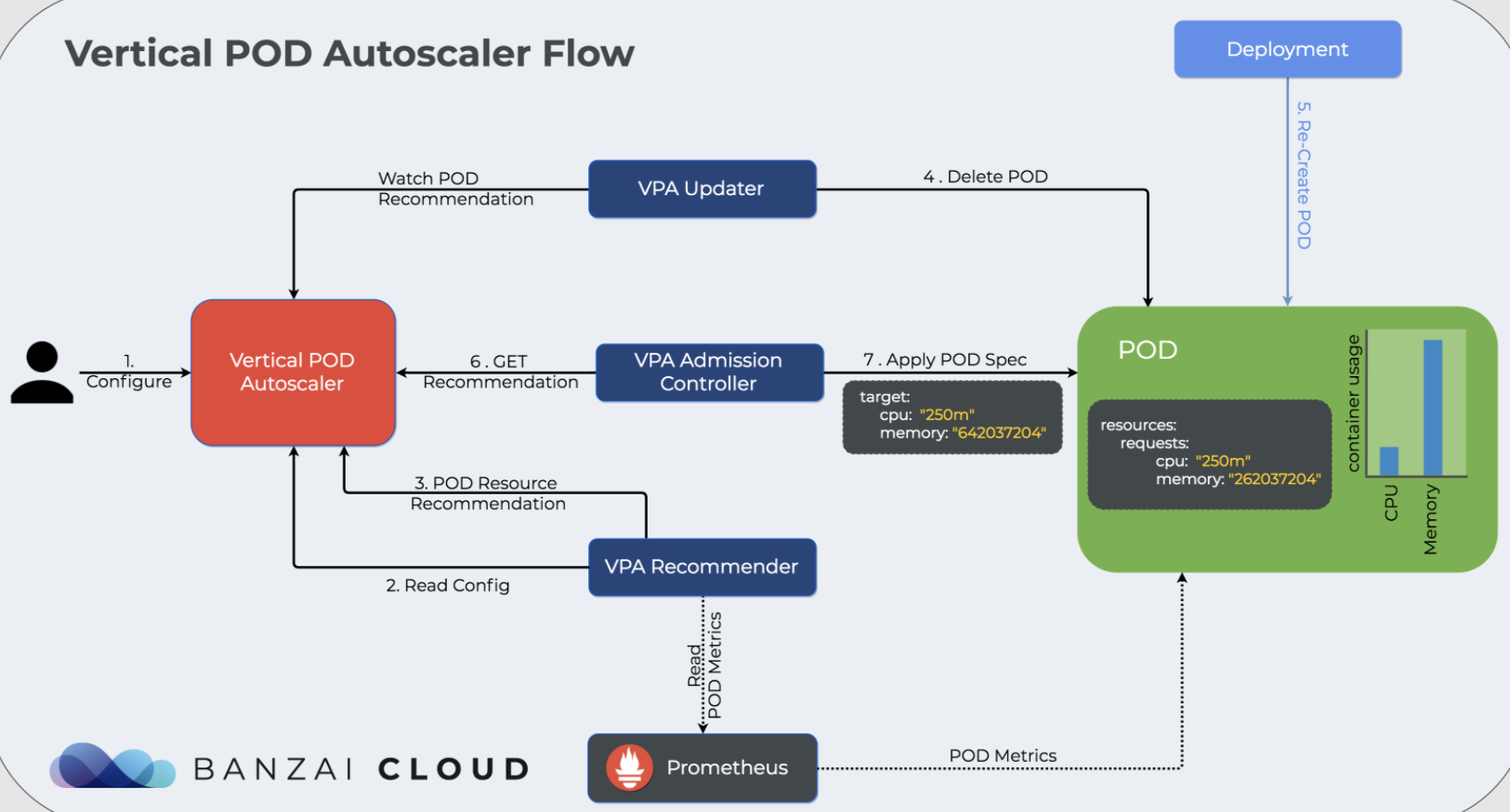

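- VPA (Vertical Pod Autoscaler) automatically sets containers' CPU and memory requests from their observed usage. This lets Pods be scheduled onto suitable nodes and gives each Pod the resources it needs.

- It can shrink containers that over-request resources and, at any time, raise the resources of containers that are under-provisioned.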

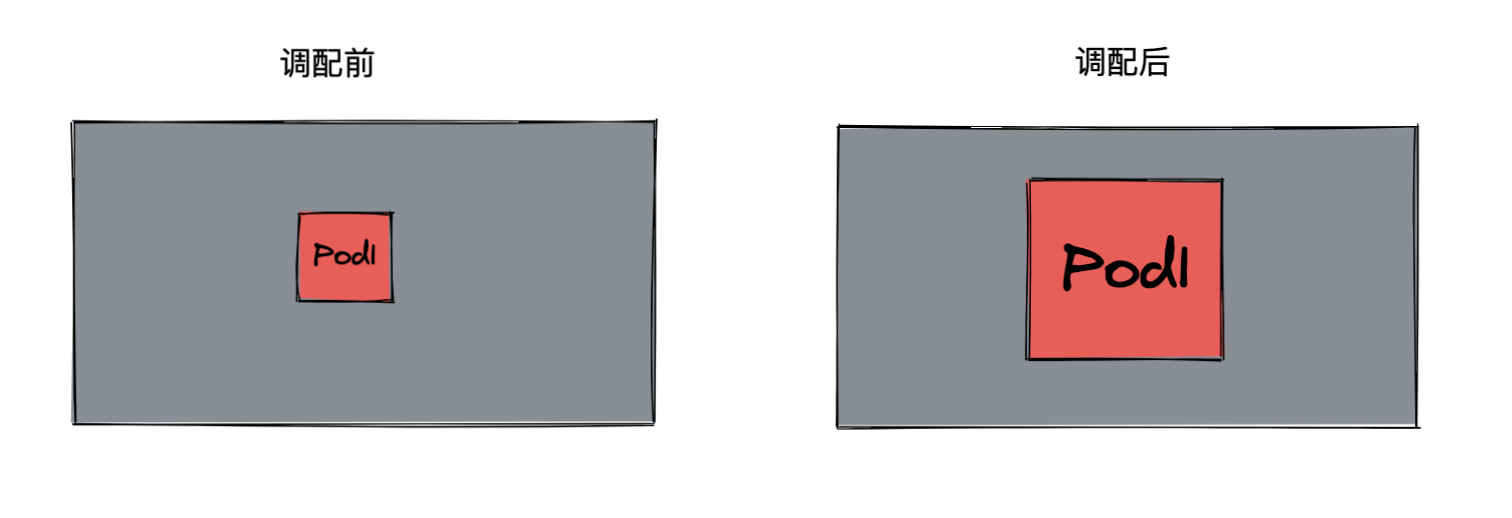

- Sometimes you cannot scale by adding Pods, for example with databases. In that case VPA can make each Pod bigger instead by adjusting its CPU and memory.
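For orientation before the hands-on part, here is a minimal VerticalPodAutoscaler sketch. It uses the `autoscaling.k8s.io/v1` API (the examples below use the older `v1beta2`), and `my-app` / `my-app-vpa` are placeholder names. The comments summarize the `updateMode` values as described in the VPA README. VPA itself runs as three components: the recommender, the updater, and the admission controller, all of which `vpa-up.sh` installs.

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: my-app-vpa            # placeholder name
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-app              # placeholder: the workload whose requests VPA manages
  updatePolicy:
    # "Off":      only compute recommendations, never touch Pods
    # "Initial":  apply recommendations only when Pods are created
    # "Recreate": evict running Pods whose requests drift from the recommendation
    # "Auto":     the default; at the time of writing it behaves like "Recreate"
    updateMode: "Initial"
  resourcePolicy:
    containerPolicies:
    - containerName: "*"      # apply to every container in the Pod
      controlledResources: ["cpu", "memory"]
```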

1.2.2 VPA Example

1.2.2.1 Deploy metrics-server

- 1. Download the deployment manifest

# wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.3.7/components.yaml

- 2. Edit components.yaml

- Changed the image address, replacing gcr.io with my own repository

- Changed the metrics-server startup arguments; otherwise it fails with the error unable to fully scrape metrics from source kubelet_summary…

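- name: metrics-server
  image: scofield/metrics-server:v0.3.7
  imagePullPolicy: IfNotPresent
  args:
  - --cert-dir=/tmp
  - --secure-port=4443
  command:
  - /metrics-server
  # Skip verifying the kubelets' serving certificates (acceptable for test clusters)
  - --kubelet-insecure-tls
  # Contact kubelets by node InternalIP instead of hostname
  - --kubelet-preferred-address-types=InternalIP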

- 3. Deploy and verify

# kubectl apply -f components.yaml

# kubectl get po -n kube-system

NAME READY STATUS RESTARTS AGE

metrics-server-7947cb98b6-xw6b8 1/1 Running 0 10m

# kubectl top nodes

1.2.2.2 Deploy vertical-pod-autoscaler

- 1. Clone the autoscaler repository

# git clone https://github.com/kubernetes/autoscaler.git

- 2. Deploy the VPA

# cd autoscaler/vertical-pod-autoscaler

# ./hack/vpa-up.sh

Warning: apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition

customresourcedefinition.apiextensions.k8s.io/verticalpodautoscalers.autoscaling.k8s.io created

customresourcedefinition.apiextensions.k8s.io/verticalpodautoscalercheckpoints.autoscaling.k8s.io created

clusterrole.rbac.authorization.k8s.io/system:metrics-reader created

clusterrole.rbac.authorization.k8s.io/system:vpa-actor created

clusterrole.rbac.authorization.k8s.io/system:vpa-checkpoint-actor created

clusterrole.rbac.authorization.k8s.io/system:evictioner created

clusterrolebinding.rbac.authorization.k8s.io/system:metrics-reader created

clusterrolebinding.rbac.authorization.k8s.io/system:vpa-actor created

clusterrolebinding.rbac.authorization.k8s.io/system:vpa-checkpoint-actor created

clusterrole.rbac.authorization.k8s.io/system:vpa-target-reader created

clusterrolebinding.rbac.authorization.k8s.io/system:vpa-target-reader-binding created

clusterrolebinding.rbac.authorization.k8s.io/system:vpa-evictionter-binding created

serviceaccount/vpa-admission-controller created

clusterrole.rbac.authorization.k8s.io/system:vpa-admission-controller created

clusterrolebinding.rbac.authorization.k8s.io/system:vpa-admission-controller created

clusterrole.rbac.authorization.k8s.io/system:vpa-status-reader created

clusterrolebinding.rbac.authorization.k8s.io/system:vpa-status-reader-binding created

serviceaccount/vpa-updater created

deployment.apps/vpa-updater created

serviceaccount/vpa-recommender created

deployment.apps/vpa-recommender created

Generating certs for the VPA Admission Controller in /tmp/vpa-certs.

Generating RSA private key, 2048 bit long modulus (2 primes)

............................................................................+++++

.+++++

e is 65537 (0x010001)

Generating RSA private key, 2048 bit long modulus (2 primes)

............+++++

...........................................................................+++++

e is 65537 (0x010001)

Signature ok

subject=CN = vpa-webhook.kube-system.svc

Getting CA Private Key

Uploading certs to the cluster.

secret/vpa-tls-certs created

Deleting /tmp/vpa-certs.

deployment.apps/vpa-admission-controller created

service/vpa-webhook created

- 3. Verify the deployment

# Both metrics-server and the VPA components are running normally

# kubectl get po -n kube-system

NAME READY STATUS RESTARTS AGE

metrics-server-7947cb98b6-xw6b8 1/1 Running 0 46m

vpa-admission-controller-7d87559549-g77h9 1/1 Running 0 10m

vpa-recommender-84bf7fb9db-65669 1/1 Running 0 10m

vpa-updater-79cc46c7bb-5p889 1/1 Running 0 10m

1.2.2.3 updateMode: "Off" (this mode only produces resource recommendations and does not update Pods)

- 1. Deploy an nginx service in namespace: vpa

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nginx
  namespace: vpa
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - image: nginx
        name: nginx
        resources:
          requests:
            cpu: 100m
            memory: 250Mi

- 2. Create a NodePort Service so the Pods are easy to load-test

# cat nginx-vpa-ingress.yaml

apiVersion: v1
kind: Service
metadata:
  name: nginx
  namespace: vpa
spec:
  type: NodePort
  ports:
  - port: 80
    targetPort: 80
  selector:
    app: nginx

# kubectl get svc -n vpa

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx NodePort 10.97.250.131 <none> 80:32621/TCP 55s

- 3. Create the VPA

- Start with updateMode: "Off", which only produces resource recommendations and does not update Pods

# cat nginx-vpa-demo.yaml

apiVersion: autoscaling.k8s.io/v1beta2
kind: VerticalPodAutoscaler
metadata:
  name: nginx-vpa
  namespace: vpa
spec:
  targetRef:
    apiVersion: "apps/v1"
    kind: Deployment
    name: nginx
  updatePolicy:
    updateMode: "Off"
  resourcePolicy:
    containerPolicies:
    - containerName: "nginx"
      minAllowed:
        cpu: "250m"
        memory: "100Mi"
      maxAllowed:
        cpu: "2000m"
        memory: "2048Mi"

- 4. Check the result

# kubectl get vpa -n vpa

NAME AGE

nginx-vpa 2m34s

- 5. Use describe to view the VPA details, focusing on Container Recommendations

# kubectl describe vpa nginx-vpa -n vpa

Name: nginx-vpa

Namespace: vpa

... (output omitted) ...

Update Policy:

Update Mode: Off

Status:

Conditions:

Last Transition Time: 2020-09-28T04:04:25Z

Status: True

Type: RecommendationProvided

Recommendation:

Container Recommendations:

Container Name: nginx

Lower Bound:

Cpu: 250m

Memory: 262144k

Target:

Cpu: 250m

Memory: 262144k

Uncapped Target:

Cpu: 25m

Memory: 262144k

Upper Bound:

Cpu: 803m

Memory: 840190575

Events: <none>

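- Lower Bound: the lower limit of the recommendation

- Target: the recommended value

- Upper Bound: the upper limit of the recommendation

- Uncapped Target: the recommendation VPA would give if no minimum or maximum bounds (minAllowed/maxAllowed) were set

The uncapped CPU recommendation is 25m. Because minAllowed sets cpu: "250m", the recommended CPU request (Target) is raised to 250m. The recommended memory request is 262144k bytes, which is 250Mi.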
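The following worked example expresses the relationship between Uncapped Target and Target as a clamp into the container policy's [minAllowed, maxAllowed] range, applied to the first recommendation above. Judging from the output, the same clamping also shapes Lower Bound (250m) and, in the later output, Upper Bound (Cpu: 2). The uncapped values 25m and 262144k (250Mi) most likely come from the VPA recommender's default per-Pod floors, `--pod-recommendation-min-cpu-millicores=25` and `--pod-recommendation-min-memory-mb=250`, since an idle nginx uses less than that.

```latex
\begin{aligned}
\text{Target} &= \min\bigl(\max(\text{Uncapped Target},\ \text{minAllowed}),\ \text{maxAllowed}\bigr) \\[4pt]
\text{CPU: } \min\bigl(\max(25\text{m},\ 250\text{m}),\ 2000\text{m}\bigr) &= 250\text{m} \\[4pt]
\text{Memory: } \min\bigl(\max(262144\text{k},\ 100\text{Mi}),\ 2048\text{Mi}\bigr) &= 262144\text{k} \\[4pt]
262144\text{k} = 262{,}144{,}000\ \text{bytes} &= 250 \times 2^{20}\ \text{bytes} = 250\text{Mi}
\end{aligned}
```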

- 6. Load-test nginx with the following command

# ab -c 100 -n 10000000 http://192.168.127.124:32621/

This is ApacheBench, Version 2.3 <$Revision: 1843412 $>

Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/

Licensed to The Apache Software Foundation, http://www.apache.org/

Benchmarking 192.168.127.124 (be patient)

Completed 1000000 requests

Completed 2000000 requests

Completed 3000000 requests

- 7. After a while, check how the VPA recommendation has changed

# kubectl describe vpa nginx-vpa -n vpa |tail -n 20

Conditions:

Last Transition Time: 2021-06-28T04:04:25Z

Status: True

Type: RecommendationProvided

Recommendation:

Container Recommendations:

Container Name: nginx

Lower Bound:

Cpu: 250m

Memory: 262144k

Target:

Cpu: 476m

Memory: 262144k

Uncapped Target:

Cpu: 476m

Memory: 262144k

Upper Bound:

Cpu: 2

Memory: 387578728

Events: <none>

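- The output shows that VPA now recommends Cpu: 476m for the Pod. Because updateMode is "Off", the Pods are not updated.

1.2.2.4 updateMode: "Auto" (when a running Pod's resources fall outside the VPA recommendation, VPA evicts the Pod and redeploys it with sufficient resources)

- 1. Set updateMode: "Auto" and see what VPA does

- Also change the resources to memory: 50Mi, cpu: 100m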

# kubectl apply -f nginx-vpa.yaml

deployment.apps/nginx created

# cat nginx-vpa.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nginx
  namespace: vpa
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - image: nginx
        name: nginx
        resources:
          requests:
            cpu: 100m
            memory: 50Mi

# kubectl get po -n vpa

NAME READY STATUS RESTARTS AGE

nginx-7ff65f974c-f4vgl 1/1 Running 0 114s

nginx-7ff65f974c-v9ccx 1/1 Running 0 114s

- 2. Deploy the VPA again. Compared with the previous nginx-vpa-demo.yaml, only updateMode: "Auto" and name: nginx-vpa-2 have changed.

# cat nginx-vpa-demo.yaml

apiVersion: autoscaling.k8s.io/v1beta2
kind: VerticalPodAutoscaler
metadata:
  name: nginx-vpa-2
  namespace: vpa
spec:
  targetRef:
    apiVersion: "apps/v1"
    kind: Deployment
    name: nginx
  updatePolicy:
    updateMode: "Auto"
  resourcePolicy:
    containerPolicies:
    - containerName: "nginx"
      minAllowed:
        cpu: "250m"
        memory: "100Mi"
      maxAllowed:
        cpu: "2000m"
        memory: "2048Mi"

# kubectl apply -f nginx-vpa-demo.yaml

verticalpodautoscaler.autoscaling.k8s.io/nginx-vpa-2 created

# kubectl get vpa -n vpa

NAME AGE

nginx-vpa-2 9s

- 3. Run the load test again

# ab -c 1000 -n 100000000 http://192.168.127.124:32621/

- 4. After a while, use describe to view the VPA details, again focusing only on Container Recommendations

# kubectl describe vpa nginx-vpa-2 -n vpa |tail -n 30

Min Allowed:

Cpu: 250m

Memory: 100Mi

Target Ref:

API Version: apps/v1

Kind: Deployment

Name: nginx

Update Policy:

Update Mode: Auto

Status:

Conditions:

Last Transition Time: 2021-06-28T04:48:25Z

Status: True

Type: RecommendationProvided

Recommendation:

Container Recommendations:

Container Name: nginx

Lower Bound:

Cpu: 250m

Memory: 262144k

Target:

Cpu: 476m

Memory: 262144k

Uncapped Target:

Cpu: 476m

Memory: 262144k

Upper Bound:

Cpu: 2

Memory: 262144k

Events: <none>

- Target is now Cpu: 476m, Memory: 262144k

- 5. Check the events

# kubectl get event -n vpa

LAST SEEN TYPE REASON OBJECT MESSAGE

33m Normal Pulling pod/nginx-7ff65f974c-f4vgl Pulling image "nginx"

33m Normal Pulled pod/nginx-7ff65f974c-f4vgl Successfully pulled image "nginx" in 15.880996269s

33m Normal Created pod/nginx-7ff65f974c-f4vgl Created container nginx

33m Normal Started pod/nginx-7ff65f974c-f4vgl Started container nginx

26m Normal EvictedByVPA pod/nginx-7ff65f974c-f4vgl Pod was evicted by VPA Updater to apply resource recommendation.

26m Normal Killing pod/nginx-7ff65f974c-f4vgl Stopping container nginx

35m Normal Scheduled pod/nginx-7ff65f974c-hnzr5 Successfully assigned vpa/nginx-7ff65f974c-hnzr5 to k8s-node005

35m Normal Pulling pod/nginx-7ff65f974c-hnzr5 Pulling image "nginx"

34m Normal Pulled pod/nginx-7ff65f974c-hnzr5 Successfully pulled image "nginx" in 40.750855715s

34m Normal Scheduled pod/nginx-7ff65f974c-v9ccx Successfully assigned vpa/nginx-7ff65f974c-v9ccx to k8s-node004

33m Normal Pulling pod/nginx-7ff65f974c-v9ccx Pulling image "nginx"

33m Normal Pulled pod/nginx-7ff65f974c-v9ccx Successfully pulled image "nginx" in 15.495315629s

33m Normal Created pod/nginx-7ff65f974c-v9ccx Created container nginx

33m Normal Started pod/nginx-7ff65f974c-v9ccx Started container nginx

- The events show that VPA performed EvictedByVPA. It stopped the nginx Pod and started a new one with the VPA-recommended resources. Describing the new nginx Pod confirms this:

# kubectl describe po nginx-7ff65f974c-2m9zl -n vpa

Name: nginx-7ff65f974c-2m9zl

Namespace: vpa

Priority: 0

Node: k8s-node004/192.168.100.184

Start Time: Mon, 28 Jun 2021 00:46:19 -0400

Labels: app=nginx

pod-template-hash=7ff65f974c

Annotations: cni.projectcalico.org/podIP: 100.67.191.53/32

vpaObservedContainers: nginx

vpaUpdates: Pod resources updated by nginx-vpa: container 0: cpu request, memory request

Status: Running

IP: 100.67.191.53

IPs:

IP: 100.67.191.53

Controlled By: ReplicaSet/nginx-7ff65f974c

Containers:

nginx:

Container ID: docker://c96bcd07f35409d47232a0bf862a76a56352bd84ef10a95de8b2e3f6681df43d

Image: nginx

Image ID: docker-pullable://nginx@sha256:c628b67d21744fce822d22fdcc0389f6bd763daac23a6b77147d0712ea7102d0

Port: <none>

Host Port: <none>

State: Running

Started: Mon, 28 Jun 2021 00:46:38 -0400

Ready: True

Restart Count: 0

Requests:

cpu: 476m

memory: 262144k

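- The key part is Requests: cpu: 476m, memory: 262144k

- Now compare this with the Deployment manifest:

requests:
  cpu: 100m
  memory: 50Mi

- The Deployment manifest itself is unchanged. The new requests were written into the replacement Pod when it was created, as the vpaUpdates annotation shows. As the service load changes, the VPA recommendation keeps changing. Whenever a running Pod's resources fall outside the recommendation, VPA evicts the Pod and redeploys it with sufficient resources.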
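Because Auto mode applies recommendations by evicting Pods, a PodDisruptionBudget can help protect availability. The VPA updater evicts through the Kubernetes Eviction API, which honors PDBs. A sketch like the following keeps at least one nginx replica available while VPA replaces Pods. The name `nginx-pdb` is arbitrary, and `policy/v1` requires Kubernetes 1.21 or later. As far as I know, the updater also leaves workloads with fewer than two live replicas alone by default (its `--min-replicas` flag).

```yaml
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: nginx-pdb
  namespace: vpa
spec:
  minAvailable: 1          # do not allow evictions that would leave zero ready nginx Pods
  selector:
    matchLabels:
      app: nginx           # matches the Deployment's Pod labels
```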

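1.2.2.5 VPA Limitations & Advantages

- Limitations

- Should not be combined with an HPA (Horizontal Pod Autoscaler) that scales on the same CPU or memory metrics;

- Advantages

- Pods use only the resources they need, so cluster nodes are used efficiently;

- Pods are scheduled onto nodes that have suitable available resources;

- There is no need to run benchmark jobs to find suitable CPU and memory requests;

- VPA adjusts CPU and memory requests at any time without manual intervention, which reduces maintenance time;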

1.3 Kubernetes Cluster Autoscaler (CA)

CA project: https://github.com/kubernetes/autoscaler/tree/master/cluster-autoscaler

Node initialization: https://kubernetes.io/docs/reference/command-line-tools-reference/kubelet-tls-bootstrapping/

1.3.1 CA Overview

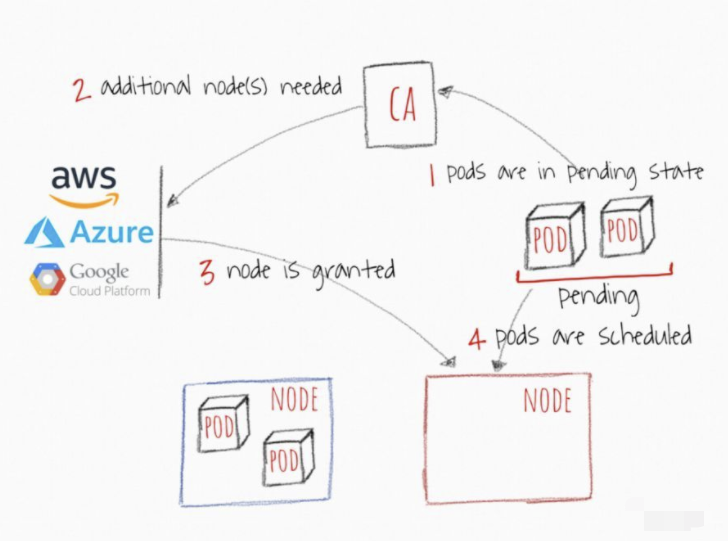

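- The Cluster Autoscaler (CA) scales cluster nodes based on pending Pods. It periodically checks for pending Pods. If more resources are needed and the enlarged cluster stays within the user-provided constraints, it grows the cluster. CA works through the cloud provider to request more nodes or release idle ones, and supports GCP, AWS, and Azure. Version 1.0 (GA) was released with Kubernetes 1.8.

- When the cluster runs short of resources, CA automatically provisions new compute resources and adds them to the cluster: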

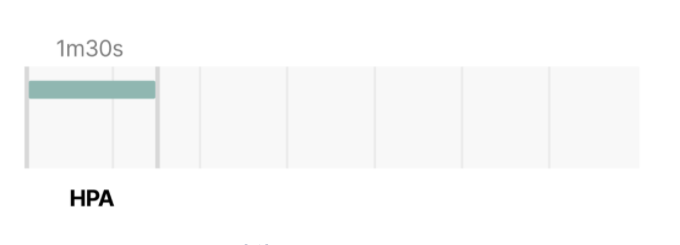

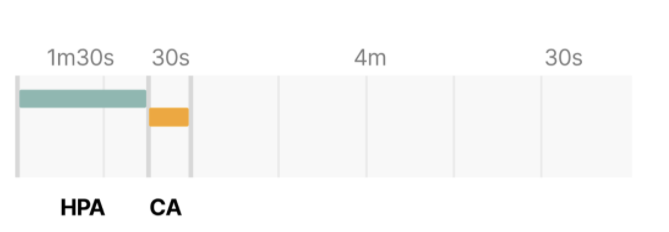

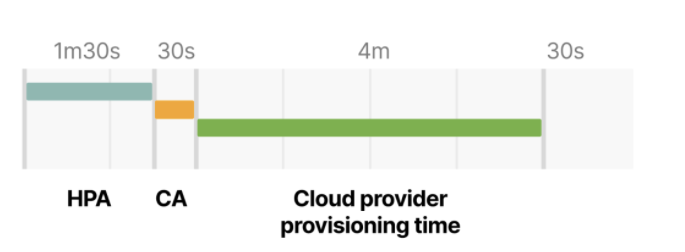

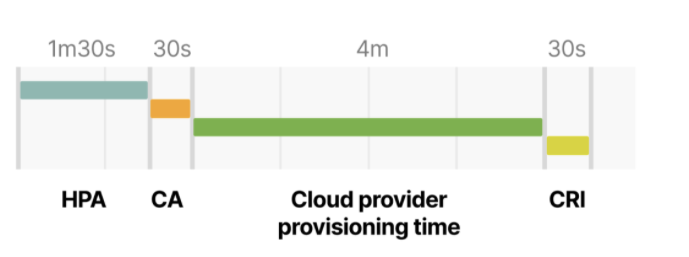
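CA's behavior, including the user-provided size constraints, is set through command-line flags on the cluster-autoscaler container. Below is a rough sketch of that container for an AWS Auto Scaling group. The node group name `my-node-group`, the image tag `vX.Y.Z`, and the chosen values are placeholders; the flags themselves are documented in the CA FAQ. The exact set you need depends on the cloud provider.

```yaml
# Fragment of the cluster-autoscaler Deployment's Pod spec (AWS example)
containers:
- name: cluster-autoscaler
  # Placeholder tag: pick the CA release whose minor version matches the cluster
  image: registry.k8s.io/autoscaling/cluster-autoscaler:vX.Y.Z
  command:
  - ./cluster-autoscaler
  - --cloud-provider=aws
  # min:max:name of a node group CA may resize (placeholder name)
  - --nodes=1:10:my-node-group
  # How often CA looks for unschedulable Pods (10s is the default)
  - --scan-interval=10s
  # How long a node must be unneeded before CA removes it (10m is the default)
  - --scale-down-unneeded-time=10m
  # Strategy for choosing which node group to expand
  - --expander=least-waste
```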

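1.4 Lead Time of Pod Autoscaling

- Four factors:

- 1. HPA response time

- 2. CA response time

- 3. Node initialization time

- 4. Pod creation time

- By default, the kubelet scrapes the CPU and memory usage of Pods every 10 seconds;

- Every minute, Metrics Server exposes the aggregated metrics to the other Kubernetes components through the Kubernetes API;

- CA checks for unschedulable Pods every 10 seconds. [10]

- With fewer than 100 nodes and at most 30 Pods per node, CA reacts within 30s, with an average latency of about 5s;

- With 100 to 1000 nodes, it reacts within 60s, with an average latency of about 15s;

- Node provisioning time depends on the cloud provider, usually 3 to 5 minutes;

- The container runtime creates the Pod: starting the container takes milliseconds, and pulling the image takes seconds. Without image caching, this takes from a few seconds to 1 minute, depending on the size and number of layers;

- For a small cluster, the worst case is 6 minutes 30 seconds; for clusters with more than 100 nodes, it can reach 7 minutes;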

HPA delay: 1m30s +

CA delay: 0m30s +

Cloud provider: 4m +

Container runtime: 0m30s +

=========================

Total 6m30s

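- In a sudden burst, such as a traffic spike, are you willing to wait those 7 minutes? How can the time be reduced?

(Even if you lower the settings below, you are still limited by the cloud provider's provisioning time.)

- HPA sync period: 15 seconds by default, controlled by the --horizontal-pod-autoscaler-sync-period flag;

- Metrics Server scrape interval: 60 seconds by default, controlled by --metric-resolution;

- CA scan interval: 10 seconds by default, controlled by --scan-interval;

- Cache images on the nodes, for example with tools such as kube-fledged;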
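For orientation, here is roughly where those three intervals are set. This sketch assumes a kubeadm-style cluster in which kube-controller-manager runs as a static Pod. The values are examples rather than recommendations, and shorter intervals add load on the API server and the kubelets.

```yaml
# 1) kube-controller-manager, e.g. /etc/kubernetes/manifests/kube-controller-manager.yaml on kubeadm
spec:
  containers:
  - command:
    - kube-controller-manager
    - --horizontal-pod-autoscaler-sync-period=10s   # default: 15s
    # (keep all of the existing flags)
---
# 2) metrics-server container
args:
- --metric-resolution=15s                           # default: 60s
---
# 3) cluster-autoscaler container
command:
- ./cluster-autoscaler
- --scan-interval=5s                                # default: 10s
```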
