逻辑回归 及 实例

主要参考 《统计学习方法》 《机器学习实战》 机器学习:从编程的角度去理解逻辑回归

逻辑回归,

有一种定义是这样的:逻辑回归其实是一个线性分类器,只是在外面嵌套了一个逻辑函数,主要用于二分类问题。这个定义明确的指明了逻辑回归的特点:

一个线性分类器

外层有一个逻辑函数

我们知道,线性回归的模型是求出输出特征向量 Y 和输入样本矩阵 X 之间的线性关系系数 θ,满足 Y=Xθ。此时我们的 Y 是连续的,所以是回归模型。如果我们想要 Y 是离散的话,怎么办呢?一个可以想到的办法是,我们对于这个 Y 再做一次函数转换,变为 g(Y)。如果我们令 g(Y)的值在某个实数区间的时候是类别 A,在另一个实数区间的时候是类别 B,以此类推,就得到了一个分类模型。如果结果的类别只有两种,那么就是一个二元分类模型了。逻辑回归的出发点就是从这来的。

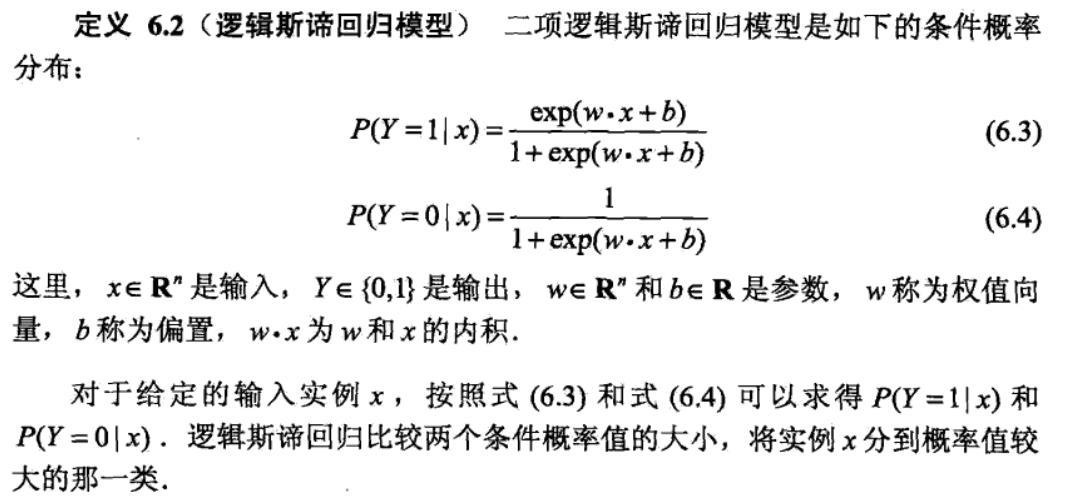

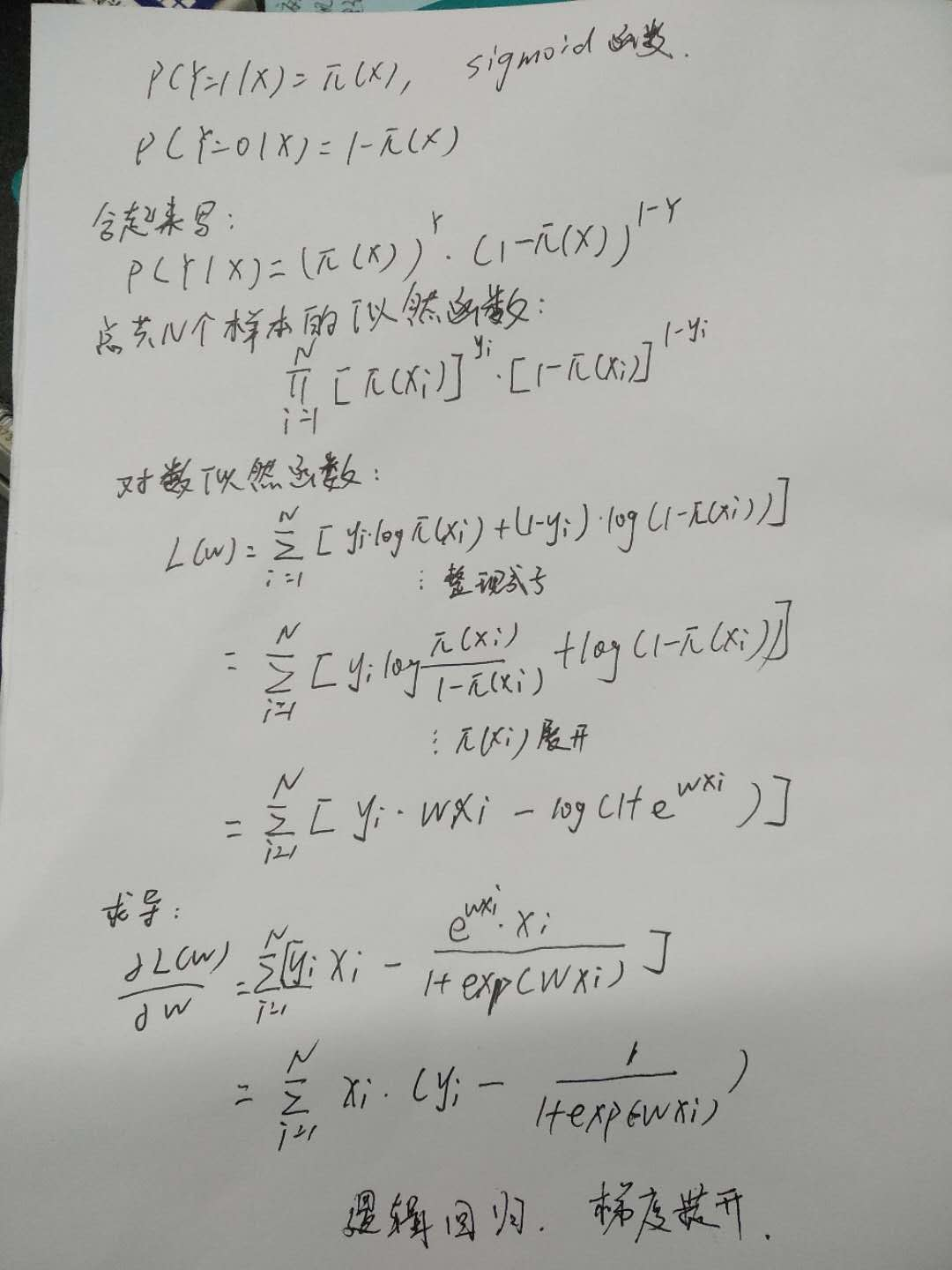

定义:

模型参数估计

用最大似然的方法

获得导数之后,就可以用梯度提升法来 迭代更新参数了。

接下来看下代码部分,所有的代码示例都没有写预测结果,而只是画出分界线。

分界线怎么画?

设定 w0x0+w1x1+w2x2=0 解出x2和x1的关系,就可以画图了,当然等式右边也可换成1。这个分界线主要就是用来看下大概的一个分区。

In [36]:

%matplotlib inline

import numpy as np

from numpy import *

import os

import pandas as pd

import matplotlib.pyplot as plt

普通的梯度上升算法¶

可以看到21-24行的代码,就是上面推导公式,梯度提升迭代更新参数w。

这里要注意到,算法gradAscent里的变量h 和误差error都是向量, 用矩阵的形式把所有的样本都带进去算了,要区分后面的随机梯度的算法。

In [53]:

def loadDataSet():

dataMat = []; labelMat = []

fr = open('testSet.txt')

for line in fr.readlines():

lineArr = line.strip().split()

dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])

labelMat.append(int(lineArr[2]))

return dataMat,labelMat

##逻辑函数

def sigmoid(inX):

return 1.0/(1+np.exp(-inX))

def gradAscent(dataMatIn, classLabels):

dataMatrix = mat(dataMatIn) #convert to NumPy matrix

labelMat = mat(classLabels).transpose() #convert to NumPy matrix

m,n = shape(dataMatrix)

alpha = 0.001

maxCycles = 500

weights = ones((n,1))

for k in range(maxCycles): #heavy on matrix operations

h = sigmoid(dataMatrix*weights) #matrix mult

error = (labelMat - h) #vector subtraction

weights = weights + alpha * dataMatrix.transpose()* error #matrix mult

print("h和error的形式",shape(h),shape(error))

return weights

def plotBestFit(weights):

dataMat,labelMat=loadDataSet()

dataArr = array(dataMat)

n = shape(dataArr)[0]

xcord1 = []; ycord1 = []

xcord2 = []; ycord2 = []

for i in range(n):

if int(labelMat[i])== 1:

xcord1.append(dataArr[i,1]); ycord1.append(dataArr[i,2])

else:

xcord2.append(dataArr[i,1]); ycord2.append(dataArr[i,2])

fig = plt.figure()

ax = fig.add_subplot(111)

ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')

ax.scatter(xcord2, ycord2, s=30, c='green')

x = arange(-3.0, 3.0, 0.1)

y = (-weights[0]-weights[1]*x)/weights[2]+1

ax.plot(x, y)

plt.xlabel('X1'); plt.ylabel('X2');

plt.show()

if __name__ == '__main__':

dataMat, labelMat = loadDataSet()

weights=gradAscent(dataMat,labelMat)

weights=array(weights).ravel()

print(weights)

plotBestFit(weights)

In [54]:

def stocGradAscent0(dataMatrix, classLabels):

m,n = shape(dataMatrix)

alpha = 0.01

weights = ones(n) #initialize to all ones

for i in range(m):

h = sigmoid(sum(dataMatrix[i]*weights))

error = classLabels[i] - h

weights = weights + alpha * error * dataMatrix[i]

print("h和error的形式",h,error)

return weights

if __name__ == '__main__':

dataMat, labelMat = loadDataSet()

weights=stocGradAscent0(array(dataMat),labelMat)

print(weights)

plotBestFit(weights)

In [55]:

def stocGradAscent1(dataMatrix, classLabels, numIter=150):

m,n = shape(dataMatrix)

weights = ones(n) #initialize to all ones

for j in range(numIter):

dataIndex = [i for i in range(m)]

for i in range(m):

alpha = 4/(1.0+j+i)+0.0001 #apha decreases with iteration, does not

randIndex = int(random.uniform(0,len(dataIndex)))#go to 0 because of the constant

h = sigmoid(sum(dataMatrix[randIndex]*weights))

error = classLabels[randIndex] - h

weights = weights + alpha * error * dataMatrix[randIndex]

dataIndex.pop(randIndex)

return weights

if __name__ == '__main__':

dataMat, labelMat = loadDataSet()

weights=stocGradAscent1(array(dataMat),labelMat)

print(weights)

plotBestFit(weights)

使用 sklearn 包中的逻辑回归算法¶

使用sklearn包,用他自己提供的接口,我们获取到了最后的系数,然后画出分界线。

In [50]:

from sklearn.linear_model import LogisticRegression

def sk_lr(X_train,y_train):

model = LogisticRegression()

model.fit(X_train, y_train)

model.score(X_train,y_train)

print('权重',model.coef_)

print(model.intercept_)

# return model.predict(X_train)

return model.coef_,model.intercept_

def plotBestFit1(coef,intercept):

weights=array(coef).ravel()

dataMat,labelMat=loadDataSet()

dataArr = array(dataMat)

n = shape(dataArr)[0]

xcord1 = []; ycord1 = []

xcord2 = []; ycord2 = []

for i in range(n):

if int(labelMat[i])== 1:

xcord1.append(dataArr[i,1]); ycord1.append(dataArr[i,2])

else:

xcord2.append(dataArr[i,1]); ycord2.append(dataArr[i,2])

fig = plt.figure()

ax = fig.add_subplot(111)

ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')

ax.scatter(xcord2, ycord2, s=30, c='green')

x = arange(-3.0, 3.0, 0.1)

y = (-weights[0]-weights[1]*x)/weights[2]+intercept+1

ax.plot(x, y)

plt.xlabel('X1'); plt.ylabel('X2');

plt.show()

if __name__=='__main__':

dataMat,labelMat=loadDataSet()

coef,intercept=sk_lr(dataMat,labelMat)

plotBestFit1(coef,intercept)

浙公网安备 33010602011771号

浙公网安备 33010602011771号