"""

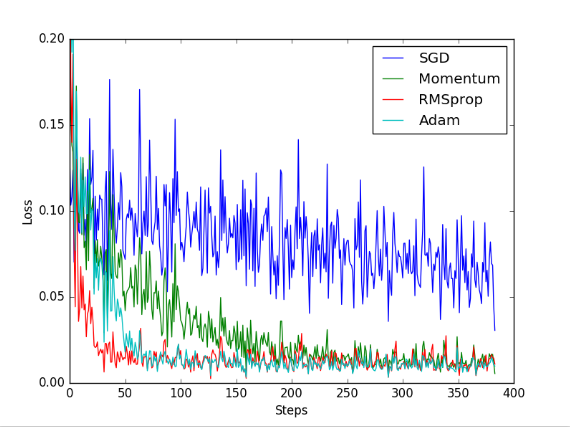

常用的网络优化器有四种:SGD, Momentum, RMSprop, Adam

通过网络的运行结果可以知道SGD的收敛效果最差

"""

import torch

import torch.utils.data as Data

import torch.nn.functional as F

from torch.autograd import Variable

import matplotlib.pyplot as plt

# 超参数

LR = 0.01

BATCH_SIZE = 32

EPOCH = 12

# 数据

x = torch.unsqueeze(torch.linspace(-1, 1, 1000), dim=1)

y = x.pow(2) + 0.1*torch.normal(torch.zeros(*x.size()))

# 画出散点图

plt.scatter(x.numpy(), y.numpy())

plt.show()

# 将数据放入torch数据库

torch_dataset = Data.TensorDataset(data_tensor=x, target_tensor=y)

loader = Data.DataLoader(dataset=torch_dataset, batch_size=BATCH_SIZE, shuffle=True, num_workers=2,)

# 搭建网络

class Net(torch.nn.Module):

def __init__(self):

super(Net, self).__init__()

self.hidden = torch.nn.Linear(1, 20)

self.predict = torch.nn.Linear(20, 1)

def forward(self, x):

x = F.relu(self.hidden(x))

x = self.predict(x)

return x

# 定义四个网络放入一个列表中

net_SGD = Net()

net_Momentum = Net()

net_RMSprop = Net()

net_Adam = Net()

nets = [net_SGD, net_Momentum, net_RMSprop, net_Adam]

# 经过不同的优化函数优化并放入列表

opt_SGD = torch.optim.SGD(net_SGD.parameters(), lr=LR)

opt_Momentum = torch.optim.SGD(net_Momentum.parameters(), lr=LR, momentum=0.8)

opt_RMSprop = torch.optim.RMSprop(net_RMSprop.parameters(), lr=LR, alpha=0.9)

opt_Adam = torch.optim.Adam(net_Adam.parameters(), lr=LR, betas=(0.9, 0.99))

optimizers = [opt_SGD, opt_Momentum, opt_RMSprop, opt_Adam]

# 计算损失值

loss_func = torch.nn.MSELoss()

# 用于记录四个网络各自的损失值

losses_his = [[], [], [], []]

# 训练

for epoch in range(EPOCH):

print('Epoch: ', epoch)

for step, (batch_x, batch_y) in enumerate(loader):

# 之前的数据格式为tensor格式,不能用于训练网络,必须转换为variable

b_x = Variable(batch_x)

b_y = Variable(batch_y)

for net, opt, l_his in zip(nets, optimizers, losses_his):

output = net(b_x) # b_x经过各自的网络获得预测值

loss = loss_func(output, b_y) # 计算各自的损失值

opt.zero_grad() # 清除上一步的梯度值

loss.backward() # 反向传播,计算梯度值

opt.step() # 优化各个节点的参数值

l_his.append(loss.data[0]) # 记录损失值

labels = ['SGD', 'Momentum', 'RMSprop', 'Adam']

for i, l_his in enumerate(losses_his):

plt.plot(l_his, label=labels[i])

plt.legend(loc='best')

plt.xlabel('Steps')

plt.ylabel('Loss')

plt.ylim((0, 0.2))

plt.show()

浙公网安备 33010602011771号

浙公网安备 33010602011771号