Setting up Kubernetes on Ubuntu

System environment

The servers have Internet access

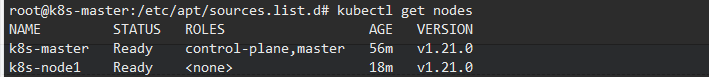

Kubernetes version: v1.21.0

Ubuntu 20.04.1 LTS

Deployment plan

| IP address | Hostname |
| --- | --- |
| 192.168.137.2 | k8s-master |
| 192.168.137.3 | k8s-node1 |

Preparation (master/node)

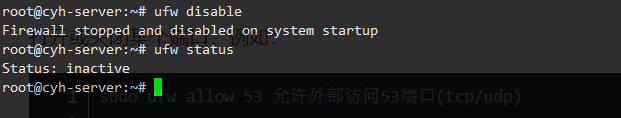

Disable the firewall

# disable the firewall

ufw disable

# check the status

ufw status

Disable swap

# temporary (until the next reboot)

swapoff -a

# permanent: edit /etc/fstab and comment out the swap line
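The permanent change can also be scripted instead of edited by hand. The following is a minimal sketch, assuming GNU sed and an fstab in which the swap entry has "swap" as a whitespace-delimited field; the function name comment_out_swap is hypothetical and not part of the original steps.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out every active swap entry in an fstab-style file.
# A backup copy is written next to the file with a .bak suffix.
comment_out_swap() {
  local fstab="$1"
  # Skip lines that are already comments; prefix the rest with '#'
  # when they contain "swap" as a whitespace-delimited field.
  sed -i.bak -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$fstab"
}

# Usage (as root):
#   swapoff -a
#   comment_out_swap /etc/fstab
```

Running it a second time changes nothing, because lines that are already commented out are skipped.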

Set the correct time zone

# set the time zone to Shanghai

timedatectl set-timezone Asia/Shanghai

# restart rsyslog so that system logs use the new time zone

systemctl restart rsyslog

Configure hosts

If SELinux is present, disable it.

# edit /etc/hosts and add the following lines

vi /etc/hosts

# k8s

192.168.137.2 k8s-master

192.168.137.3 k8s-node1
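When the same preparation is repeated on several machines, appending the entries from a script avoids duplicates. This is only a sketch under the assumption that each hostname should appear once in the file; the helper name add_host_entry is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is already mapped.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # A hostname counts as present when it appears after whitespace
  # and is followed by whitespace or the end of the line.
  if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage (as root), with the addresses from the deployment plan:
#   add_host_entry /etc/hosts 192.168.137.2 k8s-master
#   add_host_entry /etc/hosts 192.168.137.3 k8s-node1
```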

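Change the hostname

On the master node

# takes effect immediately, but is lost on reboot

hostname k8s-master

# persists across reboots

hostnamectl set-hostname k8s-master

# show the current hostname

hostname

On the worker node

# takes effect immediately, but is lost on reboot

hostname k8s-node1

# persists across reboots

hostnamectl set-hostname k8s-node1

# show the current hostname

hostname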

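Network settings

Gemfield's K8s cluster will use the Calico network plugin, and Calico requires the rp_filter kernel parameter to be 0 or 1. The default on Ubuntu 20.04 is 2, so the rp_filter values in /etc/sysctl.d/10-network-security.conf also need to be changed to 1.

# create the bridge configuration file k8s.conf

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

# set the rp_filter values

vi /etc/sysctl.d/10-network-security.conf

# change the following two parameters from 2 to 1, so that the lines read

net.ipv4.conf.default.rp_filter=1

net.ipv4.conf.all.rp_filter=1

# reload all sysctl configuration files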

sysctl --system
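The rp_filter edit can be done non-interactively as well. The sketch below assumes GNU sed and that both keys already exist in the file (possibly commented out); set_rp_filter is a hypothetical name used only for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set net.ipv4.conf.{default,all}.rp_filter to VALUE in a sysctl file.
# Lines for these two keys are rewritten whether or not they start with '#'.
set_rp_filter() {
  local conf="$1" value="$2"
  sed -i.bak -E \
    "s/^#?[[:space:]]*(net\.ipv4\.conf\.(default|all)\.rp_filter)[[:space:]]*=.*/\1=${value}/" \
    "$conf"
}

# Usage (as root):
#   set_rp_filter /etc/sysctl.d/10-network-security.conf 1
#   sysctl --system
#   sysctl net.ipv4.conf.all.rp_filter   # check the value actually in effect
```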

Install Docker

Install it if it is not installed yet; otherwise skip this step. The installation procedure is omitted here.

Installation on the master

Install the required packages

sudo apt-get update

sudo apt-get install -y ca-certificates curl software-properties-common apt-transport-https

Add the package signing key

wget https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg

apt-key add apt-key.gpg

Add the package source

sudo tee /etc/apt/sources.list.d/kubernetes.list <<EOF
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF

apt-get update

Install

apt-get install -y kubelet kubeadm kubectl

apt-mark hold kubelet kubeadm kubectl
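The command above installs whatever version the repository currently offers, while the rest of these notes assume v1.21.0. One possible way to keep the three packages on the same release is to pin them. This is a sketch only: the helper pinned_packages is hypothetical, and the package version string 1.21.0-00 is an assumption that should be checked against the output of apt-cache madison kubeadm.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "pkg=version" arguments for the three Kubernetes packages.
pinned_packages() {
  local version="$1" pkg
  for pkg in kubelet kubeadm kubectl; do
    printf '%s=%s ' "$pkg" "$version"
  done
}

# Usage (as root); 1.21.0-00 is an assumed version string, verify it first:
#   apt-cache madison kubeadm
#   apt-get install -y $(pinned_packages 1.21.0-00)
#   apt-mark hold kubelet kubeadm kubectl
```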

After the installation succeeds, enable Docker at boot; otherwise the commands below print a warning.

systemctl enable docker.service

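init, option 1

This is one way to get the images, but because versions differ, the mirrors in China may cause various problems. In that case use option 2.

kubeadm init --pod-network-cidr 172.16.0.0/16 --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers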

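init, option 2: first fetch the images with the script below, or handle them individually

Before using the script, update the images variable to match the version being installed.

Command that lists the required images

kubeadm config images list

Pull the images from a mirror in China and adjust images accordingly. The example script below is for v1.21.0.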

#!/bin/bash

# download k8s 1.21.0 images

# get image-list by 'kubeadm config images list --kubernetes-version=v1.21.0'

# registry.cn-hangzhou.aliyuncs.com/google_containers == k8s.gcr.io

images=(

kube-apiserver:v1.21.0

kube-controller-manager:v1.21.0

kube-scheduler:v1.21.0

kube-proxy:v1.21.0

pause:3.4.1

etcd:3.4.13-0

coredns:v1.8.0

)

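for imageName in "${images[@]}"; do

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName k8s.gcr.io/$imageName

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName

done

# kubeadm v1.21.0 looks for CoreDNS at k8s.gcr.io/coredns/coredns:v1.8.0, so add that tag too

docker tag k8s.gcr.io/coredns:v1.8.0 k8s.gcr.io/coredns/coredns:v1.8.0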

If some of the images fail here, look for them on Docker Hub, pull them manually, and retag them following the same rule.
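The retagging rule can also be derived from the names kubeadm itself reports, so that nested names such as k8s.gcr.io/coredns/coredns:v1.8.0 get the exact tag kubeadm expects. The sketch below assumes that the mirror stores every image under a flat name with the same tag, which may not hold for every image; MIRROR, mirror_ref and pull_via_mirror are names invented for this example.

```shell
#!/usr/bin/env bash
# Assumed mirror prefix, taken from the script above.
MIRROR="registry.cn-hangzhou.aliyuncs.com/google_containers"

# Map an upstream reference (e.g. k8s.gcr.io/coredns/coredns:v1.8.0)
# to the flat name assumed on the mirror (e.g. $MIRROR/coredns:v1.8.0).
mirror_ref() {
  local upstream="$1"
  # ${upstream##*/} keeps only the part after the last '/'.
  printf '%s/%s\n' "$MIRROR" "${upstream##*/}"
}

# Pull every image kubeadm asks for from the mirror and tag it with the upstream name.
# Images that fail to pull are reported and skipped so they can be handled manually.
pull_via_mirror() {
  local version="$1" upstream
  kubeadm config images list --kubernetes-version="$version" | while read -r upstream; do
    if docker pull "$(mirror_ref "$upstream")" < /dev/null; then
      docker tag "$(mirror_ref "$upstream")" "$upstream"
    else
      echo "pull failed, handle manually: $upstream" >&2
    fi
  done
}

# Usage: pull_via_mirror v1.21.0
```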

Then run

kubeadm init --pod-network-cidr 172.16.0.0/16

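Parameters

--pod-network-cidr 172.16.0.0/16 sets the CIDR (Classless Inter-Domain Routing) block, that is, the IP address range available to the pod network

--image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers selects the container registry from which the control-plane images are pulled (the default is "k8s.gcr.io", which probably cannot be reached without special network access)

Errors and warnings you may see here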

[init] Using Kubernetes version: v1.16.0

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR NumCPU]: the number of available CPUs 1 is less than the required 2

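Error: Kubernetes requires at least 2 CPU cores and 2 GB of memory.

The system here runs in a virtual machine, and the virtual machine defaults to one core. Change its configuration to 2 cores and restart the system.

Warning: "cgroupfs" was detected as the Docker cgroup driver. The recommended driver is "systemd".

Change the driver

vim /etc/docker/daemon.json

# add these properties to the top-level JSON object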

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2",

"storage-opts": [

"overlay2.override_kernel_check=true"

]
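For a machine that has no existing daemon.json, the whole file can be written in one step. The sketch below makes several assumptions: the file holds no other settings worth keeping (it is overwritten), the storage-opts entry is left out on the assumption that the default kernel check is acceptable, and Docker has to be restarted for the new cgroup driver to take effect. The function name write_docker_daemon_json is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a complete daemon.json that selects the systemd cgroup driver.
# WARNING: this overwrites the target file.
write_docker_daemon_json() {
  local target="$1"
  cat > "$target" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2"
}
EOF
}

# Usage (as root):
#   write_docker_daemon_json /etc/docker/daemon.json
#   systemctl daemon-reload && systemctl restart docker
#   docker info | grep -i 'cgroup driver'   # should now report systemd
```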

Images cannot be pulled

[init] Using Kubernetes version: v1.21.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-apiserver:v1.21.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-controller-manager:v1.21.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-scheduler:v1.21.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-proxy:v1.21.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/pause:3.4.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/etcd:3.4.13-0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/coredns/coredns:v1.8.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers) , error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

Check whether any of the required images is missing

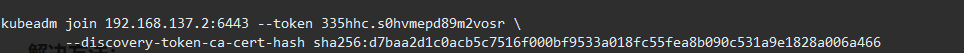

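After the init command succeeds, it prints a kubeadm join command. Keep it, because it is needed later when adding the worker node.

Also copy the admin kubeconfig for your regular (non-root) user, so that this user can run kubectl commands:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config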

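Install the Calico plugin

Download https://docs.projectcalico.org/v3.11/manifests/calico.yaml

Find CALICO_IPV4POOL_CIDR in the YAML file and set its value to the address range passed to --pod-network-cidr (here the original 192.168.0.0/16, which would overlap the nodes' 192.168.137.x addresses, was changed to 172.16.0.0/16).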

kubectl apply -f calico.yaml
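The CIDR change in calico.yaml can be made with sed before the manifest is applied. This is a sketch that assumes GNU sed and a manifest in which the quoted value sits on the line directly after "- name: CALICO_IPV4POOL_CIDR"; if the entry is commented out in the manifest, it still has to be uncommented by hand. The helper name set_calico_pool_cidr is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace the value that follows CALICO_IPV4POOL_CIDR in a Calico manifest.
set_calico_pool_cidr() {
  local manifest="$1" cidr="$2"
  # On a line naming CALICO_IPV4POOL_CIDR, move to the next line (n)
  # and replace the quoted value there. '|' is the delimiter because the CIDR contains '/'.
  sed -i.bak -E \
    "/name:[[:space:]]*CALICO_IPV4POOL_CIDR/{n;s|value:[[:space:]]*\"[^\"]*\"|value: \"${cidr}\"|;}" \
    "$manifest"
}

# Usage:
#   set_calico_pool_cidr calico.yaml 172.16.0.0/16
#   grep -A1 CALICO_IPV4POOL_CIDR calico.yaml   # inspect the result before applying
```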

The master node is now fully installed.

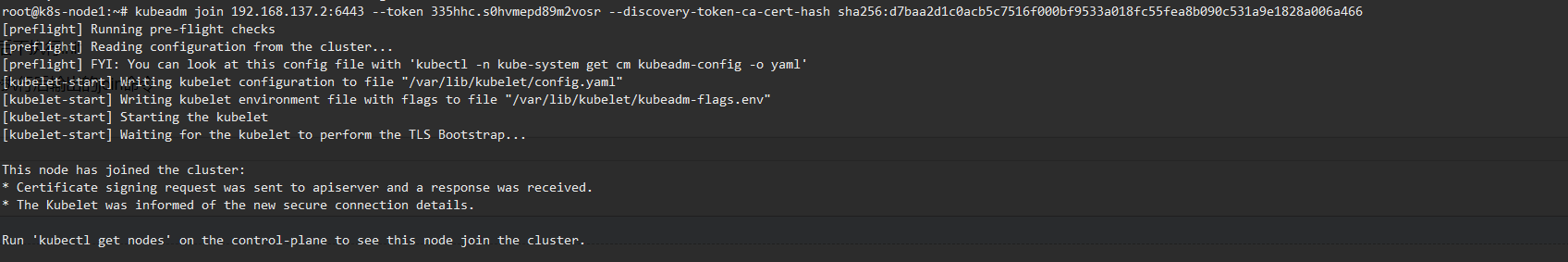

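Worker node

Follow the same steps as for the master node, but do not run init (Calico is needed on the worker as well).

Finally, run the join command that init printed on the master node.

If you have lost the join command, create a new one with the following command (--ttl 0 makes the token never expire):

kubeadm token create --print-join-command --ttl 0

Finally, list the nodes from the master node: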

kubectl get nodes
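A newly joined node usually shows NotReady until the network plugin is running on it. To spot such nodes quickly, the output can be filtered. This is a small sketch that assumes the default column layout of kubectl get nodes (NAME first, STATUS second); the function name not_ready_nodes is made up for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes --no-headers` output on stdin
# and print the names of nodes whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage (on the master node):
#   kubectl get nodes --no-headers | not_ready_nodes
```

An empty result means every node reports Ready.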