Implementing Regression with PyTorch


Regression

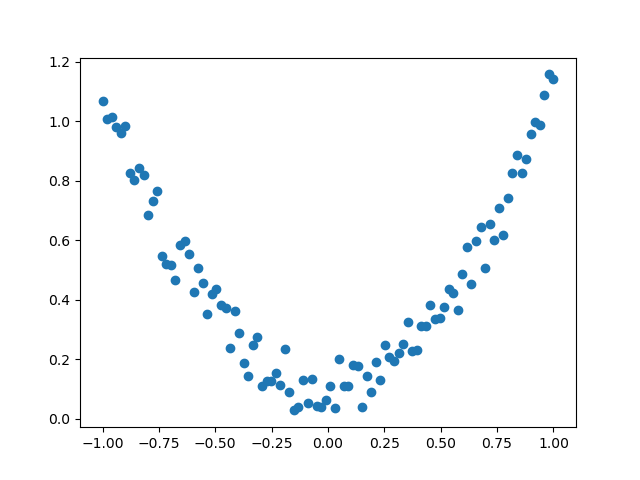

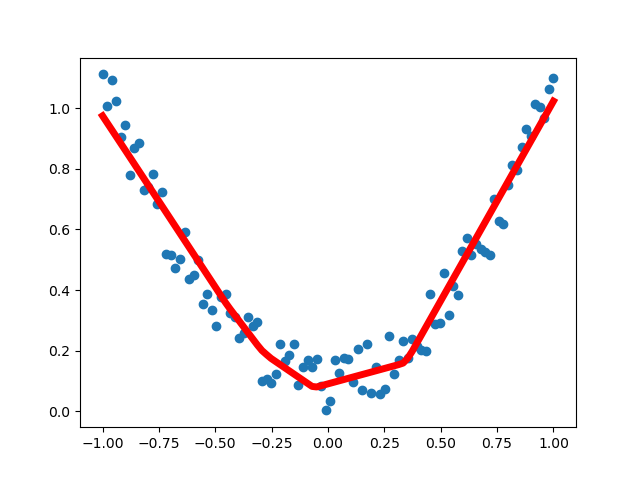

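Here, regression means approximating the trend of a set of discrete points with a single curve.

The upper figure shows the discrete points, and the red line in the lower figure is the curve that follows them. Finding this curve is regression.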
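To make "approximate the points" precise, one common criterion, and the one the PyTorch program in this post minimizes through `torch.nn.MSELoss`, is the mean squared error between the curve and the points. Here $f_\theta$ stands for the curve (in this post, a small neural network with parameters $\theta$) and $(x_i, y_i)$, $i = 1, \dots, N$, are the discrete points; these symbols are introduced only for illustration:

$$
\hat{\theta} = \arg\min_{\theta} \; \frac{1}{N} \sum_{i=1}^{N} \bigl(f_\theta(x_i) - y_i\bigr)^2
$$

Training repeatedly adjusts $\theta$ to reduce this quantity, which is the `Loss` value printed on the plot during training.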

PyTorch implementation

import torch

from torch.autograd import Variable

import torch.nn.functional as F

import matplotlib.pyplot as plt

import numpy

import os

os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)

y = x.pow(2) + torch.rand(x.size()) * 0.2

x = Variable(x)

y = Variable(y)

# plt.scatter(x.data.numpy(), y.data.numpy())

# plt.show()

class Net(torch.nn.Module):

def __init__(self, n_input, n_hidden, n_output):

super(Net, self).__init__()

self.l1 = torch.nn.Linear(n_input, n_hidden)

self.l2 = torch.nn.Linear(n_hidden, n_output)

def forward(self, x):

x = F.relu(self.l1(x))

x = self.l2(x)

return x

net = Net(1, 10, 1)

plt.ion()

plt.show()

optimizer = torch.optim.SGD(net.parameters(), lr=0.2)

loss_func = torch.nn.MSELoss()

for t in range(2000):

prediction = net(x) # result from neural network

loss = loss_func(prediction, y)

optimizer.zero_grad()

loss.backward()

optimizer.step()

if t % 5 == 0:

plt.cla()

plt.scatter(x.data.numpy(), y.data.numpy())

plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5) # color of line = r,width of line = 5

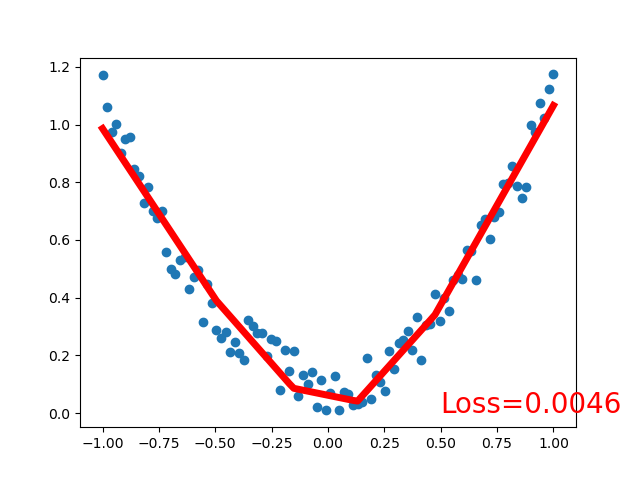

plt.text(0.5, 0, 'Loss=%.4f' % loss.data, fontdict={'size': 20, 'color': 'red'})

plt.pause(0.1)

plt.ioff()

plt.show()

输出结果:

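In the code, the `Net` class is the neural network. `__init__` defines the structure of each layer, and `forward` defines how data flows through the network; the return value of `forward` is the output at the end of the network.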
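To make the `__init__`/`forward` split concrete, here is a small sketch (not part of the original program) showing that calling the module runs `forward`, what shape it returns for the post's input, and that the same layers can be chained with `torch.nn.Sequential`. The seed and the variable names `out` and `seq` are illustrative choices.

```python
import torch
import torch.nn.functional as F


class Net(torch.nn.Module):
    def __init__(self, n_input, n_hidden, n_output):
        super().__init__()
        # Layers created here are registered as the model's parameters
        self.l1 = torch.nn.Linear(n_input, n_hidden)
        self.l2 = torch.nn.Linear(n_hidden, n_output)

    def forward(self, x):
        # Data flows: input -> l1 -> ReLU -> l2 -> output
        return self.l2(F.relu(self.l1(x)))


torch.manual_seed(0)
net = Net(1, 10, 1)
x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)  # shape (100, 1)

# Calling the module (net(x)) invokes forward() under the hood
out = net(x)
print(out.shape)  # torch.Size([100, 1])

# The same data flow expressed with nn.Sequential, reusing net's own layers
seq = torch.nn.Sequential(net.l1, torch.nn.ReLU(), net.l2)
print(torch.allclose(out, seq(x)))  # True

# l1: 10x1 weights + 10 biases, l2: 1x10 weights + 1 bias
print(sum(p.numel() for p in net.parameters()))  # 31
```

Writing a custom class is useful when `forward` needs logic that a simple chain of layers cannot express; for a plain stack like this one, `Sequential` is an equivalent shorthand.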

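`Net` is instantiated before training. At each training step, the old gradients are cleared (`optimizer.zero_grad()`), the error is backpropagated (`loss.backward()`), and the optimizer updates the parameters (`optimizer.step()`).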
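For plain SGD (no momentum or weight decay, which matches `torch.optim.SGD(net.parameters(), lr=0.2)` above), `optimizer.step()` amounts to moving each parameter against its gradient: $p \leftarrow p - \text{lr} \cdot \nabla_p \text{loss}$. The sketch below checks this by taking one step with the optimizer and one step by hand from identical starting weights. It uses an `nn.Sequential` model with the same layer sizes as `Net(1, 10, 1)` for brevity; the names `model_a` and `model_b` are hypothetical.

```python
import copy

import torch

torch.manual_seed(0)
x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)
y = x.pow(2) + torch.rand(x.size()) * 0.2

# Same architecture as Net(1, 10, 1): Linear -> ReLU -> Linear
model_a = torch.nn.Sequential(torch.nn.Linear(1, 10), torch.nn.ReLU(), torch.nn.Linear(10, 1))
model_b = copy.deepcopy(model_a)  # identical starting weights
loss_func = torch.nn.MSELoss()
lr = 0.2

# (a) One step with the built-in optimizer, as in the training loop
opt = torch.optim.SGD(model_a.parameters(), lr=lr)
opt.zero_grad()
loss_func(model_a(x), y).backward()
opt.step()

# (b) The same step by hand: p <- p - lr * grad
model_b.zero_grad()
loss_func(model_b(x), y).backward()
with torch.no_grad():  # parameter updates must not be tracked by autograd
    for p in model_b.parameters():
        p -= lr * p.grad

same = all(torch.allclose(pa, pb) for pa, pb in zip(model_a.parameters(), model_b.parameters()))
print(same)  # True
```

Clearing the gradients first matters because `backward()` accumulates into `.grad` rather than overwriting it.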

浙公网安备 33010602011771号
