第二集:Hodoop介绍及集群搭建

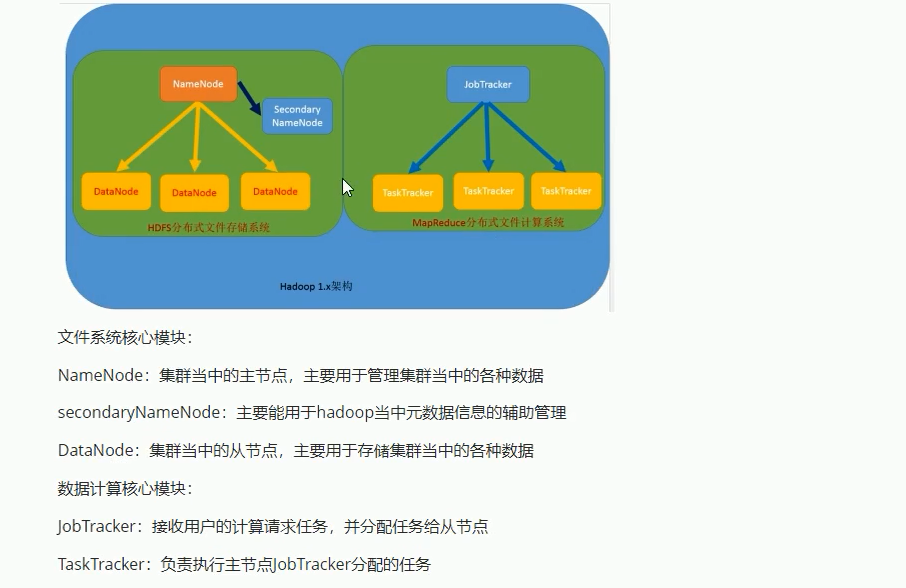

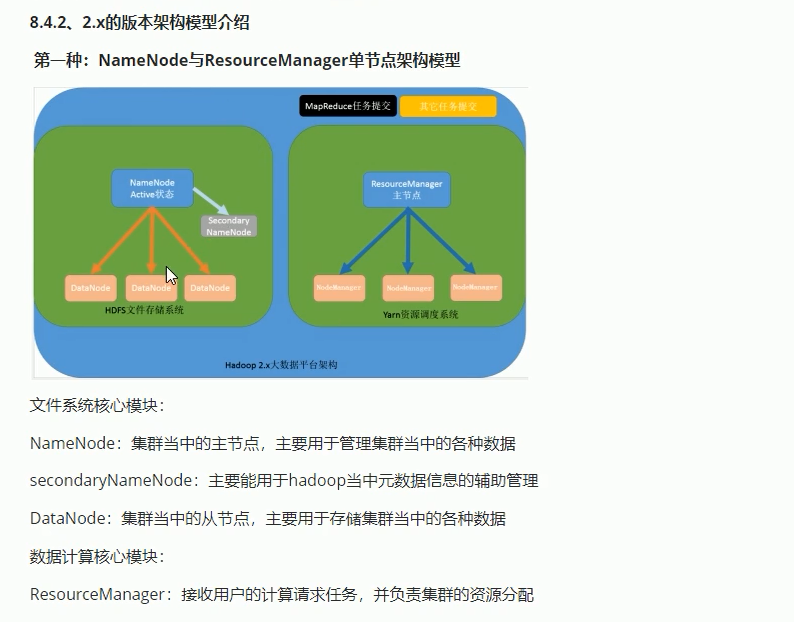

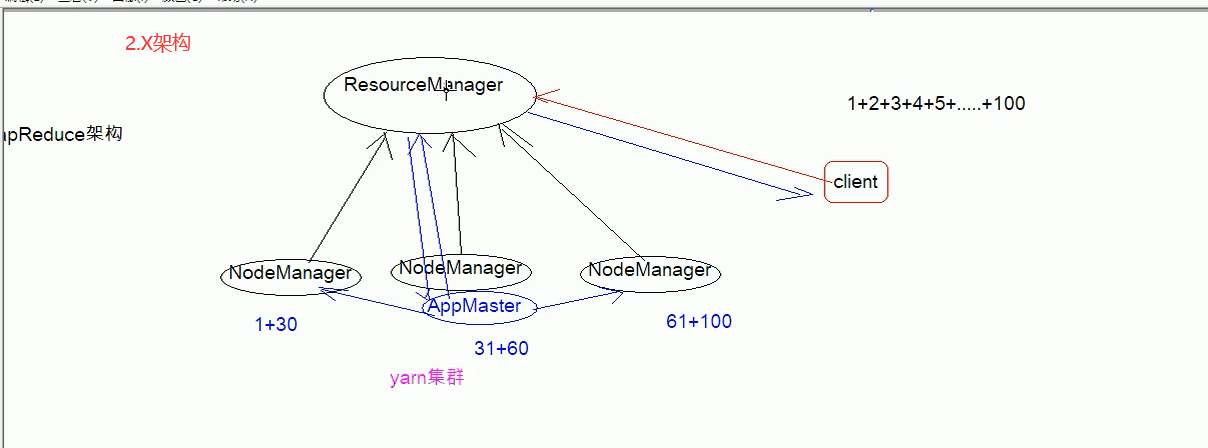

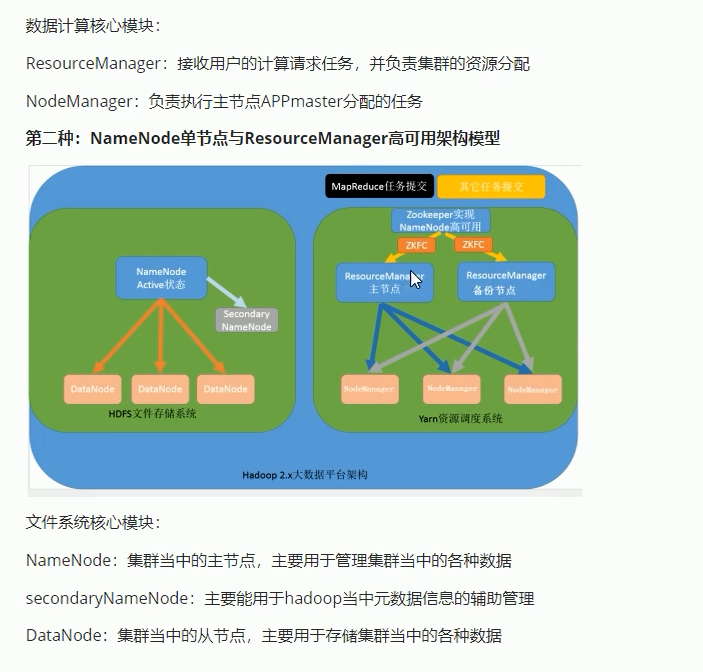

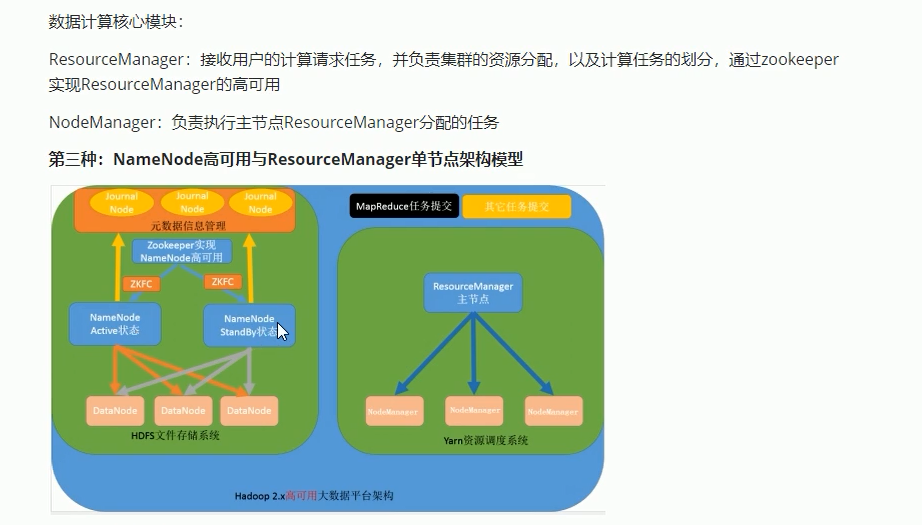

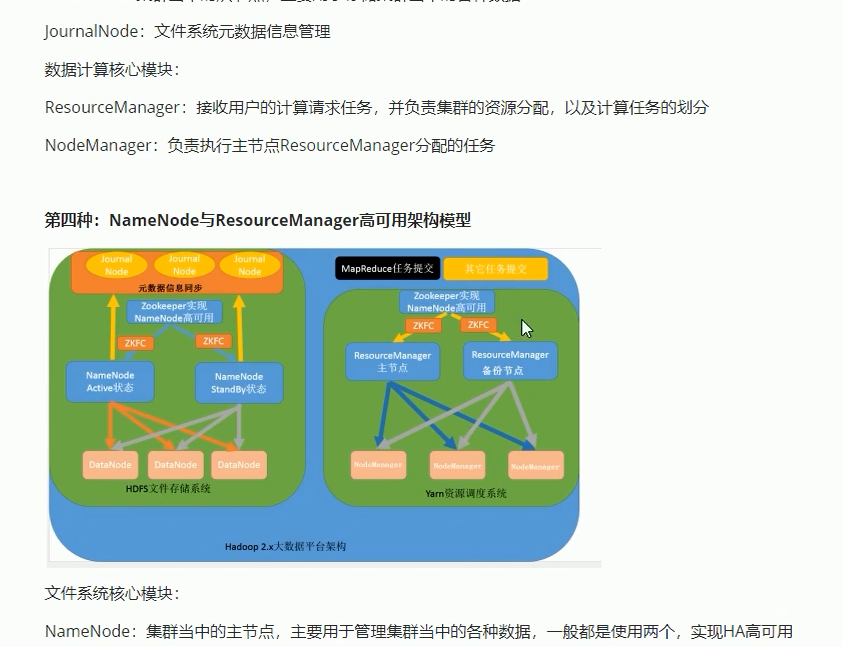

16-hadoop的架构

----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

==========================================================================================================

17-hadoop的安装-准备工作

利用NodePad++的FtpNpp插件更改下面6个配置文件:

=======================================================================================================================

18-hadoop的安装-配置文件修改

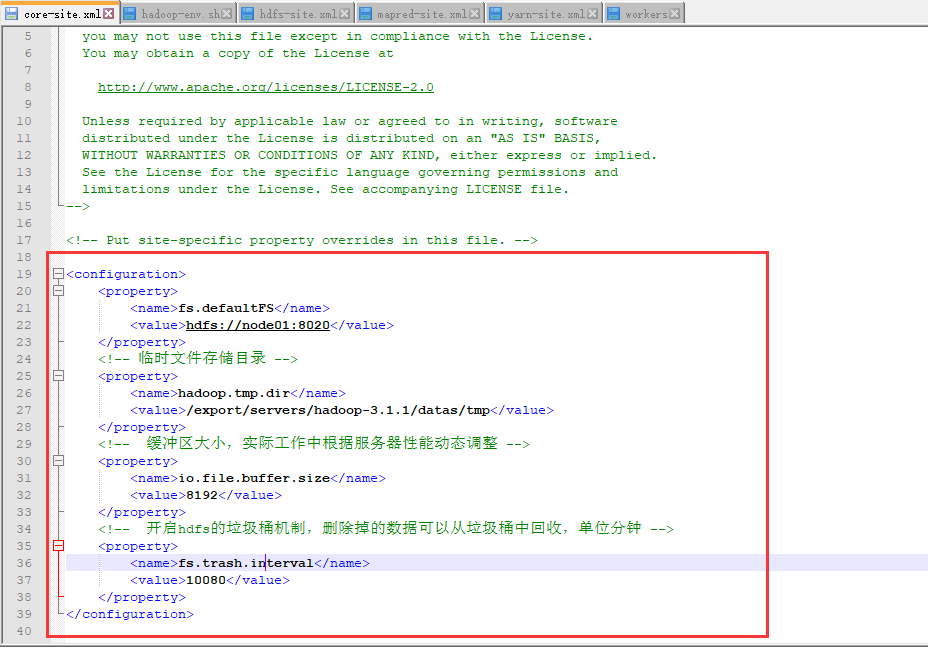

core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://node01:8020</value>

</property>

<!-- 临时文件存储目录 -->

<property>

<name>hadoop.tmp.dir</name>

<value>/export/servers/hadoop-3.1.1/datas/tmp</value>

</property>

<!-- 缓冲区大小,实际工作中根据服务器性能动态调整 -->

<property>

<name>io.file.buffer.size</name>

<value>8192</value>

</property>

<!-- 开启hdfs的垃圾桶机制,删除掉的数据可以从垃圾桶中回收,单位分钟 -->

<property>

<name>fs.trash.interval</name>

<value>10080</value>

</property>

</configuration>

-----------------------------------------------------------------------------------------------------------------------------------------------------------

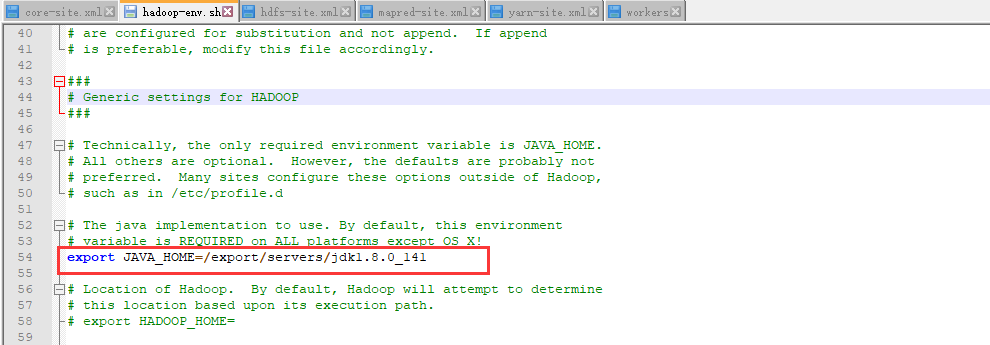

hadoop-env.sh

export JAVA_HOME=/export/servers/jdk1.8.0_141

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

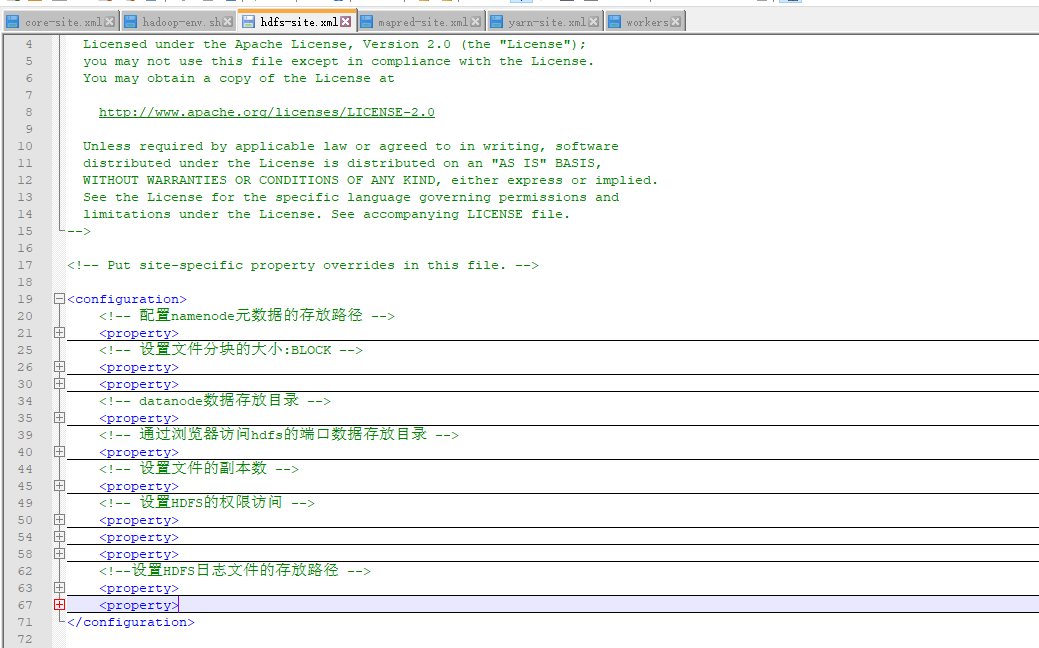

hdfs-site.xml

<configuration>

<!-- 配置namenode元数据的存放路径 -->

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///export/servers/hadoop-3.1.1/datas/namenode/namenodedatas</value>

</property>

<!-- 设置文件分块的大小:BLOCK -->

<property>

<name>dfs.blocksize</name>

<value>134217728</value>

</property>

<property>

<name>dfs.namenode.handler.count</name>

<value>10</value>

</property>

<!-- datanode数据存放目录 -->

<property>

<name>dfs.datanode.data.dir</name>

<value>file:///export/servers/hadoop-3.1.1/datas/datanode/datanodeDatas</value>

</property>

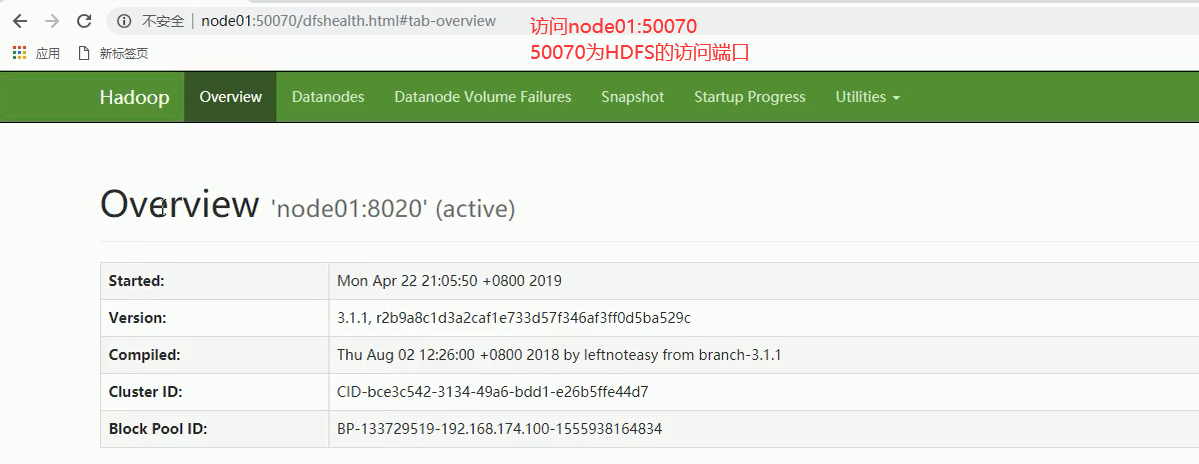

<!-- 通过浏览器访问hdfs的端口数据存放目录 -->

<property>

<name>dfs.namenode.http-address</name>

<value>node01:50070</value>

</property>

<!-- 设置文件的副本数 -->

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<!-- 设置HDFS的权限访问 -->

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

<property>

<name>dfs.namenode.checkpoint.edits.dir</name>

<value>file:///export/servers/hadoop-3.1.1/datas/dfs/nn/snn/edits</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>node01.hadoop.com:50090</value>

</property>

<!--设置HDFS日志文件的存放路径 -->

<property>

<name>dfs.namenode.edits.dir</name>

<value>file:///export/servers/hadoop-3.1.1/datas/dfs/nn/edits</value>

</property>

<property>

<name>dfs.namenode.checkpoint.dir</name>

<value>file:///export/servers/hadoop-3.1.1/datas/dfs/snn/name</value>

</property>

</configuration>

----------------------------------------------------------------------------------------------------------------------------------------------

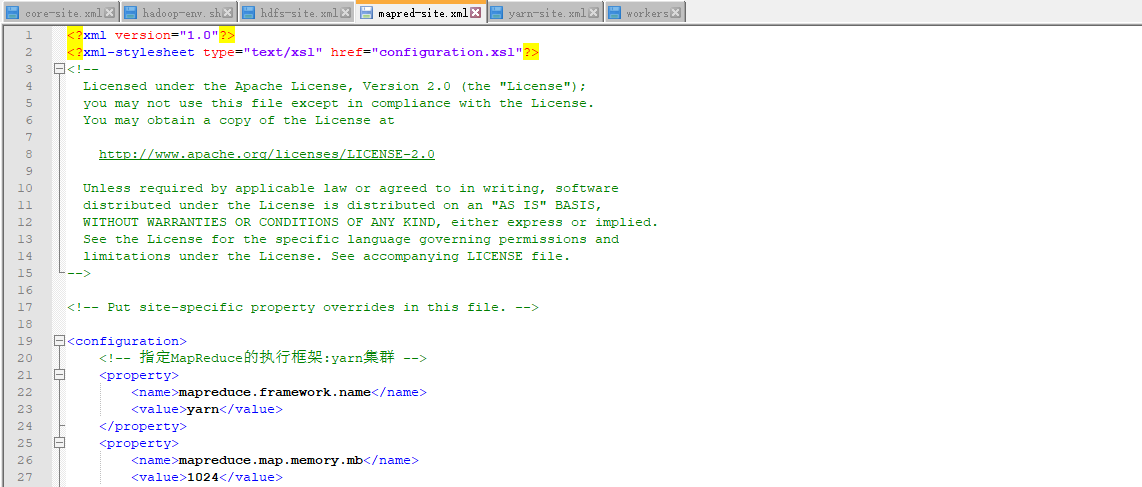

mapred-site.xml

<configuration>

<!-- 指定MapReduce的执行框架:yarn集群 -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.map.memory.mb</name>

<value>1024</value>

</property>

<property>

<name>mapreduce.map.java.opts</name>

<value>-Xmx512M</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>1024</value>

</property>

<property>

<name>mapreduce.reduce.java.opts</name>

<value>-Xmx512M</value>

</property>

<property>

<name>mapreduce.task.io.sort.mb</name>

<value>256</value>

</property>

<property>

<name>mapreduce.task.io.sort.factor</name>

<value>100</value>

</property>

<property>

<name>mapreduce.reduce.shuffle.parallelcopies</name>

<value>25</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>node01.hadoop.com:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>node01.hadoop.com:19888</value>

</property>

<property>

<name>mapreduce.jobhistory.intermediate-done-dir</name>

<value>/export/servers/hadoop-3.1.1/datas/jobhsitory/intermediateDoneDatas</value>

</property>

<property>

<name>mapreduce.jobhistory.done-dir</name>

<value>/export/servers/hadoop-3.1.1/datas/jobhsitory/DoneDatas</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=/export/servers/hadoop-3.1.1</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=/export/servers/hadoop-3.1.1/</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=/export/servers/hadoop-3.1.1</value>

</property>

</configuration>

--------------------------------------------------------------------------------------------------------------------------------------------------

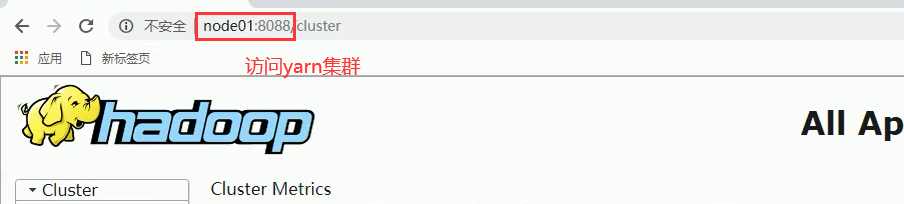

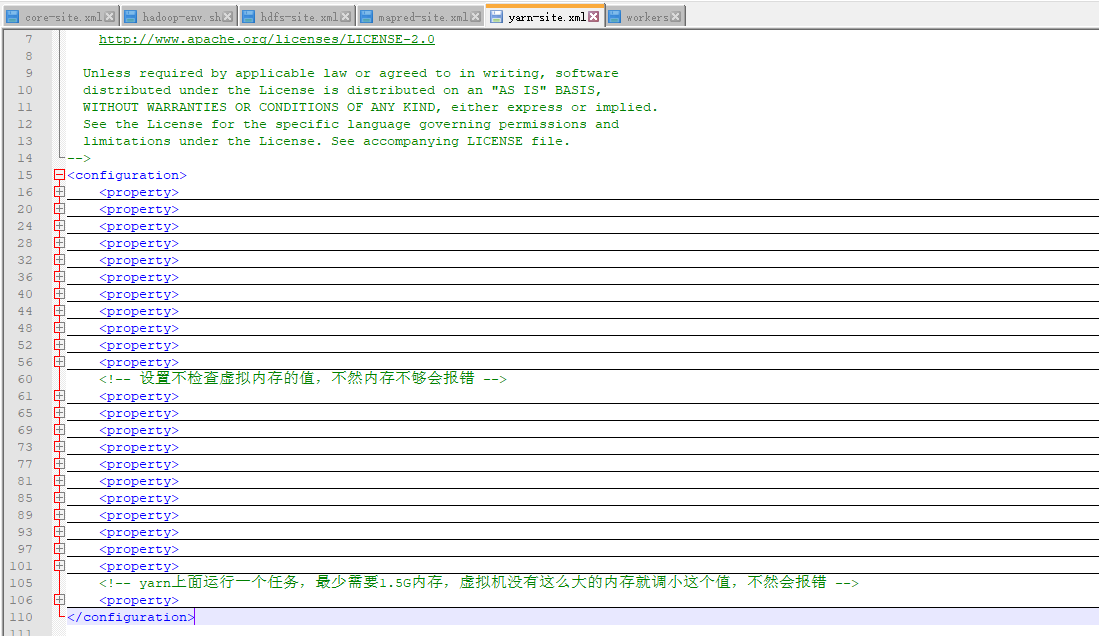

yarn-site.xml(主要是来配置yarn集群的)

<configuration>

<property>

<name>dfs.namenode.handler.count</name>

<value>100</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>node01:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>node01:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>node01:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>node01:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>node01:8088</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>node01</value>

</property>

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>1024</value>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>2048</value>

</property>

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>2.1</value>

</property>

<!-- 设置不检查虚拟内存的值,不然内存不够会报错 -->

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>1024</value>

</property>

<property>

<name>yarn.nodemanager.resource.detect-hardware-capabilities</name>

<value>true</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>file:///export/servers/hadoop-3.1.1/datas/nodemanager/nodemanagerDatas</value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>file:///export/servers/hadoop-3.1.1/datas/nodemanager/nodemanagerLogs</value>

</property>

<property>

<name>yarn.nodemanager.log.retain-seconds</name>

<value>10800</value>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/export/servers/hadoop-3.1.1/datas/remoteAppLog/remoteAppLogs</value>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir-suffix</name>

<value>logs</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>18144000</value>

</property>

<property>

<name>yarn.log-aggregation.retain-check-interval-seconds</name>

<value>86400</value>

</property>

<!-- yarn上面运行一个任务,最少需要1.5G内存,虚拟机没有这么大的内存就调小这个值,不然会报错 -->

<property>

<name>yarn.app.mapreduce.am.resource.mb</name>

<value>1024</value>

</property>

</configuration>

----------------------------------------------------------------------------------------------------------------------------------------------------------------

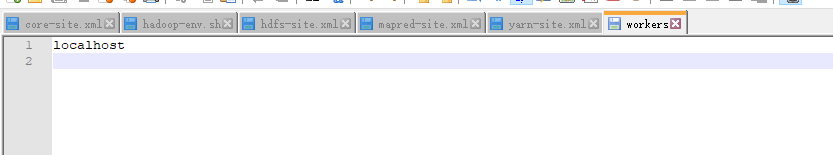

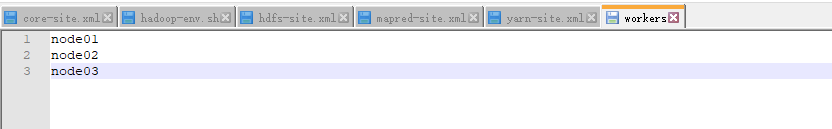

works(主要是设置从机的有3个)

node01

node02

node03

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

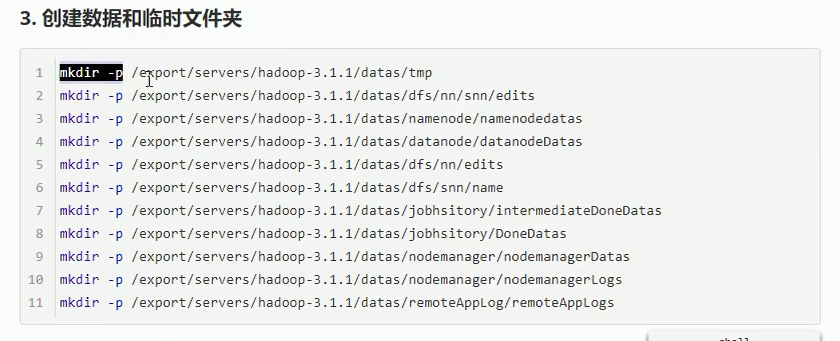

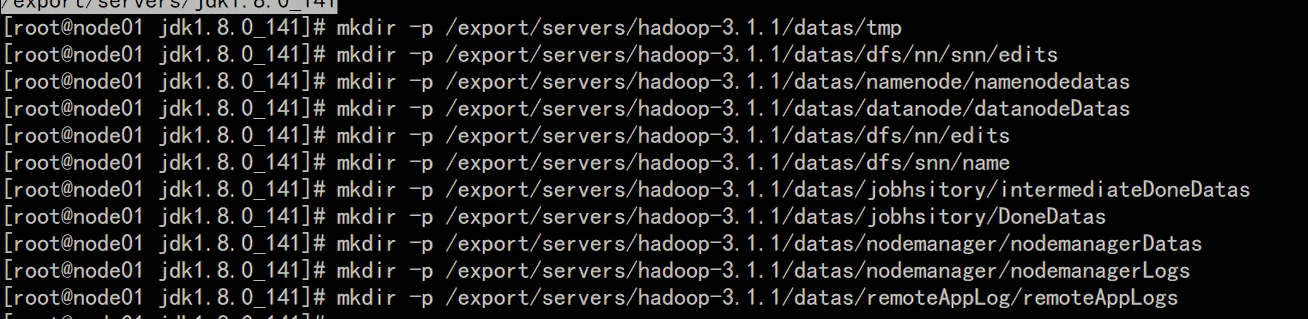

#### 3. 创建数据和临时文件夹

```shell

mkdir -p /export/servers/hadoop-3.1.1/datas/tmp

mkdir -p /export/servers/hadoop-3.1.1/datas/dfs/nn/snn/edits

mkdir -p /export/servers/hadoop-3.1.1/datas/namenode/namenodedatas

mkdir -p /export/servers/hadoop-3.1.1/datas/datanode/datanodeDatas

mkdir -p /export/servers/hadoop-3.1.1/datas/dfs/nn/edits

mkdir -p /export/servers/hadoop-3.1.1/datas/dfs/snn/name

mkdir -p /export/servers/hadoop-3.1.1/datas/jobhsitory/intermediateDoneDatas

mkdir -p /export/servers/hadoop-3.1.1/datas/jobhsitory/DoneDatas

mkdir -p /export/servers/hadoop-3.1.1/datas/nodemanager/nodemanagerDatas

mkdir -p /export/servers/hadoop-3.1.1/datas/nodemanager/nodemanagerLogs

mkdir -p /export/servers/hadoop-3.1.1/datas/remoteAppLog/remoteAppLogs

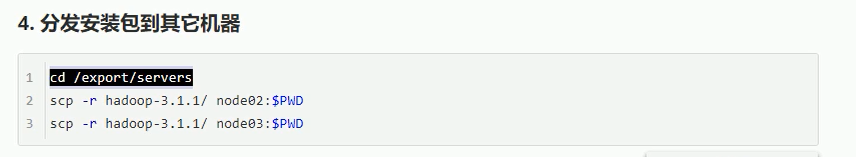

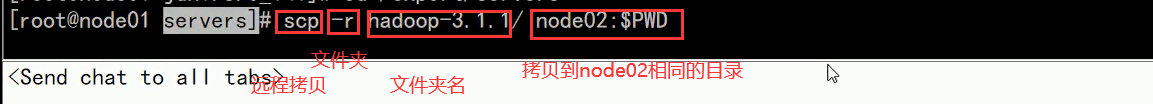

#### 4. 分发安装包到其它机器

```shell

cd /export/servers

scp -r hadoop-3.1.1/ node02:$PWD

scp -r hadoop-3.1.1/ node03:$PWD

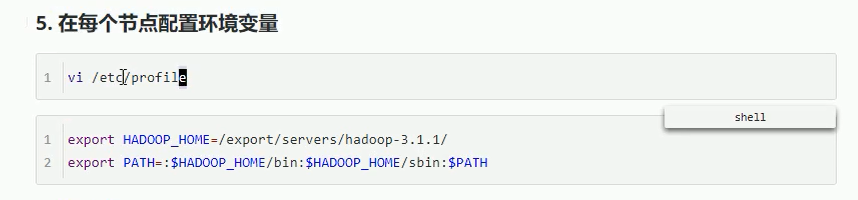

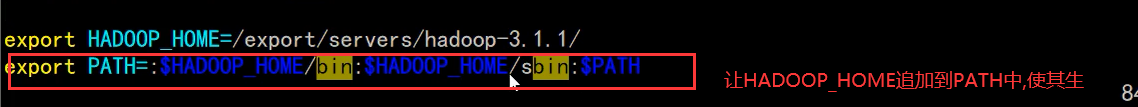

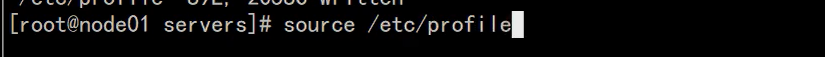

#### 5. 在每个节点配置环境变量

```shell

vi /etc/profile

```

```shell

export HADOOP_HOME=/export/servers/hadoop-3.1.1/

export PATH=:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

======================================================================================================================

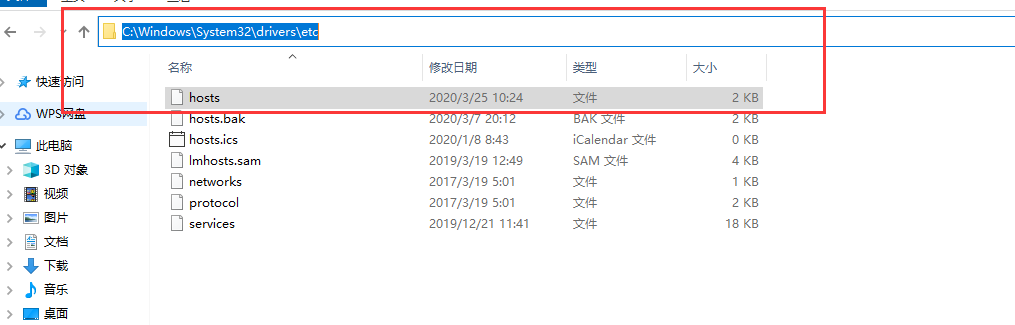

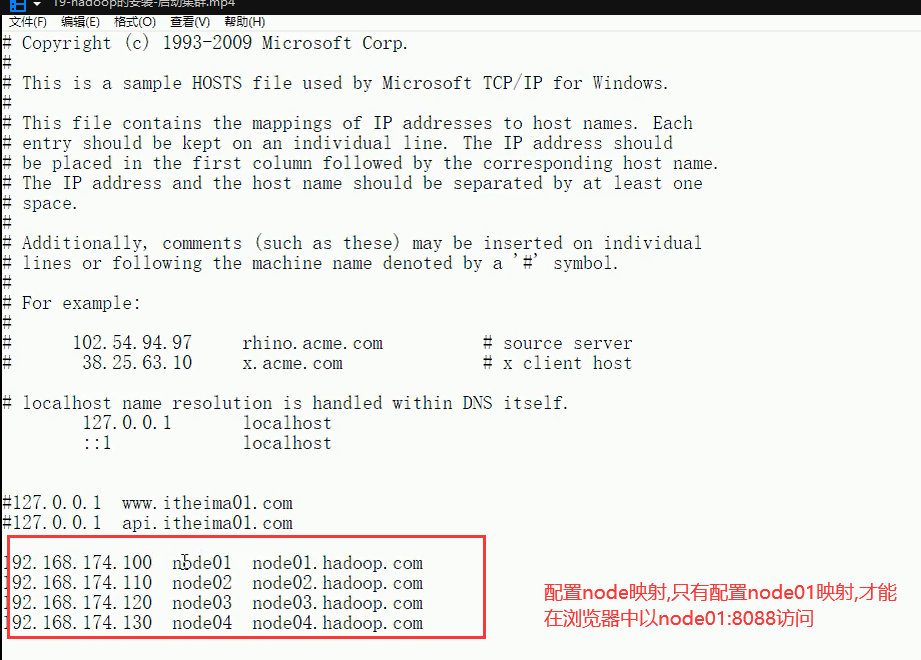

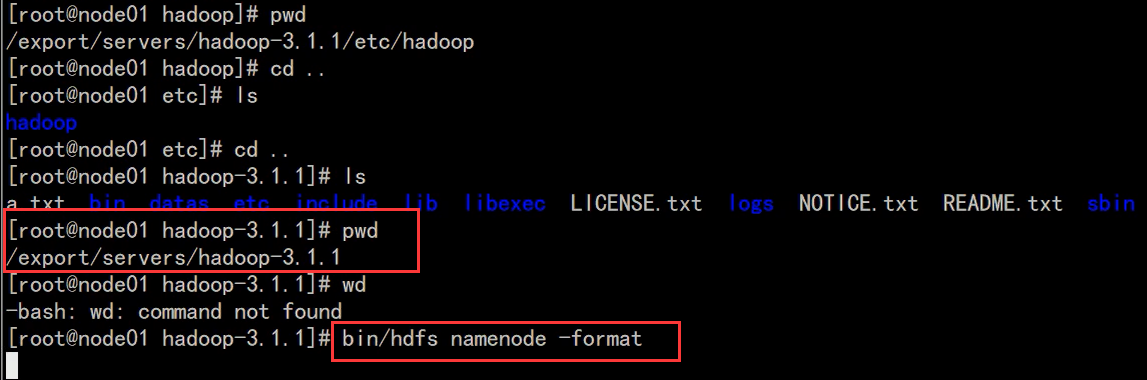

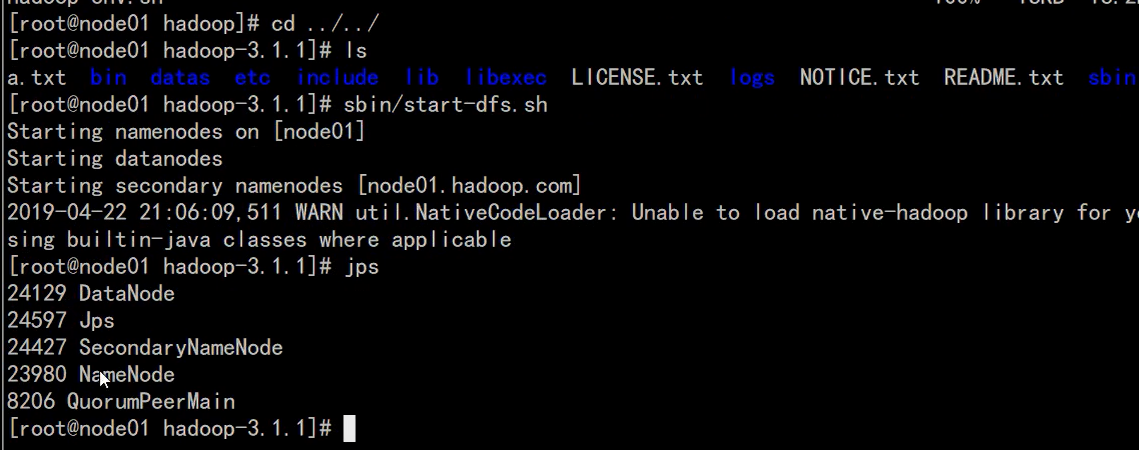

19-hadoop的安装-启动集群

#### 6. 格式化HDFS(格式化 的时候会创建一些中间文件)

- 为什么要格式化HDFS

- HDFS需要一个格式化的过程来创建存放元数据(image, editlog)的目录

```shell

bin/hdfs namenode -format

```

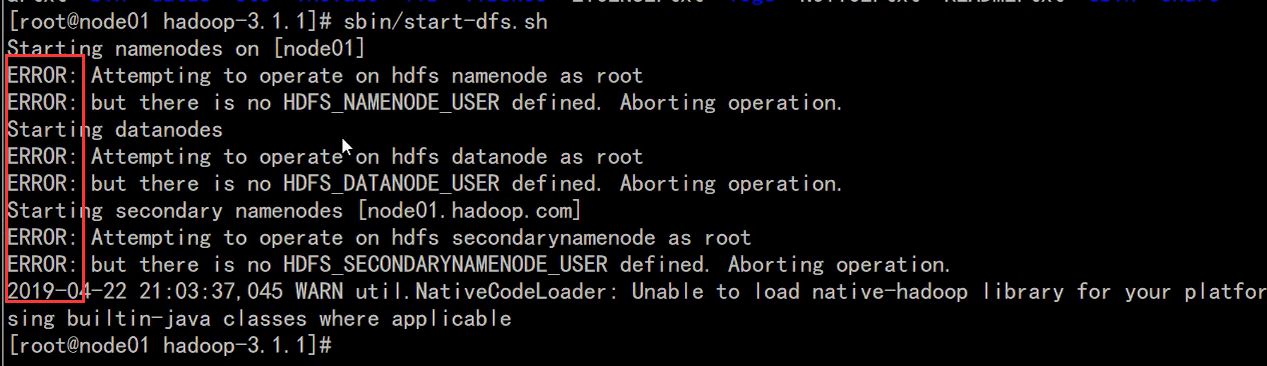

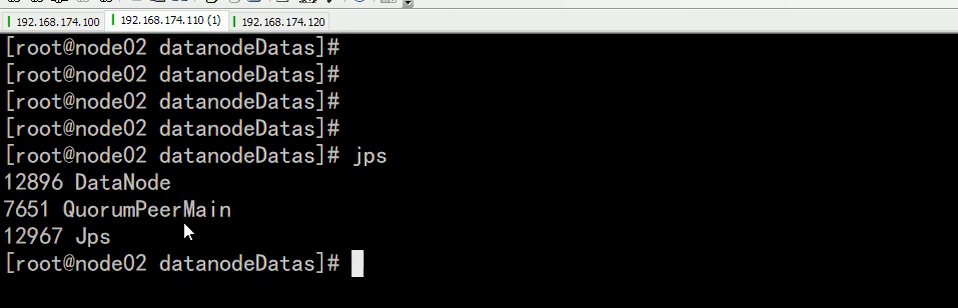

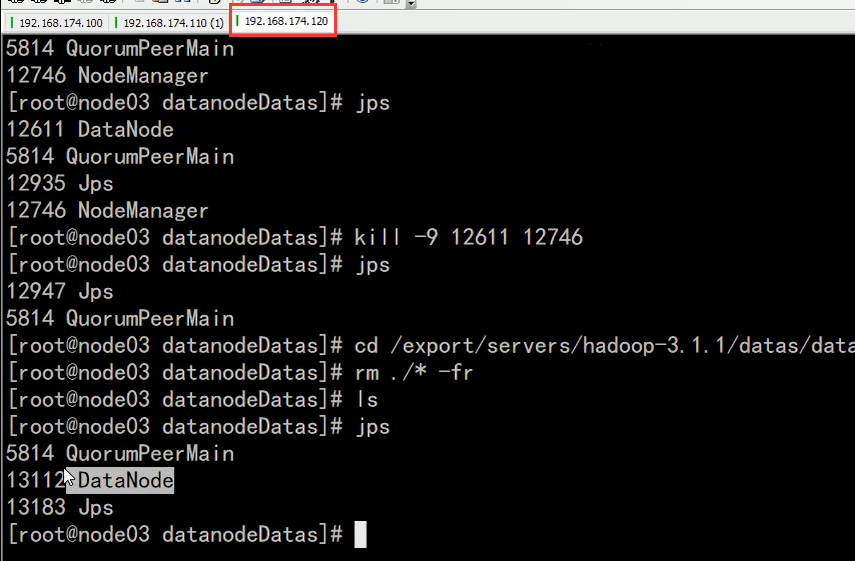

#### 7. 启动集群

```shell

# 会登录进所有的worker启动相关进行, 也可以手动进行, 但是没必要

//HDFS启动成功

/export/servers/hadoop-3.1.1/sbin/start-dfs.sh

//启动yarn集群

/export/servers/hadoop-3.1.1/sbin/start-yarn.sh

mapred --daemon start historyserver

------------------------------------------------------------------------------------------------------------------------------

(1).启动HDFS

//HDFS启动成功

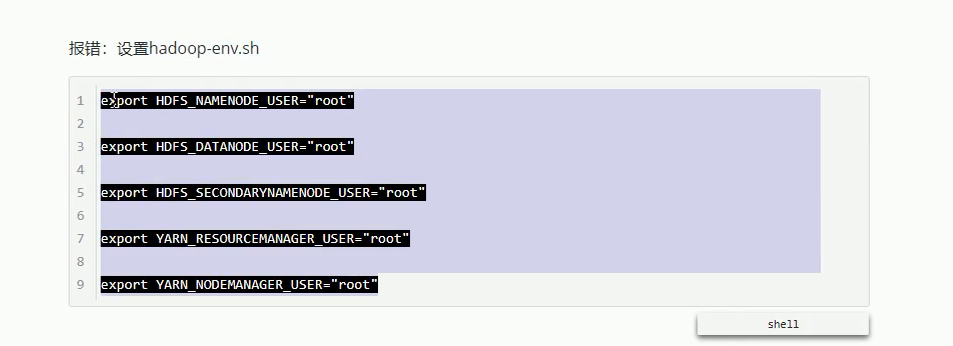

报错:设置hadoop-env.sh

~~~shell

export HDFS_NAMENODE_USER="root"

export HDFS_DATANODE_USER="root"

export HDFS_SECONDARYNAMENODE_USER="root"

export YARN_RESOURCEMANAGER_USER="root"

export YARN_NODEMANAGER_USER="root"

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

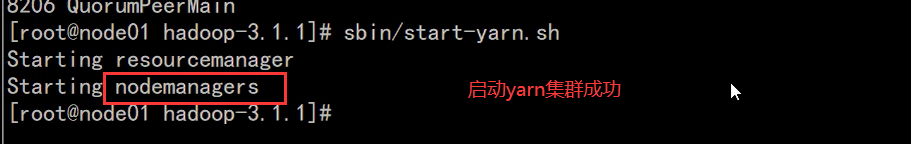

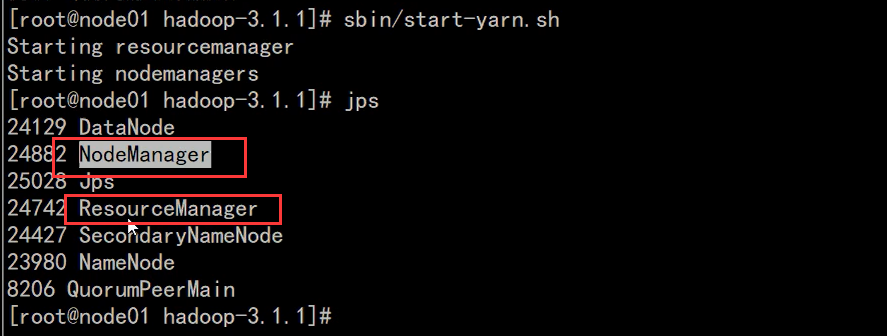

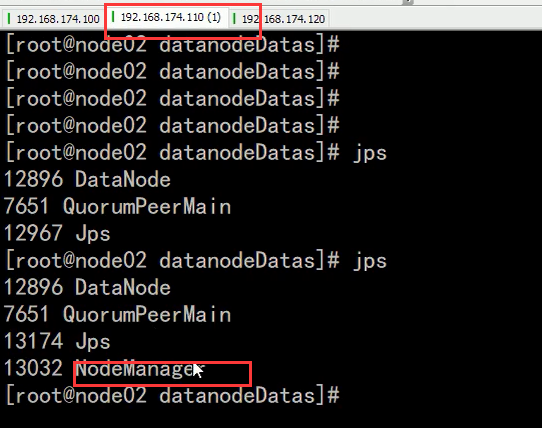

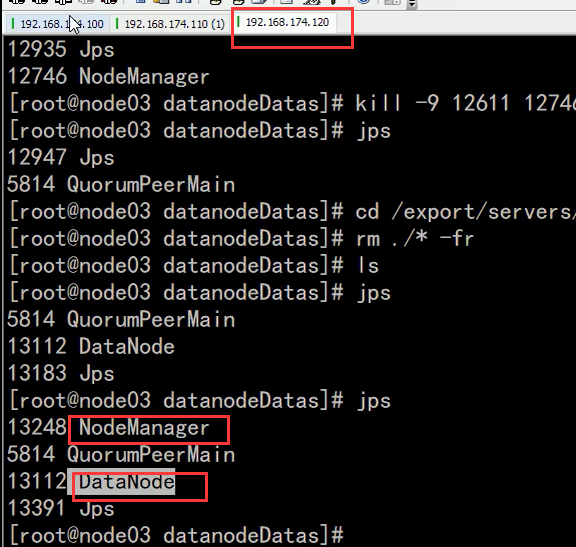

(2).启动yarn集群

//启动yarn集群

/export/servers/hadoop-3.1.1/sbin/start-yarn.sh