Spark应用监控解决方案--使用Prometheus和Grafana监控Spark应用

Spark任务启动后,我们通常都是通过跳板机去Spark UI界面查看对应任务的信息,一旦任务多了之后,这将会是让人头疼的问题。如果能将所有任务信息集中起来监控,那将会是很完美的事情。

通过Spark官网指导文档,发现Spark只支持以下sink

Each instance can report to zero or more sinks. Sinks are contained in the org.apache.spark.metrics.sink package:

ConsoleSink: Logs metrics information to the console.CSVSink: Exports metrics data to CSV files at regular intervals.JmxSink: Registers metrics for viewing in a JMX console.MetricsServlet: Adds a servlet within the existing Spark UI to serve metrics data as JSON data.GraphiteSink: Sends metrics to a Graphite node.Slf4jSink: Sends metrics to slf4j as log entries.StatsdSink: Sends metrics to a StatsD node.

没有比较常用的Influxdb和Prometheus ~~~

谷歌一把发现要支持influxdb需要使用第三方包,比较有参考意义的是这篇,Monitoring Spark Streaming with InfluxDB and Grafana ,在提交任务的时候增加file和配置文件,但成功永远不会这么轻松。。。

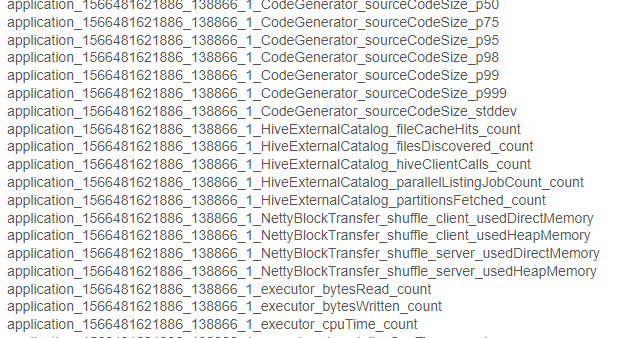

写入influxdb的数据都是以application_id命名的,类似这种application_1533838659288_1030_1_jvm_heap_usage,也就是说每个任务的指标都是在单独的表,最终我们展示在grafana不还得一个一个配置么?

显然这个不是我想要的结果,最终目的就是:一次配置后每提交一个任务自动会在监控上看到。

谷歌是治愈一切的良药,终究找到一个比较完美的解决方案,就是通过graphite_exporter中转数据后接入Prometheus,再通过grafana展示出来。

所以,目前已经实践可行的方案有两个

方案一:

监控数据直接写入influxdb,再通过grafana读取数据做展示,步骤如下:

1.在spark下 conf/metrics.properties 加入以下配置

master.source.jvm.class=org.apache.spark.metrics.source.JvmSource

worker.source.jvm.class=org.apache.spark.metrics.source.JvmSource

driver.source.jvm.class=org.apache.spark.metrics.source.JvmSourc

executor.source.jvm.class=org.apache.spark.metrics.source.JvmSource

*.sink.influx.class=org.apache.spark.metrics.sink.InfluxDbSink

*.sink.influx.protocol=http

*.sink.influx.host=xx.xx.xx.xx

*.sink.influx.port=8086

*.sink.influx.database=sparkonyarn

*.sink.influx.auth=admin:admin

2.在提交任务的时候增加以下配置,并确保以下jar存在

--files /spark/conf/metrics.properties \

--conf spark.metrics.conf=metrics.properties \

--jars /spark/jars/metrics-influxdb-1.1.8.jar,/spark/jars/spark-influx-sink-0.4.0.jar \

--conf spark.driver.extraClassPath=metrics-influxdb-1.1.8.jar:spark-influx-sink-0.4.0.jar \

--conf spark.executor.extraClassPath=metrics-influxdb-1.1.8.jar:spark-influx-sink-0.4.0.jar

缺点:application_id发生变化需要重新配置grafana

方案二(目前在用的):

通过graphite_exporter将原生数据通过映射文件转化为有 label 维度的 Prometheus 数据

1.下载graphite_exporter,解压后执行以下命令,其中graphite_exporter_mapping需要我们自己创建,内容为数据映射文件

nohup ./graphite_exporter --graphite.mapping-config=graphite_exporter_mapping &

例如

mappings:

- match: '*.*.jvm.*.*'

name: jvm_memory_usage

labels:

application: $1

executor_id: $2

mem_type: $3

qty: $4会将数据转化成 metric name 为 jvm_memory_usage,label 为 application,executor_id,mem_type,qty 的格式。

application_1533838659288_1030_1_jvm_heap_usage -> jvm_memory_usage{application="application_1533838659288_1030",executor_id="driver",mem_type="heap",qty="usage"}

2.配置 Prometheus 从 graphite_exporter 获取数据,重启prometheus

/path/to/prometheus/prometheus.ymlscrape_configs:

- job_name: 'spark'

static_configs:

- targets: ['localhost:9108']3.在spark下 conf/metrics.properties 加入以下配置

master.source.jvm.class=org.apache.spark.metrics.source.JvmSource

worker.source.jvm.class=org.apache.spark.metrics.source.JvmSource

driver.source.jvm.class=org.apache.spark.metrics.source.JvmSourc

executor.source.jvm.class=org.apache.spark.metrics.source.JvmSource

*.sink.graphite.class=org.apache.spark.metrics.sink.GraphiteSink

*.sink.graphite.protocol=tcp

*.sink.graphite.host=xx.xx.xx.xx

*.sink.graphite.port=9109

*.sink.graphite.period=5

*.sink.graphite.unit=seconds

4.提交spark任务的时候增加 --files /spark/conf/metrics.properties

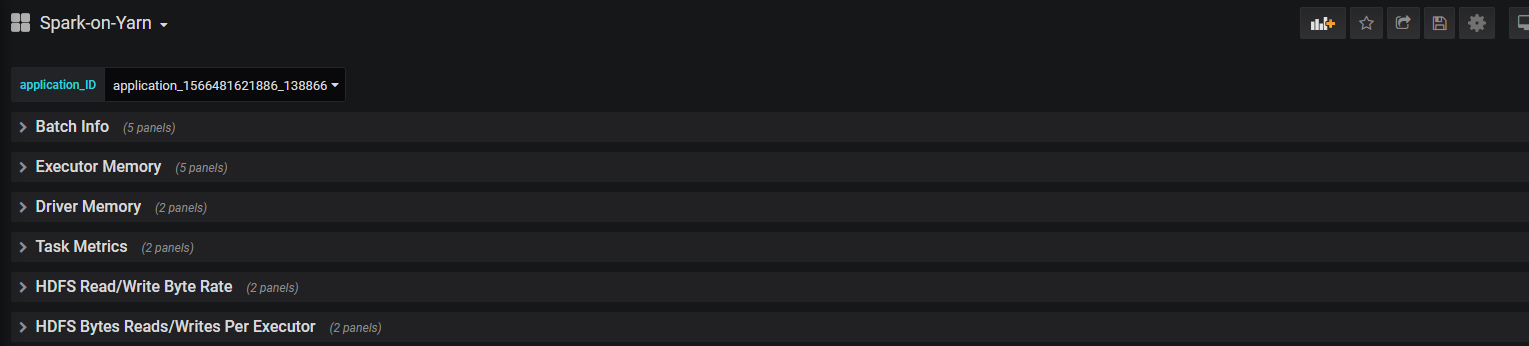

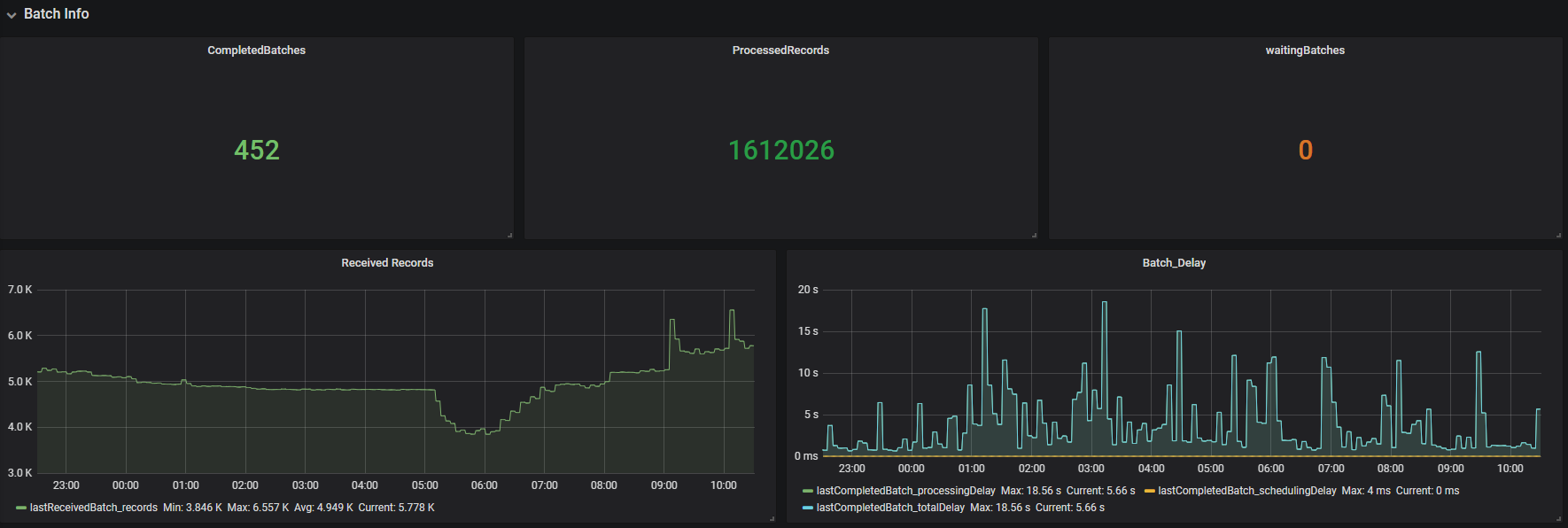

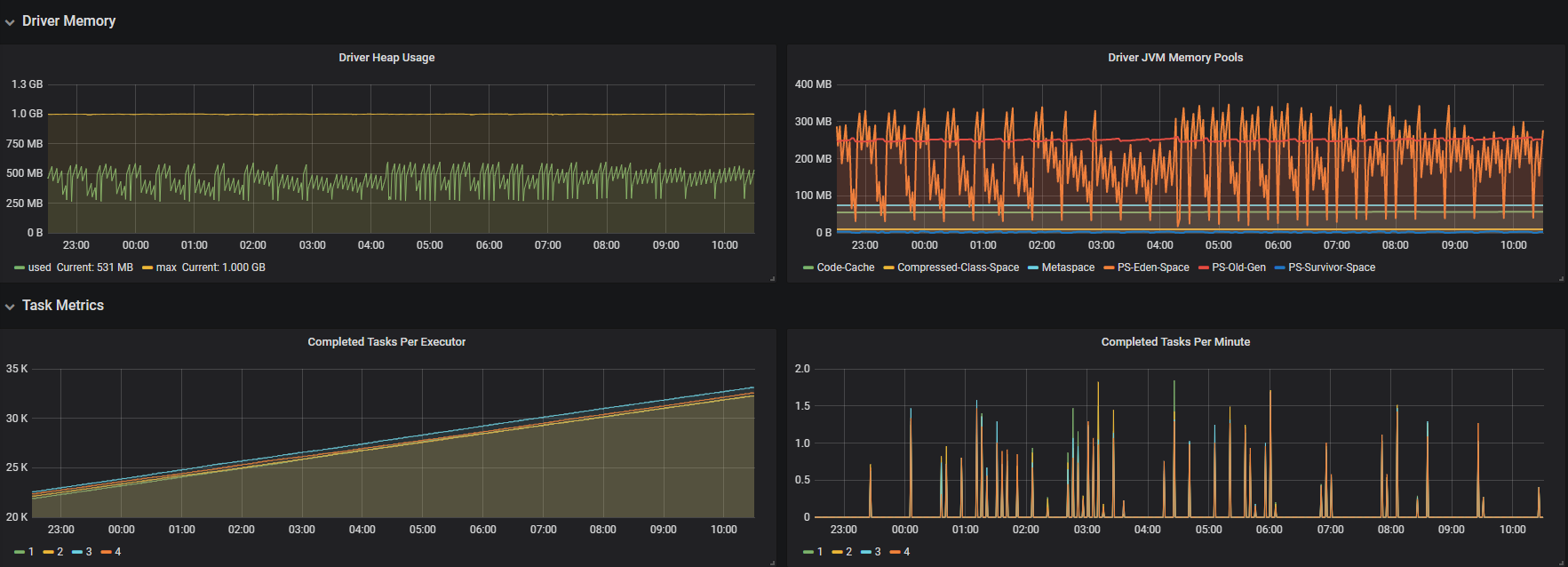

5.最后在grafana创建prometheus数据源,创建需要的指标,最终效果如下,有新提交的任务不需要再配置监控,直接选择application_id就可以看对应的信息

需要用到的jar包

https://repo1.maven.org/maven2/com/izettle/metrics-influxdb/1.1.8/metrics-influxdb-1.1.8.jar

https://mvnrepository.com/artifact/com.palantir.spark.influx/spark-influx-sink

模板

mappings:

- match: '*.*.executor.filesystem.*.*'

name: filesystem_usage

labels:

application: $1

executor_id: $2

fs_type: $3

qty: $4

- match: '*.*.executor.threadpool.*'

name: executor_tasks

labels:

application: $1

executor_id: $2

qty: $3

- match: '*.*.executor.jvmGCTime.count'

name: jvm_gcTime_count

labels:

application: $1

executor_id: $2

- match: '*.*.executor.*.*'

name: executor_info

labels:

application: $1

executor_id: $2

type: $3

qty: $4

- match: '*.*.jvm.*.*'

name: jvm_memory_usage

labels:

application: $1

executor_id: $2

mem_type: $3

qty: $4

- match: '*.*.jvm.pools.*.*'

name: jvm_memory_pools

labels:

application: $1

executor_id: $2

mem_type: $3

qty: $4

- match: '*.*.BlockManager.*.*'

name: block_manager

labels:

application: $1

executor_id: $2

type: $3

qty: $4

- match: '*.driver.DAGScheduler.*.*'

name: DAG_scheduler

labels:

application: $1

type: $2

qty: $3

- match: '*.driver.*.*.*.*'

name: task_info

labels:

application: $1

task: $2

type1: $3

type2: $4

qty: $5

参考资料

https://github.com/palantir/spark-influx-sink

https://spark.apache.org/docs/latest/monitoring.html

https://www.linkedin.com/pulse/monitoring-spark-streaming-influxdb-grafana-christian-g%C3%BCgi

https://github.com/prometheus/prometheus/wiki/Default-port-allocations

https://github.com/prometheus/graphite_exporter

https://prometheus.io/download/

https://rokroskar.github.io/monitoring-spark-on-hadoop-with-prometheus-and-grafana.html

https://blog.csdn.net/lsshlsw/article/details/82670508

https://www.jianshu.com/p/274380bb0974

浙公网安备 33010602011771号

浙公网安备 33010602011771号