CrawlSpider: getting image names and URLs, and saving them to a database

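1. Inherits from scrapy.Spider

2. What makes it special

CrawlSpider lets you define rules. While it parses the HTML of a response, it extracts the links that match those rules and then sends requests to them.

So whenever you need to follow links, that is, crawl a page, pull links out of it, and crawl those in turn, CrawlSpider is a very good fit.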

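3. Extracting links

The link extractor is where you write the rules that select which links to extract.

    scrapy.linkextractors.LinkExtractor(
        allow = (),            # regular expression(s): extract links that match
        deny = (),             # (not used here) regular expression(s): skip links that match
        allow_domains = (),    # (not used here) allowed domains
        deny_domains = (),     # (not used here) disallowed domains
        restrict_xpaths = (),  # XPath: extract links only from the regions it selects
        restrict_css = ()      # CSS selector: extract links only from the regions it selects
    )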

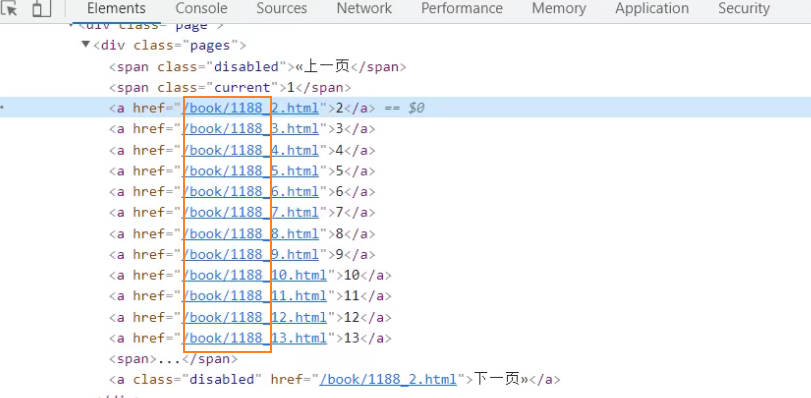
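How `allow` and `deny` combine can be modelled in a few lines of plain Python. This is a simplified sketch, not Scrapy's implementation: `filter_links` is a hypothetical helper and the example.com URLs are made up. It assumes only the documented behaviour that an empty `allow` accepts every link and that `deny` takes precedence over `allow`.

```python
import re


def filter_links(urls, allow=(), deny=()):
    """Keep URLs that match any `allow` pattern and no `deny` pattern.

    Simplified model: an empty `allow` accepts everything, and `deny`
    takes precedence over `allow`.
    """
    allow_res = [re.compile(p) for p in allow]
    deny_res = [re.compile(p) for p in deny]
    kept = []
    for url in urls:
        if allow_res and not any(r.search(url) for r in allow_res):
            continue  # an allow list exists and nothing in it matched
        if any(r.search(url) for r in deny_res):
            continue  # a deny pattern matched, so the link is dropped
        kept.append(url)
    return kept


urls = [
    "https://example.com/list_23_1.html",
    "https://example.com/list_23_2.html",
    "https://example.com/about.html",
]
only_lists = filter_links(urls, allow=[r"list_23_\d+\.html"])
without_page_2 = filter_links(urls, allow=[r"list_23_\d+\.html"], deny=[r"_2\.html"])
print(only_lists)
print(without_page_2)
```

The real extractor additionally resolves relative URLs, removes duplicates and applies the domain and XPath/CSS restrictions, none of which is modelled here.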

4. Example usage

Regex: links1 = LinkExtractor(allow=r'list_23_\d+\.html')

XPath: links2 = LinkExtractor(restrict_xpaths=r'//div[@class="x"]')

CSS: links3 = LinkExtractor(restrict_css='.x')

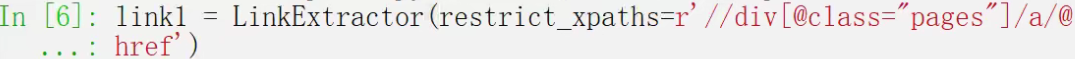

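5. Extracting the links

link.extract_links(response)

Extracts the links from the current page (link is a LinkExtractor instance)

Import the link extractor

Use the regex syntax, which is the most common choice

\d matches a digit

\d+ matches one or more digits

\. escapes the dot so that it matches a literal "."

View the extracted links

Use the XPath syntax

View the extracted links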
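The three regex pieces above can be checked with Python's standard `re` module before they go into a `LinkExtractor`. The candidate paths below are invented for illustration; only the pattern comes from the example usage.

```python
import re

pattern = re.compile(r"list_23_\d+\.html")

candidates = [
    "/book/list_23_1.html",    # one digit
    "/book/list_23_128.html",  # several digits
    "/book/list_23_.html",     # no digit: \d+ needs at least one
    "/book/list_23_7xhtml",    # no literal dot before "html"
]
matches = [c for c in candidates if pattern.search(c)]
print(matches)

# Without the backslash, "." matches any character, so the last
# candidate slips through.
loose = re.compile(r"list_23_\d+.html")
print(bool(loose.search("/book/list_23_7xhtml")))
```

Only the first two candidates match the strict pattern, which is why the dot is worth escaping.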

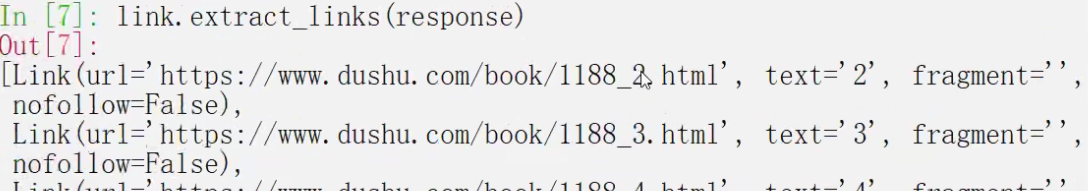

6.注意事项

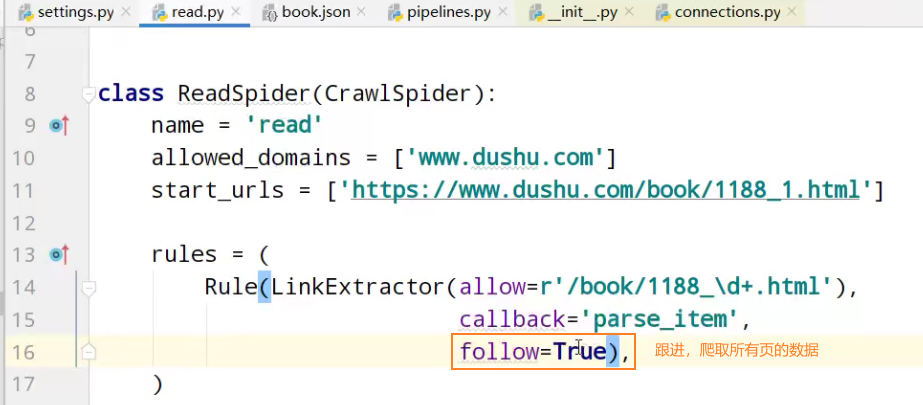

【注1】callback只能写函数名字符串, callback='parse_item'

【注2】在基本的spider中,如果重新发送请求,那里的callback写的是 callback=self.parse_item 【注‐‐稍后看】follow=true 是否跟进 就是按照提取连接规则进行提取
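The difference between the two callback styles comes down to when `self` exists. Below is a minimal sketch in plain Python, not Scrapy's actual code: `MiniSpider` and `resolve_callback` are hypothetical names, used only to show how a method name stored at class level can be turned into a bound method once an instance exists.

```python
class MiniSpider:
    # Class-level configuration: no instance (and no `self`) exists yet,
    # so the callback can only be referred to by name.
    rules = ({"pattern": r"/book/1188_\d+\.html", "callback": "parse_item"},)

    def parse_item(self, response):
        return f"parsed {response}"

    def resolve_callback(self, rule):
        """Turn the name stored in a rule into a bound method."""
        callback = rule["callback"]
        if isinstance(callback, str):
            callback = getattr(self, callback)
        return callback


spider = MiniSpider()
handler = spider.resolve_callback(MiniSpider.rules[0])
print(handler("page-1"))
```

Inside an instance method, by contrast, `self.parse_item` is already a bound method, which is why a basic Spider can pass it directly.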

How it runs:

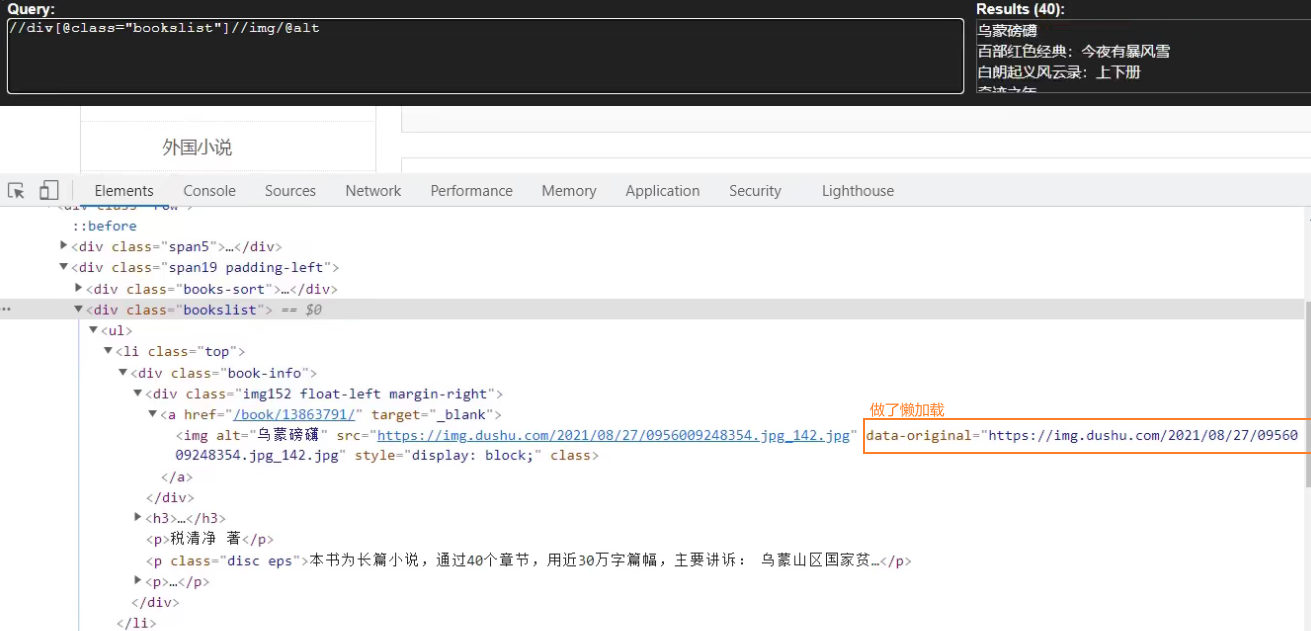

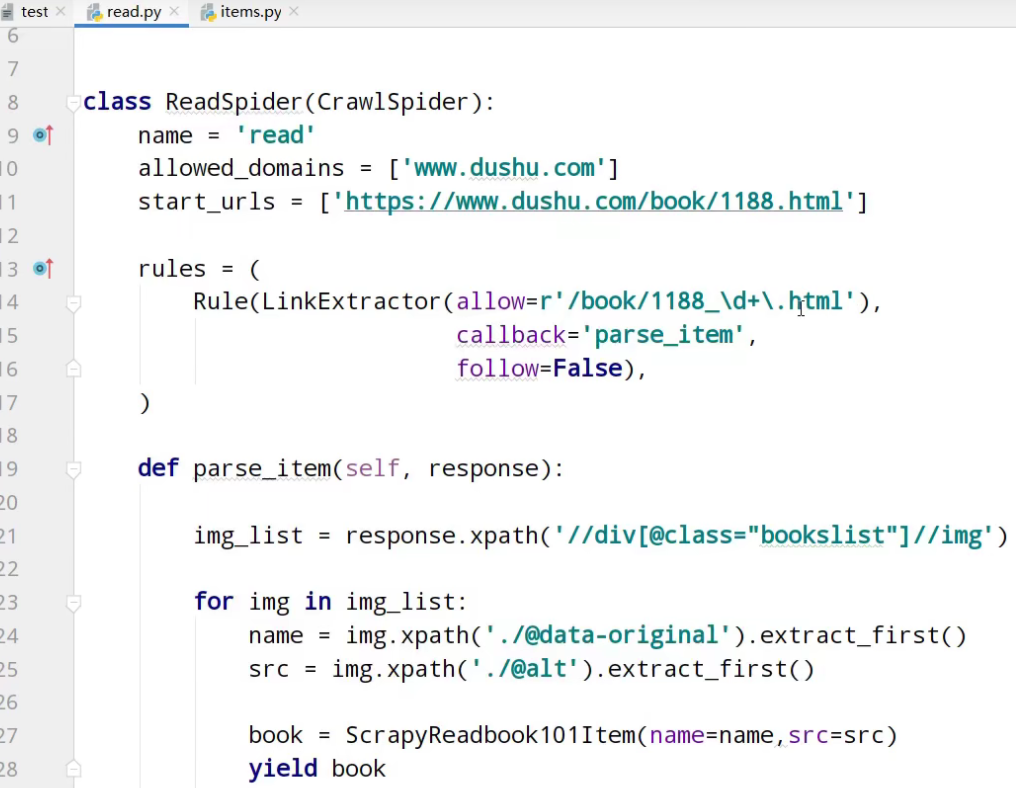

读书网数据入库

1.创建项目:scrapy startproject dushuproject

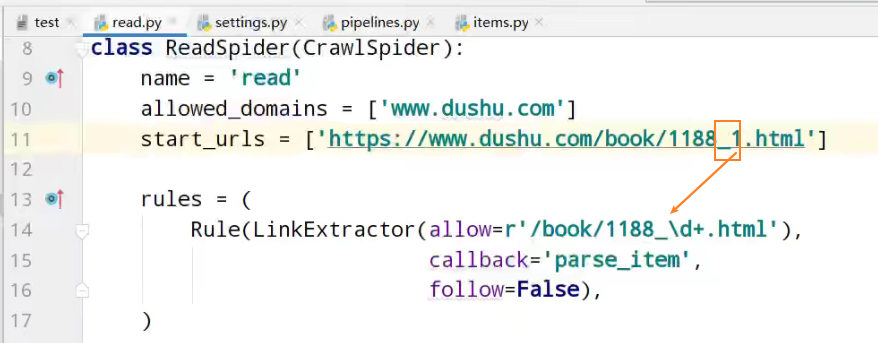

2.跳转到spiders路径 cd\dushuproject\dushuproject\spiders

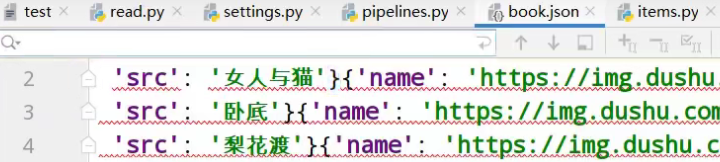

3.创建爬虫类:scrapy genspider ‐t crawl read www.dushu.com

4.items

5.spiders

6.settings

7.pipelines

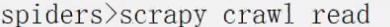

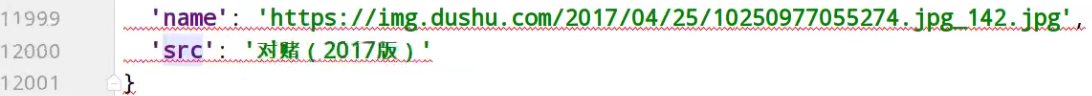

Save the data locally

Save the data to a MySQL database

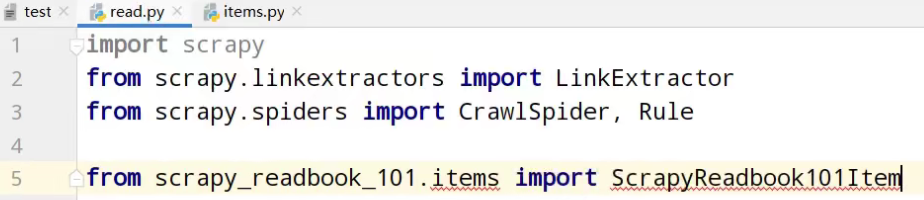

创建项目

> scrapy startproject scrapy_redbook_101

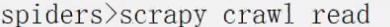

cd到spiders目录下,创建爬虫文件

spiders> scrapy genspider ‐t crawl read https://www.dushu.com/book/1188.html

items定义爬取的数据结构类

name名字、src图片

items数据结构类的导包

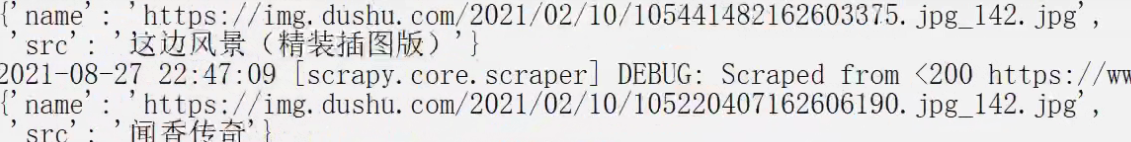

运行

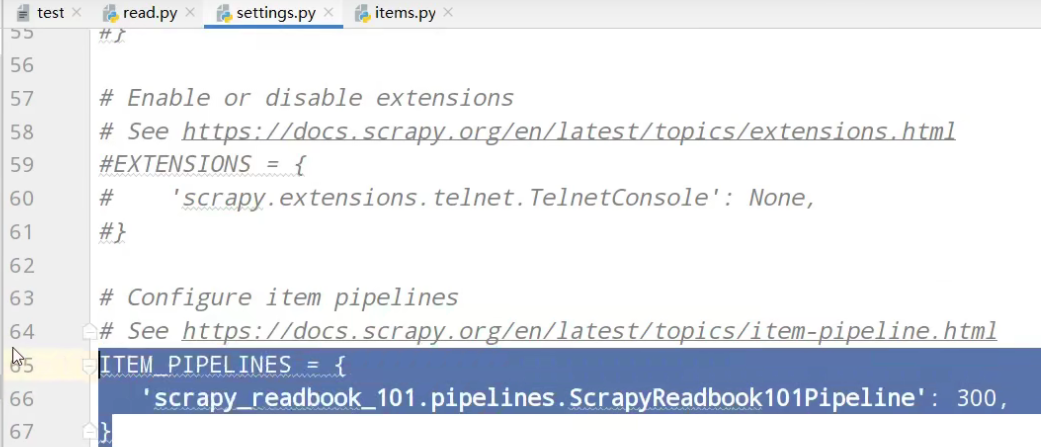

开启管道

pipelines管道功能

运行

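Counting the results shows that the first page's data is missing

Run

This time nothing is missing: the data from all 13 pages is there (the final spider starts from https://www.dushu.com/book/1188_1.html, which matches the rule's pattern, rather than from /book/1188.html)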
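A response reaches the rule's callback only when its URL was extracted by that rule, so page one is parsed only if it is linked under a URL the pattern matches. The quick check below uses the pattern from the final spider; `matches_rule` is a hypothetical helper, and the check shows only which URLs the pattern accepts, not how the site links its pages.

```python
import re

rule_pattern = re.compile(r"/book/1188_\d+\.html")


def matches_rule(url):
    """Return True if the rule's pattern would accept this URL."""
    return rule_pattern.search(url) is not None


print(matches_rule("https://www.dushu.com/book/1188.html"))    # False
print(matches_rule("https://www.dushu.com/book/1188_1.html"))  # True
```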

Storing the data in the database

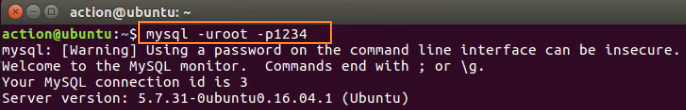

链接mysql数据库

创建spider01数据库

使用spider01数据库

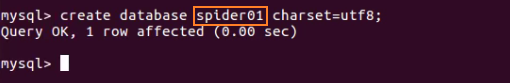

创建book表

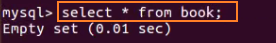

查询book表内容

查询虚拟机ip

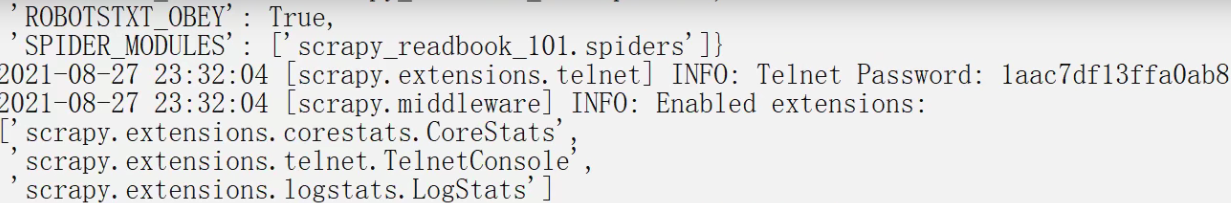

Configure settings with the database connection parameters

Enable the database insert pipeline

Implement the database insert in pipelines

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html

    # useful for handling different item types with a single interface
    from itemadapter import ItemAdapter

    # Load the settings file, which holds the database parameters
    from scrapy.utils.project import get_project_settings

    # Import pymysql
    import pymysql


    class ScrapyReadbook101Pipeline:
        def open_spider(self, spider):
            self.fp = open('book.json', 'w', encoding='utf-8')

        def process_item(self, item, spider):
            self.fp.write(str(item))
            return item

        def close_spider(self, spider):
            self.fp.close()


    # MySQL insert pipeline
    class MysqlPipeline:
        # Read the database connection parameters
        def open_spider(self, spider):
            settings = get_project_settings()
            self.host = settings['DB_HOST']
            self.port = settings['DB_PORT']
            self.user = settings['DB_USER']
            # The key must be spelled exactly as it is in settings.py
            self.password = settings['DB_PASSWROD']
            self.name = settings['DB_NAME']
            self.charset = settings['DB_CHARSET']
            # Connect
            self.connect()

        # Open the connection and get a cursor object
        def connect(self):
            self.conn = pymysql.connect(
                host=self.host,
                port=self.port,
                user=self.user,
                password=self.password,
                db=self.name,
                charset=self.charset
            )
            # Cursor used to execute the SQL statements
            self.cursor = self.conn.cursor()

        # Database operation
        def process_item(self, item, spider):
            # Insert; the values are passed as parameters so that quotes
            # inside a name or URL cannot break the statement
            sql = 'insert into book(name,src) values(%s,%s)'
            # Execute the SQL statement
            self.cursor.execute(sql, (item['name'], item['src']))
            # Commit
            self.conn.commit()
            return item

        # Close the cursor and the connection
        def close_spider(self, spider):
            self.cursor.close()
            self.conn.close()
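Passing values as query parameters, rather than formatting them into the SQL string, keeps a title that contains quotes from breaking the insert. The sketch below uses the standard-library `sqlite3` module as a stand-in so it runs without a MySQL server; that substitution is an assumption made for illustration, as are the sample item and the example.com URL. The same idea applies to pymysql, whose placeholder is `%s` where sqlite3 uses `?`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database
conn.execute("CREATE TABLE book (name TEXT, src TEXT)")

# A title containing double quotes, which string formatting would mangle
item = {"name": 'He said "hello"', "src": "https://example.com/cover.jpg"}

# Placeholders keep the data separate from the SQL text
conn.execute(
    "INSERT INTO book (name, src) VALUES (?, ?)",
    (item["name"], item["src"]),
)
conn.commit()

rows = conn.execute("SELECT name, src FROM book").fetchall()
print(rows)
conn.close()
```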

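Run

In the virtual machine, query the data in the table

The steps above crawl 13 pages of data

follow=True: follow links, that is, keep applying the link-extraction rule to every page that is reached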
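The effect of `follow` can be modelled with a small breadth-first crawl over a made-up site. This is a sketch of the idea only: `SITE` and `crawl` are hypothetical, and the model assumes each list page links to just the next two pages.

```python
from collections import deque

# Hypothetical site: each list page shows links to only the next two pages.
SITE = {n: [m for m in (n + 1, n + 2) if m <= 6] for n in range(1, 7)}


def crawl(start, follow):
    """Return the pages reached from `start`, in the order they are visited."""
    seen = {start}
    order = []
    queue = deque([(start, True)])  # (page, extract links from it?)
    while queue:
        page, extract = queue.popleft()
        order.append(page)
        if not extract:
            continue
        for link in SITE[page]:
            if link not in seen:  # duplicate requests are dropped
                seen.add(link)
                queue.append((link, follow))
    return order


shallow = crawl(1, follow=False)
deep = crawl(1, follow=True)
print(shallow)  # [1, 2, 3]
print(deep)     # [1, 2, 3, 4, 5, 6]
```

Without following, only the links visible on the start page are fetched; with following, the pagination links on each new page keep extending the crawl until no unseen page is left.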

Run

In the virtual machine, query the data in the table

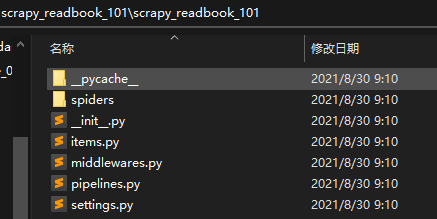

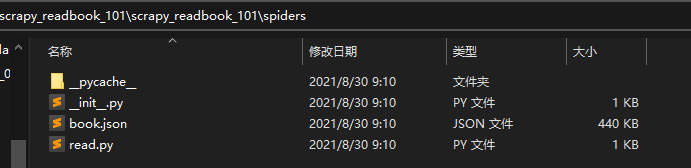

Project folder

read.py, the core spider file

    import scrapy
    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule

    from scrapy_readbook_101.items import ScrapyReadbook101Item


    class ReadSpider(CrawlSpider):
        name = 'read'
        allowed_domains = ['www.dushu.com']
        # Start from a URL that matches the rule below
        start_urls = ['https://www.dushu.com/book/1188_1.html']

        rules = (
            # The dot is escaped so that it matches only a literal "."
            Rule(LinkExtractor(allow=r'/book/1188_\d+\.html'),
                 callback='parse_item',
                 follow=True),
        )

        def parse_item(self, response):
            img_list = response.xpath('//div[@class="bookslist"]//img')

            for img in img_list:
                # alt holds the book's name, data-original the image URL
                name = img.xpath('./@alt').extract_first()
                src = img.xpath('./@data-original').extract_first()

                book = ScrapyReadbook101Item(name=name, src=src)
                yield book
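What `parse_item` is after can be reproduced without Scrapy. The sketch below uses the standard-library `html.parser` in place of XPath and assumes that `alt` carries the book's name and `data-original` the lazily loaded image URL. The markup is invented for illustration and the real page may differ; `CoverParser` is a hypothetical name.

```python
from html.parser import HTMLParser


class CoverParser(HTMLParser):
    """Collect (name, src) pairs from <img> tags inside div.bookslist."""

    def __init__(self):
        super().__init__()
        self.depth = 0   # nesting depth inside the bookslist div
        self.books = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "div":
            # Start counting at the bookslist div, then track nested divs
            if self.depth or attrs.get("class") == "bookslist":
                self.depth += 1
        elif tag == "img" and self.depth:
            self.books.append((attrs.get("alt"), attrs.get("data-original")))

    def handle_endtag(self, tag):
        if tag == "div" and self.depth:
            self.depth -= 1


html = """
<div class="bookslist">
  <ul>
    <li><img alt="Book A" data-original="https://example.com/a.jpg" src="placeholder.png"></li>
    <li><img alt="Book B" data-original="https://example.com/b.jpg" src="placeholder.png"></li>
  </ul>
</div>
<img alt="Logo" src="logo.png">
"""

parser = CoverParser()
parser.feed(html)
print(parser.books)
```

The logo image is skipped because it sits outside the bookslist div, which is the same restriction the XPath expression `//div[@class="bookslist"]//img` expresses.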

items.py, the custom data structure class

    # Define here the models for your scraped items
    #
    # See documentation in:
    # https://docs.scrapy.org/en/latest/topics/items.html

    import scrapy


    class ScrapyReadbook101Item(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        name = scrapy.Field()
        src = scrapy.Field()

settings.py, the configuration file

    # Scrapy settings for scrapy_readbook_101 project
    #
    # For simplicity, this file contains only settings considered important or
    # commonly used. You can find more settings consulting the documentation:
    #
    #     https://docs.scrapy.org/en/latest/topics/settings.html
    #     https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
    #     https://docs.scrapy.org/en/latest/topics/spider-middleware.html

    BOT_NAME = 'scrapy_readbook_101'

    SPIDER_MODULES = ['scrapy_readbook_101.spiders']
    NEWSPIDER_MODULE = 'scrapy_readbook_101.spiders'

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    #USER_AGENT = 'scrapy_readbook_101 (+http://www.yourdomain.com)'

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = True

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    #CONCURRENT_REQUESTS = 32

    # Configure a delay for requests for the same website (default: 0)
    # See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay
    # See also autothrottle settings and docs
    #DOWNLOAD_DELAY = 3
    # The download delay setting will honor only one of:
    #CONCURRENT_REQUESTS_PER_DOMAIN = 16
    #CONCURRENT_REQUESTS_PER_IP = 16

    # Disable cookies (enabled by default)
    #COOKIES_ENABLED = False

    # Disable Telnet Console (enabled by default)
    #TELNETCONSOLE_ENABLED = False

    # Override the default request headers:
    #DEFAULT_REQUEST_HEADERS = {
    #   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    #   'Accept-Language': 'en',
    #}

    # Enable or disable spider middlewares
    # See https://docs.scrapy.org/en/latest/topics/spider-middleware.html
    #SPIDER_MIDDLEWARES = {
    #    'scrapy_readbook_101.middlewares.ScrapyReadbook101SpiderMiddleware': 543,
    #}

    # Enable or disable downloader middlewares
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
    #DOWNLOADER_MIDDLEWARES = {
    #    'scrapy_readbook_101.middlewares.ScrapyReadbook101DownloaderMiddleware': 543,
    #}

    # Enable or disable extensions
    # See https://docs.scrapy.org/en/latest/topics/extensions.html
    #EXTENSIONS = {
    #    'scrapy.extensions.telnet.TelnetConsole': None,
    #}

    # Two of these parameters need care: the port and the charset
    DB_HOST = '192.168.231.130'
    # The port is an integer
    DB_PORT = 3306
    DB_USER = 'root'
    DB_PASSWROD = '1234'
    DB_NAME = 'spider01'
    # Do not write the hyphen of "utf-8" here
    DB_CHARSET = 'utf8'

    # Configure item pipelines
    # See https://docs.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'scrapy_readbook_101.pipelines.ScrapyReadbook101Pipeline': 300,
        # MysqlPipeline
        'scrapy_readbook_101.pipelines.MysqlPipeline': 301
    }

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/autothrottle.html
    #AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    #AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    #AUTOTHROTTLE_MAX_DELAY = 60
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    #AUTOTHROTTLE_DEBUG = False

    # Enable and configure HTTP caching (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
    #HTTPCACHE_ENABLED = True
    #HTTPCACHE_EXPIRATION_SECS = 0
    #HTTPCACHE_DIR = 'httpcache'
    #HTTPCACHE_IGNORE_HTTP_CODES = []
    #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

pipelines.py, the core processing logic

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html

    # useful for handling different item types with a single interface
    from itemadapter import ItemAdapter

    # Load the settings file
    from scrapy.utils.project import get_project_settings
    import pymysql


    class ScrapyReadbook101Pipeline:
        def open_spider(self, spider):
            self.fp = open('book.json', 'w', encoding='utf-8')

        def process_item(self, item, spider):
            self.fp.write(str(item))
            return item

        def close_spider(self, spider):
            self.fp.close()


    class MysqlPipeline:
        def open_spider(self, spider):
            settings = get_project_settings()
            self.host = settings['DB_HOST']
            self.port = settings['DB_PORT']
            self.user = settings['DB_USER']
            # The key must be spelled exactly as it is in settings.py
            self.password = settings['DB_PASSWROD']
            self.name = settings['DB_NAME']
            self.charset = settings['DB_CHARSET']

            self.connect()

        def connect(self):
            self.conn = pymysql.connect(
                host=self.host,
                port=self.port,
                user=self.user,
                password=self.password,
                db=self.name,
                charset=self.charset
            )

            self.cursor = self.conn.cursor()

        def process_item(self, item, spider):
            # Values are passed as parameters so that quotes inside a
            # name or URL cannot break the statement
            sql = 'insert into book(name,src) values(%s,%s)'
            # Execute the SQL statement
            self.cursor.execute(sql, (item['name'], item['src']))
            # Commit
            self.conn.commit()

            return item

        def close_spider(self, spider):
            self.cursor.close()
            self.conn.close()
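ScrapyReadbook101Pipeline writes `str(item)` for every item, so book.json ends up holding Python reprs rather than valid JSON. One possible alternative, sketched here as a suggestion rather than as part of the original project, is to write one JSON object per line. `JsonLinesWriter` is a hypothetical name, plain dicts stand in for Scrapy items, and an in-memory buffer stands in for the output file.

```python
import io
import json


class JsonLinesWriter:
    """Write one JSON object per line to a text stream."""

    def __init__(self, stream):
        self.stream = stream

    def process_item(self, item):
        # dict(item) also works for Scrapy items, which behave like mappings
        line = json.dumps(dict(item), ensure_ascii=False)
        self.stream.write(line + "\n")
        return item


buffer = io.StringIO()  # stands in for a file opened with encoding='utf-8'
writer = JsonLinesWriter(buffer)
writer.process_item({"name": "书名", "src": "https://example.com/a.jpg"})
writer.process_item({"name": "Book B", "src": "https://example.com/b.jpg"})

# Every line can be parsed back independently
records = [json.loads(line) for line in buffer.getvalue().splitlines()]
print(records)
```

`ensure_ascii=False` keeps Chinese titles readable in the file instead of turning them into `\uXXXX` escapes.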
