Big Data Series: Flume + HDFS

This article covers Flume (Spooling Directory Source) + HDFS. For the other Flume source types, see http://www.cnblogs.com/cnmenglang/p/6544081.html

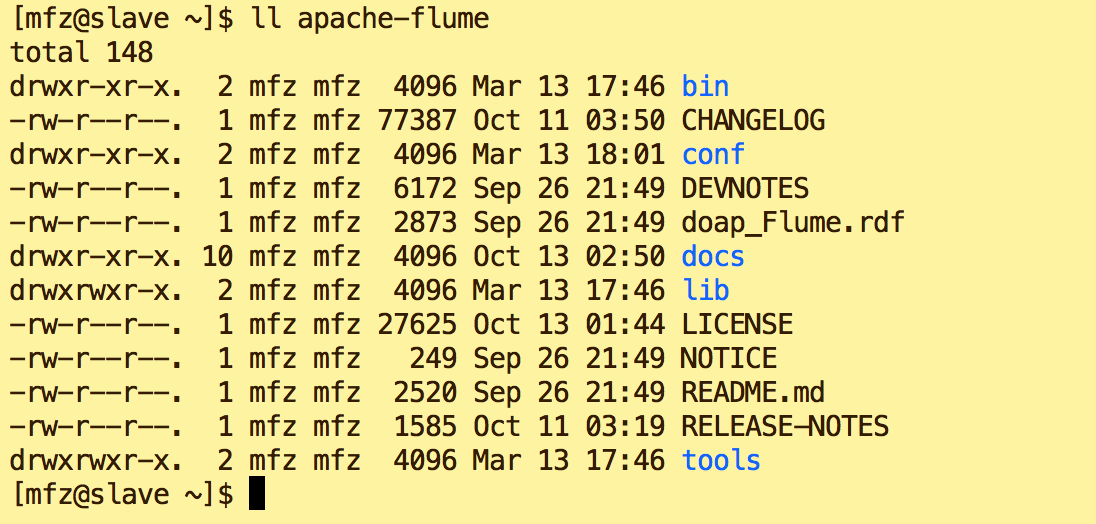

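1. Prerequisites: apache-flume-1.7.0-bin.tar.gz

2. Configuration steps:

a. Upload the archive to the resources directory under the user's home directory (the author's user is mfz)

b. Extract it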

tar -xzvf apache-flume-1.7.0-bin.tar.gz

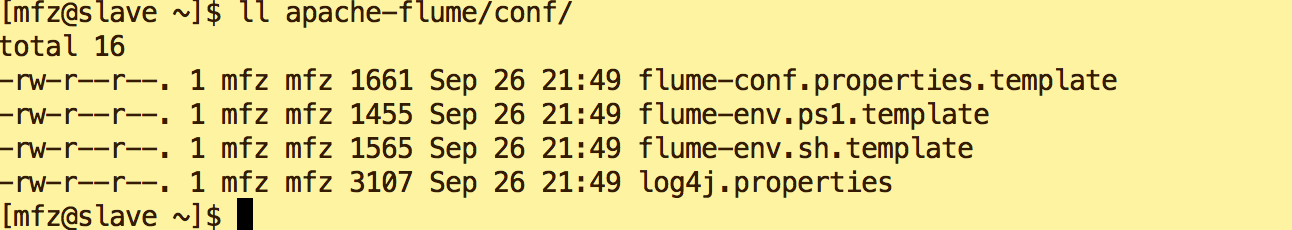

c. Rename the template files under conf

    mv flume-conf.properties.template flume-conf.properties
    mv flume-env.sh.template flume-env.sh

d. Edit flume-env.sh and add the following environment variables:

    export JAVA_HOME=/usr/java/jdk1.8.0_102
    FLUME_CLASSPATH="/home/mfz/hadoop-2.7.3/share/hadoop/hdfs/*"

e. Create a new file hdfs.properties

    LogAgent.sources = apache
    LogAgent.channels = fileChannel
    LogAgent.sinks = HDFS

    #sources config
    #spooldir watches the specified directory for new files. As soon as a new file appears it is parsed,
    #and once its contents have been written to the channel the file is renamed with the suffix .COMPLETED
    LogAgent.sources.apache.type = spooldir
    LogAgent.sources.apache.spoolDir = /tmp/logs
    LogAgent.sources.apache.channels = fileChannel
    LogAgent.sources.apache.fileHeader = false

    #sinks config
    LogAgent.sinks.HDFS.channel = fileChannel
    LogAgent.sinks.HDFS.type = hdfs
    LogAgent.sinks.HDFS.hdfs.path = hdfs://master:9000/data/logs/%Y-%m-%d/%H
    LogAgent.sinks.HDFS.hdfs.fileType = DataStream
    LogAgent.sinks.HDFS.hdfs.writeFormat = TEXT
    LogAgent.sinks.HDFS.hdfs.filePrefix = flumeHdfs
    LogAgent.sinks.HDFS.hdfs.batchSize = 1000
    LogAgent.sinks.HDFS.hdfs.rollSize = 10240
    LogAgent.sinks.HDFS.hdfs.rollCount = 0
    LogAgent.sinks.HDFS.hdfs.rollInterval = 1
    LogAgent.sinks.HDFS.hdfs.useLocalTimeStamp = true

    #channels config
    #note: despite its name, fileChannel is configured as a memory channel
    LogAgent.channels.fileChannel.type = memory
    LogAgent.channels.fileChannel.capacity = 10000
    LogAgent.channels.fileChannel.transactionCapacity = 100
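In the configuration above, the source and the sink are each bound to a channel by name, and a typo in one of those names is easy to miss. The following is a minimal sketch of a pre-flight check, not part of Flume itself: `check_channels` is a hypothetical helper that only looks at the `<agent>.channels`, `<agent>.sources.<name>.channels` and `<agent>.sinks.<name>.channel` keys and assumes one `key = value` pair per line.

```shell
# check_channels FILE AGENT
# Hypothetical helper (not a Flume command): reports sources or sinks that are
# bound to a channel name missing from the "<AGENT>.channels" list.
check_channels() {
  awk -F'=' -v agent="$2" '
    /^[ \t]*(#|$)/ { next }                       # skip comments and blank lines
    {
      key = $1; val = $2
      gsub(/[ \t]/, "", key)                      # keys contain no whitespace
      gsub(/^[ \t]+|[ \t]+$/, "", val)            # trim the value
      if (key == agent ".channels") {
        n = split(val, names, /[ \t]+/)
        for (i = 1; i <= n; i++) declared[names[i]] = 1
      } else if (key ~ ("^" agent "\\.sources\\.[^.]+\\.channels$") ||
                 key ~ ("^" agent "\\.sinks\\.[^.]+\\.channel$")) {
        n = split(val, names, /[ \t]+/)
        for (i = 1; i <= n; i++) used[names[i]] = key
      }
    }
    END {
      for (c in used) if (!(c in declared)) {
        printf "%s refers to undeclared channel %s\n", used[c], c
        bad = 1
      }
      exit bad + 0                                # non-zero when a binding is broken
    }' "$1"
}
```

It could be called as `check_channels conf/hdfs.properties LogAgent` before starting the agent; a silent return with exit status 0 means every binding points at a declared channel.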

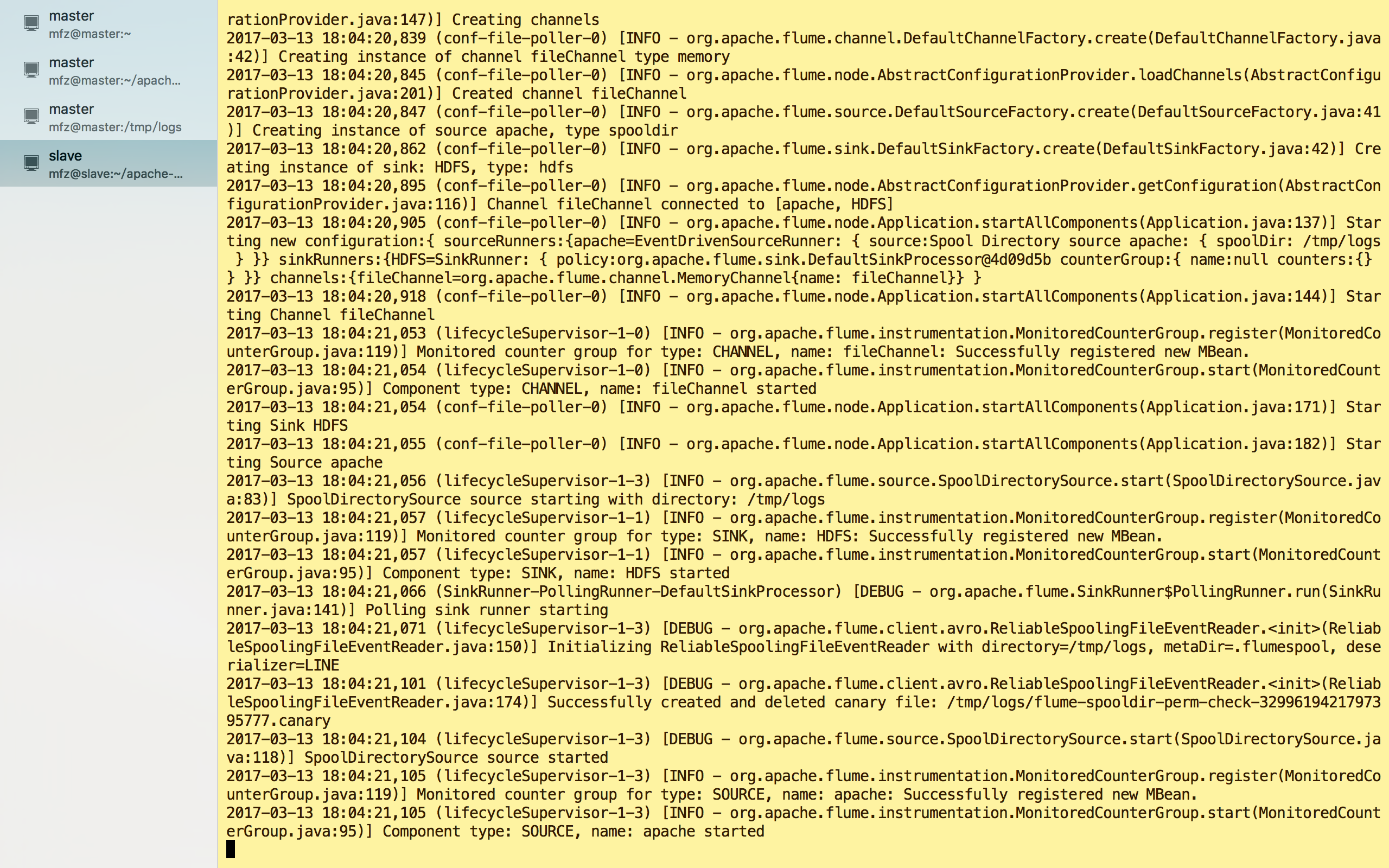

3. Startup:

1. From the apache-flume directory, run

bin/flume-ng agent --conf-file conf/hdfs.properties -c conf/ --name LogAgent -Dflume.root.logger=DEBUG,console

Startup fails with an error. Press Ctrl+C to exit, then create the monitored directory /tmp/logs, which does not exist yet:

mkdir -p /tmp/logs
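To avoid hitting this error on the first start, the monitored directory can be created from the configuration file before launching the agent. The sketch below is one way to do that; `ensure_spool_dirs` is a hypothetical helper name, and it assumes each `spoolDir` entry sits on its own uncommented line in the properties file.

```shell
# ensure_spool_dirs FILE
# Hypothetical helper: creates every directory named by a "*.spoolDir" key
# in the given Flume properties file if it does not exist yet.
ensure_spool_dirs() {
  # Print only the value part of lines such as:
  #   LogAgent.sources.apache.spoolDir = /tmp/logs
  sed -n 's/^[[:space:]]*[^#=]*\.spoolDir[[:space:]]*=[[:space:]]*//p' "$1" |
  while read -r dir; do
    [ -n "$dir" ] || continue
    [ -d "$dir" ] || { mkdir -p "$dir" && echo "created $dir"; }
  done
}
```

Running `ensure_spool_dirs conf/hdfs.properties` before the `bin/flume-ng agent ...` command would then have the same effect as the manual `mkdir -p /tmp/logs`.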

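Start the agent again with the same command:

Startup succeeds!

4. Verification:

a. Open another terminal;

b. Create a file named test.log in the monitored directory /tmp/logs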

    vi test.log
    # content:
    test hello world
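Editing the file directly inside /tmp/logs works for a quick test, but the Flume documentation asks that files not be modified once they are in the spooling directory. A more cautious approach, sketched below, is to finish writing the file elsewhere and then move it in with a single rename. `spool_file` is a hypothetical helper, and the sketch assumes that a sibling directory of the spool directory lives on the same filesystem, so that `mv` is a rename rather than a copy.

```shell
# spool_file SRC SPOOL_DIR
# Hypothetical helper: copies SRC into a staging directory next to SPOOL_DIR,
# then moves the finished copy into SPOOL_DIR in one step.
spool_file() {
  src=$1
  spool_dir=${2%/}                                   # drop a trailing slash
  name=$(basename "$src")
  # Staging directory beside the spool directory (assumed same filesystem).
  staging=$(mktemp -d "$spool_dir.staging.XXXXXX") || return 1
  cp "$src" "$staging/$name" &&
    mv "$staging/$name" "$spool_dir/$name"
  status=$?
  rm -rf "$staging"                                  # clean up in every case
  return "$status"
}
```

For example, `spool_file ~/test.log /tmp/logs` would deliver a complete test.log to the agent. File names should be unique over time, since the spooling source is documented to stop processing when a name is reused.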

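c. After saving the file, check the output in the first terminal:

The screenshot shows the following:

1. test.log has been parsed and transferred, and has been renamed to test.log.COMPLETED;

2. A file was created in HDFS at the path hdfs://master:9000/data/logs/2017-03-13/18/flumeHdfs.1489399757638.tmp

3. The file flumeHdfs.1489399757638.tmp was then renamed to flumeHdfs.1489399757638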
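The rename to .COMPLETED described in the first point can also be checked from a script instead of reading the agent's console output. The following is a small polling sketch; `wait_completed` is a hypothetical helper, and it assumes the source keeps the default `.COMPLETED` suffix used in this article.

```shell
# wait_completed FILE [TIMEOUT_SECONDS]
# Hypothetical helper: waits until FILE.COMPLETED exists, checking once per
# second, and returns non-zero if it has not appeared when the timeout expires.
wait_completed() {
  file=$1
  timeout=${2:-30}
  elapsed=0
  while [ "$elapsed" -lt "$timeout" ]; do
    [ -e "$file.COMPLETED" ] && return 0
    sleep 1
    elapsed=$((elapsed + 1))
  done
  [ -e "$file.COMPLETED" ]                 # final check after the last sleep
}
```

A call such as `wait_completed /tmp/logs/test.log 30 && echo "ingested"` would report success once the spooling source has finished with the file.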

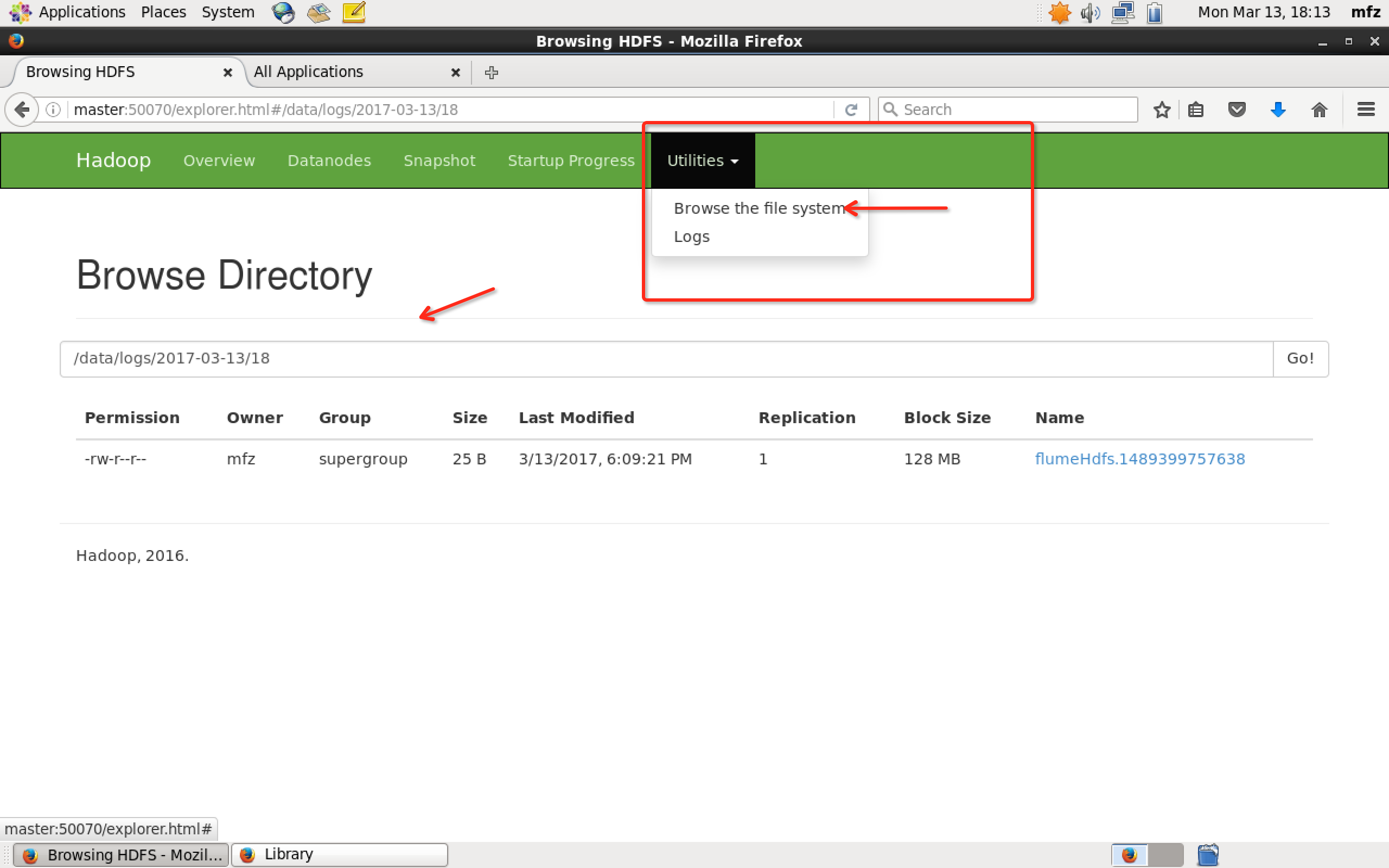

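Next, log in to the master host and open the Web UI, as shown below

Alternatively, open a terminal on master and run the following command from the Hadoop installation directory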

bin/hadoop fs -ls -R /data/logs/2017-03-13/18


To view the file contents, run:

bin/hadoop fs -cat /data/logs/2017-03-13/18/flumeHdfs.1489399757638
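The date and hour in the paths above come from the `%Y-%m-%d/%H` escapes in `hdfs.path`, so they change with every run. As a convenience, the directory for the current hour can be computed with `date`, which uses the same format letters. This is a sketch under the assumption that the shell runs with the same clock and time zone as the Flume agent (the sink is configured with `useLocalTimeStamp = true`); `current_log_dir` is a hypothetical helper name.

```shell
# current_log_dir
# Hypothetical helper: prints the HDFS directory that the sink configured in
# this article would write to during the current hour.
current_log_dir() {
  date "+/data/logs/%Y-%m-%d/%H"
}
```

It can be combined with the listing command, for example `bin/hadoop fs -ls -R "$(current_log_dir)"`.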

OK, done!

Zhejiang Public Network Security Filing No. 33010602011771
