Installing a Kubernetes 1.29 High-Availability Cluster from Binaries (4): Master Node Configuration

1.1 On k8s-master01, extract the Kubernetes binaries to /usr/local/bin

tar -zxf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

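Note: --strip-components=3 drops the first three directory levels stored in the archive (kubernetes/server/bin/), so the selected files are extracted directly into the target directory.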
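To see what the brace expansion and --strip-components=3 in the command above actually do, here is a minimal sketch that builds a tiny archive with the same kubernetes/server/bin/ layout in a temporary directory. The files in it are fake placeholders, not real Kubernetes binaries, and only three of the six names are used.

```shell
#!/usr/bin/env bash
# Sketch: rebuild a tiny archive with the same kubernetes/server/bin/ layout
# (fake placeholder files, not real binaries) and extract it like step 1.1.
set -euo pipefail

work=$(mktemp -d)
mkdir -p "$work/src/kubernetes/server/bin" "$work/dest"

# Brace expansion: kube{let,ctl,-apiserver} -> kubelet kubectl kube-apiserver
for b in kube{let,ctl,-apiserver}; do
  printf '#!/bin/sh\necho %s\n' "$b" > "$work/src/kubernetes/server/bin/$b"
done
tar -czf "$work/demo.tar.gz" -C "$work/src" kubernetes

# --strip-components=3 removes "kubernetes/", "server/" and "bin/" from each member path
tar -zxf "$work/demo.tar.gz" --strip-components=3 -C "$work/dest" \
  kubernetes/server/bin/kube{let,ctl,-apiserver}

# The three files now sit directly in dest/, with no kubernetes/ subdirectory
ls "$work/dest"
```

Without --strip-components the same command would recreate kubernetes/server/bin/ under the target directory.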

# List the extracted Kubernetes binaries

# ls /usr/local/bin/kube*

/usr/local/bin/kube-apiserver /usr/local/bin/kubectl /usr/local/bin/kube-proxy

/usr/local/bin/kube-controller-manager /usr/local/bin/kubelet /usr/local/bin/kube-scheduler

# Check the version

# kubelet --version

Kubernetes v1.29.6

1.2 Copy the Kubernetes binaries to k8s-master02, k8s-node01 and k8s-node02

scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} root@k8s-master-etcd02:/usr/local/bin/

scp /usr/local/bin/kube{let,-proxy,-scheduler} root@k8s-node01:/usr/local/bin/

scp /usr/local/bin/kube{let,-proxy,-scheduler} root@k8s-node02:/usr/local/bin/

1.3 On k8s-master01/02, create the directories for Kubernetes certificates and configuration

mkdir -p /etc/kubernetes/{yaml,pki,manifests,cert,conf} /var/lib/kubelet /var/log/kubernetes

ssh root@k8s-master-etcd02 "mkdir -p /etc/kubernetes/{yaml,pki,manifests,cert,conf} /var/lib/kubelet /var/log/kubernetes"

1.4 On every master node, copy the CA certificate and CA request files from the etcd working directory into the Kubernetes working directory

cp /etc/etcd/cert/ca*.json /etc/kubernetes/cert/ && cp /etc/etcd/pki/ca* /etc/kubernetes/pki/

# ls /etc/kubernetes/pki/

ca.csr ca-key.pem ca.pem

# ls /etc/kubernetes/cert/

ca-config.json ca-csr.json

2. Deploy the apiserver

2.1.1 On k8s-master01, create the apiserver certificate signing request (CSR) file

cat > /etc/kubernetes/cert/kube-apiserver-csr.json << EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.83.210",

"192.168.83.211",

"192.168.83.212",

"192.168.83.213",

"192.168.83.214",

"192.168.83.220",

"192.168.83.221",

"192.168.83.222",

"192.168.83.223",

"192.168.83.224",

"192.168.83.200",

"10.66.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-CNPC"

}

]

}

EOF
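Besides the node IPs and the VIP, the hosts list above contains 10.66.0.1, the first address of the service CIDR (--service-cluster-ip-range=10.66.0.0/16 in the apiserver unit). That address becomes the ClusterIP of the default kubernetes Service, which in-cluster clients use to reach the apiserver, so it has to be a SAN in this certificate. A minimal sketch that derives it from the CIDR instead of hard-coding it; the helper names are hypothetical:

```shell
#!/usr/bin/env bash
# Sketch: derive the first address of the service CIDR, which becomes the
# ClusterIP of the default "kubernetes" Service and must be in the cert SANs.

ip2int() {  # dotted quad -> 32-bit integer
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

int2ip() {  # 32-bit integer -> dotted quad
  local n=$1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

first_service_ip() {  # network address of the CIDR plus one
  local net=${1%/*} bits=${1#*/}
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  int2ip $(( ($(ip2int "$net") & mask) + 1 ))
}

first_service_ip 10.66.0.0/16   # prints 10.66.0.1, matching the CSR hosts entry
```

If the service CIDR is ever changed, the CSR hosts list and the certificate have to be regenerated to match.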

Note: 192.168.83.222-224 and 192.168.83.213/214 are reserved IPs, and 192.168.83.200 is the haproxy load balancer VIP.

2.1.2 Generate the apiserver certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes \

/etc/kubernetes/cert/kube-apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/kube-apiserver

2.1.3 Generate the apiserver token file
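The heredoc that follows writes a single line in the static token file format token,user,uid,"group". The token is 16 random bytes printed as 32 lowercase hex characters. A minimal sketch that generates a token the same way, writes it to a temporary file (a stand-in, not /etc/kubernetes/cert/token.csv) and reads it back with the awk expression used later in step 6.1:

```shell
#!/usr/bin/env bash
# Sketch: generate a bootstrap token like step 2.1.3 and read it back like step 6.1.
set -euo pipefail

gen_token() {
  # 16 random bytes -> four 8-digit hex words from od -> 32 hex chars after removing spaces/newline
  head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

csv=$(mktemp)   # temporary stand-in for /etc/kubernetes/cert/token.csv
printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$(gen_token)" > "$csv"

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' "$csv")
if [[ $BOOTSTRAP_TOKEN =~ ^[0-9a-f]{32}$ ]]; then
  echo "token OK (${#BOOTSTRAP_TOKEN} hex chars)"
else
  echo "unexpected token format: $BOOTSTRAP_TOKEN" >&2
fi
```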

cat > /etc/kubernetes/cert/token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

2.2.1 On k8s-master01, create the CSR file for the apiserver aggregation-layer (proxy client) certificate

cat > /etc/kubernetes/cert/metrics-server-csr.json << EOF

{

"CN": "metrics-server",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-CNPC"

}

]

}

EOF

2.2.2 Generate the aggregation-layer certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes /etc/kubernetes/cert/metrics-server-csr.json | cfssljson -bare /etc/kubernetes/pki/metrics-server

2.3.1 On k8s-master01, create the apiserver systemd unit file

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \\

--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \\

--anonymous-auth=false \\

--bind-address=0.0.0.0 \\

--advertise-address=192.168.83.210 \\

--secure-port=6443 \\

--authorization-mode=Node,RBAC \\

--runtime-config=api/all=true \\

--enable-bootstrap-token-auth \\

--service-cluster-ip-range=10.66.0.0/16 \\

--token-auth-file=/etc/kubernetes/cert/token.csv \\

--service-node-port-range=30000-32767 \\

--tls-cert-file=/etc/kubernetes/pki/kube-apiserver.pem \\

--tls-private-key-file=/etc/kubernetes/pki/kube-apiserver-key.pem \\

--client-ca-file=/etc/kubernetes/pki/ca.pem \\

--kubelet-client-certificate=/etc/kubernetes/pki/kube-apiserver.pem \\

--kubelet-client-key=/etc/kubernetes/pki/kube-apiserver-key.pem \\

--service-account-key-file=/etc/kubernetes/pki/ca-key.pem \\

--service-account-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\

--service-account-issuer=api \\

--etcd-cafile=/etc/etcd/pki/ca.pem \\

--etcd-certfile=/etc/etcd/pki/etcd.pem \\

--etcd-keyfile=/etc/etcd/pki/etcd-key.pem \\

--etcd-servers=https://192.168.83.210:2379,https://192.168.83.211:2379,https://192.168.83.212:2379 \\

--allow-privileged=true \\

--enable-aggregator-routing=true \\

--requestheader-client-ca-file=/etc/kubernetes/pki/ca.pem \\

--requestheader-allowed-names=metrics-server \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-username-headers=X-Remote-User \\

--proxy-client-cert-file=/etc/kubernetes/pki/metrics-server.pem \\

--proxy-client-key-file=/etc/kubernetes/pki/metrics-server-key.pem \\

--apiserver-count=3 \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/var/log/kubernetes/kube-apiserver-audit.log \\

--event-ttl=1h \\

--v=4

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

2.3.2 On k8s-master02, create the apiserver systemd unit file

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \\

--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \\

--anonymous-auth=false \\

--bind-address=0.0.0.0 \\

--advertise-address=192.168.83.211 \\

--secure-port=6443 \\

--authorization-mode=Node,RBAC \\

--runtime-config=api/all=true \\

--enable-bootstrap-token-auth \\

--service-cluster-ip-range=10.66.0.0/16 \\

--token-auth-file=/etc/kubernetes/cert/token.csv \\

--service-node-port-range=30000-32767 \\

--tls-cert-file=/etc/kubernetes/pki/kube-apiserver.pem \\

--tls-private-key-file=/etc/kubernetes/pki/kube-apiserver-key.pem \\

--client-ca-file=/etc/kubernetes/pki/ca.pem \\

--kubelet-client-certificate=/etc/kubernetes/pki/kube-apiserver.pem \\

--kubelet-client-key=/etc/kubernetes/pki/kube-apiserver-key.pem \\

--service-account-key-file=/etc/kubernetes/pki/ca-key.pem \\

--service-account-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\

--service-account-issuer=api \\

--etcd-cafile=/etc/etcd/pki/ca.pem \\

--etcd-certfile=/etc/etcd/pki/etcd.pem \\

--etcd-keyfile=/etc/etcd/pki/etcd-key.pem \\

--etcd-servers=https://192.168.83.210:2379,https://192.168.83.211:2379,https://192.168.83.212:2379 \\

--allow-privileged=true \\

--enable-aggregator-routing=true \\

--requestheader-client-ca-file=/etc/kubernetes/pki/ca.pem \\

--requestheader-allowed-names=metrics-server \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-username-headers=X-Remote-User \\

--proxy-client-cert-file=/etc/kubernetes/pki/metrics-server.pem \\

--proxy-client-key-file=/etc/kubernetes/pki/metrics-server-key.pem \\

--apiserver-count=3 \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/var/log/kubernetes/kube-apiserver-audit.log \\

--event-ttl=1h \\

--v=2

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

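EOF

Note: set --advertise-address to the IP address of the master node the unit runs on (192.168.83.210 on k8s-master01, 192.168.83.211 on k8s-master02).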
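The master02 unit repeats the master01 unit with a different --advertise-address (it also logs at --v=2 instead of --v=4). A minimal sketch of a hypothetical helper, render_unit, that derives another master's unit from an existing one by rewriting only that flag with sed; the demo runs on a shortened temporary file, not on the real unit:

```shell
#!/usr/bin/env bash
# Sketch: produce a per-master kube-apiserver unit by swapping --advertise-address.
set -euo pipefail

render_unit() {  # render_unit <source-unit> <advertise-ip>
  sed -E "s/(--advertise-address=)[0-9.]+/\1$2/" "$1"
}

# Demo on a temporary stand-in for /usr/lib/systemd/system/kube-apiserver.service
src=$(mktemp)
cat > "$src" << 'EOF'
ExecStart=/usr/local/bin/kube-apiserver \
--bind-address=0.0.0.0 \
--advertise-address=192.168.83.210 \
--secure-port=6443
EOF

render_unit "$src" 192.168.83.211 > "$src.master02"
grep -- '--advertise-address' "$src.master02"   # --advertise-address=192.168.83.211 \
```

For example, on k8s-master01 the rendered unit could be streamed straight to the other master with render_unit /usr/lib/systemd/system/kube-apiserver.service 192.168.83.211 | ssh root@k8s-master-etcd02 'cat > /usr/lib/systemd/system/kube-apiserver.service'.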

2.4.1 Copy the apiserver certificate, CSR file and token file from k8s-master01 to k8s-master02

scp /etc/kubernetes/cert/kube-apiserver-csr.json root@k8s-master-etcd02:/etc/kubernetes/cert/

scp /etc/kubernetes/cert/token.csv root@k8s-master-etcd02:/etc/kubernetes/cert/

scp /etc/kubernetes/pki/kube-apiserver* root@k8s-master-etcd02:/etc/kubernetes/pki/

2.4.2 Copy the aggregation-layer certificate and CSR file from k8s-master01 to k8s-master02

scp /etc/kubernetes/cert/metrics-server-csr.json root@k8s-master-etcd02:/etc/kubernetes/cert/

scp /etc/kubernetes/pki/metrics-server* root@k8s-master-etcd02:/etc/kubernetes/pki/

2.5.1 Start the apiserver service

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl start kube-apiserver

systemctl status kube-apiserver

2.5.2 Test the apiserver service
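With --anonymous-auth=false, an unauthenticated request is rejected with HTTP 401, so a 401 from each endpoint tested below means the apiserver (or the haproxy VIP in front of it) is reachable and enforcing authentication. A minimal sketch, with hypothetical helper names, that reports only the status code per endpoint:

```shell
#!/usr/bin/env bash
# Sketch: probe an apiserver endpoint and interpret the HTTP status code.

classify() {  # map an HTTP status code to a short verdict
  case "$1" in
    401) echo "up (unauthenticated request rejected, as expected)" ;;
    000) echo "unreachable (no HTTP response)" ;;
    *)   echo "unexpected status $1" ;;
  esac
}

probe() {  # probe <ip>; -k skips server-certificate verification, like --insecure
  local code
  code=$(curl -k -s -o /dev/null -w '%{http_code}' --connect-timeout 3 "https://$1:6443/") || true
  echo "$1: $(classify "${code:-000}")"
}

# Usage on a master node:
# for ip in 192.168.83.210 192.168.83.211 192.168.83.200; do probe "$ip"; done
```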

curl --insecure https://192.168.83.210:6443/

curl --insecure https://192.168.83.211:6443/

curl --insecure https://192.168.83.200:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

3. Deploy kubectl

3.1 On k8s-master01, create the kubectl (admin) CSR file

cat > /etc/kubernetes/cert/admin-csr.json << EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "Kubernetes-CNPC"

}

]

}

EOF

3.2 Generate the certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes \

/etc/kubernetes/cert/admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

3.3.1 Generate the kube.config file

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.83.200:6443 \

--kubeconfig=/etc/kubernetes/conf/kube.config

kubectl config set-credentials admin \

--client-certificate=/etc/kubernetes/pki/admin.pem \

--client-key=/etc/kubernetes/pki/admin-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/conf/kube.config

kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=admin \

--kubeconfig=/etc/kubernetes/conf/kube.config

kubectl config use-context kubernetes --kubeconfig=/etc/kubernetes/conf/kube.config

3.3.2 Verify the kube.config file

# cat /etc/kubernetes/conf/kube.config

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURwakNDQW82Z0F3SUJBZ0lV...
    server: https://192.168.83.200:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: admin
  name: kubernetes
current-context: kubernetes
kind: Config
preferences: {}
users:
- name: admin
  user:
    client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQ3VENDQXRXZ0F3SUJBZ0lV...
    client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFb2dJQkFBS0NBUUVB...

(The base64-encoded certificate and key data are truncated here.)

3.4 Prepare the kubectl config file and create the role binding

mkdir ~/.kube

cp /etc/kubernetes/conf/kube.config ~/.kube/config

kubectl create clusterrolebinding kube-apiserver:kubelet-apis \

--clusterrole=system:kubelet-api-admin \

--user kubernetes \

--kubeconfig=/root/.kube/config

3.5 Check the Kubernetes cluster status

View cluster information

# kubectl cluster-info

Kubernetes control plane is running at https://192.168.83.200:6443

View component status

# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy ok
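ComponentStatus is deprecated, so treat this as a convenience check. To script it, a minimal sketch with a hypothetical helper that prints every component whose STATUS column is not Healthy and exits non-zero if there is one. It assumes the column layout shown above; the deprecation warning goes to stderr and is discarded in the usage line.

```shell
#!/usr/bin/env bash
# Sketch: flag any component whose STATUS column is not "Healthy".
# Reads "kubectl get cs" style output on stdin; exit status 1 if any are unhealthy.

unhealthy_components() {
  awk 'NR > 1 && $2 != "Healthy" { print $1; bad = 1 } END { exit bad }'
}

# Usage: kubectl get cs 2>/dev/null | unhealthy_components && echo "all healthy"
```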

View resource objects in all namespaces

# kubectl get all --all-namespaces

NAMESPACE   NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE

default     service/kubernetes   ClusterIP   10.66.0.1    <none>        443/TCP   53m

3.6 Copy the kubectl config files to k8s-master02

ssh root@k8s-master-etcd02 "mkdir /root/.kube"

scp /etc/kubernetes/conf/kube.config root@k8s-master-etcd02:/etc/kubernetes/conf/

scp /root/.kube/config root@k8s-master-etcd02:/root/.kube/

4. Deploy kube-controller-manager

4.1 Create the kube-controller-manager CSR file
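When a client certificate is presented to the apiserver, its CN is used as the user name and its O as the group. That is why this CSR uses system:kube-controller-manager for both (the built-in RBAC binding targets that user), just as admin uses O=system:masters. The CSR files in this guide otherwise share the same key and names blocks and differ mainly in CN, O and hosts. A minimal sketch of a hypothetical helper, make_csr, that prints a CSR JSON in that layout:

```shell
#!/usr/bin/env bash
# Sketch: hypothetical helper that prints a cfssl CSR JSON in the layout used here.
# make_csr <CN> <O> [host ...]
make_csr() {
  local cn=$1 org=$2; shift 2
  local hosts="" h
  for h in "$@"; do
    hosts+="${hosts:+, }\"$h\""   # comma-separate all but the first entry
  done
  cat << EOF
{
  "CN": "$cn",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Beijing", "L": "Beijing", "O": "$org", "OU": "Kubernetes-CNPC" }
  ]
}
EOF
}

# Usage (same content as the file written below):
# make_csr system:kube-controller-manager system:kube-controller-manager \
#   127.0.0.1 192.168.83.210 192.168.83.211 > /etc/kubernetes/cert/kube-controller-manager-csr.json
```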

cat > /etc/kubernetes/cert/kube-controller-manager-csr.json << EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.83.210",

"192.168.83.211"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "Kubernetes-CNPC"

}

]

}

EOF

4.2 Generate the kube-controller-manager certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes \

/etc/kubernetes/cert/kube-controller-manager-csr.json | cfssljson -bare /etc/kubernetes/pki/kube-controller-manager

4.3 Create the kube-controller-manager.kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.83.200:6443 \

--kubeconfig=/etc/kubernetes/conf/kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/etc/kubernetes/pki/kube-controller-manager.pem \

--client-key=/etc/kubernetes/pki/kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/conf/kube-controller-manager.kubeconfig

kubectl config set-context system:kube-controller-manager \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/etc/kubernetes/conf/kube-controller-manager.kubeconfig

kubectl config use-context system:kube-controller-manager --kubeconfig=/etc/kubernetes/conf/kube-controller-manager.kubeconfig

4.4 Create the kube-controller-manager systemd unit file
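The unit that follows uses --service-cluster-ip-range=10.66.0.0/16 for Services and --cluster-cidr=172.31.0.0/16 for Pod IPs. These ranges should not overlap each other or the node network; 192.168.83.0/24 is assumed below as the node network, based on the host addresses used throughout. A minimal sketch of an IPv4 overlap check, with hypothetical helper names:

```shell
#!/usr/bin/env bash
# Sketch: report whether two IPv4 CIDRs overlap.

ip2int() {  # dotted quad -> 32-bit integer
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

cidr_range() {  # prints "<first> <last>" address of the CIDR as integers
  local net=${1%/*} bits=${1#*/}
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  local first=$(( $(ip2int "$net") & mask ))
  echo "$first $(( first | (~mask & 0xFFFFFFFF) ))"
}

cidrs_overlap() {  # exit status 0 if the two CIDRs share any address
  local a1 a2 b1 b2
  read -r a1 a2 <<< "$(cidr_range "$1")"
  read -r b1 b2 <<< "$(cidr_range "$2")"
  (( a1 <= b2 && b1 <= a2 ))
}

for pair in "10.66.0.0/16 172.31.0.0/16" "10.66.0.0/16 192.168.83.0/24" "172.31.0.0/16 192.168.83.0/24"; do
  if cidrs_overlap $pair; then echo "OVERLAP: $pair"; else echo "ok: $pair"; fi
done
```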

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \\

--secure-port=10257 \\

--bind-address=0.0.0.0 \\

--kubeconfig=/etc/kubernetes/conf/kube-controller-manager.kubeconfig \\

--service-cluster-ip-range=10.66.0.0/16 \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \\

--cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \\

--allocate-node-cidrs=true \\

--cluster-cidr=172.31.0.0/16 \\

--root-ca-file=/etc/kubernetes/pki/ca.pem \\

--service-account-private-key-file=/etc/kubernetes/pki/ca-key.pem \\

--leader-elect=true \\

--feature-gates=RotateKubeletServerCertificate=true \\

--controllers=*,bootstrapsigner,tokencleaner \\

--tls-cert-file=/etc/kubernetes/pki/kube-controller-manager.pem \\

--tls-private-key-file=/etc/kubernetes/pki/kube-controller-manager-key.pem \\

--use-service-account-credentials=true \\

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

4.5 Copy the kube-controller-manager request, certificate and related files to k8s-master02

scp /etc/kubernetes/pki/kube-controller-manager* root@k8s-master-etcd02:/etc/kubernetes/pki/

scp /etc/kubernetes/conf/kube-controller-manager.kubeconfig root@k8s-master-etcd02:/etc/kubernetes/conf/

scp /usr/lib/systemd/system/kube-controller-manager.service root@k8s-master-etcd02:/usr/lib/systemd/system/

4.6 Start the kube-controller-manager service

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl start kube-controller-manager

systemctl status kube-controller-manager

4.7 Check the Kubernetes cluster status

# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

etcd-0 Healthy ok

scheduler Healthy ok

controller-manager Healthy ok

5. Deploy kube-scheduler

5.1 Create the kube-scheduler CSR file

cat > /etc/kubernetes/cert/kube-scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.83.210",

"192.168.83.211"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "Kubernetes-CNPC"

}

]

}

EOF

5.2 Generate the kube-scheduler certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes \

/etc/kubernetes/cert/kube-scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/kube-scheduler

5.3 Create the kube-scheduler kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.83.200:6443 \

--kubeconfig=/etc/kubernetes/conf/kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=/etc/kubernetes/pki/kube-scheduler.pem \

--client-key=/etc/kubernetes/pki/kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/conf/kube-scheduler.kubeconfig

kubectl config set-context system:kube-scheduler \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/etc/kubernetes/conf/kube-scheduler.kubeconfig

kubectl config use-context system:kube-scheduler --kubeconfig=/etc/kubernetes/conf/kube-scheduler.kubeconfig

5.4 Create the kube-scheduler systemd unit file

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

ExecStart=/usr/local/bin/kube-scheduler \\

--kubeconfig=/etc/kubernetes/conf/kube-scheduler.kubeconfig \\

--leader-elect=true \\

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

5.6 Copy the kube-scheduler request, certificate and related files to k8s-master02

scp /etc/kubernetes/pki/kube-scheduler* root@k8s-master-etcd02:/etc/kubernetes/pki/

scp /etc/kubernetes/conf/kube-scheduler.kubeconfig root@k8s-master-etcd02:/etc/kubernetes/conf/

scp /usr/lib/systemd/system/kube-scheduler.service root@k8s-master-etcd02:/usr/lib/systemd/system/

5.7 Start the kube-scheduler service

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl start kube-scheduler

systemctl status kube-scheduler

5.8 Check the Kubernetes cluster status

# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

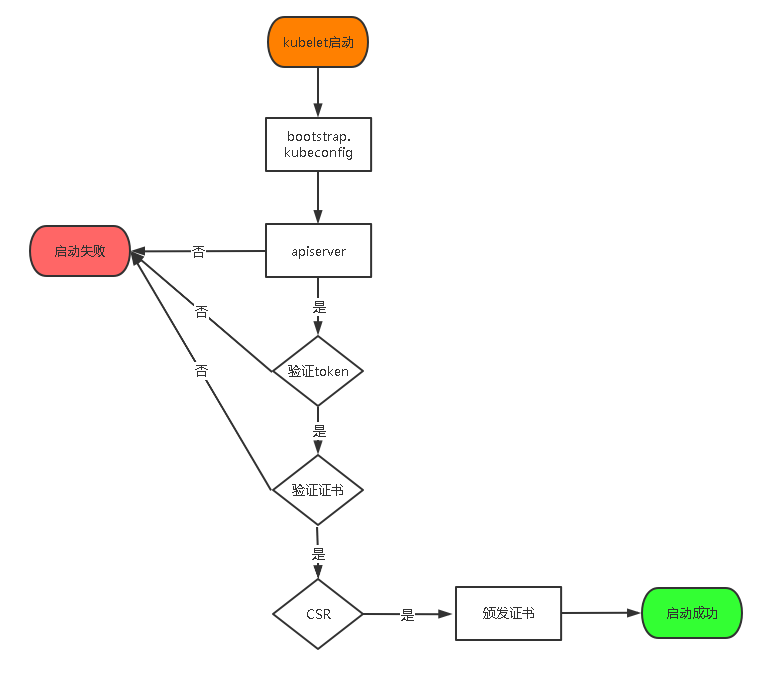

etcd-0 Healthy ok

6. On the master nodes, create the TLS bootstrapping token that lets kubelets obtain their certificates automatically

6.1 On k8s-master01, create kubelet-bootstrap.kubeconfig

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/cert/token.csv)

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.83.200:6443 \

--kubeconfig=/etc/kubernetes/conf/kubelet-bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=/etc/kubernetes/conf/kubelet-bootstrap.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=/etc/kubernetes/conf/kubelet-bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=/etc/kubernetes/conf/kubelet-bootstrap.kubeconfig

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubelet-bootstrap

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap \

--kubeconfig=/etc/kubernetes/conf/kubelet-bootstrap.kubeconfig

6.2.1 Copy the kubelet-bootstrap.kubeconfig and ca.pem files generated on k8s-master01 to each worker node

scp /etc/kubernetes/pki/ca.pem root@k8s-node01:/etc/kubernetes/pki/

scp /etc/kubernetes/conf/kubelet-bootstrap.kubeconfig root@k8s-node01:/etc/kubernetes/conf/

scp /etc/kubernetes/pki/ca.pem root@k8s-node02:/etc/kubernetes/pki

scp /etc/kubernetes/conf/kubelet-bootstrap.kubeconfig root@k8s-node02:/etc/kubernetes/conf/

6.2.2 Copy the kubelet-bootstrap.kubeconfig generated on k8s-master01 to the other master node(s)

scp /etc/kubernetes/conf/kubelet-bootstrap.kubeconfig root@k8s-master-etcd02:/etc/kubernetes/conf/

6.3 Verify on a master node

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-node01 NotReady <none> 104s v1.29.6

k8s-node02 NotReady <none> 23s v1.29.6
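Both worker nodes are listed but still report NotReady in this output. A minimal sketch with a hypothetical helper that prints the nodes whose STATUS is not exactly Ready, assuming the column layout shown above, so the check can be repeated until nothing is printed:

```shell
#!/usr/bin/env bash
# Sketch: print nodes whose STATUS column is not exactly "Ready"
# (NotReady, and also cordoned nodes shown as "Ready,SchedulingDisabled").
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage: poll until every node reports Ready (Ctrl-C to stop)
# while [ -n "$(kubectl get nodes | not_ready_nodes)" ]; do sleep 10; done
```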

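Note: both worker nodes have joined the cluster. kubelet is not installed on the master nodes, which serve only as management (control-plane) nodes, so kubectl get nodes does not list them.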

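If a node later runs into problems and its certificates have to be reissued, first delete the kubelet certificates in its pki directory; otherwise the node will fail to join the cluster.

7. Deploy kube-proxy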

7.1 On k8s-master01, create the kube-proxy CSR file

cat > /etc/kubernetes/cert/kube-proxy-csr.json << EOF

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-CNPC"

}

]

}

EOF

7.2 Generate the kube-proxy certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=/etc/kubernetes/cert/ca-config.json \

-profile=kubernetes \

/etc/kubernetes/cert/kube-proxy-csr.json | cfssljson -bare /etc/kubernetes/pki/kube-proxy

7.3 Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.83.200:6443 \

--kubeconfig=/etc/kubernetes/conf/kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=/etc/kubernetes/pki/kube-proxy.pem \

--client-key=/etc/kubernetes/pki/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/conf/kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=/etc/kubernetes/conf/kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=/etc/kubernetes/conf/kube-proxy.kubeconfig

7.4 Sync the kube-proxy certificate files to each worker node and the other master node(s)

scp /etc/kubernetes/cert/kube-proxy-csr.json root@k8s-master-etcd02:/etc/kubernetes/cert/

scp /etc/kubernetes/conf/kube-proxy.kubeconfig root@k8s-master-etcd02:/etc/kubernetes/conf/

scp /etc/kubernetes/pki/kube-proxy* root@k8s-master-etcd02:/etc/kubernetes/pki/

for node in k8s-node01 k8s-node02;do scp /etc/kubernetes/conf/kube-proxy.kubeconfig $node:/etc/kubernetes/conf/ ;done

for node in k8s-node01 k8s-node02;do scp /etc/kubernetes/pki/kube-proxy* $node:/etc/kubernetes/pki/ ;done
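Steps 3.3.1, 4.3, 5.3 and 7.3 run the same four kubectl config commands (set-cluster, set-credentials, set-context, use-context) with only the user, context and file names changing. A sketch of a hypothetical wrapper, make_kubeconfig, for these certificate-based cases (6.1 uses a token instead and is not covered). The KUBECTL variable is an assumption added only so the commands can be printed instead of executed by setting KUBECTL=echo.

```shell
#!/usr/bin/env bash
# Sketch: hypothetical wrapper around the four "kubectl config" steps used above.
# make_kubeconfig <user> <context> <cert-basename> <output-file>
KUBECTL=${KUBECTL:-kubectl}             # set KUBECTL=echo for a dry run
PKI=/etc/kubernetes/pki
APISERVER=https://192.168.83.200:6443   # haproxy VIP used throughout this guide

make_kubeconfig() {
  local user=$1 ctx=$2 cert=$3 out=$4
  $KUBECTL config set-cluster kubernetes \
    --certificate-authority="$PKI/ca.pem" --embed-certs=true \
    --server="$APISERVER" --kubeconfig="$out"
  $KUBECTL config set-credentials "$user" \
    --client-certificate="$PKI/$cert.pem" --client-key="$PKI/$cert-key.pem" \
    --embed-certs=true --kubeconfig="$out"
  $KUBECTL config set-context "$ctx" --cluster=kubernetes --user="$user" --kubeconfig="$out"
  $KUBECTL config use-context "$ctx" --kubeconfig="$out"
}

# Usage, reproducing step 7.3:
# make_kubeconfig kube-proxy default kube-proxy /etc/kubernetes/conf/kube-proxy.kubeconfig
```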
