Part 9: Socket Programming, Processes, and Queues

I. UDP sockets

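UDP is connectionless: the sender does not wait for any acknowledgement from the peer. It sends the datagram and is done, keeping no local copy for retransmission. About 512 bytes is the commonly cited safe payload size for a single datagram (classic DNS uses this limit); larger datagrams, up to 65,507 bytes over IPv4, are possible but may be fragmented or dropped.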
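The connectionless behaviour can be seen in a minimal single-process sketch. The helper name `udp_echo_once` and the use of a background thread are assumptions made only so the example is self-contained; the server never calls listen() or accept(), and the client never calls connect().

```python
import socket
import threading

def udp_echo_once(message: bytes) -> bytes:
    """Send one datagram to a local echo server and return the reply."""
    server = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    server.bind(("127.0.0.1", 0))          # port 0: let the OS pick a free port
    server_addr = server.getsockname()

    def serve_one():
        # No listen()/accept(): the server simply waits for a datagram.
        data, client_addr = server.recvfrom(1024)
        server.sendto(data.upper(), client_addr)

    worker = threading.Thread(target=serve_one)
    worker.start()

    client = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    client.settimeout(5)                   # do not hang forever if a datagram is lost
    # No connect(): the destination is passed with every sendto() call.
    client.sendto(message, server_addr)
    reply, _ = client.recvfrom(1024)

    worker.join()
    client.close()
    server.close()
    return reply

if __name__ == "__main__":
    print(udp_echo_once(b"hello"))
```

Each recvfrom() call returns one whole datagram together with the sender's address, which is why the server can reply without having kept any per-client state.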

UDP communication example:

1.1. UDP server

    from socket import *

    # create a UDP socket
    udp_server = socket(AF_INET, SOCK_DGRAM)
    # bind the address and port
    udp_server.bind(('127.0.0.1', 9090))

    while True:
        # receive a message
        data, client_addr = udp_server.recvfrom(1024)
        print(data)
        # send a reply (the client's message in upper case)
        udp_server.sendto(data.upper(), client_addr)

1.2. UDP client

    from socket import *

    # create a UDP socket
    udp_client = socket(AF_INET, SOCK_DGRAM)

    while True:
        msg = input('>> ').strip()
        # send the message
        udp_client.sendto(msg.encode('utf-8'), ('127.0.0.1', 9090))
        # receive the reply
        data, server_addr = udp_client.recvfrom(1024)
        print(data.decode('utf-8'))

Run the server:

....

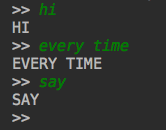

Run the client:

Server output:

2. Concurrent UDP communication

2.1. Server

    import socketserver

    class CklupdHandler(socketserver.BaseRequestHandler):
        def handle(self):
            print(self.request)

    if __name__ == '__main__':
        server = socketserver.ThreadingUDPServer(('127.0.0.1', 9090), CklupdHandler)
        server.serve_forever()

2.2. Client:

    from socket import *

    # create a UDP socket
    udp_client = socket(AF_INET, SOCK_DGRAM)

    while True:
        msg = input('>> ').strip()
        # send the message
        udp_client.sendto(msg.encode('utf-8'), ('127.0.0.1', 9090))
        # receive the reply
        data, server_addr = udp_client.recvfrom(1024)
        print(data.decode('utf-8'))

Run the server:

....

Run the client:

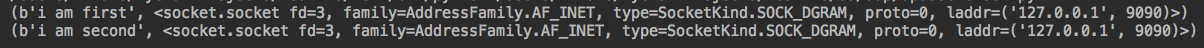

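Server:

For a UDP server, self.request is a tuple: the first element is the message content and the second is the socket used for communication.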
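The shape of that tuple can be checked with a short single-process sketch. The names `InspectHandler`, `seen` and `ask_server` are hypothetical, and the server is bound to an OS-chosen port (port 0) purely for illustration.

```python
import socket
import socketserver
import threading

seen = []  # what the handler observed, collected for inspection

class InspectHandler(socketserver.BaseRequestHandler):
    def handle(self):
        data, sock = self.request      # (payload bytes, server socket) for UDP servers
        seen.append((type(data).__name__, type(sock).__name__))
        sock.sendto(data.upper(), self.client_address)

def ask_server(message: bytes) -> bytes:
    """Start a throwaway UDP server, send it one datagram, return the reply."""
    with socketserver.ThreadingUDPServer(("127.0.0.1", 0), InspectHandler) as server:
        threading.Thread(target=server.serve_forever, daemon=True).start()
        client = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
        client.settimeout(5)
        client.sendto(message, server.server_address)
        reply, _ = client.recvfrom(1024)
        client.close()
        server.shutdown()              # stop the serve_forever() loop
    return reply

if __name__ == "__main__":
    print(ask_server(b"abc"), seen)
```

Because the second tuple element is the server's own socket, the handler replies with sendto() and the client address rather than with a per-connection send().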

Modify the server:

    import socketserver

    class CklupdHandler(socketserver.BaseRequestHandler):
        def handle(self):
            print(self.request)
            # self.request is (data, socket): reply in upper case through the socket
            self.request[1].sendto(self.request[0].upper(), self.client_address)

    if __name__ == '__main__':
        server = socketserver.ThreadingUDPServer(('127.0.0.1', 9090), CklupdHandler)
        server.serve_forever()

The client is unchanged:

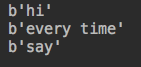

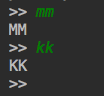

Run client 1:

Run client 2:

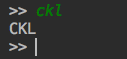

Server:

II. Python processes

Two ways to create a process

Method 1:

    from multiprocessing import Process
    import time

    def work(name):
        print('task %s is practicing' % name)
        time.sleep(3)
        print('task %s has finished' % name)

    if __name__ == '__main__':
        p1 = Process(target=work, args=('ckl',))
        p2 = Process(target=work, args=('zld',))
        p1.start()
        p2.start()
        print("---- main -----")

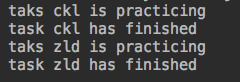

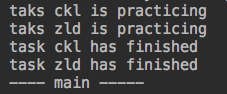

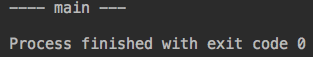

Run result:

# The main process does not wait for the child processes to finish.

Method 2:

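    from multiprocessing import Process
    import time

    # define a custom process class that inherits from Process
    class CklProcess(Process):
        def __init__(self, name):
            super().__init__()  # call the parent initializer first
            self.name = name

        # run() is required: it holds the code the child process executes
        def run(self):
            print('task %s is practicing' % self.name)
            time.sleep(3)
            print('task %s has finished' % self.name)

    if __name__ == '__main__':
        c1 = CklProcess('ckl')
        c2 = CklProcess('zld')
        # start() creates the child process, which then calls run();
        # calling run() directly would execute it serially in the main process
        c1.start()
        c2.start()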

Run result:
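A side note on the subclass approach: run() is an ordinary method, so calling it directly executes in the calling process, while start() creates a child that then calls run(). The sketch below compares PIDs to show the difference. It assumes a POSIX system where the "fork" start method is available; `PidReporter` and `pid_seen_by` are hypothetical names.

```python
import multiprocessing as mp
import os

# Assumption: POSIX system. The explicit "fork" context only makes the sketch
# behave the same regardless of the platform's default start method.
ctx = mp.get_context("fork")

class PidReporter(ctx.Process):
    """Hypothetical helper: records the PID of whichever process executes run()."""

    def __init__(self, slot):
        super().__init__()
        self.slot = slot               # shared integer visible to parent and child

    def run(self):
        self.slot.value = os.getpid()

def pid_seen_by(method_name):
    slot = ctx.Value("i", 0)
    worker = PidReporter(slot)
    if method_name == "run":
        worker.run()                   # plain method call: stays in this process
    else:
        worker.start()                 # creates a child, which then calls run()
        worker.join()
    return slot.value

if __name__ == "__main__":
    print("run() used the main PID:  ", pid_seen_by("run") == os.getpid())
    print("start() used the main PID:", pid_seen_by("start") == os.getpid())
```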

Simulating TCP concurrency with processes

The original TCP server:

    import socket

    cklServer = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    cklServer.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    cklServer.bind(('127.0.0.1', 9090))
    cklServer.listen(5)

    while True:  # connection loop
        conn, client_addr = cklServer.accept()
        while True:  # communication loop
            client_data = conn.recv(1024)
            if not client_data:
                break
            print(client_data)
            conn.send(client_data.upper())
        conn.close()

    cklServer.close()

Client:

    import socket

    cklClient = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    cklClient.connect(('127.0.0.1', 9090))

    while True:
        msg = input('>> ').strip()
        if not msg:
            continue
        cklClient.send(msg.encode('utf-8'))
        server_data = cklClient.recv(1024)
        print(server_data.decode('utf-8'))

    cklClient.close()

Run the server:

...

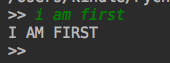

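Run the first client:

Run the second client:

The second client is stuck waiting. How can the server be made concurrent?

Analysis:

1. The server loops to accept connections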

    while True:  # connection loop
        conn, client_addr = cklServer.accept()

2. When a request comes in, the server handles that request

        while True:  # communication loop
            client_data = conn.recv(1024)
            if not client_data:
                break
            print(client_data)
            conn.send(client_data.upper())
        conn.close()

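# Only when this conversation ends is the connection released and the next loop iteration entered. Could several connections be served at the same time instead, so that clients do not queue behind each other?

Using the Process class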

    import socket
    from multiprocessing import Process

    cklServer = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    cklServer.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    cklServer.bind(('127.0.0.1', 9090))
    cklServer.listen(5)

    # move the communication loop into its own function
    def workpro(conn, client_addr):
        while True:
            client_data = conn.recv(1024)
            if not client_data:
                break
            print(client_data)
            conn.send(client_data.upper())
        conn.close()

    if __name__ == '__main__':
        while True:
            conn, client_addr = cklServer.accept()
            # create a Process whose target is the communication loop,
            # passing the connection and the client address
            p = Process(target=workpro, args=(conn, client_addr))
            # start the child process
            p.start()
        cklServer.close()

The client is unchanged.

Start the server:

...

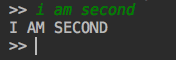

Start client 1:

Start client 2:

Server:

# This gives a simulated concurrency effect.

join

    from multiprocessing import Process
    import time

    def work(name):
        print('task %s is practicing' % name)
        time.sleep(3)
        print('task %s has finished' % name)

    if __name__ == '__main__':
        p1 = Process(target=work, args=('ckl',))
        p2 = Process(target=work, args=('zld',))
        p1.start()
        p2.start()
        p1.join()  # the main process waits until p1 has finished
        p2.join()  # the main process waits until p2 has finished
        print("---- main -----")

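Run result:

# The main process waits for the child processes to finish. A typical scenario is a crawler: the fetched content has to be returned before it can be analysed, otherwise the analysis is meaningless.

Optimizing the code above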

    from multiprocessing import Process
    import time

    def work(name):
        print('task %s is practicing' % name)
        time.sleep(3)
        print('task %s has finished' % name)

    if __name__ == '__main__':
        p1 = Process(target=work, args=('ckl',))
        p2 = Process(target=work, args=('zld',))
        # p1.start()
        # p2.start()
        # p1.join()  # the main process waits until p1 has finished
        # p2.join()  # the main process waits until p2 has finished
        p_list = [p1, p2]
        for i in p_list:
            i.start()  # start every process first
        for j in p_list:
            j.join()  # then wait for each one; it is the main process that waits
        print("---- main -----")

# This runs in parallel, not serially: the main process waits until every child has finished, but the children themselves run concurrently.
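The difference between "start all, then join all" and "start and join one at a time" can be measured with a rough timing sketch. It assumes a POSIX system with the "fork" start method; `nap`, `run_all` and `run_one_by_one` are hypothetical helper names, and the exact timings will vary by machine.

```python
import multiprocessing as mp
import time

# Assumption: POSIX system where the "fork" start method is available.
ctx = mp.get_context("fork")

def nap(seconds):
    time.sleep(seconds)

def run_all(delays):
    """Start every process first, then join them all; return elapsed seconds."""
    procs = [ctx.Process(target=nap, args=(d,)) for d in delays]
    began = time.perf_counter()
    for p in procs:
        p.start()
    for p in procs:
        p.join()
    return time.perf_counter() - began

def run_one_by_one(delays):
    """Start and immediately join each process; return elapsed seconds."""
    began = time.perf_counter()
    for d in delays:
        p = ctx.Process(target=nap, args=(d,))
        p.start()
        p.join()                       # blocks before the next process is even started
    return time.perf_counter() - began

if __name__ == "__main__":
    delays = [0.4, 0.4, 0.4]
    print("start all, then join: %.2fs" % run_all(delays))
    print("start+join each:      %.2fs" % run_one_by_one(delays))
```

With the first pattern the total time is close to the longest single delay; with the second it is at least the sum of the delays.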

Other Process features

    from multiprocessing import Process
    import time

    def work(name):
        print('task %s is practicing' % name)
        time.sleep(3)
        print('task %s has finished' % name)

    if __name__ == '__main__':
        p1 = Process(target=work, args=('ckl',))
        p1.start()
        # terminate() ends p1 abruptly, which is dangerous: if p1 has children of
        # its own, they are not ended with it and are left behind as orphan processes
        p1.terminate()
        print(p1.is_alive())  # check whether p1 is still alive
        print("---- main -----")

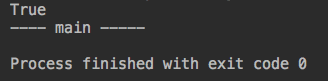

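Run result:

# Why does p1 still appear to be alive? p1.terminate() only asks the operating system to stop the process. The actual teardown takes a moment and is controlled by the operating system. Because is_alive() is called almost immediately after terminate(), p1 still looks alive.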
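One way to get a definite answer is to join() the process after terminating it, which waits until the operating system has actually cleaned it up. The following is a minimal sketch assuming a POSIX system with the "fork" start method; `sleeper` and `terminate_and_wait` are hypothetical names.

```python
import multiprocessing as mp
import signal
import time

# Assumption: POSIX system where the "fork" start method is available.
ctx = mp.get_context("fork")

def sleeper():
    time.sleep(30)

def terminate_and_wait():
    p = ctx.Process(target=sleeper)
    p.start()
    p.terminate()      # on POSIX this sends SIGTERM to the child
    p.join()           # wait until the child has really exited
    return p.is_alive(), p.exitcode

if __name__ == "__main__":
    alive, code = terminate_and_wait()
    print("alive after join:", alive)
    print("killed by SIGTERM:", code == -signal.SIGTERM)
```

On POSIX, a negative exitcode means the child was ended by the signal with that number.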

Process PIDs

    from multiprocessing import Process
    import time
    import os

    def work(name):
        # inside the child: getppid() is the main process, getpid() is the child itself
        print('main is:%s,son is %s practicing' % (os.getppid(), os.getpid()))
        time.sleep(3)
        print('main is:%s,son is %s has finished' % (os.getppid(), os.getpid()))

    if __name__ == '__main__':
        p1 = Process(target=work, args=('ckl',))
        p1.start()
        print(p1.name)  # the name of p1; it can be set explicitly
        # PID of the main process and PID of its parent (here, the PyCharm process)
        print("main is:", os.getpid(), os.getppid())

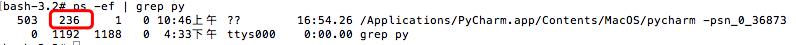

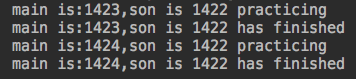

Run result:

Check the system process list:

# 236 is indeed the PyCharm process

Daemon processes

    from multiprocessing import Process
    import time
    import os

    def work(name):
        # inside the child: getppid() is the main process, getpid() is the child itself
        print('main is:%s,son is %s practicing' % (os.getppid(), os.getpid()))
        time.sleep(3)
        print('main is:%s,son is %s has finished' % (os.getppid(), os.getpid()))

    if __name__ == '__main__':
        p1 = Process(target=work, args=('ckl',))
        p1.daemon = True  # mark p1 as a daemon process (must be set before start())
        p1.start()
        print("---- main ---")

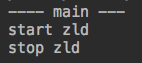

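Run result:

# A daemon process is ended as soon as the main process's code has finished. p1 is a daemon, so once the main process has printed main and reached its end, p1 is ended as well.

A small example to analyse: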

    from multiprocessing import Process
    import time
    import os

    def ckl():
        print("start ckl...")
        time.sleep(3)
        print("stop ckl")

    def zld():
        print("start zld")
        time.sleep(3)
        print("stop zld")

    if __name__ == '__main__':
        p1 = Process(target=ckl)
        p2 = Process(target=zld)
        p1.daemon = True  # mark p1 as a daemon process; must be set before start()
        p1.start()
        p2.start()
        print("---- main ---")

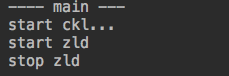

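Run result:

Analysis:

# 1. Mark p1 as a daemon process

# 2. Start p1

# 3. Start p2

# 4. Print main

Once the main process's code has finished, the daemon p1 is ended, but p2 is still running, so it runs to completion.

Run result 2:

# As before, the daemon p1 is ended once the main process's code has finished. In this run, however, p1 had already started before main was printed, so the terminal shows p1's first line of output. Then main is printed, p1 is ended, and p2 runs to completion.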

Lock

So far the concurrent processes have competed for resources in no particular order. To impose an order, add a lock, as follows:

    from multiprocessing import Process, Lock
    import time
    import os

    def work(name, mutex):
        mutex.acquire()  # take the lock
        # inside the child: getppid() is the main process, getpid() is the child itself
        print('main is:%s,son is %s practicing' % (os.getppid(), os.getpid()))
        time.sleep(3)
        print('main is:%s,son is %s has finished' % (os.getppid(), os.getpid()))
        mutex.release()  # release the lock

    if __name__ == '__main__':
        mutex = Lock()
        p1 = Process(target=work, args=('ckl', mutex))
        p2 = Process(target=work, args=('zld', mutex))
        p1.start()
        p2.start()

Run result:

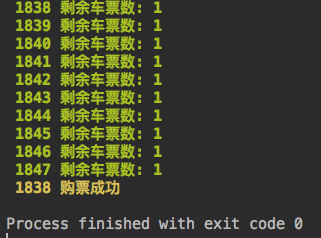

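p1 and p2 now run one after the other. Locking sacrifices efficiency but guarantees correctness. The following simulates buying train tickets: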
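What "holding the lock" means can be seen without starting any child process: while the lock is held, a second non-blocking acquire fails, and it succeeds again once the lock has been released. This is only an illustrative sketch; `lock_states` is a hypothetical name.

```python
from multiprocessing import Lock

def lock_states():
    mutex = Lock()
    results = []
    with mutex:                                      # held inside this block
        results.append(mutex.acquire(block=False))   # already held, so this fails
    results.append(mutex.acquire(block=False))       # released by the with-block, so this succeeds
    mutex.release()
    return results

if __name__ == "__main__":
    print(lock_states())
```

The with-block form releases the lock even if the body raises an exception, which a bare acquire()/release() pair does not.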

db.txt

{"count": 1}

Grabbing tickets:

    from multiprocessing import Process
    import os
    import json
    import time

    def find_ticket():
        ticket_dic = json.load(open('db.txt'))
        print('\033[1;32m %s tickets left: %s\033[0m' % (os.getpid(), ticket_dic['count']))

    def obtain_ticket():
        ticket_dic = json.load(open('db.txt'))
        time.sleep(0.5)
        if ticket_dic['count'] > 0:
            ticket_dic['count'] -= 1
            time.sleep(0.5)
            json.dump(ticket_dic, open('db.txt', 'w'))
            print('\033[1;33m %s bought a ticket \033[0m' % os.getpid())

    def run():
        find_ticket()
        obtain_ticket()

    if __name__ == '__main__':
        for i in range(10):
            p = Process(target=run)
            p.start()

Run result:

# All 10 processes report a successful purchase even though there is only one ticket. That must not happen, so a lock is needed:

    from multiprocessing import Process, Lock
    import os
    import json
    import time

    def find_ticket():
        ticket_dic = json.load(open('db.txt'))
        print('\033[1;32m %s tickets left: %s\033[0m' % (os.getpid(), ticket_dic['count']))

    def obtain_ticket():
        ticket_dic = json.load(open('db.txt'))
        time.sleep(0.5)
        if ticket_dic['count'] > 0:
            ticket_dic['count'] -= 1
            time.sleep(0.5)
            json.dump(ticket_dic, open('db.txt', 'w'))
            print('\033[1;33m %s bought a ticket \033[0m' % os.getpid())

    def run(mutex):
        find_ticket()
        mutex.acquire()  # take the lock while buying
        obtain_ticket()
        mutex.release()  # release it once the purchase is done

    if __name__ == '__main__':
        mutex = Lock()
        for i in range(10):
            p = Process(target=run, args=(mutex,))
            p.start()

Run result:

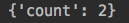

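Sharing through a file, as above, is crude and slow. How can data be shared efficiently? By sharing it in memory (here through a Manager, which keeps the data in a server process):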

    from multiprocessing import Process, Lock, Manager
    import os
    import json
    import time

    def run(dic):
        dic['count'] -= 1

    if __name__ == '__main__':
        m = Manager()
        mdic = m.dict({'count': 100})
        p_list = []
        for i in range(100):
            p = Process(target=run, args=(mdic,))
            p_list.append(p)
            p.start()
        print(mdic)

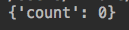

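Run result:

# Shouldn't the result be 0? Why is it 2? dic['count'] -= 1 is a read followed by a write, so two processes can read the same value at the same time and one of the decrements is lost. This version also prints without joining the children, so some of them may not have run yet. How can the result be made accurate? Add a lock (and wait for the children):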

    from multiprocessing import Process, Lock, Manager
    import os
    import json
    import time

    def run(dic, mutex):
        with mutex:  # take the lock; it is released automatically
            dic['count'] -= 1

    if __name__ == '__main__':
        m = Manager()
        mdic = m.dict({'count': 100})
        p_list = []
        mutex = Lock()
        for i in range(100):
            p = Process(target=run, args=(mdic, mutex))
            p_list.append(p)
            p.start()
        # wait for every child to finish
        for j in p_list:
            j.join()
        print(mdic)

Run result:
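The reason a one-line decrement still needs a lock is that `d['count'] -= 1` is really two item operations, a get followed by a set, and another process can slip in between them. The sketch below makes the two steps visible with an ordinary in-process dict subclass; `TracingDict` and `trace_decrement` are hypothetical names used only for illustration, not part of multiprocessing.

```python
class TracingDict(dict):
    """Hypothetical helper: a dict that records each item read and write."""

    def __init__(self, *args, **kwargs):
        super().__init__(*args, **kwargs)
        self.trace = []

    def __getitem__(self, key):
        self.trace.append(("get", key))
        return super().__getitem__(key)

    def __setitem__(self, key, value):
        self.trace.append(("set", key))
        super().__setitem__(key, value)

def trace_decrement():
    d = TracingDict(count=100)
    d["count"] -= 1                 # one statement, two item operations
    steps = list(d.trace)           # copy the trace before touching d again
    return steps, d.get("count")    # dict.get() bypasses the traced __getitem__

if __name__ == "__main__":
    print(trace_decrement())
```

With a Manager dict each of those two steps is a separate request to the manager's server process, so the lock has to cover both.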

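In practice nobody does it this way; this only demonstrates the principle. Real sharing between processes is done with queues, described next.

III. Queues

Putting and getting values: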

    from multiprocessing import Queue

    q = Queue(3)
    q.put('one')
    q.put('two')
    q.put('three')
    print(q.get())
    print(q.get())
    print(q.get())

Result:

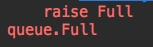

Putting more items than the queue can hold

    from multiprocessing import Queue

    q = Queue(3)
    q.put('one')
    q.put('two')
    q.put('three')
    q.put('four')

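This blocks indefinitely, waiting for some process to take a value out of the queue and free a slot.

If you do not want to wait, write it like this: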

    from multiprocessing import Queue

    q = Queue(3)
    q.put('one', block=False)
    q.put('two', block=False)
    q.put('three', block=False)
    q.put('four', block=False)

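Result: the fourth put raises a queue.Full exception, because the queue is already full.

An equivalent way to write it: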

q.put_nowait('four')
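A non-blocking put is normally paired with handling for the queue.Full exception. The following is a minimal single-process sketch; `fill_and_overflow` is a hypothetical name.

```python
import queue
from multiprocessing import Queue

def fill_and_overflow(capacity):
    q = Queue(capacity)
    for i in range(capacity):
        q.put(i)
    try:
        q.put_nowait("extra")          # same as q.put("extra", block=False)
        overflowed = False
    except queue.Full:                 # raised at once instead of blocking
        overflowed = True
    drained = [q.get() for _ in range(capacity)]   # empty the queue again, in FIFO order
    return overflowed, drained

if __name__ == "__main__":
    print(fill_and_overflow(3))
```

The exception class lives in the standard queue module even though the queue itself comes from multiprocessing.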

IV. The producer-consumer model

Simulating the producer-consumer model

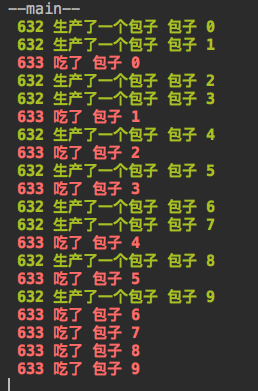

    import time
    from multiprocessing import Queue, Process
    import os

    def producer(q):
        for i in range(10):
            time.sleep(1)
            res = "包子 %s" % i
            # put each freshly produced item into the queue
            q.put(res)
            print("\033[1;32m %s produced %s\033[0m" % (os.getpid(), res))

    def consumer(q):
        while True:
            # take an item from the queue
            res = q.get()
            time.sleep(1.5)
            print("\033[1;31m %s ate %s\033[0m" % (os.getpid(), res))

    if __name__ == '__main__':
        q = Queue()
        # producer
        p1 = Process(target=producer, args=(q,))
        # consumer
        c1 = Process(target=consumer, args=(q,))
        p1.start()
        c1.start()
        print("--main--")

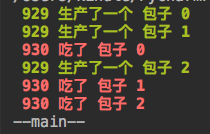

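Run result:

The problem with the code above: the program never exits. The consumer is stuck, still waiting for messages from the queue. The producer therefore has to tell the consumer that production is finished, so we put a None into the queue.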

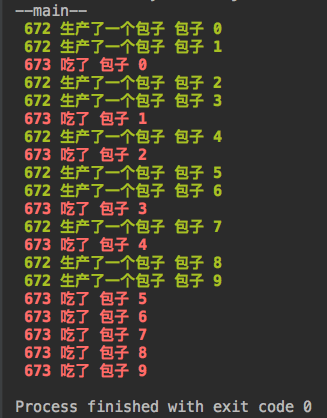

    import time
    from multiprocessing import Queue, Process
    import os

    def producer(q):
        for i in range(10):
            time.sleep(1)
            res = "包子 %s" % i
            q.put(res)
            print("\033[1;32m %s produced %s\033[0m" % (os.getpid(), res))
        q.put(None)  # production finished: put None into the queue

    def consumer(q):
        while True:
            res = q.get()
            if res is None:  # None from the queue means nothing more is coming
                break
            time.sleep(1.5)
            print("\033[1;31m %s ate %s\033[0m" % (os.getpid(), res))

    if __name__ == '__main__':
        q = Queue()
        # producer
        p1 = Process(target=producer, args=(q,))
        # consumer
        c1 = Process(target=consumer, args=(q,))
        p1.start()
        c1.start()
        print("--main--")

Run result:

What if there are several producers and several consumers?

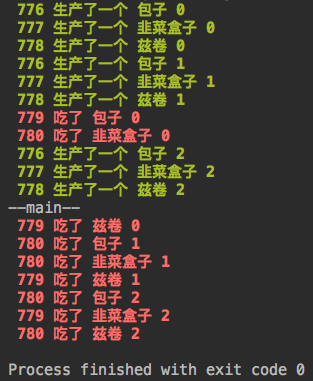

    import time
    from multiprocessing import Queue, Process
    import os

    def producer(q, name):
        for i in range(3):
            time.sleep(1)
            res = "%s %s" % (name, i)
            q.put(res)
            print("\033[1;32m %s produced %s\033[0m" % (os.getpid(), res))

    def consumer(q):
        while True:
            res = q.get()
            if res is None:  # None from the queue means nothing more is coming
                break
            time.sleep(1.5)
            print("\033[1;31m %s ate %s\033[0m" % (os.getpid(), res))

    if __name__ == '__main__':
        q = Queue()
        # producers
        p1 = Process(target=producer, args=(q, '包子'))
        p2 = Process(target=producer, args=(q, '韭菜盒子'))
        p3 = Process(target=producer, args=(q, '兹卷'))
        # consumers
        c1 = Process(target=consumer, args=(q,))
        c2 = Process(target=consumer, args=(q,))
        p1.start()
        p2.start()
        p3.start()
        c1.start()
        c2.start()
        p1.join()  # the main process waits for p1 to finish
        p2.join()  # the main process waits for p2 to finish
        p3.join()  # the main process waits for p3 to finish
        q.put(None)  # send None to the first consumer
        q.put(None)  # send None to the second consumer
        print("--main--")

Run result:
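The rule behind the two q.put(None) calls is "one sentinel per consumer, sent only after every producer has finished". A compact sketch of that rule for any number of producers and consumers follows. It assumes a POSIX system with the "fork" start method; `SENTINEL`, `run_pipeline` and the second queue `eaten` (used only to hand results back to the parent) are hypothetical.

```python
import multiprocessing as mp

# Assumption: POSIX system where the "fork" start method is available.
ctx = mp.get_context("fork")
SENTINEL = None

def producer(q, name, count):
    for i in range(count):
        q.put("%s %s" % (name, i))

def consumer(q, eaten):
    while True:
        item = q.get()
        if item is SENTINEL:           # one sentinel ends exactly one consumer
            break
        eaten.put(item)                # report what was consumed

def run_pipeline(names, count, n_consumers):
    q, eaten = ctx.Queue(), ctx.Queue()
    producers = [ctx.Process(target=producer, args=(q, n, count)) for n in names]
    consumers = [ctx.Process(target=consumer, args=(q, eaten)) for _ in range(n_consumers)]
    for p in producers + consumers:
        p.start()
    for p in producers:
        p.join()                       # every real item is queued after this point
    for _ in consumers:
        q.put(SENTINEL)                # one sentinel per consumer
    results = [eaten.get() for _ in range(len(names) * count)]
    for c in consumers:
        c.join()
    return sorted(results)

if __name__ == "__main__":
    print(run_pipeline(["bun", "pie"], 3, 2))
```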

# This solves the problem, but it is a clumsy way to write it. A better way is:

    import time
    from multiprocessing import Queue, Process, JoinableQueue
    import os

    def producer(q, name):
        for i in range(3):
            time.sleep(1)
            res = "%s %s" % (name, i)
            q.put(res)
            print("\033[1;32m %s produced %s\033[0m" % (os.getpid(), res))
        q.join()  # the producer waits until every item in q has been processed

    def consumer(q):
        while True:
            res = q.get()
            if res is None:
                break
            time.sleep(1.5)
            print("\033[1;31m %s ate %s\033[0m" % (os.getpid(), res))
            q.task_done()  # signal q.join() that one item has been consumed

    if __name__ == '__main__':
        q = JoinableQueue()
        # producer
        p1 = Process(target=producer, args=(q, '包子'))
        # consumer
        c1 = Process(target=consumer, args=(q,))
        p1.start()
        c1.daemon = True
        c1.start()
        # The main process waits for p1. p1 only finishes once its q.join() returns,
        # that is, once the consumer has consumed everything. After that the consumer
        # is no longer needed, which is why it is a daemon.
        p1.join()
        print("--main--")

Run result:

Multiple consumers and producers:

    import time
    from multiprocessing import Queue, Process, JoinableQueue
    import os

    def producer(q, name):
        for i in range(3):
            time.sleep(1)
            res = "%s %s" % (name, i)
            q.put(res)
            print("\033[1;32m %s produced %s\033[0m" % (os.getpid(), res))
        q.join()  # the producer waits until every item in q has been processed

    def consumer(q):
        while True:
            res = q.get()
            if res is None:
                break
            time.sleep(1.5)
            print("\033[1;31m %s ate %s\033[0m" % (os.getpid(), res))
            q.task_done()  # signal that one item has been consumed

    if __name__ == '__main__':
        q = JoinableQueue()
        # producers
        p1 = Process(target=producer, args=(q, '包子'))
        p2 = Process(target=producer, args=(q, '韭菜盒子'))
        p3 = Process(target=producer, args=(q, '兹卷'))
        # consumers
        c1 = Process(target=consumer, args=(q,))
        c2 = Process(target=consumer, args=(q,))
        p1.start()
        p2.start()
        p3.start()
        c1.daemon = True  # consumer 1
        c2.daemon = True  # consumer 2
        c1.start()
        c2.start()
        p1.join()
        p2.join()
        p3.join()
        print("--main--")
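The handshake between task_done() and join() can be checked in isolation: join() returns only after task_done() has been called once for every item that was put. The sketch below assumes a POSIX system with the "fork" start method; `produce_and_wait` and the shared counter `done_count` are hypothetical names.

```python
import multiprocessing as mp

# Assumption: POSIX system where the "fork" start method is available.
ctx = mp.get_context("fork")

def consumer(q, done_count):
    while True:
        q.get()
        with done_count.get_lock():
            done_count.value += 1      # record the work before acknowledging it
        q.task_done()                  # tell q.join() that this item is finished

def produce_and_wait(n_items):
    q = ctx.JoinableQueue()
    done_count = ctx.Value("i", 0)
    c = ctx.Process(target=consumer, args=(q, done_count), daemon=True)
    c.start()
    for i in range(n_items):
        q.put(i)
    q.join()                           # returns only after n_items task_done() calls
    processed = done_count.value
    c.terminate()                      # the consumer loops forever, so end it explicitly
    c.join()
    return processed

if __name__ == "__main__":
    print(produce_and_wait(5))
```

Because the counter is updated before task_done() is called, the value read after q.join() already includes every item.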

Process pool

Synchronous:

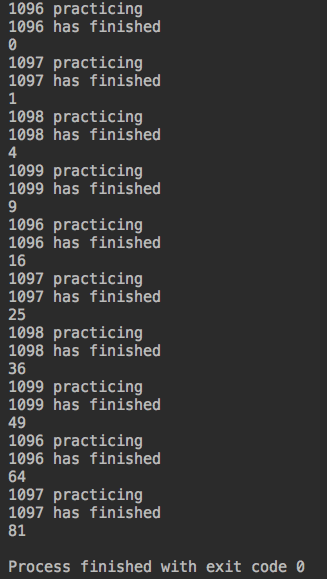

    from multiprocessing import Pool
    import os
    import time

    def work(n):
        print('%s practicing' % os.getpid())
        time.sleep(3)
        print('%s has finished' % os.getpid())
        return n ** 2

    if __name__ == '__main__':
        # print(os.cpu_count())  # show the number of CPUs
        p = Pool(4)  # pool size, here equal to the number of CPUs
        for i in range(10):
            res = p.apply(work, args=(i,))
            print(res)

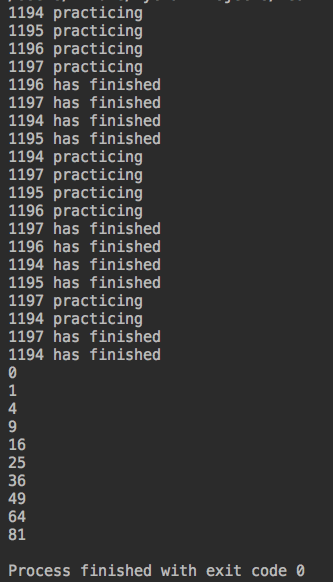

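Run result:

# Synchronous mode: each call waits for the previous one to finish and returns its result. The same four worker processes (1096, 1097, 1098, 1099) are reused throughout.

# This is too inefficient. How can it be improved? By submitting asynchronously.

Asynchronous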

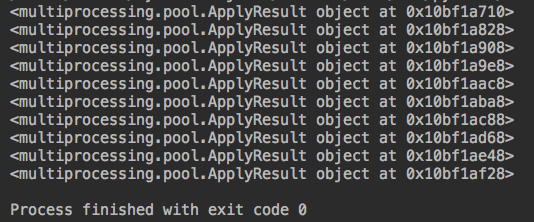

    from multiprocessing import Pool
    import os
    import time

    def work(n):
        print('%s practicing' % os.getpid())
        time.sleep(3)
        print('%s has finished' % os.getpid())
        return n ** 2

    if __name__ == '__main__':
        # print(os.cpu_count())  # show the number of CPUs
        p = Pool(4)  # pool size, here equal to the number of CPUs
        for i in range(10):
            res = p.apply_async(work, args=(i,))
            print(res)

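Run result:

# No return values are shown. apply_async submits all the tasks at once and returns immediately with a result handle, without waiting for the actual result. To obtain the result, call get():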

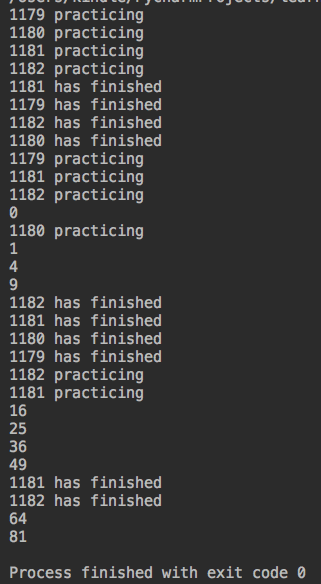

    from multiprocessing import Pool
    import os
    import time

    def work(n):
        print('%s practicing' % os.getpid())
        time.sleep(3)
        print('%s has finished' % os.getpid())
        return n ** 2

    if __name__ == '__main__':
        # print(os.cpu_count())  # show the number of CPUs
        p = Pool(4)  # pool size, here equal to the number of CPUs
        p_list = []
        for i in range(10):
            res = p.apply_async(work, args=(i,))
            p_list.append(res)
        for j in p_list:
            print(j.get())

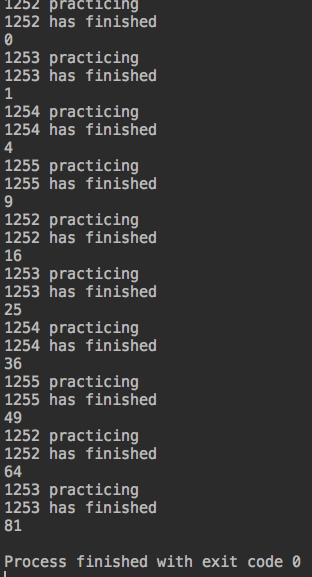

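Run result:

# The results are returned, but the output is messy: the result lines are interleaved with the workers' progress messages instead of arriving all together. To print the results only after every task has finished, wait for the pool with join: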

    from multiprocessing import Pool
    import os
    import time

    def work(n):
        print('%s practicing' % os.getpid())
        time.sleep(3)
        print('%s has finished' % os.getpid())
        return n ** 2

    if __name__ == '__main__':
        # print(os.cpu_count())  # show the number of CPUs
        p = Pool(4)  # pool size, here equal to the number of CPUs
        p_list = []
        for i in range(10):
            res = p.apply_async(work, args=(i,))
            p_list.append(res)
        p.close()  # no new tasks may be submitted to the pool
        p.join()   # wait for the worker processes to finish
        for j in p_list:
            print(j.get())

Run result:

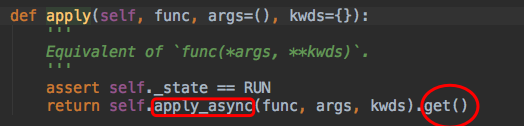

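# Analysing apply

apply is really just the asynchronous call followed immediately by get() on its result, so the next task has to wait. The following uses the asynchronous call to reproduce the synchronous behaviour: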

    from multiprocessing import Pool
    import os
    import time

    def work(n):
        print('%s practicing' % os.getpid())
        time.sleep(3)
        print('%s has finished' % os.getpid())
        return n ** 2

    if __name__ == '__main__':
        # print(os.cpu_count())  # show the number of CPUs
        p = Pool(4)  # pool size, here equal to the number of CPUs
        for i in range(10):
            # get() blocks until this task has finished, so the loop only then moves on
            res = p.apply_async(work, args=(i,)).get()
            print(res)

Run result:
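The equivalence can also be checked directly: the blocking apply() and the apply_async() handles, collected in submission order with get(), yield the same values. A minimal sketch follows, assuming a POSIX system with the "fork" start method; `square` and `compare_calls` are hypothetical names.

```python
import multiprocessing as mp

# Assumption: POSIX system where the "fork" start method is available.
ctx = mp.get_context("fork")

def square(n):
    return n ** 2

def compare_calls(numbers):
    with ctx.Pool(2) as pool:
        # apply(): submit one task and block until its result is back
        blocking = [pool.apply(square, args=(n,)) for n in numbers]
        # apply_async(): submit everything first, then collect the results
        handles = [pool.apply_async(square, args=(n,)) for n in numbers]
        collected = [h.get() for h in handles]
    return blocking, collected

if __name__ == "__main__":
    print(compare_calls([1, 2, 3, 4]))
```

Calling get() on the handles in list order gives results in submission order, whichever worker happened to finish first.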

Simulating TCP communication with a process pool (concurrent server)

Server:

    import socket
    from multiprocessing import Pool
    import os

    cklServer = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    cklServer.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    cklServer.bind(('127.0.0.1', 9090))
    cklServer.listen(5)

    def workpro(conn, client_addr):
        print(os.getpid())
        while True:
            client_data = conn.recv(1024)
            if not client_data:
                break
            print(client_data)
            conn.send(client_data.upper())
        conn.close()

    if __name__ == '__main__':
        p = Pool(4)  # process pool
        while True:
            conn, client_addr = cklServer.accept()
            # handle the connection asynchronously in the pool
            p.apply_async(workpro, args=(conn, client_addr))
        cklServer.close()

The client is unchanged

Run the server:

....

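Run client 1:

Run client 2:

Run client 3:

Run client 4:

Run client 5:

Client 5 is left waiting, because the pool size is 4 and only 4 requests are handled at a time

Server:

# The server starts four processes to handle requests. Once one of the clients is closed, client 5 can be served:

Callback functions

Simulating web page crawling: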

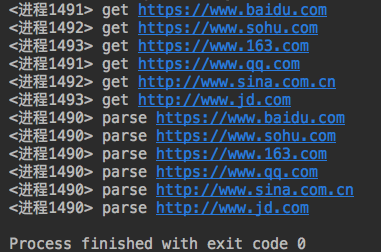

    from multiprocessing import Pool
    import requests
    import json
    import os

    # download a page
    def get_page(url):
        print('<process %s> get %s' % (os.getpid(), url))
        respone = requests.get(url)
        if respone.status_code == 200:
            return {'url': url, 'text': respone.text}

    # analyse the content
    def pasrse_page(res):
        print('<process %s> parse %s' % (os.getpid(), res['url']))
        parse_res = 'url:<%s> size:[%s]\n' % (res['url'], len(res['text']))
        with open('db.txt', 'a') as f:
            f.write(parse_res)

    if __name__ == '__main__':
        urls = [
            'https://www.baidu.com',
            'https://www.sohu.com',
            'https://www.163.com',
            'https://www.qq.com',
            'http://www.sina.com.cn',
            'http://www.jd.com'
        ]
        p = Pool(3)  # pool of 3 processes
        res_l = []
        for url in urls:
            res = p.apply_async(get_page, args=(url,))  # download the pages concurrently
            res_l.append(res)
        p.close()
        p.join()  # wait until everything has finished, then analyse the pages
        for res in res_l:
            pasrse_page(res.get())

Run:

Contents of db.txt:

    url:<https://www.baidu.com> size:[2443]
    url:<https://www.baidu.com> size:[2443]
    url:<https://www.sohu.com> size:[180630]
    url:<https://www.163.com> size:[678844]
    url:<https://www.qq.com> size:[223522]
    url:<http://www.sina.com.cn> size:[572204]
    url:<http://www.jd.com> size:[115026]
    url:<https://www.baidu.com> size:[2443]
    url:<https://www.sohu.com> size:[180587]
    url:<https://www.163.com> size:[678844]
    url:<https://www.qq.com> size:[223522]
    url:<http://www.sina.com.cn> size:[572204]
    url:<http://www.jd.com> size:[115082]

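# Three processes ran concurrently, but the analysis only started after all of them had finished, which is inefficient. Can each page be analysed as soon as it has been fetched? That is what a callback function is for.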

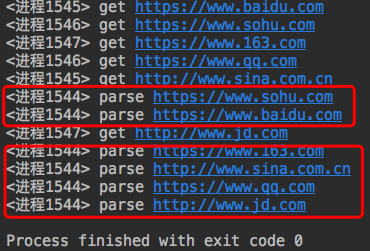

    from multiprocessing import Pool
    import requests
    import json
    import os

    # download a page
    def get_page(url):
        print('<process %s> get %s' % (os.getpid(), url))
        respone = requests.get(url)
        if respone.status_code == 200:
            return {'url': url, 'text': respone.text}

    # analyse the content
    def pasrse_page(res):
        print('<process %s> parse %s' % (os.getpid(), res['url']))
        parse_res = 'url:<%s> size:[%s]\n' % (res['url'], len(res['text']))
        with open('db.txt', 'a') as f:
            f.write(parse_res)

    if __name__ == '__main__':
        urls = [
            'https://www.baidu.com',
            'https://www.sohu.com',
            'https://www.163.com',
            'https://www.qq.com',
            'http://www.sina.com.cn',
            'http://www.jd.com'
        ]
        p = Pool(3)  # pool of 3 processes
        for url in urls:
            # as soon as one download finishes, the main process is notified
            # and runs the callback to analyse the content
            p.apply_async(get_page, args=(url,), callback=pasrse_page)
        p.close()
        p.join()

Run result:
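That the callback runs in the main process, not in a pool worker, can be confirmed by comparing PIDs. The sketch below replaces the real download with a stand-in so that it needs no network access. It assumes a POSIX system with the "fork" start method; `fake_fetch`, `crawl` and the example URLs are hypothetical.

```python
import multiprocessing as mp
import os

# Assumption: POSIX system where the "fork" start method is available.
ctx = mp.get_context("fork")

def fake_fetch(url):
    """Stand-in for a download: returns a made-up size and the worker's PID."""
    return {"url": url, "size": len(url), "worker_pid": os.getpid()}

def crawl(urls):
    parsed = []

    def on_done(res):
        # Called in the process that owns the pool, once per finished task.
        parsed.append((res["url"], res["size"], os.getpid(), res["worker_pid"]))

    pool = ctx.Pool(2)
    for url in urls:
        pool.apply_async(fake_fetch, args=(url,), callback=on_done)
    pool.close()
    pool.join()                        # all callbacks have run once this returns
    return parsed

if __name__ == "__main__":
    for row in crawl(["http://example.com/a", "http://example.com/b"]):
        print(row)
```

Since the callback runs in the main process, it should stay short; a slow callback delays the handling of other finished tasks.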

Simulating an FTP server with sockets

I. Server

    #!/usr/bin/env python
    # -*- coding:utf-8 -*-
    import socket
    import subprocess
    import sys
    import time
    import os
    import progressbar

    UserDic = {'ckl': '12345', 'zld': '7890'}
    FtpPub = "/data/pub"
    IpPort = ("172.16.110.47", 2021)
    Fsk = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    Fsk.bind(IpPort)
    Fsk.listen(3)
    Conn, Addr = Fsk.accept()


    class FtpServer():
        def __init__(self):
            pass

        def AuthLogin(self):
            UserCircle = True
            while UserCircle:
                UserData = Conn.recv(1024)
                UserStrData = UserData.decode()
                print(UserStrData)
                if UserStrData in UserDic.keys():
                    Conn.send(bytes("UserPassed", "utf8"))
                    while True:
                        PassData = Conn.recv(1024)
                        PassStrData = PassData.decode()
                        print(PassStrData)
                        if PassStrData == UserDic[UserStrData]:
                            Conn.send(bytes("PassPassed", "utf8"))
                            UserCircle = False
                            UserHome = FtpPub + "/" + UserStrData
                            os.chdir(UserHome)
                            break
                        else:
                            PassTry = "Sorry,your pass is wrong,try again!"
                            Conn.send(bytes(PassTry, "utf8"))
                else:
                    UserTry = "Sorry,%s not found,try again!" % UserStrData
                    Conn.send(bytes(UserTry, "utf8"))
                    continue

        def TransActive(self):
            while True:
                print("Top")
                ClientData = Conn.recv(1024)
                if not ClientData:
                    # an empty read means the client has disconnected
                    break
                FileMsg = ClientData.decode()
                time.sleep(1)
                FileList = ''.join(FileMsg).strip().split(":")
                print(FileList[0])
                if FileList[0] == "put":
                    PutFileName = FileList[1]
                    PutFileSize = int(FileList[2])
                    Conn.send(bytes("ReadyDone", "utf8"))
                    RecievedContent = ""
                    RecievedSize = 0
                    while RecievedSize < PutFileSize:
                        RecievedData = Conn.recv(500)
                        RecievedSize += len(RecievedData)
                        RecievedContent += str(RecievedData.decode())
                    else:
                        # print(RecievedContent)
                        pass
                    with open(PutFileName, 'w') as fu:
                        fu.write(RecievedContent)
                    print("--- recieved done ---")
                elif FileList[0] == "get":
                    GetFileName = FileList[1]
                    print(GetFileName)
                    if os.path.exists(GetFileName):
                        with open(GetFileName, 'rb') as fu:
                            GetData = fu.read()
                        DataLength = len(GetData)
                        print(DataLength)
                        GetFileSize = int(DataLength)
                        Conn.send(bytes("%s" % (DataLength), "utf8"))
                        ReadyResult = Conn.recv(500)
                        if ReadyResult.decode() == "ReadyDone":
                            SendData = GetData.decode()
                            # print(SendData)
                            # sendall() keeps sending until the whole file has gone out
                            Conn.sendall(bytes(SendData, "utf8"))
                    else:
                        Conn.send(bytes("%s is not found" % GetFileName, "utf8"))
                elif FileList[0] == "ls":
                    Fresult = subprocess.Popen("ls -l", shell=True, stdout=subprocess.PIPE)
                    Sresult = Fresult.stdout.read().decode()
                    LenResult = str(len(Sresult))
                    Conn.send(bytes(LenResult, "utf8"))
                    Ready = Conn.recv(200)
                    if Ready.decode() == "ReadyDone":
                        Conn.sendall(bytes(Sresult, "utf8"))


    if __name__ == "__main__":
        Server = FtpServer()
        Server.AuthLogin()
        Server.TransActive()

II. Client

    #!/usr/bin/env python
    # -*- coding:utf-8 -*-
    import socket
    import sys
    import re
    import os
    import time
    import progressbar

    IpPort = ('172.16.110.47', 2021)
    Fsk = socket.socket()
    Fsk.connect(IpPort)


    class AuthClient():
        def __init__(self):
            pass

        def ClientLogin(self):
            UserCircleNum = 0
            while UserCircleNum < 2:
                AskUser = input("Your UserName: ")
                Fsk.send(bytes(AskUser, "utf8"))
                UserResult = Fsk.recv(1024)
                UserStrResult = UserResult.decode()
                if UserStrResult == "UserPassed":
                    PassCircleNum = 0
                    while PassCircleNum < 2:
                        AskPass = input("Your Password: ")
                        Fsk.send(bytes(AskPass, "utf8"))
                        PassResult = Fsk.recv(1024)
                        PassStrResult = PassResult.decode()
                        if PassStrResult == "PassPassed":
                            print("Welcome Login ckl ftp system!")
                            UserCircleNum = 2
                            break
                        else:
                            PassCircleNum += 1
                            print(PassCircleNum)
                            if PassCircleNum >= 2:
                                print("Your password tries is too much,will be quit")
                                Fsk.close()
                                sys.exit()
                            continue
                else:
                    UserCircleNum += 1
                    if UserCircleNum >= 2:
                        print("Your name tries is too much,will be quit!")
                        Fsk.close()
                        sys.exit()
                    print(UserStrResult)
                    continue
            # Fsk.close()


    class ClientTrans(AuthClient):
        def __init__(self):
            pass

        def ClientFtp(self):
            while True:
                try:
                    ClientSaid = input("Client>> ")
                    # raw strings keep the regex backslashes intact
                    MatchPutFirst = re.findall(r'\s?put\s+.*', ClientSaid)
                    MatchPutResult = ''.join(MatchPutFirst).strip().split()
                    MatchGetFirst = re.findall(r'\s?get\s+.*', ClientSaid)
                    MatchGetResult = ''.join(MatchGetFirst).strip().split()
                    if len(ClientSaid) <= 0:
                        continue
                    if ClientSaid == 'quit':
                        print("Client System will be quit!")
                        break
                    if len(MatchPutResult) > 0:
                        print(MatchPutResult)
                        if os.path.exists(MatchPutResult[1]):
                            with open(MatchPutResult[1], 'rb') as fu:
                                UpData = fu.read()
                            DataLength = len(UpData)
                            print(DataLength)
                            RealFileName = os.path.basename(MatchPutResult[1])
                            Fsk.send(bytes("put:%s:%s" % (RealFileName, DataLength), "utf8"))
                            ReadyResult = Fsk.recv(500)
                            if ReadyResult.decode() == "ReadyDone":
                                SendData = UpData.decode()
                                # sendall() keeps sending until the whole file has gone out
                                Fsk.sendall(bytes(SendData, "utf8"))
                                print("-- %s send finished --" % RealFileName)
                        else:
                            print("%s is not found!" % MatchPutResult[1])
                            continue
                    if len(MatchGetResult) > 0:
                        Fsk.send(bytes("get:%s" % (MatchGetResult[1]), "utf8"))
                        FileLength = Fsk.recv(500)
                        if FileLength.decode() == "%s is not found" % MatchGetResult[1]:
                            print("Sorry,%s is not found!" % MatchGetResult[1])
                            continue
                        GetFileSize = int(FileLength.decode())
                        print(GetFileSize)
                        Fsk.send(bytes("ReadyDone", "utf8"))
                        RecievedContent = ""
                        RecievedSize = 0
                        with progressbar.ProgressBar(max_value=GetFileSize) as bar:
                            while RecievedSize < GetFileSize:
                                RecievedData = Fsk.recv(500)
                                RecievedSize += len(RecievedData)
                                RecievedContent += str(RecievedData.decode())
                                time.sleep(0.02)
                                bar.update(RecievedSize)
                            else:
                                # print(RecievedContent)
                                pass
                        with open(MatchGetResult[1], 'w') as fu:
                            fu.write(RecievedContent)
                        print("-- %s recieved finished --" % MatchGetResult[1])
                    if ClientSaid == "ls":
                        Fsk.send(bytes("ls", "utf8"))
                        LenRes = Fsk.recv(500)
                        Lsize = int(LenRes.decode())
                        Fsk.send(bytes("ReadyDone", "utf8"))
                        RecievedLresult = ""
                        RecievedLSize = 0
                        while RecievedLSize < Lsize:
                            RecievedData = Fsk.recv(500)
                            RecievedLSize += len(RecievedData)
                            RecievedLresult += str(RecievedData.decode())
                        else:
                            print(RecievedLresult)
                except IndexError:
                    pass
            Fsk.close()


    if __name__ == "__main__":
        ClientOp = ClientTrans()
        ClientOp.ClientLogin()
        ClientOp.ClientFtp()
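Both sides of this protocol announce a size first and then loop on recv() until that many bytes have arrived. The receiving loop can be factored into a small helper that collects raw bytes and decodes them only once at the end, which avoids splitting a multi-byte UTF-8 character across two 500-byte chunks. This is a sketch under stated assumptions, not part of the original program: `recv_exact` and `transfer` are hypothetical names, and socket.socketpair() stands in for a real client/server connection.

```python
import socket
import threading

def recv_exact(sock, size):
    """Read exactly `size` bytes from a stream socket, or raise if the peer closes early."""
    chunks = []
    remaining = size
    while remaining > 0:
        chunk = sock.recv(min(remaining, 500))
        if not chunk:                  # empty read: the peer closed the connection
            raise ConnectionError("peer closed before %d bytes arrived" % size)
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)            # still bytes: decode once, after everything is in

def transfer(payload):
    """Send payload through a local socket pair and read it back with recv_exact()."""
    left, right = socket.socketpair()
    sender = threading.Thread(target=left.sendall, args=(payload,))
    sender.start()
    received = recv_exact(right, len(payload))
    sender.join()
    left.close()
    right.close()
    return received

if __name__ == "__main__":
    data = "文件".encode("utf-8") * 1000   # 6000 bytes of multi-byte text
    print(transfer(data).decode("utf-8") == "文件" * 1000)
```

Keeping the data as bytes also lets the file be written in 'wb' mode, so binary files could be transferred unchanged.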

