Spark Source Code: Spark-Submit Job Submission

Spark version: 1.3

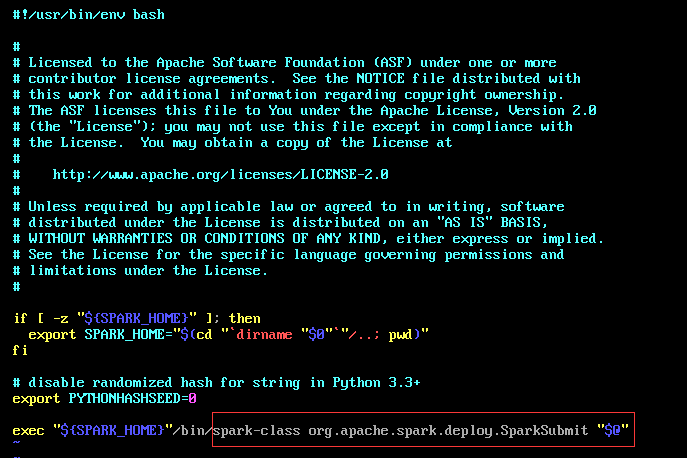

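Submission starts from the shell: spark-submit.sh args[] (in the Spark distribution the script is bin/spark-submit).

Control first enters the org.apache.spark.deploy.SparkSubmit class,

whose main() method is called: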

    def main(args: Array[String]): Unit = {
      // Parse the command-line arguments and fill in defaults
      val appArgs = new SparkSubmitArguments(args)
      if (appArgs.verbose) {
        printStream.println(appArgs)
      }
      // Dispatch on the requested action
      appArgs.action match {
        case SparkSubmitAction.SUBMIT => submit(appArgs)
        case SparkSubmitAction.KILL => kill(appArgs)
        case SparkSubmitAction.REQUEST_STATUS => requestStatus(appArgs)
      }
    }
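The match on appArgs.action in main() is an ordinary pattern match over a Scala Enumeration. The following is a minimal, self-contained sketch of that dispatch, not Spark's code: the object names and the returned strings are assumptions made for illustration, and the real branches call submit, kill and requestStatus.

```scala
object ActionDispatch {
  // Hypothetical stand-in for SparkSubmitAction.
  object SubmitAction extends Enumeration {
    type SubmitAction = Value
    val SUBMIT, KILL, REQUEST_STATUS = Value
  }
  import SubmitAction._

  // Each enumeration value is a stable identifier, so it can be used directly as a pattern.
  def dispatch(action: SubmitAction): String = action match {
    case SUBMIT => "submit"
    case KILL => "kill"
    case REQUEST_STATUS => "requestStatus"
  }
}
```

Because an Enumeration is not a sealed type, the compiler does not check such a match for exhaustiveness; a value without a case would fail at runtime with a MatchError.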

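1.1 val appArgs = new SparkSubmitArguments(args)

"""SparkSubmitArguments(args) reads the submission arguments and performs some initialization, in the order below."""

parseOpts(args.toList)

"""Assigns the arguments we wrote explicitly to the corresponding fields of SparkSubmitArguments."""

mergeDefaultSparkProperties()

"""Fills in the properties we did not write, using the values from the default properties file."""

loadEnvironmentArguments()

"""Uses the `sparkProperties` map and the environment variables to fill in any arguments that are still missing. At this point the action is set with action: SparkSubmitAction = Option(action).getOrElse(SUBMIT), so when no action is specified it defaults to SUBMIT."""

validateArguments()

"""Ensures that the required fields are present. This method is called only after all defaults have been loaded."""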
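The order of these four steps matters: explicit values win, defaults only fill gaps, and validation runs last. Here is a minimal sketch of that layering, under stated assumptions: ArgResolution, Resolved and the parameter names are hypothetical, the lookup keys "spark.master" and "MASTER" are used as examples, and the final require is an illustrative check rather than Spark's actual validation.

```scala
object ArgResolution {
  // Hypothetical stand-in for SparkSubmitAction.
  object Action extends Enumeration {
    val SUBMIT, KILL, REQUEST_STATUS = Value
  }

  final case class Resolved(master: Option[String], action: Action.Value)

  def resolve(
      cliMaster: Option[String],         // value written on the command line, if any
      cliConf: Map[String, String],      // properties written on the command line
      defaultsFile: Map[String, String], // properties from the defaults file
      env: Map[String, String],          // environment variables
      cliAction: Action.Value            // null when no action was given
  ): Resolved = {
    // Defaults are merged first; the right-hand map wins, so explicit properties override them.
    val sparkProperties = defaultsFile ++ cliConf
    // A missing field falls back to the properties map, then to the environment.
    val master = cliMaster
      .orElse(sparkProperties.get("spark.master"))
      .orElse(env.get("MASTER"))
    // No action given means SUBMIT.
    val action = Option(cliAction).getOrElse(Action.SUBMIT)
    // Validate only after every default has been applied (illustrative check).
    require(master.isDefined, "a master must be specified")
    Resolved(master, action)
  }
}
```

For example, with no master on the command line, a defaults file containing spark.master and a MASTER environment variable, the value from the defaults file is chosen, and a null action resolves to SUBMIT.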

1.2 if (appArgs.verbose) { printStream.println(appArgs) }

"""If we explicitly passed the verbose option, so that verbose is true, the following fields are printed:"""

"""master deployMode executorMemory executorCores totalExecutorCores propertiesFile driverMemory driverCores

driverExtraClassPath driverExtraLibraryPath driverExtraJavaOptions supervise queue numExecutors files pyFiles

archives mainClass primaryResource name childArgs jars packages packagesExclusions repositories verbose"""

1.3 appArgs.action match {case SparkSubmitAction.SUBMIT => submit(appArgs)...}

"""Matches on the value of action (in fact a match on an enumeration type). When the value is SparkSubmitAction.SUBMIT, submit(appArgs) is executed."""

2.1 submit(args: SparkSubmitArguments)

    /**
     * Submit the application using the provided parameters.
     *
     * This runs in two steps. First, we prepare the launch environment by setting up
     * the appropriate classpath, system properties, and application arguments for
     * running the child main class based on the cluster manager and the deploy mode.
     * Second, we use this launch environment to invoke the main method of the child
     * main class.
     */
    private[spark] def submit(args: SparkSubmitArguments): Unit = {
      val (childArgs, childClasspath, sysProps, childMainClass) = prepareSubmitEnvironment(args)

      def doRunMain(): Unit = {
        if (args.proxyUser != null) {
          val proxyUser = UserGroupInformation.createProxyUser(args.proxyUser,
            UserGroupInformation.getCurrentUser())
          try {
            proxyUser.doAs(new PrivilegedExceptionAction[Unit]() {
              override def run(): Unit = {
                runMain(childArgs, childClasspath, sysProps, childMainClass, args.verbose)
              }
            })
          } catch {
            case e: Exception =>
              // Hadoop's AuthorizationException suppresses the exception's stack trace, which
              // makes the message printed to the output by the JVM not very helpful. Instead,
              // detect exceptions with empty stack traces here, and treat them differently.
              if (e.getStackTrace().length == 0) {
                printStream.println(s"ERROR: ${e.getClass().getName()}: ${e.getMessage()}")
                exitFn()
              } else {
                throw e
              }
          }
        } else {
          runMain(childArgs, childClasspath, sysProps, childMainClass, args.verbose)
        }
      }

      // ... (the rest of submit() is not shown in this excerpt)
    }

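In other words, submit() submits the application using the provided parameters, and it does so in two steps.

"""First, it prepares the launch environment by setting up the appropriate classpath, system properties and application arguments, so that the child main class can be run for the given cluster manager and deploy mode."""

"""Second, it uses this launch environment to invoke the main method of the child main class."""

val (childArgs, childClasspath, sysProps, childMainClass) = prepareSubmitEnvironment(args) // assigns the four values that make up the launch environment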

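In standalone cluster mode there are two submission gateways:

(1) The traditional Akka gateway, which uses o.a.s.deploy.Client as a wrapper

(2) The new REST-based gateway introduced in Spark 1.3

The latter is the default behavior as of Spark 1.3, but if the master endpoint turns out not to be a REST server, spark-submit fails over to the legacy gateway.

Depending on the deploy mode, submit() either runs directly or, on failover, resets the arguments and resubmits; in both cases it ends up executing doRunMain().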
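The failover between the two gateways can be sketched as "try REST first, and on a connection failure clear the flag and resubmit". The code below is a simplified illustration under stated assumptions: Args, RestConnectionException and the two function parameters are hypothetical placeholders, not Spark classes, and the real method works on SparkSubmitArguments.

```scala
object GatewayFailover {
  // Hypothetical exception meaning "the master endpoint is not a REST server".
  final class RestConnectionException(msg: String) extends Exception(msg)

  // Hypothetical, reduced argument holder; useRest is mutable so failover can reset it.
  final case class Args(master: String, var useRest: Boolean)

  // restSubmit and legacySubmit are placeholders for the two submission gateways.
  def submit(args: Args, restSubmit: Args => String, legacySubmit: Args => String): String = {
    if (args.useRest) {
      try {
        restSubmit(args)
      } catch {
        case _: RestConnectionException =>
          // Fail over: reset the argument and resubmit through the legacy gateway.
          args.useRest = false
          submit(args, restSubmit, legacySubmit)
      }
    } else {
      legacySubmit(args)
    }
  }
}
```

Resubmitting with the flag cleared, instead of calling the legacy path directly from the catch block, keeps a single entry point: the second call takes the else branch exactly as a submission that never requested REST would.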

2.1.1 doRunMain()

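If the proxyUser property is set, doRunMain() obtains the proxy user object and calls runMain(...) as that user through proxyUser.doAs; otherwise it calls runMain(childArgs, childClasspath, sysProps, childMainClass, args.verbose) directly.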
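The catch clause in the proxy-user branch treats an exception with an empty stack trace as a special case: it prints a one-line error instead of rethrowing. A small sketch of just that check follows; EmptyStackTraceCheck and summarize are hypothetical names, and returning an Option replaces the original's printing and exiting so the logic can be exercised without Hadoop.

```scala
object EmptyStackTraceCheck {
  // Returns a one-line error message when the exception carries no stack trace,
  // and None when the caller should rethrow the exception instead.
  def summarize(e: Exception): Option[String] =
    if (e.getStackTrace.length == 0) {
      Some(s"ERROR: ${e.getClass.getName}: ${e.getMessage}")
    } else {
      None
    }
}
```

An exception whose trace has been cleared, for example with setStackTrace(Array.empty[StackTraceElement]), yields a message, while an ordinarily constructed exception yields None.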

2.2 def runMain(childArgs: Seq[String], childClasspath: Seq[String], sysProps: Map[String, String], childMainClass: String, verbose: Boolean): Unit

"""Runs the main method of the child class using the provided launch environment. Note that if we are running in cluster deploy mode or running a Python application, this main class is not the class provided by the user."""

Thread.currentThread.setContextClassLoader(loader)

Sets loader as the context class loader of the current thread.

for (jar <- childClasspath) { addJarToClasspath(jar, loader) }

Adds every entry of childClasspath to the class loader; internally this calls loader.addURL(file.toURI.toURL).

for ((key, value) <- sysProps) { System.setProperty(key, value) }

Sets each of the prepared system properties on the JVM.

mainClass: Class[_] = Class.forName(childMainClass, true, loader)

Loads the main class by name through loader, using reflection.

val mainMethod = mainClass.getMethod("main", new Array[String](0).getClass)

Looks up the main method. The code that follows checks that it is static and otherwise fails with "The main method in the given main class must be static".

mainMethod.invoke(null, childArgs.toArray)

At this point the main method of the main class is invoked!
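Put together, the steps of runMain amount to a reflective launch through a custom class loader. The sketch below is a simplified, self-contained illustration and not Spark's implementation: RunMainSketch and MutableLoader are hypothetical names, the verbose flag and Spark's error handling are left out, and the loader is created inside the method, which is an assumption of this sketch.

```scala
import java.io.File
import java.lang.reflect.Modifier
import java.net.{URL, URLClassLoader}

object RunMainSketch {
  // URLClassLoader.addURL is protected, so this subclass makes it callable.
  final class MutableLoader(parent: ClassLoader)
      extends URLClassLoader(Array.empty[URL], parent) {
    override def addURL(url: URL): Unit = super.addURL(url)
  }

  def runMain(
      childArgs: Seq[String],
      childClasspath: Seq[String],
      sysProps: Map[String, String],
      childMainClass: String): Unit = {
    val loader = new MutableLoader(Thread.currentThread.getContextClassLoader)
    Thread.currentThread.setContextClassLoader(loader)

    // Make every classpath entry visible to the loader.
    for (jar <- childClasspath) loader.addURL(new File(jar).toURI.toURL)

    // Publish the prepared system properties.
    for ((key, value) <- sysProps) System.setProperty(key, value)

    // Load the class by name and look up main(String[]).
    val mainClass = Class.forName(childMainClass, true, loader)
    val mainMethod = mainClass.getMethod("main", classOf[Array[String]])
    if (!Modifier.isStatic(mainMethod.getModifiers)) {
      throw new IllegalStateException("The main method in the given main class must be static")
    }

    // A static method takes no receiver; the whole array is passed as the single argument.
    val argArray: Array[String] = childArgs.toArray
    mainMethod.invoke(null, argArray)
  }
}
```

Calling runMain with the fully qualified name of any class that has a static main(String[]) runs that method in the current JVM. A class without such a method fails at getMethod with a NoSuchMethodException, after the system properties have already been set.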
