ELK: processing MySQL slow query logs with logstash

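In production, logstash often has to handle logs in several formats, and each format needs its own parsing rules. This post walks through a multiline example: parsing the MySQL slow query log, a common task that raises a lot of questions online.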

The MySQL slow query log format looks like this:

    # User@Host: ttlsa[ttlsa] @ [10.4.10.12] Id: 69641319
    # Query_time: 0.000148 Lock_time: 0.000023 Rows_sent: 0 Rows_examined: 202
    SET timestamp=1456717595;
    select `Id`, `Url` from `File` where `Id` in ('201319', '201300');
    # Time: 160229 11:46:37

filebeat configuration

I am using filebeat 1.1.1 here. Earlier versions have no multiline option; for those, use the alternative setup described at the end.

    filebeat:
      prospectors:
        -
          paths:
            - /www.ttlsa.com/logs/mysql/slow.log
          document_type: mysqlslowlog
          input_type: log
          multiline:
            # A new entry starts at "# User@Host:"; every other line is
            # appended to the entry before it.
            pattern: '^# User@Host:'
            negate: true
            match: after
      registry_file: /var/lib/filebeat/registry
    output:
      logstash:
        hosts: ["10.6.66.14:5046"]
    shipper:
    logging:
      files:
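To make the effect of `negate: true` with `match: after` concrete, here is a minimal Python sketch of the grouping rule, not filebeat's actual implementation. The function name `join_multiline` is hypothetical, the start pattern is assumed to be `^# User@Host:`, and the sketch ignores filebeat details such as timeouts and line limits.

```python
import re
from typing import Iterable, Iterator


def join_multiline(lines: Iterable[str],
                   pattern: str = r"^# User@Host:") -> Iterator[str]:
    """Group raw log lines into events.

    A line matching `pattern` starts a new event; every line that does
    not match is appended to the event before it.
    """
    start = re.compile(pattern)
    buffer = []
    for line in lines:
        line = line.rstrip("\n")
        # A matching line closes the event collected so far.
        if start.search(line) and buffer:
            yield "\n".join(buffer)
            buffer = []
        buffer.append(line)
    # Flush whatever is left once the input ends.
    if buffer:
        yield "\n".join(buffer)
```

Note that under this rule the `# Time:` line that precedes the next entry ends up at the tail of the previous event, so the parsing stage has to tolerate it there.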

logstash configuration

1. input section

    # vi /etc/logstash/conf.d/01-beats-input.conf
    input {
      beats {
        port => 5046
        host => "10.6.66.14"
      }
    }

2. filter section

    # vi /etc/logstash/conf.d/16-mysqlslowlog.conf
    filter {
      if [type] == "mysqlslowlog" {
        grok {
          match => { "message" => "(?m)^#\s+User@Host:\s+%{USER:user}\[[^\]]+\]\s+@\s+(?:(?<clienthost>\S*) )?\[(?:%{IPV4:clientip})?\]\s+Id:\s+%{NUMBER:row_id:int}\n#\s+Query_time:\s+%{NUMBER:query_time:float}\s+Lock_time:\s+%{NUMBER:lock_time:float}\s+Rows_sent:\s+%{NUMBER:rows_sent:int}\s+Rows_examined:\s+%{NUMBER:rows_examined:int}\n\s*(?:use %{DATA:database};\s*\n)?SET\s+timestamp=%{NUMBER:timestamp};\n\s*(?<sql>(?<action>\w+)\b.*;)\s*(?:\n#\s+Time)?.*$" }
        }
        date {
          match => [ "timestamp", "UNIX", "YYYY-MM-dd HH:mm:ss"]
          remove_field => [ "timestamp" ]
        }
      }
    }

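The crux is the grok pattern. It has to match the whole multiline event, which is why it starts with (?m), so that . also matches newlines.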
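To see which fields the grok pattern pulls out of an event, the following Python sketch approximates it with the standard `re` module. It is an illustration under assumptions, not a drop-in equivalent: the grok patterns `USER`, `IPV4`, `NUMBER` and `DATA` are replaced by simplified character classes, `re.DOTALL` stands in for `(?m)`, and the names `SLOW_RE` and `parse_slow_event` are hypothetical. The last step mimics the date filter by turning the UNIX `timestamp` into `@timestamp` and dropping the original field.

```python
import re
from datetime import datetime, timezone
from typing import Optional

# The sample entry from this post, joined into one multiline event.
EVENT = "\n".join([
    "# User@Host: ttlsa[ttlsa] @ [10.4.10.12] Id: 69641319",
    "# Query_time: 0.000148 Lock_time: 0.000023 Rows_sent: 0 Rows_examined: 202",
    "SET timestamp=1456717595;",
    "select `Id`, `Url` from `File` where `Id` in ('201319', '201300');",
    "# Time: 160229 11:46:37",
])

NUM = r"\d+(?:\.\d+)?"  # simplified stand-in for grok's NUMBER

SLOW_RE = re.compile(
    r"^#\s+User@Host:\s+(?P<user>[\w.-]+)\[[^\]]+\]\s+@\s+"
    r"(?:(?P<clienthost>\S*) )?\[(?P<clientip>[\d.]*)\]"
    r"\s+Id:\s+(?P<row_id>\d+)\n"
    rf"#\s+Query_time:\s+(?P<query_time>{NUM})"
    rf"\s+Lock_time:\s+(?P<lock_time>{NUM})"
    r"\s+Rows_sent:\s+(?P<rows_sent>\d+)"
    r"\s+Rows_examined:\s+(?P<rows_examined>\d+)\n"
    r"\s*(?:use (?P<database>.*?);\s*\n)?"
    r"SET\s+timestamp=(?P<timestamp>\d+);\n"
    r"\s*(?P<sql>(?P<action>\w+)\b.*;)\s*(?:\n#\s+Time)?.*$",
    re.DOTALL,  # let "." cross newlines, like (?m) in the grok pattern
)

INT_FIELDS = ("row_id", "rows_sent", "rows_examined")
FLOAT_FIELDS = ("query_time", "lock_time")


def parse_slow_event(event: str) -> Optional[dict]:
    """Return the extracted fields, or None if the event does not match."""
    m = SLOW_RE.match(event)
    if m is None:
        return None  # logstash would tag such an event _grokparsefailure
    # Drop optional groups that did not take part in the match.
    fields = {k: v for k, v in m.groupdict().items() if v is not None}
    for name in INT_FIELDS:
        fields[name] = int(fields[name])
    for name in FLOAT_FIELDS:
        fields[name] = float(fields[name])
    # Like the date filter: parse the epoch, then remove the source field.
    epoch = int(fields.pop("timestamp"))
    fields["@timestamp"] = datetime.fromtimestamp(epoch, tz=timezone.utc)
    return fields
```

Calling `parse_slow_event(EVENT)` yields `user`, `clientip`, `row_id`, the timing and row counters, `sql`, `action` and an `@timestamp` of 2016-02-29 03:46:35 UTC. The greedy `.*;` in the `sql` group runs up to the last semicolon in the event, so the trailing `# Time:` line is left out of the captured statement.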

3. output section

    # vi /etc/logstash/conf.d/30-beats-output.conf
    output {
      if "_grokparsefailure" in [tags] {
        file { path => "/var/log/logstash/grokparsefailure-%{[type]}-%{+YYYY.MM.dd}.log" }
      }
      if [@metadata][type] in [ "mysqlslowlog" ] {
        elasticsearch {
          hosts => ["10.6.66.14:9200"]
          sniffing => true
          manage_template => false
          template_overwrite => true
          index => "%{[@metadata][beat]}-%{[type]}-%{+YYYY.MM.dd}"
          document_type => "%{[@metadata][type]}"
        }
      }
    }

Screenshot of the stdout output

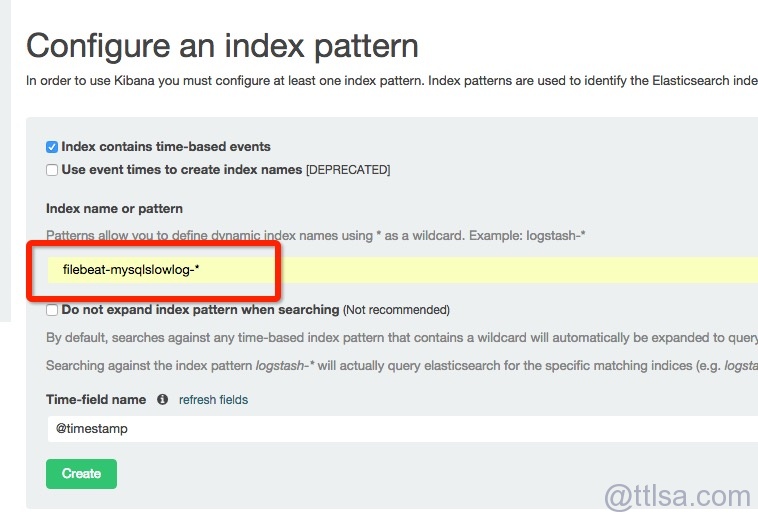

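Screenshot of the result in elasticsearch

If you are on a filebeat version earlier than 1.1.1, use the following configuration instead:

1. filebeat configuration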

    filebeat:
      prospectors:
        -
          paths:
            - /www.ttlsa.com/logs/mysql/slow.log
          document_type: mysqlslowlog
          input_type: log
      registry_file: /var/lib/filebeat/registry
    output:
      logstash:
        hosts: ["10.6.66.14:5046"]
    shipper:
    logging:
      files:

2. logstash input section

    input {
      beats {
        port => 5046
        host => "10.6.66.14"
        codec => multiline {
          pattern => "^# User@Host:"
          negate => true
          what => previous
        }
      }
    }

The rest of the configuration stays the same.

If you have questions, leave a comment or join the group to discuss.
