第三周 淘宝商品/股票数据定向爬虫

浏览器打开淘宝,搜索“书包”

第一页链接:https://s.taobao.com/search?q=书包&imgfile=&commend=all&ssid=s5-e&search_type=item&sourceId=tb.index&spm=&ie=utf8&initiative_id=tbindexz_20170306

第二页链接:https://s.taobao.com/search?q=书包&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20180713&ie=utf8&bcoffset=3&ntoffset=3&p4ppushleft=1%2C48&s=44

第三页链接:https://s.taobao.com/search?q=书包&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20180713&ie=utf8&bcoffset=0&ntoffset=6&p4ppushleft=1%2C48&s=88

观察链接可以看出每页商品有44个

import requests import re def getHTMLText(url): try: r=requests.get(url) r.raise_for_status() r.encoding=r.apparent_encoding return r.text except: print('') def parsePage(ilt, html): try: plt = re.findall(r'\"view_price\"\:\"[\d\.]*\"',html) tlt = re.findall(r'\"raw_title\"\:\".*?\"',html) for i in range(len(plt)): price = eval(plt[i].split(':')[1]) title = eval(tlt[i].split(':')[1]) ilt.append([price , title]) except: print("") def printGoodsList(ilt): tplt = "{:4}\t{:8}\t{:16}" print(tplt.format("序号", "价格", "商品名称")) count = 0 for g in ilt: count = count + 1 print(tplt.format(count, g[0], g[1])) def main(): goods = '电脑' depth = 2 start_url = 'https://s.taobao.com/search?q=' + goods infoList = [] for i in range(depth): try: url = start_url + '&s=' + str(44*i) html = getHTMLText(url) parsePage(infoList, html) except: continue printGoodsList(infoList) main()

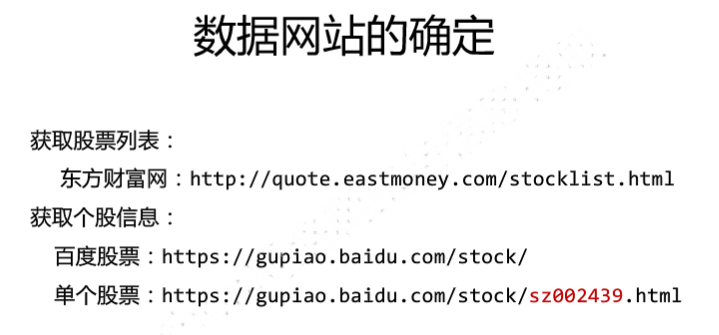

股票数据:

import requests from bs4 import BeautifulSoup import traceback import re def getHTMLText(url,code="utf-8"): try: r = requests.get(url) r.raise_for_status() r.encoding = code return r.text except: return "" def getStockList(lst, stockURL): html = getHTMLText(stockURL,"GB2312") soup = BeautifulSoup(html, 'html.parser') a = soup.find_all('a') for i in a: try: href = i.attrs['href'] lst.append(re.findall(r"[s][hz]\d{6}", href)[0]) except: continue def getStockInfo(lst, stockURL, fpath): count = 0 for stock in lst: url = stockURL + stock + ".html" html = getHTMLText(url) try: if html=="": continue infoDict = {} soup = BeautifulSoup(html, 'html.parser') stockInfo = soup.find('div',attrs={'class':'stock-bets'}) name = stockInfo.find_all(attrs={'class':'bets-name'})[0] infoDict.update({'股票名称': name.text.split()[0]}) keyList = stockInfo.find_all('dt') valueList = stockInfo.find_all('dd') for i in range(len(keyList)): key = keyList[i].text val = valueList[i].text infoDict[key] = val with open(fpath, 'a', encoding='utf-8') as f: f.write( str(infoDict) + '\n' ) count=count+1 print("\r当前进度:{:.2f}%".format(count*100/len(lst)),end="") except: traceback.print_exc() continue def main(): stock_list_url = 'http://quote.eastmoney.com/stocklist.html' stock_info_url = 'http://gupiao.baidu.com/stock/' output_file = 'D:/BaiduStockInfo.txt' slist=[] getStockList(slist, stock_list_url) getStockInfo(slist, stock_info_url, output_file) main()

浙公网安备 33010602011771号

浙公网安备 33010602011771号