Starting with Spark 2.0, Akka was replaced by Spark's own RPC framework, because the Akka version used in a user's Spark application could conflict with the Akka version built into Spark.

1 Key terms

- RpcEnv: The environment in which RPC Endpoints run.

- RpcEndpoint: Spark calls each communication entity an RPC Endpoint, and each one implements the RpcEndpoint interface. Different Endpoints define different messages and different business logic according to their needs.

- RpcEndpointRef: A reference to a remote RpcEndpoint. To send a message to a specific RpcEndpoint, we generally first obtain its reference and then send the message through that reference.

- RpcAddress: The address of the remote RpcEndpointRef, consisting of host and port.

- Dispatcher: The message distributor. It is responsible for delivering each RpcMessage to the corresponding RpcEndpoint: it finds the Inbox of the Endpoint by the Endpoint ID specified by the client and puts the message there. The Dispatcher contains a MessageLoop that reads from a LinkedBlockingQueue. Because the queue is blocking, the loop blocks while there is no message and starts working as soon as one arrives. The Dispatcher's thread pool is responsible for consuming these messages.

- Inbox: Each local Endpoint has one Inbox, which holds a list of InboxMessage objects. InboxMessage has many subclasses, such as OneWayMessage, RpcMessage, RemoteProcessConnected and the others shown in the following picture. Inside the Inbox, each message is pattern matched and handed to the corresponding RpcEndpoint function.

- Outbox: Each remote address corresponds to one Outbox; NettyRpcEnv holds a ConcurrentHashMap[RpcAddress, Outbox]. After a message is put into the Outbox, it is sent out through the TransportClient.
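The Outbox entry can be pictured with a small toy model. This is only a sketch in Python, not Spark's Scala code: `ToyOutbox`, `FakeClient` and `outbox_for` are hypothetical names, a plain dict stands in for the ConcurrentHashMap[RpcAddress, Outbox], and the step of buffering messages until a client is connected is an assumption added for illustration.

```python
from collections import deque


class FakeClient:
    """Hypothetical stand-in for a connected transport client."""

    def __init__(self):
        self.sent = []

    def send(self, message):
        self.sent.append(message)


class ToyOutbox:
    """Toy outbox for one remote address: buffer messages, drain once connected."""

    def __init__(self, address):
        self.address = address
        self.messages = deque()
        self.client = None  # no connection yet

    def send(self, message):
        self.messages.append(message)
        self.drain()

    def connect(self, client):
        self.client = client
        self.drain()

    def drain(self):
        # Nothing can leave the outbox until a client exists (assumption of this sketch).
        if self.client is None:
            return
        while self.messages:
            self.client.send(self.messages.popleft())


# Plays the role of the address-to-outbox map; keys are (host, port) tuples.
outboxes = {}


def outbox_for(address):
    """Return the single outbox for an address, creating it on first use."""
    if address not in outboxes:
        outboxes[address] = ToyOutbox(address)
    return outboxes[address]
```

Calling `outbox_for(("host-a", 7077))` twice returns the same object, which mirrors the idea of one Outbox per address.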

2 RpcEnv

A Spark program starts from the SparkContext. When the SparkContext is initialized, it creates a SparkEnv through the createSparkEnv method, and one step in creating the SparkEnv is to create the RpcEnv. The create method of SparkEnv builds a SparkEnv for either the driver or an executor.

2.1 Create RpcEnv

RpcEnv is an abstract class that defines the RPC framework's start, stop, close and other abstract methods. Two create methods are defined in its companion object, RpcEnv.

In Spark, the implementation of RpcEnv is NettyRpcEnv, and the implementation of RpcEnvFactory is NettyRpcEnvFactory.

2.2 RpcEnv start

The RpcEnv is started in the create method of NettyRpcEnvFactory.

2.3 setupEndpoint

In RpcEnv, the method to focus on is setupEndpoint, which registers an RpcEndpoint with the Dispatcher. A name must be specified at registration; clients are routed to the Endpoint by this name.
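Registration by name can be illustrated with a minimal sketch. It is a toy model in Python rather than Spark's implementation: `ToyRegistry`, `setup_endpoint` and `lookup` are hypothetical names, and rejecting a duplicate name is an assumption of the sketch.

```python
class ToyRegistry:
    """Toy name-to-endpoint table, standing in for the dispatcher's registration step."""

    def __init__(self):
        self.endpoints = {}  # name -> endpoint object

    def setup_endpoint(self, name, endpoint):
        # Each name may be registered only once (assumption of this sketch).
        if name in self.endpoints:
            raise ValueError(f"there is already an endpoint called {name}")
        self.endpoints[name] = endpoint
        return name

    def lookup(self, name):
        """Route by name; returns None when no such endpoint is registered."""
        return self.endpoints.get(name)
```

A client that knows only the name, for example `registry.lookup("heartbeat")`, reaches whatever endpoint was registered under it.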

3 RpcEndpoint

In Spark, RpcEndpoint is the abstraction of all communication entities. RpcEndpoint is a trait that defines the functions triggered when a message is received.
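To make the idea of "functions triggered when a message is received" concrete, here is a small Python sketch. It only loosely mirrors the shape of an endpoint: `ToyEndpoint`, `CounterEndpoint` and the snake_case method names are hypothetical, and passing the reply as a callback is a simplification chosen for this example.

```python
class ToyEndpoint:
    """Toy endpoint base class: one method per kind of event."""

    def on_start(self):
        """Called once before any message is handled."""

    def receive(self, message):
        """Handle a one-way message; no reply is expected."""
        raise NotImplementedError(f"unexpected message: {message!r}")

    def receive_and_reply(self, message, reply):
        """Handle a request and answer it by calling reply(value)."""
        raise NotImplementedError(f"unexpected request: {message!r}")

    def on_stop(self):
        """Called once after the last message."""


class CounterEndpoint(ToyEndpoint):
    """Example endpoint with its own messages and business logic."""

    def __init__(self):
        self.count = 0
        self.started = False

    def on_start(self):
        self.started = True

    def receive(self, message):
        if message == "increment":
            self.count += 1
        else:
            super().receive(message)

    def receive_and_reply(self, message, reply):
        if message == "get":
            reply(self.count)
        else:
            super().receive_and_reply(message, reply)
```

Each endpoint decides which messages it understands, which matches the earlier point that different Endpoints design different messages and processing.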

4 RpcEndpointRef

An RpcEndpointRef is a reference to an RpcEndpoint. To send a request to a remote RpcEndpoint, you must first obtain its RpcEndpointRef.

An RpcEndpointRef identifies its Endpoint by host, port and name, in an address of the form spark://name@host:port.

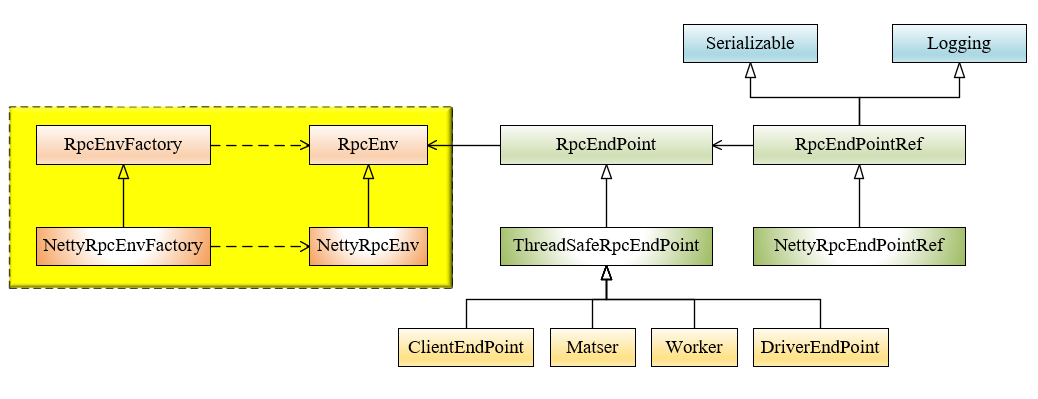

5 Class diagram of RpcEnv and RpcEndpoint

- For the server, RpcEnv is the running environment of the RpcEndpoint and is responsible for managing the Endpoint's whole life cycle. It registers Endpoints, parses and deserializes the data packets from the TCP layer, wraps them into RpcMessage objects, routes each request to the specified Endpoint, and invokes the business logic. If the Endpoint needs to respond, RpcEnv serializes the returned object and transmits it to the remote Endpoint through the TCP layer. If the Endpoint throws an exception, RpcCallContext.sendFailure is invoked to send the exception back.

- For the client, RpcEnv is used to obtain a reference to an RpcEndpoint, that is, an RpcEndpointRef.

- RpcEnvFactory is responsible for creating the RpcEnv. Currently, NettyRpcEnvFactory is the only subclass of RpcEnvFactory. When NettyRpcEnvFactory.create is invoked, the server starts immediately on the port of the bind address.

- NettyRpcEnv is created by NettyRpcEnvFactory.create and is the bridge between Spark core and org.apache.spark.spark-network-common. Its core method setupEndpoint registers an Endpoint with the Dispatcher, while setupEndpointRef first invokes RpcEndpointVerifier to verify that the Endpoint exists, locally or remotely, and then creates the RpcEndpointRef.
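The server-side path described in the first bullet (deserialize, route, invoke, then serialize either the reply or the failure) can be sketched as a single function. This is a toy under stated assumptions: `handle_request` is a hypothetical name, Python's `pickle` stands in for Spark's serializer, endpoints are plain callables, and a `("failure", ...)` tuple stands in for what RpcCallContext.sendFailure would send back.

```python
import pickle


def handle_request(endpoints, payload):
    """Deserialize a request, route it by endpoint name, and serialize the outcome.

    endpoints: dict mapping endpoint name -> callable(message) -> result
    payload:   bytes holding a pickled (name, message) pair
    """
    name, message = pickle.loads(payload)
    try:
        endpoint = endpoints[name]   # route to the specified endpoint
        result = endpoint(message)   # invoke the business logic
        return pickle.dumps(("ok", result))
    except Exception as exc:
        # Send the failure back instead of a result.
        return pickle.dumps(("failure", repr(exc)))
```

For example, with `endpoints = {"upper": str.upper}`, the request `pickle.dumps(("upper", "ping"))` produces a reply that unpickles to `("ok", "PING")`.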

6 Dispatcher and Inbox

NettyRpcEnv contains a Dispatcher, which the server mainly uses to route each message to the correct RpcEndpoint and invoke its business logic.

6.1 Important attributes in dispatcher

- EndpointData: its properties are name, RpcEndpoint and NettyRpcEndpointRef. Every RpcEndpoint has an Inbox in which its InboxMessage objects are saved.

- endpoints: a ConcurrentMap whose key is the name of the RpcEndpoint and whose value is the EndpointData.

- endpointRefs: a ConcurrentMap whose key is the RpcEndpoint and whose value is the RpcEndpointRef.

- receivers: a LinkedBlockingQueue whose elements are EndpointData.

6.2 Scheduling Principle Of Dispatcher

A thread pool is created when the Dispatcher is constructed. In each MessageLoop thread, whenever receivers contains an EndpointData, the thread takes it from receivers and then processes the InboxMessage objects taken from that EndpointData's Inbox.
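The scheduling loop can be modelled in a few lines of Python. This is a simplified sketch, not Spark's code: `ToyInbox` and `ToyDispatcher` are hypothetical names, `queue.Queue` plays the role of the blocking receivers queue, the inbox itself is queued instead of an EndpointData wrapper, and the per-inbox lock is an assumption added so that one inbox is never processed by two threads at once.

```python
import queue
import threading
from collections import deque


class ToyInbox:
    """Toy inbox: a FIFO of messages plus the handler that consumes them."""

    def __init__(self, handler):
        self.handler = handler
        self.messages = deque()
        self.processing = threading.Lock()

    def post(self, message):
        self.messages.append(message)

    def process(self):
        # Only one thread drains a given inbox at a time, so order is preserved.
        with self.processing:
            while self.messages:
                self.handler(self.messages.popleft())


class ToyDispatcher:
    """Toy dispatcher: worker threads block on `receivers` until an inbox has work."""

    def __init__(self, num_threads=2):
        self.endpoints = {}             # name -> ToyInbox
        self.receivers = queue.Queue()  # inboxes with pending messages
        for _ in range(num_threads):
            threading.Thread(target=self._message_loop, daemon=True).start()

    def register(self, name, handler):
        self.endpoints[name] = ToyInbox(handler)

    def post_message(self, name, message):
        inbox = self.endpoints[name]
        inbox.post(message)
        self.receivers.put(inbox)  # wake up one message loop

    def _message_loop(self):
        while True:
            inbox = self.receivers.get()  # blocks while the queue is empty
            try:
                inbox.process()
            finally:
                self.receivers.task_done()
```

In this sketch the worker threads are daemon threads that never exit on their own; `dispatcher.receivers.join()` can be used to wait until everything posted so far has been handled.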

6.3 Message source for inbox

(1) registerRpcEndpoint

When an Endpoint is registered, its EndpointData is put into receivers. Each time a new EndpointData is created, a corresponding Inbox is created with it. An OnStart message is added to the messages list of that Inbox, and a MessageLoop thread consumes this message.

(2) unregisterRpcEndpoint

When an Endpoint is unregistered, its EndpointData is removed from endpoints. The Inbox then appends an OnStop message to messages, and after receivers.offer(data) a MessageLoop thread processes it.
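The life cycle implied by registration and unregistration (OnStart first, ordinary messages in between, OnStop last) can be shown with a single-threaded sketch. `LifecycleInbox` is a hypothetical name, the sentinels only imitate the OnStart and OnStop messages, and refusing messages posted after `stop()` is an assumption of this example.

```python
ON_START = object()  # sentinel imitating the OnStart message
ON_STOP = object()   # sentinel imitating the OnStop message


class LifecycleInbox:
    """Toy inbox whose message list begins with OnStart and ends with OnStop."""

    def __init__(self, events):
        self.events = events        # records what the "endpoint" saw, in order
        self.messages = [ON_START]  # OnStart is queued as soon as the inbox exists
        self.stopped = False

    def post(self, message):
        if self.stopped:
            return False            # message arrives too late and is not queued
        self.messages.append(message)
        return True

    def stop(self):
        if not self.stopped:
            self.stopped = True
            self.messages.append(ON_STOP)  # OnStop is always the last message

    def process(self):
        while self.messages:
            message = self.messages.pop(0)
            if message is ON_START:
                self.events.append("started")
            elif message is ON_STOP:
                self.events.append("stopped")
            else:
                self.events.append(f"received {message}")
```

Because OnStart sits in the list from construction time, it is handled before any message posted later, even if processing is delayed.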

(3) postMessage, which submits a message to the specified EndpointData

(4) Stop Dispatcher

This stops all Endpoints: it first unregisters every Endpoint and then enqueues a message that tells the message loops to stop.
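A common way to implement "a message that tells the message loops to stop" is a sentinel value in the queue, often called a poison pill. The sketch below shows that pattern in Python; `POISON_PILL`, `message_loop` and `run_and_stop` are hypothetical names, and putting the pill back so that every loop sees it is an assumption of this sketch rather than something stated above.

```python
import queue
import threading

POISON_PILL = object()  # sentinel meaning "stop the message loop"


def message_loop(receivers, handled):
    """Consume items until the poison pill arrives."""
    while True:
        item = receivers.get()
        if item is POISON_PILL:
            # Put the pill back so the other loops also see it and stop.
            receivers.put(POISON_PILL)
            return
        handled.append(item)


def run_and_stop(items, num_threads=3):
    """Start the loops, feed them the items, then stop them with one pill."""
    receivers = queue.Queue()
    handled = []
    threads = [
        threading.Thread(target=message_loop, args=(receivers, handled))
        for _ in range(num_threads)
    ]
    for thread in threads:
        thread.start()
    for item in items:
        receivers.put(item)
    receivers.put(POISON_PILL)  # enqueued last, so earlier items are taken first
    for thread in threads:
        thread.join()           # every loop has exited once this returns
    return handled
```

One pill is enough for any number of loops because each loop re-enqueues it before exiting; the order in which different threads record the items is not guaranteed.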

6.4 Dispatcher and inbox request flowchart

7 Outbox
