MNIST Handwritten Digit Recognition

1. Set up the environment: tensorflow + matplotlib

Installing the matplotlib library: https://blog.csdn.net/jiaoyangwm/article/details/79252845

2. Download the data:

http://yann.lecun.com/exdb/mnist/

3. Main steps:

1) Load the data and parse the files
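To make the parsing step concrete, here is a minimal sketch of reading the IDX format that the MNIST files use. It assumes the files have already been decompressed into bytes; the function names (`parse_idx_images`, `parse_idx_labels`, `one_hot`) are made up for illustration and are not part of `input_data.py`. The demo data is synthetic, not real MNIST.

```python
import struct

IMAGE_MAGIC = 2051  # magic number of IDX3 image files
LABEL_MAGIC = 2049  # magic number of IDX1 label files


def parse_idx_images(raw):
    """Parse uncompressed IDX3 bytes into a list of flat pixel lists."""
    # Header: four big-endian unsigned 32-bit ints.
    magic, count, rows, cols = struct.unpack(">IIII", raw[:16])
    if magic != IMAGE_MAGIC:
        raise ValueError("unexpected image magic number %d" % magic)
    size = rows * cols
    body = raw[16:16 + count * size]
    if len(body) != count * size:
        raise ValueError("image file is truncated")
    return [list(body[i * size:(i + 1) * size]) for i in range(count)]


def parse_idx_labels(raw):
    """Parse uncompressed IDX1 bytes into a list of integer labels."""
    magic, count = struct.unpack(">II", raw[:8])
    if magic != LABEL_MAGIC:
        raise ValueError("unexpected label magic number %d" % magic)
    body = raw[8:8 + count]
    if len(body) != count:
        raise ValueError("label file is truncated")
    return list(body)


def one_hot(label, num_classes=10):
    """Encode a digit as a one-hot vector, like one_hot=True does."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


# Synthetic demo: two 2x2 "images" and their labels.
demo_images = struct.pack(">IIII", IMAGE_MAGIC, 2, 2, 2) + bytes([0, 255, 128, 64, 1, 2, 3, 4])
demo_labels = struct.pack(">II", LABEL_MAGIC, 2) + bytes([7, 3])

images = parse_idx_images(demo_images)
labels = parse_idx_labels(demo_labels)
print(images, labels, one_hot(labels[1]))
```

For the real downloads, which are gzip-compressed, the bytes could be obtained with something like `gzip.open(path, 'rb').read()` before calling these functions.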

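2) Build the CNN

(1) The LeNet5-style network consists of an input layer, convolutional layer CONV5-32, pooling layer POOL2, convolutional layer CONV5-64, pooling layer POOL2, fully connected layer fc1, and output layer fc. (The original LeNet-5 used far fewer feature maps, 6 and 16.)

The improved LeNet5 uses ReLU as its activation function, adds a dropout layer to prevent overfitting, and ends in a softmax classification layer. The output shape and parameter count of each layer are: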

INPUT: [28x28x1] weights: 0

CONV5-32: [28x28x32] weights: (5*5*1+1)*32

POOL2: [14x14x32] weights: 0 (2x2 max pooling has no trainable parameters)

CONV5-64: [14x14x64] weights: (5*5*32+1)*64

POOL2: [7x7x64] weights: 0 (2x2 max pooling has no trainable parameters)

FC: [1x1x1024] weights: (7*7*64+1)*1024

FC: [1x1x10] weights: (1*1*1024+1)*10
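The shapes and parameter counts in this list can be checked with a few lines of arithmetic. The sketch below assumes 'SAME' padding with stride 1 for the convolutions and 2x2 pooling with stride 2, and that the output layer takes the 1024 outputs of fc1, as in the implementation in section 5. The helper names are hypothetical.

```python
def conv_same(shape, kernel, out_channels):
    """Stride-1 'SAME' convolution: spatial size unchanged."""
    h, w, c = shape
    # One kernel*kernel*c filter plus one bias per output channel.
    params = (kernel * kernel * c + 1) * out_channels
    return (h, w, out_channels), params


def pool_same(shape, stride):
    """'SAME' max pooling: size is divided by the stride, rounded up."""
    h, w, c = shape
    return (-(-h // stride), -(-w // stride), c), 0  # no trainable parameters


def dense(shape, units):
    """Fully connected layer on the flattened input."""
    h, w, c = shape
    return (1, 1, units), (h * w * c + 1) * units


def summarize(input_shape=(28, 28, 1)):
    layers = [
        ("CONV5-32", conv_same, (5, 32)),
        ("POOL2", pool_same, (2,)),
        ("CONV5-64", conv_same, (5, 64)),
        ("POOL2", pool_same, (2,)),
        ("FC1", dense, (1024,)),
        ("FC2", dense, (10,)),
    ]
    rows, shape = [], input_shape
    for name, fn, args in layers:
        shape, params = fn(shape, *args)
        rows.append((name, shape, params))
    return rows


rows = summarize()
total = sum(params for _, _, params in rows)
for name, shape, params in rows:
    print("%-9s %-14s %d" % (name, shape, params))
print("total trainable parameters:", total)
```

Almost all of the parameters sit in the first fully connected layer, which is one reason dropout is applied there.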

(2)

(3)

3) Define the loss function

Softmax loss (softmax followed by cross-entropy)
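As a rough illustration of what the softmax loss computes for a single example, here is a plain-Python sketch. It uses the log-sum-exp trick for numerical stability, which is an addition of this sketch; the implementation in section 5 instead takes `tf.log` of the softmax output directly and sums over the whole batch.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max so exp() cannot overflow
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, one_hot_label):
    """Cross-entropy between softmax(logits) and a one-hot label."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    # log softmax(z_i) = z_i - log_sum, so no log(0) can occur.
    return -sum(t * (z - log_sum) for z, t in zip(logits, one_hot_label))


label = [0.0] * 10
label[3] = 1.0
uniform_loss = cross_entropy([0.0] * 10, label)  # network knows nothing yet
confident_loss = cross_entropy([1000.0, 0.0, 0.0], [1.0, 0.0, 0.0])
print(uniform_loss, confident_loss)
```

With ten equally likely classes the loss equals ln(10), which is a handy sanity check for the loss value at the very start of training.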

4) Configure the optimizer

Options include gradient descent, Adam, momentum, Adagrad, FTRL, and RMSProp.
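To show how three of these optimizers differ, the sketch below applies plain gradient descent, momentum, and Adam to a toy one-dimensional loss f(w) = (w - 3)^2. The toy loss, the learning rates, and the function names are assumptions chosen for illustration; the Adam update follows the standard formulation with bias correction, not any particular TensorFlow internals.

```python
import math


def grad(w):
    """Gradient of the toy loss f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


def run_sgd(w=0.0, lr=0.1, steps=500):
    for _ in range(steps):
        w -= lr * grad(w)
    return w


def run_momentum(w=0.0, lr=0.1, beta=0.9, steps=500):
    velocity = 0.0
    for _ in range(steps):
        # Accumulate a decaying sum of past gradients.
        velocity = beta * velocity + grad(w)
        w -= lr * velocity
    return w


def run_adam(w=0.0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g        # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g    # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)           # bias correction for early steps
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w


w_sgd = run_sgd()
w_momentum = run_momentum()
w_adam = run_adam()
print(w_sgd, w_momentum, w_adam)
```

All three should end up close to the minimum at w = 3; they differ in how the step size adapts along the way.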

5) Train and test

6) Visualize with TensorBoard

4. Detailed explanation

http://wiki.jikexueyuan.com/project/tensorflow-zh/tutorials/mnist_beginners.html

5. Implementation

MNIST handwritten digit recognition with a multi-layer neural network:

    import input_data  # helper script downloaded separately; see the link at the end
    import tensorflow as tf

    # Directory where the downloaded MNIST dataset is stored
    mnist = input_data.read_data_sets('F:/PycharmProjects/untitled2/tensorflow/MINIST_data', one_hot=True)

    x = tf.placeholder(tf.float32, [None, 784])   # flattened 28x28 images
    y_ = tf.placeholder(tf.float32, [None, 10])   # one-hot labels

    def weight_variable(shape):
        initial = tf.truncated_normal(shape, stddev=0.1)
        return tf.Variable(initial)

    def bias_variable(shape):
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)

    def conv2d(x, W):
        return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

    def max_pool_2x2(x):
        return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

    # First conv + pool layer: 28x28x1 -> 14x14x32
    W_conv1 = weight_variable([5, 5, 1, 32])
    b_conv1 = bias_variable([32])
    x_image = tf.reshape(x, [-1, 28, 28, 1])
    h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
    h_pool1 = max_pool_2x2(h_conv1)

    # Second conv + pool layer: 14x14x32 -> 7x7x64
    W_conv2 = weight_variable([5, 5, 32, 64])
    b_conv2 = bias_variable([64])
    h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
    h_pool2 = max_pool_2x2(h_conv2)

    # Fully connected layer with dropout
    W_fc1 = weight_variable([7 * 7 * 64, 1024])
    b_fc1 = bias_variable([1024])
    h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
    h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)
    keep_prob = tf.placeholder("float")
    h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

    # Softmax output layer
    W_fc2 = weight_variable([1024, 10])
    b_fc2 = bias_variable([10])
    y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

    # Loss, optimizer, and accuracy
    cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))
    train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
    correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

    saver = tf.train.Saver()  # define the saver

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        for i in range(2000):
            batch = mnist.train.next_batch(50)
            if i % 100 == 0:
                train_accuracy = accuracy.eval(feed_dict={
                    x: batch[0], y_: batch[1], keep_prob: 1.0})
                print('step %d, training accuracy %g' % (i, train_accuracy))
            train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})
        # Where the trained model is saved
        saver.save(sess, 'F:/PycharmProjects/untitled2/tensorflow/MINIST_data/model.ckpt')
        print('test accuracy %g' % accuracy.eval(feed_dict={
            x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))
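The `keep_prob` placeholder above is fed 0.5 during training and 1.0 during evaluation. A minimal pure-Python sketch of what that means is given below; it mirrors the "inverted dropout" behaviour of `tf.nn.dropout` in TF 1.x, where kept activations are scaled by 1/keep_prob. The `dropout` function here is a hypothetical stand-in written for illustration, not TensorFlow's implementation.

```python
import random


def dropout(values, keep_prob, rng=random):
    """Keep each value with probability keep_prob and rescale the survivors."""
    if not 0.0 < keep_prob <= 1.0:
        raise ValueError("keep_prob must be in (0, 1]")
    # Scaling by 1/keep_prob keeps the expected activation unchanged,
    # so nothing has to be rescaled at test time.
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)  # fixed seed so the demo is repeatable
train_out = dropout([1.0] * 1000, keep_prob=0.5, rng=rng)
test_out = dropout([1.0, 2.0, 3.0], keep_prob=1.0, rng=rng)
print(sum(train_out) / len(train_out), test_out)
```

With `keep_prob=1.0` the layer is a no-op, which is why evaluation feeds 1.0.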

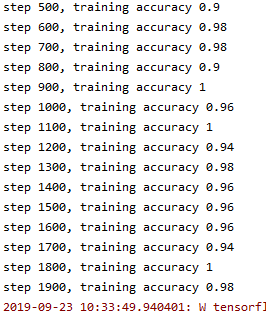

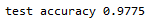

Output:

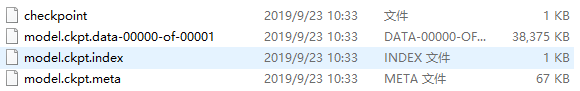

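CNN model files:

Some pitfalls I fell into:

Pitfall 1:

Error: ImportError: No module named examples.tutorials.mnist.input_data

The fix is to download input_data.py from the official TensorFlow site; a copy that can be grabbed from within China is at: http://blog.csdn.net/fdbptha/article/details/51265430.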

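Pitfall 2 (the consequence of installing TF 2.0: the API changed so much that I could not get it working and had to reinstall an older TF):

Error: AttributeError: module 'tensorflow' has no attribute 'placeholder'

Replace import tensorflow as tf

with: import tensorflow.compat.v1 as tf

That led to a new error, since TF 2.x still runs eagerly by default and the compat import alone does not turn that off: RuntimeError: tf.placeholder() is not compatible with eager execution.

After reinstalling TF 1.15 everything ran fine. I was moved to tears T_T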

