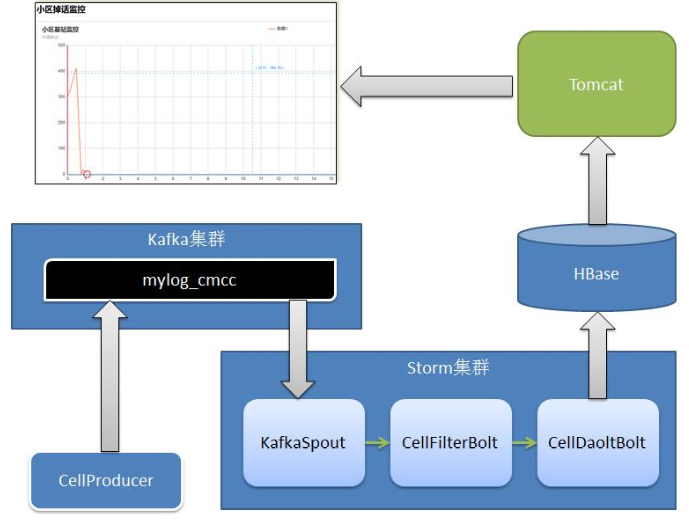

22 Flume+Kafka+Storm Case Study

1 Integration demo

1.1 Flume setup

- 1) In Flume's conf directory, create fk.conf

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = avro

a1.sources.r1.bind = master

a1.sources.r1.port = 41414

# Describe the sink

a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink

a1.sinks.k1.topic = testflume

a1.sinks.k1.brokerList = master:9092,slave1:9092,slave2:9092

a1.sinks.k1.requiredAcks = 1

a1.sinks.k1.batchSize = 20

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000000

a1.channels.c1.transactionCapacity = 10000

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

- 2) Start the agent

bin/flume-ng agent -n a1 -c conf -f conf/fk.conf -Dflume.root.logger=DEBUG,console

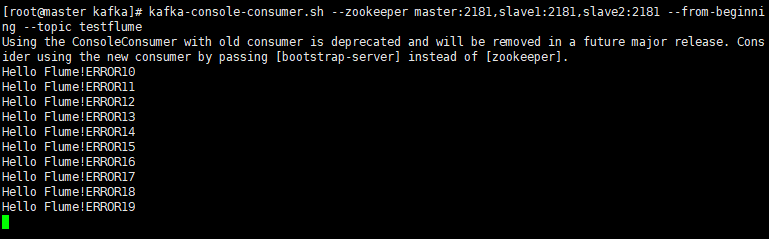
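A Flume agent file is a Java properties file, so the channel wiring in fk.conf can be sanity-checked before the agent is started. The following is a minimal sketch and not part of Flume: the class `FlumeConfCheck` and the method `danglingChannels` are hypothetical names, and the check only looks at the `<agent>.channels`, `<agent>.sources.<name>.channels` and `<agent>.sinks.<name>.channel` keys used in the file above.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Arrays;
import java.util.HashSet;
import java.util.Properties;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical helper (not part of Flume): reports channel names that a
// source or sink refers to but that the agent never declares.
class FlumeConfCheck {

    static Set<String> danglingChannels(String confText, String agent) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(confText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }

        // Channels declared on the "<agent>.channels" line (space separated).
        Set<String> declared = new HashSet<>(
                Arrays.asList(props.getProperty(agent + ".channels", "").trim().split("\\s+")));

        Set<String> dangling = new TreeSet<>();
        for (String key : props.stringPropertyNames()) {
            boolean sourceBinding = key.startsWith(agent + ".sources.") && key.endsWith(".channels");
            boolean sinkBinding = key.startsWith(agent + ".sinks.") && key.endsWith(".channel");
            if (!sourceBinding && !sinkBinding) {
                continue;
            }
            for (String channel : props.getProperty(key).trim().split("\\s+")) {
                if (!declared.contains(channel)) {
                    dangling.add(channel);
                }
            }
        }
        return dangling;
    }

    public static void main(String[] args) {
        String conf = String.join("\n",
                "a1.sources = r1",
                "a1.sinks = k1",
                "a1.channels = c1",
                "a1.sources.r1.channels = c1",
                "a1.sinks.k1.channel = c1");
        // An empty set means every binding points at a declared channel.
        System.out.println(danglingChannels(conf, "a1"));
    }
}
```

With the bindings from fk.conf the result is an empty set. A misspelled channel name in a sink or source binding would show up in the returned set.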

1.2 Kafka operations

Start the consumers (one for the testflume topic, one for the LogError topic)

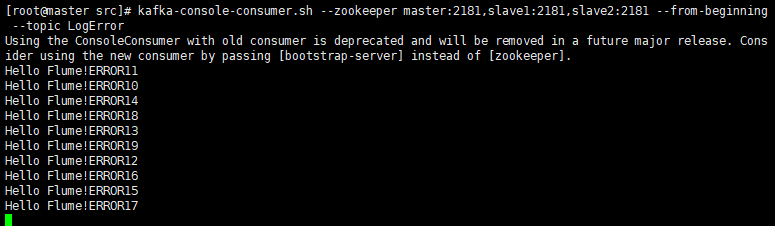

kafka-console-consumer.sh --zookeeper master:2181,slave1:2181,slave2:2181 --from-beginning --topic testflume

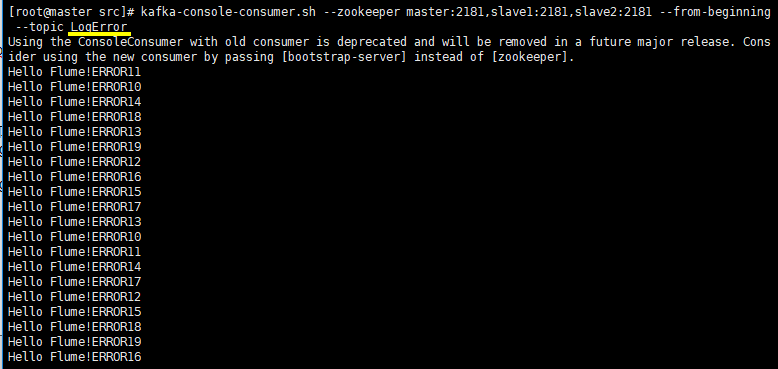

kafka-console-consumer.sh --zookeeper master:2181,slave1:2181,slave2:2181 --from-beginning --topic LogError

1.3 IDEA operations

- pom.xml (comment out the scope element so the dependency is available when running locally)

<dependency>

<groupId>org.apache.flume</groupId>

<artifactId>flume-ng-core</artifactId>

<version>1.6.0</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.11</artifactId>

<version>1.1.1</version>

</dependency>

<dependency>

<groupId>org.apache.storm</groupId>

<artifactId>storm-kafka</artifactId>

<version>0.9.3</version>

<!-- <scope>provided</scope> -->

</dependency>

- Flume+Kafka example from the official Flume site: RpcClientDemo.java

import org.apache.flume.Event;

import org.apache.flume.EventDeliveryException;

import org.apache.flume.api.RpcClient;

import org.apache.flume.api.RpcClientFactory;

import org.apache.flume.event.EventBuilder;

import java.nio.charset.Charset;

/**

* Example from the official Flume documentation

* http://flume.apache.org/FlumeDeveloperGuide.html

* @author root

*/

public class RpcClientDemo {

public static void main(String[] args) {

MyRpcClientFacade client = new MyRpcClientFacade();

// Initialize client with the remote Flume agent's host and port

client.init("192.168.74.10", 41414);

// Send 10 events to the remote Flume agent. That agent should be

// configured to listen with an AvroSource.

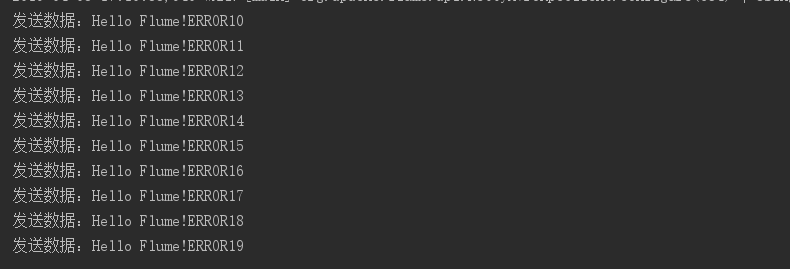

for (int i = 10; i < 20; i++) {

String sampleData = "Hello Flume!ERROR" + i;

client.sendDataToFlume(sampleData);

System.out.println("Sent data: " + sampleData);

}

client.cleanUp();

}

}

class MyRpcClientFacade {

private RpcClient client;

private String hostname;

private int port;

public void init(String hostname, int port) {

// Setup the RPC connection

this.hostname = hostname;

this.port = port;

this.client = RpcClientFactory.getDefaultInstance(hostname, port);

// Use the following method to create a thrift client (instead of the

// above line):

// this.client = RpcClientFactory.getThriftInstance(hostname, port);

}

public void sendDataToFlume(String data) {

// Create a Flume Event object that encapsulates the sample data

Event event = EventBuilder.withBody(data, Charset.forName("UTF-8"));

// Send the event

try {

client.append(event);

} catch (EventDeliveryException e) {

// clean up and recreate the client

client.close();

client = null;

client = RpcClientFactory.getDefaultInstance(hostname, port);

// Use the following method to create a thrift client (instead of

// the above line):

// this.client = RpcClientFactory.getThriftInstance(hostname, port);

}

}

public void cleanUp() {

// Close the RPC connection

client.close();

}

}

- Test (check the local console output and the Kafka consumer output)
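Note that `sendDataToFlume` above recreates the client after an `EventDeliveryException` but does not resend the event that failed. The following is a minimal sketch of a reconnect-and-retry variant, written without Flume on the classpath: the nested `EventSender` interface is a stand-in for the small part of `RpcClient` used here, and `RetryingFacade`, `send` and `maxAttempts` are hypothetical names introduced for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

// Hypothetical facade: reconnects after a failed append and retries the same
// event, up to maxAttempts attempts in total.
class RetryingFacade {

    // Stand-in for the part of Flume's RpcClient used here (an assumption,
    // so that the sketch compiles without Flume).
    interface EventSender {
        void append(String data) throws Exception;
        void close();
    }

    private final Supplier<EventSender> connector;
    private final int maxAttempts;
    private EventSender sender;

    RetryingFacade(Supplier<EventSender> connector, int maxAttempts) {
        this.connector = connector;
        this.maxAttempts = maxAttempts;
        this.sender = connector.get();
    }

    // Returns true once the event is accepted, false if every attempt failed.
    boolean send(String data) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                sender.append(data);
                return true;
            } catch (Exception e) {
                // Same recovery as the demo: close and recreate the client,
                // then loop so the failed event is sent again.
                sender.close();
                sender = connector.get();
            }
        }
        return false;
    }

    void cleanUp() {
        sender.close();
    }

    public static void main(String[] args) {
        List<String> delivered = new ArrayList<>();
        int[] calls = {0};
        // Fake sender whose very first append fails, to exercise the retry.
        Supplier<EventSender> connector = () -> new EventSender() {
            public void append(String data) throws Exception {
                if (calls[0]++ == 0) {
                    throw new Exception("simulated delivery failure");
                }
                delivered.add(data);
            }
            public void close() { }
        };
        RetryingFacade facade = new RetryingFacade(connector, 3);
        System.out.println(facade.send("Hello Flume!ERROR10") + " " + delivered);
        facade.cleanUp();
    }
}
```

In a real client the connector would wrap `RpcClientFactory.getDefaultInstance(hostname, port)`. Retrying trades possible duplicates for fewer lost events: if a failure is reported after the agent has already accepted the event, the retry delivers it a second time.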

1.4 IDEA operations: LogFilterTopology.java

/**

* Licensed to the Apache Software Foundation (ASF) under one

* or more contributor license agreements. See the NOTICE file

* distributed with this work for additional information

* regarding copyright ownership. The ASF licenses this file

* to you under the Apache License, Version 2.0 (the

* "License"); you may not use this file except in compliance

* with the License. You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

import java.util.ArrayList;

import java.util.Arrays;

import java.util.List;

import java.util.Properties;

import backtype.storm.Config;

import backtype.storm.LocalCluster;

import backtype.storm.spout.SchemeAsMultiScheme;

import backtype.storm.topology.BasicOutputCollector;

import backtype.storm.topology.OutputFieldsDeclarer;

import backtype.storm.topology.TopologyBuilder;

import backtype.storm.topology.base.BaseBasicBolt;

import backtype.storm.tuple.Fields;

import backtype.storm.tuple.Tuple;

import backtype.storm.tuple.Values;

import storm.kafka.KafkaSpout;

import storm.kafka.SpoutConfig;

import storm.kafka.StringScheme;

import storm.kafka.ZkHosts;

import storm.kafka.bolt.KafkaBolt;

import storm.kafka.bolt.mapper.FieldNameBasedTupleToKafkaMapper;

import storm.kafka.bolt.selector.DefaultTopicSelector;

/**

* This topology reads log lines from the Kafka topic testflume, keeps the

* lines that contain ERROR, and writes them to the Kafka topic LogError.

*/

public class LogFilterTopology {

public static class FilterBolt extends BaseBasicBolt {

@Override

public void execute(Tuple tuple, BasicOutputCollector collector) {

String line = tuple.getString(0);

System.err.println("Accept: " + line);

// Keep only the lines that contain ERROR

if (line.contains("ERROR")) {

System.err.println("Filter: " + line);

collector.emit(new Values(line));

}

}

@Override

public void declareOutputFields(OutputFieldsDeclarer declarer) {

// Declare the field "message", which the downstream FieldNameBasedTupleToKafkaMapper reads

declarer.declare(new Fields("message"));

}

}

public static void main(String[] args) throws Exception {

TopologyBuilder builder = new TopologyBuilder();

// https://github.com/apache/storm/tree/master/external/storm-kafka

// Configure the Kafka spout: the topic to read from

String topic = "testflume";

ZkHosts zkHosts = new ZkHosts("192.168.74.10:2181,192.168.74.11:2181,192.168.74.12:2181");

// /MyKafka is the ZooKeeper root path for offsets, which record how far the queue has been consumed

SpoutConfig spoutConfig = new SpoutConfig(zkHosts, topic, "/MyKafka", "MyTrack");// "MyTrack" is the id, one per application

List<String> zkServers = new ArrayList<String>();

System.out.println(zkHosts.brokerZkStr);

for (String host : zkHosts.brokerZkStr.split(",")) {

zkServers.add(host.split(":")[0]);

}

spoutConfig.zkServers = zkServers;

spoutConfig.zkPort = 2181;

// Whether to consume from the beginning of the topic

spoutConfig.forceFromStart = true;

spoutConfig.socketTimeoutMs = 60 * 1000;

// StringScheme decodes the byte stream into a string in a given encoding

spoutConfig.scheme = new SchemeAsMultiScheme(new StringScheme());

KafkaSpout kafkaSpout = new KafkaSpout(spoutConfig);

// set kafka spout

builder.setSpout("kafka_spout", kafkaSpout, 3);

// set bolt

builder.setBolt("filter", new FilterBolt(), 8).shuffleGrouping("kafka_spout");

// Write the data out

// set kafka bolt

// withTopicSelector: use the default selector to set the output topic, LogError

// withTupleToKafkaMapper: maps a tuple to the Kafka key and message

KafkaBolt kafka_bolt = new KafkaBolt().withTopicSelector(new DefaultTopicSelector("LogError"))

.withTupleToKafkaMapper(new FieldNameBasedTupleToKafkaMapper());

builder.setBolt("kafka_bolt", kafka_bolt, 2).shuffleGrouping("filter");

Config conf = new Config();

// set producer properties.

Properties props = new Properties();

props.put("metadata.broker.list", "192.168.74.10:9092,192.168.74.11:9092,192.168.74.12:9092");

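/**

* Kafka producer ACK setting (request.required.acks):

* 0: the producer does not wait for the broker to acknowledge and sends the next message immediately.

* 1: the producer sends the next message once the leader has acknowledged receipt.

* -1: the producer sends the next message once the follower replicas have also acknowledged receipt.

*/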

props.put("request.required.acks", "1");

props.put("serializer.class", "kafka.serializer.StringEncoder");

conf.put("kafka.broker.properties", props);

conf.put(Config.STORM_ZOOKEEPER_SERVERS, Arrays.asList(new String[] { "192.168.74.10", "192.168.74.11", "192.168.74.12" }));

// Run in local mode

LocalCluster localCluster = new LocalCluster();

localCluster.submitTopology("mytopology", conf, builder.createTopology());

}

}

- Test (check the local console output and the Kafka consumer output)

- End-to-end test: run 1.3 and 1.4 together
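The filtering rule in `FilterBolt.execute` can be tried out without a Storm or Kafka cluster. The following is a minimal plain-Java sketch of that rule only: `LogFilterSketch` and `filterErrors` are hypothetical names, the input list stands in for messages read from testflume, and the returned list stands in for what would be written to LogError. The second and third sample lines are made up for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Plain-Java stand-in for FilterBolt.execute: no Storm classes are used.
class LogFilterSketch {

    // Returns the lines FilterBolt would emit, in the order they were given.
    static List<String> filterErrors(List<String> lines) {
        List<String> kept = new ArrayList<>();
        for (String line : lines) {
            if (line.contains("ERROR")) { // same case-sensitive test as the bolt
                kept.add(line);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        List<String> testflume = Arrays.asList(
                "Hello Flume!ERROR10",   // format sent by RpcClientDemo
                "Hello Flume!INFO11",    // made-up line without ERROR
                "hello flume!error12");  // made-up line, lower case only
        System.out.println(filterErrors(testflume)); // prints [Hello Flume!ERROR10]
    }
}
```

Because `contains` is case-sensitive, a lower-case "error" is dropped. Every event sent by RpcClientDemo contains ERROR, so in the end-to-end test all ten events should appear on both consumers. In the real topology the order across the parallel bolt tasks is not guaranteed, unlike in this single-threaded sketch.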

demo2 Dropped-call case study