Docker Networking: overlay

The benefit of using docker network is that containers on the same network can communicate with each other without having to expose ports.

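This article uses docker swarm to analyze overlay networking. Two VMware virtual machines simulate two nodes. Swarm usage itself is not the focus, and irrelevant information, such as unrelated interfaces or ports, has been trimmed from the output.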

- Use node1 as the master and run swarm init on node1 to initialize the swarm environment

# docker swarm init

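After docker swarm starts, two listening ports appear on the host: 2377 and 7946. Port 2377 is the cluster management port and 7946 is used for node discovery. A swarm overlay network uses three ports in total; because no overlay network has been created yet, port 4789 is not open at this point (note: 4789 is the UDP port number assigned by IANA for vxlan). For the official description, see "Use overlay networks" in the Docker documentation.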

# netstat -ntpl

tcp6 0 0 :::2377 :::* LISTEN 3333/dockerd-curren

tcp6 0 0 :::7946 :::* LISTEN 3333/dockerd-curren
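The port check above can be repeated at each later step with a small filter. This is only a sketch: `swarm_port_status` is a hypothetical helper name, and it assumes the input is listening-socket output in the `netstat -ntul` style, where the local address ends in `:<port>`.

```shell
# Hypothetical helper: read `netstat -ntul` style output on stdin and report
# whether each swarm-related port shows up in it.
swarm_port_status() {
    input=$(cat)
    for entry in "2377:cluster management" "7946:node discovery" "4789:vxlan data"; do
        port=${entry%%:*}
        role=${entry#*:}
        # Require whitespace after the port so that 2377 does not match 23770.
        if printf '%s\n' "$input" | grep -Eq ":${port}[[:space:]]"; then
            echo "$port open ($role)"
        else
            echo "$port closed ($role)"
        fi
    done
}
```

Running `netstat -ntul | swarm_port_status` right after `docker swarm init` should report 4789 as closed, and as open once a container is attached to an overlay network.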

- Have node2 join the swarm. Port 7946 is also opened on this node to communicate with the swarm manager.

docker swarm join --token SWMTKN-1-62iqlof4q9xj2vmlwlm2s03xfncq6v9urgysg96f4npe2qeuac-4iymsra0xv4f7ujtg1end3qva 192.168.80.130:2377

- On node1, list the nodes; both node1 and node2 are shown

# docker node ls

ID HOSTNAME STATUS AVAILABILITY MANAGER STATUS

50u2a7anjo59k5yw4p4namzv4 localhost.localdomain Ready Active

qetcamqa2xgk5rvpmhf03nxu1 * localhost.localdomain Ready Active Leader

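Listing the networks shows two new ones: docker_gwbridge and ingress. The former connects containers to the host through a bridge; the latter, by default, provides cross-host communication between containers through an overlay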

# docker network ls

NETWORK ID NAME DRIVER SCOPE

6405d33c608c docker_gwbridge bridge local

lgcns0epsksl ingress overlay swarm

- Create a user-defined overlay network on node1

docker network create -d overlay --attachable my-overlay

- On node1, create a container attached to my-overlay

# docker run -itd --network=my-overlay --name=CT1 centos /bin/sh

On node2, create a container attached to my-overlay

# docker run -itd --network=my-overlay --name=CT2 centos /bin/sh

Ping CT1's address from CT2; the ping succeeds

sh-4.2# ping 10.0.0.2

PING 10.0.0.2 (10.0.0.2) 56(84) bytes of data.

64 bytes from 10.0.0.2: icmp_seq=1 ttl=64 time=1.20 ms

64 bytes from 10.0.0.2: icmp_seq=2 ttl=64 time=0.908 ms

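Inspect the network configuration of container CT2 on node2. The overlay network interface is eth0, whose peer has interface index 26. The peer of eth1 has interface index 28, and that peer is attached to the docker_gwbridge bridge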

sh-4.2# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

25: eth0@if26: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP group default

link/ether 02:42:0a:00:00:03 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.0.0.3/24 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::42:aff:fe00:3/64 scope link

valid_lft forever preferred_lft forever

27: eth1@if28: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:12:00:03 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet 172.18.0.3/16 scope global eth1

valid_lft forever preferred_lft forever

inet6 fe80::42:acff:fe12:3/64 scope link

valid_lft forever preferred_lft forever

sh-4.2# ip route

default via 172.18.0.1 dev eth1

10.0.0.0/24 dev eth0 proto kernel scope link src 10.0.0.3

172.18.0.0/16 dev eth1 proto kernel scope link src 172.18.0.3
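The peer index read off by eye above (the N in `eth0@ifN`) can also be extracted mechanically. A minimal sketch, where `peer_ifindex` is a hypothetical helper name and the input is assumed to be `ip a` or `ip -o link` output on stdin:

```shell
# Hypothetical helper: print the peer interface index N from the
# "<index>: IFNAME@ifN:" line of `ip a` / `ip -o link` output on stdin.
peer_ifindex() {
    sed -n "s/^[0-9][0-9]*: $1@if\([0-9][0-9]*\):.*/\1/p"
}
```

For example, `docker exec CT2 ip -o link | peer_ifindex eth1` would be expected to print 28 for the container shown here, assuming the container image ships the `ip` command.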

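The host-side peer of CT2's eth1 is vethd4eadd9. Non-overlay packets are forwarded through this bridge; for the forwarding flow, see the companion article on Docker bridge networking ("docker网络之bridge")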

# ip link show master docker_gwbridge

23: veth5d40a11@if22: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker_gwbridge state UP mode DEFAULT group default

link/ether 6a:4a:2a:95:dc:73 brd ff:ff:ff:ff:ff:ff link-netnsid 1

28: vethd4eadd9@if27: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker_gwbridge state UP mode DEFAULT group default

link/ether d6:90:57:a6:44:e0 brd ff:ff:ff:ff:ff:ff link-netnsid 3
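Going the other way, from a peer index to the host-side interface name, is a lookup by the leading index column. A minimal sketch, with `ifname_by_index` as a hypothetical helper name, reading `ip link` output on stdin:

```shell
# Hypothetical helper: print the name of the interface whose index is $1,
# dropping any "@ifM" suffix. Reads `ip link` / `ip -o link` output on stdin.
ifname_by_index() {
    awk -v idx="$1" -F': ' '$1 == idx { sub(/@.*/, "", $2); print $2 }'
}
```

With the output above, `ip link show master docker_gwbridge | ifname_by_index 28` yields vethd4eadd9, the interface that pairs with CT2's eth1.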

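Overlay traffic leaves CT2 through eth0, so where is the peer of eth0? Because CT2 is attached to the network named my-overlay, look under /var/run/docker/netns for the namespace that belongs to this network (1-9gtpq8ds3g). There, eth0 pairs with veth2 of my-overlay, and veth2, together with vxlan1, is attached to the bridge br0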

# docker network ls

NETWORK ID NAME DRIVER SCOPE

2cd167c9fb17 bridge bridge local

d84c003d86f9 docker_gwbridge bridge local

e8476b504e33 host host local

lgcns0epsksl ingress overlay swarm

9gtpq8ds3gsu my-overlay overlay swarm

96a70c1a9516 none null local

# ll

total 0

-r--r--r--. 1 root root 0 Nov 25 08:05 1-9gtpq8ds3g

-r--r--r--. 1 root root 0 Nov 25 08:05 1-lgcns0epsk

-r--r--r--. 1 root root 0 Nov 25 08:05 dd845ad1c97a

-r--r--r--. 1 root root 0 Nov 25 08:05 ingress_sbox

# nsenter --net=1-9gtpq8ds3g ip a

2: br0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP group default

link/ether 8a:11:66:30:16:aa brd ff:ff:ff:ff:ff:ff

inet 10.0.0.1/24 scope global br0

valid_lft forever preferred_lft forever

inet6 fe80::6823:3dff:fe77:c01c/64 scope link

valid_lft forever preferred_lft forever

24: vxlan1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master br0 state UNKNOWN group default

link/ether 8a:11:66:30:16:aa brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::8811:66ff:fe30:16aa/64 scope link

valid_lft forever preferred_lft forever

26: veth2@if25: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master br0 state UP group default

link/ether fe:41:38:d6:00:c6 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet6 fe80::fc41:38ff:fed6:c6/64 scope link

valid_lft forever preferred_lft forever

# nsenter --net=1-9gtpq8ds3g ip link show master br0

24: vxlan1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master br0 state UNKNOWN mode DEFAULT group default

link/ether 8a:11:66:30:16:aa brd ff:ff:ff:ff:ff:ff link-netnsid 0

26: veth2@if25: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master br0 state UP mode DEFAULT group default

link/ether fe:41:38:d6:00:c6 brd ff:ff:ff:ff:ff:ff link-netnsid 1

# nsenter --net=1-9gtpq8ds3g ip route

10.0.0.0/24 dev br0 proto kernel scope link src 10.0.0.1
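In the listings above, each overlay namespace file is named "1-" followed by the first 10 characters of the network ID (9gtpq8ds3gsu gives 1-9gtpq8ds3g, lgcns0epsksl gives 1-lgcns0epsk). The sketch below encodes that observation; it is an assumption drawn from this output rather than a documented guarantee, and `overlay_netns_name` is a hypothetical helper name.

```shell
# Hypothetical helper: derive the namespace file name seen under
# /var/run/docker/netns from an overlay network ID.
# Assumption: "1-" + first 10 characters of the ID, as observed above.
overlay_netns_name() {
    printf '1-%s\n' "$(printf '%s' "$1" | cut -c1-10)"
}
```

A possible use is `nsenter --net=/var/run/docker/netns/$(overlay_netns_name 9gtpq8ds3gsu) ip -d link show`, which avoids having to change into the netns directory first.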

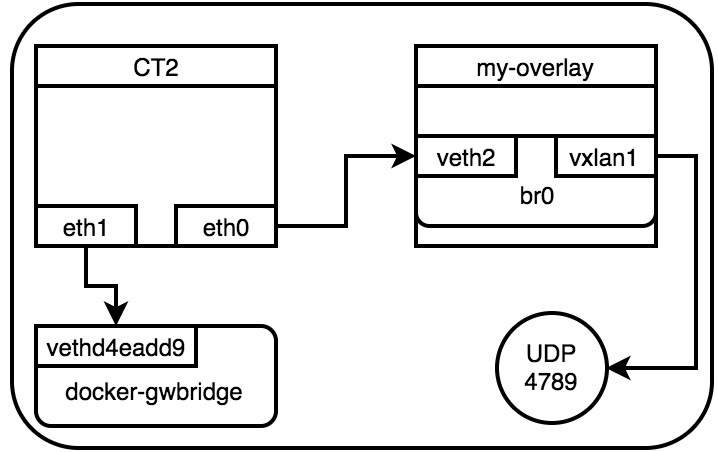

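The path of overlay packets on CT2 is therefore: every container is attached directly to the default docker_gwbridge in bridge mode, while overlay traffic is forwarded through br0 in the my-overlay namespace. The advantage is that when many containers run on one host, a single vxlan UDP port is enough, and all vxlan traffic is forwarded by br0.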

The vxlan interface on br0 (created by swarm) opens port 4789 on the host, and packets cross hosts through this port

# netstat -anup

Active Internet connections (servers and established)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

udp 0 0 0.0.0.0:4789 0.0.0.0:*

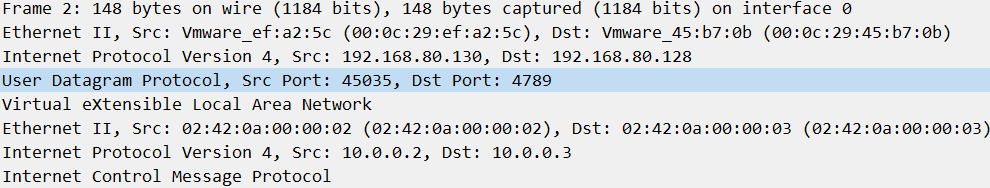

Ping CT1 from CT2 while running tcpdump on node2; the vxlan packets are visible

# tcpdump -i ens33 udp and port 4789

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on ens33, link-type EN10MB (Ethernet), capture size 262144 bytes

16:31:41.604930 IP localhost.localdomain.57170 > 192.168.80.130.4789: VXLAN, flags [I] (0x08), vni 4097

IP 10.0.0.3 > 10.0.0.2: ICMP echo request, id 138, seq 1, length 64

16:31:41.606434 IP 192.168.80.130.45035 > localhost.localdomain.4789: VXLAN, flags [I] (0x08), vni 4097

IP 10.0.0.2 > 10.0.0.3: ICMP echo reply, id 138, seq 1, length 64
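When several overlay networks share the same UDP port, the VNI is what tells their traffic apart in a capture. A minimal sketch that lists the distinct VNIs in tcpdump text output; `vxlan_vnis` is a hypothetical helper name and it assumes the "vni N" wording shown in the capture above:

```shell
# Hypothetical helper: list the distinct VNIs that appear in tcpdump text
# output read from stdin (lines containing ", vni <number>").
vxlan_vnis() {
    sed -n 's/.*, vni \([0-9][0-9]*\).*/\1/p' | sort -u
}
```

For instance, `tcpdump -l -n -i ens33 -c 20 udp and port 4789 | vxlan_vnis` prints the VNIs seen in the first 20 captured packets.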

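The ping packet from CT2 to CT1 is shown below. The outer header carries node2's address, and the UDP destination port delivers the packet to the vxlan port on node1 for processing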

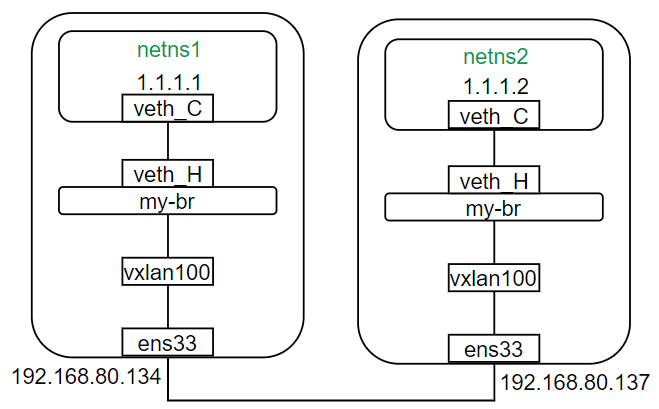
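This encapsulation also explains why eth0, br0 and vxlan1 show mtu 1450 while the host interfaces show 1500: the outer headers consume part of the underlay MTU. A worked sketch of the arithmetic, assuming an IPv4 underlay without IP options or VLAN tags (`vxlan_inner_mtu` is a hypothetical helper name):

```shell
# Hypothetical helper: compute the MTU left for the inner packet after
# vxlan encapsulation. Assumes an IPv4 underlay with no options or VLAN tag.
vxlan_inner_mtu() {
    underlay_mtu=$1
    outer_ip=20    # outer IPv4 header
    outer_udp=8    # outer UDP header
    vxlan_hdr=8    # vxlan header carrying the VNI
    inner_eth=14   # inner Ethernet header, carried as payload
    echo $((underlay_mtu - outer_ip - outer_udp - vxlan_hdr - inner_eth))
}
```

`vxlan_inner_mtu 1500` gives 1450, matching the interfaces in the overlay namespace.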

Building a custom overlay network

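The topology is as follows: each of the two nodes gets one bridge and one netns, and the peer node is specified in unicast mode (for vxlan multicast mode, see the article "linux 上实现 vxlan 网络").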

- node1 is configured as follows (prefer the IANA-assigned port 4789; on other ports Wireshark does not decode the traffic as vxlan by default):

ip netns add netns1

ip link add my-br type bridge

ip link add veth_C type veth peer name veth_H

ip link add vxlan100 type vxlan id 100 dstport 4789 remote 192.168.80.137 local 192.168.80.134 dev ens33

ip link set veth_C netns netns1

ip netns exec netns1 ip addr add 1.1.1.1/24 dev veth_C

ip netns exec netns1 ip link set dev veth_C up

ip link set dev my-br up

ip link set dev vxlan100 up

ip link set dev veth_H up

ip link set dev veth_H master my-br

ip link set dev vxlan100 master my-br

- node2 is configured as follows:

ip netns add netns2

ip link add my-br type bridge

ip link add veth_C type veth peer name veth_H

ip link add vxlan100 type vxlan id 100 dstport 4789 remote 192.168.80.134 local 192.168.80.137 dev ens33

ip link set veth_C netns netns2

ip netns exec netns2 ip addr add 1.1.1.2/24 dev veth_C

ip netns exec netns2 ip link set dev veth_C up

ip link set dev my-br up

ip link set dev vxlan100 up

ip link set dev veth_H up

ip link set dev veth_H master my-br

ip link set dev vxlan100 master my-br

With this in place, netns2 on node2 can ping netns1 on node1

# ip netns exec netns2 ping 1.1.1.1

PING 1.1.1.1 (1.1.1.1) 56(84) bytes of data.

64 bytes from 1.1.1.1: icmp_seq=1 ttl=64 time=0.342 ms

64 bytes from 1.1.1.1: icmp_seq=2 ttl=64 time=0.460 ms
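The two node configurations differ only in the namespace name, the inner address, and the swapped local/remote addresses, so they can be produced from one template. The sketch below only prints the commands and does not run them; `vxlan_node_cmds` and its parameter order are hypothetical, and the device defaults to ens33 as in the setup above.

```shell
# Hypothetical helper: print the per-node command sequence used above.
# Usage: vxlan_node_cmds NETNS INNER_CIDR LOCAL_IP REMOTE_IP [DEV]
vxlan_node_cmds() {
    ns=$1
    inner=$2
    local_ip=$3
    remote_ip=$4
    dev=${5:-ens33}
    cat <<EOF
ip netns add $ns
ip link add my-br type bridge
ip link add veth_C type veth peer name veth_H
ip link add vxlan100 type vxlan id 100 dstport 4789 remote $remote_ip local $local_ip dev $dev
ip link set veth_C netns $ns
ip netns exec $ns ip addr add $inner dev veth_C
ip netns exec $ns ip link set dev veth_C up
ip link set dev my-br up
ip link set dev vxlan100 up
ip link set dev veth_H up
ip link set dev veth_H master my-br
ip link set dev vxlan100 master my-br
EOF
}
```

On node1 this would be `vxlan_node_cmds netns1 1.1.1.1/24 192.168.80.134 192.168.80.137`, and on node2 `vxlan_node_cmds netns2 1.1.1.2/24 192.168.80.137 192.168.80.134`. Piping the output to `sh -x` as root applies it, after reviewing the printed commands.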

TIPS:

ip -d link show displays detailed information about an interface. For example, vxlan100 on node2 looks like this:

# ip -d link show vxlan100

9: vxlan100: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master my-br state UNKNOWN mode DEFAULT group default qlen 1000

link/ether a6:53:2a:b4:8f:d7 brd ff:ff:ff:ff:ff:ff promiscuity 1

vxlan id 100 remote 192.168.80.134 local 192.168.80.137 dev ens33 srcport 0 0 dstport 4789 ageing 300 noudpcsum noudp6zerocsumtx noudp6zerocsumrx

bridge_slave state forwarding priority 32 cost 100 hairpin off guard off root_block off fastleave off learning on flood on port_id 0x8002 port_no 0x2 designated_port 32770 designated_cost 0 designated_bridge 8000.a6:53:2a:b4:8f:d7 designated_root 8000.a6:53:2a:b4:8f:d7 hold_timer 0.00 message_age_timer 0.00 forward_delay_timer 0.00 topology_change_ack 0 config_pending 0 proxy_arp off proxy_arp_wifi off mcast_router 1 mcast_fast_leave off mcast_flood on addrgenmode eui64 numtxqueues 1 numrxqueues 1 gso_max_size 65536 gso_max_segs 65535

- bridge -s fdb show br displays a bridge's MAC forwarding table. The forwarding table of my-br on node2 is as follows:

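The first column is the destination MAC address; the all-zero MAC address is the default entry, similar to a default route. The third column is the outgoing interface toward that MAC. A trailing self means the entry belongs to the device itself, similar to a route to a loopback interface, and permanent means the entry never ages out. The last entry, the default one, shows that traffic to node1 (192.168.80.134) leaves through vxlan100 over the underlying device ens33 (this was set when the vxlan interface was created). used shows the time since the entry was last used/updated.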

# bridge -s fdb show br my-br

33:33:00:00:00:01 dev my-br self permanent

01:00:5e:00:00:01 dev my-br self permanent

33:33:ff:54:9c:74 dev my-br self permanent

e6:2f:83:c4:88:e0 dev veth_H used 7352/7352 master my-br permanent

e6:2f:83:c4:88:e0 dev veth_H vlan 1 used 7352/7352 master my-br permanent

33:33:00:00:00:01 dev veth_H self permanent

01:00:5e:00:00:01 dev veth_H self permanent

33:33:ff:c4:88:e0 dev veth_H self permanent

a6:53:2a:b4:8f:d7 dev vxlan100 used 6060/6060 master my-br permanent

a6:53:2a:b4:8f:d7 dev vxlan100 vlan 1 used 6060/6060 master my-br permanent

00:00:00:00:00:00 dev vxlan100 dst 192.168.80.134 via ens33 used 3156/6092 self permanent
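The default (all-zero MAC) entry is the one that decides where unknown and broadcast frames are tunnelled, so it is a useful quick check that the remote address was configured as intended. A minimal sketch that pulls the remote address out of fdb output; `vxlan_default_remotes` is a hypothetical helper name:

```shell
# Hypothetical helper: print the "dst" address of every all-zero MAC entry
# in `bridge fdb show` output read from stdin.
vxlan_default_remotes() {
    awk '$1 == "00:00:00:00:00:00" {
        for (i = 2; i < NF; i++) if ($i == "dst") print $(i + 1)
    }'
}
```

On node2, `bridge fdb show dev vxlan100 | vxlan_default_remotes` is expected to print 192.168.80.134, the address of node1.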

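- Important: when building your own overlay, it is best to turn off the firewall, otherwise packets may never reach the vxlan port. Alternatively, use firewall-cmd to open only the vxlan port. The following opens UDP port 4789 for vxlan: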

firewall-cmd --zone=public --add-port=4789/udp --permanent

firewall-cmd --reload

References:

https://docs.docker.com/v17.12/network/overlay/#create-an-overlay-network

https://www.securitynik.com/2016/12/docker-networking-internals-container.html

http://blog.nigelpoulton.com/demystifying-docker-overlay-networking/

https://github.com/docker/labs/blob/master/networking/concepts/06-overlay-networks.md

https://cumulusnetworks.com/blog/5-ways-design-container-network/

https://neuvector.com/network-security/docker-swarm-container-networking/

http://man7.org/linux/man-pages/man8/bridge.8.html

This article comes from cnblogs (博客园), author: charlieroro. When reposting, please credit the original link: https://www.cnblogs.com/charlieroro/p/9897975.html