Y4. Walkthrough of common.py


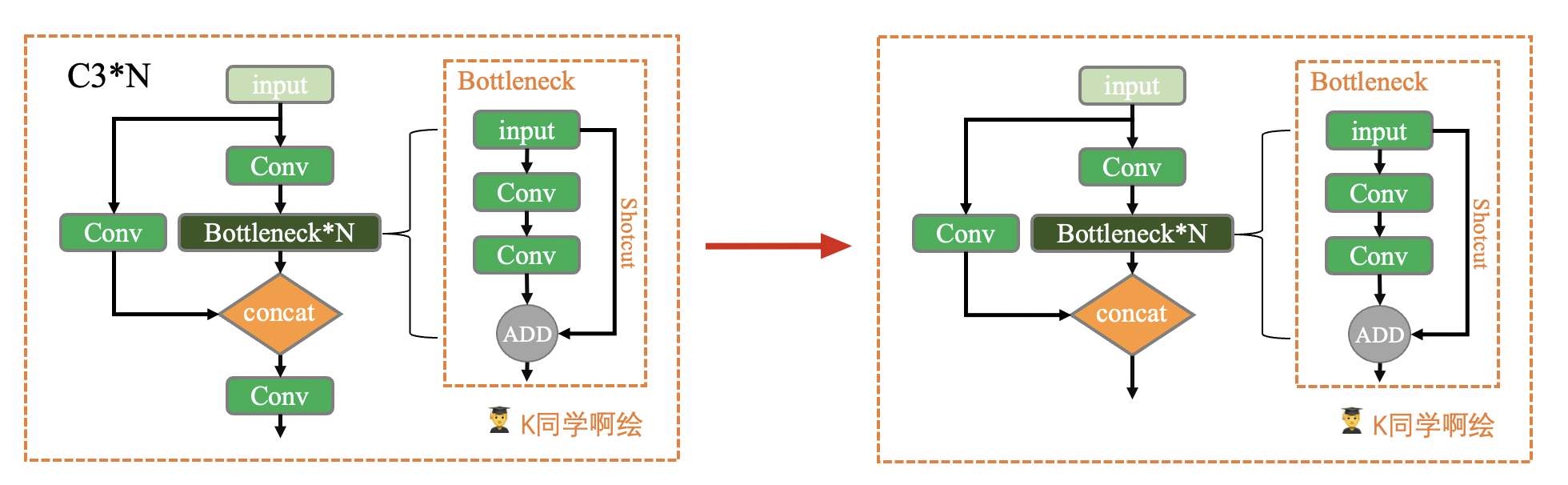

This week's task: modify the C3 module of the yolov5s network as shown in the figure, then get YOLOv5 to run end to end.


Hint: only ./models/common.py needs to be modified.

1. File information

File location: ./models/common.py

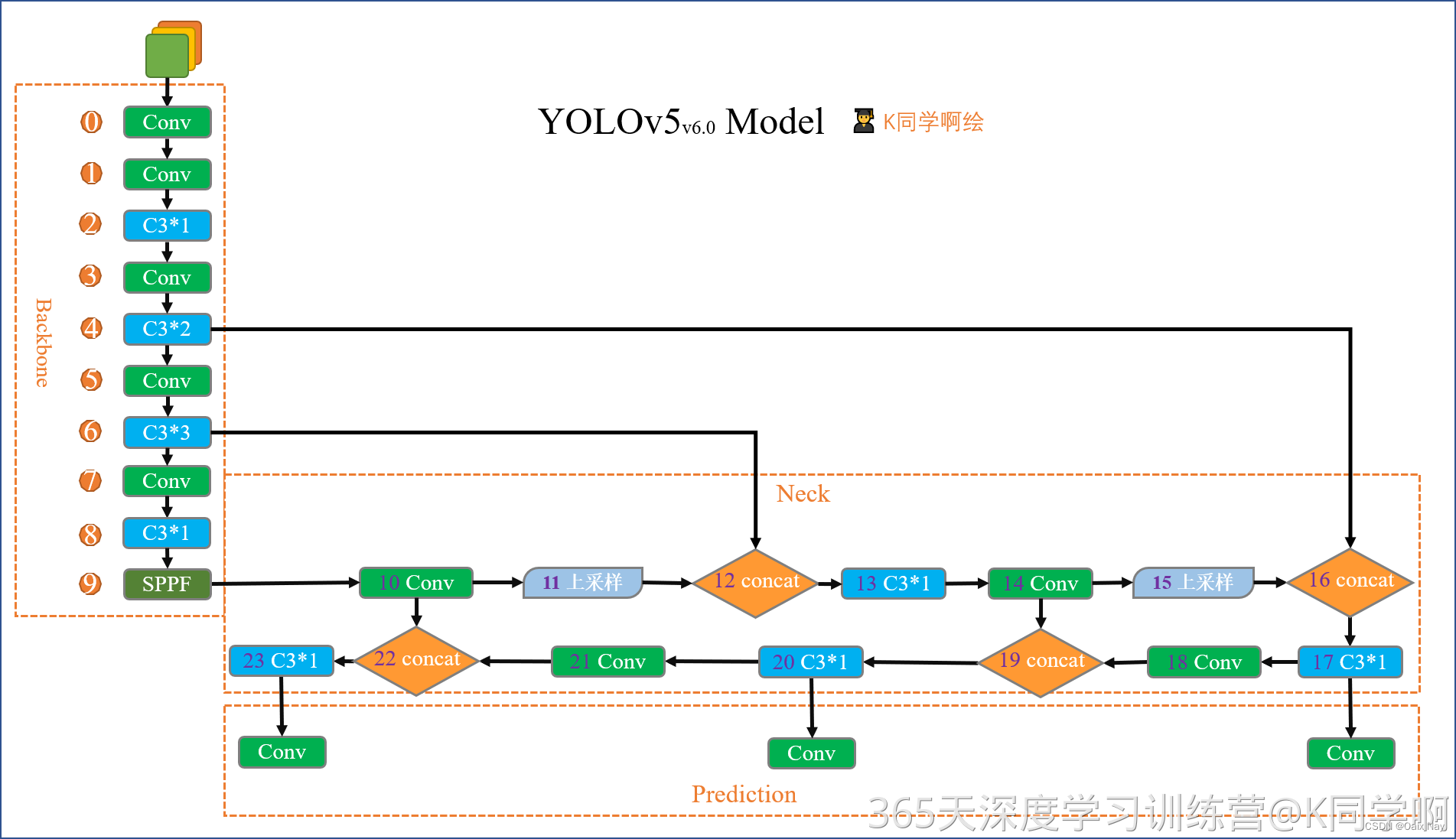

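This file implements the building blocks of the YOLO network. To change a module (for example C3), edit its definition in this file. Because YOLOv5 has gone through many releases, the same module may look different from one copy of the code to another. That is normal; treat the official repository as the reference.

2. Imports and basic configuration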

import ast

import contextlib

import json

import math # math functions

import platform

import warnings

import zipfile

from collections import OrderedDict, namedtuple

from copy import copy # object copying (copy is the shallow copy; the module also provides deepcopy)

from pathlib import Path # Path wraps a str path in an object that makes path handling easier

from urllib.parse import urlparse

import cv2

import numpy as np # NumPy array operations

import pandas as pd # pandas DataFrame operations

import requests # HTTP client library for Python

import torch # PyTorch deep learning framework

import torch.nn as nn # modular interface for building neural networks

from IPython.display import display

from PIL import Image # basic image operations

from torch.cuda import amp # mixed-precision training

from utils import TryExcept

from utils.dataloaders import exif_transpose, letterbox

from utils.general import (LOGGER, ROOT, Profile, check_requirements, check_suffix, check_version, colorstr,

increment_path, is_notebook, make_divisible, non_max_suppression, scale_boxes, xywh2xyxy,

xyxy2xywh, yaml_load)

from utils.plots import Annotator, colors, save_one_box

from utils.torch_utils import copy_attr, smart_inference_mode

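3. Basic components

3.1 autopad

This function computes the padding a convolution needs from its kernel size (and dilation), so that an odd-sized kernel at stride 1 leaves the spatial size unchanged ("same" padding). It is used by the Conv and Classify classes described below.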

def autopad(k, p=None, d=1): # kernel, padding, dilation

# Pad to 'same' shape outputs

if d > 1:

k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k] # actual kernel-size

if p is None:

p = k // 2 if isinstance(k, int) else [x // 2 for x in k] # auto-pad

return p

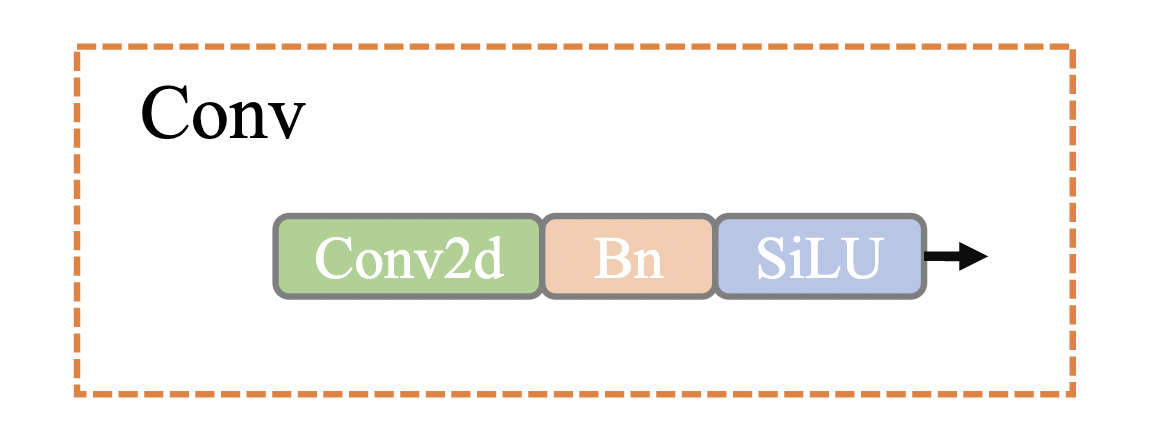
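To make the padding rule concrete, here is a small pure-Python sketch. `autopad` is copied from the listing above; `conv_out_size` is a helper introduced only for this illustration (it is not part of common.py) and applies the standard output-size formula for a convolution along one axis.

```python
def autopad(k, p=None, d=1):
    """Return 'same'-style padding for kernel size k and dilation d."""
    if d > 1:
        # effective kernel size once dilation is taken into account
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p


def conv_out_size(n, k, s=1, p=0, d=1):
    """Hypothetical helper: output length of a convolution along one axis."""
    return (n + 2 * p - d * (k - 1) - 1) // s + 1


print(autopad(3))                                # 1
print(autopad(5))                                # 2
print(autopad((3, 5)))                           # [1, 2]
print(autopad(3, d=2))                           # 2
print(conv_out_size(80, 3, s=1, p=autopad(3)))   # 80
print(conv_out_size(640, 6, s=2, p=2))           # 320
```

The last line uses the arguments of the first layer in the model summary printed in section 6 (`[3, 32, 6, 2, 2]`): a 6x6 kernel with stride 2 and padding 2 halves a 640-pixel side to 320.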

3.2 Conv

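This class is the most basic component of the whole network. It consists of a convolution layer, a BN layer, and an activation function, structured as follows: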

class Conv(nn.Module):

# Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)

default_act = nn.SiLU() # default activation

def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):

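''' Called by Focus, Bottleneck, BottleneckCSP, C3, SPP, DWConv, TransformerBlock and other modules

Standard convolution : conv + BN + act

:params c1: number of input channels

:params c2: number of output channels

:params k: convolution kernel_size

:params s: convolution stride

:params p: convolution padding; defaults to None, in which case autopad computes the required value

:params g: number of convolution groups; 1 is an ordinary convolution, >1 is a grouped convolution (depthwise when g equals the channel count)

:params d: convolution dilation

:params act: activation; True uses SiLU()/Swish, an nn.Module instance is used as given, anything else disables the activation (nn.Identity)

'''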

super().__init__()

self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)

self.bn = nn.BatchNorm2d(c2)

self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

def forward(self, x):

return self.act(self.bn(self.conv(x)))

def forward_fuse(self, x):

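''' Used by the fuse function of the Model class

Forward pass once Conv + BN have been fused, which speeds up inference; typically used for testing/validation

'''

return self.act(self.conv(x))

The class also has a special method, forward_fuse, which is a faster forward pass for inference. Once the BN layer has been folded into the convolution weights (Conv + BN fusion), the forward pass skips BN entirely, which speeds up inference. It is typically used during testing or validation.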

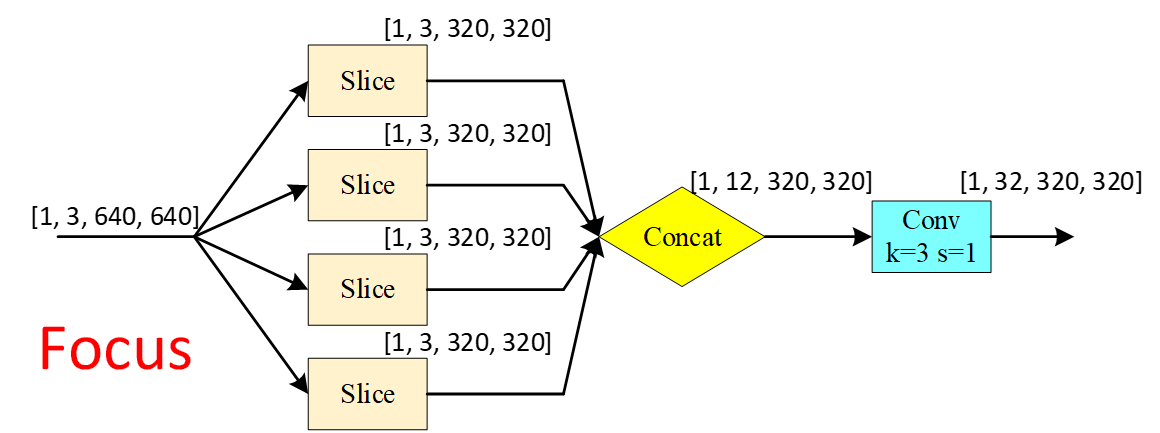
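Why BN can be skipped after fusion is easier to see with numbers. The following is a minimal pure-Python sketch of the standard Conv + BN folding arithmetic for a 1x1 convolution applied to a single pixel. It is not the YOLOv5 fuse code; every function name and value below is made up for the illustration.

```python
import math


def conv1x1(x, w, b=None):
    """1x1 convolution on one pixel: x has c_in values, w is a c_out x c_in matrix."""
    out = [sum(wi * xi for wi, xi in zip(row, x)) for row in w]
    if b is not None:
        out = [o + bi for o, bi in zip(out, b)]
    return out


def batchnorm_eval(y, gamma, beta, mean, var, eps=1e-5):
    """BatchNorm in eval mode, applied per output channel."""
    return [g * (v - m) / math.sqrt(s + eps) + bt
            for v, g, bt, m, s in zip(y, gamma, beta, mean, var)]


def fuse_conv_bn(w, gamma, beta, mean, var, eps=1e-5):
    """Fold the BN statistics into the convolution weight and a new bias."""
    scale = [g / math.sqrt(s + eps) for g, s in zip(gamma, var)]
    w_fused = [[wi * sc for wi in row] for row, sc in zip(w, scale)]
    b_fused = [bt - m * sc for bt, m, sc in zip(beta, mean, scale)]
    return w_fused, b_fused


x = [1.0, -2.0, 0.5]                      # one pixel with 3 input channels
w = [[0.2, -0.1, 0.4], [0.3, 0.8, -0.5]]  # 2 output channels, bias-free conv
gamma, beta = [1.5, 0.7], [0.1, -0.2]     # BN weight and bias
mean, var = [0.05, -0.3], [0.9, 1.2]      # BN running statistics

reference = batchnorm_eval(conv1x1(x, w), gamma, beta, mean, var)  # conv then BN
w_f, b_f = fuse_conv_bn(w, gamma, beta, mean, var)
fused = conv1x1(x, w_f, b_f)                                       # fused conv only

max_diff = max(abs(a - b) for a, b in zip(reference, fused))
print(max_diff < 1e-9)  # True
```

The two paths agree up to floating-point rounding, which is why a fused model can route through `self.act(self.conv(x))` alone.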

3.3 Focus

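The Focus module was designed by the YOLOv5 author to reduce FLOPs and increase speed, not to increase the number of feature maps. In essence it slices the image, much like strided downsampling, and moves the width and height information into the channel dimension. Its structure is as follows: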

class Focus(nn.Module):

# Focus wh information into c-space (fold the width and height information into the channel dimension)

def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True): # ch_in, ch_out, kernel, stride, padding, groups

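''' Called in the parse_model function of yolo.py

Theory: periodically sample pixels from the high-resolution image and rebuild them into lower-resolution images, i.e. stack the four positions of every 2x2 neighbourhood,

gathering the w/h information into the channel dimension. This enlarges the receptive field of each point and limits the loss of original information; the module is designed mainly to cut computation and increase speed.

First take 4 slices, then concat, then apply Conv

after slicing: (b,c1,w,h) -> 4 slices, each (b,c1,w/2,h/2)

after concat(dim=1): 4 slices of (b,c1,w/2,h/2) -> (b,4c1,w/2,h/2)

after conv: (b,4c1,w/2,h/2) -> (b,c2,w/2,h/2)

:params c1: number of input channels (each slice also has c1 channels)

:params c2: number of output channels of Focus

:params k: kernel size of the final convolution

:params s: stride of the final convolution

:params p: padding of the final convolution

:params g: groups of the final convolution; =1 ordinary convolution, >1 grouped convolution

:params act: activation; default True [SiLU()/Swish], False [no activation]

'''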

super().__init__()

self.conv = Conv(c1 * 4, c2, k, s, p, g, act=act)

# self.contract = Contract(gain=2)

def forward(self, x): # x(b,c,w,h) -> y(b,4c,w/2,h/2)

''' Behaves somewhat like downsampling '''

return self.conv(torch.cat((x[..., ::2, ::2], x[..., 1::2, ::2], x[..., ::2, 1::2], x[..., 1::2, 1::2]), 1))

# return self.conv(self.contract(x))

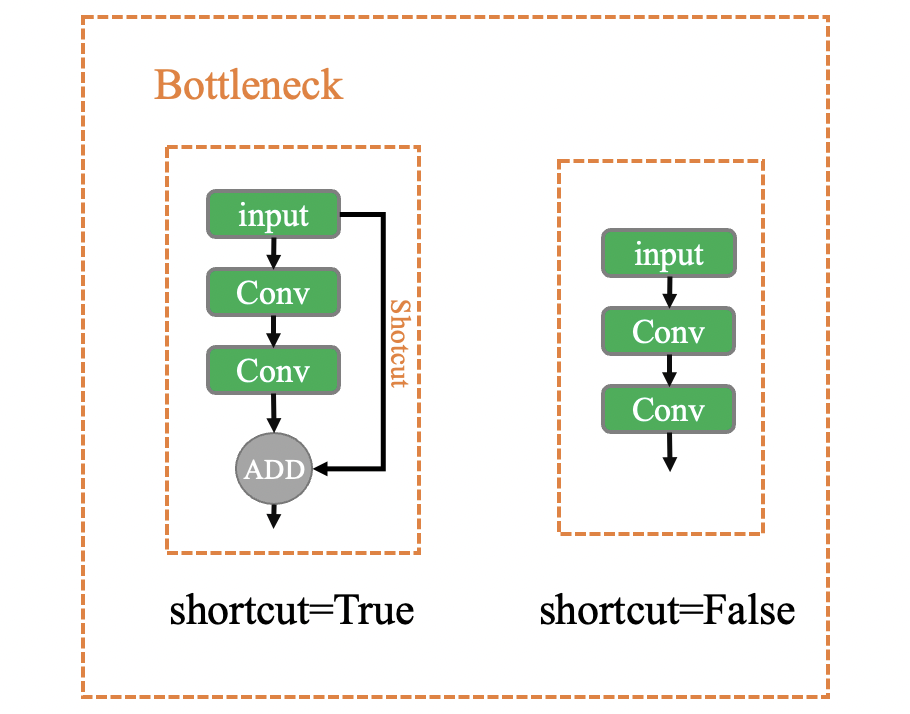
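The four strided slices in `forward` can be tried out on a tiny example. This sketch uses nested Python lists for a single 4x4 channel instead of a tensor; `focus_slices` is a name invented for the illustration, and each line is annotated with the tensor slice it mirrors.

```python
def focus_slices(img):
    """Split one H x W channel (a list of rows) into the four Focus slices."""
    return [
        [row[::2] for row in img[::2]],    # x[..., ::2, ::2]   even rows, even columns
        [row[::2] for row in img[1::2]],   # x[..., 1::2, ::2]  odd rows, even columns
        [row[1::2] for row in img[::2]],   # x[..., ::2, 1::2]  even rows, odd columns
        [row[1::2] for row in img[1::2]],  # x[..., 1::2, 1::2] odd rows, odd columns
    ]


# A 4x4 "image" whose pixel values are 0..15 in row-major order
img = [[r * 4 + c for c in range(4)] for r in range(4)]
slices = focus_slices(img)

for s in slices:
    print(s)
# [[0, 2], [8, 10]]
# [[4, 6], [12, 14]]
# [[1, 3], [9, 11]]
# [[5, 7], [13, 15]]
```

Every pixel of the original image lands in exactly one slice, so stacking the four 2x2 slices along the channel axis halves the resolution without discarding any value.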

3.4 Bottleneck

Model structure:

class Bottleneck(nn.Module):

# Standard bottleneck Conv + Conv + shortcut

def __init__(self, c1, c2, shortcut=True, g=1, e=0.5): # ch_in, ch_out, shortcut, groups, expansion

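''' Called by BottleneckCSP and C3, and in the parse_model function of yolo.py

:params c1: input channels of the first convolution

:params c2: output channels of the second convolution

:params shortcut: bool, whether to use a shortcut connection; default True

:params g: number of convolution groups; =1 ordinary convolution, >1 grouped convolution

:params e: expansion ratio; e*c2 is the output channels of the first convolution = input channels of the second convolution

'''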

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1) # 1x1

self.cv2 = Conv(c_, c2, 3, 1, g=g) # 3x3

self.add = shortcut and c1 == c2 # the shortcut is applied only when shortcut=True and c1 == c2

def forward(self, x):

return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

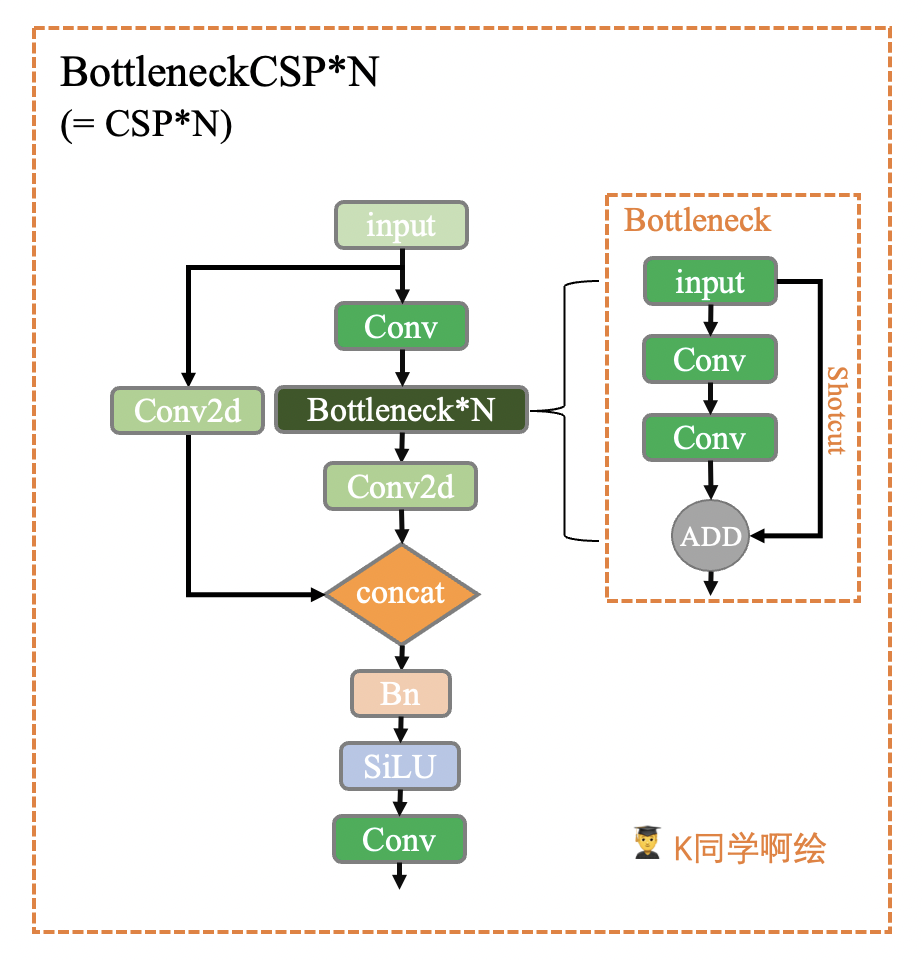

3.5 BottleneckCSP

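This module combines Bottleneck with the CSP structure. The CSP structure comes from a paper published in 2019: CSPNet: A New Backbone that can Enhance Learning Capability of CNN

This module plays the same role as the C3 module in yolov5s above. To use it, simply change C3 to BottleneckCSP in yolov5s.yaml, although there is usually no reason to, because C3 is the better choice.

The structure of the BottleneckCSP module is as follows: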

class BottleneckCSP(nn.Module):

# CSP Bottleneck https://github.com/WongKinYiu/CrossStagePartialNetworks

def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5): # ch_in, ch_out, number, shortcut, groups, expansion

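''' Called in the parse_model function of yolo.py

:params c1: input channels of the whole BottleneckCSP

:params c2: output channels of the whole BottleneckCSP

:params n: number of Bottleneck blocks

:params shortcut: bool, whether the Bottleneck blocks use a shortcut; default True

:params g: groups of the 3x3 convolution in Bottleneck; =1 ordinary convolution, >1 grouped convolution

:params e: expansion ratio; e*c2 = number of filters in the intermediate layers = input/output channels of the intermediate layers

'''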

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = nn.Conv2d(c1, c_, 1, 1, bias=False)

self.cv3 = nn.Conv2d(c_, c_, 1, 1, bias=False)

self.cv4 = Conv(2 * c_, c2, 1, 1)

self.bn = nn.BatchNorm2d(2 * c_) # applied to cat(cv2, cv3) 2*c_

self.act = nn.SiLU()

# stack n Bottleneck blocks

self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

def forward(self, x):

y1 = self.cv3(self.m(self.cv1(x)))

y2 = self.cv2(x)

return self.cv4(self.act(self.bn(torch.cat((y1, y2), 1))))

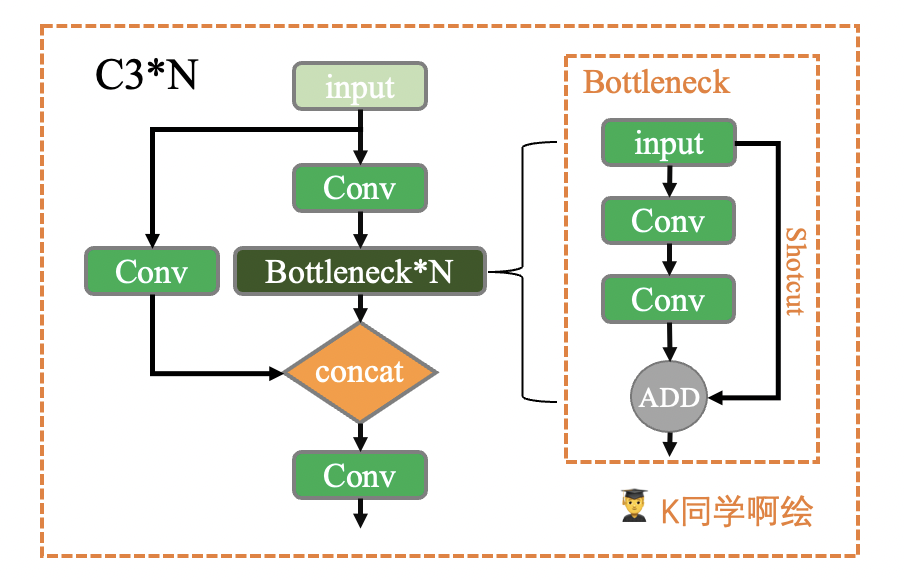

3.6 C3

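This module is a simplified BottleneckCSP. Apart from the Bottleneck part it has only 3 convolutions, which reduces the parameter count, hence the name C3. The original author also gave a reason for replacing BottleneckCSP with C3. In the author's words: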

C3() is an improved version of CSPBottleneck(). It is simpler, faster and lighter with similar performance and better fuse characteristics.

class C3(nn.Module):

# CSP Bottleneck with 3 convolutions

def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5): # ch_in, ch_out, number, shortcut, groups, expansion

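''' Used by the C3TR module and in the parse_model function of yolo.py

:params c1: input channels of the whole C3

:params c2: output channels of the whole C3

:params n: number of sub-modules [Bottleneck/CrossConv]

:params shortcut: bool, whether the sub-modules [Bottleneck/CrossConv] use a shortcut; default True

:params g: groups of the 3x3 convolution in the sub-modules [Bottleneck/CrossConv]; =1 ordinary convolution, >1 grouped convolution

:params e: expansion ratio; e*c2 = number of filters in the intermediate layers = input/output channels of the intermediate layers

'''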

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c1, c_, 1, 1)

self.cv3 = Conv(2 * c_, c2, 1) # optional act=FReLU(c2)

self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

# experimental CrossConv

#self.m = nn.Sequential(*[CrossConv(c_, c_, 3, 1, g, 1.0, shortcut) for _ in range(n)])

def forward(self, x):

return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))

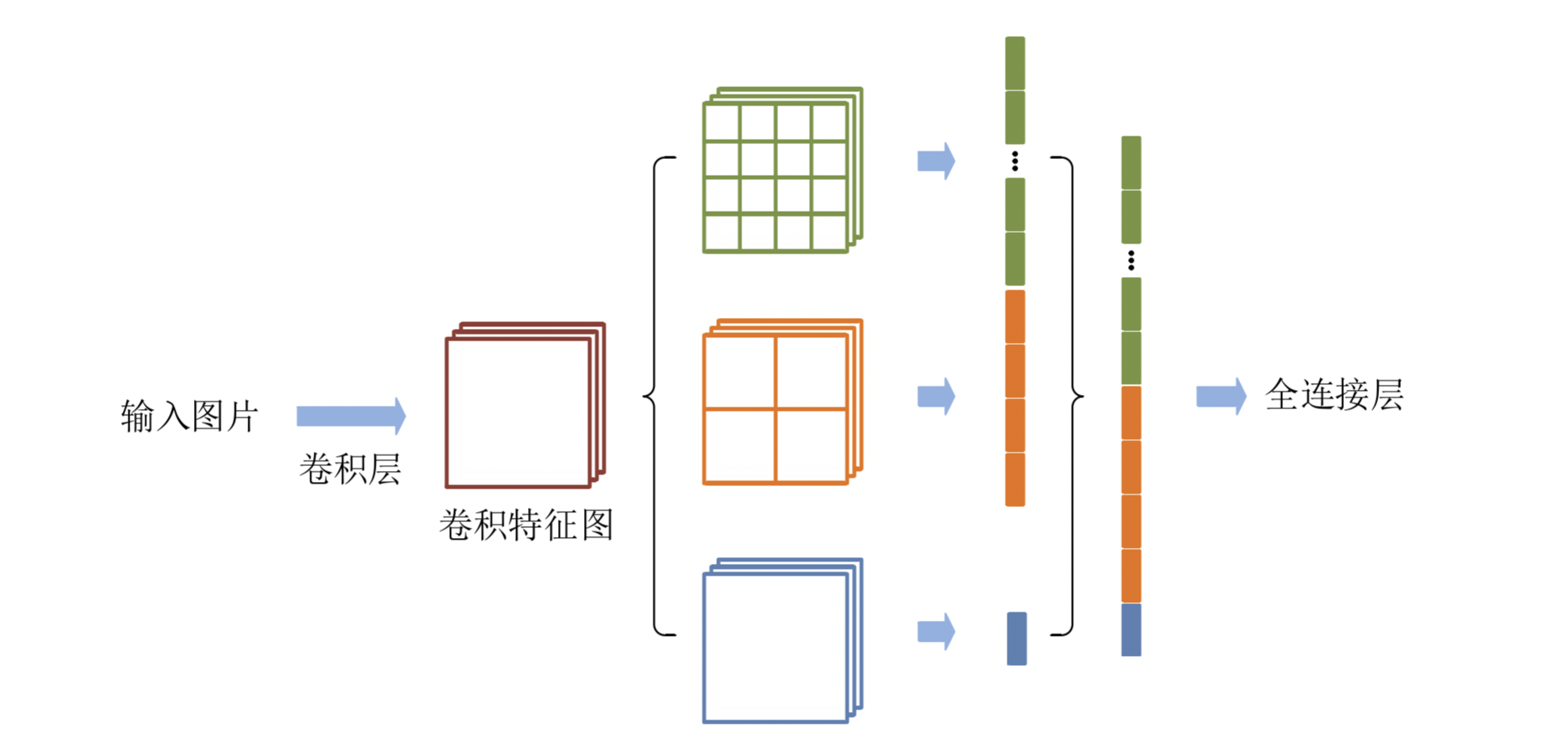

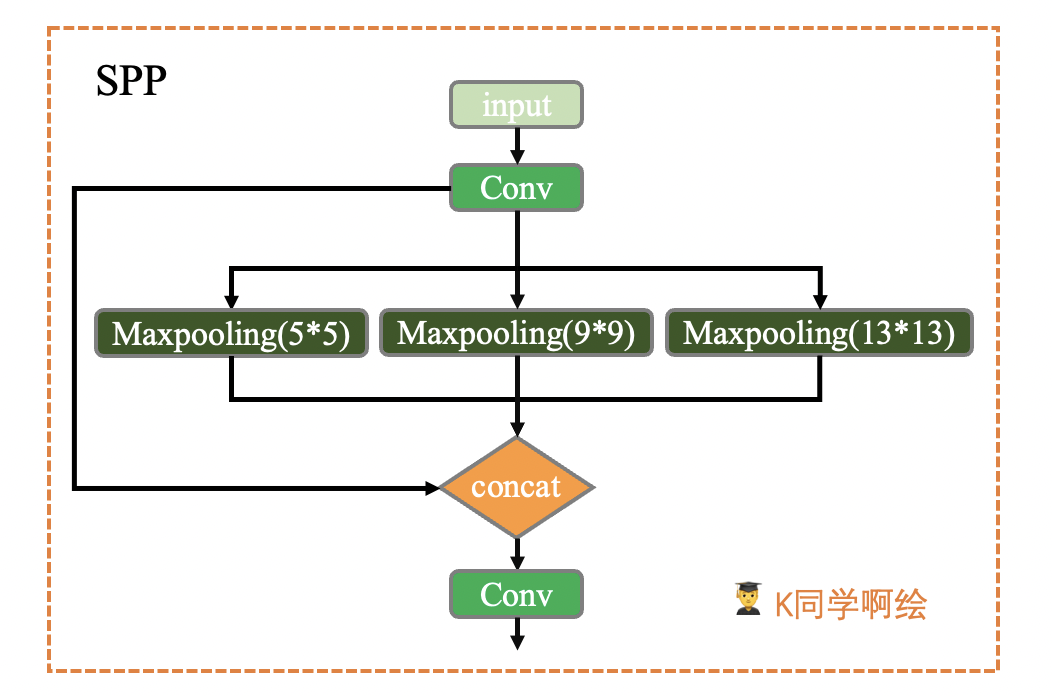
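The "fewer parameters" claim can be checked by counting weights by hand. The sketch below is a back-of-the-envelope calculation in pure Python, assuming each Conv contributes a bias-free convolution weight plus a BatchNorm weight and bias per output channel, as in the listings above. All function names are invented for this illustration.

```python
def conv_bn_params(c_in, c_out, k=1, g=1):
    """Learnable parameters of Conv: bias-free Conv2d weight + BatchNorm2d weight and bias."""
    return c_in * c_out * k * k // g + 2 * c_out


def bottleneck_params(c, g=1):
    """Bottleneck(c, c, e=1.0): a 1x1 Conv followed by a 3x3 Conv."""
    return conv_bn_params(c, c, 1) + conv_bn_params(c, c, 3, g)


def c3_params(c1, c2, n=1, g=1, e=0.5):
    c_ = int(c2 * e)
    return (2 * conv_bn_params(c1, c_)        # cv1 and cv2
            + conv_bn_params(2 * c_, c2)      # cv3
            + n * bottleneck_params(c_, g))   # m


def bottleneck_csp_params(c1, c2, n=1, g=1, e=0.5):
    c_ = int(c2 * e)
    return (conv_bn_params(c1, c_)            # cv1 (Conv)
            + c1 * c_                         # cv2 (plain nn.Conv2d, no bias)
            + c_ * c_                         # cv3 (plain nn.Conv2d, no bias)
            + conv_bn_params(2 * c_, c2)      # cv4 (Conv)
            + 2 * (2 * c_)                    # bn over the 2*c_ concatenated channels
            + n * bottleneck_params(c_, g))   # m


print(c3_params(64, 64, 1))              # 18816
print(c3_params(128, 128, 1))            # 74496
print(c3_params(256, 256, 2))            # 460800
print(bottleneck_csp_params(64, 64, 1))  # 19904
```

The three C3 values match the `params` column for layers 2, 4, and 6 in the model summary printed in section 6, and for the same arguments BottleneckCSP comes out slightly larger.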

3.7 SPP

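Higher network layers have receptive fields that capture semantic information well, while lower layers capture spatial detail well. Spatial Pyramid Pooling (SPP) is one of the key techniques in object detection for pooling high-level features at multiple scales to enlarge the receptive field. The classic SPP module first divides the input convolutional feature map into grids of different sizes, extracts a fixed-dimensional feature from each grid, and finally concatenates these features into a vector of fixed length, as shown in Figure 1. For an input feature map of size (w, h), the first pyramid level divides it with a 4x4 grid into 16 blocks of size (w/4, h/4); the second level uses a 2x2 grid, giving 4 blocks of size (w/2, h/2); the third level treats the whole feature map as a single block. After feature extraction, the final feature vector has 16+4+1=21 entries per channel.

The SPP module in YOLOv5 is structured as follows. As the code shows, it applies max pooling with several kernel sizes at stride 1 and concatenates the results along the channel dimension: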

class SPP(nn.Module):

# Spatial Pyramid Pooling (SPP) layer https://arxiv.org/abs/1406.4729

def __init__(self, c1, c2, k=(5, 9, 13)):

''' Called in the parse_model function of yolo.py

:params c1: input channels of the SPP module

:params c2: output channels of the SPP module

:params k: kernel sizes of the three maxpool layers; default (5, 9, 13)

'''

super().__init__()

c_ = c1 // 2 # hidden channels

self.cv1 = Conv(c1, c_, 1, 1) # first convolution

self.cv2 = Conv(c_ * (len(k) + 1), c2, 1, 1) # final convolution; the concat has len(k)+1 inputs of c_ channels each

self.m = nn.ModuleList([nn.MaxPool2d(kernel_size=x, stride=1, padding=x // 2) for x in k])

def forward(self, x):

x = self.cv1(x)

with warnings.catch_warnings():

warnings.simplefilter('ignore') # suppress torch 1.9.0 max_pool2d() warning

return self.cv2(torch.cat([x] + [m(x) for m in self.m], 1))
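Because every maxpool uses stride 1 and padding `x // 2`, all branches keep the same spatial size and can be concatenated along the channel axis. The sketch below checks this with plain arithmetic; `maxpool_out_size` and `spp_shapes` are helpers invented for the illustration, and the 512-channel 20x20 input is only an example.

```python
def maxpool_out_size(n, k, s=1, p=0):
    """Output length of a max pooling layer along one axis (dilation 1, floor mode)."""
    return (n + 2 * p - k) // s + 1


def spp_shapes(c1, h, w, k=(5, 9, 13)):
    """Return the (channels, h, w) of each SPP branch and the channel count after concat."""
    c_ = c1 // 2                       # hidden channels after cv1
    branches = [(c_, h, w)]            # the un-pooled output of cv1
    for x in k:
        branches.append((c_,
                         maxpool_out_size(h, x, s=1, p=x // 2),
                         maxpool_out_size(w, x, s=1, p=x // 2)))
    concat_channels = sum(b[0] for b in branches)
    return branches, concat_channels


branches, concat_channels = spp_shapes(512, 20, 20)
print(branches)         # [(256, 20, 20), (256, 20, 20), (256, 20, 20), (256, 20, 20)]
print(concat_channels)  # 1024
```

The concatenated channel count equals `c_ * (len(k) + 1)`, which is exactly the input width given to `cv2`.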

3.8 Concat

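This class concatenates its input (a list of tensors) along a given dimension. It is typically used to merge two feature maps from different stages, i.e. the Concat node in the yolov5s structure diagram above.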

class Concat(nn.Module):

# Concatenate a list of tensors along dimension

def __init__(self, dimension=1):

''' Called in the parse_model function of yolo.py

:params dimension: the dimension along which to concatenate

'''

super().__init__()

self.d = dimension

def forward(self, x):

# x: a list of tensors

return torch.cat(x, self.d)

3.9 Contract、Expand

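These two classes change the shape of a feature map.

- Contract changes the shape of the input by folding the w and h dimensions (which shrink) into the channel dimension (which grows). Example: x(1,64,80,80) to x(1,256,40,40).

- Expand also changes the shape of the input, but in the opposite direction: it spreads data from the channel dimension (which shrinks) over the W and H dimensions (which grow). Example: x(1,64,80,80) to x(1,16,160,160).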

class Contract(nn.Module):

# Contract width-height into channels, i.e. x(1,64,80,80) to x(1,256,40,40)

def __init__(self, gain=2):

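''' Called in the parse_model function of yolo.py; rarely used

Changes the shape of the input by folding the w and h dimensions (which shrink) into the channel dimension (which grows)

'''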

super().__init__()

self.gain = gain

def forward(self, x):

b, c, h, w = x.size() # assert (h / s == 0) and (W / s == 0), 'Indivisible gain'

s = self.gain # 2

x = x.view(b, c, h // s, s, w // s, s) # x(1,64,40,2,40,2)

# permute: reorder the tensor's dimensions

x = x.permute(0, 3, 5, 1, 2, 4).contiguous() # x(1,2,2,64,40,40)

# .view: reshape the tensor

return x.view(b, c * s * s, h // s, w // s) # x(1,256,40,40)

class Expand(nn.Module):

# Expand channels into width-height, i.e. x(1,64,80,80) to x(1,16,160,160)

def __init__(self, gain=2):

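''' Called in the parse_model function of yolo.py; rarely used

Changes the shape of the input by spreading data from the channel dimension (which shrinks) over the W and H dimensions (which grow)

'''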

super().__init__()

self.gain = gain

def forward(self, x):

b, c, h, w = x.size() # assert C / s ** 2 == 0, 'Indivisible gain'

s = self.gain # 2

x = x.view(b, s, s, c // s ** 2, h, w) # x(1,2,2,16,80,80)

x = x.permute(0, 3, 4, 1, 5, 2).contiguous() # x(1,16,80,2,80,2)

return x.view(b, c // s ** 2, h * s, w * s) # x(1,16,160,160)
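The shape bookkeeping of the two classes can be summarized in a few lines. This sketch tracks shapes only and does not move any data; `contract_shape` and `expand_shape` are names invented for the illustration.

```python
def contract_shape(shape, gain=2):
    """Shape after Contract: h and w shrink by gain, channels grow by gain**2."""
    b, c, h, w = shape
    if h % gain or w % gain:
        raise ValueError('h and w must be divisible by gain')
    return (b, c * gain ** 2, h // gain, w // gain)


def expand_shape(shape, gain=2):
    """Shape after Expand: channels shrink by gain**2, h and w grow by gain."""
    b, c, h, w = shape
    if c % gain ** 2:
        raise ValueError('c must be divisible by gain**2')
    return (b, c // gain ** 2, h * gain, w * gain)


x = (1, 64, 80, 80)
print(contract_shape(x))                # (1, 256, 40, 40)
print(expand_shape(x))                  # (1, 16, 160, 160)
print(expand_shape(contract_shape(x)))  # (1, 64, 80, 80)
```

In both directions the total number of elements stays the same; only its distribution between channels and spatial positions changes.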

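4. Important classes

These classes are all extension modules for the model. The YOLOv5 author covered a lot of ground here: besides the modules that build the network, the file provides extras such as attaching NMS to a model, wrapping a model into a single module that handles preprocessing, inference, and post-processing (preprocessing + inference + NMS), and second-stage classification.

4.1 Non-maximum suppression (NMS)

Put simply, non-maximum suppression (NMS) decides which boxes to keep when the model has detected many of them.

YOLOv5 uses NMS to remove redundant boxes produced at prediction time. The core idea is to find the prediction with the locally highest score and remove every box whose overlap (IoU) with it exceeds a threshold. Note that NMS runs separately for each object class, so boxes of different classes are not removed even when they overlap.

This module attaches NMS to a model by directly calling the non_max_suppression() function in ./utils/general.py.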

class NMS(nn.Module):

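''' Used by the NMS function of the Model class in yolo.py

Non-Maximum Suppression (NMS) module

Wraps NMS into the model as an extension. It is rarely used; normally non_max_suppression is called directly after the forward pass

'''

conf = 0.25 # confidence threshold

iou = 0.45 # IoU threshold

classes = None # optional: keep only the listed classes after NMS

max_det = 1000 # maximum number of detections per image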

def __init__(self):

super(NMS, self).__init__()

def forward(self, x):

'''

:params x[0]: [batch, num_anchors (across the 3 YOLO prediction layers), (x+y+w+h+1+num_classes)]

Calls non_max_suppression from general.py directly to give the Model NMS capability

'''

return non_max_suppression(x[0], self.conf, iou_thres=self.iou, classes=self.classes, max_det=self.max_det)
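The idea described above (keep the best-scoring box, drop same-class boxes that overlap it too much) can be sketched as a textbook greedy NMS in pure Python. This is not the non_max_suppression function from utils/general.py, which has more options and works on tensors; the function names and the sample detections are made up, and only the two threshold defaults are taken from the class above.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2, ...)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(dets, conf_thres=0.25, iou_thres=0.45):
    """dets: list of (x1, y1, x2, y2, conf, cls). Returns the kept detections, best first."""
    candidates = sorted((d for d in dets if d[4] > conf_thres),
                        key=lambda d: d[4], reverse=True)
    keep = []
    for d in candidates:
        # keep d unless a higher-scoring box of the same class overlaps it too much
        if all(d[5] != k[5] or box_iou(d, k) <= iou_thres for k in keep):
            keep.append(d)
    return keep


dets = [
    (10, 10, 110, 110, 0.90, 0),
    (12, 12, 112, 112, 0.80, 0),    # near duplicate of the first box, same class
    (12, 12, 112, 112, 0.70, 1),    # same location, different class
    (200, 200, 260, 260, 0.60, 0),  # far away from the others
    (300, 300, 320, 320, 0.10, 0),  # below the confidence threshold
]
kept = greedy_nms(dets)
print([d[4] for d in kept])  # [0.9, 0.7, 0.6]
```

The 0.80 box is suppressed by the 0.90 box of the same class, while the 0.70 box at almost the same location survives because it belongs to a different class.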

4.2 AutoShape

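This is a model extension module that wraps a model so that a single call handles preprocessing, inference, and post-processing (preprocessing + inference + NMS). It is not used often.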

class AutoShape(nn.Module):

# YOLOv5 input-robust model wrapper for passing cv2/np/PIL/torch inputs. Includes preprocessing, inference and NMS


conf = 0.25 # NMS confidence threshold

iou = 0.45 # NMS IoU threshold

agnostic = False # NMS class-agnostic

multi_label = False # NMS multiple labels per box

classes = None # (optional list) filter by class, i.e. = [0, 15, 16] for COCO persons, cats and dogs

max_det = 1000 # maximum number of detections per image

amp = False # Automatic Mixed Precision (AMP) inference

def __init__(self, model, verbose=True):

super().__init__()

if verbose:

LOGGER.info('Adding AutoShape... ')

copy_attr(self, model, include=('yaml', 'nc', 'hyp', 'names', 'stride', 'abc'), exclude=()) # copy attributes

self.dmb = isinstance(model, DetectMultiBackend) # DetectMultiBackend() instance

self.pt = not self.dmb or model.pt # PyTorch model

# switch the model to eval mode

self.model = model.eval()

if self.pt:

m = self.model.model.model[-1] if self.dmb else self.model.model[-1] # Detect()

m.inplace = False # Detect.inplace=False for safe multithread inference

m.export = True # do not output loss values

def _apply(self, fn):

# Apply to(), cpu(), cuda(), half() to model tensors that are not parameters or registered buffers

self = super()._apply(fn)

if self.pt:

m = self.model.model.model[-1] if self.dmb else self.model.model[-1] # Detect()

m.stride = fn(m.stride)

m.grid = list(map(fn, m.grid))

if isinstance(m.anchor_grid, list):

m.anchor_grid = list(map(fn, m.anchor_grid))

return self

@smart_inference_mode()

def forward(self, ims, size=640, augment=False, profile=False):

# Inference from various sources. For size(height=640, width=1280), RGB images example inputs are:

# file: ims = 'data/images/zidane.jpg' # str or PosixPath

# URI: = 'https://ultralytics.com/images/zidane.jpg'

# OpenCV: = cv2.imread('image.jpg')[:,:,::-1] # HWC BGR to RGB x(640,1280,3)

# PIL: = Image.open('image.jpg') or ImageGrab.grab() # HWC x(640,1280,3)

# numpy: = np.zeros((640,1280,3)) # HWC

# torch: = torch.zeros(16,3,320,640) # BCHW (scaled to size=640, 0-1 values)

# multiple: = [Image.open('image1.jpg'), Image.open('image2.jpg'), ...] # list of images

dt = (Profile(), Profile(), Profile())

with dt[0]:

if isinstance(size, int): # expand

size = (size, size)

p = next(self.model.parameters()) if self.pt else torch.empty(1, device=self.model.device) # param

autocast = self.amp and (p.device.type != 'cpu') # Automatic Mixed Precision (AMP) inference

# If the input is already a tensor it is assumed to be preprocessed, so run the forward pass directly; NMS is then applied by the caller after inference

if isinstance(ims, torch.Tensor): # torch

with amp.autocast(autocast):

return self.model(ims.to(p.device).type_as(p), augment=augment) # inference

# Pre-process

n, ims = (len(ims), list(ims)) if isinstance(ims, (list, tuple)) else (1, [ims]) # number, list of images

shape0, shape1, files = [], [], [] # image and inference shapes, filenames

for i, im in enumerate(ims):

f = f'image{i}' # filename

if isinstance(im, (str, Path)): # filename or uri

im, f = Image.open(requests.get(im, stream=True).raw if str(im).startswith('http') else im), im

im = np.asarray(exif_transpose(im))

elif isinstance(im, Image.Image): # PIL Image

im, f = np.asarray(exif_transpose(im)), getattr(im, 'filename', f) or f

files.append(Path(f).with_suffix('.jpg').name)

if im.shape[0] < 5: # image in CHW

im = im.transpose((1, 2, 0)) # reverse dataloader .transpose(2, 0, 1)

im = im[..., :3] if im.ndim == 3 else cv2.cvtColor(im, cv2.COLOR_GRAY2BGR) # enforce 3ch input

s = im.shape[:2] # HWC

shape0.append(s) # image shape

g = max(size) / max(s) # gain

shape1.append([int(y * g) for y in s])

ims[i] = im if im.data.contiguous else np.ascontiguousarray(im) # update

shape1 = [make_divisible(x, self.stride) for x in np.array(shape1).max(0)] # inf shape

x = [letterbox(im, shape1, auto=False)[0] for im in ims] # pad

x = np.ascontiguousarray(np.array(x).transpose((0, 3, 1, 2))) # stack and BHWC to BCHW

x = torch.from_numpy(x).to(p.device).type_as(p) / 255 # uint8 to fp16/32

with amp.autocast(autocast):

# Inference

with dt[1]:

y = self.model(x, augment=augment) # forward

# Post-process

with dt[2]:

y = non_max_suppression(y if self.dmb else y[0],

self.conf,

self.iou,

self.classes,

self.agnostic,

self.multi_label,

max_det=self.max_det) # NMS

for i in range(n):

scale_boxes(shape1, y[i][:, :4], shape0[i])

return Detections(ims, y, files, dt, self.names, x.shape)
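The pre-processing loop above picks one common inference shape for the whole batch: each image is scaled so its longer side matches `max(size)`, and the largest resulting height and width are rounded up to a multiple of the model stride. The sketch below reproduces only that arithmetic. The `make_divisible` here is a stand-in that rounds up to a multiple of the divisor, `inference_shape` is a name invented for the illustration, and `stride=32` is an assumed value rather than something read from a model.

```python
import math


def make_divisible(x, divisor):
    """Stand-in helper: round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def inference_shape(image_shapes, size=640, stride=32):
    """image_shapes: list of (h, w). Returns the shared [h, w] all images are letterboxed to."""
    if isinstance(size, int):
        size = (size, size)
    scaled = []
    for h, w in image_shapes:
        g = max(size) / max(h, w)                # gain: the longer side becomes max(size)
        scaled.append((int(h * g), int(w * g)))
    max_h = max(s[0] for s in scaled)
    max_w = max(s[1] for s in scaled)
    return [make_divisible(max_h, stride), make_divisible(max_w, stride)]


print(inference_shape([(720, 1280)]))               # [384, 640]
print(inference_shape([(720, 1280), (1080, 810)]))  # [640, 640]
```

A single 720x1280 image is therefore padded to 384x640 rather than to a full 640x640 square, which saves computation; mixing in a portrait image forces the batch up to 640x640.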

4.3 Detections

This class wraps the inference results of object detection.

class Detections:

# YOLOv5 detections class for inference results


def __init__(self, ims, pred, files, times=(0, 0, 0), names=None, shape=None):

super().__init__()

d = pred[0].device # device

gn = [torch.tensor([*(im.shape[i] for i in [1, 0, 1, 0]), 1, 1], device=d) for im in ims] # normalizations

self.ims = ims # list of images as numpy arrays

self.pred = pred # list of tensors pred[0] = (xyxy, conf, cls)

self.names = names # class names

self.files = files # image filenames

self.times = times # profiling times

self.xyxy = pred # xyxy pixels

self.xywh = [xyxy2xywh(x) for x in pred] # xywh pixels

self.xyxyn = [x / g for x, g in zip(self.xyxy, gn)] # xyxy normalized

self.xywhn = [x / g for x, g in zip(self.xywh, gn)] # xywh normalized

self.n = len(self.pred) # number of images (batch size)

self.t = tuple(x.t / self.n * 1E3 for x in times) # timestamps (ms)

self.s = tuple(shape) # inference BCHW shape

def _run(self, pprint=False, show=False, save=False, crop=False, render=False, labels=True, save_dir=Path('')):

s, crops = '', []

for i, (im, pred) in enumerate(zip(self.ims, self.pred)):

s += f'\nimage {i + 1}/{len(self.pred)}: {im.shape[0]}x{im.shape[1]} ' # string

if pred.shape[0]:

for c in pred[:, -1].unique():

n = (pred[:, -1] == c).sum() # detections per class

s += f"{n} {self.names[int(c)]}{'s' * (n > 1)}, " # add to string

s = s.rstrip(', ')

if show or save or render or crop:

annotator = Annotator(im, example=str(self.names))

for *box, conf, cls in reversed(pred): # xyxy, confidence, class

label = f'{self.names[int(cls)]} {conf:.2f}'

if crop:

file = save_dir / 'crops' / self.names[int(cls)] / self.files[i] if save else None

crops.append({

'box': box,

'conf': conf,

'cls': cls,

'label': label,

'im': save_one_box(box, im, file=file, save=save)})

else: # all others

annotator.box_label(box, label if labels else '', color=colors(cls))

im = annotator.im

else:

s += '(no detections)'

im = Image.fromarray(im.astype(np.uint8)) if isinstance(im, np.ndarray) else im # from np

if show:

display(im) if is_notebook() else im.show(self.files[i])

if save:

f = self.files[i]

im.save(save_dir / f) # save

if i == self.n - 1:

LOGGER.info(f"Saved {self.n} image{'s' * (self.n > 1)} to {colorstr('bold', save_dir)}")

if render:

self.ims[i] = np.asarray(im)

if pprint:

s = s.lstrip('\n')

return f'{s}\nSpeed: %.1fms pre-process, %.1fms inference, %.1fms NMS per image at shape {self.s}' % self.t

if crop:

if save:

LOGGER.info(f'Saved results to {save_dir}\n')

return crops

@TryExcept('Showing images is not supported in this environment')

def show(self, labels=True):

self._run(show=True, labels=labels) # show results

def save(self, labels=True, save_dir='runs/detect/exp', exist_ok=False):

save_dir = increment_path(save_dir, exist_ok, mkdir=True) # increment save_dir

self._run(save=True, labels=labels, save_dir=save_dir) # save results

def crop(self, save=True, save_dir='runs/detect/exp', exist_ok=False):

save_dir = increment_path(save_dir, exist_ok, mkdir=True) if save else None

return self._run(crop=True, save=save, save_dir=save_dir) # crop results

def render(self, labels=True):

self._run(render=True, labels=labels) # render results

return self.ims

def pandas(self):

# return detections as pandas DataFrames, i.e. print(results.pandas().xyxy[0])

new = copy(self) # return copy

ca = 'xmin', 'ymin', 'xmax', 'ymax', 'confidence', 'class', 'name' # xyxy columns

cb = 'xcenter', 'ycenter', 'width', 'height', 'confidence', 'class', 'name' # xywh columns

for k, c in zip(['xyxy', 'xyxyn', 'xywh', 'xywhn'], [ca, ca, cb, cb]):

a = [[x[:5] + [int(x[5]), self.names[int(x[5])]] for x in x.tolist()] for x in getattr(self, k)] # update

setattr(new, k, [pd.DataFrame(x, columns=c) for x in a])

return new

def tolist(self):

# return a list of Detections objects, i.e. 'for result in results.tolist():'

r = range(self.n) # iterable

x = [Detections([self.ims[i]], [self.pred[i]], [self.files[i]], self.times, self.names, self.s) for i in r]

# for d in x:

# for k in ['ims', 'pred', 'xyxy', 'xyxyn', 'xywh', 'xywhn']:

# setattr(d, k, getattr(d, k)[0]) # pop out of list

return x

def print(self):

LOGGER.info(self.__str__())

def __len__(self): # override len(results)

return self.n

def __str__(self): # override print(results)

return self._run(pprint=True) # print results

def __repr__(self):

return f'YOLOv5 {self.__class__} instance\n' + self.__str__()
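Detections exposes the same boxes in four formats: xyxy, xywh, and their normalized versions. The conversions are simple enough to show on one box in pure Python. The functions below operate on plain tuples and only mirror the idea; they are not the tensor-based helpers imported from utils.general, and the box and image size are made up.

```python
def xyxy2xywh(box):
    """(x1, y1, x2, y2) -> (x_center, y_center, width, height)."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2, x2 - x1, y2 - y1)


def xywh2xyxy(box):
    """(x_center, y_center, width, height) -> (x1, y1, x2, y2)."""
    xc, yc, w, h = box
    return (xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)


def normalize(box, img_w, img_h):
    """Divide x-like entries by the image width and y-like entries by the height (the role of gn)."""
    return (box[0] / img_w, box[1] / img_h, box[2] / img_w, box[3] / img_h)


box = (100, 50, 300, 250)          # xyxy in pixels
print(xyxy2xywh(box))              # (200.0, 150.0, 200, 200)
print(xywh2xyxy(xyxy2xywh(box)))   # (100.0, 50.0, 300.0, 250.0)
print(normalize(box, 640, 480))
```

The normalization divisor follows the `[1, 0, 1, 0]` index pattern in `gn`: width, height, width, height, so both box formats are divided consistently.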

4.4 Classify

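This is a second-stage classification module. What does that mean? Take licence plate recognition: the detector first finds the plate, and if you then want to recognize the characters on it, a second-stage classifier is needed for that further step.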

class Classify(nn.Module):

# YOLOv5 classification head, i.e. x(b,c1,20,20) to x(b,c2)

def __init__(self, c1, c2, k=1, s=1, p=None, g=1): # ch_in, ch_out, kernel, stride, padding, groups

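''' This is a second-stage classification module

Use it when the classes output by the model need to be classified again.

The class here is fairly simple; for more complex second-stage classification, adapt it to your own task. This code is only one possible implementation.

'''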

super().__init__()

c_ = 1280 # efficientnet_b0 size

self.conv = Conv(c1, c_, k, s, autopad(k, p), g)

self.pool = nn.AdaptiveAvgPool2d(1) # to x(b,c_,1,1)

self.drop = nn.Dropout(p=0.0, inplace=True)

self.linear = nn.Linear(c_, c2) # to x(b,c2)

def forward(self, x):

if isinstance(x, list):

x = torch.cat(x, 1)

return self.linear(self.drop(self.pool(self.conv(x)).flatten(1)))

5. Adjusting the model

The C3 module is modified as follows (the convolution layer after the concat is removed):

class C3(nn.Module):

# CSP Bottleneck with 3 convolutions

def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5): # ch_in, ch_out, number, shortcut, groups, expansion

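''' Used by the C3TR module and in the parse_model function of yolo.py

:params c1: input channels of the whole C3

:params c2: output channels of the whole C3

:params n: number of sub-modules [Bottleneck/CrossConv]

:params shortcut: bool, whether the sub-modules [Bottleneck/CrossConv] use a shortcut; default True

:params g: groups of the 3x3 convolution in the sub-modules [Bottleneck/CrossConv]; =1 ordinary convolution, >1 grouped convolution

:params e: expansion ratio; e*c2 = number of filters in the intermediate layers = input/output channels of the intermediate layers

'''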

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c1, c_, 1, 1)

self.cv3 = Conv(2 * c_, c2, 1) # optional act=FReLU(c2)

self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

# experimental CrossConv

#self.m = nn.Sequential(*[CrossConv(c_, c_, 3, 1, g, 1.0, shortcut) for _ in range(n)])

def forward(self, x):

#return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))

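# With cv3 removed from the forward pass, the output has 2*c_ channels; for it to remain c2, the hidden channel count must be c2/2

# That corresponds to e=0.5, which is the default, so e is left unchanged

# Note: cv3 is still created in __init__, so its weights stay in the model but are no longer used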

return torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1)
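Why e must stay at 0.5 follows from the channel arithmetic: without cv3, the output is just the concatenation of two c_-channel branches. A quick pure-Python check (the function name is invented for this illustration):

```python
def modified_c3_out_channels(c2, e=0.5):
    """Channels produced by cat(m(cv1(x)), cv2(x)) when cv3 is skipped."""
    c_ = int(c2 * e)   # hidden channels, as in __init__
    return 2 * c_


# With the default e=0.5, the output width equals c2 for the widths used in yolov5s
for c2 in (64, 128, 256, 512):
    print(c2, modified_c3_out_channels(c2))

print(modified_c3_out_channels(64, e=0.25))  # 32
print(modified_c3_out_channels(65))          # 64
```

With any other expansion ratio, or with an odd c2, the module would emit a channel count different from the c2 that the following layers were built to expect.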

6. Run and print the model

(Pytorch) E:\WorkSpace_GuanXiang\0.学习资料\365天深度学习训练营\2.YOLOv5白皮书\yolov5-master>python train.py --img 640 --batch 8 --epoch 1 --data data/fruits.yaml --cfg models/yolov5s.yaml --weights weights/yolov5s.pt --device 0

train: weights=weights/yolov5s.pt, cfg=models/yolov5s.yaml, data=data/fruits.yaml, hyp=data\hyps\hyp.scratch-low.yaml, epochs=1, batch_size=8, imgsz=640, rect=False, resume=False, nosave=False, noval=False, noautoanchor=False, noplots=False, evolve=None, bucket=, cache=None, image_weights=False, device=0, multi_scale=False, single_cls=False, optimizer=SGD, sync_bn=False, workers=8, project=runs\train, name=exp, exist_ok=False, quad=False, cos_lr=False, label_smoothing=0.0, patience=100, freeze=[0], save_period=-1, seed=0, local_rank=-1, entity=None, upload_dataset=False, bbox_interval=-1, artifact_alias=latest

github: skipping check (not a git repository), for updates see https://github.com/ultralytics/yolov5

YOLOv5 2022-12-8 Python-3.8.12 torch-1.8.1+cu111 CUDA:0 (NVIDIA GeForce GTX 1660 Ti, 6144MiB)

hyperparameters: lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.5, cls_pw=1.0, obj=1.0, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.1, scale=0.5, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.0, copy_paste=0.0

ClearML: run 'pip install clearml' to automatically track, visualize and remotely train YOLOv5 in ClearML

Comet: run 'pip install comet_ml' to automatically track and visualize YOLOv5 runs in Comet

TensorBoard: Start with 'tensorboard --logdir runs\train', view at http://localhost:6006/

Overriding model.yaml nc=80 with nc=4

from n params module arguments

0 -1 1 3520 models.common.Conv [3, 32, 6, 2, 2]

1 -1 1 18560 models.common.Conv [32, 64, 3, 2]

2 -1 1 18816 models.common.C3 [64, 64, 1]

3 -1 1 73984 models.common.Conv [64, 128, 3, 2]

4 -1 1 74496 models.common.C3 [128, 128, 1]

5 -1 1 295424 models.common.Conv [128, 256, 3, 2]

6 -1 2 460800 models.common.C3 [256, 256, 2]

7 -1 1 1180672 models.common.Conv [256, 512, 3, 2]

8 -1 1 1182720 models.common.C3 [512, 512, 1]

9 -1 1 656896 models.common.SPPF [512, 512, 5]

10 -1 1 131584 models.common.Conv [512, 256, 1, 1]

11 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

12 [-1, 6] 1 0 models.common.Concat [1]

13 -1 1 361984 models.common.C3 [512, 256, 1, False]

14 -1 1 33024 models.common.Conv [256, 128, 1, 1]

15 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

16 [-1, 4] 1 0 models.common.Concat [1]

17 -1 1 90880 models.common.C3 [256, 128, 1, False]

18 -1 1 147712 models.common.Conv [128, 128, 3, 2]

19 [-1, 14] 1 0 models.common.Concat [1]

20 -1 1 296448 models.common.C3 [256, 256, 1, False]

21 -1 1 590336 models.common.Conv [256, 256, 3, 2]

22 [-1, 10] 1 0 models.common.Concat [1]

23 -1 1 1182720 models.common.C3 [512, 512, 1, False]

24 [17, 20, 23] 1 24273 models.yolo.Detect [4, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [128, 256, 512]]

YOLOv5s summary: 200 layers, 6824849 parameters, 6824849 gradients, 13.2 GFLOPs

Transferred 318/325 items from weights\yolov5s.pt

AMP: checks passed

optimizer: SGD(lr=0.01) with parameter groups 53 weight(decay=0.0), 56 weight(decay=0.0005), 56 bias

train: Scanning E:\WorkSpace_GuanXiang\0.学习资料\365天深度学习训练营\2.YOLOv5白皮书\yolov5-master\paper_data\train...

train: WARNING Cache directory E:\WorkSpace_GuanXiang\0.\365\2.YOLOv5\yolov5-master\paper_data is not writeable: [WinError 183] : 'E:\\WorkSpace_GuanXiang\\0.\\365\\2.YOLOv5\\yolov5-master\\paper_data\\train.cache.npy' -> 'E:\\WorkSpace_GuanXiang\\0.\\365\\2.YOLOv5\\yolov5-master\\paper_data\\train.cache'

val: Scanning E:\WorkSpace_GuanXiang\0.学习资料\365天深度学习训练营\2.YOLOv5白皮书\yolov5-master\paper_data\val.cache..

AutoAnchor: 5.35 anchors/target, 1.000 Best Possible Recall (BPR). Current anchors are a good fit to dataset

Plotting labels to runs\train\exp2\labels.jpg...

Image sizes 640 train, 640 val

Using 8 dataloader workers

Logging results to runs\train\exp2

Starting training for 1 epochs...

Epoch GPU_mem box_loss obj_loss cls_loss Instances Size

0/0 1.54G nan nan nan 42 640: 100%|██████████| 20/20 00:22

Class Images Instances P R mAP50 mAP50-95: 100%|██████████| 2/2 00:00

all 20 60 0 0 0 0

1 epochs completed in 0.007 hours.

Optimizer stripped from runs\train\exp2\weights\last.pt, 14.0MB

Optimizer stripped from runs\train\exp2\weights\best.pt, 14.0MB

Validating runs\train\exp2\weights\best.pt...

Fusing layers...

YOLOv5s summary: 147 layers, 6815729 parameters, 0 gradients, 13.1 GFLOPs

Class Images Instances P R mAP50 mAP50-95: 100%|██████████| 2/2 00:00

all 20 60 0 0 0 0

Results saved to runs\train\exp2
