Food Classification with a Convolutional Neural Network

This post uses a convolutional neural network to classify food images. I have the dataset; send me a private message and I will share it.

Environment: Python 3.7, PaddlePaddle 2.0, IDE: PyCharm

Step 1: Introducing and loading the food image dataset:

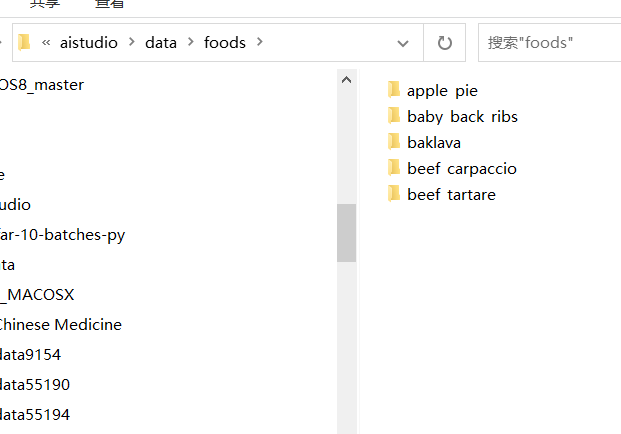

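The dataset used here contains 5,000 three-channel color JPG images covering 5 food categories. The data package is processed and loaded in much the same way as in my earlier gem classification post; if you have not read it, see: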

https://www.cnblogs.com/caizhou520/p/17810369.html

First, define a function that extracts the dataset archive. After extraction, the dataset folder is available on disk:

```python
import os
import zipfile


def unzip_data(src_path, target_path):
    '''
    Extract the raw dataset: unpack the zip archive at src_path into target_path.
    '''
    # Skip extraction if the foods/ directory already exists
    if not os.path.isdir(target_path + "foods"):
        with zipfile.ZipFile(src_path, 'r') as z:
            z.extractall(path=target_path)
```
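To try the "extract only once" logic without the real foods.zip, the sketch below builds a tiny throwaway archive laid out like the dataset (foods/<class>/<image>) in a temporary directory and extracts it with the same guard. The helper names make_demo_zip and extract_once and the class folders class_a/class_b are hypothetical; unlike unzip_data, it uses os.path.join, so target_path does not need a trailing slash.

```python
import os
import tempfile
import zipfile


def make_demo_zip(zip_path):
    """Hypothetical helper: write a tiny archive laid out like foods.zip (foods/<class>/<file>)."""
    with zipfile.ZipFile(zip_path, "w") as z:
        z.writestr("foods/class_a/a.jpg", b"not a real image")
        z.writestr("foods/class_b/b.jpg", b"not a real image")


def extract_once(src_path, target_path):
    """Same guard as unzip_data: only extract when target_path/foods is missing.

    Returns True if the archive was extracted, False if extraction was skipped.
    """
    if os.path.isdir(os.path.join(target_path, "foods")):
        return False
    with zipfile.ZipFile(src_path, "r") as z:
        z.extractall(path=target_path)
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        zip_path = os.path.join(tmp, "foods.zip")
        make_demo_zip(zip_path)
        print(extract_once(zip_path, tmp))  # True: first call extracts
        print(extract_once(zip_path, tmp))  # False: foods/ already exists
        print(sorted(os.listdir(os.path.join(tmp, "foods"))))  # ['class_a', 'class_b']
```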

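Next, define get_data_list(). It walks the class folders and their images, splits the data into a training set and a validation set at a fixed ratio (within each class, 1 of every 10 images goes to validation), writes the corresponding train.txt and eval.txt files, and describes the whole dataset in a JSON file: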

```python
import os
import json
import random


def get_data_list(target_path, train_list_path, eval_list_path):
    '''
    Generate the data lists.
    Uses the global train_parameters dict defined in the complete code at the end of this post.
    '''
    # Information about every class
    class_detail = []
    # Names of the per-class folders
    data_list_path = target_path + "foods/"
    class_dirs = os.listdir(data_list_path)
    # Total number of images
    all_class_images = 0
    # Current class label
    class_label = 0
    # Number of classes
    class_dim = 0
    # Lines to be written to eval.txt and train.txt
    trainer_list = []
    eval_list = []
    # Iterate over each class
    for class_dir in class_dirs:
        if class_dir != ".DS_Store":
            class_dim += 1
            # Information about this class
            class_detail_list = {}
            eval_sum = 0
            trainer_sum = 0
            # Number of images in this class
            class_sum = 0
            # Path of this class
            path = data_list_path + class_dir
            # All images in the class folder
            img_paths = os.listdir(path)
            for img_path in img_paths:  # iterate over every image in the folder
                name_path = path + '/' + img_path  # path of this image
                if class_sum % 10 == 0:  # take 1 of every 10 images for validation
                    eval_sum += 1  # eval_sum: number of validation images
                    eval_list.append(name_path + "\t%d" % class_label + "\n")
                else:
                    trainer_sum += 1  # trainer_sum: number of training images
                    trainer_list.append(name_path + "\t%d" % class_label + "\n")
                class_sum += 1  # images in this class
                all_class_images += 1  # images across all classes

            # class_detail entry for the description JSON file
            class_detail_list['class_name'] = class_dir  # class name
            class_detail_list['class_label'] = class_label  # class label
            class_detail_list['class_eval_images'] = eval_sum  # validation images in this class
            class_detail_list['class_trainer_images'] = trainer_sum  # training images in this class
            class_detail.append(class_detail_list)
            # Fill in the label dictionary
            train_parameters['label_dict'][str(class_label)] = class_dir
            class_label += 1

    # Set the number of classes
    train_parameters['class_dim'] = class_dim

    # Shuffle and write the lists
    random.shuffle(eval_list)
    with open(eval_list_path, 'a') as f:
        for eval_image in eval_list:
            f.write(eval_image)

    random.shuffle(trainer_list)
    with open(train_list_path, 'a') as f2:
        for train_image in trainer_list:
            f2.write(train_image)

    # Contents of the description JSON file
    readjson = {}
    readjson['all_class_name'] = data_list_path  # parent directory of the files
    readjson['all_class_images'] = all_class_images
    readjson['class_detail'] = class_detail
    jsons = json.dumps(readjson, sort_keys=True, indent=4, separators=(',', ': '))
    with open(train_parameters['readme_path'], 'w') as f:
        f.write(jsons)
    print('Data lists generated!')
```
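The 1-in-10 split rule inside get_data_list() can be pulled out into a small pure function, which makes the resulting ratio easy to check. split_every_nth is a hypothetical name; it produces lines in the same "path, tab, label, newline" format that get_data_list() writes.

```python
def split_every_nth(img_paths, class_label, n=10):
    """Send every n-th image (index 0, n, 2n, ...) to validation, the rest to training.

    Mirrors the `class_sum % 10 == 0` rule in get_data_list(); returns two lists of
    "path<TAB>label<NEWLINE>" lines.
    """
    train_lines, eval_lines = [], []
    for i, path in enumerate(img_paths):
        line = "%s\t%d\n" % (path, class_label)
        if i % n == 0:
            eval_lines.append(line)
        else:
            train_lines.append(line)
    return train_lines, eval_lines


if __name__ == "__main__":
    paths = ["foods/baklava/%d.jpg" % i for i in range(1000)]
    train, evals = split_every_nth(paths, class_label=0)
    print(len(train), len(evals))  # 900 100
    print(repr(evals[0]))  # 'foods/baklava/0.jpg\t0\n'
```

With 1,000 images in a class this gives 900 training and 100 validation images. If the 5,000 images were spread evenly over the 5 classes (an assumption, the post does not give per-class counts), the whole dataset would split into 4,500 training and 500 validation images.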

Generate train.txt and eval.txt:

Build the training and validation datasets:

```python
import os
import numpy as np
import paddle
from PIL import Image


class FoodDataset(paddle.io.Dataset):
    def __init__(self, data_path, mode='train'):
        """
        Data reader
        :param data_path: directory containing train.txt / eval.txt
        :param mode: 'train' or 'eval'
        """
        super().__init__()
        self.data_path = data_path
        self.img_paths = []
        self.labels = []

        # Pick the list file according to the mode
        list_file = "train.txt" if mode == 'train' else "eval.txt"
        with open(os.path.join(self.data_path, list_file), "r", encoding="utf-8") as f:
            self.info = f.readlines()
        for img_info in self.info:
            img_path, label = img_info.strip().split('\t')
            self.img_paths.append(img_path)
            self.labels.append(int(label))

    def __getitem__(self, index):
        """
        Return one sample
        :param index: sample index
        :return: (image, label)
        """
        # Open the image file and get its label
        img_path = self.img_paths[index]
        img = Image.open(img_path)
        if img.mode != 'RGB':
            img = img.convert('RGB')
        img = img.resize((64, 64), Image.BILINEAR)
        img = np.array(img).astype('float32')
        img = img.transpose((2, 0, 1)) / 255  # HWC -> CHW, scale pixels to [0, 1]
        label = self.labels[index]
        label = np.array([label], dtype="int64")
        return img, label

    def print_sample(self, index: int = 0):
        print("File name", self.img_paths[index], "\tLabel", self.labels[index])

    def __len__(self):
        return len(self.img_paths)


# Training data loader (train_parameters is defined in the complete code at the end)
train_dataset = FoodDataset(data_path='/home/aistudio/data/', mode='train')
train_loader = paddle.io.DataLoader(train_dataset, batch_size=train_parameters['train_batch_size'], shuffle=True)
# Validation data loader
eval_dataset = FoodDataset(data_path='/home/aistudio/data/', mode='eval')
eval_loader = paddle.io.DataLoader(eval_dataset, batch_size=8, shuffle=False)
```
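FoodDataset expects every line of train.txt / eval.txt to be an image path, a tab, and an integer label. The sketch below isolates just that parsing step with the standard library, so the list files can be sanity-checked (for example, per-label counts) before any training. read_list_file is a hypothetical helper; unlike FoodDataset, it skips blank lines instead of failing on them.

```python
import collections
import os
import tempfile


def read_list_file(list_path):
    """Parse a train.txt/eval.txt file into (img_paths, labels), as FoodDataset.__init__ does."""
    img_paths, labels = [], []
    with open(list_path, "r", encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if not line:  # tolerate blank lines, e.g. a trailing empty line
                continue
            img_path, label = line.split("\t")
            img_paths.append(img_path)
            labels.append(int(label))
    return img_paths, labels


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        list_path = os.path.join(tmp, "eval.txt")
        with open(list_path, "w", encoding="utf-8") as f:
            f.write("foods/baklava/1.jpg\t0\nfoods/baklava/2.jpg\t0\nfoods/x/3.jpg\t1\n")
        paths, labels = read_list_file(list_path)
        print(collections.Counter(labels))  # Counter({0: 2, 1: 1})
```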

Step 2: Define a custom convolutional neural network:

```python
import paddle
import paddle.nn as nn


# Define the convolutional network
class MyCNN(nn.Layer):
    def __init__(self):
        super(MyCNN, self).__init__()
        # in_channels, out_channels, kernel_size, stride=1, padding=0
        self.conv0 = nn.Conv2D(in_channels=3, out_channels=64, kernel_size=3, padding=0, stride=1)
        self.pool0 = nn.MaxPool2D(kernel_size=2, stride=2)
        self.conv1 = nn.Conv2D(in_channels=64, out_channels=128, kernel_size=3, padding=0, stride=1)
        self.pool1 = nn.MaxPool2D(kernel_size=2, stride=2)
        self.conv2 = nn.Conv2D(in_channels=128, out_channels=128, kernel_size=5, padding=0)
        self.pool2 = nn.MaxPool2D(kernel_size=2, stride=2)
        # A 64x64 input becomes a 128 x 5 x 5 feature map after the three conv/pool stages
        self.fc1 = nn.Linear(in_features=128*5*5, out_features=5)

    def forward(self, input):
        x = self.conv0(input)
        x = self.pool0(x)
        x = self.conv1(x)
        x = self.pool1(x)
        x = self.conv2(x)
        x = self.pool2(x)
        x = paddle.reshape(x, shape=[-1, 128*5*5])
        y = self.fc1(x)

        return y


# Instantiate the network
model = MyCNN()
# Define the inputs
input_define = paddle.static.InputSpec(shape=[-1, 3, 64, 64],
                                       dtype="float32",
                                       name="img")

label_define = paddle.static.InputSpec(shape=[-1, 1],
                                       dtype="int64",
                                       name="label")
model = paddle.Model(model, inputs=input_define, labels=label_define)
params_info = model.summary((1, 3, 64, 64))
print(params_info)  # print the model structure and parameter information
```
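The 128*5*5 input size of fc1 follows from the layer settings. The framework-free sketch below traces a 64x64 input through the three conv/pool stages using the standard output-size formula (floor((size + 2*padding - kernel) / stride) + 1, which matches Conv2D and MaxPool2D with their default dilation and ceil_mode=False) and counts the trainable parameters. It is arithmetic under those assumptions, not captured model.summary() output; the helper names are hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a conv or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_mycnn(size=64):
    """Follow a size x size input through MyCNN's conv/pool stages; returns the side lengths."""
    sizes = [size]
    for conv_kernel in (3, 3, 5):  # conv0, conv1, conv2 (stride 1, padding 0)
        size = conv_out(size, conv_kernel)
        sizes.append(size)
        size = conv_out(size, 2, stride=2)  # MaxPool2D(kernel_size=2, stride=2)
        sizes.append(size)
    return sizes


def conv_params(c_in, c_out, k):
    """Weights plus biases of a Conv2D layer with a k x k kernel."""
    return c_in * c_out * k * k + c_out


def mycnn_param_count():
    """Total trainable parameters: three conv layers plus fc1 (3200 inputs -> 5 outputs)."""
    fc = (128 * 5 * 5) * 5 + 5
    return conv_params(3, 64, 3) + conv_params(64, 128, 3) + conv_params(128, 128, 5) + fc


if __name__ == "__main__":
    print(trace_mycnn())        # [64, 62, 31, 29, 14, 10, 5]
    print(mycnn_param_count())  # 501381
```

If the layers are as written, the total reported by model.summary() should agree with this count; a mismatch would point to a shape change somewhere in the network.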

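Printing the model summary shows the network structure, as in the figure: three rounds of convolution plus max pooling, followed by a fully connected layer that outputs one score for each of the 5 classes. The class with the highest score (equivalently, the highest probability after softmax) is taken as the prediction: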
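MyCNN ends in a plain Linear layer, so it outputs raw class scores (logits), not probabilities; paddle.nn.CrossEntropyLoss, used in training below, applies softmax internally. A framework-free sketch of turning the 5 scores into probabilities and picking the predicted class (the scores are made up):

```python
import math


def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1 (numerically stable form)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_class(logits):
    """Index of the largest score; softmax is monotonic, so this is also the most probable class."""
    return max(range(len(logits)), key=lambda i: logits[i])


if __name__ == "__main__":
    scores = [1.2, -0.3, 3.1, 0.0, 0.5]  # made-up scores for the 5 classes
    probs = softmax(scores)
    print(round(sum(probs), 6))  # 1.0
    print(predict_class(scores), probs.index(max(probs)))  # 2 2
```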

Step 3: Training the model:

Define functions that plot the training accuracy and training loss curves:

```python
import matplotlib.pyplot as plt

Batch = 0
Batchs = []
all_train_accs = []
def draw_train_acc(Batchs, train_accs):
    title = "training accs"
    plt.title(title, fontsize=24)
    plt.xlabel("batch", fontsize=14)
    plt.ylabel("acc", fontsize=14)
    plt.plot(Batchs, train_accs, color='green', label='training accs')
    plt.legend()
    plt.grid()
    plt.show()

all_train_loss = []
def draw_train_loss(Batchs, train_loss):
    title = "training loss"
    plt.title(title, fontsize=24)
    plt.xlabel("batch", fontsize=14)
    plt.ylabel("loss", fontsize=14)
    plt.plot(Batchs, train_loss, color='red', label='training loss')
    plt.legend()
    plt.grid()
    plt.show()
```

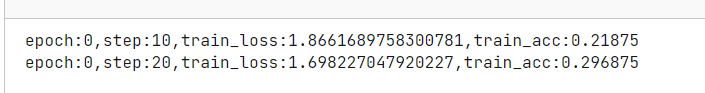

Train the model, then plot its accuracy and loss with the matplotlib functions defined above:

```python
model = MyCNN()  # instantiate the model
model.train()  # training mode
cross_entropy = paddle.nn.CrossEntropyLoss()
# Note: the learning rate is hard-coded to 0.001 here;
# train_parameters['learning_strategy']['lr'] (0.01) is not used
opt = paddle.optimizer.SGD(learning_rate=0.001, parameters=model.parameters())

epochs_num = train_parameters['num_epochs']  # number of epochs
for pass_num in range(epochs_num):
    for batch_id, data in enumerate(train_loader()):
        image = data[0]
        label = data[1]
        predict = model(image)  # forward pass
        loss = cross_entropy(predict, label)
        acc = paddle.metric.accuracy(predict, label.reshape([-1, 1]))  # batch accuracy

        if batch_id != 0 and batch_id % 10 == 0:
            Batch = Batch + 10
            Batchs.append(Batch)
            # .numpy().item() works whether the result has shape [1] or is 0-D
            all_train_loss.append(loss.numpy().item())
            all_train_accs.append(acc.numpy().item())
            print("epoch:{},step:{},train_loss:{},train_acc:{}".format(
                pass_num, batch_id, loss.numpy().item(), acc.numpy().item()))
        loss.backward()
        opt.step()
        opt.clear_grad()  # reset the gradients
paddle.save(model.state_dict(), 'MyCNN')  # save the model parameters
draw_train_acc(Batchs, all_train_accs)
draw_train_loss(Batchs, all_train_loss)
```
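paddle.metric.accuracy (default k=1) reports the fraction of samples in a batch whose highest-scoring class equals the label. A plain-Python sketch of that top-k computation, with labels shaped [[y0], [y1], ...] like the [-1, 1] label tensor used above; topk_accuracy is a hypothetical name, and tie-breaking between equal scores may differ from Paddle's.

```python
def topk_accuracy(batch_logits, batch_labels, k=1):
    """Fraction of samples whose true label is among the k highest scores.

    batch_labels is shaped like the [-1, 1] label tensor: [[y0], [y1], ...].
    """
    hits = 0
    for logits, (label,) in zip(batch_logits, batch_labels):
        top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
        hits += label in top  # True counts as 1
    return hits / len(batch_logits)


if __name__ == "__main__":
    logits = [[0.1, 2.0, 0.3, 0.0, 0.2],   # predicts class 1
              [1.5, 0.2, 0.1, 0.0, 1.4],   # predicts class 0, class 4 is second
              [0.0, 0.1, 0.2, 3.0, 0.4]]   # predicts class 3
    labels = [[1], [4], [3]]
    print(topk_accuracy(logits, labels))       # 0.6666666666666666
    print(topk_accuracy(logits, labels, k=2))  # 1.0
```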

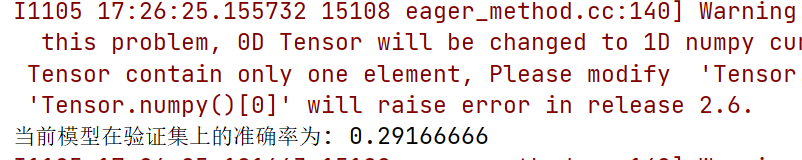

Step 4: Evaluate the model on the validation set:

```python
import numpy as np
import paddle

# Model evaluation
para_state_dict = paddle.load("MyCNN")
model = MyCNN()
model.set_state_dict(para_state_dict)  # load the model parameters
model.eval()  # evaluation mode

accs = []

for batch_id, data in enumerate(eval_loader()):  # validation set
    image = data[0]
    label = data[1]
    predict = model(image)
    acc = paddle.metric.accuracy(predict, label)
    accs.append(acc.numpy().item())
avg_acc = np.mean(accs)
print("Accuracy of the current model on the validation set:", avg_acc)
```
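The loop above averages per-batch accuracies with np.mean, which gives every batch equal weight. If the validation set size is not a multiple of the batch size (8), the last, smaller batch counts as much as a full one, so the figure can drift slightly from the true per-image accuracy. A sketch comparing the two with made-up batch results; the function names are hypothetical.

```python
def mean_of_batches(batch_accs):
    """What np.mean(accs) computes: every batch weighted equally."""
    return sum(batch_accs) / len(batch_accs)


def weighted_accuracy(batch_accs, batch_sizes):
    """Per-image accuracy: weight each batch's accuracy by the number of images in it."""
    correct = sum(a * n for a, n in zip(batch_accs, batch_sizes))
    return correct / sum(batch_sizes)


if __name__ == "__main__":
    accs = [0.75, 0.5, 1.0]  # made-up batch accuracies
    sizes = [8, 8, 4]        # the last batch is smaller
    print(mean_of_batches(accs))           # 0.75
    print(weighted_accuracy(accs, sizes))  # 0.7
```

In the real loop, the batch size can be read from label.shape[0] and recorded next to each accuracy.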

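As the result shows, the model's accuracy on the validation set is low. A likely reason is that it was trained for only a few iterations (num_epochs is 2); improving the model and training it for more iterations is recommended.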

Step 5: Predict the class of a new image and check whether it matches the true class

```python
'''
Model prediction
'''

para_state_dict = paddle.load("MyCNN")
model = MyCNN()
model.set_state_dict(para_state_dict)  # load the model parameters
model.eval()  # evaluation mode

# Show the image to be predicted
infer_path = 'data/foods/baklava/1936599.jpg'
img = Image.open(infer_path)
plt.imshow(img)  # draw the image
plt.show()  # display it
# Preprocess the image (load_image() is defined in the complete code at the end of this post)
infer_imgs = []
infer_imgs.append(load_image(infer_path))
infer_imgs = np.array(infer_imgs)
label_dic = train_parameters['label_dict']
for i in range(len(infer_imgs)):
    data = infer_imgs[i]
    dy_x_data = np.array(data).astype('float32')
    dy_x_data = dy_x_data[np.newaxis, :, :, :]  # add a batch dimension: CHW -> NCHW
    img = paddle.to_tensor(dy_x_data)
    out = model(img)
    lab = np.argmax(out.numpy())  # argmax(): index of the largest value
    print("Sample {} predicted as: {}, true label: {}".format(i + 1, label_dic[str(lab)], infer_path.split('/')[-2]))
print("Done")
```
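The prediction loop keeps only the single best class. When the model is unsure, looking at the next few candidates can help. A sketch that ranks class indices by score and maps them to names through a dict with string keys, the same shape as train_parameters['label_dict'], then checks the result against the class folder name the way the print statement above does. The helper names, the class names other than baklava, and the scores are invented for illustration.

```python
def top_k_labels(logits, label_dict, k=3):
    """Return the names of the k highest-scoring classes, most likely first.

    Ranking raw scores gives the same order as ranking softmax probabilities,
    because softmax is monotonic.
    """
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # label_dict uses string keys ("0", "1", ...), like train_parameters['label_dict']
    return [label_dict[str(i)] for i in ranked]


def is_correct(infer_path, predicted_name):
    """Compare the prediction with the image's class folder name (second-to-last path part)."""
    return predicted_name == infer_path.split('/')[-2]


if __name__ == "__main__":
    label_dict = {"0": "baklava", "1": "class_b", "2": "class_c", "3": "class_d", "4": "class_e"}
    scores = [2.5, 0.1, 1.9, -1.0, 0.3]  # made-up model output for one image
    names = top_k_labels(scores, label_dict)
    print(names)  # ['baklava', 'class_c', 'class_e']
    print(is_correct('data/foods/baklava/1936599.jpg', names[0]))  # True
```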

The complete code for this experiment:

```python
#!/usr/bin/env python
# coding: utf-8

# ## Task description:
#
# ### The goal of **image classification** is to assign a semantic category to an image based on its visual content; it also underlies problems such as image retrieval, image content analysis, and object recognition.
#
# ### This exercise uses a food classification example to show how to build a **convolutional neural network** with PaddlePaddle's dynamic graph API.
#
# ### Note: the dataset used here comes from the internet; do not use it for commercial purposes.

import os
import zipfile
import random
import json
import numpy as np
from PIL import Image
import paddle
import paddle.nn as nn
import matplotlib.pyplot as plt


'''
Parameter configuration
'''
train_parameters = {
    "input_size": [3, 64, 64],                           # input image shape
    "class_dim": 5,                                      # number of classes
    "src_path": "data/data42610/foods.zip",              # raw dataset archive
    "target_path": "/home/aistudio/data/",               # extraction directory
    "train_list_path": "/home/aistudio/data/train.txt",  # path of train.txt
    "eval_list_path": "/home/aistudio/data/eval.txt",    # path of eval.txt
    "readme_path": "/home/aistudio/data/readme.json",    # path of readme.json
    "label_dict": {},                                    # label dictionary
    "num_epochs": 2,                                     # number of training epochs
    "train_batch_size": 64,                              # training batch size
    "learning_strategy": {                               # optimizer settings
        "lr": 0.01                                       # learning rate (the training loop below hard-codes 0.001)
    }
}

print(paddle.__version__)


# # **1. Data preparation**
#
# ### (1) Extract the raw dataset
#
# ### (2) Split into training and validation sets at a fixed ratio
#
# ### (3) Shuffle and generate the data lists
#
# ### (4) Build the training and validation dataset readers

def unzip_data(src_path, target_path):
    '''
    Extract the raw dataset: unpack the zip archive at src_path into target_path.
    '''
    # Skip extraction if the foods/ directory already exists
    if not os.path.isdir(target_path + "foods"):
        with zipfile.ZipFile(src_path, 'r') as z:
            z.extractall(path=target_path)


def get_data_list(target_path, train_list_path, eval_list_path):
    '''
    Generate the data lists.
    '''
    # Information about every class
    class_detail = []
    # Names of the per-class folders
    data_list_path = target_path + "foods/"
    class_dirs = os.listdir(data_list_path)
    # Total number of images
    all_class_images = 0
    # Current class label
    class_label = 0
    # Number of classes
    class_dim = 0
    # Lines to be written to eval.txt and train.txt
    trainer_list = []
    eval_list = []
    # Iterate over each class
    for class_dir in class_dirs:
        if class_dir != ".DS_Store":
            class_dim += 1
            # Information about this class
            class_detail_list = {}
            eval_sum = 0
            trainer_sum = 0
            # Number of images in this class
            class_sum = 0
            # Path of this class
            path = data_list_path + class_dir
            # All images in the class folder
            img_paths = os.listdir(path)
            for img_path in img_paths:  # iterate over every image in the folder
                name_path = path + '/' + img_path  # path of this image
                if class_sum % 10 == 0:  # take 1 of every 10 images for validation
                    eval_sum += 1  # eval_sum: number of validation images
                    eval_list.append(name_path + "\t%d" % class_label + "\n")
                else:
                    trainer_sum += 1  # trainer_sum: number of training images
                    trainer_list.append(name_path + "\t%d" % class_label + "\n")
                class_sum += 1  # images in this class
                all_class_images += 1  # images across all classes

            # class_detail entry for the description JSON file
            class_detail_list['class_name'] = class_dir  # class name
            class_detail_list['class_label'] = class_label  # class label
            class_detail_list['class_eval_images'] = eval_sum  # validation images in this class
            class_detail_list['class_trainer_images'] = trainer_sum  # training images in this class
            class_detail.append(class_detail_list)
            # Fill in the label dictionary
            train_parameters['label_dict'][str(class_label)] = class_dir
            class_label += 1

    # Set the number of classes
    train_parameters['class_dim'] = class_dim

    # Shuffle and write the lists
    random.shuffle(eval_list)
    with open(eval_list_path, 'a') as f:
        for eval_image in eval_list:
            f.write(eval_image)

    random.shuffle(trainer_list)
    with open(train_list_path, 'a') as f2:
        for train_image in trainer_list:
            f2.write(train_image)

    # Contents of the description JSON file
    readjson = {}
    readjson['all_class_name'] = data_list_path  # parent directory of the files
    readjson['all_class_images'] = all_class_images
    readjson['class_detail'] = class_detail
    jsons = json.dumps(readjson, sort_keys=True, indent=4, separators=(',', ': '))
    with open(train_parameters['readme_path'], 'w') as f:
        f.write(jsons)
    print('Data lists generated!')


'''
Parameter initialization
'''
src_path = train_parameters['src_path']
target_path = train_parameters['target_path']
train_list_path = train_parameters['train_list_path']
eval_list_path = train_parameters['eval_list_path']
batch_size = train_parameters['train_batch_size']

'''
Extract the raw data to the target path
'''
unzip_data(src_path, target_path)

'''
Split into training and validation sets, shuffle, generate the data lists
'''
# Empty train.txt and eval.txt before generating the lists (mode 'w' truncates the file)
for list_path in (train_list_path, eval_list_path):
    open(list_path, 'w').close()

# Generate the data lists
get_data_list(target_path, train_list_path, eval_list_path)


class FoodDataset(paddle.io.Dataset):
    def __init__(self, data_path, mode='train'):
        """
        Data reader
        :param data_path: directory containing train.txt / eval.txt
        :param mode: 'train' or 'eval'
        """
        super().__init__()
        self.data_path = data_path
        self.img_paths = []
        self.labels = []

        # Pick the list file according to the mode
        list_file = "train.txt" if mode == 'train' else "eval.txt"
        with open(os.path.join(self.data_path, list_file), "r", encoding="utf-8") as f:
            self.info = f.readlines()
        for img_info in self.info:
            img_path, label = img_info.strip().split('\t')
            self.img_paths.append(img_path)
            self.labels.append(int(label))

    def __getitem__(self, index):
        """
        Return one sample
        :param index: sample index
        :return: (image, label)
        """
        # Open the image file and get its label
        img_path = self.img_paths[index]
        img = Image.open(img_path)
        if img.mode != 'RGB':
            img = img.convert('RGB')
        img = img.resize((64, 64), Image.BILINEAR)
        img = np.array(img).astype('float32')
        img = img.transpose((2, 0, 1)) / 255  # HWC -> CHW, scale pixels to [0, 1]
        label = self.labels[index]
        label = np.array([label], dtype="int64")
        return img, label

    def print_sample(self, index: int = 0):
        print("File name", self.img_paths[index], "\tLabel", self.labels[index])

    def __len__(self):
        return len(self.img_paths)


# Training data loader
train_dataset = FoodDataset(data_path='/home/aistudio/data/', mode='train')
train_loader = paddle.io.DataLoader(train_dataset, batch_size=train_parameters['train_batch_size'], shuffle=True)
# Validation data loader
eval_dataset = FoodDataset(data_path='/home/aistudio/data/', mode='eval')
eval_loader = paddle.io.DataLoader(eval_dataset, batch_size=8, shuffle=False)

print(len(train_dataset))
print(len(eval_dataset))


# # **2. Model configuration**

# Define the convolutional network
class MyCNN(nn.Layer):
    def __init__(self):
        super(MyCNN, self).__init__()
        # in_channels, out_channels, kernel_size, stride=1, padding=0
        self.conv0 = nn.Conv2D(in_channels=3, out_channels=64, kernel_size=3, padding=0, stride=1)
        self.pool0 = nn.MaxPool2D(kernel_size=2, stride=2)
        self.conv1 = nn.Conv2D(in_channels=64, out_channels=128, kernel_size=3, padding=0, stride=1)
        self.pool1 = nn.MaxPool2D(kernel_size=2, stride=2)
        self.conv2 = nn.Conv2D(in_channels=128, out_channels=128, kernel_size=5, padding=0)
        self.pool2 = nn.MaxPool2D(kernel_size=2, stride=2)
        # A 64x64 input becomes a 128 x 5 x 5 feature map after the three conv/pool stages
        self.fc1 = nn.Linear(in_features=128*5*5, out_features=5)

    def forward(self, input):
        x = self.conv0(input)
        x = self.pool0(x)
        x = self.conv1(x)
        x = self.pool1(x)
        x = self.conv2(x)
        x = self.pool2(x)
        x = paddle.reshape(x, shape=[-1, 128*5*5])
        y = self.fc1(x)

        return y


# Instantiate the network
model = MyCNN()
# Define the inputs
input_define = paddle.static.InputSpec(shape=[-1, 3, 64, 64],
                                       dtype="float32",
                                       name="img")

label_define = paddle.static.InputSpec(shape=[-1, 1],
                                       dtype="int64",
                                       name="label")
model = paddle.Model(model, inputs=input_define, labels=label_define)
params_info = model.summary((1, 3, 64, 64))
print(params_info)  # print the model structure and parameter information


# # **3. Model training && 4. Model evaluation**

Batch = 0
Batchs = []
all_train_accs = []
def draw_train_acc(Batchs, train_accs):
    title = "training accs"
    plt.title(title, fontsize=24)
    plt.xlabel("batch", fontsize=14)
    plt.ylabel("acc", fontsize=14)
    plt.plot(Batchs, train_accs, color='green', label='training accs')
    plt.legend()
    plt.grid()
    plt.show()

all_train_loss = []
def draw_train_loss(Batchs, train_loss):
    title = "training loss"
    plt.title(title, fontsize=24)
    plt.xlabel("batch", fontsize=14)
    plt.ylabel("loss", fontsize=14)
    plt.plot(Batchs, train_loss, color='red', label='training loss')
    plt.legend()
    plt.grid()
    plt.show()


model = MyCNN()  # instantiate the model
model.train()  # training mode
cross_entropy = paddle.nn.CrossEntropyLoss()
opt = paddle.optimizer.SGD(learning_rate=0.001, parameters=model.parameters())

epochs_num = train_parameters['num_epochs']  # number of epochs
for pass_num in range(epochs_num):
    for batch_id, data in enumerate(train_loader()):
        image = data[0]
        label = data[1]
        predict = model(image)  # forward pass
        loss = cross_entropy(predict, label)
        acc = paddle.metric.accuracy(predict, label.reshape([-1, 1]))  # batch accuracy

        if batch_id != 0 and batch_id % 10 == 0:
            Batch = Batch + 10
            Batchs.append(Batch)
            # .numpy().item() works whether the result has shape [1] or is 0-D
            all_train_loss.append(loss.numpy().item())
            all_train_accs.append(acc.numpy().item())
            print("epoch:{},step:{},train_loss:{},train_acc:{}".format(
                pass_num, batch_id, loss.numpy().item(), acc.numpy().item()))
        loss.backward()
        opt.step()
        opt.clear_grad()  # reset the gradients
paddle.save(model.state_dict(), 'MyCNN')  # save the model parameters
draw_train_acc(Batchs, all_train_accs)
draw_train_loss(Batchs, all_train_loss)


# # **5. Model evaluation**

# Model evaluation
para_state_dict = paddle.load("MyCNN")
model = MyCNN()
model.set_state_dict(para_state_dict)  # load the model parameters
model.eval()  # evaluation mode

accs = []

for batch_id, data in enumerate(eval_loader()):  # validation set
    image = data[0]
    label = data[1]
    predict = model(image)
    acc = paddle.metric.accuracy(predict, label)
    accs.append(acc.numpy().item())
avg_acc = np.mean(accs)
print("Accuracy of the current model on the validation set:", avg_acc)


def unzip_infer_data(src_path, target_path):
    '''
    Extract the prediction dataset
    '''
    if not os.path.isdir(target_path):
        with zipfile.ZipFile(src_path, 'r') as z:
            z.extractall(path=target_path)


def load_image(img_path):
    '''
    Preprocess an image for prediction
    '''
    img = Image.open(img_path)
    if img.mode != 'RGB':
        img = img.convert('RGB')
    img = img.resize((64, 64), Image.BILINEAR)
    img = np.array(img).astype('float32')
    img = img.transpose((2, 0, 1))  # HWC to CHW
    img = img / 255  # normalize pixel values to [0, 1]
    return img


infer_src_path = '/home/aistudio/data/data42610/foods.zip'
infer_dst_path = '/home/aistudio/data/foods_test'
unzip_infer_data(infer_src_path, infer_dst_path)

'''
Model prediction
'''

para_state_dict = paddle.load("MyCNN")
model = MyCNN()
model.set_state_dict(para_state_dict)  # load the model parameters
model.eval()  # evaluation mode

# Show the image to be predicted
infer_path = 'data/foods/baklava/1936599.jpg'
img = Image.open(infer_path)
plt.imshow(img)  # draw the image
plt.show()  # display it
# Preprocess the image
infer_imgs = []
infer_imgs.append(load_image(infer_path))
infer_imgs = np.array(infer_imgs)
label_dic = train_parameters['label_dict']
for i in range(len(infer_imgs)):
    data = infer_imgs[i]
    dy_x_data = np.array(data).astype('float32')
    dy_x_data = dy_x_data[np.newaxis, :, :, :]  # add a batch dimension: CHW -> NCHW
    img = paddle.to_tensor(dy_x_data)
    out = model(img)
    lab = np.argmax(out.numpy())  # argmax(): index of the largest value
    print("Sample {} predicted as: {}, true label: {}".format(i + 1, label_dic[str(lab)], infer_path.split('/')[-2]))
print("Done")
```

祈福@点亮希望

Zhejiang Public Security Network Filing No. 33010602011771
